To clean the data, first start Hadoop.

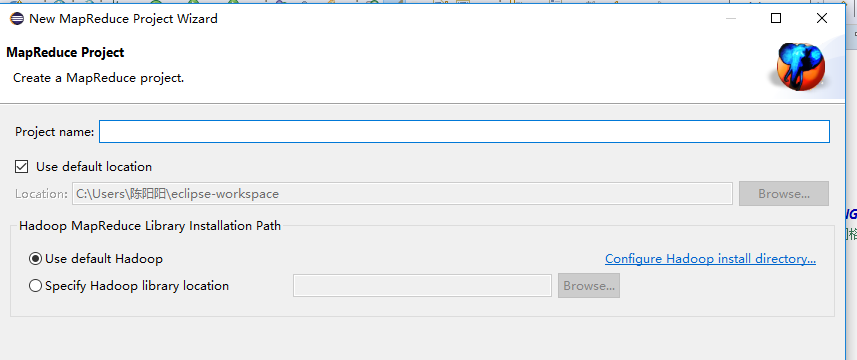

Then create a MapReduce project in Eclipse.

Next, write the code:

    package 数据清洗hive;

    import java.io.IOException;
    import java.text.SimpleDateFormat;
    import java.util.Date;
    import java.util.Locale;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class shujuqingxi {

        public static class Map extends Mapper<Object, Text, Text, Text> {
            // Original time format, e.g. 10/Nov/2016:00:01:02
            public static final SimpleDateFormat FORMAT =
                    new SimpleDateFormat("d/MMM/yyyy:HH:mm:ss", Locale.ENGLISH);
            // Target time format
            public static final SimpleDateFormat dateformat1 =
                    new SimpleDateFormat("yyyy-MM-dd-HH:mm:ss");

            // Parse a timestamp in the original format; returns null on failure.
            private static Date parseDateFormat(String string) {
                Date parse = null;
                try {
                    parse = FORMAT.parse(string);
                } catch (Exception e) {
                    e.printStackTrace();
                }
                return parse;
            }

            private static Text newKey = new Text();
            private static Text newvalue = new Text();

            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                System.out.println(line);
                String[] arr = line.split(",");
                if (arr.length < 6) {
                    return; // skip records that do not have all six fields
                }
                newKey.set(arr[0]);

                // The original called indexOf("") (always 0) and then skipped one
                // character, which drops the first character of the field.
                // Start at 0 and trim instead.
                final int last = arr[1].indexOf(" +0800");
                if (last < 0) {
                    return; // skip records without the expected time zone suffix
                }
                String time = arr[1].substring(0, last).trim();
                Date date = parseDateFormat(time);
                if (date == null) {
                    return; // skip records whose timestamp cannot be parsed
                }
                arr[1] = dateformat1.format(date);

                newvalue.set(arr[1] + " " + arr[2] + " " + arr[3] + " " + arr[4] + " " + arr[5]);
                context.write(newKey, newvalue);
            }
        }

        // Identity reducer: writes every cleaned record through unchanged.
        public static class Reduce extends Reducer<Text, Text, Text, Text> {
            @Override
            protected void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                for (Text text : values) {
                    context.write(key, text);
                }
            }
        }

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            Configuration conf = new Configuration();
            System.out.println("start");
            Job job = Job.getInstance(conf);
            job.setJobName("filter");
            job.setJarByClass(shujuqingxi.class);
            job.setMapperClass(Map.class);
            job.setReducerClass(Reduce.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            Path in = new Path("hdfs://192.168.57.128:9000/testhdfs1026/result.txt");
            Path out = new Path("hdfs://192.168.57.128:9000/testhdfs1026/result");
            FileInputFormat.addInputPath(job, in);
            FileOutputFormat.setOutputPath(job, out);
            boolean flag = job.waitForCompletion(true);
            System.out.println(flag);
            System.exit(flag ? 0 : 1);
        }
    }
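The timestamp conversion in the mapper can be tried locally, without a cluster. The following is a minimal sketch under some assumptions: the class name `TimestampCleaner`, the method `clean`, and the sample record are hypothetical and do not come from the original project, and the six-field comma-separated layout is inferred from the mapper code. The tab between key and value mimics the default separator used by `TextOutputFormat`.

```java
import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.Locale;

// Hypothetical helper that repeats the mapper's per-line logic in plain Java.
class TimestampCleaner {
    private static final String IN_PATTERN = "d/MMM/yyyy:HH:mm:ss";   // original format
    private static final String OUT_PATTERN = "yyyy-MM-dd-HH:mm:ss";  // target format

    // Returns "key<TAB>value" for a well-formed line, or null if the line is malformed.
    static String clean(String line) {
        String[] arr = line.split(",");
        if (arr.length < 6) {
            return null; // not enough fields
        }
        int last = arr[1].indexOf(" +0800");
        if (last < 0) {
            return null; // time zone suffix missing
        }
        String time = arr[1].substring(0, last).trim();
        try {
            // New formatter instances per call, since SimpleDateFormat is not thread-safe.
            Date date = new SimpleDateFormat(IN_PATTERN, Locale.ENGLISH).parse(time);
            String formatted = new SimpleDateFormat(OUT_PATTERN, Locale.ENGLISH).format(date);
            return arr[0] + "\t" + formatted + " " + arr[2] + " " + arr[3]
                    + " " + arr[4] + " " + arr[5];
        } catch (ParseException e) {
            return null; // timestamp did not match the expected pattern
        }
    }

    public static void main(String[] args) {
        // Made-up sample record, for illustration only.
        String sample = "10.0.0.1,10/Nov/2016:00:01:02 +0800,10,54,video,8701";
        System.out.println(clean(sample));
    }
}
```

Returning `null` for malformed lines corresponds to skipping the record in the mapper, so a single bad line does not fail the whole job.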

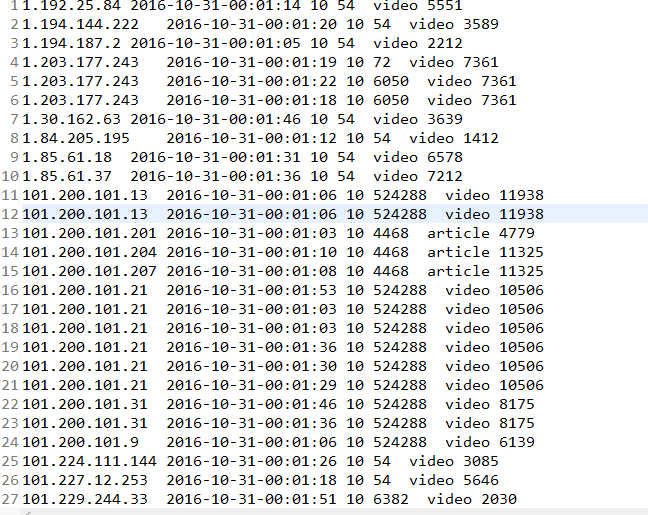

After the program runs successfully: