=================Week 2: Neural Network Basics===============

===4.1 Deep Neural Networks===

Although it is hard to predict in advance exactly how deep a neural network a given problem needs, it is reasonable to try logistic regression first, then one and then two hidden layers, and to view the number of hidden layers as another hyperparameter. A superscript in square brackets, as in a^[l], denotes the layer.

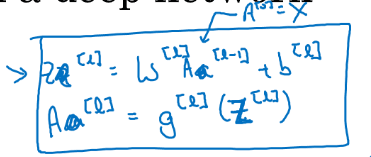

===4.2 Forward Propagation in a Deep Network===

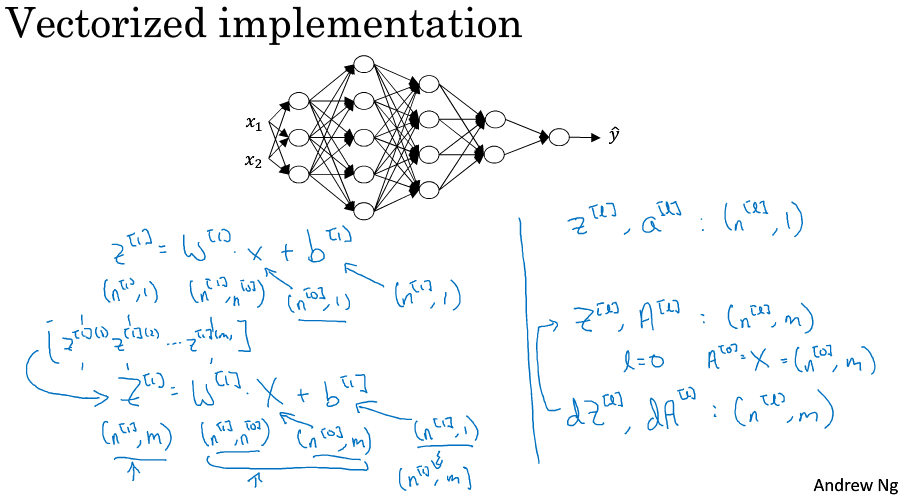

===4.3 Getting Matrix Dimensions Right===

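Thinking carefully and systematically about matrix dimensions is a good way to reduce bugs. The derivative of a variable should have the same dimensions as the variable itself. Pay particular attention in the vectorized case: when m examples are fed in together, the derivatives with respect to each layer's Z and A still carry the m examples (one column per example), but the derivatives with respect to each layer's W and b have the same shapes as W and b themselves, so the contributions of the m examples have to be aggregated.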
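The shape rules above can be checked mechanically. The following is a minimal NumPy sketch for a single layer; the sizes `n_prev`, `n_curr`, and `m` are hypothetical, and `dZ` is a random stand-in for a real upstream gradient, so only the shapes are meaningful here.

```python
import numpy as np

n_prev, n_curr, m = 3, 4, 5                 # hypothetical layer sizes and batch size
rng = np.random.default_rng(0)

W = rng.standard_normal((n_curr, n_prev))   # W[l]:   (n[l], n[l-1])
b = np.zeros((n_curr, 1))                   # b[l]:   (n[l], 1)
A_prev = rng.standard_normal((n_prev, m))   # A[l-1]: (n[l-1], m)

Z = W @ A_prev + b                          # b broadcasts over the m columns
dZ = rng.standard_normal(Z.shape)           # stand-in for an upstream gradient

dW = dZ @ A_prev.T / m                      # aggregated (averaged) over the m examples
db = dZ.sum(axis=1, keepdims=True) / m      # aggregated over the m examples
dA_prev = W.T @ dZ                          # still one column per example

assert Z.shape == (n_curr, m)
assert dW.shape == W.shape
assert db.shape == b.shape
assert dA_prev.shape == A_prev.shape
```

Assertions like these are cheap to leave in while debugging, since a shape mismatch usually shows up long before a wrong number does.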

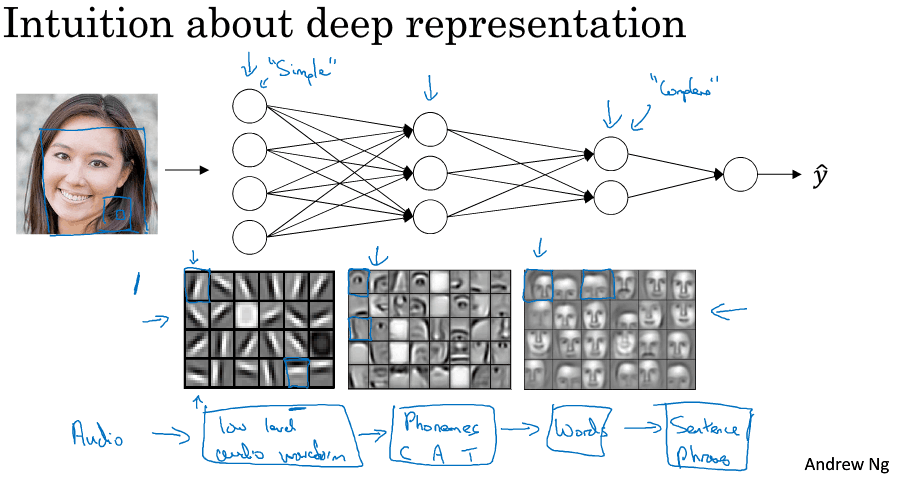

===4.4 Why Deep Representations?===

Deep neural networks can build a simple-to-complex hierarchical representation, or compositional representation, for example for images and for speech.

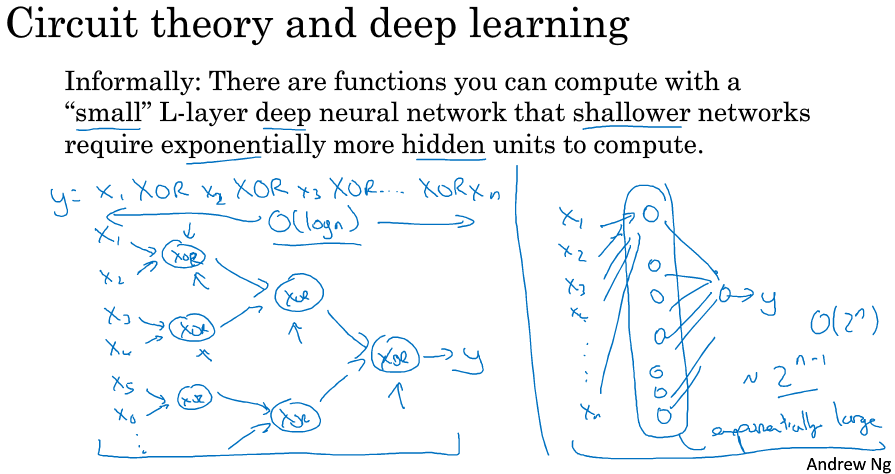

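Another piece of theory about why neural networks work comes from circuit theory, which pertains to thinking about what types of functions you can compute with different AND gates and OR gates. If you solve the XOR problem from the slides with a single hidden layer instead of multiple layers, the number of hidden units grows exponentially (each unit stands for one of the binary configurations of x1 to xn, such as 10010). I hope this gives you a sense that many mathematical functions are much easier to compute with a deep network than with a shallow one. Personally I find the circuit-theory argument less intuitive, but people still cite it often when explaining why depth helps.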
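The gap between the two constructions can be counted directly. The sketch below uses plain logic gates rather than a trained network, so it illustrates the circuit argument only; `parity_tree` and `parity_shallow` are hypothetical helper names, and the shallow version assumes one detector per odd-parity input pattern followed by a single OR.

```python
from itertools import product

def parity_tree(bits):
    """XOR all bits with a balanced tree; return (result, gate_count, depth)."""
    level, gates, depth = list(bits), 0, 0
    while len(level) > 1:
        nxt = []
        for i in range(0, len(level) - 1, 2):
            nxt.append(level[i] ^ level[i + 1])   # one XOR gate per pair
            gates += 1
        if len(level) % 2:                        # odd element passes through
            nxt.append(level[-1])
        level = nxt
        depth += 1
    return level[0], gates, depth

def parity_shallow(bits):
    """One detector per odd-parity pattern, then a single OR over all detectors."""
    n = len(bits)
    patterns = [p for p in product((0, 1), repeat=n) if sum(p) % 2 == 1]
    fired = [int(tuple(bits) == p) for p in patterns]
    return int(any(fired)), len(patterns)

bits = [1, 0, 1, 1, 0, 0, 1, 0]
deep_out, gates, depth = parity_tree(bits)
shallow_out, units = parity_shallow(bits)
print(deep_out, gates, depth)
print(shallow_out, units)
```

For n = 8 the tree needs 7 gates at depth 3, while the one-level enumeration needs 128 detectors, that is 2^(n-1).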

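Honestly, the name "deep learning" is partly branding: these used to be called neural networks with many hidden layers, and the new packaging made them sound grand and captured the popular imagination. Still, when I start on a new problem, I usually begin with logistic regression, then try one or two hidden layers, and treat the number of layers as a hyperparameter to tune until I find a suitable depth. In recent years, though, there has been a trend toward people finding that for some applications very, very deep neural networks can sometimes be the best model for a problem.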

===4.5 Building Blocks of Deep Neural Networks===

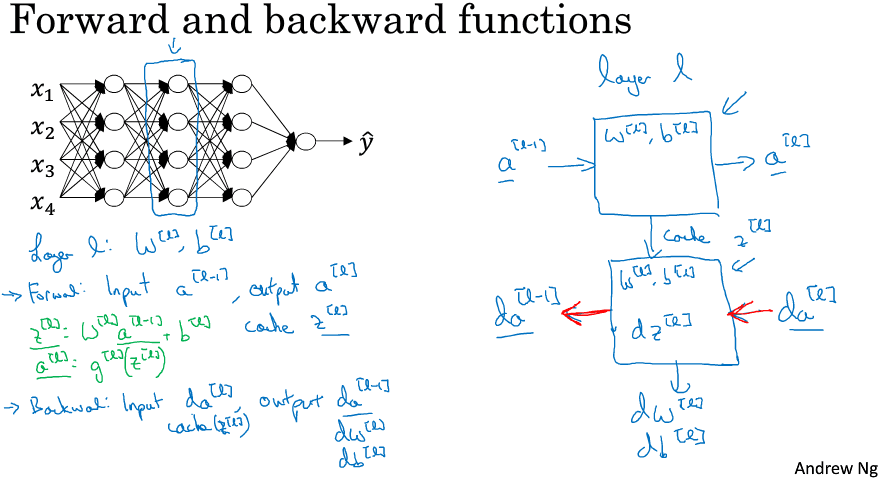

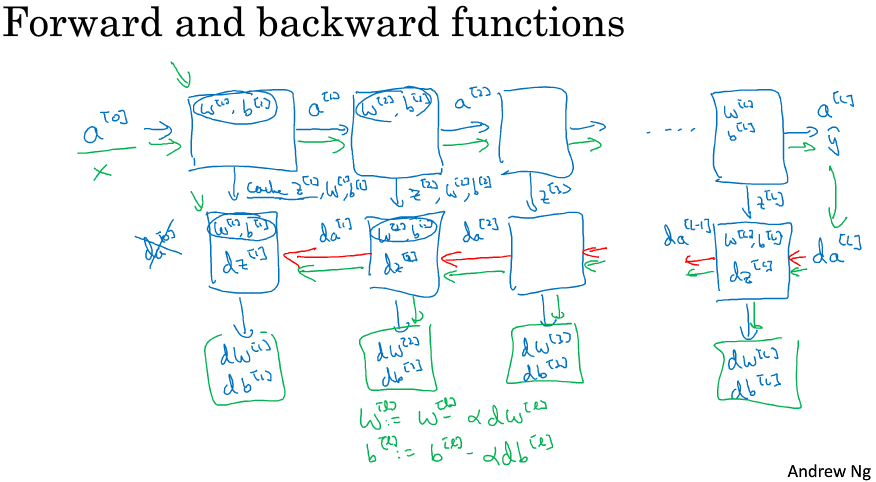

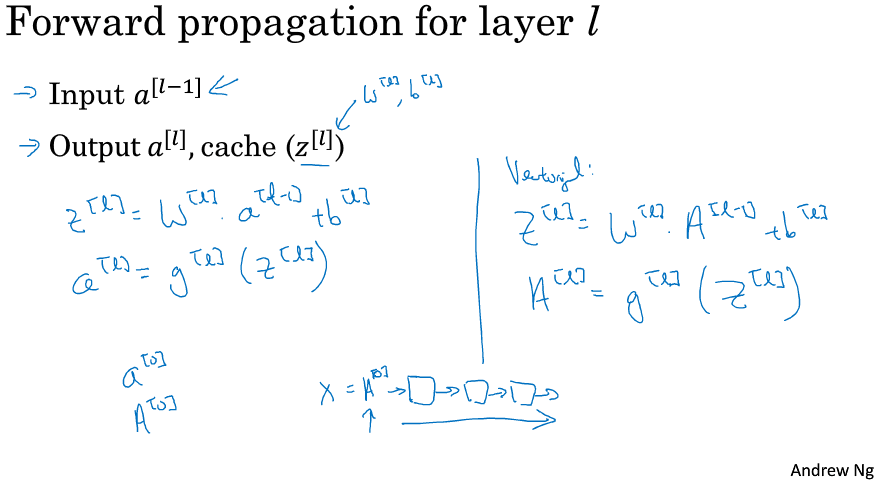

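Cache z: the cached z^[l] is very useful for the later propagation steps, the backward pass in particular. The diagrams in the slides are clear, and the programming exercise should make this more concrete.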
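A forward block that returns a cache might look like the following minimal sketch. The name `layer_forward` and the exact cache contents are assumptions for illustration: the notes only say that z^[l] is cached, and storing `A_prev`, `W`, and `b` next to it is one common choice, not necessarily the course's layout. ReLU is used as an example activation.

```python
import numpy as np

def relu(Z):
    """Element-wise ReLU activation."""
    return np.maximum(0, Z)

def layer_forward(A_prev, W, b, activation=relu):
    """Compute A[l] from A[l-1] and return it together with a cache."""
    Z = W @ A_prev + b            # linear step; b broadcasts over examples
    A = activation(Z)             # non-linear step
    cache = (A_prev, W, b, Z)     # values the backward pass will want again
    return A, cache

# Hypothetical sizes: 3 inputs, 4 hidden units, 2 examples.
A0 = np.ones((3, 2))
W1 = np.ones((4, 3))
b1 = np.zeros((4, 1))
A1, cache1 = layer_forward(A0, W1, b1)
print(A1.shape, cache1[3].shape)
```

Returning the cache from the forward call keeps each layer's forward and backward steps paired, which is the structure the block diagrams in the slides suggest.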

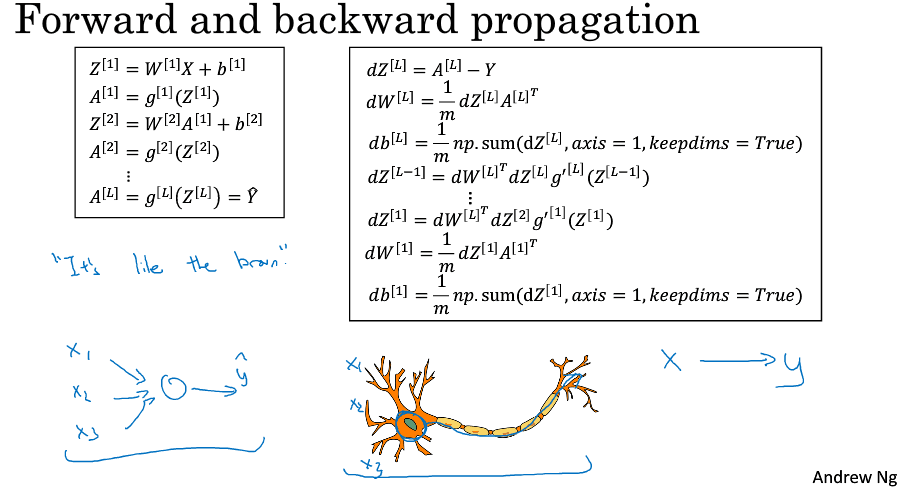

===4.6 Forward and Backward Propagation===

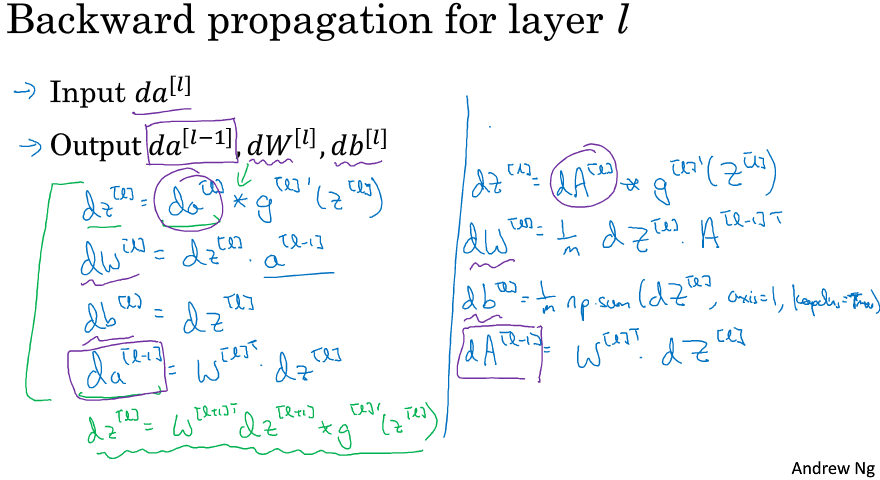

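I didn't explicitly put the activation (a^[l-1], which appears in the dW^[l] formula) in the cache, but it turns out you need this as well. (PS: I find the course quite abstract on this point. What exactly should be cached? Implement it yourself and it becomes clear. Besides, if z is already cached, applying the activation function recovers a, so caching z effectively caches a too ^_^.) Note that the symbol * denotes element-wise multiplication. Also, did you notice the 1/m in the vectorized dW formula in the slides? That is exactly what section 4.3 asked you to watch for :).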
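One way to write a single backward step is sketched below. The function name `layer_backward` and the cache layout `(A_prev, W, b, Z)` are assumptions made for this example, and ReLU stands in for whatever activation the layer uses.

```python
import numpy as np

def relu_grad(Z):
    """Derivative of ReLU with respect to Z, element-wise."""
    return (Z > 0).astype(float)

def layer_backward(dA, cache, activation_grad=relu_grad):
    """Given dA[l] and the layer's cache, return dA[l-1], dW[l], db[l]."""
    A_prev, W, b, Z = cache                   # assumed cache layout
    m = A_prev.shape[1]
    dZ = dA * activation_grad(Z)              # "*" is element-wise
    dW = dZ @ A_prev.T / m                    # the 1/m averages over examples
    db = dZ.sum(axis=1, keepdims=True) / m
    dA_prev = W.T @ dZ                        # one column per example
    return dA_prev, dW, db

# Hypothetical layer: 3 inputs, 4 units, 2 examples.
A_prev = np.ones((3, 2))
W = np.ones((4, 3))
b = np.zeros((4, 1))
Z = W @ A_prev + b
dA = np.ones((4, 2))
dA_prev, dW, db = layer_backward(dA, (A_prev, W, b, Z))
print(dA_prev.shape, dW.shape, db.shape)
```

Note that `dW` uses `A_prev`, which is why the previous layer's activation has to be available in the backward pass, whether it is cached directly or recomputed from a cached z.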

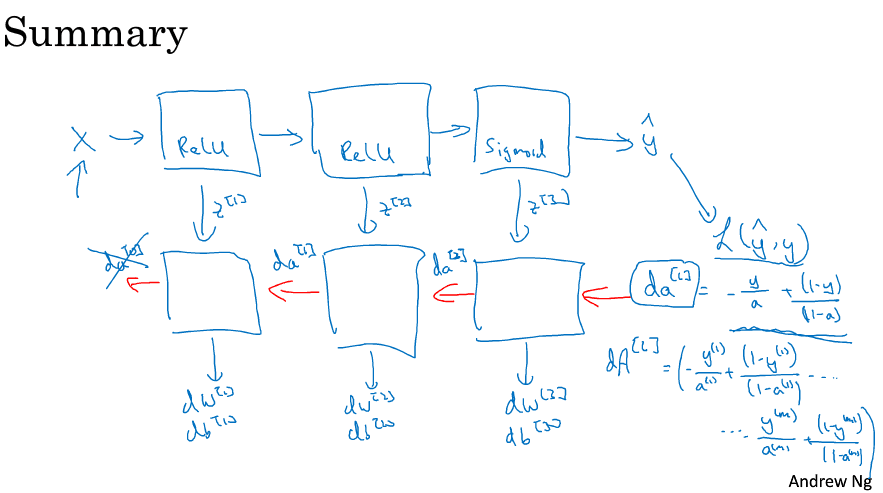

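Note the computation of dA in the Summary slide, which takes the case where the last layer is logistic regression. Watch the size of dA: each of the m examples has its own derivative, and they are not merged together. My advice is still to do the programming assignment; it will clear things up. Although I have to say, even today when I implement a learning algorithm, I am sometimes surprised that it just works. That is because a lot of the complexity of a learning algorithm comes from the data rather than from the lines of code. So sometimes you implement a few lines of code, not quite sure what they did, but it almost magically works, because the magic is actually not in the piece of code you write.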
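The per-example shape of dA at the output layer can be seen in a tiny sketch. It assumes a sigmoid output trained with the usual cross-entropy loss, taken per example (the 1/m averaging enters later, in dW and db); the labels `Y` and predictions `AL` are made-up values.

```python
import numpy as np

Y = np.array([[1.0, 0.0, 1.0]])        # labels, shape (1, m) with m = 3
AL = np.array([[0.9, 0.2, 0.6]])       # sigmoid outputs of the last layer

# Derivative of the per-example cross-entropy loss with respect to AL.
dAL = -(Y / AL) + (1 - Y) / (1 - AL)

# Chain through the sigmoid: g'(Z) = A * (1 - A), element-wise.
dZL = dAL * AL * (1 - AL)

assert dAL.shape == Y.shape            # one derivative per example
assert np.allclose(dZL, AL - Y)        # the familiar simplification
print(dAL.shape)
```

The last assertion checks that this route agrees with the shortcut dZ^[L] = A^[L] - Y known from logistic regression.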

===4.7 Parameters vs. Hyperparameters===

First, what I've seen is that researchers from one discipline try to move to a different one, and sometimes the intuitions about hyperparameters carry over and sometimes they don't. So I often advise people, especially when they are starting on a new problem, to just try out a range of values and see what works. In Part 2 of the course, we'll see some systematic ways of trying out a range of values.

Second, even if you're working on one application for a long time, as you make progress on the problem it is quite possible that the best value for the learning rate, the number of hidden units, and so on might change. So even if you tune your system to the best hyperparameter values today, you may find that the best values change a year from now, maybe because the computing infrastructure, the CPUs, or the type of GPU you run on has changed.

So here is a rule of thumb: every now and then, maybe every few months if you're working on a problem for an extended period of many years, just try a few values for the hyperparameters and double-check whether there is a better setting. As you do, you will slowly build intuition for the hyperparameters that best suit your problem.

This is also part of what is unsatisfying about deep learning: you have to try many different possibilities. The field is still evolving, and better guidance on tuning may emerge in time. But it's also possible that, because CPUs, GPUs, networks, and datasets are all changing, the guidance won't converge for some time, and you just need to keep trying out different values, evaluate them on a hold-out cross-validation set or something similar, and pick the value that works for your problem.
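"Try a range of values and evaluate on a hold-out set" can be sketched in a few lines. Everything here is an assumption made for illustration: the synthetic data, the plain logistic regression model, the 300/100 split, and the candidate learning rates. It shows the selection loop, not a recommended search strategy.

```python
import numpy as np

def sigmoid(Z):
    return 1 / (1 + np.exp(-Z))

def train_logreg(X, y, lr, steps=200):
    """Batch gradient descent for logistic regression; X is (n, m), y is (1, m)."""
    w, b = np.zeros((X.shape[0], 1)), 0.0
    m = X.shape[1]
    for _ in range(steps):
        dZ = sigmoid(w.T @ X + b) - y
        w -= lr * (X @ dZ.T) / m
        b -= lr * dZ.mean()
    return w, b

def holdout_loss(w, b, X, y):
    """Mean cross-entropy on data that was not used for training."""
    A = np.clip(sigmoid(w.T @ X + b), 1e-12, 1 - 1e-12)
    return float(-(y * np.log(A) + (1 - y) * np.log(1 - A)).mean())

rng = np.random.default_rng(0)
X = rng.standard_normal((2, 400))                 # synthetic inputs
y = (X[0:1] + X[1:2] > 0).astype(float)           # synthetic labels
X_train, y_train = X[:, :300], y[:, :300]
X_dev, y_dev = X[:, 300:], y[:, 300:]             # hold-out set

candidates = [0.001, 0.01, 0.1, 1.0]              # hypothetical learning rates
results = {lr: holdout_loss(*train_logreg(X_train, y_train, lr), X_dev, y_dev)
           for lr in candidates}
best_lr = min(results, key=results.get)
print(results, best_lr)
```

Rerunning the same loop later, on new data or new hardware, is the "double-check every few months" habit described above.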

===4.8 What Does This Have to Do with the Brain?===

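I think the connection is weak. Even neuroscientists today find it hard to explain what a single neuron is really doing; one tiny neuron is in fact extremely complex, so much so that we cannot describe it clearly from a neuroscience standpoint. The analogy is gradually becoming outdated, and I try not to use it myself.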