2. 以人为中心的视觉理解 (ceiwu lu, SJU)

2.1 基于视频的时序建模和动作识别方法 (liming wang, NJU)

-

dataset

- 两张图:

- 注意一个区分:trimmed and untrimmed videos

-

outline

- action recognition

- action temporal localization

- action spatial detection

- action spatial-temporal detection

-

opportunities and challenges

- opportunities :

- videos provide huge and rich data for visual learning

- action is core in motion perception and has many applications in video understanding

- challenges:

- complex dynamics and temporal variations

- action vocabulary is not well defined

- noisy and weakly labels (dense labeling is expensive)

- High computational and memory cost

- opportunities :

-

temporal structure: 需要对动作进行分解:decomposition

-

常用的 Deep networks

- large-scale video classification with CNN (feifei li, CVPR2014)

- Two-Stream CNN for action recognition in videos (NIPS2014)

- learning spatiotemporal features with 3D CNN (Du Tran, ICCV2015)

- TDD (liming wang, CVPR2015)

- Real-time action recognition with enhanced motion vector CNNs (CVPR2016)

- Two Stream I3D (CVPR2017)

- R(2+1)D (CVPR2018)

- SlowFast Networks (kaiming he, CVPR2019)

-

liming wang 自己的3篇工作

- shot-term -> middle-term -> long term modeling,对应的论文是 (ARTNet -> TSN -> UntrimmedNet)

- 更多细节理解,直接看他的PPT写读后感

- 按照 liming wang 自己说的,video action recognition/detection 对于 我的VAD 基本没有帮助

-

之前看到一些很好的zhihu link: 动作识别-1, 动作识别-2, 时序行为检测-1, 时序行为检测-2, 时序行为检测-3, 时序行为检测-4,

-

所有的PPT图片

2.2 复杂视频的深度高效分析与理解方法 (yu qiao, CAS)

-

DL的一些经验性Trick介绍

-

人脸识别的开集特点 (Open-set 和 novelty detection有点类似,参考TODO)

-

Center Loss (ECCV2016)

center loss意思即为:为每一个类别提供一个类别中心,最小化min-batch中每个样本与对应类别中心的距离,这样就可以达到缩小类内距离的目的。

center loss的原理主要是在softmax loss的基础上,通过对训练集的每个类别在特征空间分别维护一个类中心,在训练过程,增加样本经过网络映射后在特征空间与类中心的距离约束,从而兼顾了类内聚合与类间分离。

-

Center Loss的改进 (IJCV2019): 用投影方向代替类中心

-

Large Margin思想设计的Loss:

- L-softmax (Liu, ICML 2016)

- A-softmax (Liu, CVPR2017)

- Additive Margin Softmax (ICLR 2018 workshop)

- CosLoss (wang, CVPR2018)

- ArcFace (CVPR2019)

-

-

Range Loss :有效应对类间样本数不均衡造成的长尾问题

- motivation:少数人(明星)具有大量的图片,多数人却只有少量图片,这种长尾分布启发了两个动机:(1)长尾分布如何影响模型性能的理论分析;(2)设计新的Loss解决这个问题

- 此处有一张图片,并且Range Loss 的 PPT缺失了

-

video action recognition

- 姿态注意力机制 RPAN (ICCV2017, Oral)

- 把行为识别和姿态估计两个任务进行结合

- 利用姿态变化,引导 RNN 对行为的动态过程进行建模

- 姿态注意力机制 RPAN (ICCV2017, Oral)

-

一篇文章

- Temporal Hallucinating for Action Recognition with Few Still Images (CVPR 2018)

-

一些图

2.3 understanding emotions in videos (yanwei fu, FDU)

- 个人感觉:这是个刚挖的新坑,有趣,值得了解下

-

applicaltion

- web video search

- video recommendation system

- avoid inappropriate advertisement

-

Tasks of Emotions in videos

- Emotion recognition

- emotion attribution

- emotion-oriented summarization

-

Challenges

- Sparsely expressed in videos

- Diverse content and variable quality

-

Knowledge Transfer

- Zero-shot Emotion learning (配一张图)

- A multi-task neural approach for emotion attribution, classification and summarization (TMM)

- Frame-Transfermer emotion classification Network (*CMR 2017)

- Zero-shot Emotion learning (配一张图)

-

Emotion-oriented summarization

- 相当于选择关键帧以及帧信息融合

-

Face emotion

- Posture, Expression, Identity in faces

-

一些图:

2.4 以人为中心视觉识别和定位中的结构化深度学习方法探索 (wanli ouyang, sdney university)

-

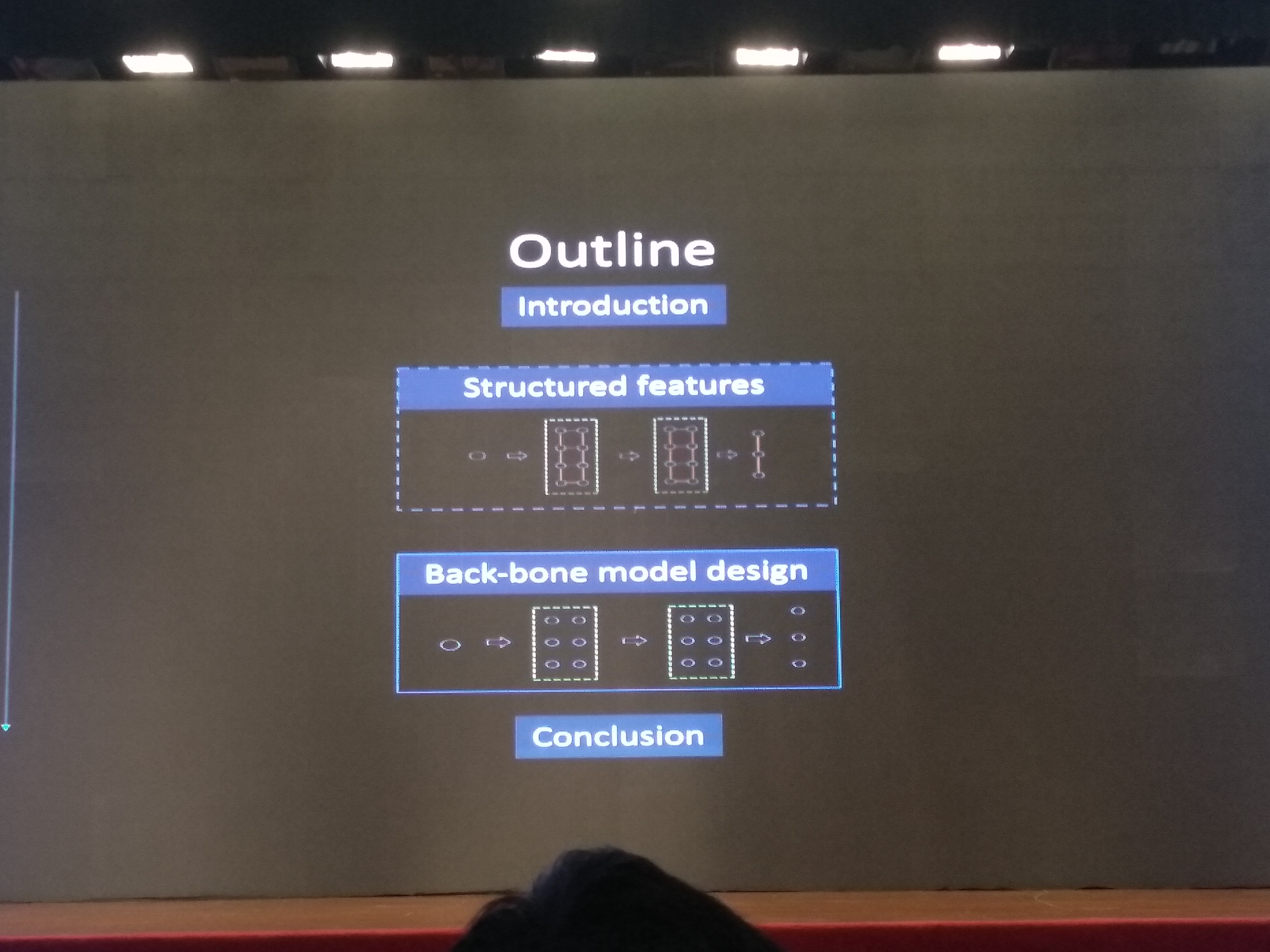

outline

- introduction

- structured feature learning

- back-bone model design

- conclusion

-

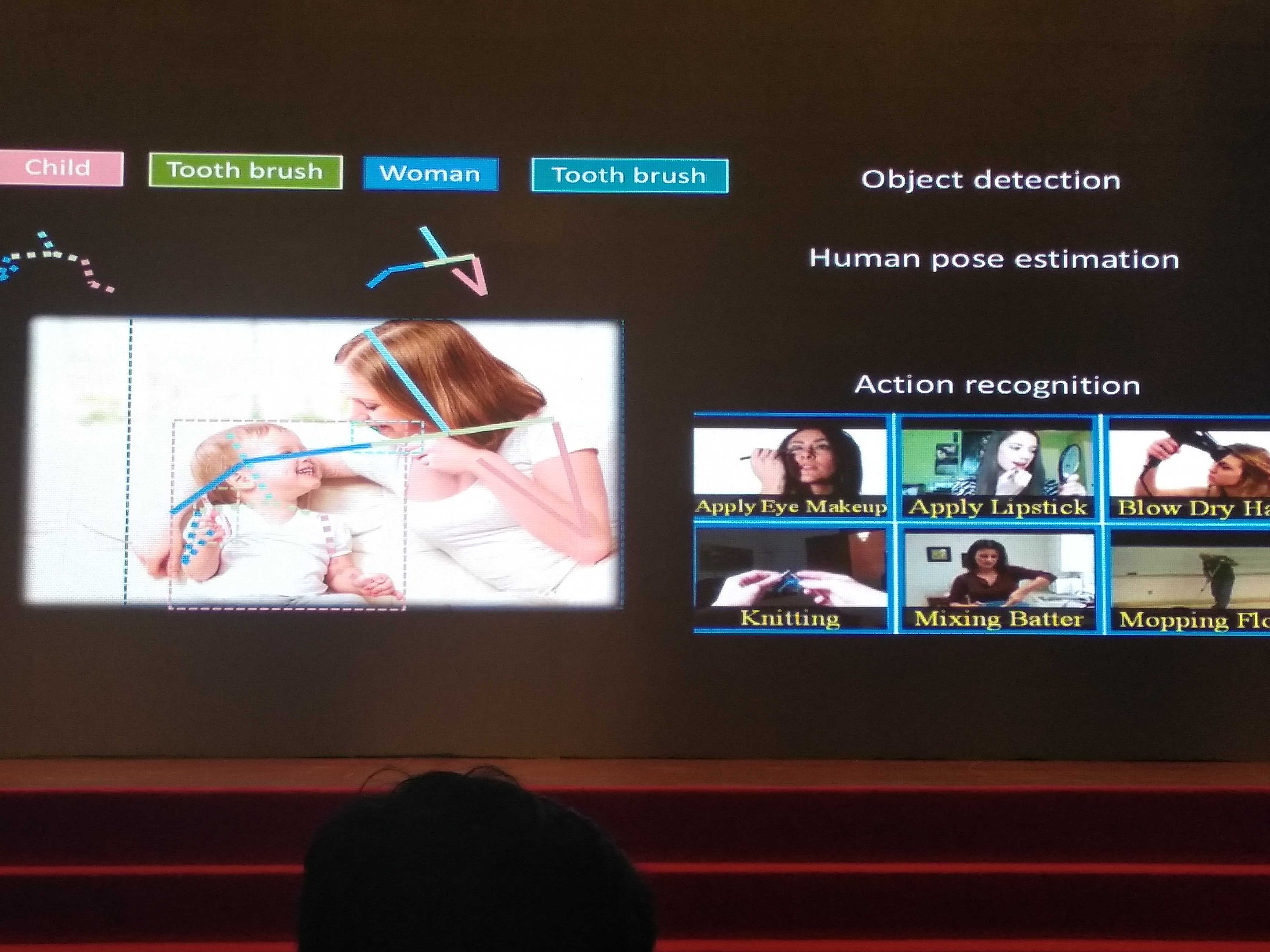

introduction

- object detection

- human pose estimation

- action recognition

-

structured feature learning

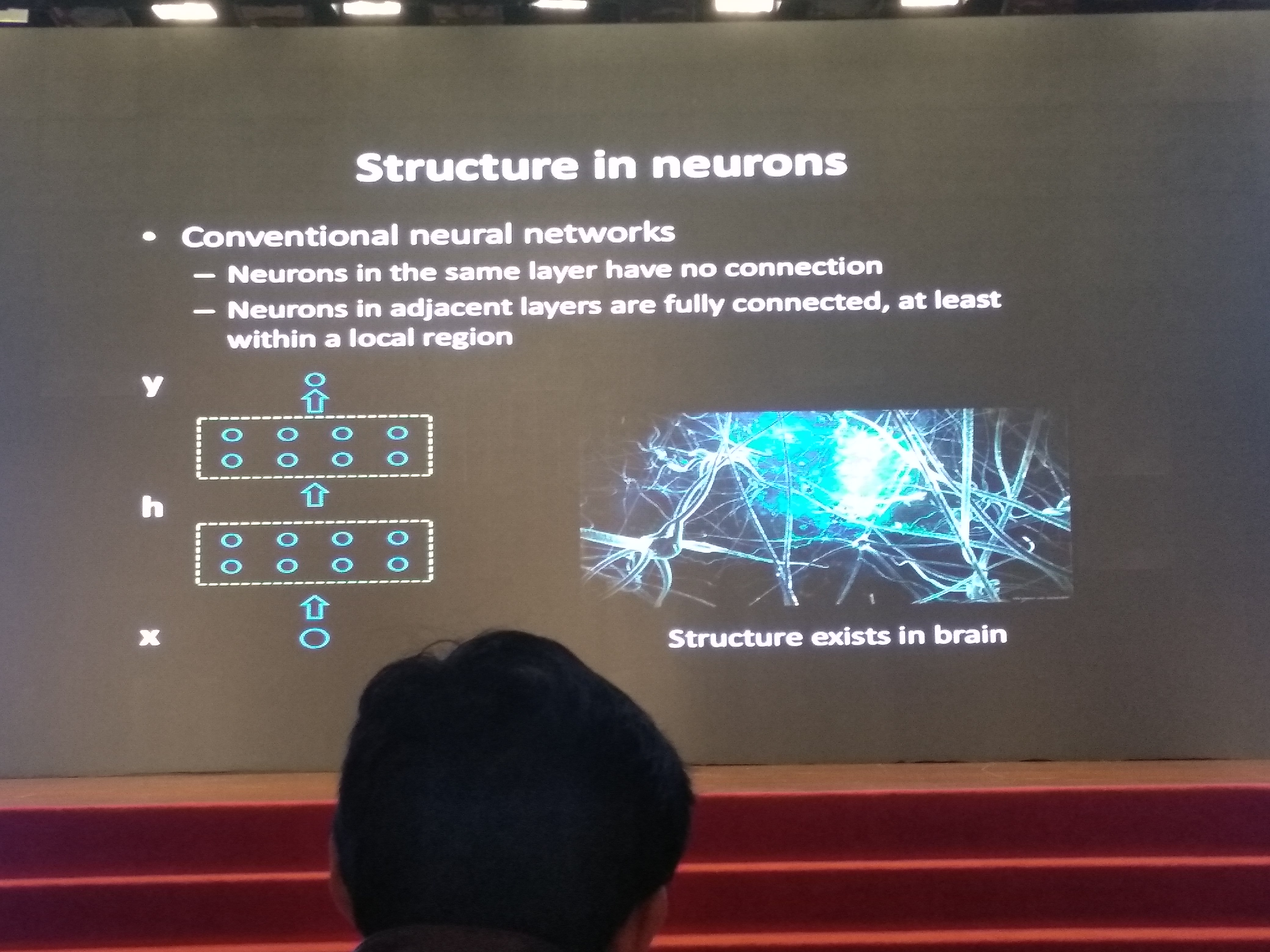

- structure in neurons

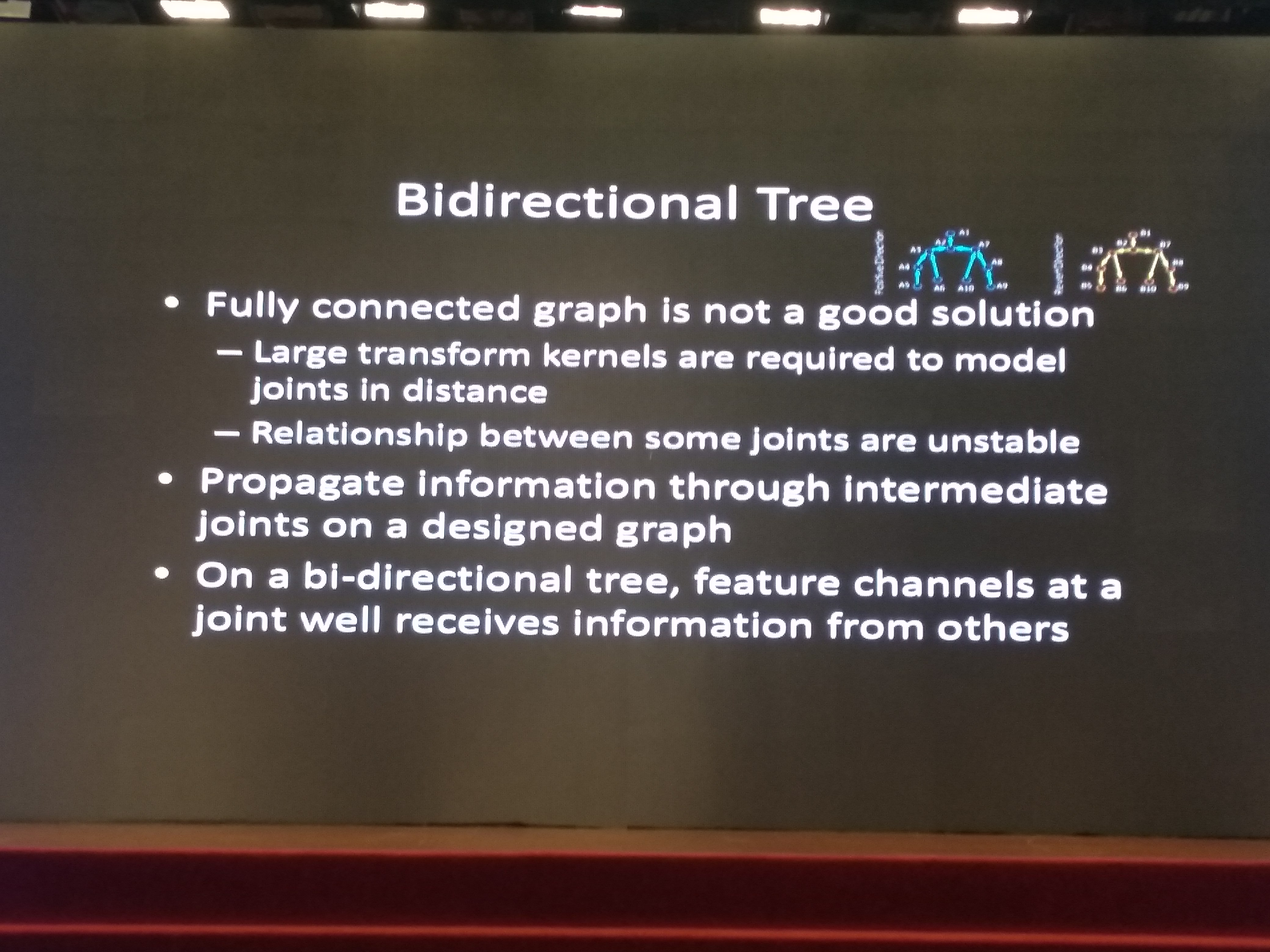

- motivation:传统 neurons 在同一层没有连接,在相邻层存在局部或者全部连接,没有保证局部区域的信息。从而引出每一层网络的各神经元具有结构化信息的。然后以人体姿态估计为例,分析了基于全连接神经网络的问题:在对人体节点的距离进行建模需要大的卷积核以及一些关节点的关系是不稳定。提出结构化特征学习的人体姿态估计模型(Bidirectional Tree)。

- Bidirectional Tree

- 对应的papers

- end-to-end learning of deformable mixture of parts and deep convolutional neural networks for human pose estimation (CVPR2016)

- structure feature learning for pose estimation (CVPR2016)

- CRF-CNN, modeling structured information in human pose estimation (CVPR2016)

- learning deep structured multi-scale features using attention-gated CRFs for contour prediction (NIPS 2017)

- application of structured feature learning

- 有一张图片

- structure in neurons

-

back-bone model design

- Hourglass for classification (Encode-Decoder 结构,比如 UNet,一般用于图像分割,不用于分类)

- 希望: feature with high-level semantics and high resolution is good

- 现实:feature with high-level semantics with low resolution

- Hourglass for classification has poor performance的原因分析:Different tasks require different resolution of features,所以提出 FishNet

- FishNet

- motivation: 为了统一利用像素级、区域级以及图像级任务的优势,欧阳万里老师提出了FishNet,FishNet的优势是:更好的将梯度传到浅层网络,所提取的特征包含了丰富的低层和高层语义信息并保留和微调了各层级信息。

- pros.

- better gradient flow to shallow layers

- features:

- contain rich low-level and high-level semantics

- are preserved and refined from each other (信息互相交流)

- code: https://github.com/kevin-ssy/FishNet

- Hourglass for classification (Encode-Decoder 结构,比如 UNet,一般用于图像分割,不用于分类)

-

conclusion

- structured deep learning is (1)effective (2)from observation

- end-to-end joint training bridges the gap between structure modeling and feature learning

-

一些图

2.5 面向监控视频的行为识别与理解 (xiaowei lin, SJU)

- 行为识别领域的task

- 基于轨迹的行为分析

- 面向任意视频的行为识别 (liming wang)

- 面向监控视频的行为识别

- 目标检测的几个点

- Tiny DSOD (BMVC 2018)

- Toward accurate one-stage object detection with AP-Loss (CVPR 2019)

- kill two birds with one stone: boosting both object detection accuracy and speed with adaptive patch-of-interest composition (2017)

- 若干应用

- 三维目标检测与姿态估计

- 多目标跟踪

- 基于目标检测、跟踪的实时场景统计分析

- 多相机跟踪

- (correspondence structure Re-ID)

- learning correspondence structure for person re-identification (TIP2017)

- person re-identification with correspondence sturcture learning (ICCV 2015)

- (Group Re-ID)

- Group re-identification: leveraging and integrating multi-grain information, (MM2018)

- 车载跨相机定位

- 无人超市

- 野生东北虎 Re-ID

- (correspondence structure Re-ID)

- 行为识别

- 多尺度特征

- action recognition with coarse-to-fine deep feature interation and asynchronous fusion (AAAI 2018)

- 时空异步guanlian

- cross-stream selective networks for action recognition (CVPR workshop 2019)

- 时空行为定位

- finding action tubes with an integrated sparse-to-dense framework (arxiv 2019)

- 监控行为识别

- 有一张图

- 多尺度特征

- 其他应用

- 实时行为/事件检测

- 基于轨迹的行为分析-个体行为分析

- a tube-and-droplet-based apporach for representing and analyzing motion trajectories (TPAMI 2017),非DL,无好感

- 基于轨迹的行为分析-轨迹聚类与挖掘

- unsupervised trajectory clustering via adaptive multi-kernel-based shrinkage (ICCV 2015),比较老。。。但可以以它为base,看最新的引用它的高质量论文即可

- 密集场景行为分析

- a diffusion and clustering-based approach for finding coherent motions and understanding crowd scenes (TIP 2016)

- finding coherent motions and semantic regions in crowd scenes: a diffusion and clustering apporach (ECCV 2014)

- 主页:link-1