第 7 章 Kafka 与 Flume

7.1 Kafka 与 Flume 比较

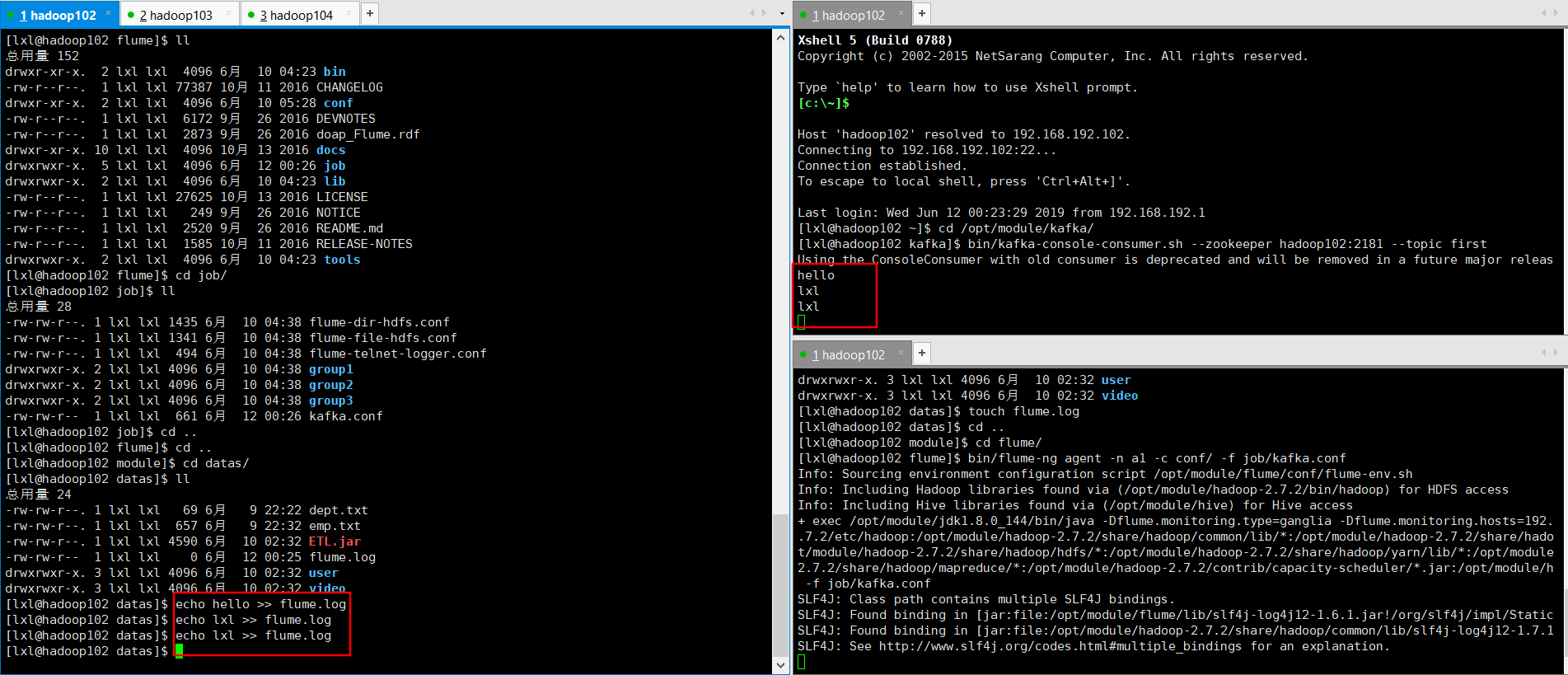

7.2 Flume 与 kafka 集成

# define a1.sources = r1 a1.sinks = k1 a1.channels = c1

# source a1.sources.r1.type = exec a1.sources.r1.command = tail -F -c +0 /opt/module/datas/flume.log a1.sources.r1.shell = /bin/bash -c

# sink a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink a1.sinks.k1.kafka.bootstrap.servers = hadoop102:9092,hadoop103:9092,hadoop104:9092 a1.sinks.k1.kafka.topic = first a1.sinks.k1.kafka.flumeBatchSize = 20 a1.sinks.k1.kafka.producer.acks = 1 a1.sinks.k1.kafka.producer.linger.ms = 1

# channel a1.channels.c1.type = memory a1.channels.c1.capacity = 1000 a1.channels.c1.transactionCapacity = 100

# bind a1.sources.r1.channels = c1 a1.sinks.k1.channel = c1

$ bin/flume-ng agent -c conf/ -n a1 -f jobs/flume-kafka.conf

$ echo hello > /opt/module/datas/flume.log

结果显示:

7.3 Kafka 配置信息

7.3.1 Broker 配置信息

|

属性 |

默认值 |

描述 |

|

broker.id |

|

必填参数,broker 的唯一标识 |

|

log.dirs |

/tmp/ka fka-logs |

Kafka 数据存放的目录。可以指定多个目录, 中间用逗号分隔,当新 partition 被创建的时会被存放到当前存放 partition 最少的目录。 |

|

port |

9092 |

BrokerServer 接受客户端连接的端口号 |

|

zookeeper.connect |

null |

Zookeeper 的 连 接 串 , 格 式 为 : hostname1:port1,hostname2:port2,hostn ame3:port3。可以填一个或多个,为了提高可靠性,建议都填上。注意,此配置允许我们 指定一个 zookeeper 路径来存放此 kafka 集 群的所有数据,为了与其他应用集群区分开, 建议在此配置中指定本集群存放目录,格式为 : hostname1:port1,hostname2:port2,hostn ame3:port3/chroot/path 。需要注意的是, 消费者的参数要和此参数一致。 |

|

message.max.bytes |

1000000 |

服务器可以接收到的最大的消息大小。注意此参 数 要 和 consumer 的 maximum.message.size 大小一致,否则会 因为生产者生产的消息太大导致消费者无法消费。 |

|

num.io.threads |

8 |

服务器用来执行读写请求的 IO 线程数,此参数的数量至少要等于服务器上磁盘的数量。 |

|

queued.max.requests |

500 |

I/O 线程可以处理请求的队列大小,若实际请 求数超过此大小,网络线程将停止接收新的请求。 |

|

socket.send.buffer.bytes |

100 * 1024 |

The SO_SNDBUFF buffer the server prefers for socket connections. |

|

socket.receive.buffer.byt es |

100 * 1024 |

The SO_RCVBUFF buffer the server prefers for socket connections. |

|

socket.request.max.bytes |

100 * 1024 * 1024 |

服务器允许请求的最大值, 用来防止内存溢出,其值应该小于 Java heap size. |

|

num.partitions |

1 |

默认 partition 数量,如果 topic 在创建时没有指定 partition 数量,默认使用此值,建议 改为 5 |

|

log.segment.bytes |

1024 * 1024 * 1024 |

Segment 文件的大小,超过此值将会自动新 建一个 segment,此值可以被 topic 级别的参数覆盖。 |

|

log.roll.{ms,hours} |

24 * 7 hours |

新建segment 文件的时间,此值可以被 topic 级别的参数覆盖。 |

|

log.retention.{ms,minute s,hours} |

7 days |

Kafka segment log 的保存周期,保存周期超过此时间日志就会被删除。此参数可以被topic 级别参数覆盖。数据量大时,建议减小 此值。 |

|

log.retention.bytes |

-1 |

每个 partition 的最大容量,若数据量超过此 值,partition 数据将会被删除。注意这个参数 |

|

|

|

控制的是每个 partition 而不是 topic。此参数 可以被 log 级别参数覆盖。 |

|

log.retention.check.inter val.ms |

5 minutes |

删除策略的检查周期 |

|

auto.create.topics.enable |

true |

自动创建 topic 参数,建议此值设置为false, 严格控制 topic 管理,防止生产者错写 topic。 |

|

default.replication.factor |

1 |

默认副本数量,建议改为 2。 |

|

replica.lag.time.max.ms |

10000 |

在此窗口时间内没有收到follower 的fetch 请 求,leader 会将其从 ISR(in-sync replicas)中移除。 |

|

replica.lag.max.messages |

4000 |

如果replica 节点落后leader 节点此值大小的 消息数量,leader 节点就会将其从ISR 中移除。 |

|

replica.socket.timeout.m s |

30 * 1000 |

replica 向 leader 发送请求的超时时间。 |

|

replica.socket.receive.buf fer.bytes |

64 * 1024 |

The socket receive buffer for network requests to the leader for replicating data. |

|

replica.fetch.max.bytes |

1024 * 1024 |

The number of byes of messages to attempt to fetch for each partition in the fetch requests the replicas send to the leader. |

|

replica.fetch.wait.max.ms |

500 |

The maximum amount of time to wait time for data to arrive on the leader in the |

|

|

|

fetch requests sent by the replicas to the leader. |

|

num.replica.fetchers |

1 |

Number of threads used to replicate messages from leaders. Increasing this value can increase the degree of I/O parallelism in the follower broker. |

|

fetch.purgatory.purge.int erval.requests |

1000 |

The purge interval (in number of requests) of the fetch request purgatory. |

|

zookeeper.session.timeo ut.ms |

6000 |

ZooKeeper session 超时时间。如果在此时间内 server 没有向 zookeeper 发送心跳, zookeeper 就会认为此节点已挂掉。 此值太 低导致节点容易被标记死亡;若太高,.会导致 太迟发现节点死亡。 |

|

zookeeper.connection.ti meout.ms |

6000 |

客户端连接 zookeeper 的超时时间。 |

|

zookeeper.sync.time.ms |

2000 |

H ZK follower 落后 ZK leader 的时间。 |

|

controlled.shutdown.ena ble |

true |

允许 broker shutdown。如果启用,broker 在关闭自己之前会把它上面的所有leaders 转 移到其它 brokers 上,建议启用,增加集群稳定性。 |

|

auto.leader.rebalance.en able |

true |

If this is enabled the controller will automatically try to balance leadership for partitions among the brokers by |

|

|

|

periodically returning leadership to the “preferred” replica for each partition if it is available. |

|

leader.imbalance.per.bro ker.percentage |

10 |

The percentage of leader imbalance allowed per broker. The controller will rebalance leadership if this ratio goes above the configured value per broker. |

|

leader.imbalance.check.i nterval.seconds |

300 |

The frequency with which to check for leader imbalance. |

|

offset.metadata.max.byt es |

4096 |

The maximum amount of metadata to allow clients to save with their offsets. |

|

connections.max.idle.ms |

600000 |

Idle connections timeout: the server socket processor threads close the connections that idle more than this. |

|

num.recovery.threads.per .data.dir |

1 |

The number of threads per data directory to be used for log recovery at startup and flushing at shutdown. |

|

unclean.leader.election.e nable |

true |

Indicates whether to enable replicas not in the ISR set to be elected as leader as a last resort, even though doing so may result in data loss. |

|

delete.topic.enable |

false |

启用 deletetopic 参数,建议设置为 true。 |

|

offsets.topic.num.partitio ns |

50 |

The number of partitions for the offset commit topic. Since changing this after deployment is currently unsupported, we recommend using a higher setting for production (e.g., 100-200). |

|

offsets.topic.retention.mi nutes |

1440 |

Offsets that are older than this age will be marked for deletion. The actual purge will occur when the log cleaner compacts the offsets topic. |

|

offsets.retention.check.in terval.ms |

600000 |

The frequency at which the offset manager checks for stale offsets. |

|

offsets.topic.replication.f actor |

3 |

The replication factor for the offset commit topic. A higher setting (e.g., three or four) is recommended in order to ensure higher availability. If the offsets topic is created when fewer brokers than the replication factor then the offsets topic will be created with fewer replicas. |

|

offsets.topic.segment.byt es |

1048576 00 |

Segment size for the offsets topic. Since it uses a compacted topic, this should be kept relatively low in order to facilitate faster log compaction and loads. |

|

offsets.load.buffer.size |

5242880 |

An offset load occurs when a broker becomes the offset manager for a set of consumer groups (i.e., when it becomes a leader for an offsets topic partition). This setting corresponds to the batch size (in bytes) to use when reading from the offsets segments when loading offsets into the offset manager’s cache. |

|

offsets.commit.required. acks |

-1 |

The number of acknowledgements that are required before the offset commit can be accepted. This is similar to the producer’s acknowledgement setting. In general, the default should not be overridden. |

|

offsets.commit.timeout. ms |

5000 |

The offset commit will be delayed until this timeout or the required number of replicas have received the offset commit. This is similar to the producer request timeout. |

7.1.1 Producer 配置信息

|

属性 |

默认值 |

描述 |

|

metadata.broker.list |

|

启动时 producer 查询 brokers 的列表,可以 |

|

|

|

是集群中所有 brokers 的一个子集。注意,这个参数只是用来获取 topic 的元信息用, producer 会从元信息中挑选合适的 broker 并 与 之 建 立 socket 连 接 。 格 式 是 : host1:port1,host2:port2。 |

|

request.required.acks |

0 |

参见 3.2 节介绍 |

|

request.timeout.ms |

10000 |

Broker 等待 ack 的超时时间,若等待时间超过此值,会返回客户端错误信息。 |

|

producer.type |

sync |

同步异步模式。async 表示异步,sync 表示同步。如果设置成异步模式,可以允许生产者以batch 的形式 push 数据,这样会极大的提高 broker 性能,推荐设置为异步。 |

|

serializer.class |

kafka.ser ializer.De faultEnc oder |

序列号类,.默认序列化成 byte[] 。 |

|

key.serializer.class |

|

Key 的序列化类,默认同上。 |

|

partitioner.class |

kafka.pr oducer.D efaultPar titioner |

Partition 类,默认对key 进行 hash。 |

|

compression.codec |

none |

指定 producer 消息的压缩格式,可选参数为: “none”, “gzip” and “snappy”。关于 |

|

|

|

压缩参见 4.1 节 |

|

compressed.topics |

null |

启用压缩的 topic 名称。若上面参数选择了一个压缩格式, 那么压缩仅对本参数指定的topic 有效,若本参数为空,则对所有 topic 有效。 |

|

message.send.max.retrie s |

3 |

Producer 发送失败时重试次数。若网络出现问题,可能会导致不断重试。 |

|

retry.backoff.ms |

100 |

Before each retry, the producer refreshes the metadata of relevant topics to see if a new leader has been elected. Since leader election takes a bit of time, this property specifies the amount of time that the producer waits before refreshing the metadata. |

|

topic.metadata.refresh.i nterval.ms |

600 * 1000 |

The producer generally refreshes the topic metadata from brokers when there is a failure (partition missing, leader not available…). It will also poll regularly (default: every 10min so 600000ms). If you set this to a negative value, metadata will only get refreshed on failure. If you set this to zero, the metadata will get refreshed after each message sent (not |

|

|

|

recommended). Important note: the refresh happen only AFTER the message is sent, so if the producer never sends a message the metadata is never refreshed |

|

queue.buffering.max.ms |

5000 |

启用异步模式时,producer 缓存消息的时间。 比如我们设置成 1000 时,它会缓存 1 秒的数据再一次发送出去, 这样可以极大的增加 broker 吞吐量,但也会造成时效性的降低。 |

|

queue.buffering.max.me ssages |

10000 |

采用异步模式时 producer buffer 队列里最大缓存的消息数量, 如果超过这个数值, producer 就会阻塞或者丢掉消息。 |

|

queue.enqueue.timeout. ms |

-1 |

当达到上面参数值时 producer 阻塞等待的时间。如果值设置为 0 , buffer 队列满时producer 不会阻塞,消息直接被丢掉。若值 设置为-1,producer 会被阻塞,不会丢消息。 |

|

batch.num.messages |

200 |

采用异步模式时,一个 batch 缓存的消息数 量。达到这个数量值时 producer 才会发送消息。 |

|

send.buffer.bytes |

100 * 1024 |

Socket write buffer size |

|

client.id |

“” |

The client id is a user-specified string sent in each request to help trace calls. It should logically identify the application |

|

|

|

making the request. |

7.1.2 Consumer 配置信息

|

属性 |

默认值 |

描述 |

|

group.id |

|

Consumer 的 组 ID , 相 同 goup.id 的 consumer 属于同一个组。 |

|

zookeeper.connect |

|

Consumer 的 zookeeper 连接串, 要和 broker 的配置一致。 |

|

consumer.id |

null |

如果不设置会自动生成。 |

|

socket.timeout.ms |

30 * 1000 |

网络请求的 socket 超时时间。实际超时时间 由 max.fetch.wait + socket.timeout.ms 确定。 |

|

socket.receive.buffer.byt es |

64 * 1024 |

The socket receive buffer for network requests. |

|

fetch.message.max.byte s |

1024 * 1024 |

查询topic-partition 时允许的最大消息大小。 consumer 会为每个 partition 缓存此大小的消息到内存, 因此, 这个参数可以控制consumer 的内存使用量。这个值应该至少比 server 允 许 的 最 大 消 息 大 小 大 , 以 免 producer 发送的消息大于 consumer 允许的消息。 |

|

num.consumer.fetchers |

1 |

The number fetcher threads used to fetch data. |

|

auto.commit.enable |

true |

如果此值设置为 true,consumer 会周期性的 把当前消费的 offset 值保存到 zookeeper。 |

|

|

|

当 consumer 失败重启之后将会使用此值作为新开始消费的值。 |

|

auto.commit.interval.ms |

60 * 1000 |

Consumer 提交 offset 值到 zookeeper 的周期。 |

|

queued.max.message.ch unks |

2 |

用来被 consumer 消费的 message chunks 数 量 , 每 个 chunk 可 以 缓 存 fetch.message.max.bytes 大小的数据量。 |

|

auto.commit.interval.ms |

60 * 1000 |

Consumer 提交 offset 值到 zookeeper 的周期。 |

|

queued.max.message.ch unks |

2 |

用来被 consumer 消费的 message chunks 数 量 , 每 个 chunk 可 以 缓 存 fetch.message.max.bytes 大小的数据量。 |

|

fetch.min.bytes |

1 |

The minimum amount of data the server should return for a fetch request. If insufficient data is available the request will wait for that much data to accumulate before answering the request. |

|

fetch.wait.max.ms |

100 |

The maximum amount of time the server will block before answering the fetch request if there isn’t sufficient data to immediately satisfy fetch.min.bytes. |

|

rebalance.backoff.ms |

2000 |

Backoff time between retries during |

|

|

|

rebalance. |

|

refresh.leader.backoff.ms |

200 |

Backoff time to wait before trying to determine the leader of a partition that has just lost its leader. |

|

auto.offset.reset |

largest |

What to do when there is no initial offset in ZooKeeper or if an offset is out of range ;smallest : automatically reset the offset to the smallest offset; largest : automatically reset the offset to the largest offset;anything else: throw exception to the consumer |

|

consumer.timeout.ms |

-1 |

若在指定时间内没有消息消费,consumer 将会抛出异常。 |

|

exclude.internal.topics |

true |

Whether messages from internal topics (such as offsets) should be exposed to the consumer. |

|

zookeeper.session.timeo ut.ms |

6000 |

ZooKeeper session timeout. If the consumer fails to heartbeat to ZooKeeper for this period of time it is considered dead and a rebalance will occur. |

|

zookeeper.connection.ti meout.ms |

6000 |

The max time that the client waits while establishing a connection to zookeeper. |

|

zookeeper.sync.time.ms |

2000 |

How far a ZK follower can be behind a ZK leader |