auto: Neural Architecture Search (NAS)

Top-1 and Top-5 accuracy on ImageNet (values marked with * are multi-model results):

| Model | auto (NAS) | Version | arXiv | Top-1 Acc (%) | Top-5 Acc (%) | Params |
|---|---|---|---|---|---|---|
| VGG | | | | | | |
| GoogLeNet | | inception-v1 | https://arxiv.org/pdf/1409.4842.pdf | - | 93.33 | |
| | | inception-v2 | https://arxiv.org/pdf/1502.03167.pdf | 78 | 94.18 | |
| | | | | 79.9 | 95.1 | |
| | | inception-v3 | https://arxiv.org/pdf/1512.00567.pdf | *82.8 | *96.42 | 27.1M |
| | | inception-v4 | https://arxiv.org/pdf/1602.07261.pdf | *83.5 | *96.9 | 42.0M |
| ResNet | | ResNet50 | https://arxiv.org/pdf/1512.03385.pdf | 75.3 | 92.70 | 26.0M |
| | | ResNet101 | | 76.4 | 92.9 | |
| | | ResNet152 | | 77 | 93.3 | 66.0M |
| ResNeXt | | ResNeXt101-32x4d | https://arxiv.org/pdf/1611.05431.pdf | 78.8 | 94.4 | |
| | | ResNeXt101-64x4d | | 79.6 | 94.7 | |
| DenseNet | | densenet121 | https://arxiv.org/pdf/1608.06993.pdf | 74.98 | 92.3 | |
| | | densenet264 | | 77.9 | 93.9 | 34M |
| SENet | | SENet50 | https://arxiv.org/pdf/1709.01507.pdf | 27.7 | | |
| | | SENet101 | | 79.42 | - | 49.2M |
| | | SENet154 | | 82.7 | 96.2 | 146M |
| ResNet-BoT | | ResNet50 | https://arxiv.org/pdf/1812.01187.pdf | 79.29 | 94.63 | |
| | | ResNet101 | | 80.54 | - | 44.6M |
| MixNet (MixConv) | √ | MixNet-S | https://arxiv.org/pdf/1907.09595.pdf | 75.8 | 92.8 | 4.1M |
| | √ | MixNet-M | | 77.0 | 93.3 | 5.3M |
| | √ | MixNet-L | | 78.9 | 94.2 | 7.0M |
| EfficientNet | | B1 | https://arxiv.org/pdf/1905.11946.pdf | 79.2 | 94.5 | 7.8M |
| | | B2 | | 80.3 | 95 | 9.2M |
| | | B3 | | 81.7 | 95.6 | 12M |
| | | B4 | | 83 | 96.3 | 19M |
| | | B7 | | 84.4 | 97.1 | 66M |
| SKNet | | SKNet50 | https://arxiv.org/pdf/1903.06586.pdf | 79.21 | - | 27.5M |
| | | SKNet101 | | 79.81 | - | 48.9M |
| ResNeSt | | ResNeSt50 | https://arxiv.org/pdf/2004.08955.pdf | 81.13 | - | 27.5M |
| | | ResNeSt101 | | 82.27 | - | 48.3M |

ResNet-BoT (Bag of Tricks): the main methods for boosting accuracy at almost no extra cost:

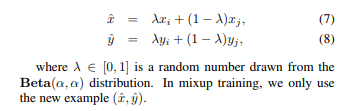

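(1) Mixup data augmentation: very simple. Two images are blended pixel by pixel as a weighted sum, and their labels are mixed with the same weight.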
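A minimal NumPy sketch of mixup, assuming both images are float arrays of the same shape and labels are one-hot vectors. The mixing weight λ is drawn from Beta(α, α); α = 0.2 and the function name `mixup` are illustrative assumptions, not taken from the notes.

```python
import numpy as np

def mixup(x1, y1, x2, y2, alpha=0.2, rng=None):
    """Blend two samples; alpha=0.2 is an assumed default for illustration."""
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)          # mixing weight in [0, 1]
    x = lam * x1 + (1.0 - lam) * x2       # pixel-wise weighted sum of the images
    y = lam * y1 + (1.0 - lam) * y2       # labels mixed with the same weight
    return x, y, lam

# Toy example: an all-ones image of class 0 and an all-zeros image of class 1.
rng = np.random.default_rng(0)
img_a, img_b = np.ones((4, 4, 3)), np.zeros((4, 4, 3))
y_a, y_b = np.array([1.0, 0.0]), np.array([0.0, 1.0])
x, y, lam = mixup(img_a, y_a, img_b, y_b, rng=rng)
```

Because the labels are mixed too, the loss is computed against a soft target `y` rather than a single class index.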

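(2) Label smoothing: first proposed for training Inception-v2. Instead of a one-hot target, the true class gets probability 1 − ε and the remaining ε is spread over the other classes, which discourages over-confident outputs.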

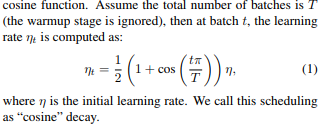

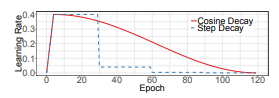
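A minimal NumPy sketch of label smoothing combined with cross-entropy. It assumes the variant where the true class y gets 1 − ε and each of the other K − 1 classes gets ε / (K − 1); ε = 0.1 and the helper names `smooth_labels` and `smoothed_cross_entropy` are illustrative assumptions.

```python
import numpy as np

def smooth_labels(y, num_classes, eps=0.1):
    """Target q: 1 - eps for the true class, eps / (K - 1) for every other class."""
    q = np.full(num_classes, eps / (num_classes - 1))
    q[y] = 1.0 - eps
    return q

def smoothed_cross_entropy(logits, y, eps=0.1):
    """Cross-entropy between the smoothed target and softmax(logits)."""
    z = logits - logits.max()                  # shift for numerical stability
    log_p = z - np.log(np.exp(z).sum())        # log-softmax
    q = smooth_labels(y, logits.shape[0], eps)
    return -(q * log_p).sum()

q = smooth_labels(2, num_classes=5, eps=0.1)   # [0.025, 0.025, 0.9, 0.025, 0.025]
```

With ε = 0 this reduces to ordinary one-hot cross-entropy.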

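(3) Cosine learning-rate decay: combined with warmup, the learning rate starts at 0, rises linearly to its initial value, and then decays along a cosine curve.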
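A sketch of this schedule computed per step: linear warmup from 0 to the base rate η, then cosine decay η_t = ½(1 + cos(πt/T))·η down to 0, where t counts steps after warmup and T is the number of post-warmup steps. The function name `lr_at`, the step-based (rather than epoch-based) granularity and the numbers in the example are assumptions for illustration.

```python
import math

def lr_at(step, total_steps, base_lr, warmup_steps):
    """Learning rate at a given step: linear warmup, then cosine decay to 0."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps      # starts at exactly 0
    t = step - warmup_steps                       # steps since warmup ended
    T = total_steps - warmup_steps                # length of the cosine phase
    return 0.5 * (1.0 + math.cos(math.pi * t / T)) * base_lr

# 100 steps in total, 10 warmup steps, base learning rate 0.1.
schedule = [lr_at(s, total_steps=100, base_lr=0.1, warmup_steps=10) for s in range(101)]
```

The rate peaks at `base_lr` when warmup ends, is half of it midway through the cosine phase, and reaches 0 at the final step.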

MixNet (MixConv): an improvement to the convolution operator itself, somewhat similar to Res2Net.

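It uses grouped convolution: the channels are split into groups and each group gets a depthwise convolution with a different kernel size, so a single convolutional layer effectively covers several receptive fields. NAS is then used to obtain the MixNet family.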
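A NumPy sketch of a single MixConv-style layer as described above: channels are split into groups and each group goes through a depthwise convolution with its own kernel size (3, 5 and 7 here). It assumes stride 1, "same" zero padding, no bias, and random weights; the helper names and the "remainder goes to the first group" split are assumptions for illustration, not the MixNet reference code. The NAS step that chooses the kernel sizes is not shown.

```python
import numpy as np

def depthwise_conv2d(x, kernels):
    """x: (C, H, W); kernels: (C, k, k), odd k. Each channel uses only its own kernel."""
    c, h, w = x.shape
    k = kernels.shape[-1]
    p = k // 2                                        # 'same' padding for odd k
    xp = np.pad(x, ((0, 0), (p, p), (p, p)))          # zero-pad H and W only
    out = np.zeros(x.shape)
    for i in range(k):
        for j in range(k):
            # cross-correlation, as in most deep-learning frameworks
            out += kernels[:, i, j][:, None, None] * xp[:, i:i + h, j:j + w]
    return out

def split_channels(c, n_groups):
    """Near-equal channel split; the first group absorbs any remainder."""
    sizes = [c // n_groups] * n_groups
    sizes[0] += c - sum(sizes)
    return sizes

def mixconv(x, kernels_per_group):
    """Apply one depthwise conv per channel group, then concatenate along channels."""
    outs, start = [], 0
    for kern in kernels_per_group:
        size = kern.shape[0]
        outs.append(depthwise_conv2d(x[start:start + size], kern))
        start += size
    assert start == x.shape[0], "groups must cover all channels"
    return np.concatenate(outs, axis=0)

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 6, 6))                    # 8 channels, 6x6 feature map
sizes = split_channels(8, 3)                          # [4, 2, 2]
kernels = [rng.standard_normal((s, k, k)) for s, k in zip(sizes, (3, 5, 7))]
y = mixconv(x, kernels)                               # same shape as x
```

The output keeps the input shape, but channels 0 to 3 see a 3x3 neighbourhood, channels 4 and 5 a 5x5 one, and channels 6 and 7 a 7x7 one.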