```python
from mxnet import nd, autograd, init, gluon
from mxnet.gluon import data as gdata, loss as gloss, nn

num_inputs = 2
num_examples = 1000
true_w = [2, -3.4]
true_b = 4.2
features = nd.random.normal(scale=1, shape=(num_examples, num_inputs))
labels = true_w[0] * features[:, 0] + true_w[1] * features[:, 1] + true_b
labels += nd.random.normal(scale=0.01, shape=labels.shape)

# Build the mini-batch dataset
dataset = gdata.ArrayDataset(features, labels)
batch_size = 10
data_iter = gdata.DataLoader(dataset, batch_size, shuffle=True)

# Define the network
net = nn.Sequential()
net.add(nn.Dense(1))
net.initialize(init.Normal(sigma=0))

# Loss function
loss = gloss.L2Loss()

# Optimization algorithm
trainer = gluon.Trainer(net.collect_params(), 'sgd', {'learning_rate': 0.01})

num_epochs = 3
for epoch in range(1, num_epochs + 1):
    for X, y in data_iter:
        # Print each mini-batch to inspect its contents
        print(X)
        print(y)
        with autograd.record():
            l = loss(net(X), y)
        print(l)
        l.backward()
        trainer.step(batch_size)
    # Evaluate on the full dataset after each epoch
    l = loss(net(features), labels)
    print('epoch %d, loss: %f' % (epoch, l.mean().asnumpy()))
```

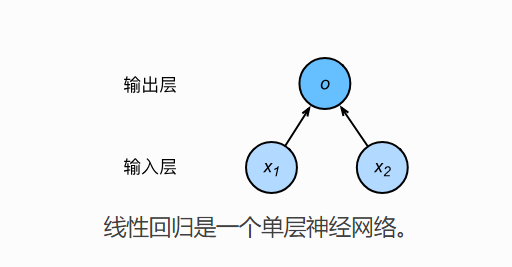

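Take the simplest case, linear regression. With mini-batch stochastic gradient descent, each `X, y` pair drawn from the iterator is itself a small dataset of `batch_size` examples, so the network's computation is also carried out one mini-batch at a time.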
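To make the batching concrete, here is a minimal pure-Python sketch of what an iterator like `data_iter` does conceptually. The helper `batch_iter` and the toy data are hypothetical and only for illustration; this is not how Gluon's `DataLoader` is implemented.

```python
import random

def batch_iter(features, labels, batch_size, shuffle=True, seed=None):
    """Yield (X, y) mini-batches of at most batch_size examples (illustrative helper)."""
    indices = list(range(len(features)))
    if shuffle:
        # Shuffle the example order once per pass over the data
        random.Random(seed).shuffle(indices)
    for start in range(0, len(indices), batch_size):
        batch = indices[start:start + batch_size]
        yield [features[i] for i in batch], [labels[i] for i in batch]

# Toy data: 25 examples with 2 features each (made up for this sketch)
features = [[float(i), float(-i)] for i in range(25)]
labels = [2.0 * f[0] - 3.4 * f[1] + 4.2 for f in features]

batch_sizes = [len(X) for X, y in batch_iter(features, labels, batch_size=10, seed=0)]
print(batch_sizes)  # [10, 10, 5]
```

With 25 examples and `batch_size=10`, the last batch is smaller than the others. In the listing above, 1000 examples divide evenly by 10, so every batch has exactly 10 rows.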

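In other words, the line `l = loss(net(X), y)` operates on the whole mini-batch: every example in the batch is passed through the network, and the loss between each output and its true label is computed, so `l` holds one loss value per example.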
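The per-example loss and the resulting update can be sketched in NumPy. This is a hand-written stand-in, not the Gluon code path: the function `l2_loss`, the seed, and the zero-initialized `w` and `b` are assumptions for illustration. It uses the squared loss 0.5 * (prediction - label)^2, which is what `L2Loss` computes by default, and divides the summed gradient by `batch_size`, which as I understand it is the effect of calling `l.backward()` on a vector followed by `trainer.step(batch_size)`.

```python
import numpy as np

def l2_loss(pred, label):
    """Per-example squared loss 0.5 * (pred - label)^2, one value per example."""
    return 0.5 * (pred.reshape(-1) - label.reshape(-1)) ** 2

rng = np.random.default_rng(0)
batch_size = 10
true_w, true_b = np.array([2.0, -3.4]), 4.2

# One mini-batch: X has shape (batch_size, 2), y has shape (batch_size,)
X = rng.normal(size=(batch_size, 2))
y = X @ true_w + true_b

# Parameters start at zero (assumption for this sketch)
w, b = np.zeros(2), 0.0

pred = X @ w + b
l = l2_loss(pred, y)          # shape (batch_size,): one loss per example
loss_before = l.mean()

# Gradient of the summed loss, averaged over the batch
err = pred - y
grad_w = X.T @ err / batch_size
grad_b = err.sum() / batch_size

# One SGD step with the same learning rate as the listing
lr = 0.01
w -= lr * grad_w
b -= lr * grad_b

loss_after = l2_loss(X @ w + b, y).mean()
print(l.shape, loss_before, loss_after)
```

The point to notice is that `l` is a vector of length `batch_size`, not a scalar; the averaging over the batch happens in the update step.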