Create a regular user

# For test environments only

hostnamectl set-hostname centos-hadoop01

chmod 777 /opt/

chmod 777 /etc/profile

# For test environments only

sudo su -

useradd centos

echo "centos:centos" | chpasswd

su - centos

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

cd ~/.ssh

cat id_rsa.pub >> authorized_keys

chmod 600 authorized_keys

ssh localhost
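If `ssh localhost` still asks for a password, file modes are a common cause: sshd's StrictModes check rejects key files that are writable by group or others. The sketch below reports the mode of one path against the mode you expect. `check_mode` is a hypothetical helper written for these notes, not part of OpenSSH, and it assumes GNU `stat`.

```shell
#!/usr/bin/env bash
# check_mode PATH WANT: compare the octal mode of PATH with WANT.
# Hypothetical helper for illustration; relies on GNU stat (-c '%a').
check_mode() {
  local path="$1" want="$2" have
  have=$(stat -c '%a' "$path") || return 1   # prints the octal mode, e.g. 600
  if [ "$have" = "$want" ]; then
    echo "ok $path $have"
  else
    echo "fix $path $have -> $want"
    return 1
  fi
}

# Usage against the real files (modes shown are the conventional ones):
#   check_mode ~/.ssh 700
#   check_mode ~/.ssh/id_rsa 600
#   check_mode ~/.ssh/authorized_keys 600
```

A line starting with `fix` names the file to run `chmod` on.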

Download the JDK

jdk-8u201-linux-x64.tar.gz

Download pages

https://www.oracle.com/technetwork/java/javase/downloads/jdk9-downloads-3848520.html

https://www.oracle.com/java/technologies/javase-java-archive-javase8-downloads.html

Copy the link highlighted in red in the screenshot and download from it

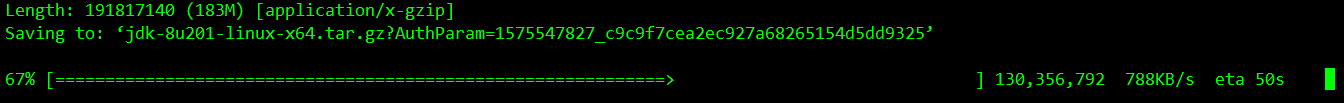

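# The AuthParam value is a temporary token, so copy a fresh link rather than reusing this one.

wget -O jdk-8u201-linux-x64.tar.gz 'https://download.oracle.com/otn/java/jdk/8u201-b09/42970487e3af4f5aa5bca3f542482c60/jdk-8u201-linux-x64.tar.gz?AuthParam=1575547827_c9c9f7cea2ec927a68265154d5dd9325'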

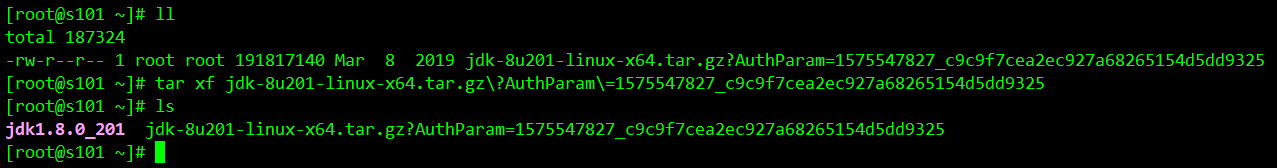

Extract it as usual (into /opt, to match the JAVA_HOME used below)

tar xf jdk-8u201-linux-x64.tar.gz -C /opt

echo -e '

# Java environment variables' >> /etc/profile

echo -e 'export JAVA_HOME=/opt/jdk1.8.0_201' >> /etc/profile

echo -e 'export PATH=$PATH:$JAVA_HOME/bin' >> /etc/profile

source /etc/profile

java -version
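The `echo ... >> /etc/profile` commands above add another copy of each line every time they are rerun. A minimal sketch of an idempotent alternative follows. `append_once` is a hypothetical helper name, not a standard command.

```shell
#!/usr/bin/env bash
# append_once FILE LINE: append LINE to FILE unless an identical line exists.
# Hypothetical helper for illustration.
append_once() {
  local file="$1" line="$2"
  # -x: match the whole line, -F: treat LINE as a fixed string, not a regex
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Usage (single quotes keep $PATH and $JAVA_HOME unexpanded in the file):
#   append_once /etc/profile 'export JAVA_HOME=/opt/jdk1.8.0_201'
#   append_once /etc/profile 'export PATH=$PATH:$JAVA_HOME/bin'
```

Running it twice with the same arguments leaves a single line in the file.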

Download Hadoop

Hadoop download locations

https://archive.apache.org/dist/hadoop/common

https://www.apache.org/dyn/closer.cgi/hadoop/common/

wget https://archive.apache.org/dist/hadoop/common/hadoop-3.2.1/hadoop-3.2.1.tar.gz

tar xf hadoop-3.2.1.tar.gz -C /opt

Install and configure Hadoop

core-site.xml

vim /opt/hadoop-3.2.1/etc/hadoop/core-site.xml

<configuration>

<!-- Address of the HDFS NameNode -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://s101:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime (including DataNode data) -->

<property>

<name>hadoop.tmp.dir</name>

<value>/opt/module/hadoop/data/tmp</value>

</property>

</configuration>
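Note that the value above uses the host name s101, while the hostname set earlier is centos-hadoop01, so s101 has to resolve to this machine (for example through /etc/hosts). To confirm what a config file actually contains after editing, the sketch below pulls one property value out of a Hadoop XML file. `get_prop` is a hypothetical helper; it assumes each `<name>` and `<value>` element sits on a single line, as in the files in these notes. Once Hadoop is on the PATH, `hdfs getconf -confKey fs.defaultFS` is the more robust check.

```shell
#!/usr/bin/env bash
# get_prop FILE NAME: print the <value> that follows <name>NAME</name>.
# Hypothetical helper; a line-based sketch, not a real XML parser.
get_prop() {
  local file="$1" name="$2"
  awk -v want="$name" '
    /<name>/  { n = $0; sub(/.*<name>/, "", n);  sub(/<\/name>.*/, "", n) }
    /<value>/ { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
                if (n == want) { print v; exit } }
  ' "$file"
}

# Usage:
#   get_prop /opt/hadoop-3.2.1/etc/hadoop/core-site.xml fs.defaultFS
#   get_prop /opt/hadoop-3.2.1/etc/hadoop/core-site.xml hadoop.tmp.dir
```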

Add environment variables

echo -e '

# Hadoop environment variables' >> /etc/profile

echo -e 'export HADOOP_HOME=/opt/hadoop-3.2.1' >> /etc/profile

echo -e 'export PATH=$PATH:$HADOOP_HOME/bin' >> /etc/profile

echo -e 'export PATH=$PATH:$HADOOP_HOME/sbin' >> /etc/profile

source /etc/profile

hdfs-site.xml

vim /opt/hadoop-3.2.1/etc/hadoop/hdfs-site.xml

<configuration>

<!-- HDFS replication factor; only one node (hadoop01) is configured here, so it is set to 1 -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>

hadoop-env.sh

vim /opt/hadoop-3.2.1/etc/hadoop/hadoop-env.sh

echo 'export JAVA_HOME=/opt/jdk1.8.0_201' >> /opt/hadoop-3.2.1/etc/hadoop/hadoop-env.sh

mapred-site.xml

vim /opt/hadoop-3.2.1/etc/hadoop/mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

yarn-site.xml

vim /opt/hadoop-3.2.1/etc/hadoop/yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s101</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

Format the NameNode (logical format of HDFS)

hdfs namenode -format
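Formatting is meant to be done once. Formatting again generates a new cluster ID, and DataNodes holding data from the old one will refuse to join. The sketch below checks for an existing format before running the command. `namenode_is_formatted` is a hypothetical helper, and it assumes `dfs.namenode.name.dir` is left at its default, which places the metadata under `dfs/name` inside `hadoop.tmp.dir`.

```shell
#!/usr/bin/env bash
# namenode_is_formatted TMP_DIR: succeed if NameNode metadata already exists.
# Hypothetical helper; assumes the default dfs.namenode.name.dir layout
# (<hadoop.tmp.dir>/dfs/name/current/VERSION).
namenode_is_formatted() {
  local tmp_dir="$1"
  [ -f "$tmp_dir/dfs/name/current/VERSION" ]
}

# Usage, with the hadoop.tmp.dir value from core-site.xml:
#   if namenode_is_formatted /opt/module/hadoop/data/tmp; then
#     echo "NameNode already formatted, skipping"
#   else
#     hdfs namenode -format
#   fi
```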

Test Hadoop

Start the HDFS and YARN daemons:

start-all.sh

Check the processes

jps
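On a single node with the configuration above, `jps` would be expected to list NameNode, DataNode, SecondaryNameNode, ResourceManager, and NodeManager. A small sketch that reads `jps` output and names whichever of these are absent follows. `missing_daemons` is a hypothetical helper, and the daemon list is an assumption based on what `start-all.sh` starts in this setup.

```shell
#!/usr/bin/env bash
# missing_daemons: read `jps` output on stdin, print the expected daemons
# that are not listed (space-separated; empty line if none are missing).
# Hypothetical helper for illustration.
missing_daemons() {
  local jps_output want missing=""
  jps_output=$(cat)
  for want in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # jps prints "<pid> <class name>"; compare the second field exactly,
    # so NameNode does not match SecondaryNameNode
    if ! printf '%s\n' "$jps_output" |
         awk -v w="$want" '$2 == w { found = 1 } END { exit !found }'; then
      missing="$missing $want"
    fi
  done
  echo "${missing# }"
}

# Usage:
#   jps | missing_daemons
```

For any daemon it names, the log files under `$HADOOP_HOME/logs` are the next place to look.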

Check in a browser (in Hadoop 3.x the NameNode web UI listens on port 9870; 50070 was the Hadoop 2.x port)

http://ip:9870/

Errors

Starting namenodes on [s101]

ERROR: Attempting to operate on hdfs namenode as root

ERROR: but there is no HDFS_NAMENODE_USER defined. Aborting operation.

Starting datanodes

ERROR: Attempting to operate on hdfs datanode as root

ERROR: but there is no HDFS_DATANODE_USER defined. Aborting operation.

Starting secondary namenodes [s101]

ERROR: Attempting to operate on hdfs secondarynamenode as root

ERROR: but there is no HDFS_SECONDARYNAMENODE_USER defined. Aborting operation.

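These errors mean that Hadoop refuses to start HDFS as root unless the run-as users are defined. Add the following lines at the top of sbin/start-dfs.sh and sbin/stop-dfs.sh (under $HADOOP_HOME):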

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root
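The edit can also be scripted. The sketch below inserts the four lines right after the first line of a script, so that a shebang stays on line 1, and skips files that were already patched. `add_user_vars` is a hypothetical helper; the variable values are copied from the lines above unchanged. If `start-all.sh` then reports the same kind of error for YARN, start-yarn.sh and stop-yarn.sh take the analogous YARN_RESOURCEMANAGER_USER and YARN_NODEMANAGER_USER variables.

```shell
#!/usr/bin/env bash
# add_user_vars SCRIPT: insert the run-as-user variables after line 1 of SCRIPT.
# Hypothetical helper; does nothing if SCRIPT was already patched.
add_user_vars() {
  local script="$1" tmp
  grep -q '^HDFS_NAMENODE_USER=' "$script" && return 0
  tmp=$(mktemp)
  {
    head -n 1 "$script"                 # keep the shebang first
    printf '%s\n' \
      'HDFS_DATANODE_USER=root' \
      'HADOOP_SECURE_DN_USER=hdfs' \
      'HDFS_NAMENODE_USER=root' \
      'HDFS_SECONDARYNAMENODE_USER=root'
    tail -n +2 "$script"                # rest of the original script
  } > "$tmp"
  cat "$tmp" > "$script"                # overwrite in place, keeping file modes
  rm -f "$tmp"
}

# Usage:
#   add_user_vars /opt/hadoop-3.2.1/sbin/start-dfs.sh
#   add_user_vars /opt/hadoop-3.2.1/sbin/stop-dfs.sh
```

Running the daemons as the centos user created earlier, instead of root, avoids the need for this patch altogether.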