Reference blog: https://mritd.me/2018/04/19/set-up-kubernetes-1.10.1-cluster-by-hyperkube/

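1. Environment

(1) System environment

| IP | OS | Docker version | Node role |
|---|---|---|---|
| 172.16.60.95 | CentOS 7 | 18.03.0-ce | master-01, etcd1 |
| 172.16.60.96 | CentOS 7 | 18.03.0-ce | master-02, etcd2 |
| 172.16.60.97 | CentOS 7 | 18.03.0-ce | node-01, etcd3 |
| 172.16.60.98 | CentOS 7 | 18.03.0-ce | node-02 |
| 172.16.60.99 | CentOS 7 | 18.03.0-ce | node-03 |

The environment consists of 5 virtual machines: 2 master nodes, 3 etcd nodes, and 3 worker nodes. Networking uses Calico, and RBAC is enabled on the cluster.

The reference blog provides downloadable installation packages, and most of those resources can be reused here.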

(2) Edit hosts (all machines)

172.16.60.95 master-01
172.16.60.96 master-02
172.16.60.97 node-01
172.16.60.98 node-02
172.16.60.99 node-03
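The host entries can be added idempotently, so that repeating the step on a machine does not create duplicates. The following is a minimal sketch only: the function names `add_host_entry` and `add_all_hosts` and the `HOSTS_FILE` variable are illustrative and not part of the original post, and the demo writes to a scratch file instead of `/etc/hosts`.

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is absent.
# HOSTS_FILE defaults to a scratch file; point it at /etc/hosts on a real node.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

add_host_entry() {
    ip="$1"; name="$2"
    # Look for the hostname as a whole whitespace-delimited field (field 2 onward).
    if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$HOSTS_FILE"; then
        printf '%s %s\n' "$ip" "$name" >> "$HOSTS_FILE"
    fi
}

add_all_hosts() {
    add_host_entry 172.16.60.95 master-01
    add_host_entry 172.16.60.96 master-02
    add_host_entry 172.16.60.97 node-01
    add_host_entry 172.16.60.98 node-02
    add_host_entry 172.16.60.99 node-03
}

add_all_hosts
add_all_hosts   # the second run adds nothing
cat "$HOSTS_FILE"
```

On a real machine this would be run as root with `HOSTS_FILE=/etc/hosts`.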

(3) Enable forwarding (all machines)

# cat <<EOF > /etc/sysctl.d/k8s.conf
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
# sysctl -p /etc/sysctl.d/k8s.conf

(4) Disable swap (all machines)

swapoff -a && sysctl -w vm.swappiness=0
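`swapoff -a` only lasts until the next reboot. One way to make the change persistent is to comment out the swap entries in `/etc/fstab`. The sketch below is an assumption about how that could be done, not a step from the original post; `disable_swap_entries` is a hypothetical helper, and the demo operates on a scratch copy with made-up device names.

```shell
#!/bin/sh
# Hypothetical helper: comment out swap entries in an fstab-style file.
disable_swap_entries() {
    fstab="$1"
    # Uncommented lines whose third field (filesystem type) is "swap" get a leading '#'.
    awk '!/^[[:space:]]*#/ && $3 == "swap" { print "#" $0; next } { print }' "$fstab" > "$fstab.tmp" &&
        mv "$fstab.tmp" "$fstab"
}

# Demo on a scratch file; the device names are illustrative only.
demo_fstab=$(mktemp)
cat > "$demo_fstab" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

disable_swap_entries "$demo_fstab"
cat "$demo_fstab"
```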

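2. Building the etcd cluster

2.1 Certificates

Both etcd and Kubernetes communicate exclusively over TLS, so the TLS certificates have to be generated first. The certificates are generated with cfssl.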

| Certificate | Configuration files | Purpose |
|---|---|---|
| etcd-root-ca.pem | etcd-root-ca-csr.json | etcd root CA certificate |
| etcd.pem | etcd-gencert.json, etcd-csr.json | etcd cluster certificate |
| k8s-root-ca.pem | k8s-root-ca-csr.json | k8s root CA certificate |
| kube-proxy.pem | k8s-gencert.json, kube-proxy-csr.json | certificate used by kube-proxy |
| admin.pem | k8s-gencert.json, admin-csr.json | certificate used by kubectl |
| kube-apiserver.pem | k8s-gencert.json, kube-apiserver-csr.json | certificate used by kube-apiserver |

2.2 Installing the cfssl tools

First download cfssl, make it executable, and move it into a directory on the PATH (the etcd installation steps are run on master-01).

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
chmod +x cfssl_linux-amd64 cfssljson_linux-amd64
mv cfssl_linux-amd64 /usr/local/bin/cfssl
mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

2.3 Generating the etcd certificates

The configuration files needed to generate the etcd certificates are as follows:

(1) etcd-csr.json

{

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"O": "etcd",

"OU": "etcd Security",

"L": "Hangzhou",

"ST": "Hangzhou",

"C": "CN"

}

],

"CN": "etcd",

"hosts": [

"127.0.0.1",

"localhost",

"172.16.60.95",

"172.16.60.96",

"172.16.60.97"

]

}

(2)etcd-gencert.json

{

"signing": {

"default": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

(3)etcd-root-ca-csr.json

{

"key": {

"algo": "rsa",

"size": 4096

},

"names": [

{

"O": "etcd",

"OU": "etcd Security",

"L": "Hangzhou",

"ST": "Hangzhou",

"C": "CN"

}

],

"CN": "etcd-root-ca"

}

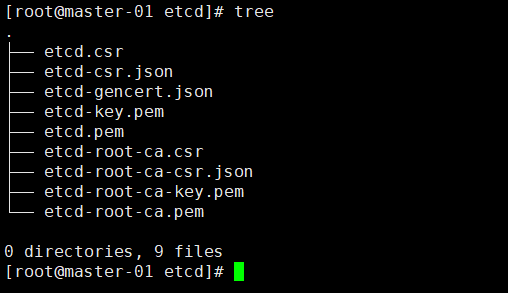

(4) Generate the certificates

cfssl gencert --initca=true etcd-root-ca-csr.json | cfssljson --bare etcd-root-ca
cfssl gencert --ca etcd-root-ca.pem --ca-key etcd-root-ca-key.pem --config etcd-gencert.json etcd-csr.json | cfssljson --bare etcd
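Because the `hosts` list in etcd-csr.json ends up as the certificate's subject alternative names, a member whose address is missing from that list will typically fail TLS verification when reached by that address. The sketch below is a simple pre-flight check under that assumption; `csr_has_hosts` is a hypothetical helper that only looks for quoted strings in the JSON and does not parse it.

```shell
#!/bin/sh
# Hypothetical pre-flight check: every given address must appear as a quoted
# string in the CSR JSON file. Returns non-zero if any address is missing.
csr_has_hosts() {
    csr_file="$1"; shift
    missing=0
    for host in "$@"; do
        if ! grep -qF "\"$host\"" "$csr_file"; then
            echo "missing hosts entry: $host" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Demo on a scratch file that mirrors the hosts list of etcd-csr.json.
csr=$(mktemp)
cat > "$csr" <<'EOF'
{ "CN": "etcd", "hosts": [ "127.0.0.1", "localhost", "172.16.60.95", "172.16.60.96", "172.16.60.97" ] }
EOF

csr_has_hosts "$csr" 172.16.60.95 172.16.60.96 172.16.60.97 && echo "all etcd addresses present"
```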

2.4 安装Etcd

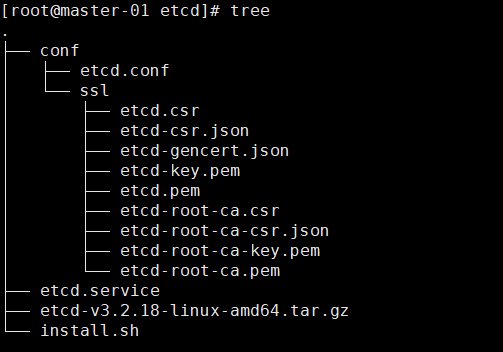

替换下载包中etcd的conf中证书,并修改etcd.conf中节点信息和ip地址,最后在master-01、master-02、node-01上安装etcd(运行install.sh)

(1)etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=-/etc/etcd/etcd.conf

User=etcd

# set GOMAXPROCS to number of processors

ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /usr/local/bin/etcd --name=\"${ETCD_NAME}\" --data-dir=\"${ETCD_DATA_DIR}\" --listen-client-urls=\"${ETCD_LISTEN_CLIENT_URLS}\""

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

(2)etcd.conf

# [member]
ETCD_NAME=etcd1
ETCD_DATA_DIR="/var/lib/etcd/etcd1.etcd"
ETCD_WAL_DIR="/var/lib/etcd/wal"
ETCD_SNAPSHOT_COUNT="100"
ETCD_HEARTBEAT_INTERVAL="100"
ETCD_ELECTION_TIMEOUT="1000"
ETCD_LISTEN_PEER_URLS="https://172.16.60.95:2380"
ETCD_LISTEN_CLIENT_URLS="https://172.16.60.95:2379,http://127.0.0.1:2379"
ETCD_MAX_SNAPSHOTS="5"
ETCD_MAX_WALS="5"
#ETCD_CORS=""

# [cluster]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.60.95:2380"
# if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."
ETCD_INITIAL_CLUSTER="etcd1=https://172.16.60.95:2380,etcd2=https://172.16.60.96:2380,etcd3=https://172.16.60.97:2380"
ETCD_INITIAL_CLUSTER_STATE="new"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_ADVERTISE_CLIENT_URLS="https://172.16.60.95:2379"
#ETCD_DISCOVERY=""
#ETCD_DISCOVERY_SRV=""
#ETCD_DISCOVERY_FALLBACK="proxy"
#ETCD_DISCOVERY_PROXY=""
#ETCD_STRICT_RECONFIG_CHECK="false"
#ETCD_AUTO_COMPACTION_RETENTION="0"

# [proxy]
#ETCD_PROXY="off"
#ETCD_PROXY_FAILURE_WAIT="5000"
#ETCD_PROXY_REFRESH_INTERVAL="30000"
#ETCD_PROXY_DIAL_TIMEOUT="1000"
#ETCD_PROXY_WRITE_TIMEOUT="5000"
#ETCD_PROXY_READ_TIMEOUT="0"

# [security]
ETCD_CERT_FILE="/etc/etcd/ssl/etcd.pem"
ETCD_KEY_FILE="/etc/etcd/ssl/etcd-key.pem"
ETCD_CLIENT_CERT_AUTH="true"
ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/etcd-root-ca.pem"
ETCD_AUTO_TLS="true"
ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd.pem"
ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd-key.pem"
ETCD_PEER_CLIENT_CERT_AUTH="true"
ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/etcd-root-ca.pem"
ETCD_PEER_AUTO_TLS="true"

# [logging]
#ETCD_DEBUG="false"
# examples for -log-package-levels etcdserver=WARNING,security=DEBUG
#ETCD_LOG_PACKAGE_LEVELS=""
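Section 2.4 asks for the node name and IP addresses in etcd.conf to be adjusted on each member. A possible way to derive the other members' files from the etcd1 file is sketched below. `render_etcd_conf` is a hypothetical helper, and it assumes the template was written for etcd1 at 172.16.60.95; the `ETCD_INITIAL_CLUSTER` line is deliberately left untouched because it must list all three members on every node.

```shell
#!/bin/sh
# Hypothetical helper: derive one member's etcd.conf from the etcd1 template.
# Assumes the template was written for etcd1 at 172.16.60.95.
render_etcd_conf() {
    name="$1"; ip="$2"; template="$3"
    sed -e "s/^ETCD_NAME=.*/ETCD_NAME=$name/" \
        -e "s#^ETCD_DATA_DIR=.*#ETCD_DATA_DIR=\"/var/lib/etcd/$name.etcd\"#" \
        -e "/^ETCD_INITIAL_CLUSTER=/!s/172\.16\.60\.95/$ip/g" \
        "$template"
}

# Demo on a shortened scratch template (not the full file).
template=$(mktemp)
cat > "$template" <<'EOF'
ETCD_NAME=etcd1
ETCD_DATA_DIR="/var/lib/etcd/etcd1.etcd"
ETCD_LISTEN_PEER_URLS="https://172.16.60.95:2380"
ETCD_INITIAL_CLUSTER="etcd1=https://172.16.60.95:2380,etcd2=https://172.16.60.96:2380,etcd3=https://172.16.60.97:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://172.16.60.95:2379"
EOF

etcd2_conf=$(render_etcd_conf etcd2 172.16.60.96 "$template")
printf '%s\n' "$etcd2_conf"
```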

(3)install.sh

#!/bin/bash

set -e

ETCD_VERSION="3.2.18"

function download(){

if [ ! -f "etcd-v${ETCD_VERSION}-linux-amd64.tar.gz" ]; then

wget https://github.com/coreos/etcd/releases/download/v${ETCD_VERSION}/etcd-v${ETCD_VERSION}-linux-amd64.tar.gz

tar -zxvf etcd-v${ETCD_VERSION}-linux-amd64.tar.gz

fi

}

function preinstall(){

getent group etcd >/dev/null || groupadd -r etcd

getent passwd etcd >/dev/null || useradd -r -g etcd -d /var/lib/etcd -s /sbin/nologin -c "etcd user" etcd

}

function install(){

echo -e "\033[32mINFO: Copy etcd...\033[0m"

tar -zxvf etcd-v${ETCD_VERSION}-linux-amd64.tar.gz

cp etcd-v${ETCD_VERSION}-linux-amd64/etcd* /usr/local/bin

rm -rf etcd-v${ETCD_VERSION}-linux-amd64

echo -e "\033[32mINFO: Copy etcd config...\033[0m"

cp -r conf /etc/etcd

chown -R etcd:etcd /etc/etcd

chmod -R 755 /etc/etcd/ssl

echo -e "\033[32mINFO: Copy etcd systemd config...\033[0m"

cp systemd/*.service /lib/systemd/system

systemctl daemon-reload

}

function postinstall(){

if [ ! -d "/var/lib/etcd" ]; then

mkdir /var/lib/etcd

chown -R etcd:etcd /var/lib/etcd

fi

}

download

preinstall

install

postinstall

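The package directory contains install.sh alongside the conf and systemd directories that the script copies from; running install.sh completes the installation.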

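2.5 Startup and verification

The 3 etcd members should be started together. If only one is started, checking its status shows no response for a long time, since a single member cannot form a quorum on its own.

systemctl daemon-reload
systemctl start etcd
systemctl enable etcd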

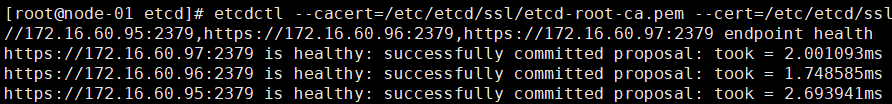

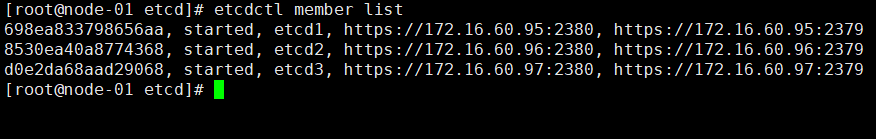

Verify the etcd cluster

export ETCDCTL_API=3
etcdctl --cacert=/etc/etcd/ssl/etcd-root-ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://172.16.60.95:2379,https://172.16.60.96:2379,https://172.16.60.97:2379 endpoint health
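The same comma-separated endpoint list is needed again later, for the apiserver's `--etcd-servers` option and for Calico's `ETCD_ENDPOINTS`. Building it once from the member IPs avoids copy-paste mistakes. This is only a convenience sketch; `join_endpoints` is a hypothetical helper, not something from the original post.

```shell
#!/bin/sh
# Hypothetical helper: build "https://IP:PORT,..." from a port and a list of IPs.
join_endpoints() {
    port="$1"; shift
    out=""
    for ip in "$@"; do
        # ${out:+$out,} inserts a comma only when out is already non-empty.
        out="${out:+$out,}https://$ip:$port"
    done
    printf '%s\n' "$out"
}

ETCD_ENDPOINTS=$(join_endpoints 2379 172.16.60.95 172.16.60.96 172.16.60.97)
echo "$ETCD_ENDPOINTS"
```

The resulting variable could then be passed as `--endpoints="$ETCD_ENDPOINTS"` to the etcdctl command above.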

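3. Installing the Kubernetes cluster components

Most steps still follow the installation method in the reference blog, but the certificates have to be generated yourself.

3.1 Generating the Kubernetes certificates

The kubeconfig files used by kubelet and kube-proxy are generated with kubectl, so kubectl has to be installed first (it is already included in the download package).

wget https://storage.googleapis.com/kubernetes-release/release/v1.10.1/bin/linux/amd64/hyperkube -O hyperkube_1.10.1
chmod +x hyperkube_1.10.1
cp hyperkube_1.10.1 /usr/local/bin/hyperkube
ln -s /usr/local/bin/hyperkube /usr/local/bin/kubectl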

(1)admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Hangzhou",

"L": "Hangzhou",

"O": "system:masters",

"OU": "System"

}

]

}

(2)k8s-gencert.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

(3)k8s-root-ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 4096

},

"names": [

{

"C": "CN",

"ST": "Hangzhou",

"L": "Hangzhou",

"O": "k8s",

"OU": "System"

}

]

}

(4)kube-apiserver-csr.json

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.254.0.1",

"172.16.60.95",

"172.16.60.96",

"172.16.60.97",

"172.16.60.98",

"172.16.60.99",

"localhost",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "HangZhou",

"L": "HangZhou",

"O": "k8s",

"OU": "System"

}

]

}

(5)kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Hangzhou",

"L": "Hangzhou",

"O": "k8s",

"OU": "System"

}

]

}

Generate the certificates and configuration files

# Generate the CA
cfssl gencert --initca=true k8s-root-ca-csr.json | cfssljson --bare k8s-root-ca

# Generate the certificates for the other components in turn
for targetName in kube-apiserver admin kube-proxy; do
    cfssl gencert --ca k8s-root-ca.pem --ca-key k8s-root-ca-key.pem --config k8s-gencert.json --profile kubernetes $targetName-csr.json | cfssljson --bare $targetName
done

# The address defaults to 127.0.0.1:6443.
# If kubelet is enabled on a master, change this address in the generated
# kubeconfig to <current MASTER_IP>:6443.
KUBE_APISERVER="https://127.0.0.1:6443"
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')
echo "Token: ${BOOTSTRAP_TOKEN}"

# The system:bootstrappers group name is not a typo; if in doubt, see the official documentation:
# https://kubernetes.io/docs/admin/kubelet-tls-bootstrapping/
cat > token.csv <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:bootstrappers"
EOF

echo "Create kubelet bootstrapping kubeconfig..."

# Set the cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=k8s-root-ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters
kubectl config set-credentials kubelet-bootstrap \
  --token=${BOOTSTRAP_TOKEN} \
  --kubeconfig=bootstrap.kubeconfig

# Set the context parameters
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kubelet-bootstrap \
  --kubeconfig=bootstrap.kubeconfig

# Set the default context
kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

echo "Create kube-proxy kubeconfig..."

# Set the cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=k8s-root-ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kube-proxy.kubeconfig

# Set the client authentication parameters
kubectl config set-credentials kube-proxy \
  --client-certificate=kube-proxy.pem \
  --client-key=kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

# Set the context parameters
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

# Set the default context
kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

# Create the advanced audit policy
cat >> audit-policy.yaml <<EOF
# Log all requests at the Metadata level.
apiVersion: audit.k8s.io/v1beta1
kind: Policy
rules:
- level: Metadata
EOF
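The bootstrap token generated in the script above is 16 random bytes printed as hexadecimal, which gives a 32-character string. A quick sanity check before distributing token.csv could look like the sketch below. `is_valid_token` is a hypothetical helper, and the extra `\n` in the `tr` call is a defensive addition of this sketch rather than part of the original pipeline.

```shell
#!/bin/sh
# Same generation pipeline as in the script above, with newlines stripped as well.
BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')

# Hypothetical validator: succeeds only for exactly 32 lowercase hex characters.
is_valid_token() {
    printf '%s' "$1" | grep -Eq '^[0-9a-f]{32}$'
}

if is_valid_token "$BOOTSTRAP_TOKEN"; then
    echo "token looks well-formed"
else
    echo "unexpected token format: $BOOTSTRAP_TOKEN" >&2
fi
```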

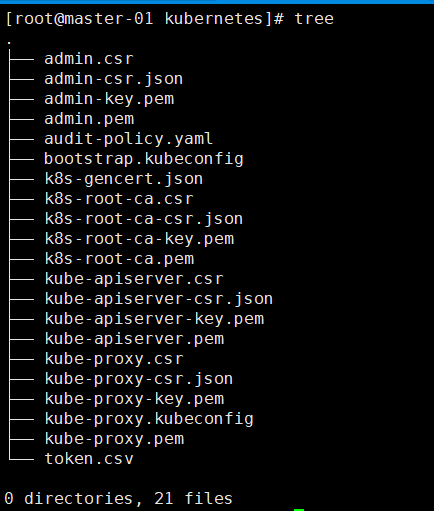

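The generated certificates

Replace the certificates in the downloaded package with them

# Path: $path/k8s/conf, where $path is the directory the package was extracted to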

3.2 Preparing the systemd units

(1)kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

After=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/apiserver

User=kube

ExecStart=/usr/local/bin/hyperkube apiserver \
            $KUBE_LOGTOSTDERR \
            $KUBE_LOG_LEVEL \
            $KUBE_ETCD_SERVERS \
            $KUBE_API_ADDRESS \
            $KUBE_API_PORT \
            $KUBELET_PORT \
            $KUBE_ALLOW_PRIV \
            $KUBE_SERVICE_ADDRESSES \
            $KUBE_ADMISSION_CONTROL \
            $KUBE_API_ARGS

Restart=on-failure

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

(2)kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/controller-manager

User=kube

ExecStart=/usr/local/bin/hyperkube controller-manager \
            $KUBE_LOGTOSTDERR \
            $KUBE_LOG_LEVEL \
            $KUBE_MASTER \
            $KUBE_CONTROLLER_MANAGER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

(3)kubelet.service

[Unit]

Description=Kubernetes Kubelet Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=docker.service

Requires=docker.service

[Service]

WorkingDirectory=/var/lib/kubelet

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/kubelet

ExecStart=/usr/local/bin/hyperkube kubelet \
            $KUBE_LOGTOSTDERR \
            $KUBE_LOG_LEVEL \
            $KUBELET_API_SERVER \
            $KUBELET_ADDRESS \
            $KUBELET_PORT \
            $KUBELET_HOSTNAME \
            $KUBE_ALLOW_PRIV \
            $KUBELET_ARGS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

(4)kube-proxy.service

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/proxy

ExecStart=/usr/local/bin/hyperkube proxy \
            $KUBE_LOGTOSTDERR \
            $KUBE_LOG_LEVEL \
            $KUBE_MASTER \
            $KUBE_PROXY_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

(5)kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler Plugin

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/config

EnvironmentFile=-/etc/kubernetes/scheduler

User=kube

ExecStart=/usr/local/bin/hyperkube scheduler \
            $KUBE_LOGTOSTDERR \
            $KUBE_LOG_LEVEL \
            $KUBE_MASTER \
            $KUBE_SCHEDULER_ARGS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

3.3 Master node configuration

A master node mainly runs 3 components: kube-apiserver, kube-controller-manager, and kube-scheduler. The configuration files they use are listed below. (Distribute the k8s directory prepared above to all nodes; every node will need it shortly.)

(1) config

config is a shared configuration file. Note that the installation places it on both master and worker nodes. On worker nodes, comment out the KUBE_MASTER variable: for HA, worker nodes have to connect to the local TLS port 6443, and this variable would override the 127.0.0.1:6443 address specified in the kubeconfig.

###
# kubernetes system config
#
# The following values are used to configure various aspects of all
# kubernetes services, including
#
#   kube-apiserver.service
#   kube-controller-manager.service
#   kube-scheduler.service
#   kubelet.service
#   kube-proxy.service
# logging to stderr means we get it in the systemd journal
KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug
KUBE_LOG_LEVEL="--v=2"

# Should this cluster be allowed to run privileged docker containers
KUBE_ALLOW_PRIV="--allow-privileged=true"

# How the controller-manager, scheduler, and proxy find the apiserver
KUBE_MASTER="--master=http://127.0.0.1:8080"

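(2) apiserver

The apiserver configuration differs slightly from 1.8: the admission control option is now named --enable-admission-plugins, and the list of plugins has changed accordingly. The officially recommended set is used here; see the official documentation for details.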

###

# kubernetes system config

#

# The following values are used to configure the kube-apiserver

#

# The address on the local server to listen to.

KUBE_API_ADDRESS="--advertise-address=172.16.60.95 --bind-address=172.16.60.95"

# The port on the local server to listen on.

KUBE_API_PORT="--secure-port=6443"

# Port minions listen on

# KUBELET_PORT="--kubelet-port=10250"

# Comma separated list of nodes in the etcd cluster

KUBE_ETCD_SERVERS="--etcd-servers=https://172.16.60.95:2379,https://172.16.60.96:2379,https://172.16.60.97:2379"

# Address range to use for services

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"

# default admission control policies

KUBE_ADMISSION_CONTROL="--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,DefaultStorageClass,DefaultTolerationSeconds,MutatingAdmissionWebhook,ValidatingAdmissionWebhook,ResourceQuota,NodeRestriction"

# Add your own!

KUBE_API_ARGS=" --anonymous-auth=false \
                --apiserver-count=3 \
                --audit-log-maxage=30 \
                --audit-log-maxbackup=3 \
                --audit-log-maxsize=100 \
                --audit-log-path=/var/log/kube-audit/audit.log \
                --audit-policy-file=/etc/kubernetes/audit-policy.yaml \
                --authorization-mode=Node,RBAC \
                --client-ca-file=/etc/kubernetes/ssl/k8s-root-ca.pem \
                --enable-bootstrap-token-auth \
                --enable-garbage-collector \
                --enable-logs-handler \
                --etcd-cafile=/etc/etcd/ssl/etcd-root-ca.pem \
                --etcd-certfile=/etc/etcd/ssl/etcd.pem \
                --etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \
                --etcd-compaction-interval=5m0s \
                --etcd-count-metric-poll-period=1m0s \
                --event-ttl=48h0m0s \
                --kubelet-https=true \
                --kubelet-timeout=3s \
                --log-flush-frequency=5s \
                --token-auth-file=/etc/kubernetes/token.csv \
                --tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \
                --tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \
                --service-node-port-range=30000-50000 \
                --service-account-key-file=/etc/kubernetes/ssl/k8s-root-ca.pem \
                --storage-backend=etcd3 \
                --enable-swagger-ui=true"

(3)controller-manager

###

# The following values are used to configure the kubernetes controller-manager

# defaults from config and apiserver should be adequate

# Add your own!

KUBE_CONTROLLER_MANAGER_ARGS=" --bind-address=0.0.0.0 \
                               --cluster-name=kubernetes \
                               --cluster-signing-cert-file=/etc/kubernetes/ssl/k8s-root-ca.pem \
                               --cluster-signing-key-file=/etc/kubernetes/ssl/k8s-root-ca-key.pem \
                               --controllers=*,bootstrapsigner,tokencleaner \
                               --deployment-controller-sync-period=10s \
                               --experimental-cluster-signing-duration=86700h0m0s \
                               --leader-elect=true \
                               --node-monitor-grace-period=40s \
                               --node-monitor-period=5s \
                               --pod-eviction-timeout=5m0s \
                               --terminated-pod-gc-threshold=50 \
                               --root-ca-file=/etc/kubernetes/ssl/k8s-root-ca.pem \
                               --service-account-private-key-file=/etc/kubernetes/ssl/k8s-root-ca-key.pem \
                               --feature-gates=RotateKubeletServerCertificate=true"

(4) scheduler

###

# kubernetes scheduler config

# default config should be adequate

# Add your own!

KUBE_SCHEDULER_ARGS=" --address=0.0.0.0 \
                      --leader-elect=true \
                      --algorithm-provider=DefaultProvider"

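3.4 Node configuration

Worker nodes mainly run the kubelet and kube-proxy components. The configuration they use is as follows.

(1) kubelet

kubelet also has certificate rotation enabled here so that the relevant certificates are renewed automatically. The --node-labels option is added to put a label on the node; this label is discussed in the last part. When starting kubelet on a master, change node-role.kubernetes.io/k8s-node=true to node-role.kubernetes.io/k8s-master=true.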

###

# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--node-ip=172.16.60.95"

# The port for the info server to serve on

# KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname

KUBELET_HOSTNAME="--hostname-override=master-01"

# location of the api-server

# KUBELET_API_SERVER=""

# Add your own!

KUBELET_ARGS=" --bootstrap-kubeconfig=/etc/kubernetes/bootstrap.kubeconfig \
               --cert-dir=/etc/kubernetes/ssl \
               --cgroup-driver=cgroupfs \
               --cluster-dns=10.254.0.2 \
               --cluster-domain=cluster.local. \
               --fail-swap-on=false \
               --feature-gates=RotateKubeletClientCertificate=true,RotateKubeletServerCertificate=true \
               --node-labels=node-role.kubernetes.io/k8s-master=true \
               --image-gc-high-threshold=70 \
               --image-gc-low-threshold=50 \
               --kube-reserved=cpu=500m,memory=512Mi,ephemeral-storage=1Gi \
               --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
               --system-reserved=cpu=1000m,memory=1024Mi,ephemeral-storage=1Gi \
               --serialize-image-pulls=false \
               --sync-frequency=30s \
               --pod-infra-container-image=k8s.gcr.io/pause-amd64:3.0 \
               --resolv-conf=/etc/resolv.conf \
               --rotate-certificates"

(2)proxy

###

# kubernetes proxy config

# default config should be adequate

# Add your own!

KUBE_PROXY_ARGS="--bind-address=0.0.0.0 \
                 --hostname-override=master-01 \
                 --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig \
                 --cluster-cidr=10.254.0.0/16"

3.5 Installing the cluster components

k8s
├── conf
│   ├── apiserver
│   ├── audit-policy.yaml
│   ├── bootstrap.kubeconfig
│   ├── config
│   ├── controller-manager
│   ├── kubelet
│   ├── kube-proxy.kubeconfig
│   ├── proxy
│   ├── scheduler
│   ├── ssl
│   │   ├── admin.csr
│   │   ├── admin-csr.json
│   │   ├── admin-key.pem
│   │   ├── admin.pem
│   │   ├── k8s-gencert.json
│   │   ├── k8s-root-ca.csr
│   │   ├── k8s-root-ca-csr.json
│   │   ├── k8s-root-ca-key.pem
│   │   ├── k8s-root-ca.pem
│   │   ├── kube-apiserver.csr
│   │   ├── kube-apiserver-csr.json
│   │   ├── kube-apiserver-key.pem
│   │   ├── kube-apiserver.pem
│   │   ├── kube-proxy.csr
│   │   ├── kube-proxy-csr.json
│   │   ├── kube-proxy-key.pem
│   │   └── kube-proxy.pem
│   └── token.csv
├── hyperkube_1.10.1
├── install.sh
└── systemd
    ├── kube-apiserver.service
    ├── kube-controller-manager.service
    ├── kubelet.service
    ├── kube-proxy.service
    └── kube-scheduler.service

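Finally, run the install.sh script from this directory to install. In addition, make sure the ipset and conntrack packages are installed on every node, because kube-proxy uses them when handling iptables rules.

yum -y install ipset conntrack-tools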
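Whether the two tools are actually available on a node can be checked before starting kube-proxy. A minimal sketch follows; `missing_tools` is a hypothetical helper, and it assumes the packages install commands named `ipset` and `conntrack`.

```shell
#!/bin/sh
# Hypothetical helper: print the name of each given command that is not on the PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

missing=$(missing_tools ipset conntrack)
if [ -n "$missing" ]; then
    echo "not installed on this node:" $missing
else
    echo "ipset and conntrack are available"
fi
```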

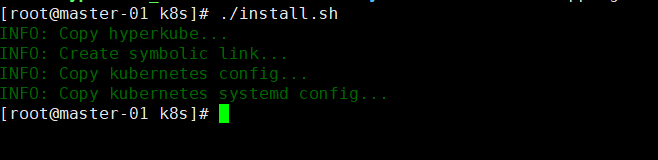

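Run install.sh to install the components

4. Starting the Kubernetes master nodes

Starting the master nodes needs little extra work. With multiple masters, just make sure the listen IP addresses in the apiserver and related configuration files are correct for each host, then start the services directly.

systemctl daemon-reload
systemctl start kube-apiserver
systemctl start kube-controller-manager
systemctl start kube-scheduler
systemctl enable kube-apiserver
systemctl enable kube-controller-manager
systemctl enable kube-scheduler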


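5. Starting the Kubernetes worker nodes

Because of HA and related requirements, worker nodes need some preparation before they can start. Two things have to be handled.

5.1 nginx-proxy

Before starting the kubelet and kube-proxy services, start a local nginx that load-balances TCP traffic to the apiserver port 6443. nginx-proxy is started with docker + systemd, configured as follows.

Note: when kubelet runs on a master node, nginx load balancing is not needed. Skip this step and change the apiserver address in kubelet.kubeconfig and kube-proxy.kubeconfig to port 6443 on the current master's IP.

- nginx-proxy.service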

[Unit]

Description=kubernetes apiserver docker wrapper

Wants=docker.socket

After=docker.service

[Service]

User=root

PermissionsStartOnly=true

ExecStart=/usr/bin/docker run -p 127.0.0.1:6443:6443 \
                              -v /etc/nginx:/etc/nginx \
                              --name nginx-proxy \
                              --net=host \
                              --restart=on-failure:5 \
                              --memory=512M \
                              nginx:1.13.12-alpine

ExecStartPre=-/usr/bin/docker rm -f nginx-proxy

ExecStop=/usr/bin/docker stop nginx-proxy

Restart=always

RestartSec=15s

TimeoutStartSec=30s

[Install]

WantedBy=multi-user.target

- nginx.conf

error_log stderr notice;

worker_processes auto;
events {
    multi_accept on;
    use epoll;
    worker_connections 1024;
}

stream {
    upstream kube_apiserver {
        least_conn;
        server 172.16.60.95:6443;
        server 172.16.60.96:6443;
    }

    server {
        listen 0.0.0.0:6443;
        proxy_pass kube_apiserver;
        proxy_timeout 10m;
    }
}

Start the local load balancer for the apiserver

mkdir /etc/nginx
cp nginx.conf /etc/nginx
cp nginx-proxy.service /lib/systemd/system
systemctl daemon-reload
systemctl start nginx-proxy
systemctl enable nginx-proxy
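Since the nginx configuration differs between clusters only in its list of master addresses, it can be generated from that list. The sketch below is one way to do so; `render_nginx_conf` is a hypothetical helper and the rest of the file is copied from the nginx.conf shown earlier.

```shell
#!/bin/sh
# Hypothetical helper: print an nginx.conf that load-balances TCP port 6443
# across the master IPs passed as arguments.
render_nginx_conf() {
    cat <<'EOF'
error_log stderr notice;

worker_processes auto;
events {
    multi_accept on;
    use epoll;
    worker_connections 1024;
}

stream {
    upstream kube_apiserver {
        least_conn;
EOF
    for ip in "$@"; do
        printf '        server %s:6443;\n' "$ip"
    done
    cat <<'EOF'
    }

    server {
        listen 0.0.0.0:6443;
        proxy_pass kube_apiserver;
        proxy_timeout 10m;
    }
}
EOF
}

render_nginx_conf 172.16.60.95 172.16.60.96
```

On a worker node the output would be redirected to `/etc/nginx/nginx.conf` before starting nginx-proxy.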

5.2 TLS bootstrapping

After nginx-proxy is set up, do not forget to create the RBAC rules for TLS bootstrapping. These rules cause the CSRs issued during TLS bootstrapping to be signed automatically, which is what makes certificate rotation work (they only need to be created once).

tls-bootstrapping-clusterrole.yaml

# A ClusterRole which instructs the CSR approver to approve a node requesting a
# serving cert matching its client cert.
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: system:certificates.k8s.io:certificatesigningrequests:selfnodeserver
rules:
- apiGroups: ["certificates.k8s.io"]
  resources: ["certificatesigningrequests/selfnodeserver"]
  verbs: ["create"]

Create them on a master

# Grant the kubelet-bootstrap user the node-bootstrapper permissions
kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap

kubectl create -f tls-bootstrapping-clusterrole.yaml

# Automatically approve the CSRs with which the system:bootstrappers group first requests a certificate during TLS bootstrapping
kubectl create clusterrolebinding node-client-auto-approve-csr \
  --clusterrole=system:certificates.k8s.io:certificatesigningrequests:nodeclient \
  --group=system:bootstrappers

# Automatically approve the CSRs with which the system:nodes group renews the certificate kubelet uses to talk to the apiserver
kubectl create clusterrolebinding node-client-auto-renew-crt \
  --clusterrole=system:certificates.k8s.io:certificatesigningrequests:selfnodeclient \
  --group=system:nodes

# Automatically approve the CSRs with which the system:nodes group renews the certificate for the kubelet API port 10250
kubectl create clusterrolebinding node-server-auto-renew-crt \
  --clusterrole=system:certificates.k8s.io:certificatesigningrequests:selfnodeserver \
  --group=system:nodes

5.3 Adjusting the configuration files

Run install.sh on all worker nodes as well, then edit the configuration files in /etc/kubernetes.

(1) config

Comment out the last line

# KUBE_MASTER="--master=http://127.0.0.1:8080"
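When several worker nodes have to be changed, the edit can be scripted. The following sketch comments out the `KUBE_MASTER` line and leaves everything else alone; `comment_out_kube_master` is a hypothetical helper, and the demo runs on a scratch file rather than `/etc/kubernetes/config`.

```shell
#!/bin/sh
# Hypothetical helper: comment out the KUBE_MASTER assignment in a config file.
# A line that is already commented no longer starts with "KUBE_MASTER=", so
# running the helper twice has no further effect.
comment_out_kube_master() {
    cfg="$1"
    sed 's/^KUBE_MASTER=/# &/' "$cfg" > "$cfg.tmp" && mv "$cfg.tmp" "$cfg"
}

# Demo on a scratch file with two lines from the shared config.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
KUBE_LOG_LEVEL="--v=2"
KUBE_MASTER="--master=http://127.0.0.1:8080"
EOF

comment_out_kube_master "$cfg"
comment_out_kube_master "$cfg"   # second run changes nothing
cat "$cfg"
```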

(2)kubelet

###

# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--node-ip=172.16.60.98"

# The port for the info server to serve on

# KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname

KUBELET_HOSTNAME="--hostname-override=node-02"

# location of the api-server

# KUBELET_API_SERVER=""

# Add your own!

KUBELET_ARGS=" --bootstrap-kubeconfig=/etc/kubernetes/bootstrap.kubeconfig \
               --cert-dir=/etc/kubernetes/ssl \
               --cgroup-driver=cgroupfs \
               --cluster-dns=10.254.0.2 \
               --cluster-domain=cluster.local. \
               --fail-swap-on=false \
               --feature-gates=RotateKubeletClientCertificate=true,RotateKubeletServerCertificate=true \
               --node-labels=node-role.kubernetes.io/k8s-node=true \
               --image-gc-high-threshold=70 \
               --image-gc-low-threshold=50 \
               --kube-reserved=cpu=250m,memory=256Mi,ephemeral-storage=1Gi \
               --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
               --system-reserved=cpu=500m,memory=512Mi,ephemeral-storage=1Gi \
               --serialize-image-pulls=false \
               --sync-frequency=30s \
               --pod-infra-container-image=k8s.gcr.io/pause-amd64:3.0 \
               --resolv-conf=/etc/resolv.conf \
               --rotate-certificates"

(3)proxy

###

# kubernetes proxy config

# default config should be adequate

# Add your own!

KUBE_PROXY_ARGS="--bind-address=0.0.0.0 \
                 --hostname-override=node-02 \
                 --kubeconfig=/etc/kubernetes/kube-proxy.kubeconfig \
                 --cluster-cidr=10.254.0.0/16"

5.4 Starting the services

systemctl daemon-reload
systemctl start kubelet
systemctl start kube-proxy
systemctl enable kubelet
systemctl enable kube-proxy

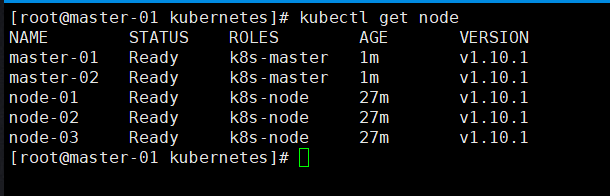

With that, the 3 worker nodes are up.

5.5 Adding the master nodes to the cluster as nodes too

Before starting kubelet and kube-proxy on a master, edit bootstrap.kubeconfig and kube-proxy.kubeconfig in /etc/kubernetes and change https://127.0.0.1:6443 to https://master_ip:6443

systemctl daemon-reload
systemctl start kubelet
systemctl start kube-proxy
systemctl enable kubelet
systemctl enable kube-proxy
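The address change in the two kubeconfig files amounts to a single substitution. A minimal sketch is shown below; `set_apiserver_address` is a hypothetical helper, and the demo uses a scratch file holding only the relevant line rather than a real kubeconfig.

```shell
#!/bin/sh
# Hypothetical helper: point a kubeconfig at a specific master instead of the
# local 127.0.0.1:6443 address. Only the server URL is rewritten.
set_apiserver_address() {
    master_ip="$1"; kubeconfig="$2"
    sed "s#https://127\.0\.0\.1:6443#https://$master_ip:6443#" "$kubeconfig" > "$kubeconfig.tmp" &&
        mv "$kubeconfig.tmp" "$kubeconfig"
}

# Demo on a scratch file containing only the server line.
kc=$(mktemp)
printf '    server: https://127.0.0.1:6443\n' > "$kc"

set_apiserver_address 172.16.60.95 "$kc"
cat "$kc"
```

On master-01 this would be applied to both `/etc/kubernetes/bootstrap.kubeconfig` and `/etc/kubernetes/kube-proxy.kubeconfig` with that master's own IP.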

6. Installing Calico

6.1 Adjusting the Calico configuration

Run on master-01

mkdir calico

cd calico

vim getCalico.sh

wget https://docs.projectcalico.org/v3.1/getting-started/kubernetes/installation/hosted/calico.yaml -O calico.example.yaml

ETCD_CERT=`cat /etc/etcd/ssl/etcd.pem | base64 | tr -d '\n'`

ETCD_KEY=`cat /etc/etcd/ssl/etcd-key.pem | base64 | tr -d '\n'`

ETCD_CA=`cat /etc/etcd/ssl/etcd-root-ca.pem | base64 | tr -d '\n'`

ETCD_ENDPOINTS="https://172.16.60.95:2379,https://172.16.60.96:2379,https://172.16.60.97:2379"

cp calico.example.yaml calico.yaml

# The two leading spaces in each replacement keep the keys aligned with the
# rest of the YAML mapping they belong to.
sed -i "s@.*etcd_endpoints:.*@  etcd_endpoints: \"${ETCD_ENDPOINTS}\"@gi" calico.yaml

sed -i "s@.*etcd-cert:.*@  etcd-cert: ${ETCD_CERT}@gi" calico.yaml

sed -i "s@.*etcd-key:.*@  etcd-key: ${ETCD_KEY}@gi" calico.yaml

sed -i "s@.*etcd-ca:.*@  etcd-ca: ${ETCD_CA}@gi" calico.yaml

sed -i 's@.*etcd_ca:.*@  etcd_ca: "/calico-secrets/etcd-ca"@gi' calico.yaml

sed -i 's@.*etcd_cert:.*@  etcd_cert: "/calico-secrets/etcd-cert"@gi' calico.yaml

sed -i 's@.*etcd_key:.*@  etcd_key: "/calico-secrets/etcd-key"@gi' calico.yaml

# Comment out the calico-node section (it is managed by systemd instead)

sed -i '123,219s@.*@#&@gi' calico.yaml

6.2 Creating the systemd unit

The systemd service file has to be created on every node

calico-systemd.sh

K8S_MASTER_IP="172.16.60.95"

HOSTNAME=`cat /etc/hostname`

ETCD_ENDPOINTS="https://172.16.60.95:2379,https://172.16.60.96:2379,https://172.16.60.97:2379"

cat > /lib/systemd/system/calico-node.service <<EOF

[Unit]

Description=calico node

After=docker.service

Requires=docker.service

[Service]

User=root

Environment=ETCD_ENDPOINTS=${ETCD_ENDPOINTS}

PermissionsStartOnly=true

ExecStart=/usr/bin/docker run --net=host --privileged --name=calico-node \

-e ETCD_ENDPOINTS=${ETCD_ENDPOINTS} \

-e ETCD_CA_CERT_FILE=/etc/etcd/ssl/etcd-root-ca.pem \

-e ETCD_CERT_FILE=/etc/etcd/ssl/etcd.pem \

-e ETCD_KEY_FILE=/etc/etcd/ssl/etcd-key.pem \

-e NODENAME=${HOSTNAME} \

-e IP= \

-e IP_AUTODETECTION_METHOD=can-reach=${K8S_MASTER_IP} \

-e AS=64512 \

-e CLUSTER_TYPE=k8s,bgp \

-e CALICO_IPV4POOL_CIDR=10.20.0.0/16 \

-e CALICO_IPV4POOL_IPIP=always \

-e CALICO_LIBNETWORK_ENABLED=true \

-e CALICO_NETWORKING_BACKEND=bird \

-e CALICO_DISABLE_FILE_LOGGING=true \

-e FELIX_IPV6SUPPORT=false \

-e FELIX_DEFAULTENDPOINTTOHOSTACTION=ACCEPT \

-e FELIX_LOGSEVERITYSCREEN=info \

-e FELIX_IPINIPMTU=1440 \

-e FELIX_HEALTHENABLED=true \

-e CALICO_K8S_NODE_REF=${HOSTNAME} \

-v /etc/calico/etcd-root-ca.pem:/etc/etcd/ssl/etcd-root-ca.pem \

-v /etc/calico/etcd.pem:/etc/etcd/ssl/etcd.pem \

-v /etc/calico/etcd-key.pem:/etc/etcd/ssl/etcd-key.pem \

-v /lib/modules:/lib/modules \

-v /var/lib/calico:/var/lib/calico \

-v /var/run/calico:/var/run/calico \

quay.io/calico/node:v3.1.0

ExecStop=/usr/bin/docker rm -f calico-node

Restart=always

RestartSec=10

[Install]

WantedBy=multi-user.target

EOF

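Run the shell script, then copy calico-systemd.sh to the other machines in the cluster and adjust the node information and IP in it.

For the K8S_MASTER_IP variable in the script above, a single master IP is enough. Calico uses this variable to pick its IP automatically: on a host with multiple network interfaces, calico node selects the address from which this master IP can be reached.

Calico stores its data in etcd, so the etcd certificates have to be copied to the relevant directory. /etc/calico must exist on every node.

mkdir -p /etc/calico
cp /etc/etcd/ssl/* /etc/calico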

6.3 Updating the kubelet configuration

With Calico in use, the kubelet configuration needs the CNI setting (--network-plugin=cni) added. The updated configuration is as follows.

###

# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--node-ip=172.16.60.99"

# The port for the info server to serve on

# KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname

KUBELET_HOSTNAME="--hostname-override=node-03"

# location of the api-server

# KUBELET_API_SERVER=""

# Add your own!

KUBELET_ARGS=" --bootstrap-kubeconfig=/etc/kubernetes/bootstrap.kubeconfig \
               --cert-dir=/etc/kubernetes/ssl \
               --cgroup-driver=cgroupfs \
               --network-plugin=cni \
               --cluster-dns=10.254.0.2 \
               --cluster-domain=cluster.local. \
               --fail-swap-on=false \
               --feature-gates=RotateKubeletClientCertificate=true,RotateKubeletServerCertificate=true \
               --node-labels=node-role.kubernetes.io/k8s-node=true \
               --image-gc-high-threshold=70 \
               --image-gc-low-threshold=50 \
               --kube-reserved=cpu=250m,memory=256Mi,ephemeral-storage=1Gi \
               --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
               --system-reserved=cpu=500m,memory=512Mi,ephemeral-storage=1Gi \
               --serialize-image-pulls=false \
               --sync-frequency=30s \
               --pod-infra-container-image=k8s.gcr.io/pause-amd64:3.0 \
               --resolv-conf=/etc/resolv.conf \
               --rotate-certificates"

6.4 Creating the Calico DaemonSet

# Create the RBAC rules first
kubectl apply -f https://docs.projectcalico.org/v3.1/getting-started/kubernetes/installation/rbac.yaml
# Then create the Calico DaemonSet
kubectl create -f calico.yaml

6.5 Starting Calico Node

systemctl daemon-reload
systemctl restart calico-node
systemctl enable calico-node
# Wait 20s for the image to be pulled
sleep 20
systemctl restart kubelet

# Because of firewall restrictions some images cannot be pulled, so pull an image that is reachable and tag it with the expected name (run on all nodes)
docker pull kubernetes/pause
docker tag kubernetes/pause k8s.gcr.io/pause-amd64:3.0

6.6 Testing the network

The network test is the same as in the other posts: create a few pods and test.

Run on master-01

# Create the deployment

cat << EOF >> demo.deploy.yml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: demo-deployment
spec:
  replicas: 5
  selector:
    matchLabels:
      app: demo
  template:
    metadata:
      labels:
        app: demo
    spec:
      containers:
      - name: demo
        image: mritd/demo
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 80
EOF

kubectl create -f demo.deploy.yml

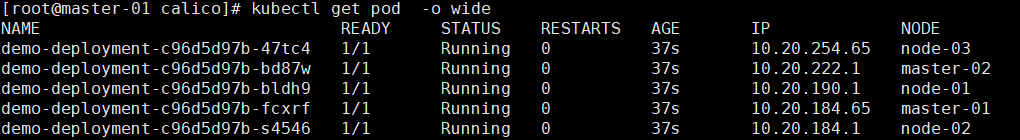

Result:

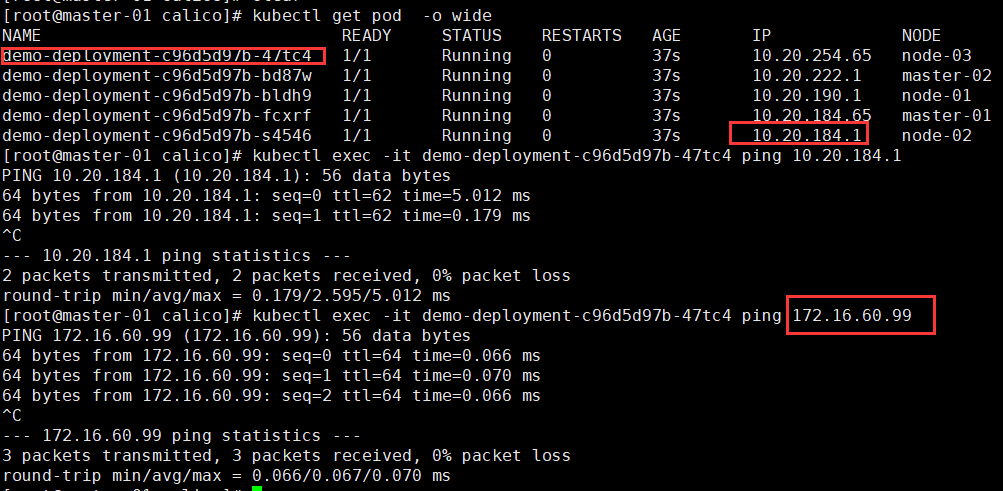

Check whether they can ping each other:

Pings succeed both between pods and between pods and hosts.

7. Deploying cluster DNS

7.1 Deploying CoreDNS

CoreDNS provides a standard deployment configuration, shown below

- coredns.yaml.sed

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch

---

apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system

---

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health
        kubernetes CLUSTER_DOMAIN REVERSE_CIDRS {
          pods insecure
          upstream
          fallthrough in-addr.arpa ip6.arpa
        }
        prometheus :9153
        proxy . /etc/resolv.conf
        cache 30
    }

---

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 2
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      serviceAccountName: coredns
      tolerations:
        - key: "CriticalAddonsOnly"
          operator: "Exists"
      containers:
      - name: coredns
        image: coredns/coredns:1.1.1
        imagePullPolicy: IfNotPresent
        args: [ "-conf", "/etc/coredns/Corefile" ]
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
            - key: Corefile
              path: Corefile

---

apiVersion: v1

kind: Service

metadata:

name: kube-dns

namespace: kube-system

annotations:

prometheus.io/scrape: "true"

labels:

k8s-app: kube-dns

kubernetes.io/cluster-service: "true"

kubernetes.io/name: "CoreDNS"

spec:

selector:

k8s-app: kube-dns

clusterIP: CLUSTER_DNS_IP

ports:

- name: dns

port: 53

protocol: UDP

- name: dns-tcp

port: 53

protocol: TCP

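Then fill in the placeholders with the script below, saved as deploy.sh (I have already adjusted its variables). Note that the script substitutes CLUSTER_DNS_IP, CLUSTER_DOMAIN, SERVICE_CIDR and POD_CIDR, while the Corefile in the template uses the placeholder REVERSE_CIDRS, which the script leaves untouched. Check the generated coredns.yaml for leftover placeholders before creating the resources.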

    #!/bin/bash
    # Deploys CoreDNS to a cluster currently running Kube-DNS.
    SERVICE_CIDR=${1:-10.254.0.0/16}
    POD_CIDR=${2:-10.20.0.0/16}
    CLUSTER_DNS_IP=${3:-10.254.0.2}
    CLUSTER_DOMAIN=${4:-cluster.local}
    YAML_TEMPLATE=${5:-$(pwd)/coredns.yaml.sed}

    sed -e "s/CLUSTER_DNS_IP/$CLUSTER_DNS_IP/g" \
        -e "s/CLUSTER_DOMAIN/$CLUSTER_DOMAIN/g" \
        -e "s?SERVICE_CIDR?$SERVICE_CIDR?g" \
        -e "s?POD_CIDR?$POD_CIDR?g" \
        "$YAML_TEMPLATE" > coredns.yaml
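The script uses `?` rather than `/` as the sed delimiter for the CIDR values because those values themselves contain `/`. The sketch below shows this on a single line and adds a check for placeholders that survived the substitution. The sample input line and both function names are illustrative assumptions, not part of the original script.

```shell
#!/bin/bash
# render_placeholders CLUSTER_DOMAIN SERVICE_CIDR POD_CIDR
# Reads template text on stdin and substitutes the placeholders.
# '?' is the sed delimiter for the CIDR values because they contain '/'.
render_placeholders() {
    sed -e "s/CLUSTER_DOMAIN/$1/g" \
        -e "s?SERVICE_CIDR?$2?g" \
        -e "s?POD_CIDR?$3?g"
}

# Reads rendered text on stdin and prints any placeholder names still present.
leftover_placeholders() {
    grep -oE 'CLUSTER_DNS_IP|CLUSTER_DOMAIN|SERVICE_CIDR|POD_CIDR|REVERSE_CIDRS' | sort -u
}

# Hypothetical template line, used only to demonstrate the substitution.
echo 'kubernetes CLUSTER_DOMAIN SERVICE_CIDR POD_CIDR {' \
    | render_placeholders cluster.local 10.254.0.0/16 10.20.0.0/16
# prints: kubernetes cluster.local 10.254.0.0/16 10.20.0.0/16 {
```

Running `leftover_placeholders < coredns.yaml` after deploy.sh should print nothing. Any name it does print is a placeholder the script did not replace.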

Create:

    # Run the substitution script above
    ./deploy.sh
    # Create CoreDNS
    kubectl create -f coredns.yaml

Verify:

    [root@master-01 coredns]# kubectl exec -it demo-deployment-c96d5d97b-47tc4 bash
    bash-4.4# cat /etc/resolv.conf
    nameserver 10.254.0.2
    search default.svc.cluster.local. svc.cluster.local. cluster.local.
    options ndots:5

Note: a ClusterIP cannot be pinged directly. It is a virtual address that iptables rules route to the service's endpoints, so the service is reachable only through the ClusterIP together with its port.
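Checking resolv.conf can be automated as well. This is a rough sketch that compares a pod's first nameserver with the cluster DNS IP; the default of 10.254.0.2 is taken from the deploy script above, and the function names are made up for illustration.

```shell
#!/bin/bash
# Print the first nameserver found in resolv.conf-style text on stdin.
first_nameserver() {
    awk '$1 == "nameserver" { print $2; exit }'
}

# check_pod_dns POD [EXPECTED_IP]
# Compare the pod's first nameserver with the expected cluster DNS IP.
check_pod_dns() {
    local pod expected actual
    pod="$1"
    expected="${2:-10.254.0.2}"
    actual=$(kubectl exec "$pod" -- cat /etc/resolv.conf | first_nameserver)
    if [ "$actual" = "$expected" ]; then
        echo "OK: $pod uses nameserver $actual"
    else
        echo "MISMATCH: $pod uses nameserver '$actual', expected $expected"
        return 1
    fi
}
```

For example, `check_pod_dns demo-deployment-c96d5d97b-47tc4` should report `OK` on this cluster. If the image also ships `nslookup` (an assumption), `kubectl exec <pod> -- nslookup kubernetes.default` exercises the DNS service on port 53 rather than relying on ping.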

8. Deploy Heapster

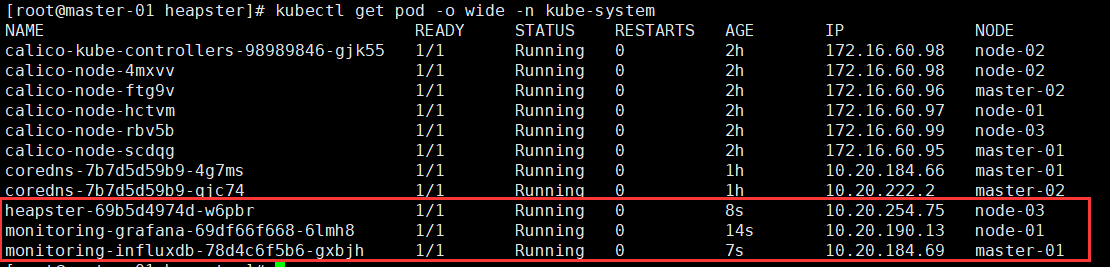

Deploying Heapster is much simpler: just create the resources from the YAML files.

    kubectl create -f https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/grafana.yaml
    kubectl create -f https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/heapster.yaml
    kubectl create -f https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/influxdb.yaml
    kubectl create -f https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/rbac/heapster-rbac.yaml

The images referenced in these YAML files may well fail to download because of the firewall. In that case, search for a suitable mirror image yourself and retag it.

For example:

    docker search heapster-grafana-amd64
    docker pull pupudaye/heapster-grafana-amd64
    docker tag pupudaye/heapster-grafana-amd64 k8s.gcr.io/heapster-grafana-amd64:v4.4.3
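Since the pull-and-retag pattern recurs throughout this guide, it can be wrapped in a small helper. The sketch below is one possible shape, not something taken from the referenced blog: `pods_with_pull_errors` and `mirror_and_tag` are hypothetical names, and the `DOCKER` override exists only so the commands can be previewed. Keep in mind that pulling a mirror without a tag fetches `latest`, which may not match the version tag it is given afterwards.

```shell
#!/bin/bash
# Read `kubectl get pods` output on stdin and print the names of pods
# whose STATUS column reports an image pull problem.
pods_with_pull_errors() {
    awk '$3 == "ImagePullBackOff" || $3 == "ErrImagePull" { print $1 }'
}

# mirror_and_tag MIRROR_IMAGE TARGET_IMAGE
# Pull an image from a reachable registry and retag it under the name the manifests expect.
# Set DOCKER=echo to print the commands instead of running them.
mirror_and_tag() {
    local docker_cmd
    docker_cmd="${DOCKER:-docker}"
    "$docker_cmd" pull "$1" && "$docker_cmd" tag "$1" "$2"
}

# Usage with the image pair from this section (run on every node):
# mirror_and_tag pupudaye/heapster-grafana-amd64 k8s.gcr.io/heapster-grafana-amd64:v4.4.3
```

Piping `kubectl get pods -n kube-system` into `pods_with_pull_errors` shows which pods still need a mirrored image.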

9. Deploy the Dashboard

9.1 Install the dashboard

(1) Download the YAML file

wget https://raw.githubusercontent.com/kubernetes/dashboard/master/src/deploy/recommended/kubernetes-dashboard.yaml -O kubernetes-dashboard.yaml

Change the Service definition at the end of the file so that the port is exposed as follows:

    # ------------------- Dashboard Service ------------------- #
    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kube-system
    spec:
      type: NodePort
      ports:
        - name: dashboard-tls
          port: 443
          targetPort: 8443
          nodePort: 30000
          protocol: TCP
      selector:
        k8s-app: kubernetes-dashboard

(2) Change the image source

    docker pull k8scn/kubernetes-dashboard-amd64
    docker tag k8scn/kubernetes-dashboard-amd64 k8s.gcr.io/kubernetes-dashboard-amd64:v1.8.3

(3) Create the certificate

When the Dashboard is accessed through a NodePort, a valid certificate must be specified before it can be reached. See Certificate management.

    mkdir ~/certs && cd ~/certs
    [root@master-01 certs]# openssl genrsa -des3 -passout pass:x -out dashboard.pass.key 2048
    Generating RSA private key, 2048 bit long modulus
    .....................+++
    .............................................+++
    e is 65537 (0x10001)
    [root@master-01 certs]# openssl rsa -passin pass:x -in dashboard.pass.key -out dashboard.key
    writing RSA key
    [root@master-01 certs]# ls
    dashboard.key  dashboard.pass.key
    [root@master-01 certs]# rm dashboard.pass.key
    rm: remove regular file ‘dashboard.pass.key’? y
    [root@master-01 certs]# openssl req -new -key dashboard.key -out dashboard.csr
    You are about to be asked to enter information that will be incorporated
    into your certificate request.
    What you are about to enter is what is called a Distinguished Name or a DN.
    There are quite a few fields but you can leave some blank
    For some fields there will be a default value,
    If you enter '.', the field will be left blank.
    -----
    Country Name (2 letter code) [XX]:CN
    State or Province Name (full name) []:ZJ
    Locality Name (eg, city) [Default City]:HZ
    Organization Name (eg, company) [Default Company Ltd]:YM
    Organizational Unit Name (eg, section) []:YM
    Common Name (eg, your name or your server's hostname) []:JF
    Email Address []:123@qq.com

    Please enter the following 'extra' attributes
    to be sent with your certificate request
    A challenge password []:123456
    An optional company name []:123456
    [root@master-01 certs]# openssl x509 -req -sha256 -days 365 -in dashboard.csr -signkey dashboard.key -out dashboard.crt
    Signature ok
    subject=/C=CN/ST=ZJ/L=HZ/O=YM/OU=YM/CN=JF/emailAddress=123@qq.com
    Getting Private key

Check the certificate files:

    [root@master-01 ~]# tree ~/certs
    /root/certs
    ├── dashboard.crt
    ├── dashboard.csr
    └── dashboard.key
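The interactive session above can be condensed into a single non-interactive command. The following is a sketch under a few assumptions: it uses `openssl req -x509` to create the key and the self-signed certificate in one step (so no `dashboard.csr` is produced), the subject string simply reuses the values typed in above, and `make_dashboard_cert` is a hypothetical helper name.

```shell
#!/bin/bash
# make_dashboard_cert DIR [SUBJECT]
# Create DIR/dashboard.key and a self-signed DIR/dashboard.crt without any prompts.
make_dashboard_cert() {
    local dir subj
    dir="$1"
    subj="${2:-/C=CN/ST=ZJ/L=HZ/O=YM/OU=YM/CN=JF}"
    mkdir -p "$dir" &&
    openssl req -x509 -nodes -newkey rsa:2048 -sha256 -days 365 \
        -subj "$subj" \
        -keyout "$dir/dashboard.key" -out "$dir/dashboard.crt" 2> /dev/null
}

# Usage:
# make_dashboard_cert "$HOME/certs"
```

The `-nodes` flag leaves the private key unencrypted, which matches the passphrase-stripping step in the manual procedure.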

(4) Create the kubernetes-dashboard secret from the certificate

    kubectl create secret generic kubernetes-dashboard-certs --from-file=$HOME/certs -n kube-system

Inspect the Kubernetes secret:

    [root@master-01 ~]# kubectl describe secret kubernetes-dashboard-certs -n kube-system
    Name:         kubernetes-dashboard-certs
    Namespace:    kube-system
    Labels:       <none>
    Annotations:  <none>

    Type:  Opaque

    Data
    ====
    dashboard.crt:  1208 bytes
    dashboard.csr:  1070 bytes
    dashboard.key:  1679 bytes

(5) Install the dashboard

    kubectl create -f kubernetes-dashboard.yaml

    [root@master-01 ~]# kubectl get pod -o wide -n kube-system | grep dashboard
    kubernetes-dashboard-7d5dcdb6d9-pfkm8   1/1   Running   0   15h   10.20.222.6   master-02
    [root@master-01 ~]# kubectl get service kubernetes-dashboard -n kube-system
    NAME                   TYPE       CLUSTER-IP      EXTERNAL-IP   PORT(S)         AGE
    kubernetes-dashboard   NodePort   10.254.105.48   <none>        443:30000/TCP   15h

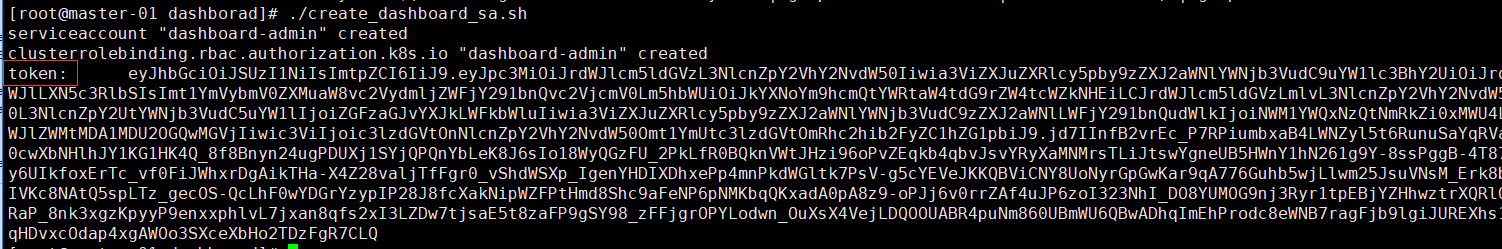
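In the service listing, a PORT(S) value of `443:30000/TCP` means that service port 443 is published on port 30000 of every node. As a small illustration, the sketch below turns such a value into the URL to open; the function names are hypothetical and the node IP is one of the hosts from the environment table.

```shell
#!/bin/bash
# Extract the node port from a PORT(S) value such as "443:30000/TCP".
node_port_from_ports() {
    echo "$1" | sed -E 's#^[0-9]+:([0-9]+)/.*$#\1#'
}

# dashboard_url NODE_IP PORTS
# Build the HTTPS URL under which the dashboard is reachable on that node.
dashboard_url() {
    echo "https://$1:$(node_port_from_ports "$2")"
}

dashboard_url 172.16.60.95 443:30000/TCP
# prints: https://172.16.60.95:30000
```

Because the certificate is self-signed, a quick reachability check from the command line needs `curl -k` to skip certificate verification.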

9.2 Create an admin account

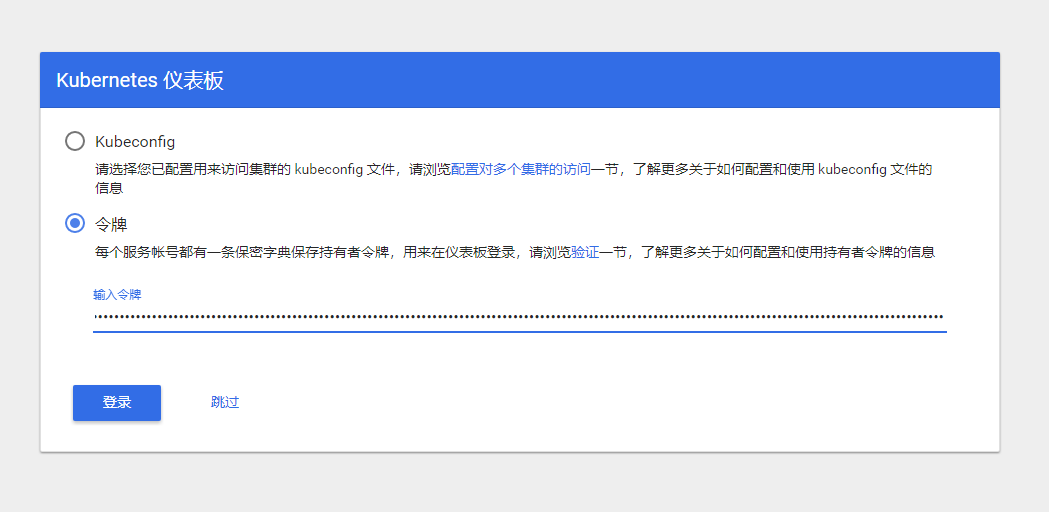

By default, once the deployment succeeds, the dashboard can be reached directly at https://NODE_IP:30000, but logging in requires either a kubeconfig file or an access token. This is RBAC authorization at work. The following script creates an admin access token:

    #!/bin/bash
    if kubectl get sa dashboard-admin -n kube-system &> /dev/null; then
        echo -e "\033[33mWARNING: ServiceAccount dashboard-admin exists!\033[0m"
    else
        kubectl create sa dashboard-admin -n kube-system
        kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
    fi
    kubectl describe secret -n kube-system $(kubectl get secrets -n kube-system | grep dashboard-admin | cut -f1 -d ' ') | grep -E '^token'

On the login page, select the Token option and paste in the copied token.
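If only the bare token is needed, for example to copy it to the clipboard, it can be read from the secret directly instead of grepping the `describe` output. This sketch assumes the cluster automatically creates a token secret for the ServiceAccount, as Kubernetes releases of this era do; `dashboard_admin_token` is an illustrative name.

```shell
#!/bin/bash
# Print the decoded access token of the dashboard-admin ServiceAccount.
dashboard_admin_token() {
    local secret
    # Name of the token secret that was generated for the ServiceAccount.
    secret=$(kubectl get sa dashboard-admin -n kube-system -o jsonpath='{.secrets[0].name}')
    # Secret data is base64-encoded, so decode it before printing.
    kubectl get secret "$secret" -n kube-system -o jsonpath='{.data.token}' | base64 --decode
}
```

Calling `dashboard_admin_token` after the script above has created the account prints the token without the `token:` prefix.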