A Single-Task Web Crawler in Go (Crawling zhenai.com)

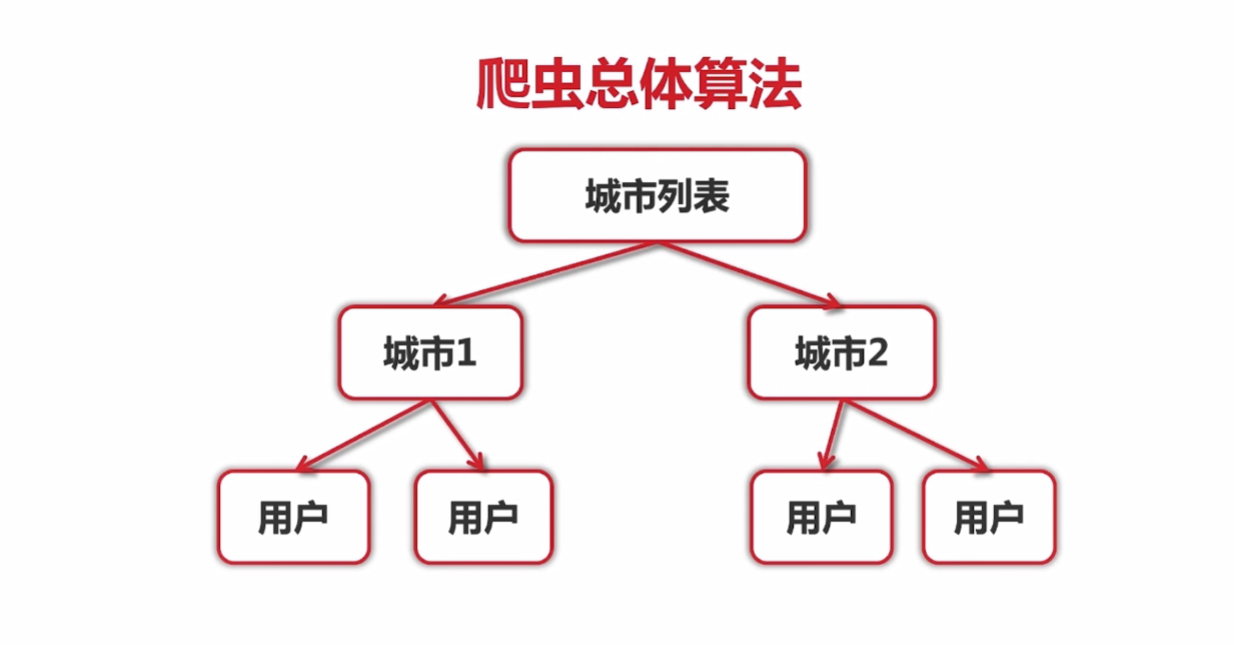

Overall crawler algorithm

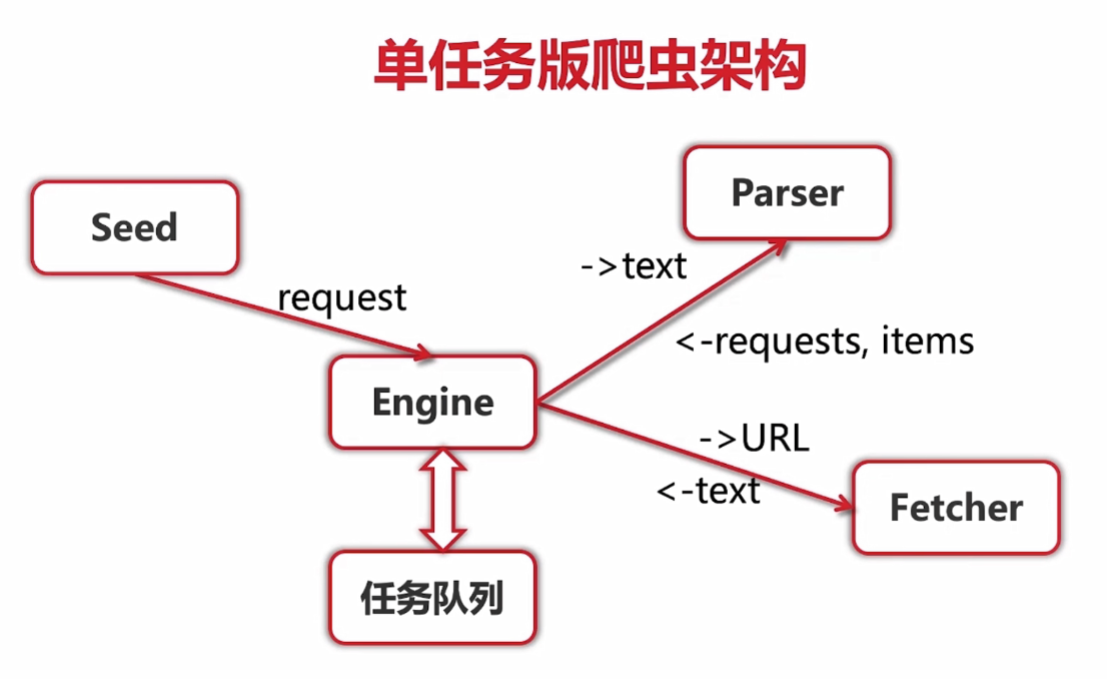

Single-task crawler architecture
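To make the loop behind the two diagrams concrete, here is a minimal sketch that runs entirely in memory. The `page` type, the `crawl` function, and the sample site are hypothetical stand-ins for real fetching and parsing: the crawler takes a URL from the front of a queue, collects the items found on that page, and appends the page's links to the back of the queue.

```go
package main

import "fmt"

// page is a hypothetical in-memory stand-in for a fetched web page:
// the items found on it and the URLs it links to.
type page struct {
	items []string
	links []string
}

// crawl processes URLs in first-in, first-out order, starting from the seeds,
// and returns every item in the order it was discovered.
func crawl(site map[string]page, seeds ...string) []string {
	queue := append([]string(nil), seeds...)
	var items []string
	for len(queue) > 0 {
		url := queue[0]
		queue = queue[1:]
		p, ok := site[url] // "fetch" and "parse" in one step
		if !ok {
			continue // unknown URL: skip it and move on
		}
		items = append(items, p.items...)
		queue = append(queue, p.links...)
	}
	return items
}

func main() {
	site := map[string]page{
		"/cities": {items: []string{"City: A", "City: B"}, links: []string{"/a", "/b"}},
		"/a":      {items: []string{"User: a1"}, links: []string{"/u/a1"}},
		"/b":      {items: []string{"User: b1"}},
		"/u/a1":   {items: []string{"Profile: a1"}},
	}
	for _, item := range crawl(site, "/cities") {
		fmt.Println(item)
	}
}
```

Because the queue is first-in, first-out, all city pages are handled before any profile page. The sketch keeps no record of visited URLs, so a site whose pages link back to each other would loop forever.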

Task

Fetch and print the detailed profiles of the users listed on the first page of each city.

Implementation

/crawler/main.go

package main

import (
	"crawler/engine"
	"crawler/zhenai/parser"
)

func main() {
	// Seed the engine with the city-list page.
	engine.Run(engine.Request{
		Url:       "http://www.zhenai.com/zhenghun",
		ParseFunc: parser.ParseCityList,
	})
}

/crawler/engine/engine.go

package engine

import (
	"log"

	"crawler/fetcher"
)

// Run crawls from the given seed requests until the queue is empty.
func Run(seeds ...Request) {
	var requests []Request
	requests = append(requests, seeds...)

	for len(requests) > 0 {
		// Take the next request from the front of the queue.
		r := requests[0]
		requests = requests[1:]

		log.Printf("Fetching: %s", r.Url)
		body, err := fetcher.Fetch(r.Url)
		if err != nil {
			log.Printf("Fetcher: error fetching url %s: %v", r.Url, err)
			continue
		}

		// Parse the page, queue the follow-up requests, and print the items.
		parseResult := r.ParseFunc(body)
		requests = append(requests, parseResult.Requests...)
		for _, item := range parseResult.Items {
			log.Printf("Got item %v", item)
		}
	}
}

/crawler/engine/types.go

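package engine

// Request pairs a URL with the function that parses the page at that URL.
type Request struct {
	Url       string
	ParseFunc func([]byte) ParseResult
}

// ParseResult holds what a parser found on a page:
// follow-up requests to queue and items to report.
type ParseResult struct {
	Requests []Request
	Items    []interface{}
}

// NilParser is a placeholder parser that returns an empty result.
func NilParser([]byte) ParseResult {
	return ParseResult{}
}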
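The sketch below illustrates how these two types let parsers chain: each parser returns items plus new requests, and every new request names the parser for its own page. The types are copied locally so the example compiles on its own; `parseIndex` and the `example.com` URL are hypothetical.

```go
package main

import "fmt"

// Local copies of the engine types so the sketch compiles on its own.
type Request struct {
	Url       string
	ParseFunc func([]byte) ParseResult
}

type ParseResult struct {
	Requests []Request
	Items    []interface{}
}

// NilParser yields nothing; it can stand in for a parser not written yet.
func NilParser([]byte) ParseResult { return ParseResult{} }

// parseIndex is a hypothetical parser: it reports one item and schedules
// one follow-up request that will be handled by NilParser.
func parseIndex(body []byte) ParseResult {
	return ParseResult{
		Items:    []interface{}{"index size: " + fmt.Sprint(len(body))},
		Requests: []Request{{Url: "http://example.com/next", ParseFunc: NilParser}},
	}
}

func main() {
	r := parseIndex([]byte("hello"))
	fmt.Println(r.Items[0], len(r.Requests))

	// The follow-up request carries its own parser; here it ends the chain.
	next := r.Requests[0].ParseFunc(nil)
	fmt.Println(len(next.Items), len(next.Requests))
}
```

Storing the parser inside the request means the engine never needs to know what kind of page it is handling.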

/crawler/fetcher/fetcher.go

package fetcher

import (
	"bufio"
	"fmt"
	"io"
	"log"
	"net/http"

	"golang.org/x/net/html/charset"
	"golang.org/x/text/encoding"
	"golang.org/x/text/encoding/unicode"
	"golang.org/x/text/transform"
)

// Fetch downloads url and returns its body converted to UTF-8.
func Fetch(url string) ([]byte, error) {
	client := &http.Client{}
	req, err := http.NewRequest("GET", url, nil)
	if err != nil {
		return nil, err
	}
	// Send a browser-like User-Agent with the request.
	req.Header.Set("User-Agent", "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.181 Safari/537.36")

	resp, err := client.Do(req)
	if err != nil {
		return nil, err
	}
	defer resp.Body.Close()

	if resp.StatusCode != http.StatusOK {
		return nil, fmt.Errorf("wrong status code: %d", resp.StatusCode)
	}

	// Detect the page encoding and decode the body to UTF-8 while reading.
	bodyReader := bufio.NewReader(resp.Body)
	e := determineEncoding(bodyReader)
	utf8Reader := transform.NewReader(bodyReader, e.NewDecoder())
	return io.ReadAll(utf8Reader)
}

// determineEncoding guesses the encoding from the first 1024 bytes
// and falls back to UTF-8 if they cannot be read.
func determineEncoding(r *bufio.Reader) encoding.Encoding {
	// Peek returns io.EOF together with the available bytes when the body
	// is shorter than 1024 bytes; those bytes are still usable for detection.
	head, err := r.Peek(1024)
	if err != nil && err != io.EOF {
		log.Printf("Fetcher error: %v", err)
		return unicode.UTF8
	}
	e, _, _ := charset.DetermineEncoding(head, "")
	return e
}
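The request and status-check part of the fetcher can be tried without touching the real site. The following is a sketch using only the standard library: `fetch` is a trimmed-down variant that omits encoding detection, and `newTestServer`, the `/ok` path, and the `example-crawler/0.1` User-Agent are hypothetical names chosen for the example.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
)

// fetch sets a User-Agent, rejects non-200 responses, and returns the raw
// body. Encoding detection is deliberately left out of this sketch.
func fetch(url string) ([]byte, error) {
	req, err := http.NewRequest(http.MethodGet, url, nil)
	if err != nil {
		return nil, err
	}
	req.Header.Set("User-Agent", "example-crawler/0.1") // hypothetical value
	resp, err := http.DefaultClient.Do(req)
	if err != nil {
		return nil, err
	}
	defer resp.Body.Close()
	if resp.StatusCode != http.StatusOK {
		return nil, fmt.Errorf("wrong status code: %d", resp.StatusCode)
	}
	return io.ReadAll(resp.Body)
}

// newTestServer serves a fixed page at /ok and answers 403 everywhere else.
func newTestServer() *httptest.Server {
	return httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		if r.URL.Path != "/ok" {
			http.Error(w, "forbidden", http.StatusForbidden)
			return
		}
		fmt.Fprint(w, "hello from "+r.Header.Get("User-Agent"))
	}))
}

func main() {
	srv := newTestServer()
	defer srv.Close()

	body, err := fetch(srv.URL + "/ok")
	fmt.Printf("%s %v\n", body, err)

	_, err = fetch(srv.URL + "/missing")
	fmt.Println(err)
}
```

The second call shows why the status check matters: without it, the body of an error page would be handed to the parser as if it were real content.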

/crawler/zhenai/parser/citylist.go

package parser

import (
	"regexp"

	"crawler/engine"
)

// cityListRe captures each city's URL (group 1) and name (group 2).
var cityListRe = regexp.MustCompile(`<a href="(http://www.zhenai.com/zhenghun/[0-9a-z]+)" [^>]*>([^<]+)</a>`)

// ParseCityList parses the UTF-8 city-list page and returns one request per city.
func ParseCityList(contents []byte) engine.ParseResult {
	matches := cityListRe.FindAllSubmatch(contents, -1)

	result := engine.ParseResult{}
	limit := 5 // cap the number of cities to keep test runs short
	for _, m := range matches {
		result.Items = append(result.Items, "City: "+string(m[2]))
		result.Requests = append(result.Requests, engine.Request{
			Url:       string(m[1]),
			ParseFunc: ParseCity,
		})
		limit--
		if limit == 0 {
			break
		}
	}
	return result
}
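To see what the two capture groups return, the pattern can be run against a small piece of markup. This is a sketch: the HTML snippet and the `extractCities` helper are made up for illustration, and the real page may be structured differently. It also shows that the `n` argument of `FindAllSubmatch` can cap the number of matches directly.

```go
package main

import (
	"fmt"
	"regexp"
)

// Same pattern as in citylist.go: group 1 is the city URL, group 2 the name.
var cityListRe = regexp.MustCompile(
	`<a href="(http://www.zhenai.com/zhenghun/[0-9a-z]+)" [^>]*>([^<]+)</a>`)

// extractCities returns (url, name) pairs, at most limit of them.
// A limit <= 0 means no cap.
func extractCities(html []byte, limit int) [][2]string {
	if limit <= 0 {
		limit = -1 // FindAllSubmatch treats a negative n as "all matches"
	}
	var out [][2]string
	for _, m := range cityListRe.FindAllSubmatch(html, limit) {
		out = append(out, [2]string{string(m[1]), string(m[2])})
	}
	return out
}

func main() {
	// Hypothetical markup; the third link is not a city page and is skipped.
	html := []byte(`<a href="http://www.zhenai.com/zhenghun/aba" class="c">Aba</a>
<a href="http://www.zhenai.com/zhenghun/akesu" class="c">Akesu</a>
<a href="http://www.zhenai.com/about" class="c">About</a>`)

	for _, c := range extractCities(html, 0) {
		fmt.Println(c[1], c[0])
	}
}
```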

/crawler/zhenai/parser/city.go

package parser

import (
	"regexp"

	"crawler/engine"
)

// cityRe captures each user's profile URL (group 1) and name (group 2).
var cityRe = regexp.MustCompile(`<a href="(http://album.zhenai.com/u/[0-9]+)" [^>]*>([^<]+)</a>`)

// ParseCity parses a single city page and returns one request per user.
func ParseCity(contents []byte) engine.ParseResult {
	matches := cityRe.FindAllSubmatch(contents, -1)

	result := engine.ParseResult{}
	for _, m := range matches {
		name := string(m[2])
		result.Items = append(result.Items, "User: "+name)
		result.Requests = append(result.Requests, engine.Request{
			Url: string(m[1]),
			// The closure passes the user's name on to the profile parser.
			ParseFunc: func(c []byte) engine.ParseResult {
				return ParseProfile(c, "name:"+name)
			},
		})
	}
	return result
}
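The closure in `ParseCity` exists because the engine calls every parser with a single `[]byte` argument, while `ParseProfile` also needs the user's name. A minimal sketch of that adapter pattern follows; `parseFunc`, `parseProfile`, and `profileParser` are simplified, hypothetical versions that return a string instead of `engine.ParseResult`.

```go
package main

import "fmt"

// parseFunc mirrors the one-argument shape the engine expects.
type parseFunc func(body []byte) string

// parseProfile needs a second argument that the engine cannot supply.
func parseProfile(body []byte, name string) string {
	return name + " -> " + string(body)
}

// profileParser binds name now and returns a function with the one-argument
// signature; the body arrives later, when the page has been fetched.
func profileParser(name string) parseFunc {
	return func(body []byte) string {
		return parseProfile(body, name)
	}
}

func main() {
	var parsers []parseFunc
	for _, name := range []string{"alice", "bob"} {
		parsers = append(parsers, profileParser(name))
	}
	for _, p := range parsers {
		fmt.Println(p([]byte("page")))
	}
}
```

Each returned function keeps its own copy of `name`, so the parsers stay distinct even though they are called long after the loop has finished.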

/crawler/zhenai/parser/profile.go

package parser

import (
	"regexp"

	"crawler/engine"
	"crawler/model"
)

// all captures the text of each profile tag on a user's page.
var all = regexp.MustCompile(`<div class="m-btn purple" data-v-8b1eac0c>([^<]+)</div>`)

// ParseProfile collects the user's name and profile tags into a model.Profile.
func ParseProfile(contents []byte, name string) engine.ParseResult {
	profile := model.Profile{}
	profile.User = append(profile.User, name)

	// Ranging over a nil result is a no-op, so no explicit nil check is needed.
	for _, m := range all.FindAllSubmatch(contents, -1) {
		profile.User = append(profile.User, string(m[1]))
	}

	return engine.ParseResult{
		Items: []interface{}{profile},
	}
}
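The profile pattern is tied to one specific `data-v-8b1eac0c` attribute. That attribute looks like a generated marker, so it may change when the site is rebuilt (this is an assumption, not something the article confirms). As a hedged alternative, the sketch below accepts any `data-v-<hex>` suffix; `tagRe`, `extractTags`, and the sample markup are hypothetical.

```go
package main

import (
	"fmt"
	"regexp"
)

// tagRe matches the same <div class="m-btn purple" ...> elements, but accepts
// any data-v-<hex> attribute instead of one fixed value.
var tagRe = regexp.MustCompile(`<div class="m-btn purple" data-v-[0-9a-f]+>([^<]+)</div>`)

// extractTags returns the text of every matching div, in document order.
func extractTags(html []byte) []string {
	var tags []string
	for _, m := range tagRe.FindAllSubmatch(html, -1) {
		tags = append(tags, string(m[1]))
	}
	return tags
}

func main() {
	// Hypothetical markup; the real profile page may differ.
	html := []byte(`<div class="m-btn purple" data-v-8b1eac0c>single</div>` +
		`<div class="m-btn purple" data-v-0123abcd>28 years old</div>` +
		`<div class="m-btn pink" data-v-8b1eac0c>ignored</div>`)
	fmt.Println(extractTags(html))
}
```

The third div is skipped because its class differs, while both purple divs match regardless of their attribute suffix.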

/crawler/model/profile.go

package model

// Profile holds a user's name followed by the tags scraped from the profile page.
type Profile struct {
	User []string
}

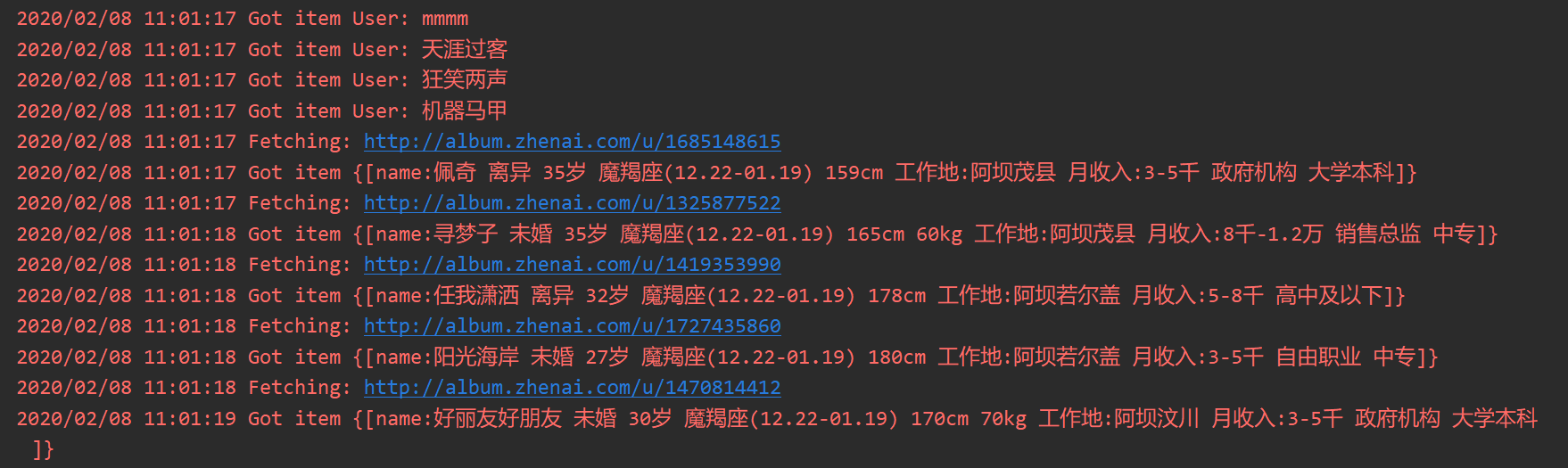

Output of the run

Full source code

https://gitee.com/FenYiYuan/golang-oncrawler.git