1. Setting up the development environment

(1) Create a plain Java project

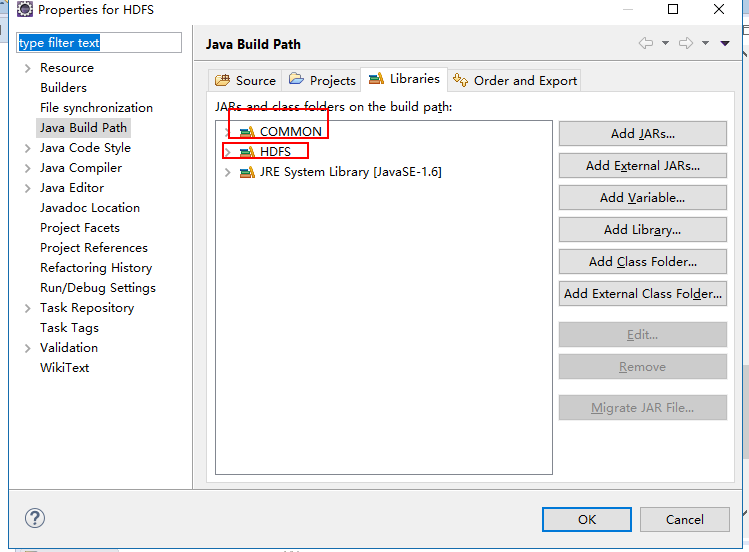

(2) Add the HDFS-related JAR files

JAR files to add:

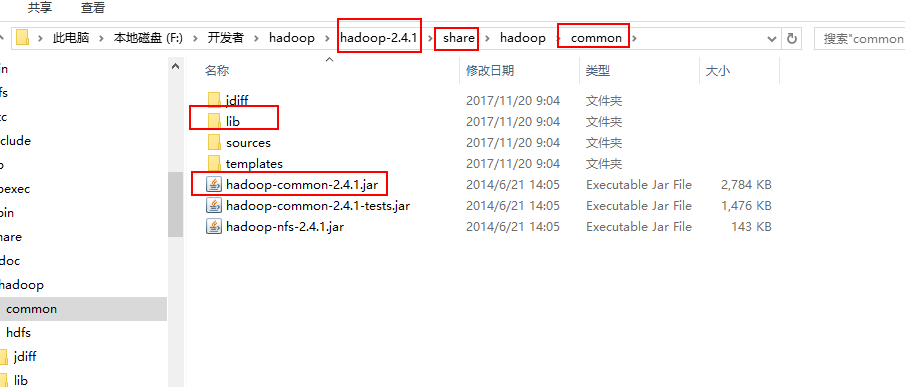

JARs under common

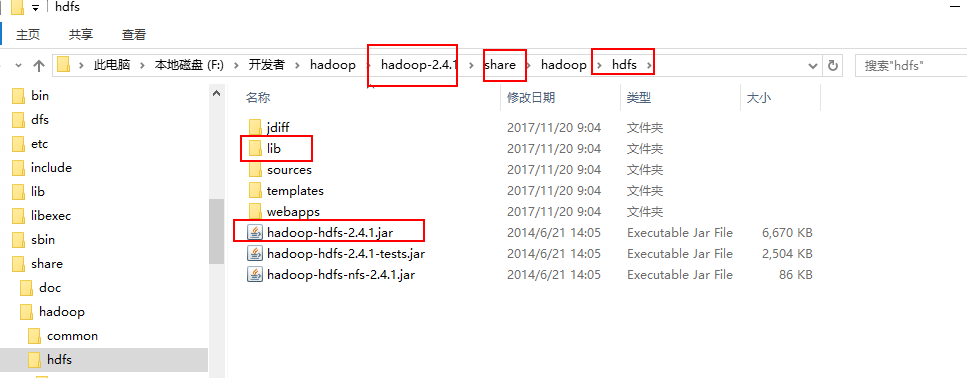

JARs under hdfs

2. Writing the HDFS program

    package com.cvicse.ump.hadoop.hdfs;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileStatus;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class FileOperation {

        // Create a file and write the given bytes to it
        public static void createFile(String dst, byte[] contents) throws Exception {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(conf);
            Path dstPath = new Path(dst);
            FSDataOutputStream outputStream = fs.create(dstPath);
            outputStream.write(contents);
            outputStream.close();
            fs.close();
            System.out.println(dst + ", file created successfully");
        }

        // Upload a local file, then list the destination path
        public static void uploadFile(String src, String dst) throws Exception {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(conf);
            Path srcPath = new Path(src);
            Path dstPath = new Path(dst);
            fs.copyFromLocalFile(srcPath, dstPath);
            // fs.default.name is the deprecated name of fs.defaultFS in Hadoop 2 and later
            System.out.println("Upload to " + conf.get("fs.default.name"));
            System.out.println("------list files---------" + " ");
            FileStatus[] fileStatus = fs.listStatus(dstPath);
            for (FileStatus file : fileStatus) {
                System.out.println(file.getPath());
            }
            fs.close();
        }

        // Delete a file or directory
        public static void delete(String filePath) throws Exception {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(conf);
            Path path = new Path(filePath);
            // delete(path, true) removes the path immediately and recursively;
            // deleteOnExit only marks it for deletion when the FileSystem is closed
            boolean isOk = fs.delete(path, true);
            if (isOk) {
                System.out.println("delete OK.");
            } else {
                System.out.println("delete failure.");
            }
            fs.close();
        }

        // Create a directory (including any missing parents)
        public static void mkdir(String path) throws Exception {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(conf);
            Path srcPath = new Path(path);
            boolean isOK = fs.mkdirs(srcPath);
            if (isOK) {
                System.out.println("create dir ok!");
            } else {
                System.out.println("create dir failure!");
            }
            fs.close();
        }

        // Download a file to the local file system
        public static void downFile(String src, String dst) throws Exception {
            Configuration conf = new Configuration();
            FileSystem fs = FileSystem.get(conf);
            Path srcPath = new Path(src);
            Path dstPath = new Path(dst);
            fs.copyToLocalFile(srcPath, dstPath);
            fs.close();
            System.out.println("download finished");
        }

        public static void main(String[] args) throws Exception {
            // Uncomment exactly one block to choose the operation to run.

            /*String dst = args[0];
            byte[] contents = "hello,dyh".getBytes();
            createFile(dst, contents);*/

            /*String src = args[0];
            String dst = args[1];
            uploadFile(src, dst);*/

            /*String filePath = args[0];
            delete(filePath);*/

            /*String path = args[0];
            mkdir(path);*/

            String src = args[0];
            String dst = args[1];
            downFile(src, dst);
        }
    }
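
The `main` method switches between operations by commenting blocks in and out, which means rebuilding the JAR for every change. One possible alternative, sketched here under assumptions, is to choose the operation from the first command-line argument. `HdfsCommandDispatcher`, `Operation`, and the sub-command names (`create`, `upload`, and so on) are hypothetical and not part of the original program. The handlers only print what they would do, so the sketch runs without Hadoop on the classpath.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: picks an operation by name and checks its operand count. */
final class HdfsCommandDispatcher {

    /** One operation that takes a fixed number of path operands. */
    interface Operation {
        void run(String[] operands) throws Exception;
    }

    // Sub-command name -> number of operands it expects.
    private final Map<String, Integer> arity = new LinkedHashMap<>();
    // Sub-command name -> code to run.
    private final Map<String, Operation> operations = new LinkedHashMap<>();

    void register(String name, int operandCount, Operation operation) {
        arity.put(name, operandCount);
        operations.put(name, operation);
    }

    /** Runs the operation named by args[0], passing the remaining arguments as operands. */
    void dispatch(String[] args) throws Exception {
        if (args.length == 0 || !operations.containsKey(args[0])) {
            throw new IllegalArgumentException(
                    "Usage: <" + String.join("|", operations.keySet()) + "> <path>...");
        }
        String name = args[0];
        String[] operands = Arrays.copyOfRange(args, 1, args.length);
        int expected = arity.get(name);
        if (operands.length != expected) {
            throw new IllegalArgumentException(
                    name + " expects " + expected + " operand(s), got " + operands.length);
        }
        operations.get(name).run(operands);
    }

    public static void main(String[] args) throws Exception {
        HdfsCommandDispatcher dispatcher = new HdfsCommandDispatcher();
        // Placeholder handlers (assumption): in the real project each body would call
        // the matching FileOperation method, e.g. a -> FileOperation.mkdir(a[0]).
        dispatcher.register("create", 1, a -> System.out.println("createFile " + a[0]));
        dispatcher.register("upload", 2, a -> System.out.println("uploadFile " + a[0] + " " + a[1]));
        dispatcher.register("delete", 1, a -> System.out.println("delete " + a[0]));
        dispatcher.register("mkdir", 1, a -> System.out.println("mkdir " + a[0]));
        dispatcher.register("download", 2, a -> System.out.println("downFile " + a[0] + " " + a[1]));
        try {
            dispatcher.dispatch(args);
        } catch (IllegalArgumentException e) {
            // Unknown sub-command or wrong operand count: show the message instead of a stack trace.
            System.err.println(e.getMessage());
        }
    }
}
```

With this layout the same JAR could serve every operation: the sub-command would be passed as the first program argument after the main class name, followed by its paths.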

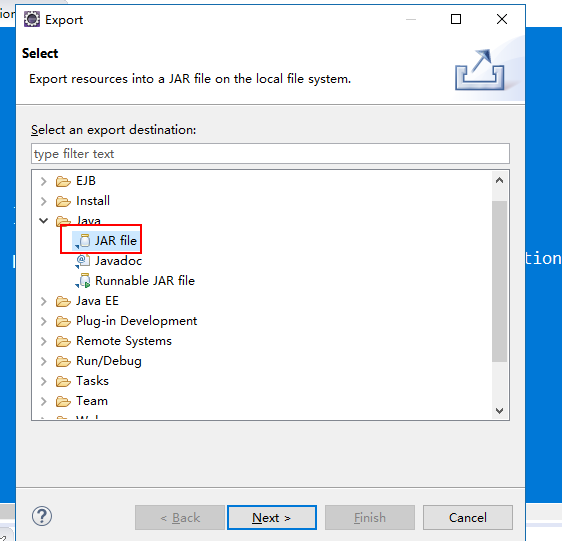

Export the project as a JAR file

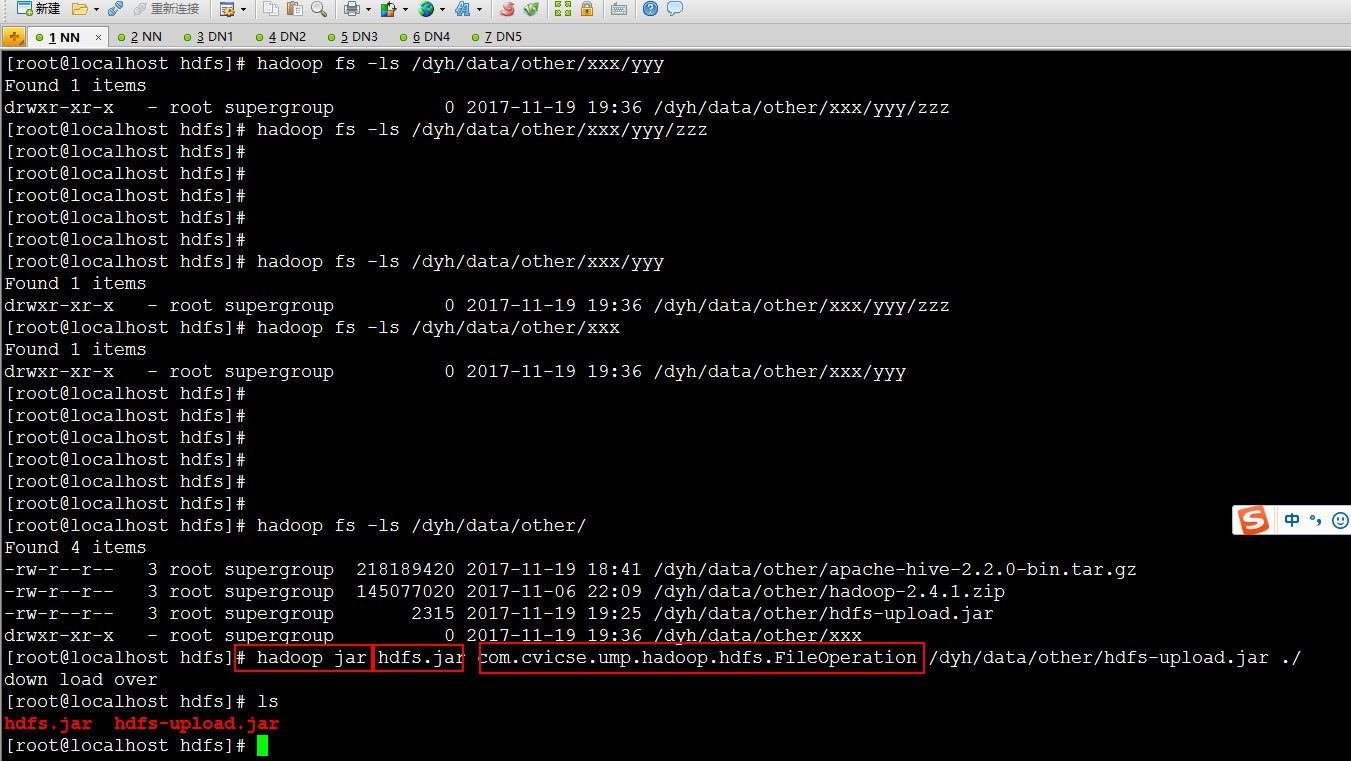

Upload the JAR to the Hadoop runtime environment and run it

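Run command: hadoop jar <jar file name> <fully qualified class containing the main function>, followed by the arguments the program expects (for downFile, the source path and then the destination path).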