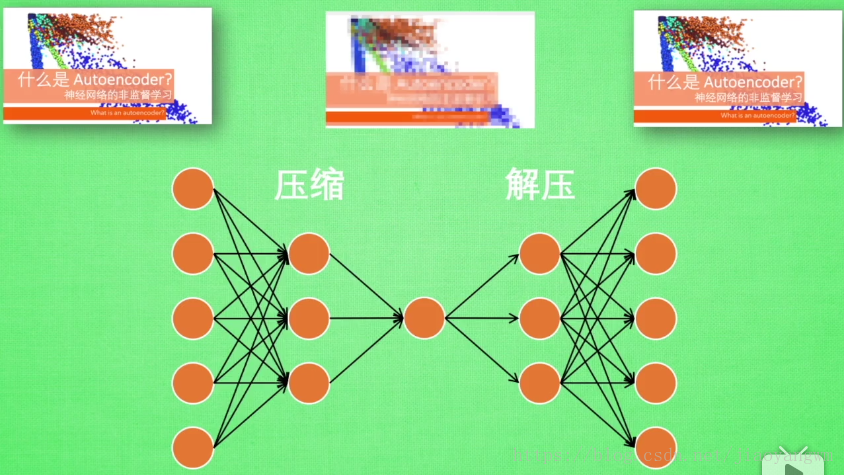

Autoencoder

Unsupervised learning with neural networks

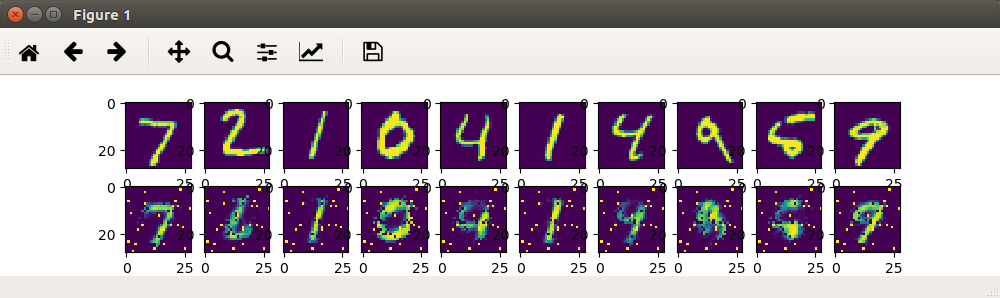

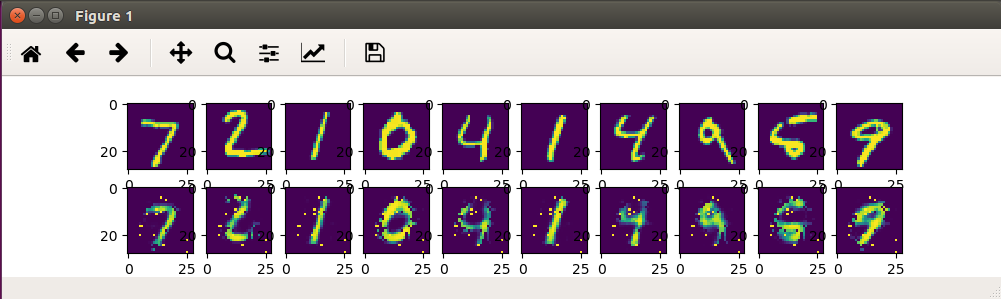

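The neural network receives an image → pixelates (compresses) it → restores it

The original image is compressed, and the stored feature information is then decompressed to recover the original image.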

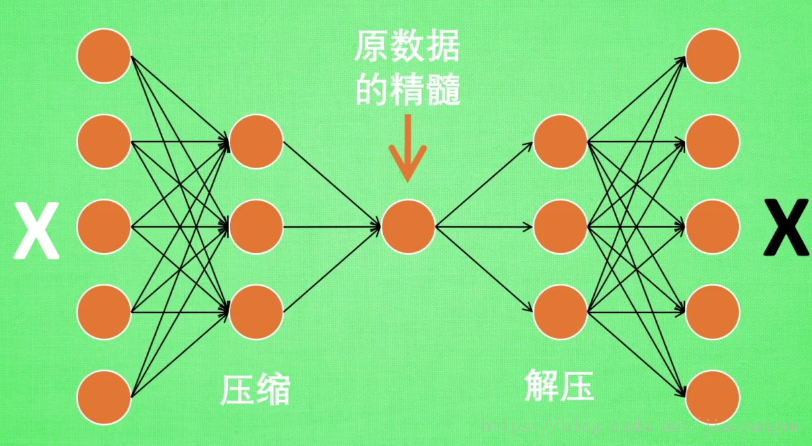

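Learning directly from a high-resolution image is hard work for a neural network. Feature extraction instead pulls out the main information needed to reconstruct the original image. Feeding this reduced representation into the network makes learning much easier.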

Input: the white X

Output: the black X

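Compute the error between the two and backpropagate it to gradually improve the autoencoder's accuracy. The hidden layer in the middle holds the neurons that extract the most important features of the original data.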
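The training loop described above can be sketched in plain NumPy. This is a minimal illustration, not the TensorFlow code used later in these notes: the function name `train_autoencoder`, the 8-3-8 layer sizes, the one-hot toy data, and the learning rate are all assumptions chosen to keep the example small.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_autoencoder(X, n_hidden=3, lr=1.0, epochs=200, seed=0):
    """Train a one-hidden-layer autoencoder with plain gradient descent.

    Returns the learned parameters and the per-epoch mean squared errors.
    """
    rng = np.random.default_rng(seed)
    n_input = X.shape[1]
    W1 = rng.normal(scale=0.1, size=(n_input, n_hidden))  # encoder weights
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(scale=0.1, size=(n_hidden, n_input))  # decoder weights
    b2 = np.zeros(n_input)
    losses = []
    for _ in range(epochs):
        # Forward pass: compress to the hidden layer, then reconstruct.
        H = sigmoid(X @ W1 + b1)
        Y = sigmoid(H @ W2 + b2)
        err = Y - X  # the target is the input itself, no labels involved
        losses.append(float(np.mean(err ** 2)))
        # Backward pass: propagate the reconstruction error through both layers.
        dZ2 = (2.0 / err.size) * err * Y * (1.0 - Y)
        dW2 = H.T @ dZ2
        db2 = dZ2.sum(axis=0)
        dZ1 = (dZ2 @ W2.T) * H * (1.0 - H)
        dW1 = X.T @ dZ1
        db1 = dZ1.sum(axis=0)
        # Gradient descent update.
        W1 -= lr * dW1
        b1 -= lr * db1
        W2 -= lr * dW2
        b2 -= lr * db2
    return (W1, b1, W2, b2), losses

# Toy data (assumption): eight one-hot vectors, squeezed through three hidden units.
X = np.eye(8)
params, losses = train_autoencoder(X)
print("first loss:", losses[0], "last loss:", losses[-1])
```

The only training signal is the difference between the reconstruction and the input, which is why no labels appear anywhere in the loop.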

Why is this unsupervised learning? Because the process uses only X and never its labels.

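In practice, usually only the first half is used.

Because the first half has already learned the essence of the data, we only need to build a neural network that learns from that essence. This can reach the same results as an ordinary neural network while being much more efficient.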

Encoder: the first half

Decoder: the second half
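The split into two halves can be sketched as two separate functions, so that the encoder can be called on its own to produce compact features. This is an illustrative NumPy sketch: the layer sizes 784 → 256 → 128 mirror the first listing in these notes, but the weights here are random stand-ins (an assumption), not trained values, and the input images are random placeholders.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

rng = np.random.default_rng(0)
n_input, n_hidden_1, n_hidden_2 = 784, 256, 128

# Random stand-ins for trained parameters (assumption: a real model would use learned values).
params = {
    "encoder_h1": rng.normal(scale=0.05, size=(n_input, n_hidden_1)),
    "encoder_h2": rng.normal(scale=0.05, size=(n_hidden_1, n_hidden_2)),
    "decoder_h1": rng.normal(scale=0.05, size=(n_hidden_2, n_hidden_1)),
    "decoder_h2": rng.normal(scale=0.05, size=(n_hidden_1, n_input)),
}

def encoder(x):
    """First half: compress 784 inputs down to 128 features."""
    layer_1 = sigmoid(x @ params["encoder_h1"])
    return sigmoid(layer_1 @ params["encoder_h2"])

def decoder(code):
    """Second half: expand 128 features back to 784 outputs."""
    layer_1 = sigmoid(code @ params["decoder_h1"])
    return sigmoid(layer_1 @ params["decoder_h2"])

images = rng.random((5, n_input))   # placeholder for five flattened 28x28 images
codes = encoder(images)             # features a downstream network could learn from
reconstruction = decoder(codes)     # only needed while training the autoencoder
print(codes.shape, reconstruction.shape)  # (5, 128) (5, 784)
```

After training, a downstream model would consume `codes` instead of the raw 784-dimensional images, and the decoder could be discarded.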

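Autoencoders are similar to PCA: both extract features and reduce their dimensionality. Because an autoencoder can use nonlinear activations, it can capture structure that linear PCA cannot, and in that sense it goes beyond PCA.

Code 1: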
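For comparison, PCA's version of "compress, then reconstruct" can be written in a few lines of NumPy using the singular value decomposition. This is a sketch for illustration only: the helper name `pca_reconstruct` and the synthetic rank-2 data are assumptions, not part of the original notes.

```python
import numpy as np

def pca_reconstruct(X, k):
    """Project X onto its top-k principal components and map it back."""
    mean = X.mean(axis=0)
    Xc = X - mean                                  # PCA works on centered data
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:k]                            # top-k principal directions
    codes = Xc @ components.T                      # "encode": k numbers per sample
    recon = codes @ components + mean              # "decode": back to input space
    return codes, recon

# Synthetic data (assumption): 100 samples in 5 dimensions that lie on a 2-D plane.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 2)) @ rng.normal(size=(2, 5))

codes_2, recon_2 = pca_reconstruct(X, k=2)
codes_1, recon_1 = pca_reconstruct(X, k=1)
err_2 = float(np.mean((X - recon_2) ** 2))
err_1 = float(np.mean((X - recon_1) ** 2))
print("k=2 error:", err_2, "k=1 error:", err_1)
```

Both the encoding and decoding steps here are linear maps. An autoencoder replaces them with learned layers that may include nonlinear activations such as the sigmoid, which is where the extra expressive power comes from.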

    #!/usr/bin/env python2
    # -*- coding: utf-8 -*-
    """
    Created on Thu Apr 11 00:02:38 2019

    @author: xiexj
    """
    # Make print() behave as a function under Python 2 as well.
    from __future__ import print_function

    import tensorflow as tf  # uses the TensorFlow 1.x graph API
    import numpy as np
    import matplotlib.pyplot as plt
    from tensorflow.examples.tutorials.mnist import input_data

    mnist = input_data.read_data_sets("/tmp/data/", one_hot=False)

    # Parameters
    learning_rate = 0.01
    training_epochs = 5
    batch_size = 256
    display_step = 1
    examples_to_show = 10

    # Network Parameters
    n_input = 784  # MNIST data input (img shape: 28*28)

    # tf Graph input (only pictures)
    X = tf.placeholder("float", [None, n_input])

    # hidden layer settings
    n_hidden_1 = 256  # 1st layer num features
    n_hidden_2 = 128  # 2nd layer num features

    weights = {
        'encoder_h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
        'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
        'decoder_h1': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_1])),
        'decoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_input])),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b2': tf.Variable(tf.random_normal([n_input])),
    }


    # Building the encoder
    def encoder(x):
        # Encoder hidden layer #1 with sigmoid activation
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        # Encoder hidden layer #2 with sigmoid activation
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        return layer_2


    # Building the decoder
    def decoder(x):
        # Decoder hidden layer #1 with sigmoid activation
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        # Decoder hidden layer #2 with sigmoid activation
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        return layer_2


    # Construct model
    encoder_op = encoder(X)
    decoder_op = decoder(encoder_op)

    # Prediction
    y_pred = decoder_op
    # Targets (labels) are the input data.
    y_true = X

    # Define loss and optimizer, minimize the squared error
    cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))
    optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)

    # Launch the graph
    with tf.Session() as sess:
        init = tf.global_variables_initializer()
        sess.run(init)
        total_batch = int(mnist.train.num_examples / batch_size)
        for epoch in range(training_epochs):
            for i in range(total_batch):
                # The labels (batch_ys) are fetched but never used.
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                _, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})
            if epoch % display_step == 0:
                print("Epoch:", '%04d' % (epoch + 1), "cost=", "{:.9f}".format(c))
        print("Optimization Finished!")

        # Reconstruct the first test images and compare them with the originals.
        encode_decode = sess.run(
            y_pred, feed_dict={X: mnist.test.images[:examples_to_show]})
        f, a = plt.subplots(2, 10, figsize=(10, 2))
        for i in range(examples_to_show):
            a[0][i].imshow(np.reshape(mnist.test.images[i], (28, 28)))
            a[1][i].imshow(np.reshape(encode_decode[i], (28, 28)))
        plt.show()

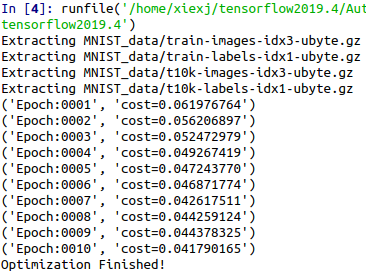

training_epochs = 5 # number of training epochs

training_epochs = 10 # number of training epochs

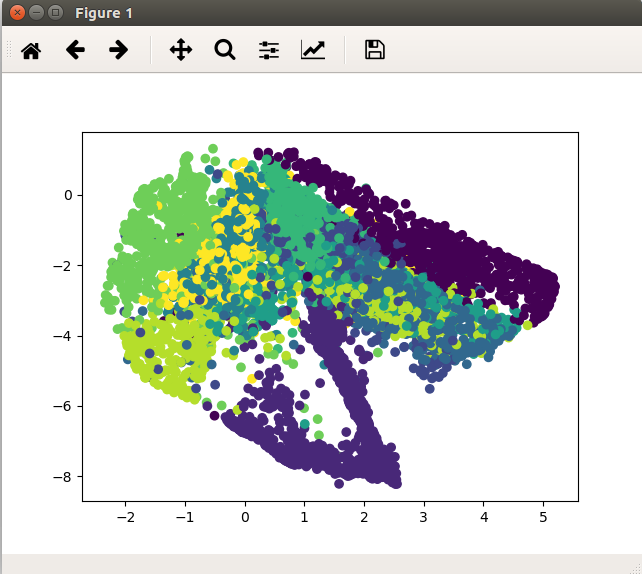

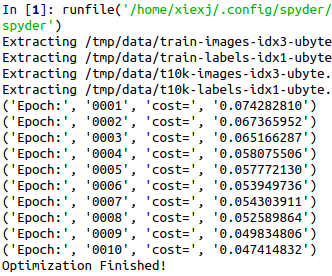

Code 2:

    #!/usr/bin/env python2
    # -*- coding: utf-8 -*-
    """
    Created on Wed Apr 10 21:43:11 2019

    @author: xiexj
    """
    # Make print() behave as a function under Python 2 as well.
    from __future__ import print_function

    import tensorflow as tf  # uses the TensorFlow 1.x graph API
    import matplotlib.pyplot as plt
    from tensorflow.examples.tutorials.mnist import input_data

    mnist = input_data.read_data_sets("MNIST_data/", one_hot=False)

    learning_rate = 0.01
    trainning_epochs = 10  # 20
    batch_size = 256
    display_step = 1
    n_input = 784

    X = tf.placeholder(tf.float32, [None, n_input])

    # The encoder narrows 784 -> 128 -> 64 -> 10 -> 2; the decoder mirrors it.
    n_hidden_1 = 128
    n_hidden_2 = 64
    n_hidden_3 = 10
    n_hidden_4 = 2

    weights = {
        'encoder_h1': tf.Variable(tf.truncated_normal([n_input, n_hidden_1])),
        'encoder_h2': tf.Variable(tf.truncated_normal([n_hidden_1, n_hidden_2])),
        'encoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_3])),
        'encoder_h4': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_4])),
        'decoder_h1': tf.Variable(tf.truncated_normal([n_hidden_4, n_hidden_3])),
        'decoder_h2': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_2])),
        'decoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_1])),
        'decoder_h4': tf.Variable(tf.truncated_normal([n_hidden_1, n_input])),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'encoder_b3': tf.Variable(tf.random_normal([n_hidden_3])),
        'encoder_b4': tf.Variable(tf.random_normal([n_hidden_4])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_3])),
        'decoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b3': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b4': tf.Variable(tf.random_normal([n_input])),
    }


    def encoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']),
                                       biases['encoder_b3']))
        # layer_4 uses no activation function, so the 2-D code is unbounded.
        layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']),
                         biases['encoder_b4'])
        return layer_4


    def decoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']),
                                       biases['decoder_b3']))
        layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']),
                                       biases['decoder_b4']))
        return layer_4


    encoder_op = encoder(X)
    decoder_op = decoder(encoder_op)

    y_pred = decoder_op
    y_true = X  # the target is the input itself

    cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))
    optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)

    init = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init)
        total_batch = int(mnist.train.num_examples / batch_size)
        for epoch in range(trainning_epochs):
            for i in range(total_batch):
                # The labels (batch_ys) are fetched but never used for training.
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)
                _, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})
            if epoch % display_step == 0:
                print("Epoch:%04d" % (epoch + 1), "cost={:.9f}".format(c))
        print("Optimization Finished!")

        # Plot the 2-D codes of the test images, colored by digit label.
        encoder_result = sess.run(encoder_op, feed_dict={X: mnist.test.images})
        plt.scatter(encoder_result[:, 0], encoder_result[:, 1], c=mnist.test.labels)
        plt.show()