wordcount1类

/**

* wordcount单词统计

*/

public class wordcount1 {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//单例作业

Configuration conf = new Configuration();

conf.set("fs.defaultFS","file:///");

Job job = Job.getInstance(conf);

//设置job的各种属性

job.setJobName("wordcountAPP"); //设置job名称

job.setJarByClass(wordcount1.class); //设置搜索类

// job.setInputFormatClass(org.apache.hadoop.mapreduce.lib.input.TextInputFormat.class); //设置输入格式

//设置输出格式类

job.setOutputFormatClass(org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat.class);

FileInputFormat.addInputPath(job, new Path(args[0])); //添加输入路径

FileOutputFormat.setOutputPath(job,new Path(args[1]));//设置输出路径

job.setInputFormatClass(org.apache.hadoop.mapreduce.lib.input.TextInputFormat.class);

job.setMapperClass(map.class); //设置mapper类

job.setReducerClass(reduce.class); //设置reduecer类

job.setNumReduceTasks(1); //设置reduce个数

job.setMapOutputKeyClass(Text.class); //设置之map输出key

job.setMapOutputValueClass(IntWritable.class); //设置map输出value

job.setOutputKeyClass(Text.class); //设置mapreduce 输出key

job.setOutputValueClass(IntWritable.class); //设置mapreduce输出value

job.waitForCompletion(true);

}

}mapper类和reducer类源码见

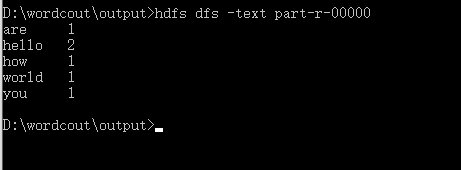

查看生成的序列文件

设置分区

定义分区类

package com.cr.hdfs.com.cr;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Partitioner;

public class Mypartioner extends Partitioner<Text,IntWritable>{

public int getPartition(Text text, IntWritable intWritable, int i) {

System.out.println("start mypartionner");

return 0;

}

}

在wordcount设置分区类和合成类

/**

* wordcount单词统计

*/

public class wordcount1 {

public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {

//单例作业

Configuration conf = new Configuration();

conf.set("fs.defaultFS","file:///");

Job job = Job.getInstance(conf);

//设置job的各种属性

job.setJobName("wordcountAPP"); //设置job名称

job.setJarByClass(wordcount1.class); //设置搜索类

// job.setInputFormatClass(org.apache.hadoop.mapreduce.lib.input.TextInputFormat.class); //设置输入格式

//设置输出格式类

job.setOutputFormatClass(org.apache.hadoop.mapreduce.lib.output.SequenceFileOutputFormat.class);

//设置自定义分区类

job.setPartitionerClass(Mypartioner.class);

//设置合成类

job.setCombinerClass(reduce.class);

FileInputFormat.addInputPath(job, new Path(args[0])); //添加输入路径

FileOutputFormat.setOutputPath(job,new Path(args[1]));//设置输出路径

job.setInputFormatClass(org.apache.hadoop.mapreduce.lib.input.TextInputFormat.class);

job.setMapperClass(map.class); //设置mapper类

job.setReducerClass(reduce.class); //设置reduecer类

job.setNumReduceTasks(3); //设置reduce个数

job.setMapOutputKeyClass(Text.class); //设置之map输出key

job.setMapOutputValueClass(IntWritable.class); //设置map输出value

job.setOutputKeyClass(Text.class); //设置mapreduce 输出key

job.setOutputValueClass(IntWritable.class); //设置mapreduce输出value

job.waitForCompletion(true);

}reducer类

package com.cr.hdfs;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class reduce extends Reducer<Text,IntWritable,Text,IntWritable>{

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {

System.out.println("come into reduce...");

int count = 0;

for(IntWritable iw : values){

count += iw.get();

}

//获取当前线程

String tno = Thread.currentThread().getName();

System.out.println("线程==>"+ tno + "===> reducer ===> " + key.toString() + "===>" + count);

context.write(key,new IntWritable(count));

}

}mapper类

package com.cr.hdfs;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class map extends Mapper<LongWritable,Text,Text,IntWritable> {

/**

* WordCountMapper 处理文本为<k,v>对

* @param key 每一行字节数的偏移量

* @param value 每一行的文本

* @param context 上下文

* @throws IOException

* @throws InterruptedException

*/

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

Text keyOut = new Text();

IntWritable valueout = new IntWritable();

String[] arr = value.toString().split(" ");

for(String s : arr){

keyOut.set(s);

valueout.set(1);

context.write(keyOut,valueout);

}

System.out.println("come into mapper...");

}

}

运行结果

come into mapper...

come into mapper...

come into reduce...

线程==>LocalJobRunner Map Task Executor #0===> reducer ===> are===>1

come into reduce...

线程==>LocalJobRunner Map Task Executor #0===> reducer ===> hello===>2

come into reduce...

线程==>LocalJobRunner Map Task Executor #0===> reducer ===> how===>1

come into reduce...

线程==>LocalJobRunner Map Task Executor #0===> reducer ===> world===>1

come into reduce...

线程==>LocalJobRunner Map Task Executor #0===> reducer ===> you===>1

come into reduce...

线程==>pool-3-thread-1===> reducer ===> are===>1

come into reduce...

线程==>pool-3-thread-1===> reducer ===> hello===>2

come into reduce...

线程==>pool-3-thread-1===> reducer ===> how===>1

come into reduce...

线程==>pool-3-thread-1===> reducer ===> world===>1

come into reduce...

线程==>pool-3-thread-1===> reducer ===> you===>1

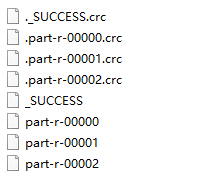

产生了三个reduce聚合的文件

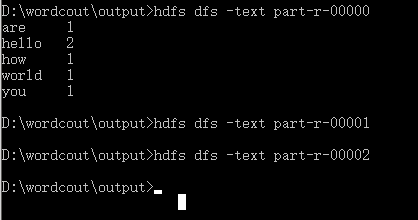

查看结果

发现只有第一个聚合文件里面有内容,后面两个都没有