Reference: http://spark.incubator.apache.org/docs/latest/streaming-programming-guide.html

Overview

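Spark Streaming supports many kinds of stream input, like Kafka, Flume, Twitter, ZeroMQ or plain old TCP sockets. Transformations can be applied on top of these streams, and the results are finally stored in HDFS, a database, or a dashboard.

In addition, Spark's built-in machine learning and graph processing algorithms can be applied to Spark Streaming, which is rather interesting.

The principle behind Spark Streaming is clearly shown in the figure below: the stream data is discretized, and the resulting concept, the DStream, is really just a sequence of RDDs.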

Spark Streaming is an extension of the core Spark API that enables high-throughput, fault-tolerant stream processing of live data streams.

Data can be ingested from many sources like Kafka, Flume, Twitter, ZeroMQ or plain old TCP sockets and be processed using complex algorithms expressed with high-level functions like map, reduce, join and window. Finally, processed data can be pushed out to filesystems, databases, and live dashboards. In fact, you can apply Spark’s in-built machine learning algorithms, and graph processing algorithms on data streams.

Internally, it works as follows. Spark Streaming receives live input data streams and divides the data into batches, which are then processed by the Spark engine to generate the final stream of results in batches.

Spark Streaming provides a high-level abstraction called discretized stream or DStream, which represents a continuous stream of data. DStreams can be created either from input data stream from sources such as Kafka and Flume, or by applying high-level operations on other DStreams. Internally, a DStream is represented as a sequence of RDDs.
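To make the "sequence of RDDs" idea concrete, here is a minimal plain-Scala model (no Spark dependency) of discretization: timestamped records are grouped into consecutive batches of batchIntervalMs, and each batch plays the role of one RDD. The Record type, the millisecond timestamps and the discretize helper are illustrative assumptions, not Spark APIs; in a real application the receivers and the batch scheduler do this grouping.

```scala
object DiscretizeSketch {
  // A timestamped record from a live input stream (illustrative model, not a Spark type).
  final case class Record(timeMs: Long, value: String)

  // Split records into consecutive batches of batchIntervalMs, covering [0, endMs).
  // Each inner Seq stands in for one RDD; the Vector stands in for the DStream.
  // Intervals with no data still yield an (empty) batch; records at or after endMs are ignored.
  def discretize(records: Seq[Record], batchIntervalMs: Long, endMs: Long): Vector[Seq[String]] = {
    require(batchIntervalMs > 0, "batch interval must be positive")
    val numBatches = ((endMs + batchIntervalMs - 1) / batchIntervalMs).toInt
    val byBatch = records.groupBy(r => (r.timeMs / batchIntervalMs).toInt)
    Vector.tabulate(numBatches) { i =>
      byBatch.getOrElse(i, Seq.empty[Record]).sortBy(_.timeMs).map(_.value)
    }
  }

  def main(args: Array[String]): Unit = {
    val input = Seq(Record(100L, "a"), Record(900L, "b"), Record(1500L, "c"), Record(3200L, "d"))
    discretize(input, batchIntervalMs = 1000L, endMs = 4000L).zipWithIndex.foreach {
      case (batch, i) => println(s"batch $i: [${batch.mkString(", ")}]")
    }
  }
}
```

With a 1-second interval, "a" and "b" land in batch 0, "c" in batch 1, batch 2 is empty, and "d" lands in batch 3.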

A Quick Example

Before we go into the details of how to write your own Spark Streaming program, let’s take a quick look at what a simple Spark Streaming program looks like.

Initializing

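StreamingContext is the entry point of Spark Streaming, just as SparkContext is for Spark.

In fact, StreamingContext is a wrapper around a SparkContext, which can be obtained through streamingContext.sparkContext.

Apart from batchDuration, its parameters are no different from those of SparkContext.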

To initialize a Spark Streaming program in Scala, a StreamingContext object has to be created, which is the main entry point of all Spark Streaming functionality.

A StreamingContext object can be created by using

new StreamingContext(master, appName, batchInterval, [sparkHome], [jars])

The master parameter is a standard Spark cluster URL and can be “local” for local testing.

The appName is a name of your program, which will be shown on your cluster’s web UI.

The batchInterval is the size of the batches, as explained earlier.

Finally, the last two parameters are needed to deploy your code to a cluster if running in distributed mode, as described in the Spark programming guide.

Additionally, the underlying SparkContext can be accessed as streamingContext.sparkContext.

The batch interval must be set based on the latency requirements of your application and available cluster resources. See the Performance Tuning section for more details.

DStreams

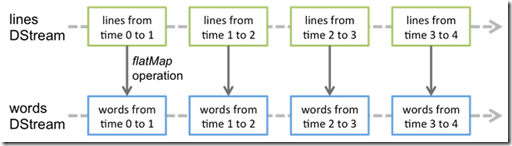

The figures clearly illustrate what a DStream is and what transformations on a DStream look like.

Discretized Stream or DStream is the basic abstraction provided by Spark Streaming. It represents a continuous stream of data, either the input data stream received from source, or the processed data stream generated by transforming the input stream. Internally, it is represented by a continuous sequence of RDDs, which is Spark’s abstraction of an immutable, distributed dataset. Each RDD in a DStream contains data from a certain interval, as shown in the following figure.

Any operation applied on a DStream translates to operations on the underlying RDDs. For example, in the earlier example of converting a stream of lines to words, the flatMap operation is applied on each RDD in the lines DStream to generate the RDDs of the words DStream. This is shown in the following figure.

These underlying RDD transformations are computed by the Spark engine. The DStream operations hide most of these details and provide the developer with a higher-level API for convenience. These operations are discussed in detail in later sections.
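Continuing the same plain-Scala model, a DStream operation can be pictured as the corresponding collection operation applied to every batch independently. The sketch below mirrors the lines-to-words flatMap from the text, followed by a per-batch word count; the Batches alias and both helper names are hypothetical, chosen only for illustration, and none of this is Spark code.

```scala
object DStreamOpsSketch {
  // Model a DStream as a sequence of batches; each inner Seq stands in for one RDD.
  type Batches[A] = Vector[Seq[A]]

  // A DStream operation is applied independently to every underlying batch,
  // like flatMap being applied to each RDD of the lines DStream.
  def flatMapEach[A, B](stream: Batches[A])(f: A => Seq[B]): Batches[B] =
    stream.map(batch => batch.flatMap(f))

  // Per-batch word count, analogous to words.map(w => (w, 1)).reduceByKey(_ + _).
  def countEach(words: Batches[String]): Vector[Map[String, Int]] =
    words.map(batch => batch.groupBy(identity).map { case (w, ws) => w -> ws.size })

  def main(args: Array[String]): Unit = {
    val lines: Batches[String] = Vector(Seq("to be or", "not to be"), Seq("be quick"))
    val words = flatMapEach(lines)(_.split(" ").toSeq)
    println(words)
    println(countEach(words))
  }
}
```

Note that counts never mix across batches here; combining data from several batches is exactly what the window and state operations below add.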

Operations

DStreams support transformations and output operations; the latter differ somewhat from Spark's actions.

There are two kinds of DStream operations - transformations and output operations.

Similar to RDD transformations, DStream transformations operate on one or more DStreams to create new DStreams with transformed data.

After applying a sequence of transformations to the input streams, output operations need to be called, which write data out to an external data sink, such as a filesystem or a database.

Most transformations are the same as those on RDDs (see the original guide); here I focus on the few below.

UpdateStateByKey Operation

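Continuously update state with stream data, e.g. the running word count in the example below.

You only need to define an updateFunction that describes how to update the existing state based on the new values.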

The updateStateByKey operation allows you to maintain arbitrary stateful computation, where you want to maintain some state data and continuously update it with new information.

To use this, you will have to do two steps.

- Define the state - The state can be of arbitrary data type.

- Define the state update function - Specify with a function how to update the state using the previous state and the new values from input stream.

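Let's illustrate this with an example. Say you want to maintain a running count of each word seen in a text data stream. Here, the running count is the state and it is an integer. We define an update function that takes the new values for a word from the current batch together with the previous running count, and returns the updated count.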
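The running-count update function can be sketched as follows. Its shape, (Seq[Int], Option[Int]) => Option[Int], follows the (new values, previous state) => new state contract described above. The simulate helper is a hypothetical local stand-in for what updateStateByKey does across batches (call the function for every key that has state or new values, and drop keys for which it returns None); it is not Spark code.

```scala
object RunningCountSketch {
  // Update function with the (new values, previous state) => new state shape used by updateStateByKey.
  def updateFunction(newValues: Seq[Int], runningCount: Option[Int]): Option[Int] = {
    val newCount = runningCount.getOrElse(0) + newValues.sum // previous count (0 if none) plus this batch
    Some(newCount)
  }

  // Hypothetical local simulation: apply updateFunction batch by batch to every key that
  // either already has state or appears in the new batch; a None result would drop the key.
  def simulate(batches: Seq[Seq[(String, Int)]]): Map[String, Int] =
    batches.foldLeft(Map.empty[String, Int]) { (state, batch) =>
      val newValuesByKey = batch.groupBy(_._1).map { case (k, kvs) => k -> kvs.map(_._2) }
      (state.keySet ++ newValuesByKey.keySet).flatMap { k =>
        updateFunction(newValuesByKey.getOrElse(k, Seq.empty[Int]), state.get(k)).map(k -> _)
      }.toMap
    }

  def main(args: Array[String]): Unit = {
    val batches = Seq(
      Seq("spark" -> 1, "streaming" -> 1),
      Seq("spark" -> 1),
      Seq.empty[(String, Int)])
    println(simulate(batches))
  }
}
```

In a real job the function would be passed as pairs.updateStateByKey[Int](updateFunction _), where pairs is the (word, 1) DStream from the earlier example; as the checkpointing section below notes, DStreams created by updateStateByKey must be checkpointed.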

Transform Operation

transform lets you apply arbitrary RDD operations on a DStream. What is especially interesting is that, through transform, you can use Spark's MLlib and graph algorithms.

The transform operation (along with its variations like transformWith) allows arbitrary RDD-to-RDD functions to be applied on a DStream. It can be used to apply any RDD operation that is not exposed in the DStream API. For example, joining every batch in a data stream with another dataset is not directly exposed in the DStream API, but you can easily use transform to do this. This opens up very powerful possibilities: for example, you can do real-time data cleaning by joining the input data stream with precomputed spam information (maybe generated with Spark as well) and then filtering based on it.

In fact, you can also use machine learning and graph computation algorithms in the transform method.

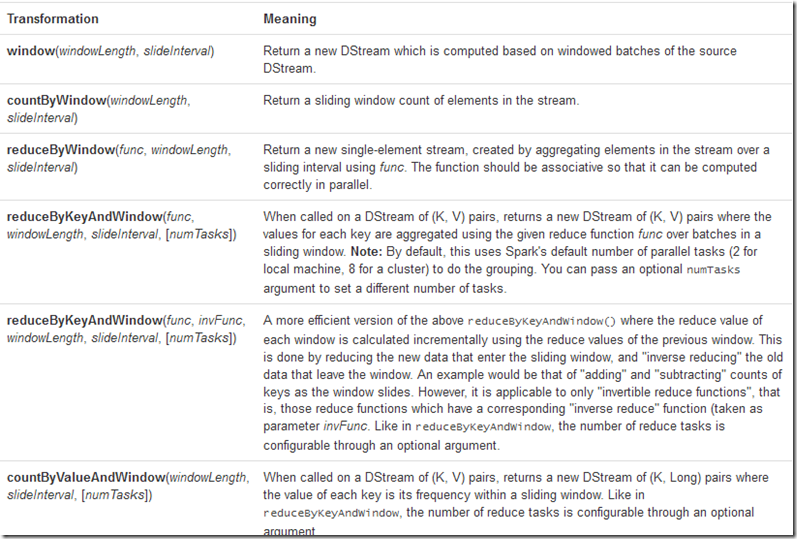
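As a rough picture of the data-cleaning use case, the sketch below models transform as "apply an arbitrary batch-to-batch function to every batch" and uses it to drop messages from senders flagged in a precomputed spam table. The spamInfo map, the email addresses and the clean function are made-up examples; with real Spark the per-batch function would receive an RDD and could perform an actual join against another RDD, whereas here a map lookup stands in for that join.

```scala
object TransformSketch {
  type Batches[A] = Vector[Seq[A]]

  // transform: apply an arbitrary batch-to-batch (RDD-to-RDD) function to every batch.
  def transform[A, B](stream: Batches[A])(f: Seq[A] => Seq[B]): Batches[B] = stream.map(f)

  // Hypothetical precomputed spam table (sender -> isSpam).
  val spamInfo: Map[String, Boolean] = Map("spam@example.com" -> true, "alice@example.com" -> false)

  // Per-batch cleaning step: look each sender up in spamInfo (a lookup join) and drop spam.
  // Unknown senders are treated as not spam.
  def clean(batch: Seq[(String, String)]): Seq[(String, String)] =
    batch.filterNot { case (sender, _) => spamInfo.getOrElse(sender, false) }

  def main(args: Array[String]): Unit = {
    val messages: Batches[(String, String)] = Vector(
      Seq("alice@example.com" -> "hi", "spam@example.com" -> "buy now"),
      Seq("bob@example.com" -> "lunch?"))
    println(transform(messages)(clean))
  }
}
```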

Window Operations

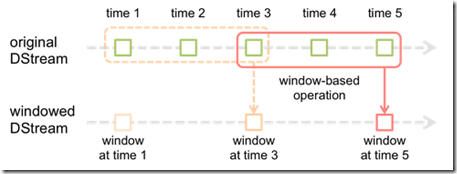

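As a stream processing system, it naturally needs to support the typical sliding-window-based methods.

As the figure shows, the multiple RDDs that fall within a sliding window on the original DStream are combined into a single RDD of the windowed DStream.

There are two important parameters, window length and slide interval, which are 3 and 2 respectively in the figure.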

Finally, Spark Streaming also provides windowed computations, which allow you to apply transformations over a sliding window of data. The following figure illustrates this sliding window.

As shown in the figure, every time the window slides over a source DStream, the source RDDs that fall within the window are combined and operated upon to produce the RDDs of the windowed DStream. In this specific case, the operation is applied over the last 3 time units of data, and slides by 2 time units. This shows that any window-based operation needs to specify two parameters.

- window length - The duration of the window (3 in the figure)

- slide interval - The interval at which the window-based operation is performed (2 in the figure).

These two parameters must be multiples of the batch interval of the source DStream (1 in the figure).
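The window arithmetic can be checked with a few lines of plain Scala. The sketch uses the values given above (batch interval 1, window length 3, slide interval 2) and assumes the first window fires at time 3; the helper names are illustrative, and the require calls encode the multiple-of-batch-interval rule.

```scala
object WindowSketch {
  // Batch end times covered by a window ending at time t: the batches in (t - windowLength, t].
  def batchesInWindow(t: Int, batchInterval: Int, windowLength: Int): Seq[Int] = {
    require(windowLength % batchInterval == 0, "window length must be a multiple of the batch interval")
    (t - windowLength + batchInterval to t by batchInterval).filter(_ > 0)
  }

  // Times at which the windowed operation fires, starting at `first` and advancing by slideInterval.
  def firingTimes(first: Int, slideInterval: Int, batchInterval: Int, until: Int): Seq[Int] = {
    require(slideInterval % batchInterval == 0, "slide interval must be a multiple of the batch interval")
    first to until by slideInterval
  }

  def main(args: Array[String]): Unit = {
    // Batch interval 1, window length 3, slide interval 2.
    for (t <- firingTimes(first = 3, slideInterval = 2, batchInterval = 1, until = 5))
      println(s"window at time $t covers batches ${batchesInWindow(t, 1, 3).mkString(", ")}")
  }
}
```

Running main prints that the window at time 3 covers batches 1, 2, 3 and the window at time 5 covers batches 3, 4, 5, so consecutive windows overlap by one batch.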

An example: count words over the last 30 seconds, advancing by 10 seconds each time.

Let's illustrate the window operations with an example. Say you want to extend the earlier example by generating word counts over the last 30 seconds of data, every 10 seconds. To do this, we have to apply the reduceByKey operation on the pairs DStream of (word, 1) pairs over the last 30 seconds of data. This is done using the operation reduceByKeyAndWindow.

```scala
// Reduce last 30 seconds of data, every 10 seconds
val windowedWordCounts = pairs.reduceByKeyAndWindow(_ + _, Seconds(30), Seconds(10))
```

Some of the common window-based operations are as follows. All of these operations take the two parameters described above, windowLength and slideInterval.

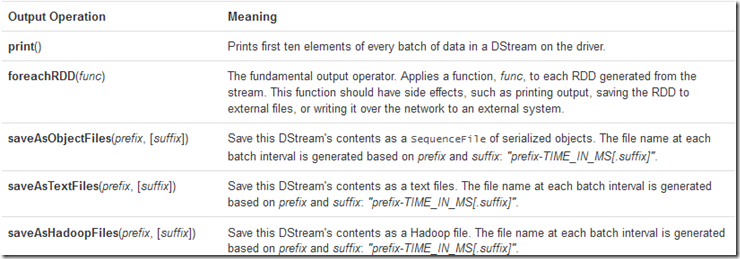
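To see what reduceByKeyAndWindow computes, and why a variant that takes an inverse function can be cheaper, the sketch below compares two plain-Scala versions of a 30-second window sliding every 10 seconds, assuming a 10-second batch interval so that the window spans 3 batches and slides by 1. The naive version re-reduces every batch in the window; the incremental one adds the entering batch and subtracts the leaving one. This models the idea only, not Spark's implementation, and all names are hypothetical.

```scala
object WindowedCountSketch {
  type Counts = Map[String, Int]

  // Word counts for one batch, like reduceByKey(_ + _) on that batch's (word, 1) pairs.
  def countBatch(words: Seq[String]): Counts =
    words.groupBy(identity).map { case (w, ws) => w -> ws.size }

  // Combine two count maps key by key with op (missing keys count as 0).
  def merge(a: Counts, b: Counts, op: (Int, Int) => Int): Counts =
    (a.keySet ++ b.keySet).map(k => k -> op(a.getOrElse(k, 0), b.getOrElse(k, 0))).toMap

  // Naive: re-reduce every batch inside the window each time the window slides by one batch.
  def naive(batches: Vector[Counts], windowBatches: Int): Vector[Counts] =
    batches.indices.toVector.map { i =>
      batches.slice(math.max(0, i - windowBatches + 1), i + 1)
        .foldLeft(Map.empty[String, Int]) { (acc, c) => merge(acc, c, _ + _) }
    }

  // Incremental: previous window + entering batch - leaving batch (the inverse-function idea).
  def incremental(batches: Vector[Counts], windowBatches: Int): Vector[Counts] =
    batches.indices.toVector.scanLeft(Map.empty[String, Int]) { (prev, i) =>
      val withNew = merge(prev, batches(i), _ + _)
      val leaving = i - windowBatches
      val updated = if (leaving >= 0) merge(withNew, batches(leaving), _ - _) else withNew
      updated.filter { case (_, n) => n != 0 } // drop words whose count fell to zero
    }.tail

  def main(args: Array[String]): Unit = {
    val batches = Vector(Seq("a", "b"), Seq("a"), Seq("c"), Seq("a", "c")).map(countBatch)
    println(naive(batches, 3))
    println(incremental(batches, 3))
  }
}
```

Both versions produce the same counts; the incremental one depends on the previous window's result, which is one way to see why DStreams from reduceByKeyAndWindow with an inverse function must be checkpointed.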

Output Operations

When an output operator is called, it triggers the computation of a stream. Currently the following output operators are defined:

Persistence

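DStream persistence is implemented by persisting each underlying RDD; there is nothing special about it.

Note that window-based operations automatically persist their data, because in sliding-window algorithms each RDD is read multiple times.

Moreover, for data from Kafka, Flume, sockets, etc., the default persistence policy is to create 2 replicas written to two nodes, for fault tolerance.

Spark's fault tolerance depends on replay. Ordinary Spark data is read from files, so replay just means re-reading the data, which is simple.

For stream data, however, once data has flowed past it cannot be replayed, so Spark Streaming must keep 2 replicas at the source.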

Similar to RDDs, DStreams also allow developers to persist the stream’s data in memory. That is, using persist() method on a DStream would automatically persist every RDD of that DStream in memory. This is useful if the data in the DStream will be computed multiple times (e.g., multiple operations on the same data). For window-based operations like reduceByWindow and reduceByKeyAndWindow and state-based operations like updateStateByKey, this is implicitly true. Hence, DStreams generated by window-based operations are automatically persisted in memory, without the developer calling persist().

For input streams that receive data over the network (such as, Kafka, Flume, sockets, etc.), the default persistence level is set to replicate the data to two nodes for fault-tolerance.

Note that, unlike RDDs, the default persistence level of DStreams keeps the data serialized in memory. This is further discussed in the Performance Tuning section. More information on different persistence levels can be found in Spark Programming Guide.

RDD Checkpointing

DStreams also support checkpointing, i.e. writing RDDs to HDFS. Checkpointing naturally affects response time, so you need to strike a balance; typically the checkpoint interval is 5-10 times the sliding interval, and at least 10 seconds.

In DStreams, checkpointing is mainly used for stateful operations, such as the running word count mentioned earlier. Because of the nature of stream processing, even persisted data can only be kept for a limited time, so without checkpointing, results from too long ago cannot be replayed after a crash. Checkpointing therefore matters more for DStreams than for batch Spark, where it merely saves recomputation time.

A stateful operation is one which operates over multiple batches of data. This includes all window-based operations and the updateStateByKey operation. Since stateful operations have a dependency on previous batches of data, they continuously accumulate metadata over time. To clear this metadata, streaming supports periodic checkpointing by saving intermediate data to HDFS. Note that checkpointing also incurs the cost of saving to HDFS, which may cause the corresponding batch to take longer to process. Hence, the interval of checkpointing needs to be set carefully. At small batch sizes (say 1 second), checkpointing every batch may significantly reduce operation throughput. Conversely, checkpointing too infrequently causes the lineage and task sizes to grow, which may have detrimental effects. Typically, a checkpoint interval of 5-10 times the sliding interval of a DStream is a good setting to try.

To enable checkpointing, the developer has to provide the HDFS path to which RDDs will be saved. This is done by using

ssc.checkpoint(hdfsPath) // assuming ssc is the StreamingContext or JavaStreamingContext

The interval of checkpointing of a DStream can be set by using

dstream.checkpoint(checkpointInterval)

For DStreams that must be checkpointed (that is, DStreams created by updateStateByKey and reduceByKeyAndWindow with inverse function), the checkpoint interval of the DStream is by default set to a multiple of the DStream's sliding interval such that it is at least 10 seconds.
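The default described above (the smallest multiple of the sliding interval that is at least 10 seconds) and the 5-10x rule of thumb can be expressed as plain arithmetic. This is a sketch of the rule as stated in the text, not a copy of Spark's source; the function names are hypothetical, and a chosen value would then be passed to dstream.checkpoint as a Duration.

```scala
object CheckpointIntervalSketch {
  // Smallest multiple of the sliding interval that is at least minMs (10 s unless overridden).
  def defaultCheckpointIntervalMs(slideMs: Long, minMs: Long = 10000L): Long = {
    require(slideMs > 0, "sliding interval must be positive")
    val multiples = math.max(1L, (minMs + slideMs - 1) / slideMs) // ceiling division, at least 1
    multiples * slideMs
  }

  // Rule of thumb from the text: try 5 to 10 times the sliding interval.
  def suggestedRangeMs(slideMs: Long): (Long, Long) = (5 * slideMs, 10 * slideMs)

  def main(args: Array[String]): Unit =
    for (slide <- Seq(1000L, 3000L, 20000L))
      println(s"slide $slide ms -> default ${defaultCheckpointIntervalMs(slide)} ms, " +
        s"suggested ${suggestedRangeMs(slide)}")
}
```

For example, a 3-second slide gives a 12-second default (4 slides), while a 20-second slide is already above 10 seconds and is used as-is.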

Performance Tuning

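For performance tuning, there are two main considerations:

1. How to reduce the execution time of each batch: increase parallelism, reduce unnecessary serialization, and lower the task launch overhead (reduce task size and use Standalone or coarse-grained Mesos mode).

2. Set an appropriate batch size. The smaller the batch size, the closer the system is to true stream processing, but the larger the overhead, so a balance is needed. The suggested approach is to test first with a fairly conservative batch size and a low data rate, and then, if the system is stable, gradually reduce the batch size and increase the data rate.

Getting the best performance of a Spark Streaming application on a cluster requires a bit of tuning. This section explains a number of the parameters and configurations that can be tuned to improve the performance of your application. At a high level, you need to consider two things: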

- Reducing the processing time of each batch of data by efficiently using cluster resources.

- Setting the right batch size such that the data processing can keep up with the data ingestion.

Reducing the Processing Time of each Batch

There are a number of optimizations that can be done in Spark to minimize the processing time of each batch. These have been discussed in detail in Tuning Guide. This section highlights some of the most important ones.

Level of Parallelism

Cluster resources may be under-utilized if the number of parallel tasks used in any stage of the computation is not high enough. For example, for distributed reduce operations like reduceByKey and reduceByKeyAndWindow, the default number of parallel tasks is 8. You can pass the level of parallelism as an argument (see the PairDStreamFunctions documentation), or set the config property spark.default.parallelism to change the default.

Data Serialization

The overhead of data serialization can be significant, especially when sub-second batch sizes are to be achieved. There are two aspects to it.

Serialization of RDD data in Spark: Please refer to the detailed discussion on data serialization in the Tuning Guide. However, note that unlike in Spark core, Spark Streaming by default persists RDDs as serialized byte arrays to minimize GC-related pauses.

Serialization of input data: To ingest external data into Spark, data received as bytes (say, from the network) needs to be deserialized from bytes and re-serialized into Spark's serialization format. Hence, the deserialization overhead of input data may be a bottleneck.

Task Launching Overheads

If the number of tasks launched per second is high (say, 50 or more per second), then the overhead of sending out tasks to the slaves may be significant and will make it hard to achieve sub-second latencies. The overhead can be reduced by the following changes:

Task Serialization: Using Kryo serialization for serializing tasks can reduce the task sizes, and therefore reduce the time taken to send them to the slaves.

Execution mode: Running Spark in Standalone mode or coarse-grained Mesos mode leads to better task launch times than the fine-grained Mesos mode. Please refer to the Running on Mesos guide for more details.

These changes may reduce batch processing time by 100s of milliseconds, thus allowing sub-second batch size to be viable.

Setting the Right Batch Size

For a Spark Streaming application running on a cluster to be stable, the processing of the data streams must keep up with the rate of ingestion of the data streams. Depending on the type of computation, the batch size used may have a significant impact on the rate of ingestion that can be sustained by the Spark Streaming application on fixed cluster resources. For example, let us consider the earlier WordCountNetwork example. For a particular data rate, the system may be able to keep up with reporting word counts every 2 seconds (i.e., batch size of 2 seconds), but not every 500 milliseconds.

A good approach to figure out the right batch size for your application is to test it with a conservative batch size (say, 5-10 seconds) and a low data rate. To verify whether the system is able to keep up with the data rate, you can check the value of the end-to-end delay experienced by each processed batch (either look for "Total delay" in the Spark driver log4j logs, or use the StreamingListener interface). If the delay remains comparable to the batch size, then the system is stable. Otherwise, if the delay is continuously increasing, the system is unable to keep up and is therefore unstable. Once you have an idea of a stable configuration, you can try increasing the data rate and/or reducing the batch size. Note that a momentary increase in the delay due to a temporary data rate increase may be fine, as long as the delay reduces back to a low value (i.e., less than the batch size).
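The stability criterion can be illustrated with a simplified queueing model: batch i becomes ready at (i + 1) * batchMs, batches are processed one at a time, and the total delay is finish time minus ready time. The model, its names and the processing times below are assumptions for illustration; real measurements would come from the "Total delay" log lines or a StreamingListener.

```scala
object BatchDelaySketch {
  // Simplified model: batch i is ready at (i + 1) * batchMs and batches run one at a time,
  // so a batch starts at the later of its ready time and the previous batch's finish time.
  // Returns the total delay (finish - ready) of each batch, in milliseconds.
  def totalDelays(batchMs: Long, processingMs: Seq[Long]): Seq[Long] = {
    val finishes = processingMs.zipWithIndex.scanLeft(0L) { case (prevFinish, (p, i)) =>
      val ready = (i + 1) * batchMs
      math.max(ready, prevFinish) + p
    }.tail
    finishes.zipWithIndex.map { case (finish, i) => finish - (i + 1) * batchMs }
  }

  // Heuristic from the text: the system is unstable if the delay keeps increasing.
  def keepsGrowing(delays: Seq[Long]): Boolean =
    delays.size > 1 && delays.zip(delays.tail).forall { case (a, b) => b > a }

  def main(args: Array[String]): Unit = {
    println(totalDelays(2000L, Seq(1500L, 1500L, 1500L)))               // keeps up
    println(totalDelays(2000L, Seq(2500L, 2500L, 2500L)))               // falls behind
    println(totalDelays(2000L, Seq(1500L, 3000L, 1500L, 1500L, 1500L))) // temporary spike
  }
}
```

With a 2-second batch, 1.5 s of processing per batch gives a constant 1.5 s delay (stable), 2.5 s per batch gives delays of 2.5, 3.0, 3.5 s and so on (unstable), and a single slow batch produces a bump (1.5, 3.0, 2.5, 2.0, 1.5 s) that drains back down.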

24/7 Operation

In streaming, the data is unbounded, so metadata such as generated RDDs must be cleaned up.

The cleanup time can be set via spark.cleaner.ttl before the SparkContext is created.

Note that the cleanup time must be longer than the window length of any window operation.

By default, Spark does not forget any of the metadata (RDDs generated, stages processed, etc.). But for a Spark Streaming application to operate 24/7, it is necessary for Spark to periodically clean up its metadata. This can be enabled by setting the configuration property spark.cleaner.ttl to the number of seconds you want any metadata to persist. For example, setting spark.cleaner.ttl to 600 would cause Spark to periodically clean up all metadata and persisted RDDs that are older than 10 minutes. Note that this property needs to be set before the SparkContext is created.

This value is closely tied with any window operation that is being used. Any window operation would require the input data to be persisted in memory for at least the duration of the window. Hence it is necessary to set the TTL to at least the duration of the largest window used in the Spark Streaming application. If it is set too low, the application will throw an exception saying so.
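A simple pre-flight check can catch a TTL that is too short before the application starts. The validateTtl helper below is hypothetical; it only compares numbers, and the window durations would have to be listed by hand. The validated value would then be set on the configuration before the SparkContext is created, for example with SparkConf's set("spark.cleaner.ttl", ...).

```scala
object CleanerTtlSketch {
  // Hypothetical check: spark.cleaner.ttl (in seconds) must be at least the largest window used,
  // otherwise data that a window still needs could be cleaned up while the window is open.
  def validateTtl(ttlSeconds: Long, windowSeconds: Seq[Long]): Either[String, Long] = {
    val largest = if (windowSeconds.isEmpty) 0L else windowSeconds.max
    if (ttlSeconds >= largest) Right(ttlSeconds)
    else Left(s"spark.cleaner.ttl = $ttlSeconds s is shorter than the largest window ($largest s)")
  }

  def main(args: Array[String]): Unit = {
    println(validateTtl(600L, Seq(30L, 60L))) // 10 minutes covers a 60 s window
    println(validateTtl(20L, Seq(30L)))       // too short for a 30 s window
  }
}
```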

Monitoring

Besides Spark's in-built monitoring capabilities, the progress of a Spark Streaming program can also be monitored using the [StreamingListener](streaming/index.html#org.apache.spark.scheduler.StreamingListener) interface, which allows you to get statistics of batch processing times, queueing delays, and total end-to-end delays. Note that this is still an experimental API and it is likely to be improved upon (i.e., more information reported) in the future.

Memory Tuning

Tuning the memory usage and GC behavior of Spark applications has been discussed in great detail in the Tuning Guide. It is recommended that you read that. In this section, we highlight a few customizations that are strongly recommended to minimize GC-related pauses in Spark Streaming applications and achieve more consistent batch processing times.

Default persistence level of DStreams: Unlike RDDs, the default persistence level of DStreams serializes the data in memory (that is, StorageLevel.MEMORY_ONLY_SER for DStream compared to StorageLevel.MEMORY_ONLY for RDDs). Even though keeping the data serialized incurs higher serialization/deserialization overheads, it significantly reduces GC pauses.

Clearing persistent RDDs: By default, all persistent RDDs generated by Spark Streaming will be cleared from memory based on Spark's in-built policy (LRU). If spark.cleaner.ttl is set, then persistent RDDs that are older than that value are periodically cleared. As mentioned earlier, this needs to be carefully set based on the operations used in the Spark Streaming program. However, a smarter unpersisting of RDDs can be enabled by setting the configuration property spark.streaming.unpersist to true. This makes the system figure out which RDDs do not need to be kept around and unpersist them. This is likely to reduce the RDD memory usage of Spark, potentially improving GC behavior as well.

Concurrent garbage collector: Using the concurrent mark-and-sweep GC further minimizes the variability of GC pauses. Even though concurrent GC is known to reduce the overall processing throughput of the system, its use is still recommended to achieve more consistent batch processing times.

Fault-tolerance Properties

In short, fault tolerance in Spark is easy to achieve: as long as the source data exists, any RDD can be replayed (recomputed).

So the key question is how to make the source data fault-tolerant.

In this section, we are going to discuss the behavior of Spark Streaming application in the event of a node failure. To understand this, let us remember the basic fault-tolerance properties of Spark’s RDDs.

- An RDD is an immutable, deterministically re-computable, distributed dataset. Each RDD remembers the lineage of deterministic operations that were used on a fault-tolerant input dataset to create it.

- If any partition of an RDD is lost due to a worker node failure, then that partition can be re-computed from the original fault-tolerant dataset using the lineage of operations.

Since all data transformations in Spark Streaming are based on RDD operations, as long as the input dataset is present, all intermediate data can be recomputed. Keeping these properties in mind, we are going to discuss the failure semantics in more detail.

Failure of a Worker Node

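For a worker node failure, you just need to replay the RDDs on another worker, provided that the source data can be fetched again.

So when HDFS is the input source, fault tolerance is not a problem.

The key issue is how to guarantee that the source data can be re-read when the network is the input source (e.g. Kafka, Flume, sockets).

The approach is to first place the source data in the memory of two nodes using 2 replicas; if one node fails, the other copy can be used for replay.

There is still one case where data can be lost, however: when the node running the network receiver fails, data that has been read from the network but not yet replicated is lost.

Also note that transformations on RDDs can guarantee exactly-once semantics, but output operations can only guarantee at-least-once semantics.

For example, if an RDD has been partially written to a file when a failure occurs, it will be written again when a worker replays the RDD.

So if exactly-once output is required, you must add additional transaction-like mechanisms yourself.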

There are two failure behaviors based on which input sources are used.

- Using HDFS files as input source - Since the data is reliably stored on HDFS, all data can be re-computed and therefore no data will be lost due to any failure.

- Using any input source that receives data through a network - For network-based data sources like Kafka and Flume, the received input data is replicated in memory between nodes of the cluster (default replication factor is 2). So if a worker node fails, then the system can recompute the lost data from the leftover copy of the input data. However, if the worker node where a network receiver was running fails, then a tiny bit of data may be lost, that is, the data received by the system but not yet replicated to other node(s). The receiver will be started on a different node and it will continue to receive data.

Since all data is modeled as RDDs with their lineage of deterministic operations, any recomputation always leads to the same result. As a result, all DStream transformations are guaranteed to have exactly-once semantics. That is, the final transformed result will be the same even if there was a worker node failure. However, output operations (like foreachRDD) have at-least-once semantics, that is, the transformed data may get written to an external entity more than once in the event of a worker failure. While this is acceptable for saving to HDFS using the saveAs*Files operations (as the file will simply get overwritten by the same data), additional transaction-like mechanisms may be necessary to achieve exactly-once semantics for output operations.
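The difference between at-least-once output and an idempotent sink can be shown without Spark. In the sketch below a batch is written twice, as it could be after a worker failure; the append-only sink ends up with duplicate rows, while a sink keyed by batch time simply overwrites the replayed batch, which is the same reason the text gives for saveAs*Files being safe. Both sink classes are hypothetical stand-ins for an external store, and keying by batch time is one possible design, not a mechanism Spark provides.

```scala
import scala.collection.mutable

object OutputSemanticsSketch {
  // The output of one batch, identified by its batch time in milliseconds.
  final case class BatchResult(batchTimeMs: Long, counts: Map[String, Int])

  // Non-idempotent sink: every write appends rows, so a replayed batch is stored twice.
  final class AppendSink {
    val rows = mutable.ArrayBuffer.empty[(Long, String, Int)]
    def write(r: BatchResult): Unit =
      r.counts.foreach { case (w, c) => rows += ((r.batchTimeMs, w, c)) }
  }

  // Idempotent sink: keyed by batch time, so rewriting a batch overwrites the same entry.
  final class KeyedSink {
    val byBatch = mutable.Map.empty[Long, Map[String, Int]]
    def write(r: BatchResult): Unit = byBatch(r.batchTimeMs) = r.counts
  }

  def main(args: Array[String]): Unit = {
    val b1 = BatchResult(1000L, Map("a" -> 1))
    val b2 = BatchResult(2000L, Map("a" -> 2, "b" -> 1))
    val append = new AppendSink
    val keyed = new KeyedSink
    // b2 is written twice, simulating a replay after a failure.
    for (r <- Seq(b1, b2, b2)) { append.write(r); keyed.write(r) }
    println(s"append sink rows: ${append.rows.size}, keyed sink batches: ${keyed.byBatch.size}")
  }
}
```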

Failure of the Driver Node

For a driver node failure, Spark does nothing; the job simply fails.

For Spark Streaming this is unacceptable, because stream data cannot be read again, and stream processing must run 24/7.

So Spark Streaming does more here: it periodically checkpoints the DStreams' metadata to HDFS, so that when the driver node fails and is restarted, it can restore its state from the checkpoint files and continue running.

However, with network input sources, data can currently still be lost: a driver node failure causes the source data to be lost, even though the metadata was checkpointed.

To allow a streaming application to operate 24/7, Spark Streaming allows a streaming computation to be resumed even after the failure of the driver node. Spark Streaming periodically writes the metadata information of the DStreams set up through the StreamingContext to an HDFS directory (can be any Hadoop-compatible filesystem). This periodic checkpointing can be enabled by setting the checkpoint directory using ssc.checkpoint(<checkpoint directory>) as described earlier. On failure of the driver node, the lost StreamingContext can be recovered from this information and restarted.

To allow a Spark Streaming program to be recoverable, it must be written in a way such that it has the following behavior:

- When the program is being started for the first time, it will create a new StreamingContext, set up all the streams and then call start().

- When the program is being restarted after failure, it will re-create a StreamingContext from the checkpoint data in the checkpoint directory.

```scala
// Function to create and set up a new StreamingContext
def functionToCreateContext(): StreamingContext = {
  val ssc = new StreamingContext(...)   // new context
  val lines = ssc.socketTextStream(...) // create DStreams
  ...
  ssc.checkpoint(checkpointDirectory)   // set checkpoint directory
  ssc
}

// Get StreamingContext from checkpoint data or create a new one
val context = StreamingContext.getOrCreate(checkpointDirectory, functionToCreateContext _)

// Do additional setup on context that needs to be done,
// irrespective of whether it is being started or restarted
context. ...

// Start the context
context.start()
context.awaitTermination()
```

If the checkpointDirectory exists, then the context will be recreated from the checkpoint data. If the directory does not exist (i.e., running for the first time), then the function functionToCreateContext will be called to create a new context and set up the DStreams. See the Scala example RecoverableNetworkWordCount. This example appends the word counts of network data into a file. You can also explicitly create a StreamingContext from the checkpoint data and start the computation by using new StreamingContext(checkpointDirectory).

Note: If Spark Streaming and/or the Spark Streaming program is recompiled, you must create a new StreamingContext or JavaStreamingContext, not recreate from checkpoint data. This is because trying to load a context from checkpoint data may fail if the data was generated before recompilation of the classes. So, if you are using getOrCreate, then make sure that the checkpoint directory is explicitly deleted every time recompiled code needs to be launched.

This failure recovery can be done automatically using Spark's standalone cluster mode, which allows any Spark application's driver to be launched within the cluster and also ensures automatic restart of the driver on failure (see supervise mode). This can be tested locally by launching the above example using the supervise mode in a local standalone cluster and killing the Java process running the driver (it will be shown as DriverWrapper when jps is run to show all active Java processes). The driver should be automatically restarted, and the word counts will continue.

For other deployment environments like Mesos and Yarn, you have to restart the driver through other mechanisms.

Recovery Semantics

There are two different failure behaviors based on which input sources are used.

- Using HDFS files as input source - Since the data is reliably stored on HDFS, all data can be re-computed and therefore no data will be lost due to any failure.

- Using any input source that receives data through a network - The received input data is replicated in memory to multiple nodes. Since all the data in the Spark workers' memory is lost when the Spark driver fails, the past input data will not be accessible when the driver recovers. Hence, if stateful and window-based operations are used (like updateStateByKey, window, countByValueAndWindow, etc.), then the intermediate state will not be recovered completely.

In future releases, we will support full recoverability for all input sources. Note that for non-stateful transformations like map, count, and reduceByKey, with all input streams, the system, upon restarting, will continue to receive and process new data.