mnist

- MNIST classification with TensorFlow (NN, CNN, LSTM, stacked LSTM (nlstm), Bi-LSTM, CNN-RNN)

mnist

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def compute_accuracy(v_x, v_y):
    # evaluate the accuracy op (built once below) instead of adding new ops on every call
    return sess.run(accuracy, feed_dict={x: v_x, y: v_y})


def add_layer(inputs, in_size, out_size, activation_function=None):
    # init W: a matrix of shape [in_size, out_size]
    Weights = tf.Variable(tf.random_normal([in_size, out_size]))
    # init b: a matrix of shape [1, out_size]
    biases = tf.Variable(tf.zeros([1, out_size]) + 0.1)
    # compute the affine transform
    Wx_plus_b = tf.matmul(inputs, Weights) + biases
    # apply the activation function, if any
    if activation_function is None:
        outputs = Wx_plus_b
    else:
        outputs = activation_function(Wx_plus_b)
    return outputs


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# define placeholders for input
x = tf.placeholder(tf.float32, [None, 784])
y = tf.placeholder(tf.float32, [None, 10])

# add a single layer that outputs logits; softmax gives class probabilities
logits = add_layer(x, 784, 10, activation_function=None)
prediction = tf.nn.softmax(logits)

# cross-entropy from logits (avoids nan from tf.log(0) in -sum(y * log(prediction)))
cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=y))

# use GradientDescentOptimizer with learning rate 0.5
train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

# accuracy: fraction of samples whose predicted class matches the label
correct_prediction = tf.equal(tf.argmax(prediction, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# init session and all variables
sess = tf.Session()
sess.run(tf.global_variables_initializer())

# start training
for i in range(1000):
    # train on mini-batches of 100 examples
    batch_x, batch_y = mnist.train.next_batch(100)
    # train_step itself returns None, so fetch the loss alongside it
    _, loss_val = sess.run([train_step, cross_entropy], feed_dict={x: batch_x, y: batch_y})
    if i % 10 == 0:
        print(loss_val)
    if i % 50 == 0:
        print(compute_accuracy(mnist.test.images, mnist.test.labels))
```
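Writing the loss by hand as `-sum(y * log(softmax(z)))` breaks as soon as one softmax probability underflows to 0.0: `log(0)` is `-inf`, and multiplying it by a 0 label gives `nan`. `tf.nn.softmax_cross_entropy_with_logits` works from the logits instead. The pure-Python sketch below (no TensorFlow; both helper names are hypothetical) contrasts the literal formula with the log-sum-exp form of the same quantity.

```python
import math


def naive_cross_entropy(logits, labels):
    """-sum(y * log(softmax(z))) computed literally, as with tf.log(prediction)."""
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # math.log(0.0) raises in Python, so mimic TensorFlow's log(0) = -inf
    logs = [math.log(p) if p > 0 else -math.inf for p in probs]
    return -sum(y * lp for y, lp in zip(labels, logs))


def stable_cross_entropy(logits, labels):
    """Same quantity via log-softmax: log p_i = z_i - logsumexp(z)."""
    m = max(logits)
    logsumexp = m + math.log(sum(math.exp(z - m) for z in logits))
    return -sum(y * (z - logsumexp) for y, z in zip(labels, logits))


logits = [0.0, 50.0, -800.0]  # exp(-800) underflows to 0.0
labels = [0, 1, 0]
print(naive_cross_entropy(logits, labels))  # nan
print(stable_cross_entropy(logits, labels))  # finite, close to 0
```

For well-behaved logits the two functions agree; they only differ once a probability underflows.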

mnist_vis_tensorboard

```python
# encoding=utf-8
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)


def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


myGraph = tf.Graph()
with myGraph.as_default():
    with tf.name_scope('inputsAndLabels'):
        x_raw = tf.placeholder(tf.float32, shape=[None, 784])
        y = tf.placeholder(tf.float32, shape=[None, 10])

    with tf.name_scope('hidden1'):
        x = tf.reshape(x_raw, shape=[-1, 28, 28, 1])
        W_conv1 = weight_variable([5, 5, 1, 32])
        b_conv1 = bias_variable([32])
        l_conv1 = tf.nn.relu(tf.nn.conv2d(x, W_conv1, strides=[1, 1, 1, 1], padding='SAME') + b_conv1)
        l_pool1 = tf.nn.max_pool(l_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        tf.summary.image('x_input', x, max_outputs=10)
        tf.summary.histogram('W_con1', W_conv1)
        tf.summary.histogram('b_con1', b_conv1)

    with tf.name_scope('hidden2'):
        W_conv2 = weight_variable([5, 5, 32, 64])
        b_conv2 = bias_variable([64])
        l_conv2 = tf.nn.relu(tf.nn.conv2d(l_pool1, W_conv2, strides=[1, 1, 1, 1], padding='SAME') + b_conv2)
        l_pool2 = tf.nn.max_pool(l_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        tf.summary.histogram('W_con2', W_conv2)
        tf.summary.histogram('b_con2', b_conv2)

    with tf.name_scope('fc1'):
        W_fc1 = weight_variable([64 * 7 * 7, 1024])
        b_fc1 = bias_variable([1024])
        l_pool2_flat = tf.reshape(l_pool2, [-1, 64 * 7 * 7])
        l_fc1 = tf.nn.relu(tf.matmul(l_pool2_flat, W_fc1) + b_fc1)
        keep_prob = tf.placeholder(tf.float32)
        l_fc1_drop = tf.nn.dropout(l_fc1, keep_prob)
        tf.summary.histogram('W_fc1', W_fc1)
        tf.summary.histogram('b_fc1', b_fc1)

    with tf.name_scope('fc2'):
        W_fc2 = weight_variable([1024, 10])
        b_fc2 = bias_variable([10])
        y_conv = tf.matmul(l_fc1_drop, W_fc2) + b_fc2
        # log this layer's own parameters (not W_fc1/b_fc1 a second time)
        tf.summary.histogram('W_fc2', W_fc2)
        tf.summary.histogram('b_fc2', b_fc2)

    with tf.name_scope('train'):
        cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=y_conv, labels=y))
        train_step = tf.train.AdamOptimizer(learning_rate=1e-4).minimize(cross_entropy)
        correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        tf.summary.scalar('loss', cross_entropy)
        tf.summary.scalar('accuracy', accuracy)

with tf.Session(graph=myGraph) as sess:
    sess.run(tf.global_variables_initializer())
    saver = tf.train.Saver()
    merged = tf.summary.merge_all()
    summary_writer = tf.summary.FileWriter('./mnistEven/', graph=sess.graph)

    for i in range(2000):
        batch = mnist.train.next_batch(50)
        _, loss_val = sess.run([train_step, cross_entropy],
                               feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 0.5})
        if i % 100 == 0:
            train_accuracy = accuracy.eval(feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 1.0})
            print('step %d training accuracy:%g' % (i, train_accuracy))
            print('loss = ' + str(loss_val))
            summary = sess.run(merged, feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 1.0})
            summary_writer.add_summary(summary, i)

    # flush pending events to disk for TensorBoard
    summary_writer.close()
    test_accuracy = accuracy.eval(feed_dict={x_raw: mnist.test.images, y: mnist.test.labels, keep_prob: 1.0})
    print('test accuracy:%g' % test_accuracy)
    saver.save(sess, save_path='./model/mnistmodel', global_step=1)
```
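Every convolution and pooling op in this model uses `padding='SAME'`, for which TensorFlow's output spatial size is `ceil(input / stride)` regardless of kernel size. That is why the flattened input to `fc1` is `64*7*7`. A small pure-Python sketch tracing one image through the layers (the helper names are illustrative, no TensorFlow needed):

```python
import math


def same_out(size, stride):
    """Spatial output size of a TF conv/pool with padding='SAME': ceil(size / stride)."""
    return math.ceil(size / stride)


def trace_shapes(height=28, width=28):
    """Follow one image through conv1 -> pool1 -> conv2 -> pool2 of the model above."""
    shapes = []
    h, w = height, width
    for name, stride, channels in [("conv1", 1, 32), ("pool1", 2, 32),
                                   ("conv2", 1, 64), ("pool2", 2, 64)]:
        h, w = same_out(h, stride), same_out(w, stride)
        shapes.append((name, h, w, channels))
    return shapes


shapes = trace_shapes()
for name, h, w, c in shapes:
    print(name, (h, w, c))
# the flattened pool2 output must match the first dimension of W_fc1
_, h, w, c = shapes[-1]
print("flattened:", h * w * c)  # flattened: 3136
```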

mnist_nlstm

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def compute_accuracy(v_x, v_y):
    # run the accuracy op defined below; keep_prob defaults to 1.0 (no dropout)
    return sess.run(accuracy, feed_dict={x: v_x, y: v_y})


def LSTM_cell():
    return tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)


def Drop_lstm_cell():
    # dropout on each layer's output, controlled by the keep_prob placeholder
    return tf.contrib.rnn.DropoutWrapper(LSTM_cell(), output_keep_prob=keep_prob)


def Mul_lstm_cell():
    # stack lstm_layer LSTM layers
    return tf.contrib.rnn.MultiRNNCell([Drop_lstm_cell() for _ in range(lstm_layer)], state_is_tuple=True)


def RNN(X, weights, biases):
    # hidden layer for input: project each 28-pixel row to n_hidden_units
    X = tf.reshape(X, [-1, n_inputs])
    X_in = tf.matmul(X, weights['in']) + biases['in']
    X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])

    # cell
    lstm_cell = Mul_lstm_cell()
    # dynamic_rnn builds a zero initial state sized to the actual batch, so the
    # same graph accepts training batches and the full test set
    outputs, states = tf.nn.dynamic_rnn(lstm_cell, X_in, dtype=tf.float32, time_major=False)

    # hidden layer for output as the final results
    # results = tf.matmul(states[2][1], weights['out']) + biases['out']  # top layer's final h
    # or: the output at the last time step
    outputs = tf.unstack(tf.transpose(outputs, [1, 0, 2]))
    results = tf.matmul(outputs[-1], weights['out']) + biases['out']
    return results


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# parameters init
lstm_layer = 3
l_r = 0.001
training_iters = 100000  # number of training steps
batch_size = 128
n_inputs = 28  # pixels per image row
n_steps = 28  # image rows = time steps
n_hidden_units = 128
n_classes = 10

# define placeholders for input
x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
y = tf.placeholder(tf.float32, [None, n_classes])
# dropout keep probability: 1.0 (no dropout) unless fed explicitly
keep_prob = tf.placeholder_with_default(1.0, shape=())

# define w and b
weights = {
    'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
    'out': tf.Variable(tf.random_normal([n_hidden_units, n_classes]))
}
biases = {
    'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
    'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ]))
}

pred = RNN(x, weights, biases)
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
train_op = tf.train.AdamOptimizer(l_r).minimize(cost)
correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# init session and all variables
sess = tf.Session()
sess.run(tf.global_variables_initializer())

# start training
for i in range(training_iters):
    batch_x, batch_y = mnist.train.next_batch(batch_size)
    batch_x = batch_x.reshape([batch_size, n_steps, n_inputs])
    # apply dropout (keep_prob=0.5) only while training
    sess.run(train_op, feed_dict={x: batch_x, y: batch_y, keep_prob: 0.5})
    if i % 50 == 0:
        print(sess.run(accuracy, feed_dict={x: batch_x, y: batch_y}))

# evaluate on the full test set
test_data = mnist.test.images.reshape([-1, n_steps, n_inputs])
print("Testing Accuracy:", compute_accuracy(test_data, mnist.test.labels))
```
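The recurrent models read each 28x28 image as a sequence: `n_steps = 28` rows, each an `n_inputs = 28`-pixel vector fed at one time step. The reshape is row-major, so row `t` holds flat pixels `28*t` through `28*t + 27`. A pure-Python sketch of the same slicing (the function name is hypothetical), using a dummy image whose pixel values equal their indices:

```python
def image_to_sequence(flat_pixels, n_steps=28, n_inputs=28):
    """Split a flat 784-pixel MNIST image into n_steps rows of n_inputs pixels.

    Row t becomes the input at time step t, matching what
    batch_x.reshape([batch_size, n_steps, n_inputs]) does for each image.
    """
    if len(flat_pixels) != n_steps * n_inputs:
        raise ValueError("expected %d pixels" % (n_steps * n_inputs))
    return [flat_pixels[t * n_inputs:(t + 1) * n_inputs] for t in range(n_steps)]


# a dummy "image" whose pixel values are their flat indices 0..783
seq = image_to_sequence(list(range(784)))
print(len(seq), len(seq[0]))  # 28 28
print(seq[1][:3])  # [28, 29, 30]
```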

mnist_lstm

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def RNN(X, weights, biases):
    # hidden layer for input: project each 28-pixel row to n_hidden_units
    X = tf.reshape(X, [-1, n_inputs])
    X_in = tf.matmul(X, weights['in']) + biases['in']
    X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])

    # cell
    lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)
    # dynamic_rnn builds a zero initial state sized to the actual batch,
    # so the whole test set can be evaluated in one run
    outputs, states = tf.nn.dynamic_rnn(lstm_cell, X_in, dtype=tf.float32, time_major=False)

    # hidden layer for output as the final results
    # results = tf.matmul(states[1], weights['out']) + biases['out']  # states[1] is the final h
    # or: the output at the last time step
    outputs = tf.unstack(tf.transpose(outputs, [1, 0, 2]))
    results = tf.matmul(outputs[-1], weights['out']) + biases['out']
    return results


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# parameters init
l_r = 0.001
training_iters = 100000  # number of training steps
batch_size = 128
n_inputs = 28  # pixels per image row
n_steps = 28  # image rows = time steps
n_hidden_units = 128
n_classes = 10

# define placeholders for input
x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
y = tf.placeholder(tf.float32, [None, n_classes])

# define w and b
weights = {
    'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
    'out': tf.Variable(tf.random_normal([n_hidden_units, n_classes]))
}
biases = {
    'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
    'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ]))
}

pred = RNN(x, weights, biases)
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
train_op = tf.train.AdamOptimizer(l_r).minimize(cost)
correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# init session and all variables
sess = tf.Session()
sess.run(tf.global_variables_initializer())

# start training
for i in range(training_iters):
    batch_x, batch_y = mnist.train.next_batch(batch_size)
    batch_x = batch_x.reshape([batch_size, n_steps, n_inputs])
    sess.run(train_op, feed_dict={x: batch_x, y: batch_y})
    if i % 50 == 0:
        print(sess.run(accuracy, feed_dict={x: batch_x, y: batch_y}))

# evaluate on the full test set
test_data = mnist.test.images.reshape([-1, n_steps, n_inputs])
test_label = mnist.test.labels
print("Testing Accuracy: ", sess.run(accuracy, feed_dict={x: test_data, y: test_label}))
```
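`BasicLSTMCell(n_hidden_units, forget_bias=1.0)` adds `forget_bias` to the forget-gate pre-activation before the sigmoid, so an untrained cell tends to keep its state. The sketch below is a scalar (one-unit) version of a single cell step, assuming the TF1 `BasicLSTMCell` gate equations `c' = c*sigmoid(f + forget_bias) + sigmoid(i)*tanh(j)` and `h' = tanh(c')*sigmoid(o)`. The function and the `params` layout are hypothetical; a real cell uses weight matrices over the concatenated `[x, h]`.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def lstm_step(x, h, c, params, forget_bias=1.0):
    """One step of a single-unit LSTM following the BasicLSTMCell gate equations.

    params maps each gate name to a (w_x, w_h, b) triple for the pre-activation
    w_x * x + w_h * h + b. Gates: i (input), j (candidate), f (forget), o (output).
    """
    pre = {g: w_x * x + w_h * h + b for g, (w_x, w_h, b) in params.items()}
    new_c = c * sigmoid(pre["f"] + forget_bias) + sigmoid(pre["i"]) * math.tanh(pre["j"])
    new_h = math.tanh(new_c) * sigmoid(pre["o"])
    return new_h, new_c


# with all weights zero, forget_bias=1.0 keeps sigmoid(1.0) (about 73%) of the old cell state
zero = {g: (0.0, 0.0, 0.0) for g in "ijfo"}
h, c = lstm_step(x=0.0, h=0.0, c=1.0, params=zero)
print(c, h)
```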

mnist_cnn

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def compute_accuracy(v_x, v_y):
    # run the accuracy op defined below with dropout disabled
    return sess.run(accuracy, feed_dict={x: v_x, y: v_y, keep_prob: 1.0})


def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def conv2d(x, W):
    # strides=[1, x_movement, y_movement, 1]
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')


def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])
y = tf.placeholder(tf.float32, [None, 10])
keep_prob = tf.placeholder(tf.float32)

# tf.reshape(tensor, [-1, height, width, channels]); channels = 1 for grayscale, 3 for RGB
x_image = tf.reshape(x, [-1, 28, 28, 1])

# ********************** conv1 *********************************
# 32 filters of size 5x5x1 -> 32 feature maps
W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
# convolve with stride 1x1, then apply relu
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)  # output size 28*28*32
h_pool1 = max_pool_2x2(h_conv1)  # output size 14*14*32

# ********************** conv2 *********************************
# 64 filters of size 5x5x32 -> 64 feature maps
W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)  # output size 14*14*64
h_pool2 = max_pool_2x2(h_conv2)  # output size 7*7*64

# ********************* fc1 layer *********************************
W_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
# flatten the 7x7x64 feature maps into a vector of length 7*7*64
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# ********************* fc2 layer *********************************
W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
logits = tf.matmul(h_fc1_drop, W_fc2) + b_fc2
prediction = tf.nn.softmax(logits)

# calculate the loss from logits (numerically safer than tf.log(prediction))
cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=y))

# use AdamOptimizer
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

correct_prediction = tf.equal(tf.argmax(prediction, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

# init session
sess = tf.Session()
sess.run(tf.global_variables_initializer())

for i in range(1000):
    batch_x, batch_y = mnist.train.next_batch(100)
    sess.run(train_step, feed_dict={x: batch_x, y: batch_y, keep_prob: 0.5})
    if i % 50 == 0:
        print(compute_accuracy(mnist.test.images, mnist.test.labels))
```
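Training feeds `keep_prob: 0.5`, while accuracy is measured with `keep_prob: 1`. `tf.nn.dropout` zeroes each unit with probability `1 - keep_prob` and scales the surviving units by `1 / keep_prob`, so the expected activation is unchanged and no rescaling is needed at test time. A pure-Python sketch of that "inverted dropout" rule (illustrative only, not TensorFlow's implementation):

```python
import random


def inverted_dropout(values, keep_prob, rng=random):
    """Keep each value with probability keep_prob and scale kept values by 1/keep_prob,
    so the expected output equals the input."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)
acts = [1.0] * 10000
dropped = inverted_dropout(acts, keep_prob=0.5, rng=rng)
print(sum(dropped) / len(dropped))  # close to 1.0 on average
print(inverted_dropout([3.0, 4.0], keep_prob=1.0) == [3.0, 4.0])  # True: a no-op at eval
```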

mnist_cnn_rnn

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf

lr = 0.001
training_iters = 100000  # number of training steps
batch_size = 128
n_input = 49  # features per time step: the 7*7 spatial positions
n_steps = 64  # time steps: the 64 feature maps from conv2
n_hidden_units = 128
n_classes = 10


def compute_accuracy(v_x, v_y):
    # run the accuracy op defined below
    return sess.run(accuracy, feed_dict={x: v_x, y: v_y})


def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def conv2d(x, W):
    # strides=[1, x_movement, y_movement, 1]
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')


def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')


def conv_pool_layer(X, img_len, img_hi, out_seq):
    # img_len x img_len kernels, img_hi input channels, out_seq output channels
    W = weight_variable([img_len, img_len, img_hi, out_seq])
    b = bias_variable([out_seq])
    h_conv = tf.nn.relu(conv2d(X, W) + b)
    return max_pool_2x2(h_conv)


def lstm(X):
    lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)
    # zero initial state sized to the actual batch
    outputs, states = tf.nn.dynamic_rnn(lstm_cell, X, dtype=tf.float32, time_major=False)
    W = weight_variable([n_hidden_units, n_classes])
    b = bias_variable([n_classes])
    outputs = tf.unstack(tf.transpose(outputs, [1, 0, 2]))
    results = tf.matmul(outputs[-1], W) + b
    return results


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

x = tf.placeholder(tf.float32, [None, 784])
y = tf.placeholder(tf.float32, [None, 10])

# tf.reshape(tensor, [-1, height, width, channels]); channels = 1 for grayscale, 3 for RGB
x_image = tf.reshape(x, [-1, 28, 28, 1])

# conv1: 5x5 kernels, 1 -> 32 channels, relu, 2x2 max pool; output size 14*14*32
h_pool1 = conv_pool_layer(x_image, 5, 1, 32)

# conv2: 5x5 kernels, 32 -> 64 channels, relu, 2x2 max pool; output size 7*7*64
h_pool2 = conv_pool_layer(h_pool1, 5, 32, 64)

# reshape data: [batch, 7, 7, 64] -> [batch, 49, 64] -> [batch, 64, 49],
# i.e. n_steps=64 time steps (one per feature map) of n_input=49 values each
X_in = tf.reshape(h_pool2, [-1, n_input, n_steps])
X_in = tf.transpose(X_in, [0, 2, 1])

# put into an lstm layer (it replaces the fully connected fc1/fc2 head used in mnist_cnn)
prediction = lstm(X_in)

# calculate the loss
cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=prediction, labels=y))

# use AdamOptimizer
train_step = tf.train.AdamOptimizer(lr).minimize(cross_entropy)

correct_pred = tf.equal(tf.argmax(prediction, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# init session
sess = tf.Session()
sess.run(tf.global_variables_initializer())

for i in range(training_iters):
    batch_x, batch_y = mnist.train.next_batch(batch_size)
    sess.run(train_step, feed_dict={x: batch_x, y: batch_y})
    if i % 50 == 0:
        print(sess.run(accuracy, feed_dict={x: batch_x, y: batch_y}))
```
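In the CNN-RNN model, `tf.reshape(h_pool2, [-1, 49, 64])` flattens the 7x7 grid while keeping the 64 channels last, and `tf.transpose(..., [0, 2, 1])` then turns each channel into one of the `n_steps = 64` time steps, with its `n_input = 49` spatial values as that step's input vector. A pure-Python sketch of the same index mapping for a single image (the function is a hypothetical helper):

```python
def feature_maps_to_sequence(fmap):
    """fmap[row][col][channel] (7x7x64) -> seq[channel][position] (64 x 49).

    Mirrors reshape to [49, 64] followed by a transpose to [64, 49] for one image:
    each channel becomes one time step whose input is that feature map's 49 values
    in row-major order.
    """
    rows, cols, channels = len(fmap), len(fmap[0]), len(fmap[0][0])
    # reshape: flatten the spatial grid -> [positions][channels]
    flat = [fmap[r][c] for r in range(rows) for c in range(cols)]
    # transpose -> [channels][positions]
    return [[flat[p][ch] for p in range(rows * cols)] for ch in range(channels)]


# dummy 7x7x64 map whose entries record their own (row, col, channel)
fmap = [[[(r, c, ch) for ch in range(64)] for c in range(7)] for r in range(7)]
seq = feature_maps_to_sequence(fmap)
print(len(seq), len(seq[0]))  # 64 49
print(seq[5][8])  # (1, 1, 5): time step 5 is channel 5; position 8 is row 1, col 1
```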

mnist_bi_lstm

```python
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf


def compute_accuracy(v_x, v_y):
    # run the accuracy op defined below
    return sess.run(accuracy, feed_dict={x: v_x, y: v_y})


def Bi_lstm(X):
    # one forward and one backward LSTM cell
    lstm_f_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)
    lstm_b_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden_units, forget_bias=1.0, state_is_tuple=True)
    # X is a list of n_steps tensors of shape [batch, n_hidden_units];
    # returns (outputs, output_state_fw, output_state_bw)
    return tf.contrib.rnn.static_bidirectional_rnn(lstm_f_cell, lstm_b_cell, X, dtype=tf.float32)


def RNN(X, weights, biases):
    # hidden layer for input
    X = tf.reshape(X, [-1, n_inputs])
    X_in = tf.matmul(X, weights['in']) + biases['in']

    # reshape data into a list of n_steps tensors [batch, n_hidden_units] for the bi-lstm
    X_in = tf.reshape(X_in, [-1, n_steps, n_hidden_units])
    X_in = tf.transpose(X_in, [1, 0, 2])
    X_in = tf.reshape(X_in, [-1, n_hidden_units])
    X_in = tf.split(X_in, n_steps)
    outputs, _, _ = Bi_lstm(X_in)

    # hidden layer for output as the final results; each output concatenates
    # the forward and backward outputs, hence 2*n_hidden_units features
    results = tf.matmul(outputs[-1], weights['out']) + biases['out']
    return results


# load mnist data
mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)

# parameters init
l_r = 0.001
training_iters = 100000
batch_size = 128
n_inputs = 28  # pixels per image row
n_steps = 28  # image rows = time steps
n_hidden_units = 128
n_classes = 10

# define placeholders for input
x = tf.placeholder(tf.float32, [None, n_steps, n_inputs])
y = tf.placeholder(tf.float32, [None, n_classes])

# define w and b
weights = {
    'in': tf.Variable(tf.random_normal([n_inputs, n_hidden_units])),
    'out': tf.Variable(tf.random_normal([2 * n_hidden_units, n_classes]))
}
biases = {
    'in': tf.Variable(tf.constant(0.1, shape=[n_hidden_units, ])),
    'out': tf.Variable(tf.constant(0.1, shape=[n_classes, ]))
}

pred = RNN(x, weights, biases)
cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
train_op = tf.train.AdamOptimizer(l_r).minimize(cost)
correct_pred = tf.equal(tf.argmax(pred, 1), tf.argmax(y, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))

# init session and all variables
sess = tf.Session()
sess.run(tf.global_variables_initializer())

# start training (500 steps)
for i in range(500):
    batch_x, batch_y = mnist.train.next_batch(batch_size)
    batch_x = batch_x.reshape([batch_size, n_steps, n_inputs])
    sess.run(train_op, feed_dict={x: batch_x, y: batch_y})
    if i % 50 == 0:
        print(sess.run(accuracy, feed_dict={x: batch_x, y: batch_y}))

# evaluate on the full test set
test_data = mnist.test.images.reshape([-1, n_steps, n_inputs])
test_label = mnist.test.labels
print("Testing Accuracy: ", compute_accuracy(test_data, test_label))
```
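`static_bidirectional_rnn` returns, for every time step, the forward output concatenated with the backward output, which is why `weights['out']` has `2*n_hidden_units` rows. Taking `outputs[-1]` therefore pairs a forward state that has read all 28 rows with a backward state that has read only the last row. A pure-Python sketch with a toy running-sum "cell" (a stand-in for an LSTM, purely for illustration) makes this visible:

```python
def run_forward(xs, step, h0=0):
    """Run a recurrence h_t = step(h_{t-1}, x_t) left to right and return every h_t."""
    hs, h = [], h0
    for x in xs:
        h = step(h, x)
        hs.append(h)
    return hs


def bidirectional(xs, step):
    """Pair forward and backward states per time step, like the concatenated
    [fw, bw] outputs of a bidirectional RNN. The backward pass runs over the
    reversed sequence, and its results are reversed back into time order."""
    fw = run_forward(xs, step)
    bw = list(reversed(run_forward(list(reversed(xs)), step)))
    return list(zip(fw, bw))


# toy "cell": a running sum, so each state shows exactly which inputs it has seen
outputs = bidirectional([1, 10, 100], lambda h, x: h + x)
print(outputs)  # [(1, 111), (11, 110), (111, 100)]
```

The last pair `(111, 100)` shows the asymmetry: the forward half has summed everything, the backward half only the final input.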

mnist.ipynb

```python
# encoding=utf-8
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)


def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


myGraph = tf.Graph()
with myGraph.as_default():
    with tf.name_scope('inputsAndLabels'):
        x_raw = tf.placeholder(tf.float32, shape=[None, 784])
        y = tf.placeholder(tf.float32, shape=[None, 10])

    with tf.name_scope('hidden1'):
        x = tf.reshape(x_raw, shape=[-1, 28, 28, 1])
        W_conv1 = weight_variable([5, 5, 1, 32])
        b_conv1 = bias_variable([32])
        l_conv1 = tf.nn.relu(tf.nn.conv2d(x, W_conv1, strides=[1, 1, 1, 1], padding='SAME') + b_conv1)
        l_pool1 = tf.nn.max_pool(l_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        tf.summary.image('x_input', x, max_outputs=10)
        tf.summary.histogram('W_con1', W_conv1)
        tf.summary.histogram('b_con1', b_conv1)

    with tf.name_scope('hidden2'):
        W_conv2 = weight_variable([5, 5, 32, 64])
        b_conv2 = bias_variable([64])
        l_conv2 = tf.nn.relu(tf.nn.conv2d(l_pool1, W_conv2, strides=[1, 1, 1, 1], padding='SAME') + b_conv2)
        l_pool2 = tf.nn.max_pool(l_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        tf.summary.histogram('W_con2', W_conv2)
        tf.summary.histogram('b_con2', b_conv2)

    with tf.name_scope('fc1'):
        W_fc1 = weight_variable([64 * 7 * 7, 1024])
        b_fc1 = bias_variable([1024])
        l_pool2_flat = tf.reshape(l_pool2, [-1, 64 * 7 * 7])
        l_fc1 = tf.nn.relu(tf.matmul(l_pool2_flat, W_fc1) + b_fc1)
        keep_prob = tf.placeholder(tf.float32)
        l_fc1_drop = tf.nn.dropout(l_fc1, keep_prob)
        tf.summary.histogram('W_fc1', W_fc1)
        tf.summary.histogram('b_fc1', b_fc1)

    with tf.name_scope('fc2'):
        W_fc2 = weight_variable([1024, 10])
        b_fc2 = bias_variable([10])
        y_conv = tf.matmul(l_fc1_drop, W_fc2) + b_fc2
        # log this layer's own parameters (not W_fc1/b_fc1 a second time)
        tf.summary.histogram('W_fc2', W_fc2)
        tf.summary.histogram('b_fc2', b_fc2)

    with tf.name_scope('train'):
        cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=y_conv, labels=y))
        train_step = tf.train.AdamOptimizer(learning_rate=1e-4).minimize(cross_entropy)
        correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
        tf.summary.scalar('loss', cross_entropy)
        tf.summary.scalar('accuracy', accuracy)

with tf.Session(graph=myGraph) as sess:
    sess.run(tf.global_variables_initializer())
    saver = tf.train.Saver()
    merged = tf.summary.merge_all()
    summary_writer = tf.summary.FileWriter('./mnistEven/', graph=sess.graph)

    for i in range(10001):
        batch = mnist.train.next_batch(50)
        sess.run(train_step, feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 0.5})
        if i % 100 == 0:
            train_accuracy = accuracy.eval(feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 1.0})
            print('step %d training accuracy:%g' % (i, train_accuracy))
            summary = sess.run(merged, feed_dict={x_raw: batch[0], y: batch[1], keep_prob: 1.0})
            summary_writer.add_summary(summary, i)

    # flush pending events to disk for TensorBoard
    summary_writer.close()
    test_accuracy = accuracy.eval(feed_dict={x_raw: mnist.test.images, y: mnist.test.labels, keep_prob: 1.0})
    print('test accuracy:%g' % test_accuracy)
    saver.save(sess, save_path='./model/mnistmodel', global_step=1)
```
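Nearly all trainable parameters of this network sit in `fc1`. A quick pure-Python count from the variable shapes in the code (weights plus biases; the two helper functions are illustrative):

```python
def conv_params(k, in_ch, out_ch):
    """k*k*in_ch*out_ch kernel weights plus one bias per output channel."""
    return k * k * in_ch * out_ch + out_ch


def dense_params(n_in, n_out):
    """n_in*n_out weight matrix plus one bias per output unit."""
    return n_in * n_out + n_out


layers = {
    "hidden1 (conv 5x5, 1->32)": conv_params(5, 1, 32),
    "hidden2 (conv 5x5, 32->64)": conv_params(5, 32, 64),
    "fc1 (3136->1024)": dense_params(7 * 7 * 64, 1024),
    "fc2 (1024->10)": dense_params(1024, 10),
}
for name, n in layers.items():
    print(name, n)
print("total", sum(layers.values()))  # total 3274634
```

Of the 3,274,634 parameters, `fc1` holds 3,212,288, about 98%.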

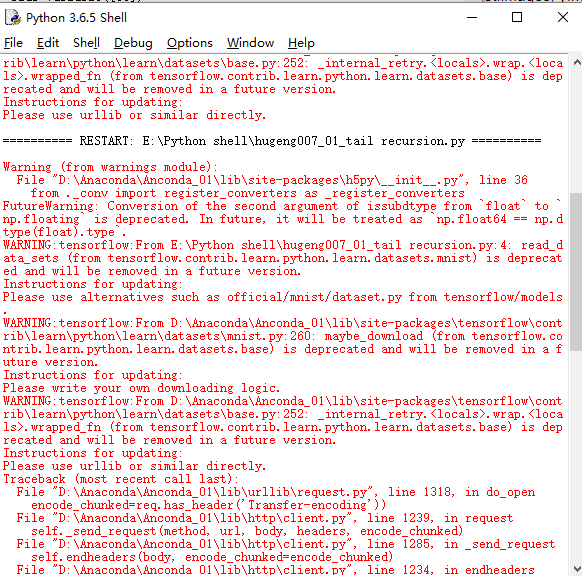

```
Warning (from warnings module):
  File "D:\Anaconda\Anconda_01\lib\site-packages\h5py\__init__.py", line 36
    from ._conv import register_converters as _register_converters
FutureWarning: Conversion of the second argument of issubdtype from `float` to `np.floating` is deprecated. In future, it will be treated as `np.float64 == np.dtype(float).type`.
WARNING:tensorflow:From E:\Python shell\hugeng007_01_tail recursion.py:4: read_data_sets (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.
Instructions for updating:
Please use alternatives such as official/mnist/dataset.py from tensorflow/models.
WARNING:tensorflow:From D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\mnist.py:260: maybe_download (from tensorflow.contrib.learn.python.learn.datasets.base) is deprecated and will be removed in a future version.
Instructions for updating:
Please write your own downloading logic.
WARNING:tensorflow:From D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\base.py:252: _internal_retry.<locals>.wrap.<locals>.wrapped_fn (from tensorflow.contrib.learn.python.learn.datasets.base) is deprecated and will be removed in a future version.
Instructions for updating:
Please use urllib or similar directly.
```


```
Traceback (most recent call last):
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 1318, in do_open
    encode_chunked=req.has_header('Transfer-encoding'))
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 1239, in request
    self._send_request(method, url, body, headers, encode_chunked)
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 1285, in _send_request
    self.endheaders(body, encode_chunked=encode_chunked)
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 1234, in endheaders
    self._send_output(message_body, encode_chunked=encode_chunked)
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 1026, in _send_output
    self.send(msg)
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 964, in send
    self.connect()
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 1392, in connect
    super().connect()
  File "D:\Anaconda\Anconda_01\lib\http\client.py", line 936, in connect
    (self.host,self.port), self.timeout, self.source_address)
  File "D:\Anaconda\Anconda_01\lib\socket.py", line 724, in create_connection
    raise err
  File "D:\Anaconda\Anconda_01\lib\socket.py", line 713, in create_connection
    sock.connect(sa)
TimeoutError: [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "E:\Python shell\hugeng007_01_tail recursion.py", line 4, in <module>
    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\python\util\deprecation.py", line 272, in new_func
    return func(*args, **kwargs)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\mnist.py", line 260, in read_data_sets
    source_url + TRAIN_IMAGES)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\python\util\deprecation.py", line 272, in new_func
    return func(*args, **kwargs)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\base.py", line 252, in maybe_download
    temp_file_name, _ = urlretrieve_with_retry(source_url)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\python\util\deprecation.py", line 272, in new_func
    return func(*args, **kwargs)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\base.py", line 205, in wrapped_fn
    return fn(*args, **kwargs)
  File "D:\Anaconda\Anconda_01\lib\site-packages\tensorflow\contrib\learn\python\learn\datasets\base.py", line 233, in urlretrieve_with_retry
    return urllib.request.urlretrieve(url, filename)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 248, in urlretrieve
    with contextlib.closing(urlopen(url, data)) as fp:
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 223, in urlopen
    return opener.open(url, data, timeout)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 526, in open
    response = self._open(req, data)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 544, in _open
    '_open', req)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 504, in _call_chain
    result = func(*args)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 1361, in https_open
    context=self._context, check_hostname=self._check_hostname)
  File "D:\Anaconda\Anconda_01\lib\urllib\request.py", line 1320, in do_open
    raise URLError(err)
urllib.error.URLError: <urlopen error [WinError 10060] A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond.>
```

WARNING:tensorflow:From <ipython-input-4-a36400cc0616>:4: read_data_sets (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.

Instructions for updating:

Please use alternatives such as official/mnist/dataset.py from tensorflow/models.

WARNING:tensorflow:From /home/binder/.pyenv/versions/3.6.5/lib/python3.6/site-packages/tensorflow/contrib/learn/python/learn/datasets/mnist.py:260: maybe_download (from tensorflow.contrib.learn.python.learn.datasets.base) is deprecated and will be removed in a future version.

Instructions for updating:

Please write your own downloading logic.

WARNING:tensorflow:From /home/binder/.pyenv/versions/3.6.5/lib/python3.6/site-packages/tensorflow/contrib/learn/python/learn/datasets/mnist.py:262: extract_images (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.

Instructions for updating:

Please use tf.data to implement this functionality.

Extracting MNIST_data/train-images-idx3-ubyte.gz

WARNING:tensorflow:From /home/binder/.pyenv/versions/3.6.5/lib/python3.6/site-packages/tensorflow/contrib/learn/python/learn/datasets/mnist.py:267: extract_labels (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.

Instructions for updating:

Please use tf.data to implement this functionality.

Extracting MNIST_data/train-labels-idx1-ubyte.gz

WARNING:tensorflow:From /home/binder/.pyenv/versions/3.6.5/lib/python3.6/site-packages/tensorflow/contrib/learn/python/learn/datasets/mnist.py:110: dense_to_one_hot (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.

Instructions for updating:

Please use tf.one_hot on tensors.

Extracting MNIST_data/t10k-images-idx3-ubyte.gz

Extracting MNIST_data/t10k-labels-idx1-ubyte.gz
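The four `Extracting ...` lines show the loader reading gzipped IDX files from `MNIST_data/`, and the warnings above point to `tf.data` and `tf.one_hot` as replacements for `extract_images`, `extract_labels` and `dense_to_one_hot`. As a framework-independent sketch (NumPy rather than TensorFlow), the same files can be parsed directly. It relies on the IDX layout: a 4-byte magic number whose third byte is the element type (0x08 for unsigned bytes) and whose fourth byte is the number of dimensions, followed by one big-endian 32-bit size per dimension and then the raw data. The helper names are hypothetical, and the flattening and [0, 1] scaling are assumed to mirror what `read_data_sets(..., one_hot=True)` returns by default.

```python
import gzip
import struct

import numpy as np


def read_idx(path):
    """Read a (optionally gzipped) IDX file of unsigned bytes into an array."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rb") as f:
        zero, dtype_code, ndim = struct.unpack(">HBB", f.read(4))
        if zero != 0 or dtype_code != 0x08:
            raise ValueError("expected an IDX file of unsigned bytes")
        # One big-endian 32-bit unsigned size per dimension.
        shape = struct.unpack(">" + "I" * ndim, f.read(4 * ndim))
        data = np.frombuffer(f.read(), dtype=np.uint8)
    return data.reshape(shape)


def to_one_hot(labels, num_classes=10):
    """NumPy counterpart of dense_to_one_hot / tf.one_hot."""
    labels = np.asarray(labels, dtype=np.int64)
    one_hot = np.zeros((labels.size, num_classes), dtype=np.float32)
    one_hot[np.arange(labels.size), labels] = 1.0
    return one_hot


def load_split(images_path, labels_path):
    """Return (images, labels): images flattened to rows and scaled to [0, 1]."""
    raw = read_idx(images_path)
    images = raw.reshape(raw.shape[0], -1).astype(np.float32) / 255.0
    labels = to_one_hot(read_idx(labels_path))
    return images, labels
```

For example, `load_split("MNIST_data/t10k-images-idx3-ubyte.gz", "MNIST_data/t10k-labels-idx1-ubyte.gz")` would return arrays shaped like the test split used by the earlier examples.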

WARNING:tensorflow:From /home/binder/.pyenv/versions/3.6.5/lib/python3.6/site-packages/tensorflow/contrib/learn/python/learn/datasets/mnist.py:290: DataSet.__init__ (from tensorflow.contrib.learn.python.learn.datasets.mnist) is deprecated and will be removed in a future version.

Instructions for updating:

Please use alternatives such as official/mnist/dataset.py from tensorflow/models.

WARNING:tensorflow:From <ipython-input-4-a36400cc0616>:60: softmax_cross_entropy_with_logits (from tensorflow.python.ops.nn_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Future major versions of TensorFlow will allow gradients to flow

into the labels input on backprop by default.

See @{tf.nn.softmax_cross_entropy_with_logits_v2}.
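According to this warning, the `_v2` variant differs in letting gradients flow into the labels as well as the logits. With labels fed from a placeholder this makes no practical difference, and TensorFlow's documentation suggests passing labels through `tf.stop_gradient` to keep the old behaviour. What both ops compute is cross-entropy taken directly from raw logits, which is numerically safer than applying softmax and then `log`, as `-tf.reduce_sum(y*tf.log(prediction))` does: a probability that underflows to 0 turns into `log(0)`. The NumPy sketch below illustrates the difference. It is not TensorFlow's implementation, and both function names are only illustrative.

```python
import numpy as np


def softmax_cross_entropy_with_logits(labels, logits):
    """Per-example cross-entropy from raw logits (NumPy sketch).

    Uses log_softmax(z) = z - logsumexp(z); subtracting the row max first
    keeps exp() from overflowing.
    """
    logits = np.asarray(logits, dtype=np.float64)
    labels = np.asarray(labels, dtype=np.float64)
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_softmax = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -(labels * log_softmax).sum(axis=1)


def naive_cross_entropy(labels, logits):
    """Softmax first, then log: the pattern -sum(y * log(softmax(z)))."""
    logits = np.asarray(logits, dtype=np.float64)
    labels = np.asarray(labels, dtype=np.float64)
    probs = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
    return -(labels * np.log(probs)).sum(axis=1)
```

For moderate logits the two functions agree. For logits `[[1000.0, 0.0]]` with label `[[0.0, 1.0]]`, the stable version returns a loss of about 1000, while the naive version overflows in `exp` and returns `nan`.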

step 0 training accuracy:0.08

step 100 training accuracy:0.9

step 200 training accuracy:0.94

step 300 training accuracy:0.94

step 400 training accuracy:0.94

step 500 training accuracy:0.86

step 600 training accuracy:0.96

step 700 training accuracy:0.92

step 800 training accuracy:0.96

step 900 training accuracy:0.98

step 1000 training accuracy:0.96

step 1100 training accuracy:0.96

step 1200 training accuracy:1

step 1300 training accuracy:0.96

step 1400 training accuracy:0.98

step 1500 training accuracy:0.98

step 1600 training accuracy:0.98

step 1700 training accuracy:1

step 1800 training accuracy:0.96

step 1900 training accuracy:1

step 2000 training accuracy:1

step 2100 training accuracy:0.94

step 2200 training accuracy:1

step 2300 training accuracy:1

step 2400 training accuracy:1

step 2500 training accuracy:1

step 2600 training accuracy:0.98

step 2700 training accuracy:0.96

step 2800 training accuracy:1

step 2900 training accuracy:1

step 3000 training accuracy:0.98

step 3100 training accuracy:0.96

step 3200 training accuracy:0.96

step 3300 training accuracy:1

step 3400 training accuracy:0.98

step 3500 training accuracy:0.98

step 3600 training accuracy:0.96

step 3700 training accuracy:0.96

step 3800 training accuracy:0.96

step 3900 training accuracy:0.98

step 4000 training accuracy:0.98

step 4100 training accuracy:0.98

step 4200 training accuracy:1

step 4300 training accuracy:0.98

step 4400 training accuracy:0.98

step 4500 training accuracy:1

step 4600 training accuracy:0.98

step 4700 training accuracy:1

step 4800 training accuracy:1

step 4900 training accuracy:0.98

step 5000 training accuracy:0.98

step 5100 training accuracy:1

step 5200 training accuracy:1

step 5300 training accuracy:1

step 5400 training accuracy:1

step 5500 training accuracy:0.98

step 5600 training accuracy:1

step 5700 training accuracy:1

step 5800 training accuracy:0.98

step 5900 training accuracy:0.98

step 6000 training accuracy:1

step 6100 training accuracy:1

step 6200 training accuracy:0.96

step 6300 training accuracy:1

step 6400 training accuracy:1

step 6500 training accuracy:1

step 6600 training accuracy:0.96

step 6700 training accuracy:1

step 6800 training accuracy:1

step 6900 training accuracy:1

step 7000 training accuracy:1

step 7100 training accuracy:1

step 7200 training accuracy:1

step 7300 training accuracy:1

step 7400 training accuracy:1

step 7500 training accuracy:1

step 7600 training accuracy:1

step 7700 training accuracy:1

step 7800 training accuracy:0.98

step 7900 training accuracy:1

step 8000 training accuracy:1

step 8100 training accuracy:1

step 8200 training accuracy:1

step 8300 training accuracy:1

step 8400 training accuracy:1

step 8500 training accuracy:1

step 8600 training accuracy:0.98

step 8700 training accuracy:1

step 8800 training accuracy:0.98

step 8900 training accuracy:1

step 9000 training accuracy:1

step 9100 training accuracy:1

step 9200 training accuracy:0.98

step 9300 training accuracy:1

step 9400 training accuracy:1

step 9500 training accuracy:1

step 9600 training accuracy:1

step 9700 training accuracy:1

step 9800 training accuracy:1

step 9900 training accuracy:1

step 10000 training accuracy:1
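These readings are labelled training accuracy and all move in steps of 0.02. That pattern is consistent with evaluation on small training batches rather than on the full training or test set, so single values are noisy (for example, 0.86 at step 500 between 0.94 readings), and a reading of 1 does not by itself show how the model does on held-out data. A small sketch for parsing the log lines above and smoothing them with a trailing mean follows. `parse_log` and `moving_average` are hypothetical helpers, and the window size is arbitrary.

```python
import re
from statistics import mean

# Hypothetical parser for lines of the form "step 100 training accuracy:0.9".
LOG_LINE = re.compile(r"step (\d+) training accuracy:([0-9.]+)")


def parse_log(text):
    """Return (step, accuracy) pairs found in the log text."""
    return [(int(step), float(acc)) for step, acc in LOG_LINE.findall(text)]


def moving_average(values, window=10):
    """Trailing mean over up to `window` most recent values."""
    return [mean(values[max(0, i - window + 1): i + 1]) for i in range(len(values))]
```

Smoothing only makes the trend easier to read. A held-out estimate still needs an evaluation on `mnist.test`, as `compute_accuracy` does in the first example.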