I. Preface

1. This post covers learning to use Hive.

II. Introducing and Installing Hive

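Introduction: Hive is a data warehouse tool built on Hadoop. It lets you work with data stored in HDFS through HQL statements (which are similar to SQL). Internally, Hive translates the HQL a user writes into MapReduce jobs and runs them. Developers therefore write simple HQL instead of tedious MapReduce programs, which greatly lowers the learning cost. Because Hive ultimately executes MapReduce jobs, its queries are relatively slow, and it is not suitable for real-time computation.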

1. Download the Hive tarball and extract it

tar zxvf /work/soft/installer/apache-hive-2.3.4-bin.tar.gz -C /work/soft

2. Configure the environment variables

vim /etc/profile
# append the following lines:
#set hive env
export HIVE_HOME=/work/soft/apache-hive-2.3.4-bin
export PATH=$PATH:$HIVE_HOME/bin
# save the file, then reload it:
source /etc/profile
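If you prefer not to edit the profile by hand, the same lines can be appended from a script. This is a minimal sketch, assuming bash; the function name `add_hive_env` is hypothetical, and its default paths simply mirror the ones used in this post. It checks for an existing `export HIVE_HOME=` line first, so running it twice does not duplicate the entries.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append the Hive environment variables to a profile file, only once.
# Defaults mirror the paths used in this post; adjust them for your machine.
add_hive_env() {
  local profile="${1:-/etc/profile}"
  local hive_home="${2:-/work/soft/apache-hive-2.3.4-bin}"

  # Do nothing if a HIVE_HOME export is already present, so re-running is safe
  if grep -q '^export HIVE_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi

  {
    echo '#set hive env'
    echo "export HIVE_HOME=$hive_home"
    # Single quotes keep $PATH and $HIVE_HOME literal so they expand when the profile is sourced
    echo 'export PATH=$PATH:$HIVE_HOME/bin'
  } >> "$profile"
}
```

Run it as root (for example `add_hive_env && source /etc/profile`), then `which hive` should point into `$HIVE_HOME/bin`.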

3. Edit the configuration files (in Hive's conf directory)

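(1) First make a copy of the template configuration file and edit the copy (set a few directories and switch the metastore database from the default embedded Derby to MySQL; this requires a running MySQL instance).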

cp hive-default.xml.template hive-site.xml

(2) Manually create the HDFS directories referenced in the configuration

hadoop fs -mkdir -p /user/hive/warehouse
hadoop fs -mkdir -p /user/hive/tmp
hadoop fs -mkdir -p /user/hive/log

(3) Replace every occurrence of ${system:java.io.tmpdir} in the configuration file with /work/tmp (remember to create this directory).

(4) Replace every occurrence of ${system:user.name} in the configuration file with ${user.name}.
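Replacements (3) and (4) can also be done in one pass with `sed`. This is a minimal sketch, assuming GNU sed (on macOS/BSD sed, `-i` needs a suffix argument); the function name `fix_hive_placeholders` is hypothetical. It uses `|` as the `s` delimiter because the replacement path contains `/`, and it creates the temp directory as step (3) requires.

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite the two placeholder forms in hive-site.xml (steps 3 and 4).
# Assumes GNU sed for in-place editing with a bare -i.
fix_hive_placeholders() {
  local conf="$1"
  local tmpdir="${2:-/work/tmp}"

  # Step (3) says to create the replacement directory
  mkdir -p "$tmpdir"

  # \$ and \. match a literal '$' and '.'; '{' and '}' are literal in a basic regex
  sed -i \
    -e 's|\${system:java\.io\.tmpdir}|'"$tmpdir"'|g' \
    -e 's|\${system:user\.name}|${user.name}|g' \
    "$conf"
}
```

Usage, from Hive's conf directory: `fix_hive_placeholders hive-site.xml /work/tmp`. Afterwards `grep -c 'system:' hive-site.xml` should report no remaining `${system:...}` placeholders of these two kinds.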

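(5) Modify the following properties in hive-site.xml (they already exist in the file copied from the template). For the MySQL driver class name: if you use a newer driver, as I do, the class is com.mysql.cj.jdbc.Driver.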

<property>
  <name>hive.metastore.warehouse.dir</name>
  <value>/user/hive/warehouse</value>
  <description>location of default database for the warehouse</description>
</property>
<property>
  <name>hive.exec.scratchdir</name>
  <value>/user/hive/tmp</value>
  <description>HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/<username> is created, with ${hive.scratch.dir.permission}.</description>
</property>
<property>
  <name>hive.querylog.location</name>
  <value>/user/hive/log/hadoop</value>
  <description>Location of Hive run time structured log file</description>
</property>
<property>
  <name>javax.jdo.option.ConnectionURL</name>
  <value>jdbc:mysql://192.168.3.123:3306/myhive?createDatabaseIfNotExist=true&amp;serverTimezone=UTC</value>
  <description>
    JDBC connect string for a JDBC metastore.
    To use SSL to encrypt/authenticate the connection, provide database-specific SSL flag in the connection URL.
    For example, jdbc:postgresql://myhost/db?ssl=true for postgres database.
  </description>
</property>
<property>
  <name>javax.jdo.option.ConnectionDriverName</name>
  <value>com.mysql.cj.jdbc.Driver</value>
  <description>Driver class name for a JDBC metastore</description>
</property>
<property>
  <name>javax.jdo.option.ConnectionUserName</name>
  <value>root</value>
  <description>Username to use against metastore database</description>
</property>
<property>
  <name>javax.jdo.option.ConnectionPassword</name>
  <value>123456</value>
  <description>password to use against metastore database</description>
</property>
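hive-site.xml is an XML file, so a bare `&` inside a `<value>` (as in a JDBC URL with several query parameters) makes it invalid XML; it has to be written as `&amp;`. As a sketch of that rule, the hypothetical bash function below prints one `<property>` element and escapes `&`, `<` and `>` in the value. It escapes `&` first so the entities it adds later are not escaped a second time.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one <property> element for hive-site.xml,
# escaping XML special characters in the value.
hive_property() {
  local name="$1" value
  # Escape & first, then < and >; in a sed replacement, \& is a literal '&'
  value=$(printf '%s' "$2" | sed -e 's/&/\&amp;/g' -e 's/</\&lt;/g' -e 's/>/\&gt;/g')
  printf '<property>\n  <name>%s</name>\n  <value>%s</value>\n</property>\n' "$name" "$value"
}
```

For example, `hive_property javax.jdo.option.ConnectionURL 'jdbc:mysql://192.168.3.123:3306/myhive?createDatabaseIfNotExist=true&serverTimezone=UTC'` prints the property with `&amp;serverTimezone=UTC` in the value.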

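(6) Download the MySQL driver jar (mysql-connector-java-8.0.13.jar) and put it in Hive's lib directory.

(7) Next, edit the environment script: again, copy the template and edit the copy.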

cp hive-env.sh.template hive-env.sh
# then set the following in hive-env.sh:
HADOOP_HOME=/work/soft/hadoop-2.6.4
export HIVE_CONF_DIR=/work/soft/apache-hive-2.3.4-bin/conf
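Before initializing the metastore, it can help to confirm that the MySQL driver jar really ended up in Hive's lib directory, since a missing driver makes schematool fail. This is a minimal bash sketch; the function name `has_mysql_driver` is hypothetical. The glob matches both `mysql-connector-java-*.jar` (the name used in this post) and `mysql-connector-j-*.jar` (the name newer Connector/J releases use).

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if a MySQL Connector/J jar is in Hive's lib directory.
has_mysql_driver() {
  local lib_dir="${1:-$HIVE_HOME/lib}" jar
  for jar in "$lib_dir"/mysql-connector-j*.jar; do
    # Without nullglob an unmatched pattern stays literal, so test that the file exists
    [ -e "$jar" ] && return 0
  done
  return 1
}
```

Usage: `has_mysql_driver || echo "MySQL driver jar not found in $HIVE_HOME/lib"`.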

III. Starting Hive

1. First initialize the metastore schema in MySQL: go to Hive's bin directory and run the initialization command

bash schematool -initSchema -dbType mysql

2. If you see output like the following, initialization succeeded
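To check the result without reading the whole output, the initialization can be wrapped so its output is saved and scanned for the `schemaTool completed` line that schematool prints at the end of a successful run. This is a sketch under assumptions: `$HIVE_HOME/bin` is on `PATH` (so `schematool` runs without `bash` and a path), and the function name `init_hive_schema` and its default log path are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: run the metastore schema initialization, keep a copy of the
# output, and succeed only if schematool reported "schemaTool completed".
init_hive_schema() {
  local log="${1:-/tmp/hive-schematool-init.log}"
  schematool -initSchema -dbType mysql 2>&1 | tee "$log"
  grep -q 'schemaTool completed' "$log"
}
```

Usage: `init_hive_schema || echo "schema initialization failed, see the log"`. Running it a second time against an already initialized database is expected to fail, because the metastore tables already exist.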

3. Before starting, set the HDFS directory permissions to 777 (readable, writable, and executable by everyone)

hadoop fs -chmod -R 777 /

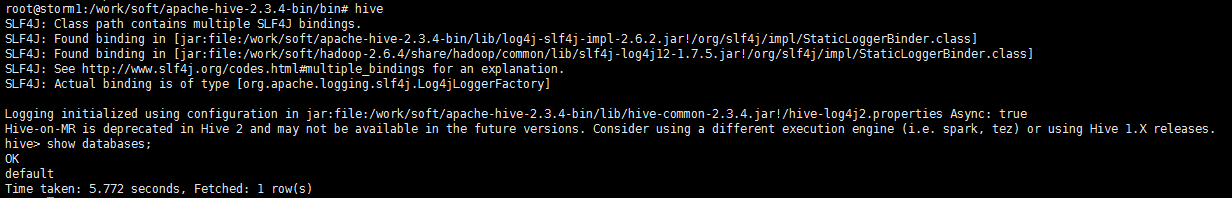

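4. Start Hive with the command below, then run show databases; at the hive> prompt. If you see output like the following, Hive started successfully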

hive

show databases;