##前期工作

- 安装JDK

- 安装Hadoop

- 安装MySQL

##安装Hive ###下载Hive安装包 可以从 Apache 其中一个镜像站点中下载最新稳定版的 Hive, apache-hive-2.1.1-bin.tar.gz。

解压安装Hive

使用以下命令安装 Hive:

sudo mv apache-hive-2.1.1-bin.tar.gz /opt

cd /opt

sudo tar -xzvf apache-hive-2.1.1-bin.tar.gz ##解压

sudo ln -s apache-hive-2.1.1-bin hive ##创建软链接

###设置 Hive环境变量 编辑 .bash_profile 文件, 在其中添加以下内容:

# Hive Env

export HIVE_HOME=/opt/hive

export PATH=$PATH:$HIVE_HOME/bin

使环境变量生效:

source .bash_profile

##配置Hive ###配置文件重命名 在运行 Hive 之前需要使用以下命令修改配置文件:

cd /opt/hive/conf

cp hive-env.sh.template hive-env.sh

cp hive-default.xml.template hive-site.xml

cp hive-log4j2.properties.template hive-log4j2.properties

cp hive-exec-log4j2.properties.template hive-exec-log4j2.properties

###修改hive-env.sh 因为 Hive 使用了 Hadoop, 需要在 hive-env.sh 文件中指定 Hadoop 安装路径:

export JAVA_HOME=/opt/java ##Java路径

export HADOOP_HOME=/opt/hadoop ##Hadoop安装路径

export HIVE_HOME=/opt/hive ##Hive安装路径

export HIVE_CONF_DIR=/opt/hive/conf ##Hive配置文件路径

###创建HDFS目录 在 Hive 中创建表之前需要创建以下 HDFS 目录并给它们赋相应的权限。

hdfs dfs -mkdir -p /user/hive/warehouse

hdfs dfs -mkdir -p /user/hive/tmp

hdfs dfs -mkdir -p /user/hive/log

hdfs dfs -chmod g+w /user/hive/warehouse

hdfs dfs -chmod g+w /user/hive/tmp

hdfs dfs -chmod g+w /user/hive/log

###修改hive-site.xml 将 hive-site.xml 文件中以下几个配置项的值设置成上一步中创建的几个路径。

<property>

<name>hive.exec.scratchdir</name>

<value>/user/hive/tmp</value>

<description>HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/<username> is created, with ${hive.scratch.dir.permission}.</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

<description>location of default database for the warehouse</description>

</property>

<property>

<name>hive.querylog.location</name>

<value>/user/hive/log</value>

<description>Location of Hive run time structured log file</description>

</property>

##Hive Metastore 默认情况下, Hive 的元数据保存在内嵌的 Derby 数据库里, 但一般情况下生产环境会使用 MySQL 来存放 Hive 元数据。

创建数据库和用户

假定你已经安装好 MySQL。下面创建一个 hive 数据库用来存储 Hive 元数据,且数据库访问的用户名和密码都为 hive。

mysql> CREATE DATABASE hive;

mysql> USE hive;

mysql> CREATE USER 'hive'@'localhost' IDENTIFIED BY 'hive';

mysql> GRANT ALL ON hive.* TO 'hive'@'localhost' IDENTIFIED BY 'hive';

mysql> GRANT ALL ON hive.* TO 'hive'@'%' IDENTIFIED BY 'hive';

mysql> FLUSH PRIVILEGES;

mysql> quit;

###修改hive-site.xml 需要在 hive-site.xml 文件中配置 MySQL 数据库连接信息。

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&characterEncoding=UTF-8&useSSL=false</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>hive</value>

</property>

##运行Hive 在命令行运行 hive 命令时必须保证以下两点:

- HDFS 已经启动。可以使用 start-dfs.sh 脚本来启动 HDFS。

- MySQL Java 连接器添加到 $HIVE_HOME/lib 目录下。我安装时使用的是 mysql-connector-java-5.1.39.jar。

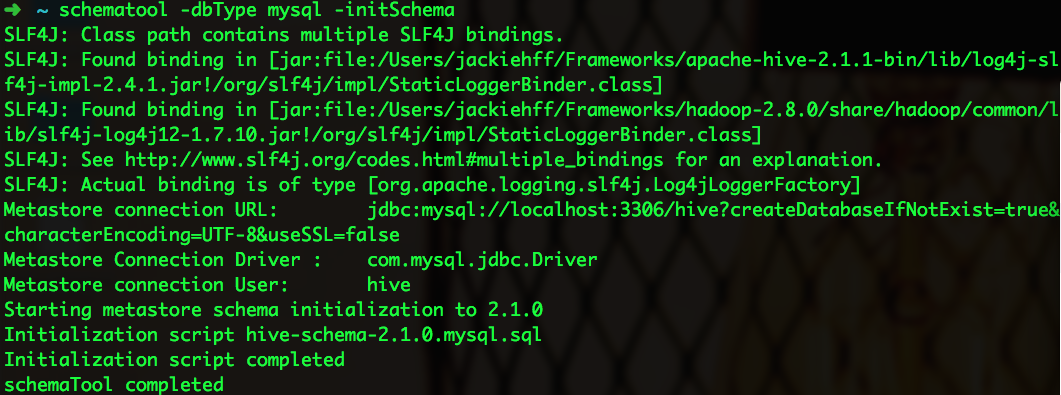

从 Hive 2.1 版本开始, 我们需要先运行 schematool 命令来执行初始化操作。

schematool -dbType mysql -initSchema

终端输出信息如下:

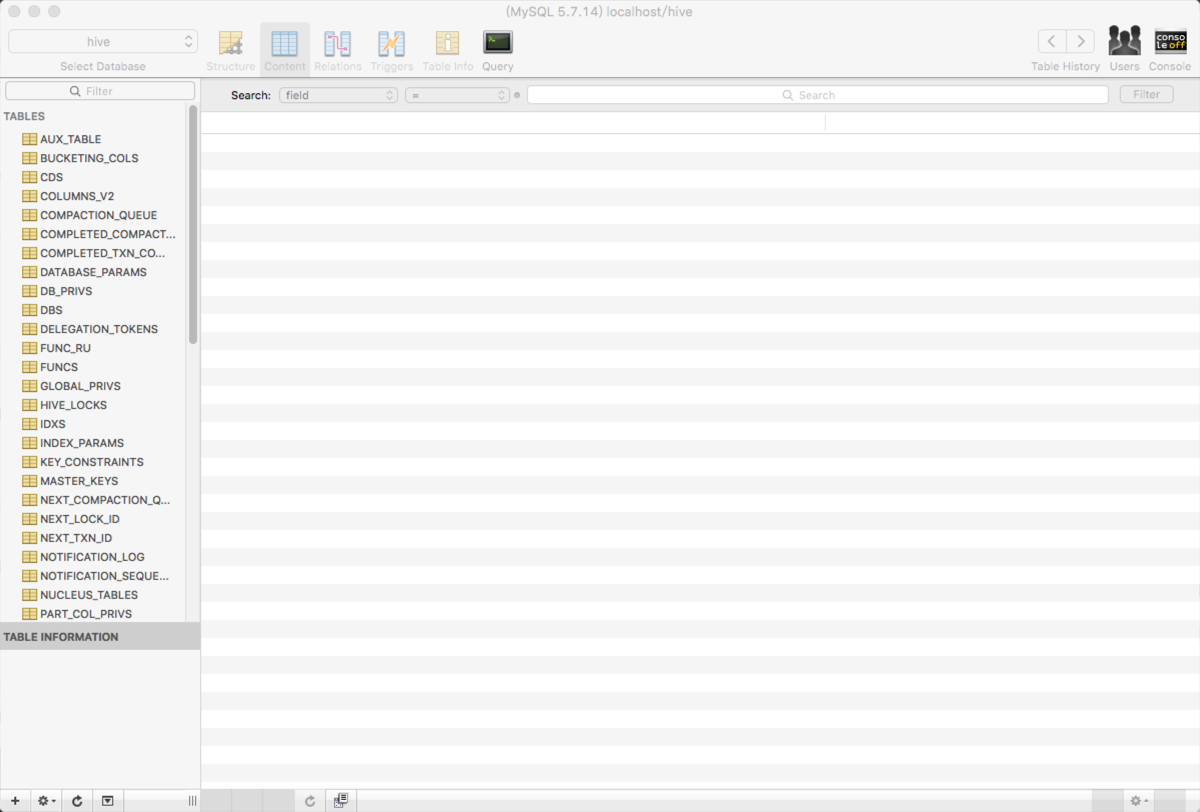

执行成功后,可以使用 Sequel Pro 查看元数据库 hive 是否已经创建成功。

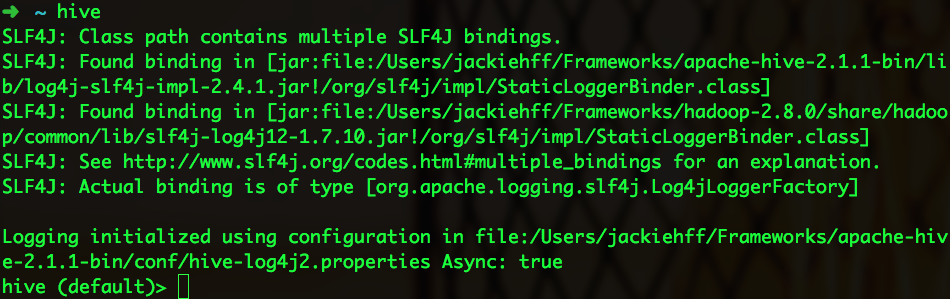

要使用 Hive CLI(Hive command line interface), 可以在终端输入以下命令:

hive

启动信息如下:

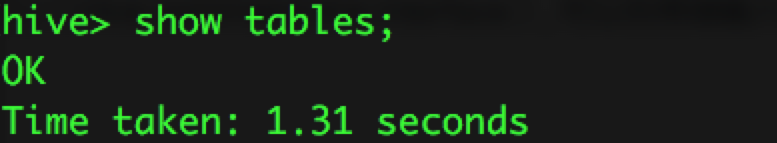

使用 show tables 来显示所有的表:

##问题总结

SSL问题

启动 hive 时,提示以下信息:

Sat May 21 10:25:12 CST 2016 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL

connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

解决方法:在 hive-site.xml 配置文件中 javax.jdo.option.ConnectionURL 配置项的值上加上 useSSL=false。即最后的配置如下:

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&useUnicode=true&characterEncoding=UTF-8&useSSL=false</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

initSchema问题

启动 hive 时,提示以下信息:

Exception in thread "main" java.lang.RuntimeException: Hive metastore database is not initialized. Please use schematool (e.g. ./schematool -initSchema -dbType ...) to create the schema. If needed, don't forget to include the option to auto-create the underlying database in your JDBC connection string (e.g. ?createDatabaseIfNotExist=true for mysql)

解决方法:执行 schematool -initSchema -dbType mysql。即安装 Hive 后,在首次运行 hive 之前要执行以上命令。

###相对路径问题 启动Hive时报以下错误:

Exception in thread "main"java.lang.RuntimeException: java.lang.IllegalArgumentException:java.net.URISyntaxException: Relative path in absolute URI:${system:java.io.tmpdir%7D/$%7Bsystem:user.name%7D

atorg.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:444)

atorg.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:672)

atorg.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:616)

atsun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

atsun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

atsun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

atjava.lang.reflect.Method.invoke(Method.java:606)

atorg.apache.hadoop.util.RunJar.main(RunJar.java:160)

Caused by: java.lang.IllegalArgumentException:java.net.URISyntaxException: Relative path in absolute URI:${system:java.io.tmpdir%7D/$%7Bsystem:user.name%7D

atorg.apache.hadoop.fs.Path.initialize(Path.java:148)

atorg.apache.hadoop.fs.Path.<init>(Path.java:126)

atorg.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:487)

atorg.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:430)

... 7more

解决方案:将 hive-site.xml 中的 ${system:java.io.tmpdir} 和 ${system:user.name} 分别替换成 /tmp 和 ${user.name} ###mysql-connector-java版本问题 启动Hive时报以下错误:

Exception in thread "main" java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:578)

at org.apache.hadoop.hive.ql.session.SessionState.beginStart(SessionState.java:518)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:705)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:641)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:221)

at org.apache.hadoop.util.RunJar.main(RunJar.java:136)

Caused by: org.apache.hadoop.hive.ql.metadata.HiveException: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient

at org.apache.hadoop.hive.ql.metadata.Hive.registerAllFunctionsOnce(Hive.java:226)

at org.apache.hadoop.hive.ql.metadata.Hive.<init>(Hive.java:366)

at org.apache.hadoop.hive.ql.metadata.Hive.create(Hive.java:310)

at org.apache.hadoop.hive.ql.metadata.Hive.getInternal(Hive.java:290)

at org.apache.hadoop.hive.ql.metadata.Hive.get(Hive.java:266)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:545)

... 9 more

Caused by: java.lang.RuntimeException: Unable to instantiate org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient

at org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1627)

at org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.<init>(RetryingMetaStoreClient.java:80)

at org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:130)

at org.apache.hadoop.hive.metastore.RetryingMetaStoreClient.getProxy(RetryingMetaStoreClient.java:101)

at org.apache.hadoop.hive.ql.metadata.Hive.createMetaStoreClient(Hive.java:3317)

at org.apache.hadoop.hive.ql.metadata.Hive.getMSC(Hive.java:3356)

at org.apache.hadoop.hive.ql.metadata.Hive.getMSC(Hive.java:3336)

at org.apache.hadoop.hive.ql.metadata.Hive.getAllFunctions(Hive.java:3590)

at org.apache.hadoop.hive.ql.metadata.Hive.reloadFunctions(Hive.java:236)

at org.apache.hadoop.hive.ql.metadata.Hive.registerAllFunctionsOnce(Hive.java:221)

... 14 more

Caused by: java.lang.reflect.InvocationTargetException

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.hive.metastore.MetaStoreUtils.newInstance(MetaStoreUtils.java:1625)

... 23 more

Caused by: javax.jdo.JDODataStoreException: Exception thrown obtaining schema column information from datastore

NestedThrowables:

java.sql.SQLException: Column name pattern can not be NULL or empty.

at org.datanucleus.api.jdo.NucleusJDOHelper.getJDOExceptionForNucleusException(NucleusJDOHelper.java:543)

at org.datanucleus.api.jdo.JDOPersistenceManager.jdoMakePersistent(JDOPersistenceManager.java:720)

at org.datanucleus.api.jdo.JDOPersistenceManager.makePersistent(JDOPersistenceManager.java:740)

at org.apache.hadoop.hive.metastore.ObjectStore.setMetaStoreSchemaVersion(ObjectStore.java:7763)

at org.apache.hadoop.hive.metastore.ObjectStore.checkSchema(ObjectStore.java:7657)

at org.apache.hadoop.hive.metastore.ObjectStore.verifySchema(ObjectStore.java:7632)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hive.metastore.RawStoreProxy.invoke(RawStoreProxy.java:101)

at com.sun.proxy.$Proxy21.verifySchema(Unknown Source)

at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.getMS(HiveMetaStore.java:547)

at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.createDefaultDB(HiveMetaStore.java:612)

at org.apache.hadoop.hive.metastore.HiveMetaStore$HMSHandler.init(HiveMetaStore.java:398)

at org.apache.hadoop.hive.metastore.RetryingHMSHandler.<init>(RetryingHMSHandler.java:78)

at org.apache.hadoop.hive.metastore.RetryingHMSHandler.getProxy(RetryingHMSHandler.java:84)

at org.apache.hadoop.hive.metastore.HiveMetaStore.newRetryingHMSHandler(HiveMetaStore.java:6396)

at org.apache.hadoop.hive.metastore.HiveMetaStoreClient.<init>(HiveMetaStoreClient.java:236)

at org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient.<init>(SessionHiveMetaStoreClient.java:70)

... 28 more

解决方案:将 $HIVE_HOME/lib 下 的 mysql-connector-java-6.0.3.jar 替换成 mysql-connector-java-5.1.39.jar。 原因分析:mysql-connector-java 6.x 版本 和 5.1.x 版本不兼容 , nullNamePatternMatchesAll 连接属性的默认值在 mysql-connector-java 5.1 和 6.0 之间发生了改变. 在 5.1 版本中默认值是 true, 而 6.0 版本中默认值是 false。可以参考文章 https://liquibase.jira.com/browse/CORE-2723。

##参考资料 https://cwiki.apache.org/confluence/display/Hive/GettingStarted