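Overview

Flume is a highly available, highly reliable, distributed system for collecting, aggregating, and transporting large volumes of log data. It was originally developed by Cloudera and is now an Apache project. Flume is built on a streaming architecture and is flexible and simple.

Main use: reading data from a server's local disk in real time and writing it to HDFS.

Advantages:

- It can be integrated with any storage system.

- When data arrives faster than it can be written to the destination store (read and write rates are out of sync), Flume buffers it, which reduces the pressure on HDFS.

- Flume transactions are channel-based and use two transaction models (sender + receiver) to ensure that messages are delivered reliably.

Flume uses two independent transactions: one for event delivery from source to channel, and one from channel to sink. A source considers a batch read only after all of its data has been committed to the channel. Likewise, events are removed from the channel only after the sink has written them out successfully; on failure the transaction is rolled back and the batch is resubmitted.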

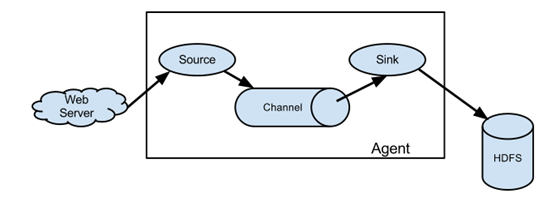

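Components: an Agent consists of source + channel + sink.

A source is an abstraction of where data comes from; a sink is an abstraction of where data goes.

Source

A Source is the component that receives data into the Flume Agent. It can handle log data of many types and formats.

Common input types:

- spooling directory (spooldir): files that keep rolling into a directory

- exec: the output of a running command

- syslog: system logs

- avro: events from an upstream Flume agent

- netcat: data sent over a network port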

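Channel

A Channel is the buffer that sits between the Source and the Sink, which lets the Source and the Sink run at different rates. Channels are thread-safe and can handle writes from several Sources and reads from several Sinks at the same time.

The two most commonly used channels that ship with Flume are Memory Channel and File Channel.

Memory Channel is an in-memory queue. It is suitable when data loss is acceptable. If data loss matters, do not use Memory Channel, because a process crash, machine failure, or restart loses the data it holds.

File Channel writes all events to disk, so no data is lost when the process shuts down or the machine goes down.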
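As a rough illustration of choosing File Channel over Memory Channel, the fragment below is a minimal sketch. The agent name `a1`, the channel name `c1`, and both directories are assumptions made for this example; `checkpointDir` and `dataDirs` are the File Channel settings for the checkpoint and data locations.

```properties
# Hypothetical agent a1 with a single channel c1 (names are assumptions)
a1.channels = c1
# Persist events on disk instead of in memory
a1.channels.c1.type = file
# Assumed directories; any paths writable by the Flume user would do
a1.channels.c1.checkpointDir = /opt/module/flume/checkpoint
a1.channels.c1.dataDirs = /opt/module/flume/data
```

The source and sink bindings stay the same as with a memory channel; only the channel definition changes.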

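There is also a Kafka Channel, which can be used without a sink.

A channel selector decides which channels a given event received by the Source is written to. It tells the channel processor, which then writes the event to each selected channel.

Flume provides three kinds of channel selector: the Replicating Channel Selector (the default, which sends every event to every channel), the Multiplexing Channel Selector (which chooses the channel for each event), and custom selectors.

- Replicating selector: a Source copies each Event into several Channels at once, so different Sinks can read the same Event from different Channels. For example, to write one stream of log data to both Kafka and HDFS, each Event is written to two Channels and two different Sinks deliver it to the two external stores. The selector copies each event to all Channels listed in the Source's channels parameter. It also has an optional parameter, optional, a space-separated list of channel names. Channels listed there are treated as optional: a failure while writing to them is ignored, whereas a failure while writing to any other channel throws an exception.

- Multiplexing selector: used together with interceptors. It inspects the key-value pairs in the Event headers to decide which Channel the Event is written to.

- Custom selector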
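The two built-in selectors described above could be configured roughly as follows. This is a sketch under assumptions: the agent `a1`, the sources `r1` and `r2`, the channels `c1` to `c3`, the header name `type`, and the header values `error` and `access` are all invented for illustration, and the source types and sinks are left out.

```properties
# Hypothetical agent a1: r1 replicates, r2 multiplexes (all names are assumptions)
a1.sources = r1 r2
a1.channels = c1 c2 c3

# Replicating: every event from r1 goes to c1, c2 and c3
a1.sources.r1.selector.type = replicating
a1.sources.r1.channels = c1 c2 c3
# Failures writing to c3 are ignored; failures on c1 or c2 raise an exception
a1.sources.r1.selector.optional = c3

# Multiplexing: route on the value of the event header "type" (assumed header name)
a1.sources.r2.selector.type = multiplexing
a1.sources.r2.channels = c1 c2 c3
a1.sources.r2.selector.header = type
a1.sources.r2.selector.mapping.error = c1
a1.sources.r2.selector.mapping.access = c2
# Events whose header matches no mapping go to c3
a1.sources.r2.selector.default = c3
```

For the multiplexing case, the header would typically be set by an interceptor on the same source before the selector runs.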

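Sink

Common destinations include HDFS, Kafka, logger (logs events at INFO level), avro (the next-tier Flume agent), file, HBase, Solr, ipc, thrift, and custom sinks.

A Sink continuously polls the Channel for events, removes them in batches, and writes those batches to a storage or indexing system or sends them to another Flume Agent.

Sinks are fully transactional. Before removing a batch of events from the Channel, each Sink starts a transaction with the Channel. Once the batch has been written successfully to the storage system or the next Flume Agent, the Sink commits the transaction, and the Channel then deletes those events from its internal buffer.

Sink groups let you organize several sinks into one entity. A sink processor can load-balance across all sinks in the group and can fail over from one sink to another when a failure occurs. Put simply, one source (through its channel) feeds one sink group, that is, several sinks. This looks similar to the replicating/multiplexing setup, but the concern here is reliability and performance, namely failover and load balancing.

Default Sink Processor: accepts a single Sink; users are not required to create a processor for a single Sink.

Failover Sink Processor: maintains a prioritized list of sinks through configuration and guarantees that every valid event is processed as long as one sink is available.

It works by moving a sink that keeps failing into a pool, where it is assigned a cool-down period during which it does nothing. Once the sink successfully sends an event, it is restored to the live pool.

Load balancing Sink Processor: distributes load across multiple Sinks. It supports ① round_robin and ② random as selection mechanisms, with round_robin as the default.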
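A failover sink group might be declared as in the sketch below. The agent `a1`, the group `g1`, and the sinks `k1` and `k2` are assumed names, and the priority and penalty values are arbitrary examples; the sink definitions themselves are omitted.

```properties
# Hypothetical agent a1 with two sinks k1 and k2 draining the same channel (names are assumptions)
a1.sinkgroups = g1
a1.sinkgroups.g1.sinks = k1 k2
a1.sinkgroups.g1.processor.type = failover
# The sink with the higher priority value is tried first
a1.sinkgroups.g1.processor.priority.k1 = 5
a1.sinkgroups.g1.processor.priority.k2 = 10
# Upper bound, in milliseconds, on the cool-down of a failed sink
a1.sinkgroups.g1.processor.maxpenalty = 10000
```

With these values k2 is the active sink and k1 takes over only while k2 is in its cool-down period.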

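Transactions

Put transaction flow:

- doPut: write the batch into the temporary buffer putList.

- doCommit: check whether the channel's in-memory queue has enough room, and if so merge putList into it.

- doRollback: if the channel's in-memory queue does not have enough room, roll the data back.

In other words, events are first put into putList and then committed. If the commit to the channel succeeds, the events are removed from putList; if it fails, the transaction is rolled back and the batch is submitted again.

Take transaction flow:

- doTake: take the data into the temporary buffer takeList.

- doCommit: if all the data was sent successfully, clear takeList.

- doRollback: if an exception occurs while sending, return the data in takeList to the channel's in-memory queue.

In other words, events are pulled into takeList and a commit is attempted. If the commit succeeds, takeList is cleared; if it fails, the events go back to the channel and are submitted again.

With this transaction mechanism Flume may deliver duplicate data, for example when a sink has already written part of a batch before the rollback and the whole batch is then sent again.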
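For a memory channel, the sizes involved in these transactions are set by two properties. The fragment below is a sketch with an assumed agent `a1` and channel `c1`; the values are the ones used elsewhere in this document.

```properties
# Hypothetical agent a1 and channel c1 (names are assumptions)
a1.channels = c1
a1.channels.c1.type = memory
# Maximum number of events held in the channel's queue
a1.channels.c1.capacity = 1000
# Maximum number of events per put or take transaction
a1.channels.c1.transactionCapacity = 100
```

Batch sizes configured on sources and sinks should not exceed `transactionCapacity`, otherwise a single transaction cannot hold the whole batch.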

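Event

The Event is the basic unit of data transfer in Flume; data travels from source to destination in the form of events. An Event consists of an optional header and a byte array that carries the data. The header is a HashMap of key-value string pairs.

Interceptor

Interceptors are simple pluggable components that sit between a Source and the Channels the Source writes to. Each interceptor instance only processes events received by the same Source.

Because interceptors must finish their transformation before an event is written to the channel, the source (and any other source that may time out) responds to the client or sink that sent the event only after the interceptors have transformed it successfully.

Flume provides some commonly used interceptors, and you can also write custom interceptors to process logs. A custom interceptor needs only the following steps:

- Flume version used: apache-flume-1.6.0

- Implement the org.apache.flume.interceptor.Interceptor interface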
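Attaching interceptors to a source is done in the agent configuration. The sketch below chains a built-in interceptor with a custom one; the agent `a1`, the source `r1`, and the class `com.example.MyInterceptor` are hypothetical names used only for illustration.

```properties
# Hypothetical agent a1 and source r1 (names are assumptions)
# Interceptors run in the order listed
a1.sources.r1.interceptors = i1 i2
# Built-in interceptor that adds a "timestamp" header
a1.sources.r1.interceptors.i1.type = timestamp
# Custom interceptor, referenced through its nested Builder class;
# com.example.MyInterceptor is a hypothetical class name
a1.sources.r1.interceptors.i2.type = com.example.MyInterceptor$Builder
```

A custom interceptor is referenced by its Builder because Flume creates interceptor instances through the `Interceptor.Builder` interface.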

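Flume topologies

① Chaining: there are more channels along the path, so the number of Flume tiers should be kept small. In this mode several Flume agents are connected in sequence, from the first source to the final sink that writes to the destination store. Chaining too many agents is not recommended: it slows down transport, and if any agent in the chain goes down, the whole pipeline is affected.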

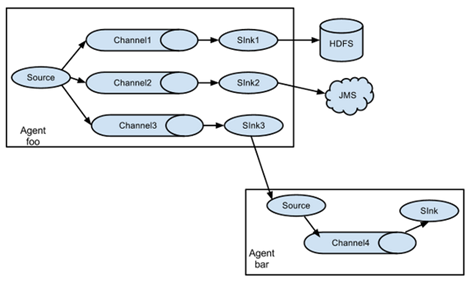

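② Single source with multiple channels and sinks: either one channel feeding several sinks, or several channels each feeding its own sink.

                          ----> sink1
    source ---> channel   ----> sink2
                          ----> sink3

              ----> channel1 ----> sink1
    source
              ----> channel2 ----> sink2

Flume can send an event stream to one or more destinations. In this mode the source's data is replicated to several channels, each channel holds the same data, and each sink can deliver to a different destination.

③ Load balancing: Flume can group several sinks logically into one sink group and send data to the different sinks in it, mainly to provide load balancing and failover.

With load balancing, the three parallel channels are polled in turn, which increases throughput and protects the data: if one goes down, the other two do not go down with it. The buffering is also deeper: if HDFS has a problem, two tiers of channels and several parallel Flume agents keep the data safe and enlarge the buffer.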

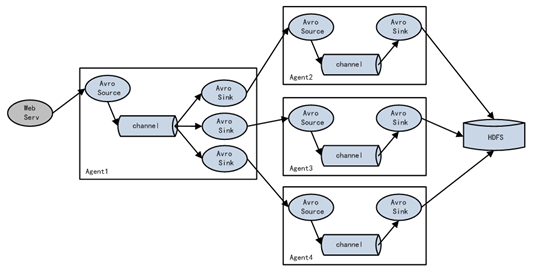

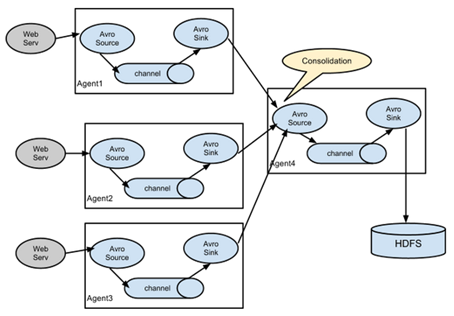

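④ Flume agent aggregation: everyday web applications are usually spread over hundreds of servers, sometimes thousands or tens of thousands, and the logs they produce are hard to handle. This topology solves the problem well: each server runs a Flume agent that collects logs and forwards them to a central collecting agent, which uploads them to HDFS, Hive, HBase, JMS, and so on for log analysis.

Installation

Upload apache-flume-1.7.0-bin.tar.gz to the /opt/software directory on the Linux host, extract it to /opt/module/, and set JAVA_HOME in flume-env.sh:

    [kris@hadoop101 software]$ tar -zxf apache-flume-1.7.0-bin.tar.gz -C /opt/module/
    [kris@hadoop101 module]$ mv apache-flume-1.7.0-bin/ flume
    [kris@hadoop101 conf]$ mv flume-env.sh.template flume-env.sh
    [kris@hadoop101 conf]$ vim flume-env.sh
    export JAVA_HOME=/opt/module/jdk1.8.0_144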

Flume troubleshooting

1) Problem: the following exception is thrown when the consuming Flume agent starts

    ERROR hdfs.HDFSEventSink: process failed
    java.lang.OutOfMemoryError: GC overhead limit exceeded

2) Solution:

(1) On hadoop101, add the following setting to /opt/module/flume/conf/flume-env.sh

    export JAVA_OPTS="-Xms100m -Xmx2000m -Dcom.sun.management.jmxremote"

(2) Sync the configuration to hadoop102 and hadoop103

    [kris@hadoop101 conf]$ xsync flume-env.sh

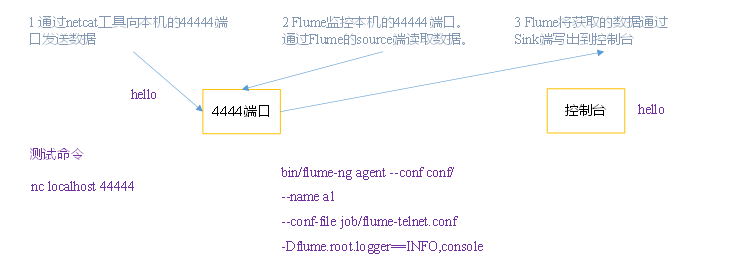

1. Monitoring port data (netcat)

Data flow:

    port (netcat) ---> flume ---> Sink (logger) to the console

Create the job directory and the configuration file:

    [kris@hadoop101 flume]$ mkdir job
    [kris@hadoop101 flume]$ cd job/
    [kris@hadoop101 job]$ touch flume-netcat-logger.conf
    [kris@hadoop101 job]$ vim flume-netcat-logger.conf

Contents of flume-netcat-logger.conf:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    # a1's source type is netcat (listens on a port)
    a1.sources.r1.type = netcat
    # host that a1 binds to
    a1.sources.r1.bind = localhost
    # port that a1 listens on
    a1.sources.r1.port = 44444

    # Describe the sink
    # a1 writes to the console through the logger sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    # a1's channel type is memory
    a1.channels.c1.type = memory
    # the channel holds at most 1000 events
    a1.channels.c1.capacity = 1000
    # at most 100 events are handled per transaction
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    # connect r1 to c1
    a1.sources.r1.channels = c1
    # connect k1 to c1
    a1.sinks.k1.channel = c1

Install the nc tool:

    [kris@hadoop101 software]$ sudo yum install -y nc

Check whether port 44444 is already in use:

    [kris@hadoop101 flume]$ sudo netstat -tunlp | grep 44444

Start the Flume agent first so that it listens on the port:

    [kris@hadoop101 flume]$ bin/flume-ng agent --conf conf/ --name a1 --conf-file job/flume-netcat-logger.conf -Dflume.root.logger=INFO,console

--conf conf/ says the configuration files are in the conf/ directory; --name a1 names the agent a1; --conf-file job/... says the configuration read for this run is flume-netcat-logger.conf in the job directory.

-D overrides the flume.root.logger property at run time and sets the console log level to INFO.

Send content to port 44444 on the local machine:

    [kris@hadoop101 ~]$ cd /opt/module/flume/
    [kris@hadoop101 flume]$ nc localhost 44444
    hello
    OK
    kris
    OK

Watch the received data in the terminal where Flume is running:

    2019-02-20 10:01:41,151 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 68 65 6C 6C 6F hello }
    2019-02-20 10:01:45,153 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 6B 72 69 73 kris }

If netstat shows a listener on the port, the agent started successfully:

    [kris@hadoop101 ~]$ netstat -nltp
    tcp 0 0 ::ffff:127.0.0.1:44444 :::* LISTEN 4841/java

With nc hadoop102 44444, Flume does not receive the data: the source above is bound to localhost on hadoop101, so it only accepts connections made to localhost on that machine.
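To accept connections from other hosts, the netcat source can be bound to a non-loopback address. The fragment below is a sketch of that variation on the `a1` configuration shown earlier; binding to `0.0.0.0` (all interfaces) is an assumption made for illustration, and a specific hostname such as hadoop101 would also work.

```properties
# Same netcat source as in flume-netcat-logger.conf, but listening on all network interfaces
a1.sources.r1.type = netcat
# 0.0.0.0 is an assumed choice; a concrete hostname is an alternative
a1.sources.r1.bind = 0.0.0.0
a1.sources.r1.port = 44444
```

A client on another machine would then connect with `nc hadoop101 44444`, naming the host where the agent runs.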

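The netstat command is a very useful tool for monitoring TCP/IP networks. It can display the routing table, the active network connections, and the status of each network interface.

-t or --tcp: show TCP connections;

-u or --udp: show UDP connections;

-n or --numeric: use IP addresses directly instead of resolving names;

-l or --listening: show listening server sockets;

-p or --programs: show the PID and name of the program that owns each socket;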

2. Reading a local file into HDFS in real time

Data flow:

    hive.log (exec) ---> flume ---> Sink (HDFS)

To read a file on a Linux system, Flume has to run a command that follows Linux command rules. The Hive log lives on the Linux file system, so the source type is exec (short for execute): it runs a Linux command to read the file.

1. To write data to HDFS, Flume needs the relevant Hadoop jars

Copy the following jars to /opt/module/flume/lib:

- commons-configuration-1.6.jar

- hadoop-auth-2.7.2.jar

- hadoop-common-2.7.2.jar

- hadoop-hdfs-2.7.2.jar

- commons-io-2.4.jar

- htrace-core-3.1.0-incubating.jar

2. Create flume-file-hdfs.conf

    [kris@hadoop101 job]$ vim flume-file-hdfs.conf

Contents of flume-file-hdfs.conf:

    # Name the components on this agent
    a2.sources = r2
    a2.sinks = k2
    a2.channels = c2

    # Describe/configure the source
    a2.sources.r2.type = exec
    a2.sources.r2.command = tail -F /opt/module/hive/logs/hive.log
    a2.sources.r2.shell = /bin/bash -c

    # Describe the sink
    a2.sinks.k2.type = hdfs
    a2.sinks.k2.hdfs.path = hdfs://hadoop101:9000/flume/%Y%m%d/%H
    # prefix of uploaded files
    a2.sinks.k2.hdfs.filePrefix = logs-
    # whether to roll directories by time
    a2.sinks.k2.hdfs.round = true
    # how many time units before a new directory is created
    a2.sinks.k2.hdfs.roundValue = 1
    # the time unit
    a2.sinks.k2.hdfs.roundUnit = hour
    # whether to use the local timestamp
    a2.sinks.k2.hdfs.useLocalTimeStamp = true
    # number of events to accumulate before flushing to HDFS
    # (kept within the channel's transactionCapacity)
    a2.sinks.k2.hdfs.batchSize = 100
    # file type; compression is supported
    a2.sinks.k2.hdfs.fileType = DataStream
    # how often to roll to a new file, in seconds
    a2.sinks.k2.hdfs.rollInterval = 60
    # roll size of each file, about 128 MB
    a2.sinks.k2.hdfs.rollSize = 134217700
    # do not roll based on the number of events
    a2.sinks.k2.hdfs.rollCount = 0

    # Use a channel which buffers events in memory
    a2.channels.c2.type = memory
    a2.channels.c2.capacity = 1000
    a2.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r2.channels = c2
    a2.sinks.k2.channel = c2
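The configuration notes that the file type setting also supports compression. As a hedged sketch of what that could look like for the same `k2` sink, the fragment below switches to compressed output; choosing gzip is an assumption made for illustration.

```properties
# Variation on the k2 HDFS sink that writes compressed output
# CompressedStream requires a codec to be set
a2.sinks.k2.hdfs.fileType = CompressedStream
# gzip is an assumed choice; other codecs the HDFS sink accepts include bzip2, lzo, lzop and snappy
a2.sinks.k2.hdfs.codeC = gzip
```

The remaining settings (path, rolling, batch size) would stay as they are.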

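In tail -F /opt/module/hive/logs/hive.log, the -F option follows the file in real time and keeps following it by name when the log is rotated.

Start the agent:

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a2 -f job/flume-file-hdfs.conf

Start Hadoop and Hive, then run Hive operations to produce log entries:

    sbin/start-dfs.sh
    sbin/start-yarn.sh
    bin/hive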

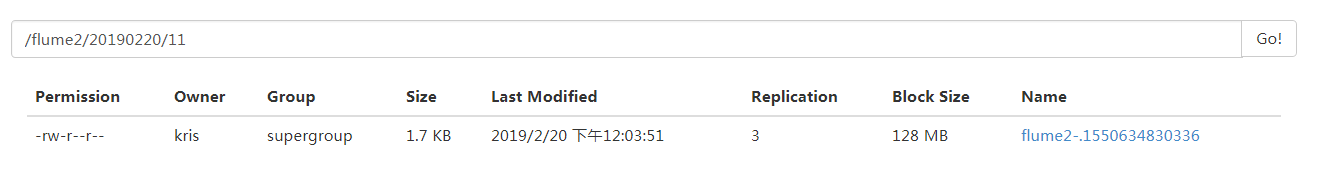

View the files on HDFS.

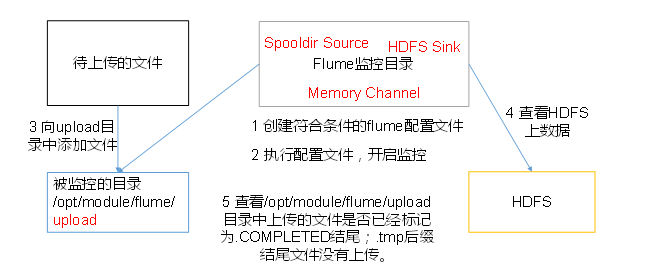

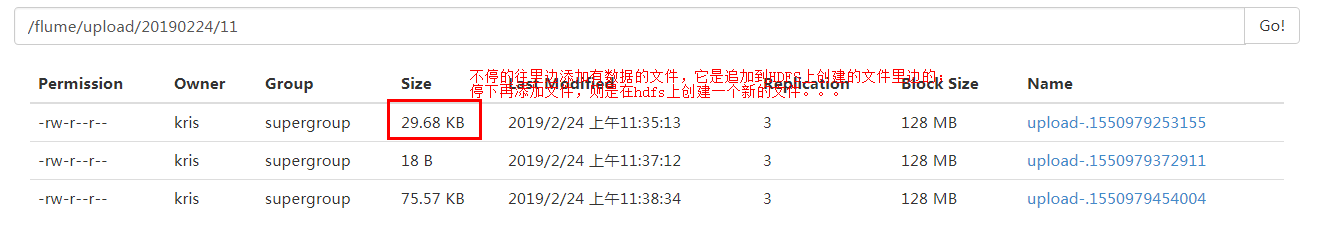

3. Reading files from a directory into HDFS in real time

Data flow:

    directory (spooldir) ---> flume ---> Sink (HDFS)

    [kris@hadoop101 job]$ vim flume-dir-hdfs.conf

Contents of flume-dir-hdfs.conf:

    a3.sources = r3
    a3.sinks = k3
    a3.channels = c3

    # Describe/configure the source
    # the source type is a spooling directory
    a3.sources.r3.type = spooldir
    # the directory to monitor
    a3.sources.r3.spoolDir = /opt/module/flume/upload
    # suffix added to a file once it has been uploaded
    a3.sources.r3.fileSuffix = .COMPLETED
    # whether to add a header that stores the file path
    a3.sources.r3.fileHeader = true
    # ignore all files ending in .tmp; they are not uploaded
    a3.sources.r3.ignorePattern = ([^ ]*\.tmp)

    # Describe the sink
    a3.sinks.k3.type = hdfs
    a3.sinks.k3.hdfs.path = hdfs://hadoop101:9000/flume/upload/%Y%m%d/%H
    # prefix of uploaded files
    a3.sinks.k3.hdfs.filePrefix = upload-
    # whether to roll directories by time
    a3.sinks.k3.hdfs.round = true
    # how many time units before a new directory is created
    a3.sinks.k3.hdfs.roundValue = 1
    # the time unit
    a3.sinks.k3.hdfs.roundUnit = hour
    # whether to use the local timestamp
    a3.sinks.k3.hdfs.useLocalTimeStamp = true
    # number of events to accumulate before flushing to HDFS
    a3.sinks.k3.hdfs.batchSize = 100
    # file type; compression is supported
    a3.sinks.k3.hdfs.fileType = DataStream
    # how often to roll to a new file, in seconds
    a3.sinks.k3.hdfs.rollInterval = 60
    # roll size of each file, about 128 MB
    a3.sinks.k3.hdfs.rollSize = 134217700
    # do not roll based on the number of events
    a3.sinks.k3.hdfs.rollCount = 0

    # Use a channel which buffers events in memory
    a3.channels.c3.type = memory
    a3.channels.c3.capacity = 1000
    a3.channels.c3.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r3.channels = c3
    a3.sinks.k3.channel = c3

Start the agent and create some files in the monitored directory:

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a3 -f job/flume-dir-hdfs.conf
    [kris@hadoop101 flume]$ mkdir upload
    [kris@hadoop101 flume]$ cd upload/
    [kris@hadoop101 upload]$ touch kris.txt
    [kris@hadoop101 upload]$ touch kris.tmp
    [kris@hadoop101 upload]$ touch kris.log
    [kris@hadoop101 upload]$ ll
    total 0
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:09 kris.log.COMPLETED
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:08 kris.tmp
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:08 kris.txt.COMPLETED

Creating the files causes a file such as /flume/upload/20190224/11/upload-155... to appear on HDFS; since the files have no content, it is empty. Writing into kris.log.COMPLETED with vim afterwards leaves HDFS unchanged, because the source only picks up files newly placed in the directory.

    [kris@hadoop101 flume]$ cp README.md upload/
    [kris@hadoop101 flume]$ cp LICENSE upload/
    [kris@hadoop101 upload]$ ll
    total 32
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:09 kris.log.COMPLETED
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:08 kris.tmp
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:08 kris.txt.COMPLETED
    -rw-r--r--. 1 kris kris 27625 Feb 20 11:14 LICENSE.COMPLETED
    -rw-r--r--. 1 kris kris 2520 Feb 20 11:13 README.md.COMPLETED

Each file created in upload produces a file on HDFS.

Do not keep appending to a file after it has been placed in the monitored directory: the spooling directory source expects files to be complete and unchanged, so write the content first and then move the file in.

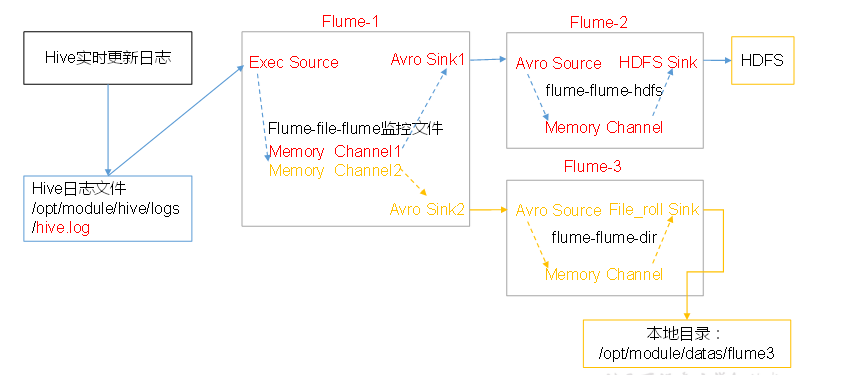

4. Single data source, multiple outlets (selector)

Single Source with multiple Channels and Sinks:

    hive.log (exec) ---> flume1 --- Sink1 (avro) --> flume2 ---> Sink (HDFS)
                                --- Sink2 (avro) --> flume3 ---> Sink (file_roll, local directory)

Preparation

Create a group1 folder under /opt/module/flume/job, and a flume3 folder under /opt/module/datas/:

    [kris@hadoop101 job]$ mkdir group1
    [kris@hadoop101 job]$ cd group1/
    [kris@hadoop101 datas]$ mkdir flume3

1. Create flume-file-flume.conf

Configure one source that reads the log file, two channels, and two sinks that feed flume-flume-hdfs and flume-flume-dir respectively.

    [kris@hadoop101 group1]$ vim flume-file-flume.conf

Contents of flume-file-flume.conf:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1 k2
    a1.channels = c1 c2

    # Replicate the data flow to all channels
    a1.sources.r1.selector.type = replicating

    # Describe/configure the source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /opt/module/hive/logs/hive.log
    a1.sources.r1.shell = /bin/bash -c

    # Describe the sink
    # an avro sink is a data sender
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop101
    a1.sinks.k1.port = 4141
    a1.sinks.k2.type = avro
    a1.sinks.k2.hostname = hadoop101
    a1.sinks.k2.port = 4142

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100
    a1.channels.c2.type = memory
    a1.channels.c2.capacity = 1000
    a1.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1 c2
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c2

Avro is a language-neutral data serialization and RPC framework created by Doug Cutting, the founder of Hadoop.

Note: RPC (Remote Procedure Call) is a protocol for requesting a service from a program on a remote computer over the network without having to understand the underlying network technology.

    [kris@hadoop101 group1]$ vim flume-flume-hdfs.conf

Contents of flume-flume-hdfs.conf:

    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # Describe/configure the source
    # an avro source is a data receiving service
    a2.sources.r1.type = avro
    a2.sources.r1.bind = hadoop101
    a2.sources.r1.port = 4141

    # Describe the sink
    a2.sinks.k1.type = hdfs
    a2.sinks.k1.hdfs.path = hdfs://hadoop101:9000/flume2/%Y%m%d/%H
    # prefix of uploaded files
    a2.sinks.k1.hdfs.filePrefix = flume2-
    # whether to roll directories by time
    a2.sinks.k1.hdfs.round = true
    # how many time units before a new directory is created
    a2.sinks.k1.hdfs.roundValue = 1
    # the time unit
    a2.sinks.k1.hdfs.roundUnit = hour
    # whether to use the local timestamp
    a2.sinks.k1.hdfs.useLocalTimeStamp = true
    # number of events to accumulate before flushing to HDFS
    a2.sinks.k1.hdfs.batchSize = 100
    # file type; compression is supported
    a2.sinks.k1.hdfs.fileType = DataStream
    # how often to roll to a new file, in seconds
    a2.sinks.k1.hdfs.rollInterval = 600
    # roll size of each file, about 128 MB
    a2.sinks.k1.hdfs.rollSize = 134217700
    # do not roll based on the number of events
    a2.sinks.k1.hdfs.rollCount = 0

    # Describe the channel
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1

    [kris@hadoop101 group1]$ vim flume-flume-dir.conf

Contents of flume-flume-dir.conf:

    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c2

    # Describe/configure the source
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop101
    a3.sources.r1.port = 4142

    # Describe the sink
    a3.sinks.k1.type = file_roll
    a3.sinks.k1.sink.directory = /opt/module/datas/flume3

    # Describe the channel
    a3.channels.c2.type = memory
    a3.channels.c2.capacity = 1000
    a3.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c2
    a3.sinks.k1.channel = c2

Run the configurations

Start the agents in this order: flume-flume-dir, flume-flume-hdfs, flume-file-flume, that is, from the sink end towards the source end.

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a3 -f job/group1/flume-flume-dir.conf
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a2 -f job/group1/flume-flume-hdfs.conf
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/group1/flume-file-flume.conf

Start Hadoop and Hive:

    start-dfs.sh
    start-yarn.sh
    bin/hive

Check the data on HDFS.

Check the data in the /opt/module/datas/flume3 directory:

    [kris@hadoop101 ~]$ cd /opt/module/datas/flume3/
    [kris@hadoop101 flume3]$ ll
    total 4
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:49 1550634573721-1
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:54 1550634573721-10
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:54 1550634573721-11
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:50 1550634573721-2
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:50 1550634573721-3
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:51 1550634573721-4
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:51 1550634573721-5
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:52 1550634573721-6
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:52 1550634573721-7
    -rw-rw-r--. 1 kris kris 0 Feb 20 11:53 1550634573721-8
    -rw-rw-r--. 1 kris kris 1738 Feb 20 11:53 1550634573721-9
    [kris@hadoop101 flume3]$ cat 1550634573721-9
    2019-02-20 11:00:42,459 INFO [main]: metastore.hivemetastoressimpl (HiveMetaStoreFsImpl.java:deleteDir(53)) - Deleted the diretory hdfs://hadoop101:9000/user/hive/warehouse/student22
    2019-02-20 11:00:42,460 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogEnd(148)) - </PERFLOG method=runTasks start=1550631641861 end=1550631642460 duration=599 from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,461 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogEnd(148)) - </PERFLOG method=Driver.execute start=1550631641860 end=1550631642461 duration=601 from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,461 INFO [main]: ql.Driver (SessionState.java:printInfo(951)) - OK
    2019-02-20 11:00:42,461 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogBegin(121)) - <PERFLOG method=releaseLocks from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,461 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogEnd(148)) - </PERFLOG method=releaseLocks start=1550631642461 end=1550631642461 duration=0 from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,461 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogEnd(148)) - </PERFLOG method=Driver.run start=1550631641638 end=1550631642461 duration=823 from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,461 INFO [main]: CliDriver (SessionState.java:printInfo(951)) - Time taken: 0.824 seconds
    2019-02-20 11:00:42,461 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogBegin(121)) - <PERFLOG method=releaseLocks from=org.apache.hadoop.hive.ql.Driver>
    2019-02-20 11:00:42,462 INFO [main]: log.PerfLogger (PerfLogger.java:PerfLogEnd(148)) - </PERFLOG method=releaseLocks start=1550631642461 end=1550631642462 duration=1 from=org.apache.hadoop.hive.ql.Driver>

5. Single data source, multiple outlets (Sink group)

Single Source and Channel with multiple Sinks (load balancing)

Load balancing and failover in Flume

The goal is to improve the fault tolerance and stability of the whole system. A simple configuration is enough: first define a Sink group, which contains several Sinks, and then configure the Sinks in the group for either load balancing or failover.

Data flow (flume1 uses load_balance):

    port (netcat) ---> flume1 --- Sink1 (avro) --> flume2 --- Sink (logger, console)
                              --- Sink2 (avro) --> flume3 --- Sink (logger, console)

flume1 is configured to spread its output evenly across the sinks:

    [kris@hadoop101 group2]$ cat flume-netcat-flume.conf
    # Name the components on this agent
    a1.sources = r1
    a1.channels = c1
    a1.sinkgroups = g1
    a1.sinks = k1 k2

    # Describe/configure the source
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = localhost
    a1.sources.r1.port = 44444

    a1.sinkgroups.g1.processor.type = load_balance
    a1.sinkgroups.g1.processor.backoff = true
    a1.sinkgroups.g1.processor.selector = round_robin
    a1.sinkgroups.g1.processor.selector.maxTimeOut=10000

    # Describe the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop101
    a1.sinks.k1.port = 4141
    a1.sinks.k2.type = avro
    a1.sinks.k2.hostname = hadoop101
    a1.sinks.k2.port = 4142

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c1

    [kris@hadoop101 group2]$ cat flume-flume-console1.conf
    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # Describe/configure the source
    a2.sources.r1.type = avro
    a2.sources.r1.bind = hadoop101
    a2.sources.r1.port = 4141

    # Describe the sink
    a2.sinks.k1.type = logger

    # Describe the channel
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1

    [kris@hadoop101 group2]$ cat flume-flume-console2.conf
    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c2

    # Describe/configure the source
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop101
    a3.sources.r1.port = 4142

    # Describe the sink
    a3.sinks.k1.type = logger

    # Describe the channel
    a3.channels.c2.type = memory
    a3.channels.c2.capacity = 1000
    a3.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c2
    a3.sinks.k1.channel = c2

Start the agents, downstream first:

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a3 -f job/group2/flume-flume-console2.conf -Dflume.root.logger=INFO,console
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a2 -f job/group2/flume-flume-console1.conf -Dflume.root.logger=INFO,console
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/group2/flume-netcat-flume.conf

Send data to port 44444:

    [kris@hadoop101 group2]$ nc localhost 44444
    1
    OK
    1
    OK
    2
    OK
    3
    OK
    4

Console output of the downstream agents (the capture is truncated in places):

    ...oggerSink.java:95)] Event: { headers:{} body: 31 1 }
    2019-02-20 15:26:37,828 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 31 1 }
    2019-02-20 15:26:37,828 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 32 2 }
    2019-02-20 15:26:37,829 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 33 3 }
    2019-02-20 15:26:37,829 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 34 4 }
    2019-02-20 15:26:37,830 (SinkRunne...
    2019-02-20 15:27:06,706 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 61 a }
    2019-02-20 15:27:06,706 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 62 b }
    2019-02-20 15:27:06,707 ...

6. Multi-source aggregation

Data from multiple Sources is aggregated into a single Flume agent:

    group.log (exec) ---> flume1 --- Sink (avro; hadoop103:4141) --> flume3 --- Sink (logger, console)
    port (netcat)    ---> flume2 --- Sink (avro; hadoop103:4141) --> flume3 --- Sink (logger, console)

Distribute Flume

Sync Flume to the other hosts, then create a group3 folder under /opt/module/flume/job on hadoop101, hadoop102, and hadoop103:

    [kris@hadoop101 module]$ xsync flume
    [kris@hadoop101 job]$ mkdir group3
    [kris@hadoop102 job]$ mkdir group3
    [kris@hadoop103 job]$ mkdir group3

1. Create flume1-logger-flume.conf

Configure a Source that monitors the group.log file and a Sink that sends the data to the next-tier Flume agent.

Create and open the configuration file on hadoop102:

    [kris@hadoop102 group3]$ vim flume1-logger-flume.conf

Contents of flume1-logger-flume.conf:

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /opt/module/group.log
    a1.sources.r1.shell = /bin/bash -c

    # Describe the sink
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop103
    a1.sinks.k1.port = 4141

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

2. Create flume2-netcat-flume.conf

Configure a Source that monitors the data stream on port 44444 and a Sink that sends the data to the next-tier Flume agent.

Create and open the configuration file on hadoop101:

    [kris@hadoop101 group3]$ vim flume2-netcat-flume.conf

Contents of flume2-netcat-flume.conf:

    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1
    a2.channels = c1

    # Describe/configure the source
    a2.sources.r1.type = netcat
    a2.sources.r1.bind = hadoop101
    a2.sources.r1.port = 44444

    # Describe the sink
    a2.sinks.k1.type = avro
    a2.sinks.k1.hostname = hadoop103
    a2.sinks.k1.port = 4141

    # Use a channel which buffers events in memory
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1
    a2.sinks.k1.channel = c1

3. Create flume3-flume-logger.conf

Configure a source that receives the data streams sent by flume1 and flume2, merges them, and sinks them to the console.

Create and open the configuration file on hadoop103. Both upstream avro sinks point at hadoop103:4141, the same host and port, so a single avro source is enough.

    [kris@hadoop103 group3]$ vim flume3-flume-logger.conf

Contents of flume3-flume-logger.conf:

    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c1

    # Describe/configure the source
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop103
    a3.sources.r1.port = 4141

    # Describe the sink
    a3.sinks.k1.type = logger

    # Describe the channel
    a3.channels.c1.type = memory
    a3.channels.c1.capacity = 1000
    a3.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c1
    a3.sinks.k1.channel = c1

4. Run the configurations

Start the agents in this order: flume3-flume-logger.conf, flume2-netcat-flume.conf, flume1-logger-flume.conf.

    [kris@hadoop103 flume]$ bin/flume-ng agent -c conf/ -n a3 -f job/group3/flume3-flume-logger.conf -Dflume.root.logger=INFO,console
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a2 -f job/group3/flume2-netcat-flume.conf
    [kris@hadoop102 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/group3/flume1-logger-flume.conf

On hadoop102, write content to group.log in /opt/module:

    [kris@hadoop102 module]$ echo "Hello World" > group.log
    [kris@hadoop102 module]$ ll
    total 24
    drwxrwxr-x. 10 kris kris 4096 Feb 20 11:07 flume
    -rw-rw-r--. 1 kris kris 12 Feb 20 16:13 group.log

On hadoop101, send data to port 44444:

    [kris@hadoop101 flume]$ nc hadoop101 44444
    1
    OK
    2
    OK
    3
    OK
    4

Check the data on hadoop103:

    2019-02-20 16:13:20,748 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 48 65 6C 6C 6F 20 57 6F 72 6C 64 Hello World }
    2019-02-20 16:14:46,774 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 31 1 }
    2019-02-20 16:14:46,775 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:95)] Event: { headers:{} body: 32 2 }

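8. Exercise

Requirements:

1) flume-1 monitors the hive.log file and sends its data to flume-2; flume-2 appends the data to a local file and also forwards it to flume-3.

2) flume-4 monitors another local file that you create yourself, any.txt, and sends its data to flume-3.

3) flume-3 writes the aggregated data to HDFS.

Draw the architecture diagram first, then write the job configurations.

    hive.log (exec) ---> flume-1 --- Sink1 (avro; hadoop101:4141) --> flume-2 --- Sink1 (logger, local file)
                                                                              --- Sink2 (avro; hadoop101:4142) --> flume-3 --- Sink (HDFS)
    local any.txt (exec) ---> flume-4 --- Sink (avro; hadoop101:4142) --> flume-3 --- Sink (HDFS)

Start order: 3, 2, 1, 4.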

flume-1:

vim flume1-file-flume.conf

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = exec
    a1.sources.r1.command = tail -F /opt/module/hive/logs/hive.log
    a1.sources.r1.shell = /bin/bash -c

    # Describe the sink
    # an avro sink is a data sender
    a1.sinks.k1.type = avro
    a1.sinks.k1.hostname = hadoop101
    a1.sinks.k1.port = 4141

    # Describe the channel
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

flume2:

vim flume2-flume-dir.conf

    # Name the components on this agent
    a2.sources = r1
    a2.sinks = k1 k2
    a2.channels = c1 c2

    # Replicate the data flow to all channels
    a2.sources.r1.selector.type = replicating

    # Describe/configure the source
    a2.sources.r1.type = avro
    a2.sources.r1.bind = hadoop101
    a2.sources.r1.port = 4141

    # Describe the sink
    a2.sinks.k1.type = logger
    a2.sinks.k2.type = avro
    a2.sinks.k2.hostname = hadoop101
    a2.sinks.k2.port = 4142

    # Describe the channel
    a2.channels.c1.type = memory
    a2.channels.c1.capacity = 1000
    a2.channels.c1.transactionCapacity = 100
    a2.channels.c2.type = memory
    a2.channels.c2.capacity = 1000
    a2.channels.c2.transactionCapacity = 100

    # Bind the source and sink to the channel
    a2.sources.r1.channels = c1 c2
    a2.sinks.k1.channel = c1
    a2.sinks.k2.channel = c2
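The exercise asks flume-2 to append data to a local file, while the flume-2 configuration uses a logger sink for k1. If real files on disk are wanted, one possible variation is a file_roll sink, sketched below. The output directory is an assumption for this example and should exist before the agent starts.

```properties
# Variation on flume-2's k1 sink: write events to local files instead of the log
a2.sinks.k1.type = file_roll
# Assumed output directory, created beforehand
a2.sinks.k1.sink.directory = /opt/module/datas/flume2
# Roll to a new file every 30 seconds (0 would disable time-based rolling)
a2.sinks.k1.sink.rollInterval = 30
```

The rest of the flume-2 configuration (source, channels, k2) would stay unchanged.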

flume3:

vim flume3-flume-hdfs.conf

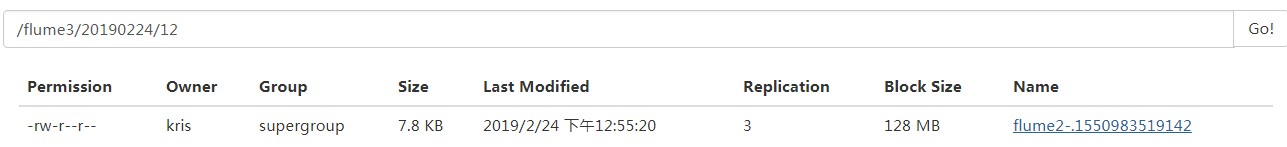

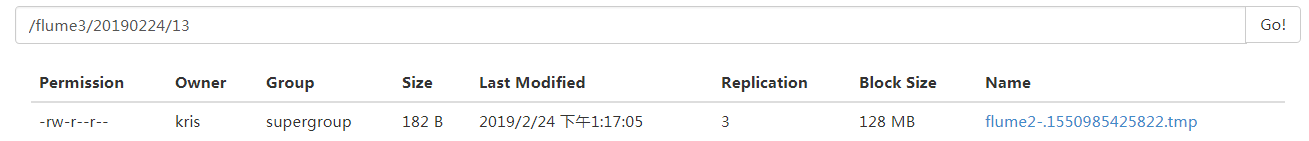

    # Name the components on this agent
    a3.sources = r1
    a3.sinks = k1
    a3.channels = c1

    # Describe/configure the source
    # an avro source is a data receiving service
    a3.sources.r1.type = avro
    a3.sources.r1.bind = hadoop101
    a3.sources.r1.port = 4142

    # Describe the sink
    a3.sinks.k1.type = hdfs
    a3.sinks.k1.hdfs.path = hdfs://hadoop101:9000/flume3/%Y%m%d/%H
    # prefix of uploaded files
    a3.sinks.k1.hdfs.filePrefix = flume3-
    # whether to roll directories by time
    a3.sinks.k1.hdfs.round = true
    # how many time units before a new directory is created
    a3.sinks.k1.hdfs.roundValue = 1
    # the time unit
    a3.sinks.k1.hdfs.roundUnit = hour
    # whether to use the local timestamp
    a3.sinks.k1.hdfs.useLocalTimeStamp = true
    # number of events to accumulate before flushing to HDFS
    a3.sinks.k1.hdfs.batchSize = 100
    # file type; compression is supported
    a3.sinks.k1.hdfs.fileType = DataStream
    # how often to roll to a new file, in seconds
    a3.sinks.k1.hdfs.rollInterval = 600
    # roll size of each file, about 128 MB
    a3.sinks.k1.hdfs.rollSize = 134217700
    # do not roll based on the number of events
    a3.sinks.k1.hdfs.rollCount = 0

    # Describe the channel
    a3.channels.c1.type = memory
    a3.channels.c1.capacity = 1000
    a3.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a3.sources.r1.channels = c1
    a3.sinks.k1.channel = c1

flume4:

vim flume4-file-flume.conf

    # Name the components on this agent
    a4.sources = r1
    a4.sinks = k1
    a4.channels = c1

    # Describe/configure the source
    a4.sources.r1.type = exec
    a4.sources.r1.command = tail -F /opt/module/datas/any.txt
    a4.sources.r1.shell = /bin/bash -c

    # Describe the sink
    # an avro sink is a data sender
    a4.sinks.k1.type = avro
    a4.sinks.k1.hostname = hadoop101
    a4.sinks.k1.port = 4142

    # Describe the channel
    a4.channels.c1.type = memory
    a4.channels.c1.capacity = 1000
    a4.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a4.sources.r1.channels = c1
    a4.sinks.k1.channel = c1

Start

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a3 -f job/group4/flume3-flume-hdfs.conf
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a2 -f job/group4/flume2-flume-dir.conf -Dflume.root.logger=INFO,console
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/group4/flume1-file-flume.conf
    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a4 -f job/group4/flume4-file-flume.conf

Data sources: hive.log through flume1, and the any.txt file through flume4. When any.txt changes, HDFS is updated in real time.

    [kris@hadoop101 datas]$ cat any.txt
    1
    2
    3
    4
    5
    《疑犯追踪》 悬疑,动作,科幻,剧情
    《Lie to me》 悬疑,警匪,动作,心理,剧情
    《战狼2》 战争,动作,灾难
    II Love You
    [kris@hadoop101 datas]$ pwd
    /opt/module/datas

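9. Custom Source

A Source is the component that receives data into the Flume Agent. It can handle log data of many types and formats, including avro, thrift, exec, jms, spooling directory, netcat, sequence generator, syslog, http, and legacy. The official source types are numerous, but sometimes they do not meet the needs of real development, and then a custom source has to be written.

The official documentation describes the interface for custom sources:

https://flume.apache.org/FlumeDeveloperGuide.html#source

According to it, a custom MySource extends the AbstractSource class and implements the Configurable and PollableSource interfaces.

Methods to implement:

getBackOffSleepIncrement() // not used for now

getMaxBackOffSleepInterval() // not used for now

configure(Context context) // initialize from the context (read the configuration file)

process() // fetch data, wrap it in an event, and write it to the channel; this method is called in a loop.

Use case: reading data from MySQL or from other file systems.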

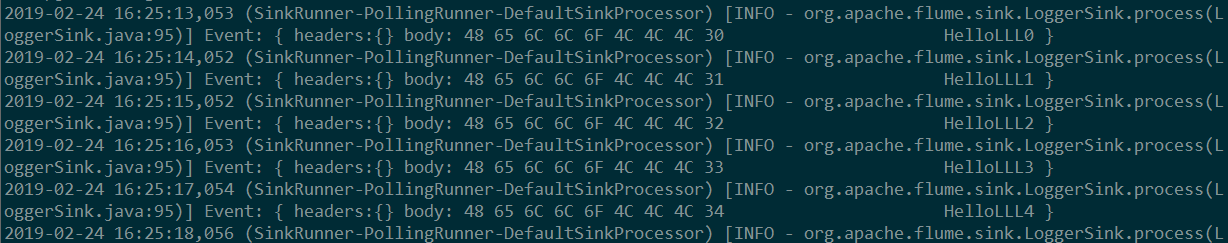

Requirement: use Flume to receive data, add a prefix to each record, and print it to the console. The prefix is read from the Flume configuration file.

    import org.apache.flume.Context;
    import org.apache.flume.EventDeliveryException;
    import org.apache.flume.PollableSource;
    import org.apache.flume.conf.Configurable;
    import org.apache.flume.event.SimpleEvent;
    import org.apache.flume.source.AbstractSource;

    import java.util.HashMap;
    import java.util.Map;

    public class MySource extends AbstractSource implements Configurable, PollableSource {

        private String prefix;
        private Long delay;

        /**
         * Data processing method, called by Flume in a loop.
         * @return status of the read
         * @throws EventDeliveryException on failure the transaction is rolled back
         */
        public Status process() throws EventDeliveryException {
            Status status = null; // Status is an enum: READY or BACKOFF
            // Headers are a HashMap of key-value string pairs
            Map<String, String> headerMap = new HashMap<String, String>();
            for (int i = 0; i < 5; i++) {
                try {
                    // An Event has an optional header and a byte-array body.
                    // A new event is created per iteration so that events
                    // already in the channel are not overwritten.
                    SimpleEvent event = new SimpleEvent();
                    event.setHeaders(headerMap);
                    event.setBody((prefix + "LLL" + i).getBytes());
                    // Write the event to the channel
                    getChannelProcessor().processEvent(event);
                    status = Status.READY;
                    Thread.sleep(delay);
                } catch (InterruptedException e) {
                    e.printStackTrace();
                    return Status.BACKOFF;
                }
            }
            return status;
        }

        public long getBackOffSleepIncrement() {
            return 0;
        }

        public long getMaxBackOffSleepInterval() {
            return 0;
        }

        /**
         * Configure the custom Source.
         * @param context values read from the agent configuration
         */
        public void configure(Context context) {
            prefix = context.getString("prefix", "Hello");
            delay = context.getLong("delay", 1000L);
        }
    }

Test

1) Package

Package the code and put the jar in Flume's lib directory (/opt/module/flume/lib).

2) Configuration file

    # Name the components on this agent
    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = com.atguigu.source.MySource
    a1.sources.r1.delay = 1000
    #a1.sources.r1.prefix = HelloWorld

    # Describe the sink
    a1.sinks.k1.type = logger

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

3) Start the agent

    [kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/mysource-flume-logger.conf -Dflume.root.logger=INFO,console

10. Custom Sink

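A Sink continuously polls the Channel for events and removes them in batches, writing each batch to a storage or indexing system or sending it on to another Flume Agent.

A Sink is fully transactional. Before removing a batch of data from the Channel, the Sink starts a transaction with the Channel. Once the batch has been written successfully to the storage system or to the next Flume Agent, the Sink commits the transaction through the Channel. After the commit, the Channel deletes those events from its internal buffer.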
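The take, commit, and rollback behavior described above can be modeled in a few lines of plain Java. This is only a sketch: `TxChannelSketch` and its methods are hypothetical names invented for illustration and are not Flume's Channel or Transaction API. It assumes a single open transaction at a time and an in-memory queue.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical in-memory model of take/commit/rollback; not Flume's API.
class TxChannelSketch {
    private final Deque<String> queue = new ArrayDeque<>();
    // Events taken inside the currently open transaction.
    private final List<String> taken = new ArrayList<>();

    void put(String event) {
        queue.addLast(event);
    }

    // Returns the next event, or null if the queue is empty.
    // The event is remembered until commit() or rollback().
    String take() {
        String event = queue.pollFirst();
        if (event != null) {
            taken.add(event);
        }
        return event;
    }

    // Delivery succeeded: the taken events are dropped for good.
    void commit() {
        taken.clear();
    }

    // Delivery failed: put the taken events back at the head, in original order.
    void rollback() {
        for (int i = taken.size() - 1; i >= 0; i--) {
            queue.addFirst(taken.get(i));
        }
        taken.clear();
    }

    int size() {
        return queue.size();
    }

    public static void main(String[] args) {
        TxChannelSketch channel = new TxChannelSketch();
        channel.put("a");
        channel.put("b");
        channel.take();
        channel.take();
        channel.rollback();                 // both events return to the queue
        System.out.println(channel.size()); // prints 2
        channel.take();
        channel.commit();                   // "a" is removed permanently
        System.out.println(channel.size()); // prints 1
    }
}
```

The point of the model is that an event only disappears from the buffer after a commit; a failed write leaves it available for the next attempt.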

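Sink destinations include hdfs, logger, avro, thrift, ipc, file, null, HBase, solr, and custom sinks. Flume ships with many sink types, but they do not always meet the needs of a real project; in that case we write a custom Sink tailored to the requirement.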

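The official documentation also describes the interface for custom sinks:

https://flume.apache.org/FlumeDeveloperGuide.html#sink

According to the official guide, a custom MySink must extend the AbstractSink class and implement the Configurable interface.

Methods to implement:

configure(Context context) // initialization: reads settings from the configuration file

process() // takes data (events) from the Channel; Flume calls this method in a loop

Use case: read data from the Channel and write it to MySQL or another storage system.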
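To make "called in a loop" more concrete, here is a simplified model of a polling loop that calls process() repeatedly and waits longer after consecutive BACKOFF results. It is a sketch under assumptions, not Flume's runner: `PollingLoopSketch`, `Processor`, `backoffMillis`, and `run` are hypothetical names, the delay is assumed to grow linearly up to a cap, and the loop records the chosen delays instead of actually sleeping.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified polling loop; not Flume's actual runner classes.
class PollingLoopSketch {
    enum Status { READY, BACKOFF }

    interface Processor {
        Status process();
    }

    // Assumed policy: delay grows linearly with consecutive BACKOFFs, up to a cap.
    static long backoffMillis(int consecutiveBackoffs, long incrementMillis, long maxMillis) {
        return Math.min(consecutiveBackoffs * incrementMillis, maxMillis);
    }

    // Calls process() a fixed number of times and records the delay chosen after each call.
    static List<Long> run(Processor processor, int iterations, long incrementMillis, long maxMillis) {
        List<Long> delays = new ArrayList<>();
        int consecutive = 0;
        for (int i = 0; i < iterations; i++) {
            if (processor.process() == Status.BACKOFF) {
                consecutive++;
                delays.add(backoffMillis(consecutive, incrementMillis, maxMillis));
            } else {
                consecutive = 0;   // a READY result resets the backoff
                delays.add(0L);
            }
        }
        return delays;
    }

    public static void main(String[] args) {
        // A processor that reports BACKOFF three times, then READY.
        int[] calls = {0};
        Processor processor = () -> calls[0]++ < 3 ? Status.BACKOFF : Status.READY;
        System.out.println(run(processor, 5, 1000L, 2000L)); // prints [1000, 2000, 2000, 0, 0]
    }
}
```

Returning BACKOFF when there is nothing to do lets the caller slow down instead of spinning, which is why process() should return promptly rather than block.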

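Requirement: use Flume to receive data, add a prefix and a suffix to each record on the Sink side, and print it to the console. The prefix and suffix can be set in the Flume job configuration file.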

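package com.atguigu.source;

import org.apache.flume.Channel;
import org.apache.flume.Context;
import org.apache.flume.Event;
import org.apache.flume.EventDeliveryException;
import org.apache.flume.Transaction;
import org.apache.flume.conf.Configurable;
import org.apache.flume.sink.AbstractSink;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

import java.nio.charset.StandardCharsets;

public class MySink extends AbstractSink implements Configurable {

    // Logger named after this class.
    private static final Logger LOGGER = LoggerFactory.getLogger(MySink.class);

    private String prefix;
    private String suffix;

    /**
     * Takes one event from the channel and logs it with prefix and suffix.
     *
     * @return READY if an event was processed, BACKOFF if the channel was empty or an error occurred
     * @throws EventDeliveryException if the event cannot be delivered
     */
    @Override
    public Status process() throws EventDeliveryException {
        Status status;
        Channel channel = getChannel();                      // channel bound to this sink
        Transaction transaction = channel.getTransaction();  // obtain a transaction
        transaction.begin();                                 // start the transaction
        try {
            Event event = channel.take();
            if (event != null) {
                LOGGER.info(prefix + new String(event.getBody(), StandardCharsets.UTF_8) + suffix);
                status = Status.READY;
            } else {
                // Nothing to read: return instead of sleeping inside the open transaction.
                status = Status.BACKOFF;
            }
            transaction.commit();                            // commit the transaction
        } catch (Exception e) {
            LOGGER.error("Failed to process event", e);
            transaction.rollback();                          // roll back on failure
            status = Status.BACKOFF;
        } finally {
            transaction.close();                             // always close the transaction
        }
        return status;
    }

    /**
     * Reads the settings of the sink from the agent configuration.
     *
     * @param context the configuration context
     */
    @Override
    public void configure(Context context) {
        prefix = context.getString("prefix", "Hello");
        suffix = context.getString("suffix", "kris");
    }
}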

Test

1) Package

Package the code into a jar and place it in the lib directory of the Flume installation (/opt/module/flume).

2) Configuration file

# Name the components on this agent
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Describe/configure the source
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port = 44444

# Describe the sink
a1.sinks.k1.type = com.atguigu.source.MySink
a1.sinks.k1.prefix = kris:
a1.sinks.k1.suffix = :kris

# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1

[kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/mysource-flume-netcat.conf -Dflume.root.logger=INFO,console

[kris@hadoop101 job]$ nc localhost 44444
1
OK
2

2019-02-24 16:27:25,078 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - com.atguigu.source.MySink.process(MySink.java:32)] kris:1:kris
2019-02-24 16:27:25,777 (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - com.atguigu.source.MySink.process(MySink.java:32)] kris:2:kris

11. Monitoring Flume with Ganglia

Installing and deploying Ganglia

Install Ganglia, the httpd service, PHP, and the other dependencies:

sudo rpm -Uvh http://dl.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm

sudo yum -y install httpd php rrdtool perl-rrdtool rrdtool-devel apr-devel ganglia-gmetad ganglia-web ganglia-gmond

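Ganglia consists of three parts: gmond, gmetad, and gweb.

gmond (Ganglia Monitoring Daemon) is a lightweight service installed on every node whose metrics should be collected. With gmond you can easily gather many system metrics, such as CPU, memory, disk, network, and active-process data.

gmetad (Ganglia Meta Daemon) is the service that aggregates all the information and stores it on disk in RRD format.

gweb (Ganglia Web) is Ganglia's visualization tool: a PHP front end that displays the data stored by gmetad in a browser. The web interface shows the metrics collected from the running cluster as charts.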

Configuration

1) Edit the configuration file /etc/httpd/conf.d/ganglia.conf

[kris@hadoop101 flume]$ sudo vim /etc/httpd/conf.d/ganglia.conf

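2) Change the access rules so that the file reads as follows (Deny from all commented out, Allow from all added):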

# Ganglia monitoring system php web frontend

Alias /ganglia /usr/share/ganglia

<Location /ganglia>

Order deny,allow

#Deny from all

Allow from all

# Allow from 127.0.0.1

# Allow from ::1

# Allow from .example.com

</Location>

3) Edit the configuration file /etc/ganglia/gmetad.conf

[kris@hadoop101 flume]$ sudo vim /etc/ganglia/gmetad.conf

Change it to:

data_source "hadoop101" 192.168.1.101

4) Edit the configuration file /etc/ganglia/gmond.conf

[kris@hadoop101 flume]$ sudo vim /etc/ganglia/gmond.conf

Change it to:

cluster {

name = "hadoop101"

owner = "unspecified"

latlong = "unspecified"

url = "unspecified"

}

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

# mcast_join = 239.2.11.71

host = 192.168.1.101

port = 8649

ttl = 1

}

udp_recv_channel {

# mcast_join = 239.2.11.71

port = 8649

bind = 192.168.1.101

retry_bind = true

# Size of the UDP buffer. If you are handling lots of metrics you really

# should bump it up to e.g. 10MB or even higher.

# buffer = 10485760

}

5) Edit the configuration file /etc/selinux/config

[kris@hadoop101 flume]$ sudo vim /etc/selinux/config

Change it to:

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of these two values:

# targeted - Targeted processes are protected,

# mls - Multi Level Security protection.

SELINUXTYPE=targeted

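Note: disabling SELinux through the configuration file only takes effect after a reboot. If you do not want to reboot now, you can relax it temporarily: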

[kris@hadoop101 flume]$ sudo setenforce 0

1. Startup

1) Start Ganglia

[kris@hadoop101 flume]$ sudo service httpd start

[kris@hadoop101 flume]$ sudo service gmetad start

[kris@hadoop101 flume]$ sudo service gmond start

2) Open the Ganglia page in a browser

http://192.168.1.101/ganglia

Note: if a permission-denied error still appears after the steps above, change the permissions of the /var/lib/ganglia directory:

[kris@hadoop101 flume]$ sudo chmod -R 777 /var/lib/ganglia

2. Testing the monitoring with Flume

1) Edit flume-env.sh in the /opt/module/flume/conf directory:

JAVA_OPTS="-Dflume.monitoring.type=ganglia

-Dflume.monitoring.hosts=192.168.1.101:8649

-Xms100m

-Xmx200m"

2) Start the Flume job

[kris@hadoop101 flume]$ bin/flume-ng agent \
--conf conf/ \
--name a1 \
--conf-file job/flume-netcat-logger.conf \
-Dflume.root.logger=INFO,console \
-Dflume.monitoring.type=ganglia \
-Dflume.monitoring.hosts=192.168.1.101:8649

The short form is:

[kris@hadoop101 flume]$ bin/flume-ng agent -c conf/ -n a1 -f job/flume-netcat-logger.conf -Dflume.root.logger=INFO,console -Dflume.monitoring.type=ganglia -Dflume.monitoring.hosts=192.168.1.101:8649

3) Send data and watch the Ganglia charts

[kris@hadoop101 flume]$ nc localhost 44444

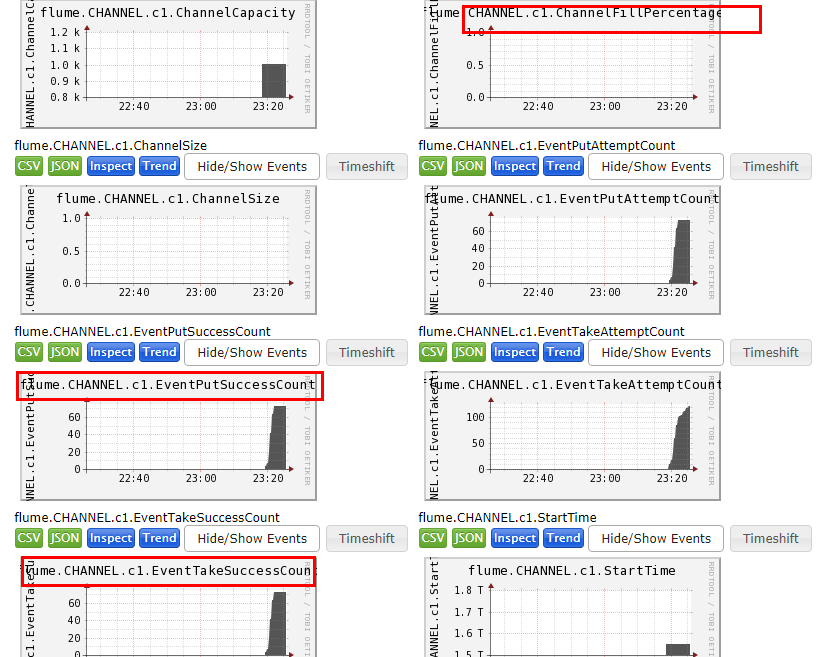

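flume.CHANNEL.c1.EventPutSuccessCount: the unit of data that Flume transfers is called an event; this counter is the number of events successfully put into the channel.

flume.CHANNEL.c1.EventTakeSuccessCount: the number of events successfully taken from the channel. Compare it with the source log data to check whether any data was lost.

flume.CHANNEL.c1.ChannelFillPercentage: how full the channel is, as a percentage of its capacity. As long as the channel is not full, no data is dropped; a value of 100 means the channel is completely full.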
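The way these counters are read together can be sketched as simple arithmetic. The class and method names below (`ChannelMetricsSketch`, `backlog`, `fillPercentage`) are hypothetical, the sample numbers are made up, and the fill level is assumed to be the current channel size expressed as a percentage of capacity; none of this calls Flume or Ganglia.

```java
// Hypothetical helper for interpreting channel counters; sample values are invented.
class ChannelMetricsSketch {

    // Events put into the channel that no sink has taken yet.
    static long backlog(long putSuccessCount, long takeSuccessCount) {
        return putSuccessCount - takeSuccessCount;
    }

    // Fill level as a percentage of the channel capacity (assumed definition).
    static double fillPercentage(long channelSize, long capacity) {
        if (capacity <= 0) {
            throw new IllegalArgumentException("capacity must be positive");
        }
        return 100.0 * channelSize / capacity;
    }

    public static void main(String[] args) {
        long put = 120L;       // e.g. a reading of EventPutSuccessCount
        long take = 100L;      // e.g. a reading of EventTakeSuccessCount
        long capacity = 1000L; // matches a1.channels.c1.capacity in the examples

        System.out.println(backlog(put, take));            // prints 20
        System.out.println(fillPercentage(250L, capacity)); // prints 25.0
    }
}
```

A backlog that keeps growing while the fill percentage approaches its maximum suggests the sink cannot keep up with the source.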