This material comes from the web and I am only reposting it. I did not keep the original link, so search for it yourself.

----Create the project

    # scrapy startproject MyPicSpider

    # cd MyPicSpider

-----Create your own spider inside the project's spiders folder

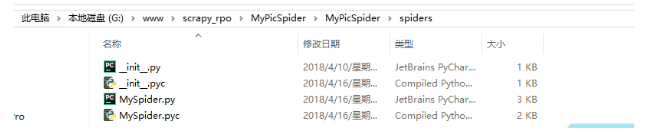

MySpider.py has now been created.

-----Configure settings.py

## Set USER_AGENT

### Set a single user agent

    USER_AGENT = "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)"

###Set several user agents

    USER_AGENTS = [
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",
        "Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
        "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
        "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
        "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
        "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
        "Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",
        "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
    ]

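###With several user agents defined, pick one at random for each request by modifying the middleware

middlewares.py ###Append this class at the end of the file; it needs the random module imported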

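    import random


    class RandomUserAgent(object):
        """Pick a random user agent from the predefined list."""

        def __init__(self, agents):
            self.agents = agents

        @classmethod
        def from_crawler(cls, crawler):
            # Read the USER_AGENTS list defined in settings.py
            return cls(crawler.settings.getlist('USER_AGENTS'))

        def process_request(self, request, spider):
            # Only fills in the header when the request does not already have one
            request.headers.setdefault('User-Agent', random.choice(self.agents))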
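The effect of `setdefault` in `process_request` can be checked without running a crawl. The sketch below is a stand-in, not Scrapy itself: `FakeRequest` and its plain-dict `headers` are hypothetical substitutes for a Scrapy request, and the agent strings are placeholders. It suggests that a random agent is filled in when the header is missing, while a header already set on the request is left alone.

```python
import random


# Hypothetical stand-in for a Scrapy request: only the headers mapping matters here.
class FakeRequest:
    def __init__(self, headers=None):
        self.headers = dict(headers or {})


class RandomUserAgent:
    """Same selection logic as the middleware above, minus the Scrapy wiring."""

    def __init__(self, agents):
        self.agents = agents

    def process_request(self, request, spider=None):
        # setdefault writes the header only when it is not already present
        request.headers.setdefault('User-Agent', random.choice(self.agents))


AGENTS = ['agent-a', 'agent-b', 'agent-c']  # placeholder values for illustration
mw = RandomUserAgent(AGENTS)

plain = FakeRequest()
mw.process_request(plain)

preset = FakeRequest({'User-Agent': 'my-own-agent'})
mw.process_request(preset)

print(plain.headers['User-Agent'] in AGENTS)  # True
print(preset.headers['User-Agent'])           # my-own-agent
```

If every request should get a fresh agent even when one is already set, plain assignment instead of `setdefault` would be the thing to try.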

settings.py

    # Replace myproject with the name of your own project
    DOWNLOADER_MIDDLEWARES = {
        'myproject.middlewares.RandomUserAgent': 400,
    }

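####Restrict crawling to what robots.txt allows

    ROBOTSTXT_OBEY = True

With True, Scrapy only fetches URLs that the site's robots.txt permits. In practice it is usually set to False.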

####Disable cookies

    COOKIES_ENABLED = False

####Enable ITEM_PIPELINES

-----To use the default ImagesPipeline as it is:

    ITEM_PIPELINES = {'scrapy.pipelines.images.ImagesPipeline': 1}

-----To use your own subclass (replace myproject with the name of your own project):

    ITEM_PIPELINES = {'myproject.pipelines.MyImagesPipeline': 1}

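####Set the download path

    IMAGES_STORE = r'G:\www\scrapy_rpo\pic'

During download, a full folder is created inside this directory and all images are stored there. This becomes awkward when the images belong in many separate directories, which is a topic for next time.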
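To find a particular image inside full, it helps to know how the file name is derived. As far as I know, the default ImagesPipeline names each file after the SHA-1 hash of its URL and saves it as a JPEG. The sketch below reproduces that naming with the standard library only; the function name `default_image_path` and the example URL are made up for illustration.

```python
import hashlib


def default_image_path(url):
    """Mimic the assumed default naming: full/<sha1 of the URL>.jpg."""
    image_guid = hashlib.sha1(url.encode('utf-8')).hexdigest()
    return 'full/{}.jpg'.format(image_guid)


# Example URL, not taken from the original notes
path = default_image_path('http://example.com/pic/001.jpg')
print(path)
```

Because the name depends only on the URL, the same image URL always maps to the same file, and nothing in the name says which page or gallery it came from.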

####Filter images by width and height; anything smaller than these values is not collected

    IMAGES_MIN_HEIGHT = 110
    IMAGES_MIN_WIDTH = 110
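The rule behind these two settings can be written out as a small check. This is only a sketch of the comparison, assuming an image is skipped when either dimension falls below its minimum; `is_large_enough` is a hypothetical helper, not part of Scrapy.

```python
IMAGES_MIN_HEIGHT = 110
IMAGES_MIN_WIDTH = 110


def is_large_enough(width, height,
                    min_width=IMAGES_MIN_WIDTH, min_height=IMAGES_MIN_HEIGHT):
    """Return True when the image meets both minimums (hypothetical helper)."""
    return width >= min_width and height >= min_height


print(is_large_enough(800, 600))  # True: both dimensions are above the minimum
print(is_large_enough(100, 600))  # False: too narrow
print(is_large_enough(110, 110))  # True: exactly at the minimum still passes
```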

####Generate thumbnails

    IMAGES_THUMBS = {
        'small': (50, 50),
        'big': (270, 270),
    }
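Each key in IMAGES_THUMBS gets its own output folder. The sketch below assumes the default layout is thumbs/<size name>/<sha1 of the URL>.jpg, which is my understanding of the stock pipeline; `thumb_paths` and the example URL are hypothetical.

```python
import hashlib

IMAGES_THUMBS = {'small': (50, 50), 'big': (270, 270)}


def thumb_paths(url, thumbs=IMAGES_THUMBS):
    """Map each thumbnail size name to its assumed storage path."""
    guid = hashlib.sha1(url.encode('utf-8')).hexdigest()
    return {name: 'thumbs/{}/{}.jpg'.format(name, guid) for name in thumbs}


# Example URL, not taken from the original notes
paths = thumb_paths('http://example.com/pic/001.jpg')
for name, thumb in sorted(paths.items()):
    print(name, thumb)
```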

####Modify pipelines.py

---------With the default pipeline, nothing needs to be written

--------Override the class when you need extra behaviour; this is up to you

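    import scrapy
    from scrapy.pipelines.images import ImagesPipeline
    from scrapy.exceptions import DropItem


    class MyImagesPipeline(ImagesPipeline):

        def get_media_requests(self, item, info):
            # header_referer is optional: items.py below does not declare it,
            # so the Referer header is sent only when the item carries a value.
            referer = item.get('header_referer')
            headers = {'Referer': referer} if referer else None
            # One download request per image URL
            for image_url in item['image_urls']:
                yield scrapy.Request(image_url, headers=headers)

        def item_completed(self, results, item, info):
            # results is a list of (success, info) tuples; keep the successful paths
            image_paths = [x['path'] for ok, x in results if ok]
            if not image_paths:
                raise DropItem("Item contains no images")
            item['image_paths'] = image_paths
            return item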
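The list comprehension in item_completed is easier to follow with a concrete results value in hand. The sample below is invented for illustration: real entries are (success, info) tuples where info is a dict with keys such as url, path and checksum on success, and a failure object otherwise (a plain Exception stands in for it here).

```python
# Invented sample of what item_completed receives for three image URLs
results = [
    (True, {'url': 'http://example.com/a.jpg', 'path': 'full/aaa.jpg', 'checksum': 'abc'}),
    (False, Exception('download failed')),  # stand-in for the failure object
    (True, {'url': 'http://example.com/b.jpg', 'path': 'full/bbb.jpg', 'checksum': 'def'}),
]

# Same expression as in the pipeline: keep the path of every successful download
image_paths = [x['path'] for ok, x in results if ok]
print(image_paths)  # ['full/aaa.jpg', 'full/bbb.jpg']
```

The `if ok` filter matters because the second element of a failed entry is not a dict, so indexing it with 'path' would raise.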

####Modify items.py to define the fields

    import scrapy


    class PicspiderItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        tag = scrapy.Field()
        image_urls = scrapy.Field()   # URLs of the images to download
        images = scrapy.Field()       # download results filled in by the pipeline
        image_paths = scrapy.Field()  # set in item_completed

####MySpider.py: extract the image URLs and pass them on in the item

    def parse_item(self, response):
        print(response.url)
        item = PicspiderItem()
        tag = response.xpath('//h1[@class="articleV4Tit"]/text()').extract()
        #print tag[0]
        #obj = BeautifulSoup(response, 'html.parser')
        #li_list = obj.find('ul',{'class':'articleV4Page l'}).find_all('li')
        #li_list = response.xpath('//ul[@class="articleV4Page l"]/li').extract()
        #print len(li_list)
        # Take the src of the image inside the first link of each paragraph
        srcs = response.xpath('//*[@id="picBody"]/p/a[1]/img/@src').extract()
        item['image_urls'] = srcs
        return item
        #item['tag'] = tag[0]
        #items = []
        #items.append(item)
        #return items
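To see what the picBody XPath selects, the same path can be tried on a small hand-written page. This sketch uses the standard library's ElementTree as a stand-in for Scrapy's selector, so it only works on well-formed markup and the attribute is read with `.get('src')` rather than `/@src`. The sample HTML and its URLs are invented.

```python
import xml.etree.ElementTree as ET

# Invented, well-formed sample page with two links inside one paragraph
SAMPLE = """
<html><body>
  <div id="picBody">
    <p>
      <a href="#"><img src="http://example.com/a.jpg"/></a>
      <a href="#"><img src="http://example.com/b.jpg"/></a>
    </p>
  </div>
</body></html>
"""

root = ET.fromstring(SAMPLE.strip())

# a[1] keeps only the first link in each paragraph, as in the spider's XPath
imgs = root.findall(".//*[@id='picBody']/p/a[1]/img")
srcs = [img.get('src') for img in imgs]
print(srcs)  # ['http://example.com/a.jpg']
```

Only the first link's image is picked up, so a page with several images per paragraph would need a broader path than `a[1]`.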

####Then just run it