本节课分成二部分讲解:

一、Spark Streaming on Polling from Flume实战

二、Spark Streaming on Polling from Flume源码

第一部分:

推模式(Flume push SparkStreaming) VS 拉模式(SparkStreaming poll Flume)

采用推模式:推模式的理解就是Flume作为缓存,存有数据。监听对应端口,如果服务可以链接,就将数据push过去。(简单,耦合要低),缺点是SparkStreaming 程序没有启动的话,Flume端会报错,同时会导致Spark Streaming 程序来不及消费的情况。

采用拉模式:拉模式就是自己定义一个sink,SparkStreaming自己去channel里面取数据,根据自身条件去获取数据,稳定性好。

Flume poll 实战:

1.Flume poll 配置

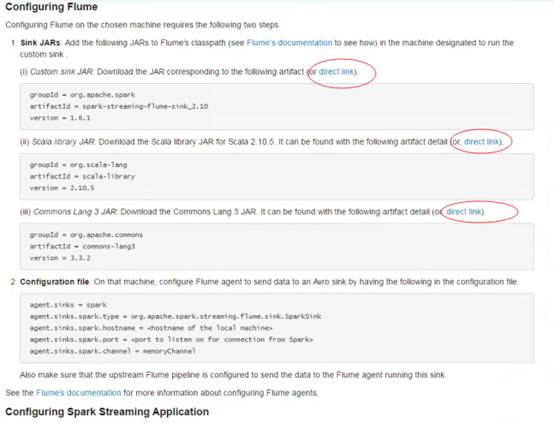

进入http://spark.apache.org/docs/latest/streaming-flume-integration.html官网,下载

spark-streaming-flume-sink_2.10-1.6.0.jar、scala-library-2.10.5.jar、commons-lang3-3.3.2.jar三个包:

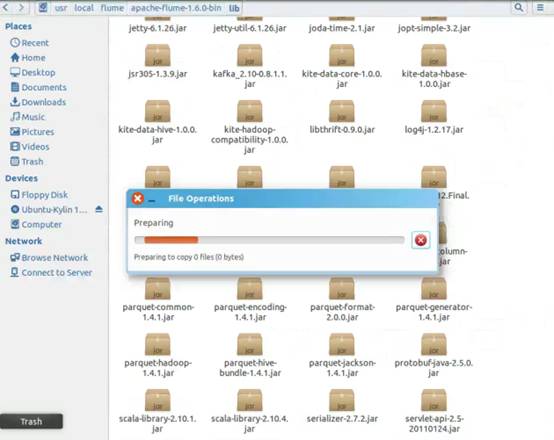

将下载后的三个jar包放入Flume安装lib目录:

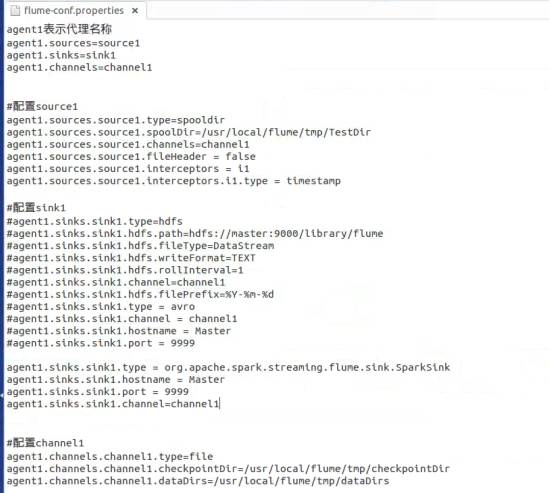

配置Flume conf环境参数:

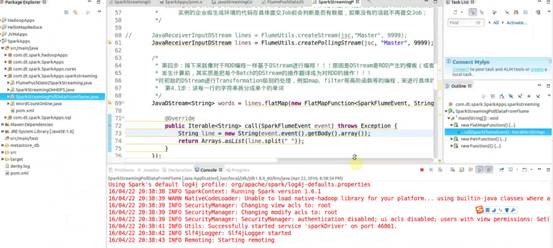

编写业务代码:

public class SparkStreamingPollDataFromFlume {

public static void main(String[] args) {

/*

* 第一步:配置SparkConf:

* 1,至少2条线程:因为Spark Streaming应用程序在运行的时候,至少有一条

* 线程用于不断的循环接收数据,并且至少有一条线程用于处理接受的数据(否则的话无法

* 有线程用于处理数据,随着时间的推移,内存和磁盘都会不堪重负);

* 2,对于集群而言,每个Executor一般肯定不止一个Thread,那对于处理Spark Streaming的

* 应用程序而言,每个Executor一般分配多少Core比较合适?根据我们过去的经验,5个左右的

* Core是最佳的(一个段子分配为奇数个Core表现最佳,例如3个、5个、7个Core等);

*/

SparkConf conf = new SparkConf().setAppName("SparkStreamingPollDataFromFlume").setMaster("local[2]");

/*

* 第二步:创建SparkStreamingContext:

* 1,这个是SparkStreaming应用程序所有功能的起始点和程序调度的核心

* SparkStreamingContext的构建可以基于SparkConf参数,也可基于持久化的SparkStreamingContext的内容

* 来恢复过来(典型的场景是Driver崩溃后重新启动,由于Spark Streaming具有连续7*24小时不间断运行的特征,

* 所有需要在Driver重新启动后继续上衣系的状态,此时的状态恢复需要基于曾经的Checkpoint);

* 2,在一个Spark Streaming应用程序中可以创建若干个SparkStreamingContext对象,使用下一个SparkStreamingContext

* 之前需要把前面正在运行的SparkStreamingContext对象关闭掉,由此,我们获得一个重大的启发SparkStreaming框架也只是

* Spark Core上的一个应用程序而已,只不过Spark Streaming框架箱运行的话需要Spark工程师写业务逻辑处理代码;

*/

JavaStreamingContext jsc = new JavaStreamingContext(conf, Durations.seconds(30));

/*

* 第三步:创建Spark Streaming输入数据来源input Stream:

* 1,数据输入来源可以基于File、HDFS、Flume、Kafka、Socket等

* 2, 在这里我们指定数据来源于网络Socket端口,Spark Streaming连接上该端口并在运行的时候一直监听该端口

* 的数据(当然该端口服务首先必须存在),并且在后续会根据业务需要不断的有数据产生(当然对于Spark Streaming

* 应用程序的运行而言,有无数据其处理流程都是一样的);

* 3,如果经常在每间隔5秒钟没有数据的话不断的启动空的Job其实是会造成调度资源的浪费,因为并没有数据需要发生计算,所以

* 实例的企业级生成环境的代码在具体提交Job前会判断是否有数据,如果没有的话就不再提交Job;

*/

JavaReceiverInputDStream lines = FlumeUtils.createPollingStream(jsc, "Master", 9999);

/*

* 第四步:接下来就像对于RDD编程一样基于DStream进行编程!!!原因是DStream是RDD产生的模板(或者说类),在Spark Streaming具体

* 发生计算前,其实质是把每个Batch的DStream的操作翻译成为对RDD的操作!!!

*对初始的DStream进行Transformation级别的处理,例如map、filter等高阶函数等的编程,来进行具体的数据计算

* 第4.1步:讲每一行的字符串拆分成单个的单词

*/

JavaDStream<String> words = lines.flatMap(new FlatMapFunction<SparkFlumeEvent, String>() { //如果是Scala,由于SAM转换,所以可以写成val words = lines.flatMap { line => line.split(" ")}

@Override

public Iterable<String> call(SparkFlumeEvent event) throws Exception {

String line = new String(event.event().getBody().array());

return Arrays.asList(line.split(" "));

}

});

/*

* 第四步:对初始的DStream进行Transformation级别的处理,例如map、filter等高阶函数等的编程,来进行具体的数据计算

* 第4.2步:在单词拆分的基础上对每个单词实例计数为1,也就是word => (word, 1)

*/

JavaPairDStream<String, Integer> pairs = words.mapToPair(new PairFunction<String, String, Integer>() {

@Override

public Tuple2<String, Integer> call(String word) throws Exception {

return new Tuple2<String, Integer>(word, 1);

}

});

/*

* 第四步:对初始的DStream进行Transformation级别的处理,例如map、filter等高阶函数等的编程,来进行具体的数据计算

* 第4.3步:在每个单词实例计数为1基础之上统计每个单词在文件中出现的总次数

*/

JavaPairDStream<String, Integer> wordsCount = pairs.reduceByKey(new Function2<Integer, Integer, Integer>() { //对相同的Key,进行Value的累计(包括Local和Reducer级别同时Reduce)

@Override

public Integer call(Integer v1, Integer v2) throws Exception {

return v1 + v2;

}

});

/*

* 此处的print并不会直接出发Job的执行,因为现在的一切都是在Spark Streaming框架的控制之下的,对于Spark Streaming

* 而言具体是否触发真正的Job运行是基于设置的Duration时间间隔的

* 诸位一定要注意的是Spark Streaming应用程序要想执行具体的Job,对Dtream就必须有output Stream操作,

* output Stream有很多类型的函数触发,类print、saveAsTextFile、saveAsHadoopFiles等,最为重要的一个

* 方法是foraeachRDD,因为Spark Streaming处理的结果一般都会放在Redis、DB、DashBoard等上面,foreachRDD

* 主要就是用用来完成这些功能的,而且可以随意的自定义具体数据到底放在哪里!!!

*

*/

wordsCount.print();

/*

* Spark Streaming执行引擎也就是Driver开始运行,Driver启动的时候是位于一条新的线程中的,当然其内部有消息循环体,用于

* 接受应用程序本身或者Executor中的消息;

*/

jsc.start();

jsc.awaitTermination();

jsc.close();

}

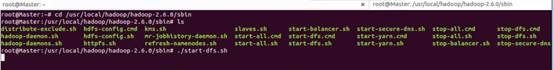

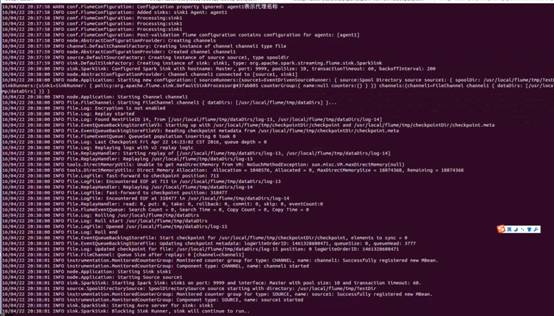

启动HDFS集群:

启动运行Flume:

启动eclipse下的应用程序:

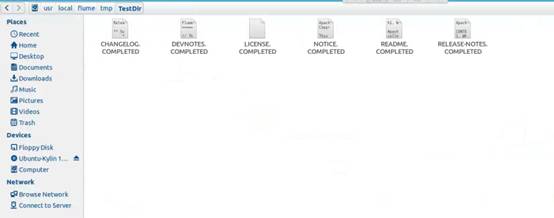

copy测试文件hellospark.txt到Flume flume-conf.properties配置文件中指定的/usr/local/flume/tmp/TestDir目录下:

隔30秒后可以在eclipse程序控制台中看到上传的文件单词统计结果。

第二部分:源码分析

1、创建createPollingStream (FlumeUtils.scala )

注意:默认的存储方式是MEMORY_AND_DISK_SER_2

/**

* Creates an input stream that is to be used with the Spark Sink deployed on a Flume agent.

* This stream will poll the sink for data and will pull events as they are available.

* This stream will use a batch size of 1000 events and run 5 threads to pull data.

* @param hostname Address of the host on which the Spark Sink is running

* @param port Port of the host at which the Spark Sink is listening

* @param storageLevel Storage level to use for storing the received objects

*/

def createPollingStream(

ssc: StreamingContext,

hostname: String,

port: Int,

storageLevel: StorageLevel = StorageLevel.MEMORY_AND_DISK_SER_2

): ReceiverInputDStream[SparkFlumeEvent] = {

createPollingStream(ssc, Seq(new InetSocketAddress(hostname, port)), storageLevel)

}

2、参数配置:默认的全局参数,private 级别配置无法修改

private val DEFAULT_POLLING_PARALLELISM = 5

private val DEFAULT_POLLING_BATCH_SIZE = 1000

/**

* Creates an input stream that is to be used with the Spark Sink deployed on a Flume agent.

* This stream will poll the sink for data and will pull events as they are available.

* This stream will use a batch size of 1000 events and run 5 threads to pull data.

* @param addresses List of InetSocketAddresses representing the hosts to connect to.

* @param storageLevel Storage level to use for storing the received objects

*/

def createPollingStream(

ssc: StreamingContext,

addresses: Seq[InetSocketAddress],

storageLevel: StorageLevel

): ReceiverInputDStream[SparkFlumeEvent] = {

createPollingStream(ssc, addresses, storageLevel,

DEFAULT_POLLING_BATCH_SIZE, DEFAULT_POLLING_PARALLELISM)

}

3、创建FlumePollingInputDstream对象

/**

* Creates an input stream that is to be used with the Spark Sink deployed on a Flume agent.

* This stream will poll the sink for data and will pull events as they are available.

* @param addresses List of InetSocketAddresses representing the hosts to connect to.

* @param maxBatchSize Maximum number of events to be pulled from the Spark sink in a

* single RPC call

* @param parallelism Number of concurrent requests this stream should send to the sink. Note

* that having a higher number of requests concurrently being pulled will

* result in this stream using more threads

* @param storageLevel Storage level to use for storing the received objects

*/

def createPollingStream(

ssc: StreamingContext,

addresses: Seq[InetSocketAddress],

storageLevel: StorageLevel,

maxBatchSize: Int,

parallelism: Int

): ReceiverInputDStream[SparkFlumeEvent] = {

new FlumePollingInputDStream[SparkFlumeEvent](ssc, addresses, maxBatchSize,

parallelism, storageLevel)

}

4、继承自ReceiverInputDstream并覆写getReciver方法,调用FlumePollingReciver接口

private[streaming] class FlumePollingInputDStream[T: ClassTag](

_ssc: StreamingContext,

val addresses: Seq[InetSocketAddress],

val maxBatchSize: Int,

val parallelism: Int,

storageLevel: StorageLevel

) extends ReceiverInputDStream[SparkFlumeEvent](_ssc) {

override def getReceiver(): Receiver[SparkFlumeEvent] = {

new FlumePollingReceiver(addresses, maxBatchSize, parallelism, storageLevel)

}

}

5、ReceiverInputDstream 构建了一个线程池,设置为后台线程;并使用lazy和工厂方法创建线程和NioClientSocket(NioClientSocket底层使用NettyServer的方式)

lazy val channelFactoryExecutor =

Executors.newCachedThreadPool(new ThreadFactoryBuilder().setDaemon(true).

setNameFormat("Flume Receiver Channel Thread - %d").build())

lazy val channelFactory =

new NioClientSocketChannelFactory(channelFactoryExecutor, channelFactoryExecutor)

6、receiverExecutor 内部也是线程池;connections是指链接分布式Flume集群的FlumeConnection实体句柄的个数,线程拿到实体句柄访问数据。

lazy val receiverExecutor = Executors.newFixedThreadPool(parallelism,

new ThreadFactoryBuilder().setDaemon(true).setNameFormat("Flume Receiver Thread - %d").build())

private lazy val connections = new LinkedBlockingQueue[FlumeConnection]()

7、启动时创建NettyTransceiver,根据并行度(默认5个)循环提交FlumeBatchFetcher

override def onStart(): Unit = {

// Create the connections to each Flume agent.

addresses.foreach(host => {

val transceiver = new NettyTransceiver(host, channelFactory)

val client = SpecificRequestor.getClient(classOf[SparkFlumeProtocol.Callback], transceiver)

connections.add(new FlumeConnection(transceiver, client))

})

for (i <- 0 until parallelism) {

logInfo("Starting Flume Polling Receiver worker threads..")

// Threads that pull data from Flume.

receiverExecutor.submit(new FlumeBatchFetcher(this))

}

}

8、FlumeBatchFetcher run方法中从Receiver中获取connection链接句柄ack跟消息确认有关

def run(): Unit = {

while (!receiver.isStopped()) {

val connection = receiver.getConnections.poll()

val client = connection.client

var batchReceived = false

var seq: CharSequence = null

try {

getBatch(client) match {

case Some(eventBatch) =>

batchReceived = true

seq = eventBatch.getSequenceNumber

val events = toSparkFlumeEvents(eventBatch.getEvents)

if (store(events)) {

sendAck(client, seq)

} else {

sendNack(batchReceived, client, seq)

}

case None =>

}

} catch {

9、获取一批一批数据方法

/**

* Gets a batch of events from the specified client. This method does not handle any exceptions

* which will be propogated to the caller.

* @param client Client to get events from

* @return [[Some]] which contains the event batch if Flume sent any events back, else [[None]]

*/

private def getBatch(client: SparkFlumeProtocol.Callback): Option[EventBatch] = {

val eventBatch = client.getEventBatch(receiver.getMaxBatchSize)

if (!SparkSinkUtils.isErrorBatch(eventBatch)) {

// No error, proceed with processing data

logDebug(s"Received batch of ${eventBatch.getEvents.size} events with sequence " +

s"number: ${eventBatch.getSequenceNumber}")

Some(eventBatch)

} else {

logWarning("Did not receive events from Flume agent due to error on the Flume agent: " +

eventBatch.getErrorMsg)

None

}

}

总结:

88课

备注:

资料来源于:DT_大数据梦工厂(IMF传奇行动绝密课程)

更多私密内容,请关注微信公众号:DT_Spark