Reposted from: http://blog.csdn.net/wbgxx333/article/details/41019453

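Deep neural networks (DNNs) are now the hottest topic in speech recognition. Since 2010, many papers on DNNs have been published in this field, and several large technology companies (such as Google and Microsoft) have started using DNNs in their production systems. (Translator's note: for Google this is probably Google Now; for Microsoft it is probably the speech recognition built into Windows 7 and Windows 8, its SDK, and so on.)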

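However, an active research area like this is hard for a toolkit such as Kaldi to support well. The state of the art keeps moving, so the code has to keep up and its architecture may need to be rethought.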

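Kaldi currently provides two separate DNN codebases. One lives in the source directories nnet/ and nnetbin/ and is maintained by Karel Vesely. The other lives in nnet-cpu/ and nnet-cpubin/ and is maintained by Daniel Povey (it started from an early version of Karel's code and was then rewritten). Both are official, and both will continue to be developed.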

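The neural network example scripts can be found in the example directories, for instance egs/wsj/s5/, egs/rm/s5, egs/swbd/s5 and egs/hkust/s5b. Karel's example scripts are local/run_dnn.sh or local/run_nnet.sh, and Dan's is local/run_nnet_cpu.sh. Before running any of them, you must first run run.sh, which builds the baseline systems that the neural network scripts start from.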

Detailed documentation for both setups will be published soon. For now, here is a summary of the most important differences between them:

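1. Karel's code runs single-threaded SGD training accelerated by a GPU, whereas Dan's code runs multi-threaded training on multiple CPUs;

2. Karel's code supports discriminative training, whereas Dan's code does not.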

Beyond these, there are many smaller differences in architecture.
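For readers new to the term, here is a toy sketch of minibatch SGD. It is not Kaldi code: it fits a one-variable linear model in plain Python, and the function name `sgd_linear`, the learning rate and the batch size are all assumptions made for illustration. The point it shows is that every update reads the parameters left by the previous update, so the basic loop is inherently sequential. Roughly speaking, that is why one codebase speeds up each step on a GPU while the other spreads the work over CPU threads.

```python
import random


def sgd_linear(data, lr=0.05, epochs=200, batch_size=4, seed=0):
    """Fit y = w * x + b by minibatch SGD on mean squared error (toy example)."""
    rng = random.Random(seed)
    data = list(data)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the samples in a new order each epoch
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            # Gradient of the mean squared error over this minibatch.
            grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / len(batch)
            grad_b = sum(2 * (w * x + b - y) for x, y in batch) / len(batch)
            # Each update depends on the result of the previous one.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


# Noise-free samples of y = 3x + 1 on [-1, 1].
points = [(i / 10.0, 3.0 * (i / 10.0) + 1.0) for i in range(-10, 11)]
w, b = sgd_linear(points)
print(round(w, 3), round(b, 3))
```

A real acoustic model has millions of parameters and a nonlinear network in place of the line, but the update loop has the same shape.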

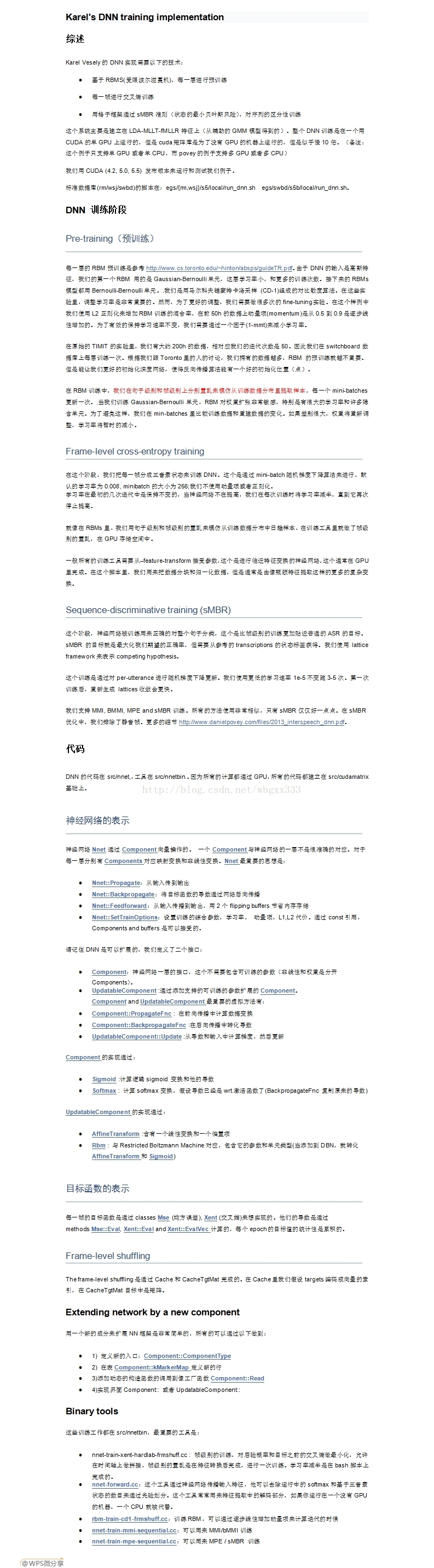

We hope to add more documentation for these libraries. For Karel's version there is some slightly out-of-date documentation under "Karel's DNN training implementation".

Chinese translation: http://blog.csdn.net/wbgxx333/article/details/24438405

-------------------------------------------------------------------------------------------------------------------------------------------------------


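I stumbled on this last night: thanks to the work of Dr. Yajie Miao at CMU, there is now another way to use deep learning modules with Kaldi. I had been trying to use DBNs with HTK without success, and I hope to get that working soon. Below is the introduction to Kaldi+PDNN from Dr. Miao's homepage. I hope everyone will contribute what they can, so that those of us who are students can learn more.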

Kaldi+PDNN -- Implementing DNN-based ASR Systems with Kaldi and PDNN

Overview

Kaldi+PDNN contains a set of fully-fledged Kaldi ASR recipes, which realize DNN-based acoustic modeling using the PDNN toolkit. The overall pipeline has 3 stages:

1. The initial GMM model is built with the existing Kaldi recipes
2. DNN acoustic models are trained by PDNN
3. The trained DNN model is ported back to Kaldi for hybrid decoding or further tandem system building

Highlights

Model diversity. Deep Neural Networks (DNNs); Deep Bottleneck Features (DBNFs); Deep Convolutional Networks (DCNs)

PDNN toolkit. Easy and fast to implement new DNN ideas

Open license. All the code is released under Apache 2.0, the same license as Kaldi

Consistency with Kaldi. Recipes follow the Kaldi style and can be integrated seamlessly with the existing setups

Release Log

Dec 2013 --- version 1.0 (the initial release)

Feb 2014 --- version 1.1 (clean up the scripts, add the dnn+fbank recipe run-dnn-fbank.sh, enrich PDNN)

Requirements

1. A GPU card should be available on your computing machine.
2. Initial model building should be run, ideally up to train_sat and align_fmllr
3. Software requirements:
   * Theano. For information about Theano installation on Ubuntu Linux, refer to this document edited by Wonkyum Lee from CMU.
   * pfile_utils. This script (that is, kaldi-trunk/tools/install_pfile_utils.sh) installs pfile_utils automatically.

Download

Kaldi+PDNN is hosted on Sourceforge. You can enter your Kaldi Switchboard setup (such as egs/swbd/s5b) and download the latest version via svn; the exact command is given on the project page linked at the end of this post.

Once downloaded, the new run-*.sh scripts appear in your setup, and you can run them directly.

Recipes

(The recipe table is not reproduced here; see the project page linked at the end of this post.)

Experiments & Results

The recipes are developed based on the Kaldi 110-hour Switchboard setup. This is the standard system you get if you run egs/swbd/s5b/run.sh. Our experiments follow configurations similar to those described in this paper. We have the following data partitions. The "validation" set is used to measure frame accuracy and determine termination in DNN fine-tuning.

training -- train_100k_nohup (110 hours)

validation -- train_dev_nohup

testing -- eval2000 (HUB5'00)

Our hybrid recipe run-dnn.sh gives WER comparable with this paper (Table 5 for fMLLR features). We are confident that our recipes perform comparably with the Kaldi internal DNN setups.
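The role of the validation set described above can be illustrated with a small sketch. Frame accuracy is the fraction of frames whose highest-scoring class matches the reference label. The stopping rule shown here (stop once the gain over the previous epoch falls below a threshold) is a hypothetical example only: the page does not describe the schedule PDNN actually uses, and the names `frame_accuracy`, `should_stop` and `min_gain` are assumptions made for illustration.

```python
def frame_accuracy(posteriors, labels):
    """Fraction of frames whose top-scoring class equals the reference label."""
    if len(posteriors) != len(labels):
        raise ValueError("posteriors and labels must have the same length")
    if not labels:
        raise ValueError("at least one frame is required")
    correct = 0
    for scores, reference in zip(posteriors, labels):
        # Index of the class with the highest score for this frame.
        predicted = max(range(len(scores)), key=scores.__getitem__)
        if predicted == reference:
            correct += 1
    return correct / len(labels)


def should_stop(accuracy_history, min_gain=0.001):
    """Hypothetical rule: stop when the latest epoch gained less than min_gain."""
    if len(accuracy_history) < 2:
        return False  # not enough epochs to compare yet
    return accuracy_history[-1] - accuracy_history[-2] < min_gain


# Four validation frames, three classes; the last frame is misclassified.
posteriors = [
    [0.7, 0.2, 0.1],
    [0.1, 0.8, 0.1],
    [0.3, 0.3, 0.4],
    [0.6, 0.3, 0.1],
]
labels = [0, 1, 2, 1]
print(frame_accuracy(posteriors, labels))  # 0.75
```

In a training loop, `frame_accuracy` would be computed on the validation set after every epoch and appended to a history list, with `should_stop` deciding whether to run another epoch.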

Want to Contribute?

We look forward to your contributions. Improvement can be made on the following aspects (but not limited to):

1. Optimization to the above recipes
2. New recipes
3. Porting the recipes to other datasets
4. Experiments and results
5. Contributions to the PDNN toolkit

Contact Yajie Miao (ymiao@cs.cmu.edu) if you have any questions or suggestions.

That is Dr. Miao's introduction. For details, see http://www.cs.cmu.edu/~ymiao/kaldipdnn.html.

It is somewhat involved; I will look into it more deeply when I have time.