
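I recently used ResNet (residual networks), read the original paper and some related material, and went through Andrew Ng's lectures on residual networks on NetEase Cloud Classroom. This post is a short summary of what I learned.

It is well known that increasing a network's width and depth can improve its performance considerably, and deeper networks generally outperform shallower ones. Training a very deep network, however, is hard. One reason is that deeper networks are more prone to vanishing and exploding gradients, although techniques such as batch normalization (BN) layers and ReLU activations have largely mitigated this. The other is that once the number of layers passes a certain point, accuracy saturates and then degrades as more layers are added; the ResNet paper reports that training error rises as well, so this degradation is an optimization difficulty rather than overfitting. ResNet was proposed in 2015 by Kaiming He et al. and took first place in the ImageNet classification, detection, and localization tasks. It largely resolves the degradation problem (Andrew Ng explains it in terms of vanishing and exploding gradients).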

1. Residual Block

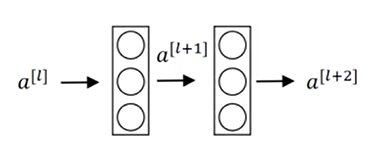

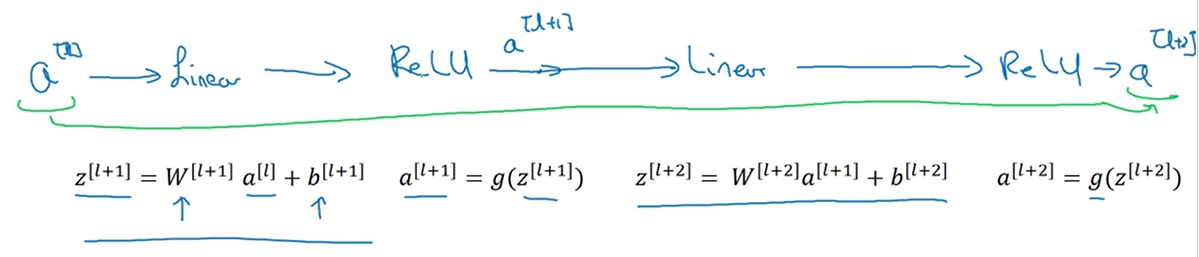

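Andrew Ng's course gives an easy-to-follow explanation of the residual block. Take a two-layer network as an example. In a plain network, the input $a^{[l]}$ first goes through a linear transformation to produce $z^{[l+1]}$, then through a ReLU activation to give $a^{[l+1]}$. A second linear transformation produces $z^{[l+2]}$, and a second ReLU gives $a^{[l+2]}$, so that $$ a^{[l+2]} = g(z^{[l+2]}). $$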

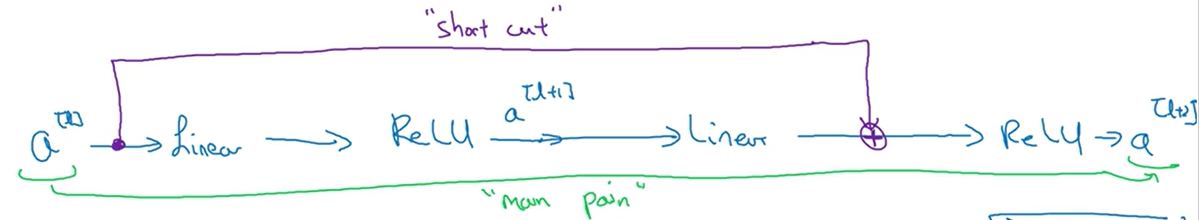

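In a residual network, $a^{[l]}$ is fed directly to the point between the second linear transformation and the second ReLU activation, forming a shortcut path. The output $$ a^{[l+2]} = g(z^{[l+2]}) $$ then becomes $$ a^{[l+2]} = g(z^{[l+2]} + a^{[l]}), $$ that is, adding $a^{[l]}$ turns the two layers into a residual block.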
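
To make the two formulas concrete, here is a minimal sketch in plain Python that computes both versions of the two-layer block on a tiny example. The helper names (`relu`, `linear`, `plain_block`, `residual_block`) and the weight values are made up for illustration; they are not from the course or the paper.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, x) for x in v]


def linear(W, a, b):
    """Compute W a + b for a weight matrix W (list of rows), input a, bias b."""
    return [sum(w * x for w, x in zip(row, a)) + bi for row, bi in zip(W, b)]


def plain_block(a_l, W1, b1, W2, b2):
    """Two plain layers: a[l+2] = g(z[l+2])."""
    a_l1 = relu(linear(W1, a_l, b1))
    z_l2 = linear(W2, a_l1, b2)
    return relu(z_l2)


def residual_block(a_l, W1, b1, W2, b2):
    """Same two layers plus the shortcut: a[l+2] = g(z[l+2] + a[l])."""
    a_l1 = relu(linear(W1, a_l, b1))
    z_l2 = linear(W2, a_l1, b2)
    return relu([z + a for z, a in zip(z_l2, a_l)])


# Arbitrary illustrative values (assumptions, not from the source).
a_l = [1.0, 2.0]
W1 = [[0.5, -1.0], [0.25, 0.5]]
b1 = [0.0, 0.0]
W2 = [[1.0, 0.0], [0.0, -1.0]]
b2 = [0.5, 0.0]

print(plain_block(a_l, W1, b1, W2, b2))     # [0.5, 0.0]
print(residual_block(a_l, W1, b1, W2, b2))  # [1.5, 0.75]
```

The only difference between the two functions is the element-wise addition of `a_l` before the last ReLU, which is why the shortcut requires the input and `z_l2` to have the same shape.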

2. Why Do Residual Networks Work?

- Forward pass

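Suppose an input $x$ passes through a very deep network whose final ReLU activation outputs $a^{[l]}$; by the nature of ReLU, $a^{[l]} \geq 0$. Now append a two-layer residual block with output $a^{[l+2]}$. Then $a^{[l+2]}$ can be written as $$ a^{[l+2]} = g(w^{[l+2]}a^{[l+1]} + b^{[l+2]} + a^{[l]}). $$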

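When the weights $w^{[l+2]}$ and the bias $b^{[l+2]}$ are both 0, $$ a^{[l+2]} = g(a^{[l]}) = a^{[l]}, $$ where the last equality holds because $a^{[l]}$ is non-negative. So the identity mapping is easy for a residual block to learn. If the two intermediate layers also learn useful features, the block can do better than the identity. Without the shortcut, by contrast, even learning the parameters of an identity mapping can become hard as the network gets deeper. A residual network can therefore fall back on the identity mapping without hurting performance, while still having the chance to improve the model.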
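
The identity case can be checked numerically. The sketch below, in plain Python, sets the second layer's weights and bias to zero and leaves the first layer arbitrary; all names and values are illustrative assumptions.

```python
def relu(v):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, x) for x in v]


def linear(W, a, b):
    """Compute W a + b for a weight matrix W (list of rows), input a, bias b."""
    return [sum(w * x for w, x in zip(row, a)) + bi for row, bi in zip(W, b)]


def residual_block(a_l, W1, b1, W2, b2):
    """a[l+2] = g(W2 a[l+1] + b2 + a[l]) with a[l+1] = g(W1 a[l] + b1)."""
    a_l1 = relu(linear(W1, a_l, b1))
    z_l2 = linear(W2, a_l1, b2)
    return relu([z + a for z, a in zip(z_l2, a_l)])


# a[l] is non-negative, as if it came out of an earlier ReLU.
a_l = [0.0, 1.5, 3.0]

# The first layer can be anything; these values are arbitrary.
W1 = [[0.3, -0.7, 0.2], [1.1, 0.4, -0.5], [-0.6, 0.9, 0.8]]
b1 = [0.1, -0.2, 0.3]

# Second layer: all-zero weights and bias.
W2_zero = [[0.0] * 3 for _ in range(3)]
b2_zero = [0.0] * 3

out = residual_block(a_l, W1, b1, W2_zero, b2_zero)
print(out == a_l)  # True: the block reduces to the identity mapping
```

Note that the result depends on `a_l` being non-negative; for a negative entry the final ReLU would clip it to zero and the block would no longer be an exact identity.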

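As illustrated in the original paper, let $x$ be the output of the shallower layers, $H(x)$ the output of the deeper layers, and $F(x)$ the result of the intermediate layers. When the features represented by $x$ are already good enough that further learning in the intermediate layers would increase the loss, $F(x)$ gradually tends to 0 and $x$ keeps propagating along the shortcut path. The deeper layers thus behave as an identity mapping when the shallow features are already good.

- Backward pass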

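First, the residual block splits the output $y = H(x)$ into $F(x) + x$, so that $F(x) = H(x) - x$. Instead of learning a mapping from $x$ to $y$, the block learns the difference between $y$ and $x$, which is an easier learning task. Second, because the forward pass contains an identity mapping along the shortcut path, the backward pass has the same shortcut: the gradient only has to pass through a single ReLU to reach the previous block.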
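
A toy experiment shows what the shortcut does to the gradient. The sketch below assumes a scalar "layer" $F(x) = wx$ with a small weight, stacks it 20 times with and without the shortcut, and estimates the derivative of the output with respect to the input by central differences. The choice of $F$, the weight 0.1, and the depth are assumptions made only for this demonstration.

```python
def relu(x):
    return max(0.0, x)


def plain_stack(x, w, depth):
    """depth plain layers: x <- g(w * x)."""
    for _ in range(depth):
        x = relu(w * x)
    return x


def residual_stack(x, w, depth):
    """depth residual layers: x <- g(w * x + x)."""
    for _ in range(depth):
        x = relu(w * x + x)
    return x


def numerical_grad(f, x, eps=1e-6):
    """Central-difference estimate of df/dx."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)


w, depth, x0 = 0.1, 20, 1.0
g_plain = numerical_grad(lambda x: plain_stack(x, w, depth), x0)
g_res = numerical_grad(lambda x: residual_stack(x, w, depth), x0)

print("plain stack gradient:   ", g_plain)  # about w ** depth, vanishingly small
print("residual stack gradient:", g_res)    # about (1 + w) ** depth
```

Each plain layer multiplies the gradient by $F'(x) = w$, while each residual layer multiplies it by $1 + F'(x)$, so the shortcut keeps the gradient from shrinking toward zero even when the learned part contributes little.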

3. ResNet Architecture

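ResNet uses this residual block as its basic building unit. The paper gives ResNets of several depths: 18, 34, 50, 101, and 152 layers. Those with 50 or more layers are referred to here as deep residual networks; their layer configurations are listed in the paper's architecture table. The main difference between the deep and shallow variants is that the basic unit changes from the residual block to the bottleneck residual block (Residual Bottleneck). A bottleneck block outputs four times as many channels as its first layer produces, whereas a basic residual block keeps the same width throughout. Taking the 50-layer network as an example, conv2_x contains 3 bottleneck blocks, and within each block the channel counts of the first and last layers differ by a factor of 4, going from 64 to 256.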
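
The layer counts in the network names can be reproduced with a little arithmetic. The sketch below uses the per-stage block counts that appear in the constructor functions of the code listing in this post, and counts one stem convolution, two or three convolutions per block, and one fully connected layer (shortcut projections are not counted). The dictionary and function names are made up for this example.

```python
# Block type and number of blocks in each of the four stages.
CONFIGS = {
    "resnet18": ("basic", [2, 2, 2, 2]),
    "resnet34": ("basic", [3, 4, 6, 3]),
    "resnet50": ("bottleneck", [3, 4, 6, 3]),
    "resnet101": ("bottleneck", [3, 4, 23, 3]),
    "resnet152": ("bottleneck", [3, 8, 36, 3]),
}
CONVS_PER_BLOCK = {"basic": 2, "bottleneck": 3}
EXPANSION = {"basic": 1, "bottleneck": 4}
STAGE_WIDTHS = [64, 128, 256, 512]  # "planes" of each stage


def depth(name):
    """Stem conv + convs inside all blocks + final fully connected layer."""
    block, blocks = CONFIGS[name]
    return 1 + CONVS_PER_BLOCK[block] * sum(blocks) + 1


def stage_output_channels(name):
    """Output channels of each stage: planes * expansion."""
    block, _ = CONFIGS[name]
    return [width * EXPANSION[block] for width in STAGE_WIDTHS]


for name in CONFIGS:
    print(name, depth(name), stage_output_channels(name))
```

For `resnet50` this gives 1 + 3 × 16 + 1 = 50 layers and stage outputs of 256, 512, 1024, and 2048 channels, which matches the 64-to-256 expansion described above for conv2_x.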

4. Code Implementation

from __future__ import print_function, division, absolute_import

import math

import torch.nn as nn
import torch.utils.model_zoo as model_zoo

__all__ = ['ResNet', 'resnet18', 'resnet34', 'resnet50', 'resnet101',
           'resnet152']

model_urls = {
    'resnet18': 'https://download.pytorch.org/models/resnet18-5c106cde.pth',
    'resnet34': 'https://download.pytorch.org/models/resnet34-333f7ec4.pth',
    'resnet50': 'https://download.pytorch.org/models/resnet50-19c8e357.pth',
    'resnet101': 'https://download.pytorch.org/models/resnet101-5d3b4d8f.pth',
    'resnet152': 'https://download.pytorch.org/models/resnet152-b121ed2d.pth',
}

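def conv3x3(in_planes, out_planes, stride=1):
    """3x3 convolution with padding."""
    # No conv bias: the conv is followed by BatchNorm, and the checkpoints
    # in model_urls contain no conv bias tensors.
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)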

class BasicBlock(nn.Module):
    # Output channels = planes * expansion.
    expansion = 1

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        # Optional projection so the shortcut matches the main path's shape.
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        # Shortcut: add the input before the final ReLU.
        out += residual
        out = self.relu(out)

        return out

class Bottleneck(nn.Module):
    # Output channels = planes * expansion.
    expansion = 4

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        # 1x1 conv reduces the width, 3x3 conv works at the reduced width,
        # and the last 1x1 conv expands it to planes * 4.
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        if self.downsample is not None:
            residual = self.downsample(x)

        # Shortcut: add the input before the final ReLU.
        out += residual
        out = self.relu(out)

        return out

class ResNet(nn.Module):

    def __init__(self, block, layers, num_classes=1000):
        super(ResNet, self).__init__()
        # Channel count fed to the next block; updated by _make_layer.
        self.inplanes = 64
        # Stem: 7x7 stride-2 conv, BN, ReLU, then 3x3 stride-2 max pooling.
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Four stages; every stage after the first halves the resolution.
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        # The 7x7 window matches the 7x7 feature map of a 224x224 input.
        self.avgpool = nn.AvgPool2d(7)
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        # He initialization for convs; BatchNorm starts with scale 1, shift 0.
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        # Project the shortcut with a 1x1 conv when the resolution or the
        # channel count changes, so it can be added to the main path.
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        # Only the first block of a stage may change stride and width.
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        # Flatten (N, C, 1, 1) to (N, C) for the classifier.
        x = x.view(x.size(0), -1)
        x = self.fc(x)

        return x

def resnet18(pretrained=False, **kwargs):
    """Constructs a ResNet-18 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet18']))
    return model


def resnet34(pretrained=False, **kwargs):
    """Constructs a ResNet-34 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet34']))
    return model


def resnet50(pretrained=False, **kwargs):
    """Constructs a ResNet-50 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet50']))
    return model


def resnet101(pretrained=False, **kwargs):
    """Constructs a ResNet-101 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 23, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet101']))
    return model


def resnet152(pretrained=False, **kwargs):
    """Constructs a ResNet-152 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 8, 36, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet152']))
    return model
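
One detail of the listing worth checking by hand is the fixed `nn.AvgPool2d(7)`. The sketch below traces the spatial size of a square input through the network using the standard output-size formula for convolution and pooling layers, with the kernel, stride, and padding values taken from the listing. The helper names are hypothetical and the code needs nothing beyond plain Python.

```python
def conv_out(size, kernel, stride, padding=0):
    """Output side length of a conv/pool layer (floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_spatial_size(size):
    """Return [(layer name, side length)] for a square input of the given size."""
    trace = [("input", size)]
    size = conv_out(size, kernel=7, stride=2, padding=3)
    trace.append(("conv1", size))
    size = conv_out(size, kernel=3, stride=2, padding=1)
    trace.append(("maxpool", size))
    # layer1 uses stride 1 with 3x3 kernels and padding 1, so the size is kept.
    trace.append(("layer1", size))
    for name in ("layer2", "layer3", "layer4"):
        # The first block of each later stage applies a stride-2 3x3 conv.
        size = conv_out(size, kernel=3, stride=2, padding=1)
        trace.append((name, size))
    # nn.AvgPool2d(7): the stride defaults to the kernel size.
    size = conv_out(size, kernel=7, stride=7)
    trace.append(("avgpool", size))
    return trace


for side in (224, 448):
    print(side, trace_spatial_size(side))
```

For a 224×224 input the feature map entering the pooling layer is 7×7 and comes out as 1×1, which is what the final `nn.Linear` expects after flattening. For a 448×448 input the pooled map is 2×2, so the flattened vector would be four times longer than `self.fc` accepts; an adaptive pooling layer is one common way around this, though the listing above does not use one.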