1 Setting up the RAC environment

IP plan

| Interface | node01 | node02 |
| --- | --- | --- |
| public ip | 192.168.110.15 | 192.168.110.25 |
| vip | 192.168.110.16 | 192.168.110.26 |
| private ip | 10.10.10.1 | 10.10.10.2 |
| scan ip | 192.168.110.200 | 192.168.110.200 |

Install Oracle Linux 5.10 on node01 and node02

swap: 8G

/boot: 200M

/: remaining space

Software to install

Network configuration

vi /etc/hosts (apply the same configuration on both node01 and node02)

#node01

192.168.110.15 node01 # public IP, serves external clients; can be set when adding the bridged NIC

192.168.110.16 node01-vip

10.10.10.1 node01-priv # used between the two nodes; can be set when adding the host-only NIC

#node02

192.168.110.25 node02

192.168.110.26 node02-vip

10.10.10.2 node02-priv

#scan: keep it on the same subnet as the public IPs

192.168.110.200 node-scan

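Since both nodes need identical hosts entries, it can help to check that every cluster name is actually present before continuing. The following is a minimal sketch; the function name check_hosts is hypothetical and not part of the original setup.

```shell
#!/bin/sh
# check_hosts FILE NAME...
# Print "missing: NAME" for each NAME that does not appear as a hostname
# in FILE (hosts format: an IP followed by names, '#' starts a comment).
# Returns 0 when every name is present, 1 otherwise.
check_hosts() {
    hosts_file=$1
    shift
    status=0
    for name in "$@"; do
        if ! awk -v n="$name" '
            { sub(/#.*/, "") }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$hosts_file"; then
            echo "missing: $name"
            status=1
        fi
    done
    return $status
}
```

On each node it could be called as: check_hosts /etc/hosts node01 node01-vip node01-priv node02 node02-vip node02-priv node-scan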

Add a NIC for the private network

Configure the private network

Edit the NIC configuration file: vi /etc/sysconfig/network-scripts/ifcfg-eth1

DEVICE=eth1

BOOTPROTO=static

ONBOOT=yes

HWADDR=00:0c:29:58:0d:cd # node02 has a different MAC address; this value is already present in the file

NETMASK=255.255.255.0

#IPADDR=192.168.110.16

IPADDR=10.10.10.1 # 10.10.10.2 on node02

GATEWAY=192.168.110.1

TYPE=Ethernet

DNS1=114.114.114.114

DNS2=8.8.8.8


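Install the packages required by the cluster and the ASM shared disks

Check that the packages required for the cluster installation are present. The list below follows the official documentation, plus the library packages needed for ASM shared disks: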

oracleasmlib-2.0.4-1.el5.x86_64

binutils-2.17.50.0.6 compat-libstdc++-33-3.2.3

elfutils-libelf-0.125 elfutils-libelf-devel-0.125

elfutils-libelf-devel-static-0.125 gcc-4.1.2

gcc-c++-4.1.2 glibc-2.5-24

glibc-common-2.5 glibc-devel-2.5

glibc-headers-2.5 kernel-headers-2.6.18

ksh-20060214 libaio-0.3.106

libaio-devel-0.3.106 libgcc-4.1.2

libgomp-4.1.2 libstdc++-4.1.2

libstdc++-devel-4.1.2 make-3.81

sysstat-7.0.2 unixODBC-2.2.11

unixODBC-devel-2.2.11 pdksh-5.2.14

oracleasm-2.6.18-238.el oracleasm-support-2.1.4-1.el5

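Checking that many packages by eye is error-prone, so a small helper can list the ones that are absent. This is only a sketch: missing_packages is a hypothetical name, and it compares package names only, not the versions listed above.

```shell
#!/bin/sh
# missing_packages NAME...
# Reads installed package names (one per line) on stdin and prints
# every NAME from the argument list that is not among them.
missing_packages() {
    installed=$(cat)
    for pkg in "$@"; do
        printf '%s\n' "$installed" | grep -qxF -- "$pkg" || echo "$pkg"
    done
}
```

On a node, the installed names can come from rpm, for example: rpm -qa --qf '%{NAME}\n' | missing_packages binutils gcc gcc-c++ ksh libaio libaio-devel make sysstat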

Create groups and users

root#groupadd oinstall

root#groupadd dba

root#groupadd oper

root#groupadd asmadmin

root#groupadd asmdba

root#groupadd asmoper

root#useradd -g oinstall -G dba,asmadmin,asmdba,asmoper grid

root#useradd -g oinstall -G dba,oper,asmdba oracle

root#passwd grid

….

passwd oracle


Create directories and set permissions

root#cd /

root#mkdir u01

root#cd /u01

root#mkdir gridbase

root#mkdir grid

root#mkdir oracle

root#ls -l

root#cd /

root#chown -R grid:oinstall /u01

root#chmod 775 /u01

root#cd /u01

root#chown -R oracle:oinstall oracle

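The directory steps can also be collapsed into one repeatable call. A minimal sketch, assuming a hypothetical helper named make_layout; it only creates the tree and sets the mode, so the chown commands above still have to be run as root afterwards.

```shell
#!/bin/sh
# make_layout ROOT
# Create the grid and oracle directory tree under ROOT (pass / on a real
# node). mkdir -p is idempotent, so running it twice is harmless.
make_layout() {
    root=${1%/}
    mkdir -p "$root/u01/gridbase" "$root/u01/grid" "$root/u01/oracle"
    chmod 775 "$root/u01"
}
```

Passing a scratch directory instead of / makes it easy to try the layout out before touching the real filesystem.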

Set environment variables for the grid user

root#su - grid

grid$pwd

grid$vi .bash_profile

Add the following lines:

export ORACLE_SID=+ASM1 # on node01 only

export ORACLE_SID=+ASM2 # on node02 only

ORACLE_BASE=/u01/gridbase

ORACLE_HOME=/u01/grid

PATH=$ORACLE_HOME/bin:$PATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:$LD_LIBRARY_PATH

DISPLAY=192.168.1.23:0.0

export ORACLE_BASE ORACLE_HOME PATH LD_LIBRARY_PATH DISPLAY

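The two ORACLE_SID lines in the grid profile are per-node alternatives; if both were pasted into the same file, the second would override the first. One way to keep a single profile for both nodes is to derive the SID from the host name. This is a sketch under that assumption, and asm_sid_for_host is a hypothetical name.

```shell
#!/bin/sh
# asm_sid_for_host HOST
# Map a node name to its ASM instance name, following the profile lines
# for the grid user: node01 -> +ASM1, node02 -> +ASM2.
# Unknown hosts produce an error message and a non-zero status.
asm_sid_for_host() {
    case $1 in
        node01) echo "+ASM1" ;;
        node02) echo "+ASM2" ;;
        *) echo "unknown host: $1" >&2; return 1 ;;
    esac
}
```

In .bash_profile this could be used as: ORACLE_SID=$(asm_sid_for_host "$(hostname -s)") && export ORACLE_SID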

Set environment variables for the oracle user

root#su - oracle

oracle$pwd

oracle$vi .bash_profile

Add the following lines:

ORACLE_BASE=/u01/oracle

ORACLE_HOME=/u01/oracle/db

ORACLE_SID=cludb01 # on node01 only; note the instance naming convention on each node

ORACLE_SID=cludb02 # on node02 only; note the instance naming convention on each node

PATH=$ORACLE_HOME/bin:$PATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:$LD_LIBRARY_PATH

DISPLAY=192.168.1.23:0.0

export ORACLE_BASE ORACLE_HOME ORACLE_SID PATH LD_LIBRARY_PATH DISPLAY


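Disable the firewall

Disable SELinux

root#vi /etc/selinux/config

Find SELINUX=enforcing and change it to SELINUX=disabled

Disable the firewall

root#export LANG=C

root#setup

Select System services

Use the space bar to deselect the services to disable.

Deselect three services: ip6tables, iptables, and sendmail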


Modify kernel parameters

root#vi /etc/sysctl.conf

Append the following parameters at the end:

kernel.shmmax = 68719476736

kernel.shmall = 4294967296

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

fs.file-max = 6815744

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 4194304

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048576

fs.aio-max-nr = 1048576


Apply the parameters

root#sysctl -p # apply the kernel parameter changes

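After sysctl -p it is worth confirming that the values in the file are the ones the kernel is using. The helper below is a sketch for reading one key from a sysctl.conf-style file; conf_value is a hypothetical name, not a system tool.

```shell
#!/bin/sh
# conf_value FILE KEY
# Print the value assigned to KEY in a sysctl.conf-style FILE
# ("key = value" lines, '#' comments). The last assignment wins.
# Exits non-zero if KEY is not set in FILE.
conf_value() {
    awk -F= -v key="$2" '
        /^[ \t]*#/ { next }
        index($0, "=") == 0 { next }
        {
            k = $1
            gsub(/[ \t]/, "", k)
            if (k == key) {
                v = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", v)
                val = v
                seen = 1
            }
        }
        END { if (seen) print val; else exit 1 }
    ' "$1"
}
```

For a single-valued key the file can then be compared with the running kernel, for example: [ "$(conf_value /etc/sysctl.conf kernel.shmmni)" = "$(sysctl -n kernel.shmmni)" ] && echo match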

Configure the profile file

Modify /etc/profile so that ulimit is applied whenever the oracle or grid user logs in:

root#vi /etc/profile

Append the following at the end:

if [ $USER = "oracle" ] || [ $USER = "grid" ]; then

    if [ $SHELL = "/bin/ksh" ]; then

        ulimit -p 16384

        ulimit -n 65536

    else

        ulimit -u 16384 -n 65536

    fi

    umask 022

fi


Modify Linux resource limits

root#vi /etc/security/limits.conf

Append the following lines to set the process and open-file limits for the oracle and grid users:

grid soft nproc 2047

grid hard nproc 16384

grid soft nofile 1024

grid hard nofile 65536

oracle soft nproc 2047

oracle hard nproc 16384

oracle soft nofile 1024

oracle hard nofile 65536

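The limits for grid and oracle are identical, so the eight lines can be generated rather than typed. A small sketch, with limits_lines as a hypothetical helper name and the values copied from the list above:

```shell
#!/bin/sh
# limits_lines USER...
# Print the four limits.conf entries used in this guide for each USER.
limits_lines() {
    for user in "$@"; do
        printf '%s soft nproc 2047\n' "$user"
        printf '%s hard nproc 16384\n' "$user"
        printf '%s soft nofile 1024\n' "$user"
        printf '%s hard nofile 65536\n' "$user"
    done
}
```

As root, the output could be appended with: limits_lines grid oracle >> /etc/security/limits.conf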

Modify login

Modify the login PAM configuration:

root#vi /etc/pam.d/login

Add the following as the last line:

session required pam_limits.so


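Adjust tmpfs

Adjust the tmpfs shared memory size. Oracle uses a lot of memory, so increase the size of tmpfs.

root#umount tmpfs

root#mount -t tmpfs shmfs -o size=3000m /dev/shm

After that, also update fstab so the change survives a reboot:

root#vi /etc/fstab

On the tmpfs line, change defaults to size=3000m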


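Time synchronization

The two Oracle RAC nodes have strict time-synchronization requirements. Time is usually kept in sync by ctssd, the service that ships with Oracle RAC, although the operating system also provides the ntp service. When both are available, Oracle RAC gives priority to ntp, so to use Oracle RAC's own synchronization the ntp service must be disabled first:

root#service ntpd stop

root#chkconfig ntpd off

root#mv /etc/ntp.conf /etc/ntp.conf.2014

root#rm -f /var/run/ntpd.pid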


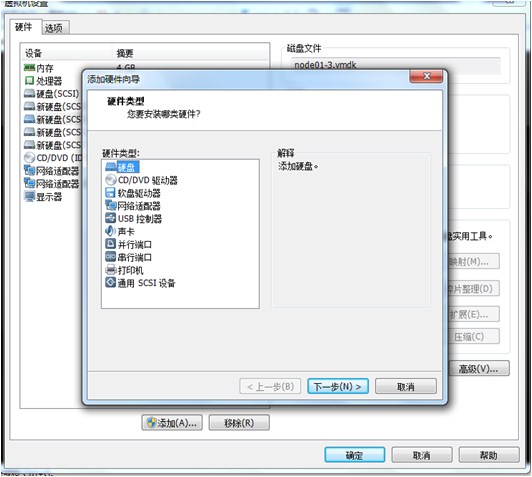

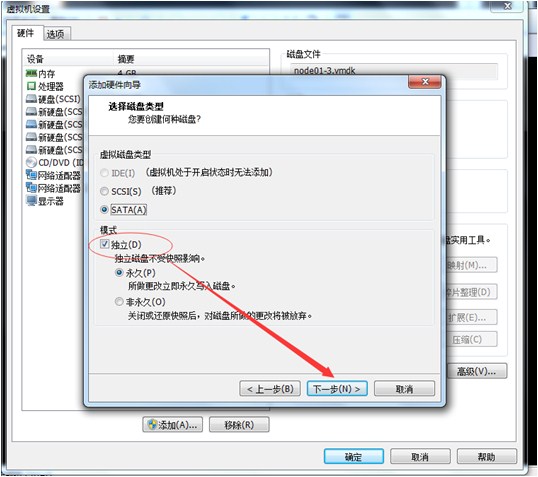

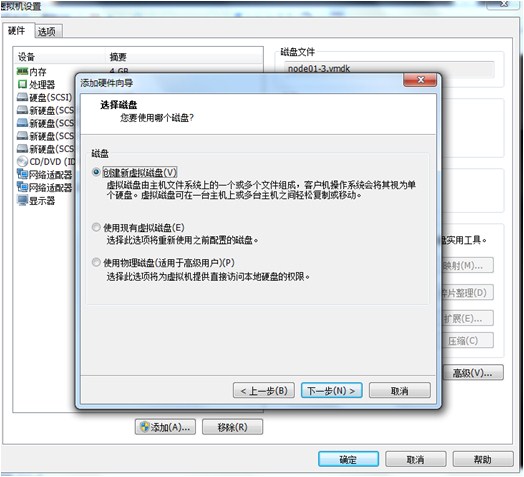

配置共享磁盘

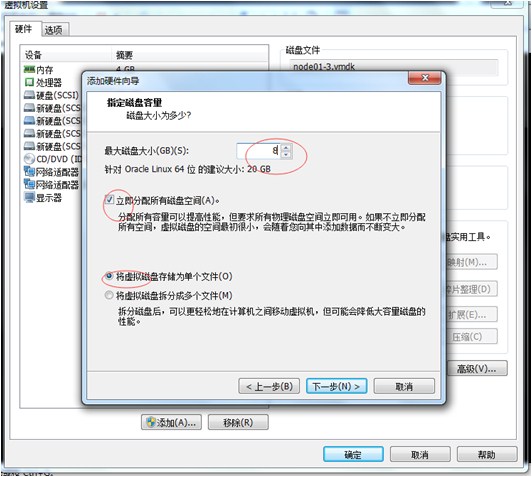

创建共享磁盘,首先增加一个虚拟机,建立好一个磁盘文件,然后修改虚拟机配置即可。

单文件;8G;立即分配;独立存储;(李新明记)

添加好磁盘后,输入以下命令确认其磁盘添加成功,

root#cd /dev

root#ls –l sd*

即可查看添加的磁盘,然后创建磁盘分区

root#fdisk sdb 根据提示选择即可。

在node01虚拟机中总共创建了5块硬盘

然后关闭虚拟机node01


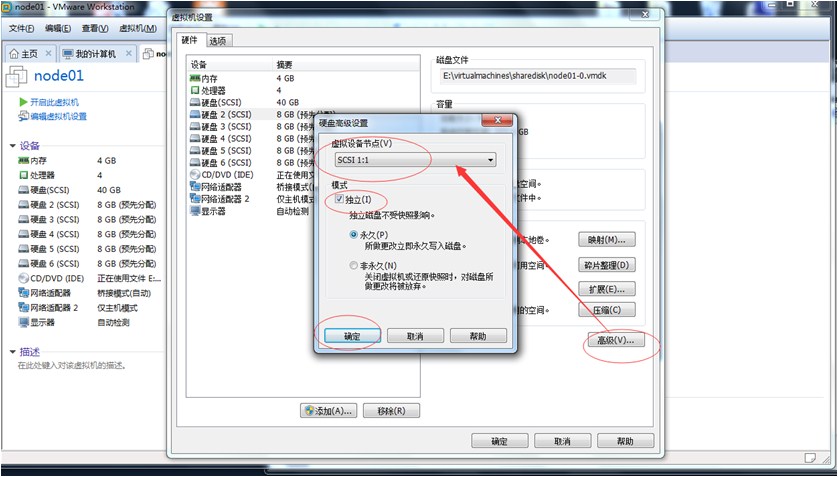

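Move the five generated disk files into a sharedisk folder at the same level as the node01 directory

Then remove the disks from node01

Then, in the node01 settings, add the five disks from sharedisk

Then configure each disk as shown below

SCSI1:1 through SCSI1:5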


Add the 5 disks to node02 in the same way and apply the same disk settings

Then edit the node01 virtual machine file to put the disks in shared mode.


Edit the node01 virtual machine file node01.vmx (the lines can be appended at the end of the file)

disk.locking = "false"

diskLib.dataCacheMaxSize = "0"

diskLib.dataCacheMaxReadAheadSize = "0"

diskLib.dataCachePageSize = "4096"

diskLib.maxUnsyncedWrites = "0"

scsi1:1.deviceType="disk"

scsi1:2.deviceType="disk"

scsi1:3.deviceType="disk"

scsi1:4.deviceType="disk"

scsi1:5.deviceType="disk"


Edit the node02 virtual machine file node02.vmx (the lines can be appended at the end of the file)

disk.locking = "false"

diskLib.dataCacheMaxSize = "0"

diskLib.dataCacheMaxReadAheadSize = "0"

diskLib.dataCachePageSize = "4096"

diskLib.maxUnsyncedWrites = "0"

scsi1:1.deviceType="disk"

scsi1:2.deviceType="disk"

scsi1:3.deviceType="disk"

scsi1:4.deviceType="disk"

scsi1:5.deviceType="disk"


Configure raw disks

(1) Before configuring the raw disks, partition each disk (run this on one node only):

#fdisk sdb

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1044, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-1044, default 1044):

Using default value 1044

Command (m for help): w

The partition table has been altered!

#fdisk sdc

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1044, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-1044, default 1044):

Using default value 1044

Command (m for help): w

The partition table has been altered!

#fdisk sdd

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1044, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-1044, default 1044):

Using default value 1044

Command (m for help): w

The partition table has been altered!

#fdisk sde

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1044, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-1044, default 1044):

Using default value 1044

Command (m for help): w

The partition table has been altered!

#fdisk sdf

Command (m for help): n

Command action

e extended

p primary partition (1-4)

p

Partition number (1-4): 1

First cylinder (1-1044, default 1): 1

Last cylinder or +size or +sizeM or +sizeK (1-1044, default 1044):

Using default value 1044

Command (m for help): w

The partition table has been altered!

Now run ls /dev/sd*

The new partitions sdb1, sdc1, sdd1, sde1, and sdf1 should appear.


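(2) Configure asmlib

Use oracleasm to prepare the shared disks. Before using it, confirm that oracleasm, oracleasm-support, and oracleasmlib are installed:

root#rpm -qa | grep oracleasm # once the oracleasm packages are confirmed, run the next command:

root#oracleasm configure -i

At the prompts, enter grid as the user and dba as the group, then accept the default yes for the rest. After that, initialize oracleasm as described in the next step. (Some guides online use the asmdba group; the official documentation uses oracle and dba.)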


(3) Create the shared disks with asmlib

root#oracleasm init

After initialization, create the ASM disks:

root#oracleasm createdisk DISK01 sdb1

root#oracleasm createdisk DISK02 sdc1

root#oracleasm createdisk DISK03 sdd1

root#oracleasm createdisk DISK04 sde1

root#oracleasm createdisk DISK05 sdf1

Once they are created, run

root#oracleasm listdisks (lists the disks just created)

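The five createdisk commands follow a simple pattern (DISK01 to DISK05 mapped onto the partitions in order), so they can be generated and reviewed before anything is run. A minimal sketch: asm_disk_plan is a hypothetical name, and the usage line below assumes full /dev paths instead of running from inside /dev.

```shell
#!/bin/sh
# asm_disk_plan PARTITION...
# Print one "oracleasm createdisk" command per partition, labelling
# them DISK01, DISK02, ... in argument order. Nothing is executed.
asm_disk_plan() {
    n=1
    for part in "$@"; do
        printf 'oracleasm createdisk DISK%02d %s\n' "$n" "$part"
        n=$((n + 1))
    done
}
```

For example, asm_disk_plan /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1 /dev/sdf1 prints the five commands; once they look right, the output can be piped to sh as root.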

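(4) Make the disk devices persistent (not used). According to the official documentation, the steps below are unnecessary when asmlib is used.

Add the raw disk configuration: http://blog.csdn.net/u014595668/article/details/51160783

Generate the raw device files: #start_udev

Check the resulting raw devices: #raw -qa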


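(5) On the node02 virtual machine

# node02 virtual machine

# oracleasm scandisks

# oracleasm listdisks

Normally this shows the shared disks created on node01. If it does not, reboot the node02 virtual machine and they should appear.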


Configure SSH trust between nodes

(1) Configure node trust for the grid user

# node01

su - grid

ssh-keygen -t rsa

ssh-keygen -t dsa

cd .ssh

cat *.pub > authorized_keys

# node02

su - grid

ssh-keygen -t rsa

ssh-keygen -t dsa

cd .ssh

cat *.pub > authorized_keys

#node01

scp authorized_keys node02:/home/grid/auth_node01

#node02

cd ~

cat auth_node01 >> .ssh/authorized_keys

scp .ssh/authorized_keys node01:/home/grid/.ssh/authorized_keys


(2) Configure node trust for the oracle user

# node01

su - oracle

ssh-keygen -t rsa

ssh-keygen -t dsa

cd .ssh

cat *.pub > authorized_keys

# node02

su - oracle

ssh-keygen -t rsa

ssh-keygen -t dsa

cd .ssh

cat *.pub > authorized_keys

#node01

scp authorized_keys node02:/home/oracle/auth_node01

#node02

cd ~

cat auth_node01 >> .ssh/authorized_keys

scp .ssh/authorized_keys node01:/home/oracle/.ssh/authorized_keys


(3) Verify node trust

#node01

ssh node02 date

exit

ssh node02-priv date

#node02

ssh node01 date

exit

ssh node01-priv date

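The same verification can be looped over all four names so that a password prompt shows up as a failure instead of hanging. This is a sketch only: check_trust is a hypothetical helper. BatchMode and ConnectTimeout are standard OpenSSH client options; with BatchMode, a host whose key has not been accepted yet is typically reported as failed too, so connect once by hand first.

```shell
#!/bin/sh
# check_trust HOST...
# Run "date" on each HOST over ssh without allowing a password prompt.
# Prints "ok: HOST" or "FAILED: HOST"; returns 1 if any host failed.
check_trust() {
    rc=0
    for host in "$@"; do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" date >/dev/null 2>&1; then
            echo "ok: $host"
        else
            echo "FAILED: $host"
            rc=1
        fi
    done
    return $rc
}
```

Run it as both grid and oracle on each node, for example: check_trust node01 node02 node01-priv node02-priv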

2 Installing grid

(1) Upload and extract the grid software

Upload linux.x64_11gR2_grid.zip to /u01,

extract it with unzip (by default it extracts to the /u01/grid directory),

then run chown -R grid:oinstall /u01/grid


(2) Install cvuqdisk

# rpm -ivh cvuqdisk-1.0.7-1.rpm


(3) Check the environment

grid$./runcluvfy.sh stage -pre crsinst -n node01,node02 -fixup -verbose


(4) Install Xmanager and set its properties

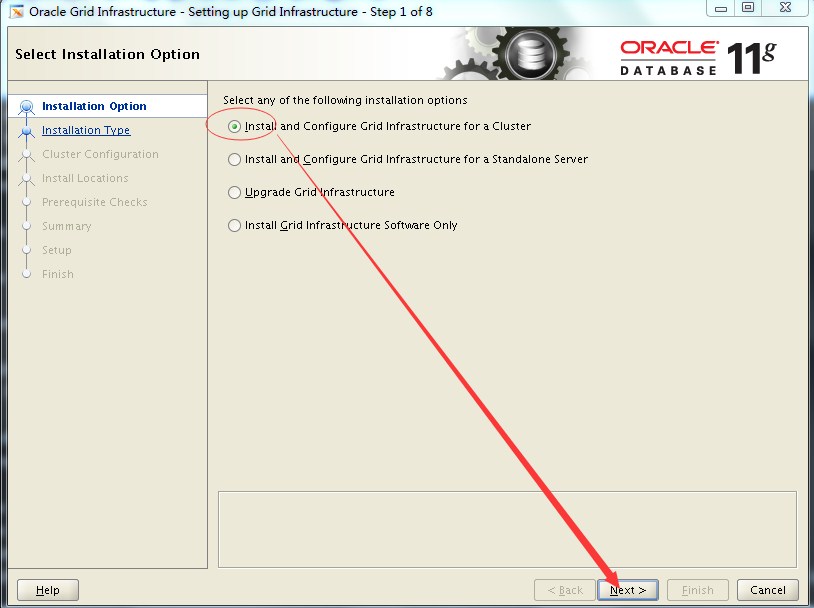

(5) Install grid

./runInstaller


选择第一项

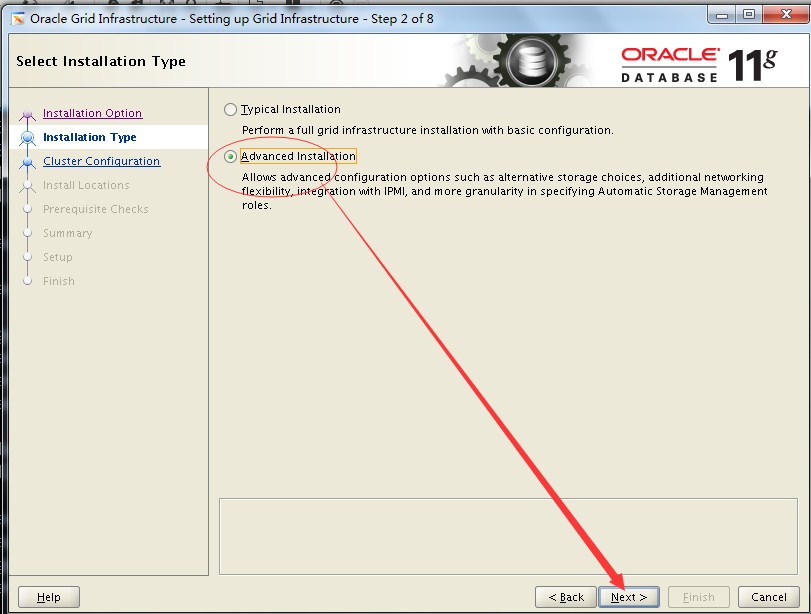

选择高级安装(高级安装:安装过程中可以定制参数)

选择英语,"下一步"

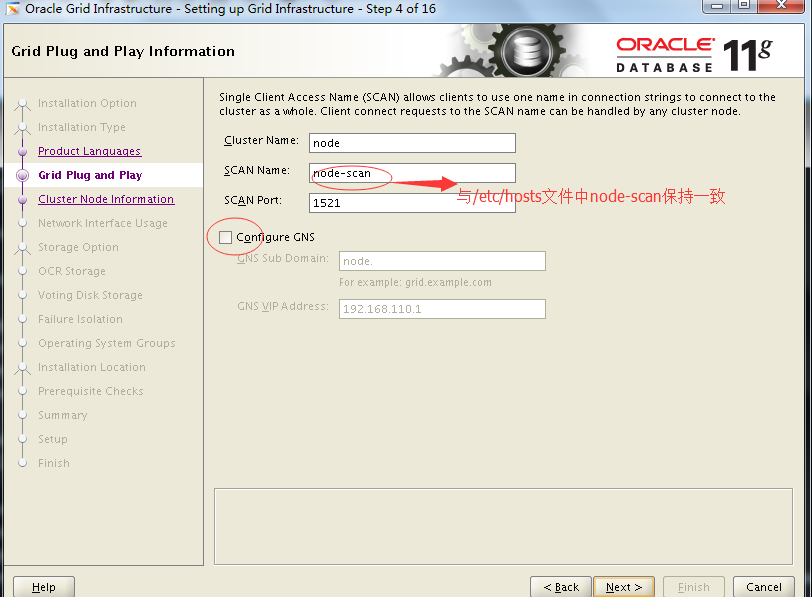

取消Configure CNS

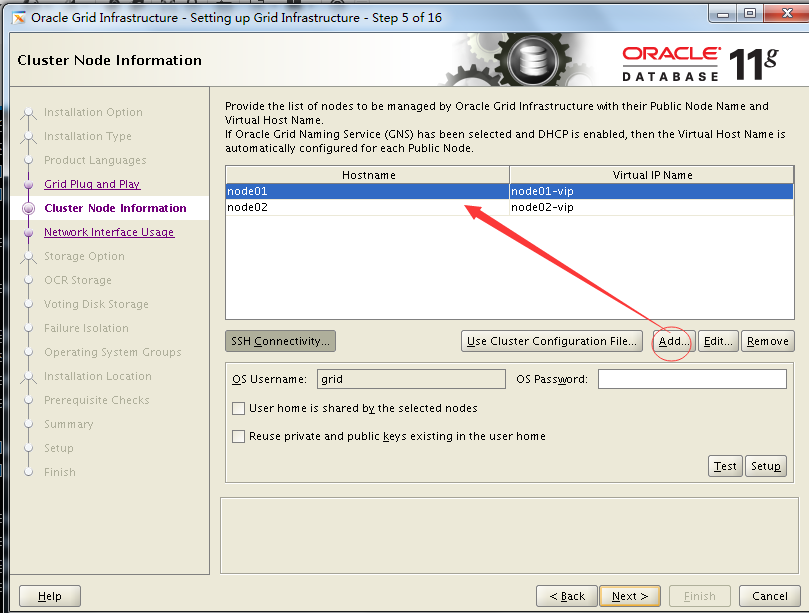

添加node02,hostname:node02; virtual ip name:node01-vip

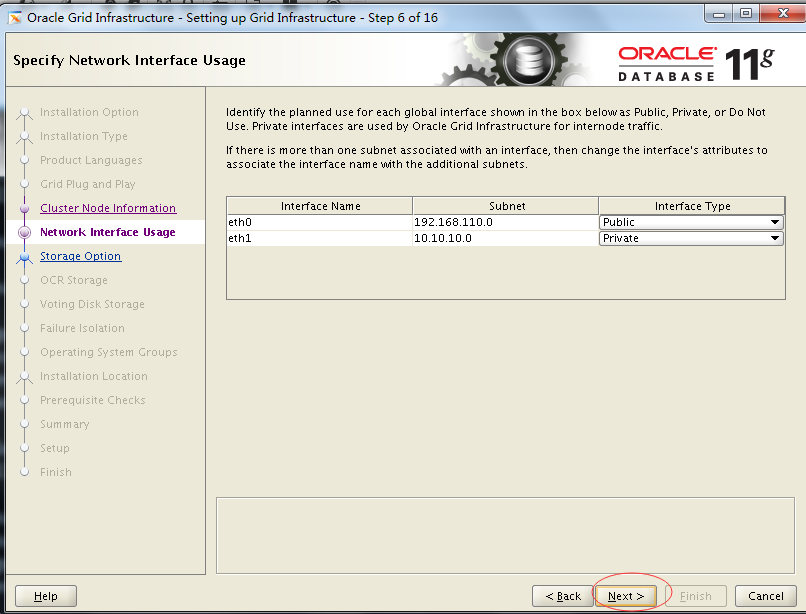

点击next

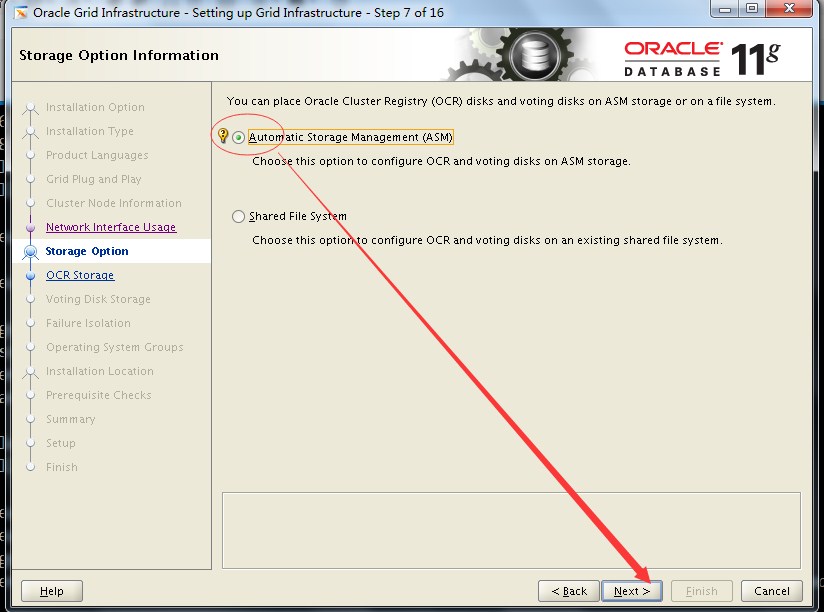

选择asm方式存储ocr(oracle cluster registry)和vot(voting disks)

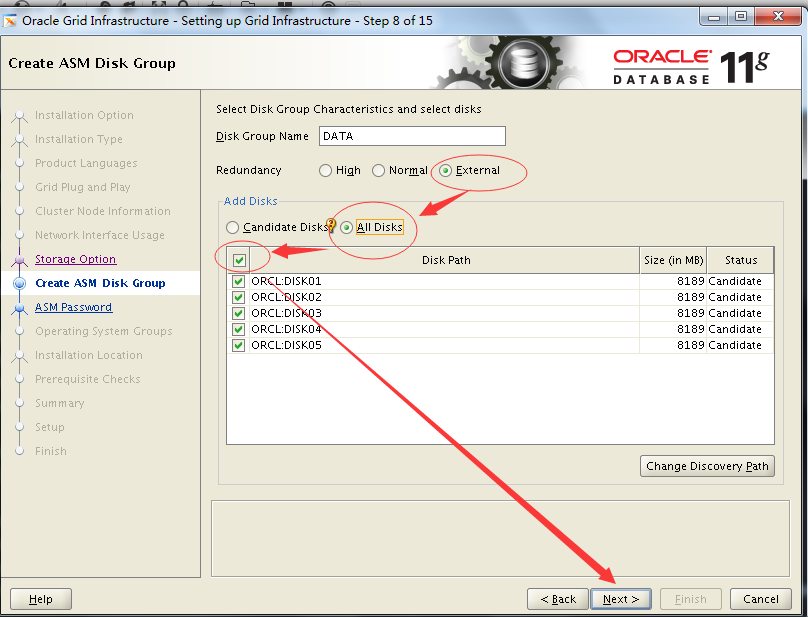

创建asm磁盘组:选择External;All Disks;勾选复选框;

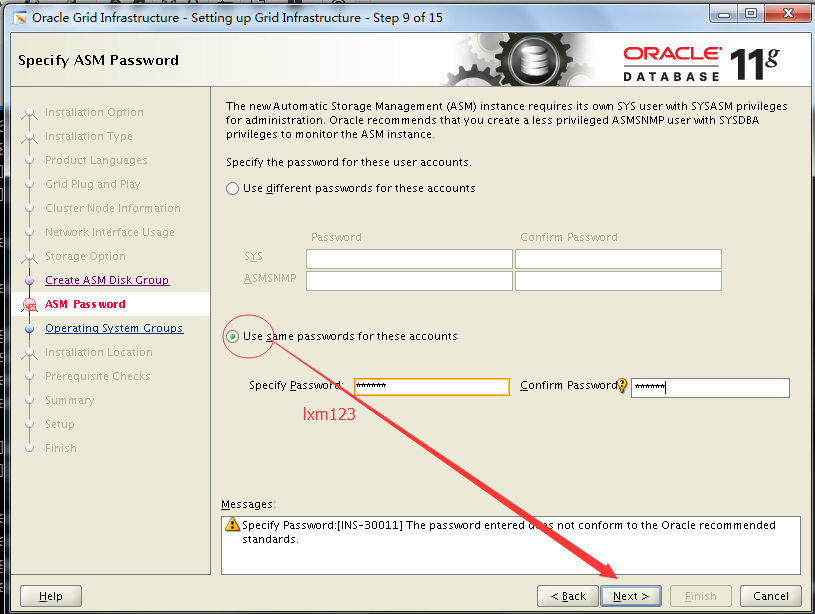

选择相同的密码,next

不适用失败隔离支持,next

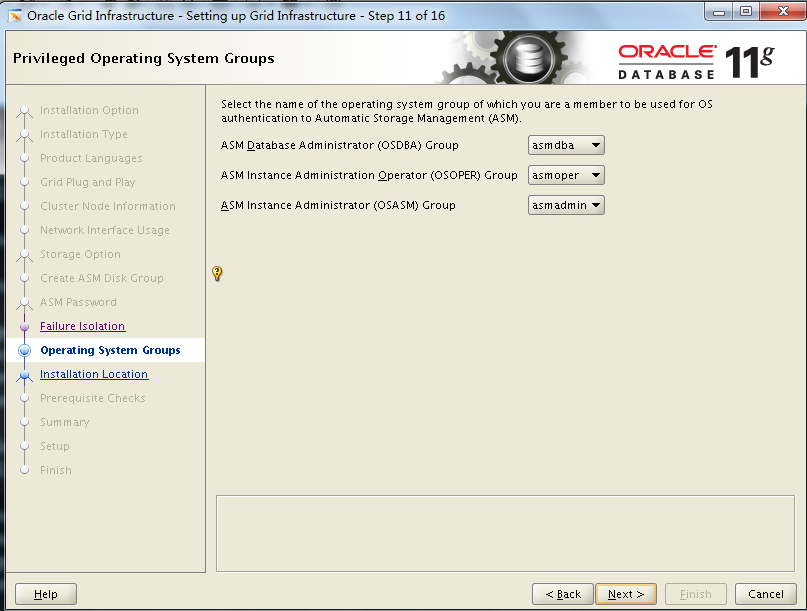

默认即可,next

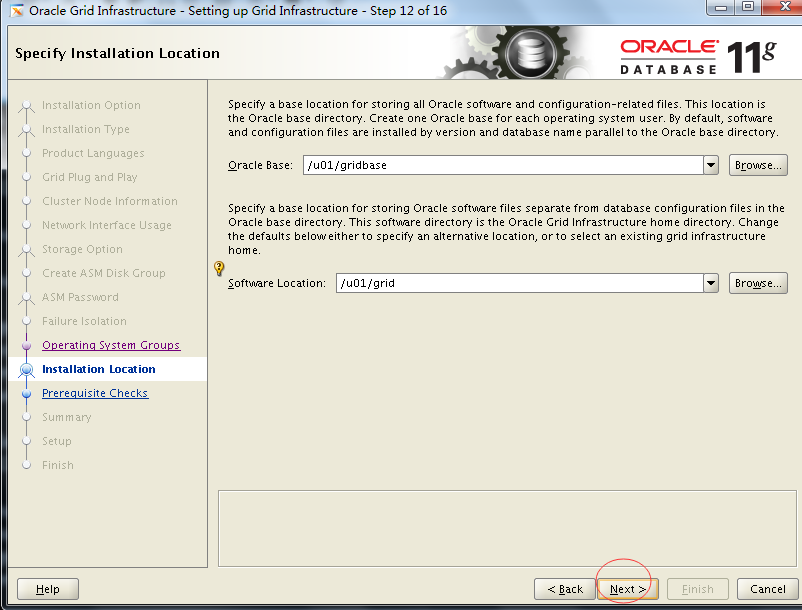

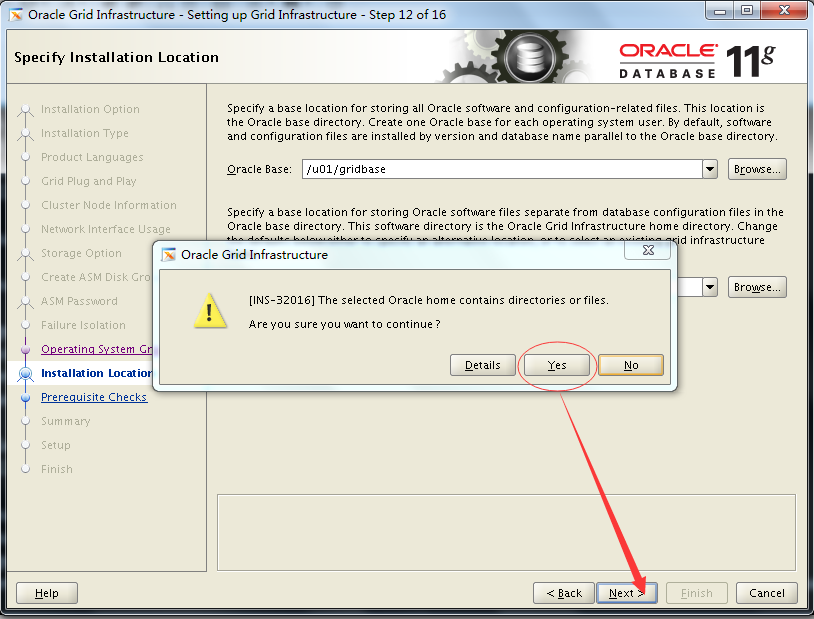

默认即可,软件会识别环境变量中的信息,对应的是ORACLE_BASE和ORACLE_HOME

选择yes

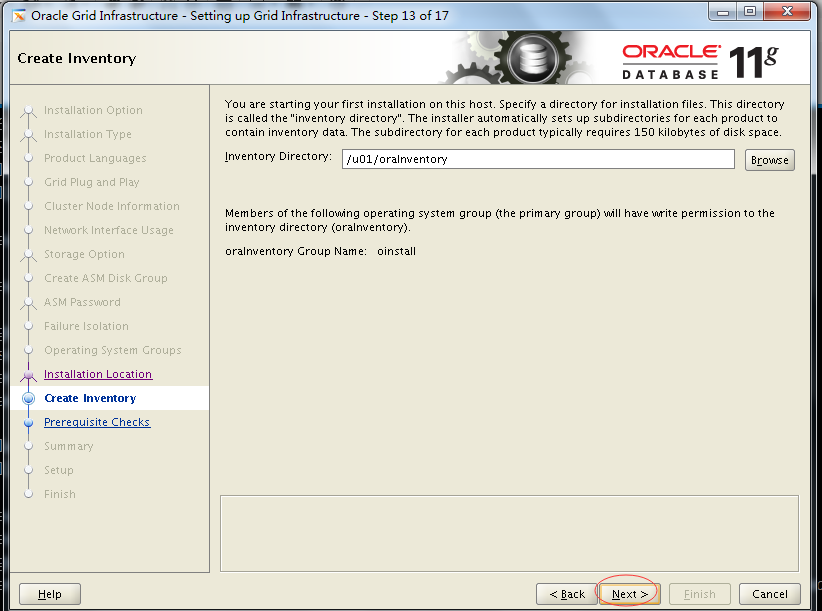

默认,next(创建inventory目录,与$GRID_BASE目录同级)

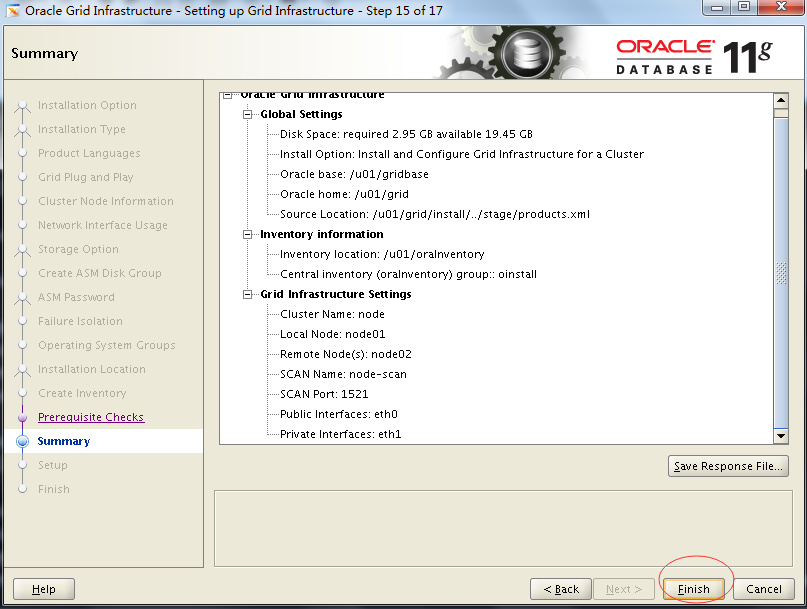

点击Finish,此时才是安装GI,之前只是在进行环境检测与安装环境准备

可以看到日志文件位置:/u01/oraInventory/logs/installActions2018-02-05_01-24-50PM.log

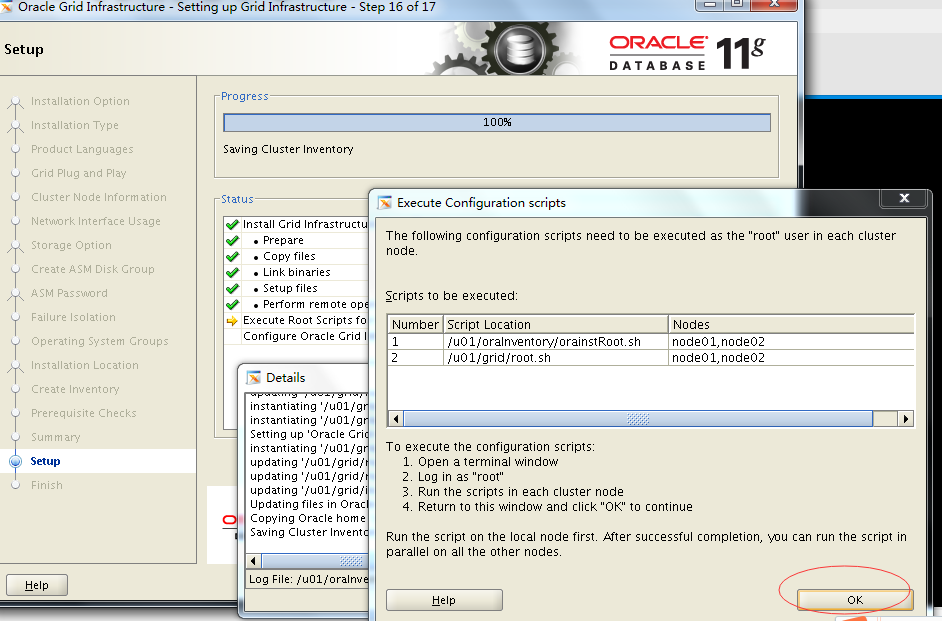

Open a new window and run the two shell scripts in order as root

/u01/oraInventory/orainstRoot.sh

/u01/grid/root.sh

ssh node02

/u01/oraInventory/orainstRoot.sh

/u01/grid/root.sh

The official documentation runs orainstRoot.sh on node01 and then on node02, followed by root.sh on node01 and then on node02.

In practice a different order also worked: running both scripts on node01 first, then both on node02.


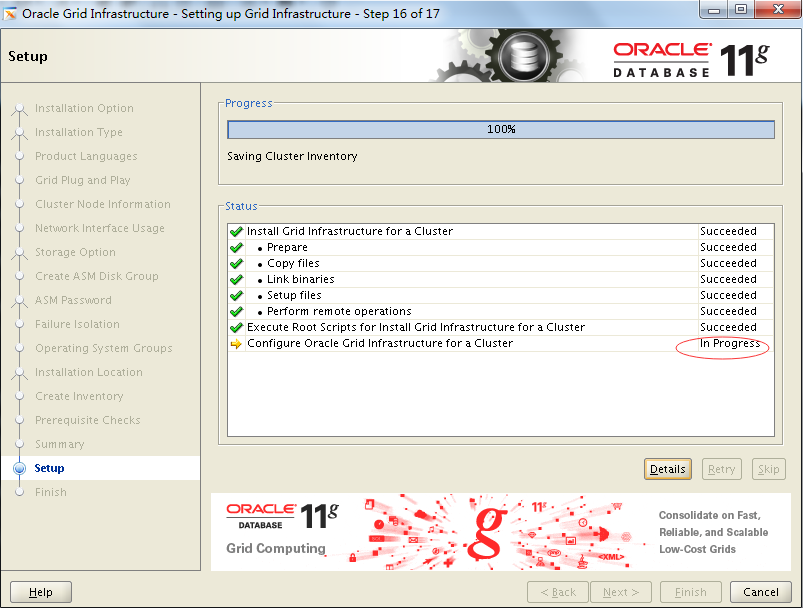

执行完成(成功)以后,点击返回窗口,点击ok

出现如下界面(在继续进行集群配置)

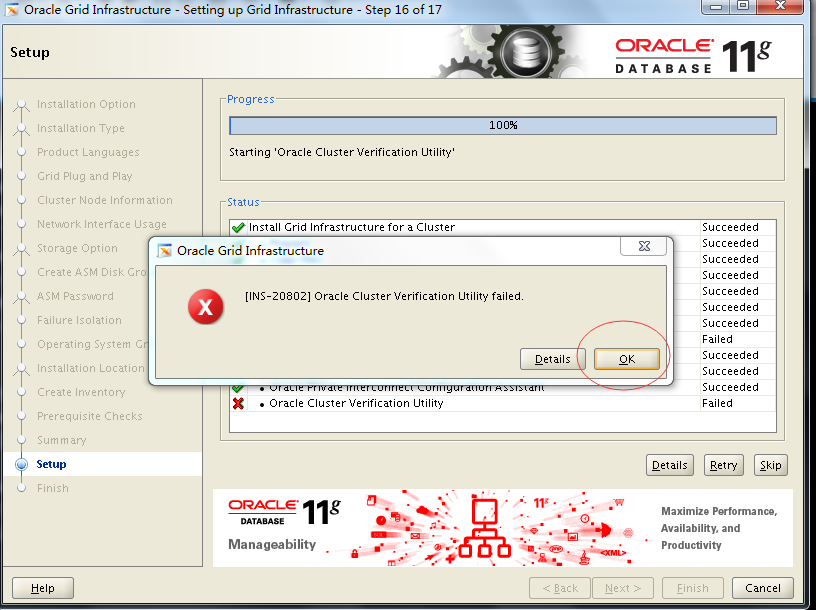

然后会出现如下界面

此错误可以忽略,只要能ping通scan ip即正常,不影响安装和使用。点击ok

点击next

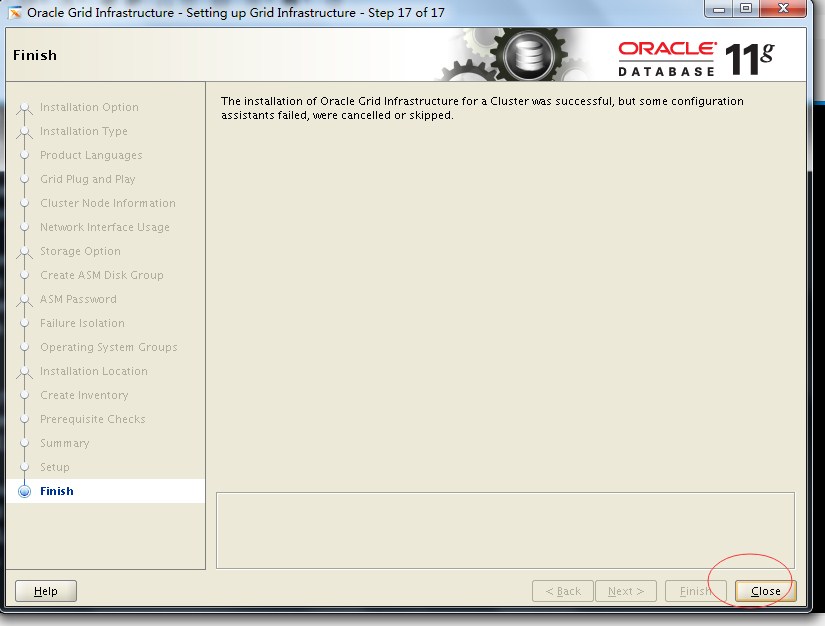

点击close

至此,Cluster集群就安装完毕了,只需要在一个节点上安装即可,会自动复制到其他节点中,这里是在node01中安装的。

(6) Check the cluster

Check the CRS status

[grid@node01 ~]$ crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online


Check the Clusterware resources

[grid@node01 ~]$ crs_stat -t -v

Name Type R/RA F/FT Target State Host

----------------------------------------------------------------------

ora.DATA.dg ora....up.type 0/5 0/ ONLINE ONLINE node01

ora....ER.lsnr ora....er.type 0/5 0/ ONLINE ONLINE node01

ora....N1.lsnr ora....er.type 0/5 0/0 ONLINE ONLINE node01

ora.asm ora.asm.type 0/5 0/ ONLINE ONLINE node01

ora.eons ora.eons.type 0/3 0/ ONLINE ONLINE node01

ora.gsd ora.gsd.type 0/5 0/ OFFLINE OFFLINE

ora....network ora....rk.type 0/5 0/ ONLINE ONLINE node01

ora....SM1.asm application 0/5 0/0 ONLINE ONLINE node01

ora....01.lsnr application 0/5 0/0 ONLINE ONLINE node01

ora.node01.gsd application 0/5 0/0 OFFLINE OFFLINE

ora.node01.ons application 0/3 0/0 ONLINE ONLINE node01

ora.node01.vip ora....t1.type 0/0 0/0 ONLINE ONLINE node01

ora....SM2.asm application 0/5 0/0 ONLINE ONLINE node02

ora....02.lsnr application 0/5 0/0 ONLINE ONLINE node02

ora.node02.gsd application 0/5 0/0 OFFLINE OFFLINE

ora.node02.ons application 0/3 0/0 ONLINE ONLINE node02

ora.node02.vip ora....t1.type 0/0 0/0 ONLINE ONLINE node02

ora.oc4j ora.oc4j.type 0/5 0/0 OFFLINE OFFLINE

ora.ons ora.ons.type 0/3 0/ ONLINE ONLINE node01

ora.scan1.vip ora....ip.type 0/0 0/0 ONLINE ONLINE node01


Check the cluster nodes

[grid@node01 ~]$ olsnodes -n

node01 1

node02 2

[grid@node01 ~]$ olsnodes

node01

node02

[grid@node01 ~]$ olsnodes -n -i -s -t

node01 1 node01-vip Active Unpinned

node02 2 node02-vip Active Unpinned


[grid@node01 ~]$ crsctl check cluster -all

**************************************************************

node01:

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

**************************************************************

node02:

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

**************************************************************


Check the Oracle TNS listener processes on both nodes

[grid@node01 ~]$ ps -ef|grep lsnr|grep -v 'grep'|grep -v 'ocfs'|awk '{print$9}'

LISTENER_SCAN1

LISTENER


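Confirm that Oracle ASM is working for the Oracle Clusterware files:

If the OCR and voting disk files were installed on Oracle ASM, run the following command as the Grid Infrastructure installation owner to confirm that the installed Oracle ASM is currently running:

[grid@node01 ~]$ srvctl status asm -a

ASM is running on node01,node02

ASM is enabled.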


3 Installing Oracle (run on node01 only)

Upload linux_x64_oracle11g.zip to node01

# unzip linux_x64_oracle11g.zip

# chown -R oracle:oinstall linux_x64_oracle11g

# chmod -R 777 linux_x64_oracle11g

# su - oracle

[oracle@node01 ~]$ cd /u01/linux_x64_oracle11g

[oracle@node01 ~]$ ./runInstaller


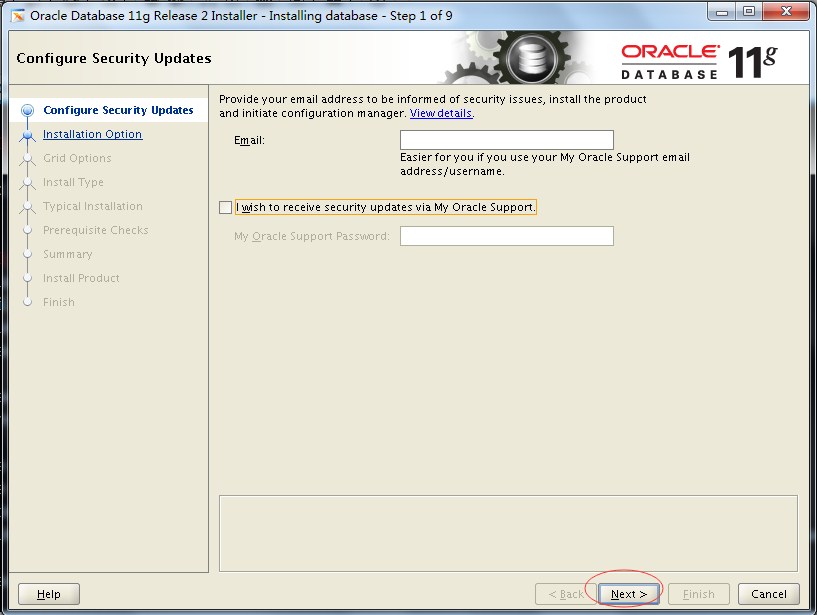

邮件不填;复选框不勾选;点击next

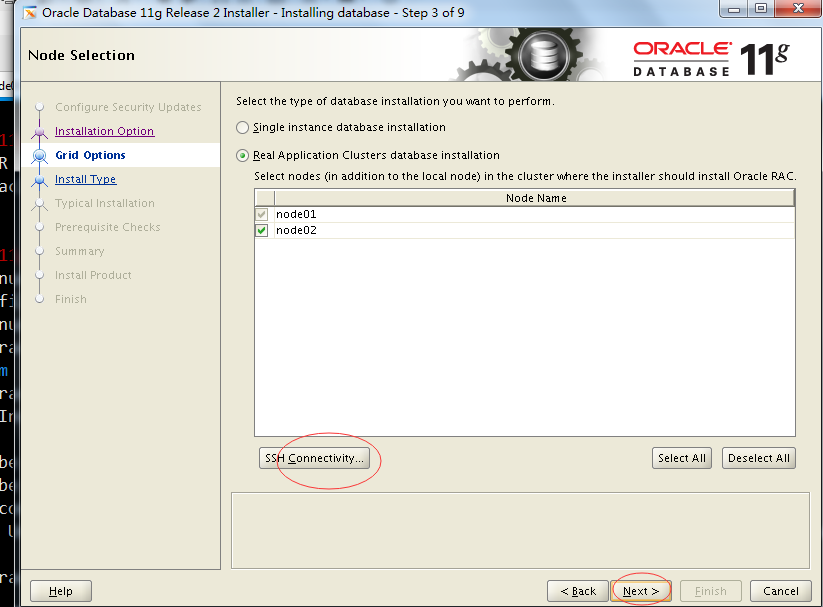

勾选第二个,next

出现如下界面

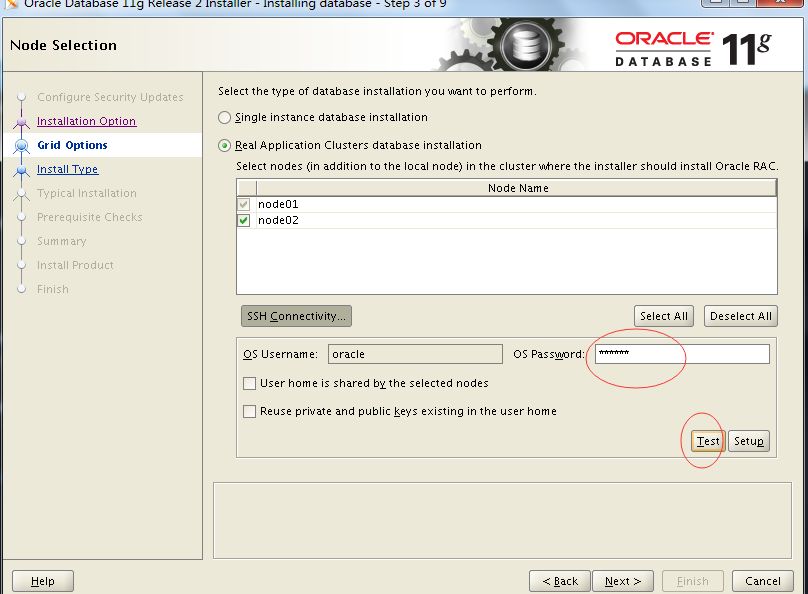

点击填写os 密码之后,点击Test,如果互信检测出错,则点击setup按钮,建立互信,然后点击next

默认,点击next

选择企业版本,点击next

默认,点击next

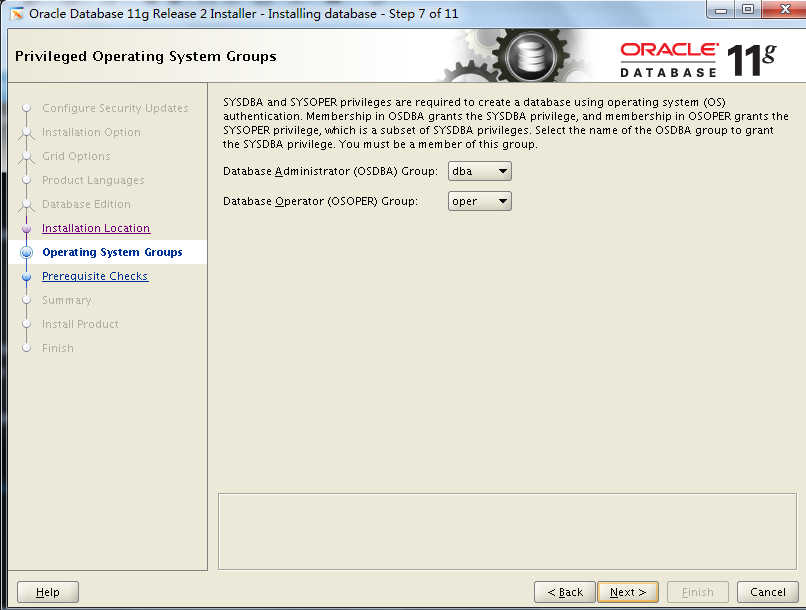

默认,点击next

保存响应文件,点击Finish,开始安装oracle

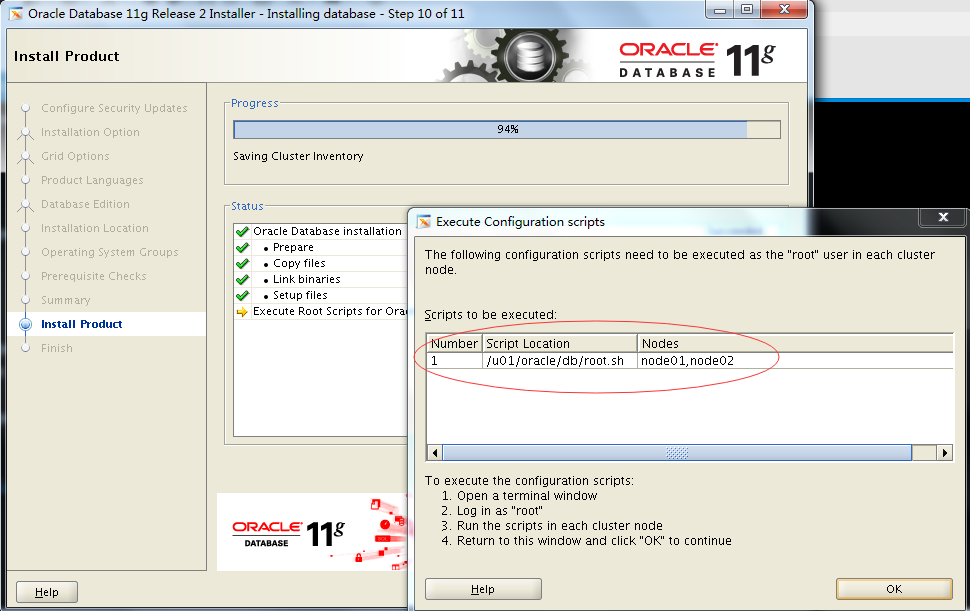

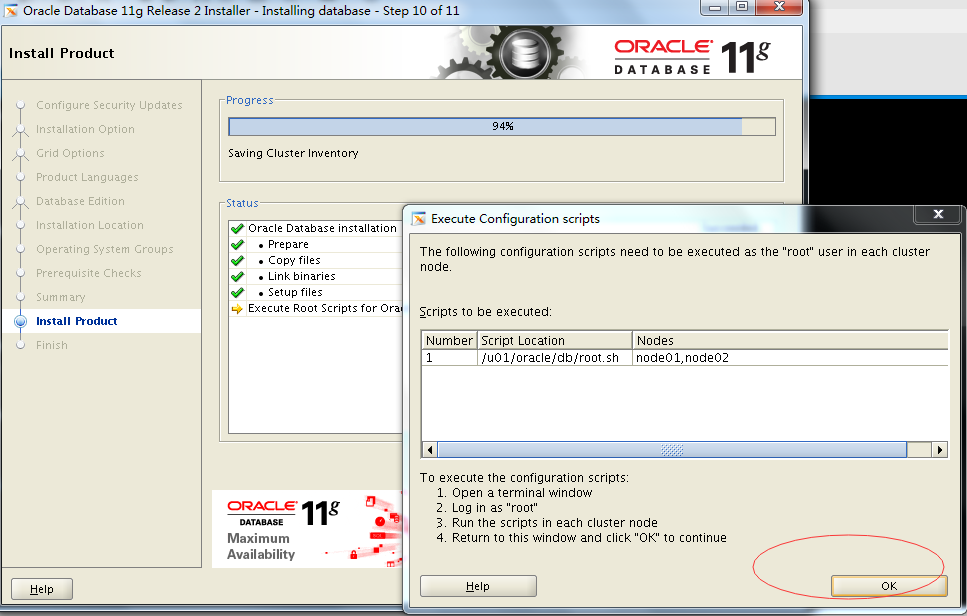

分别在node01和node02上执行root.sh脚本

然后点击ok

点击close,则oracle安装完成

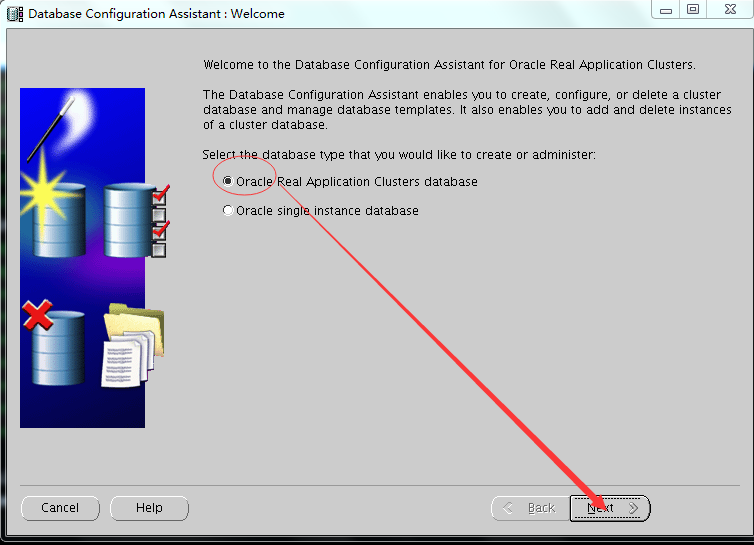

4 Creating the database with dbca

# su - oracle

[oracle@node01]$ dbca


点击next

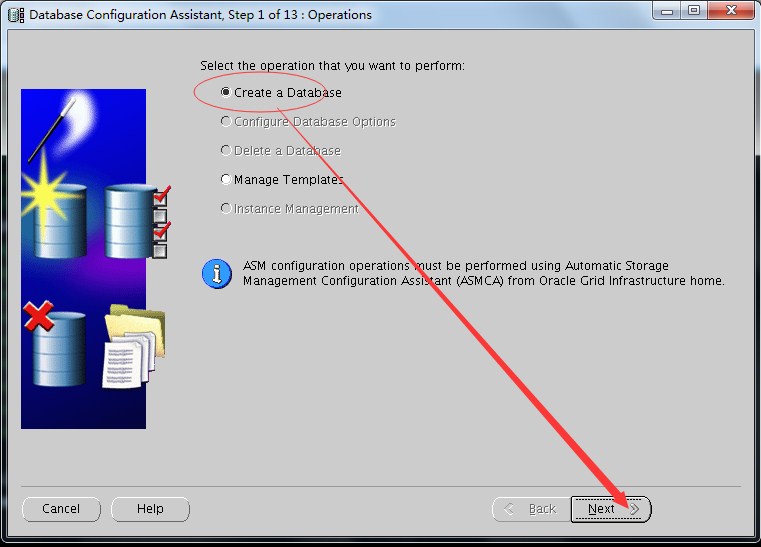

点击next

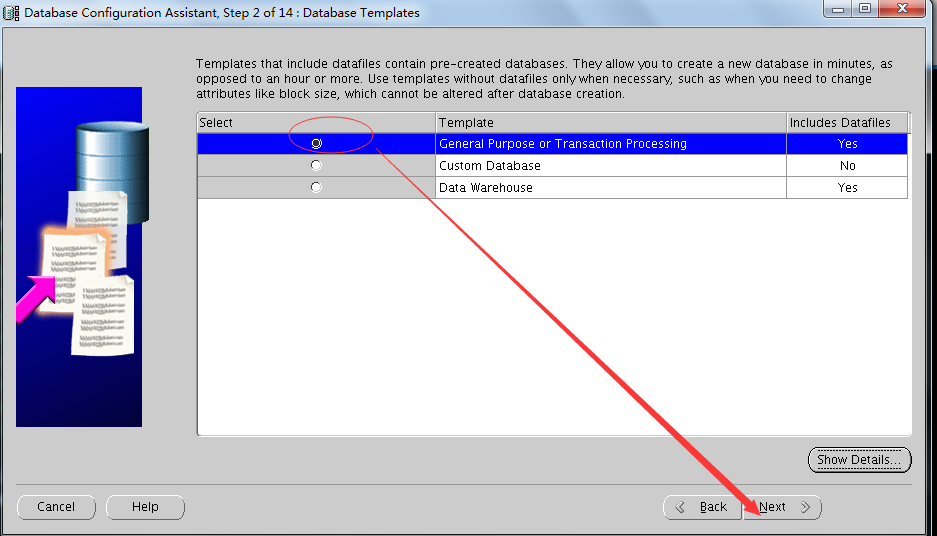

点击next(可以选择第一项或第二项,第二项是自定义数据库,可以更多的设置参数,但是创建数据库更慢)

sid前缀来自node01和node02上的$ORALCE_SID(cludb01与cludb02)

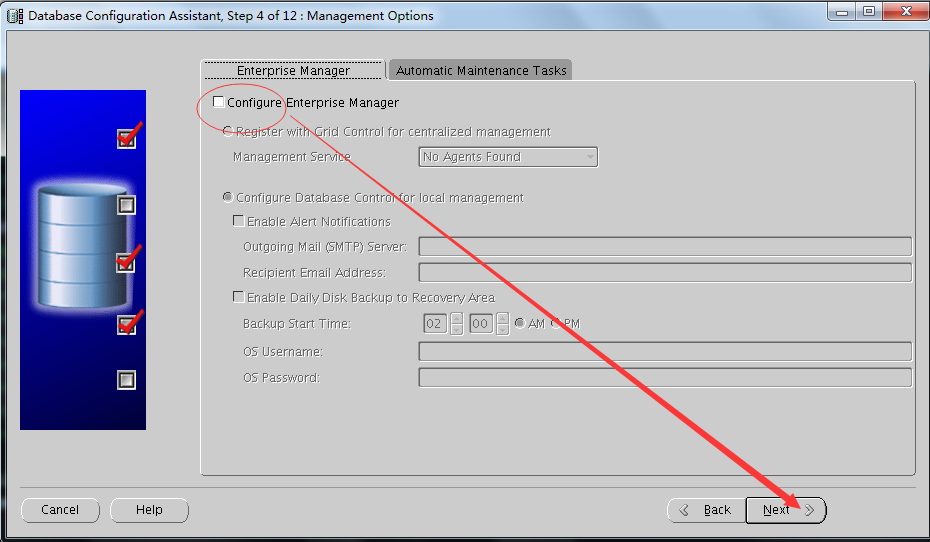

取消勾选Configure Enterprise Manager,点击next

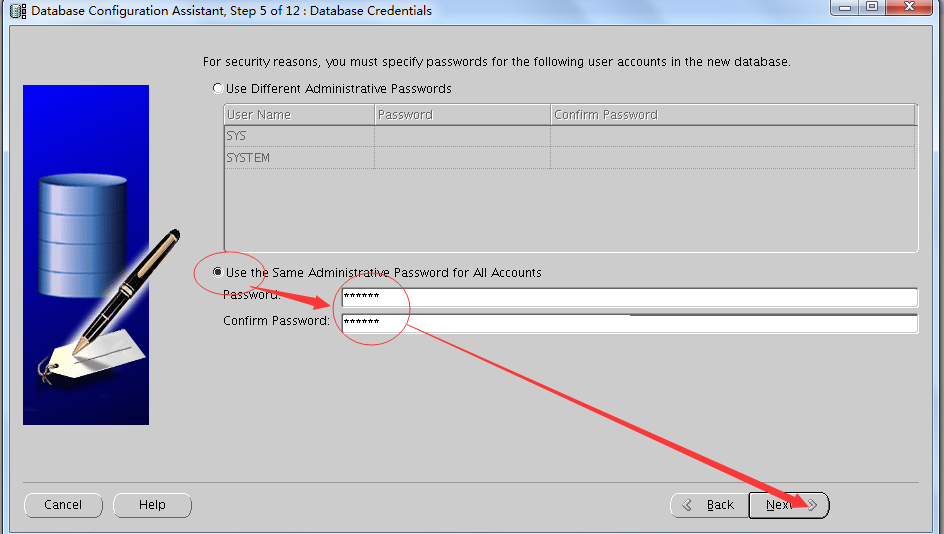

点击next

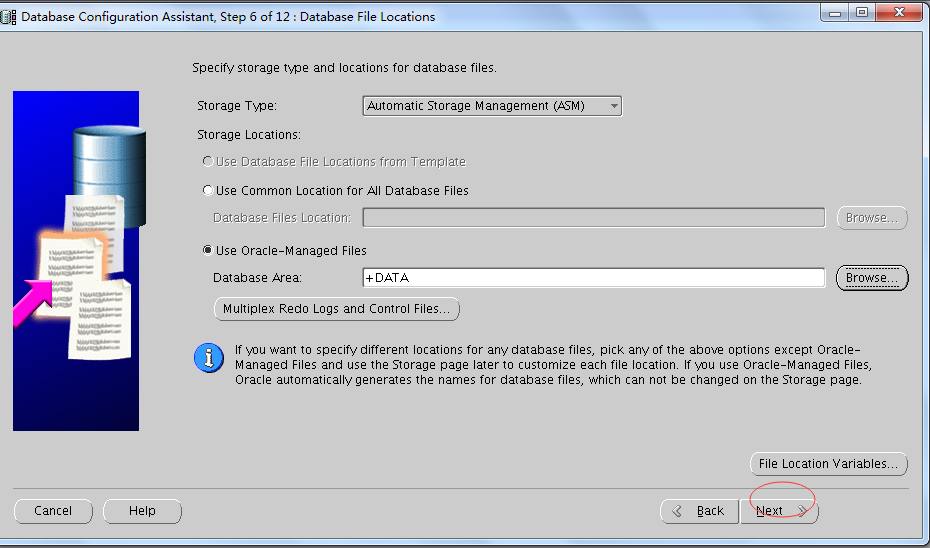

默认,点击next

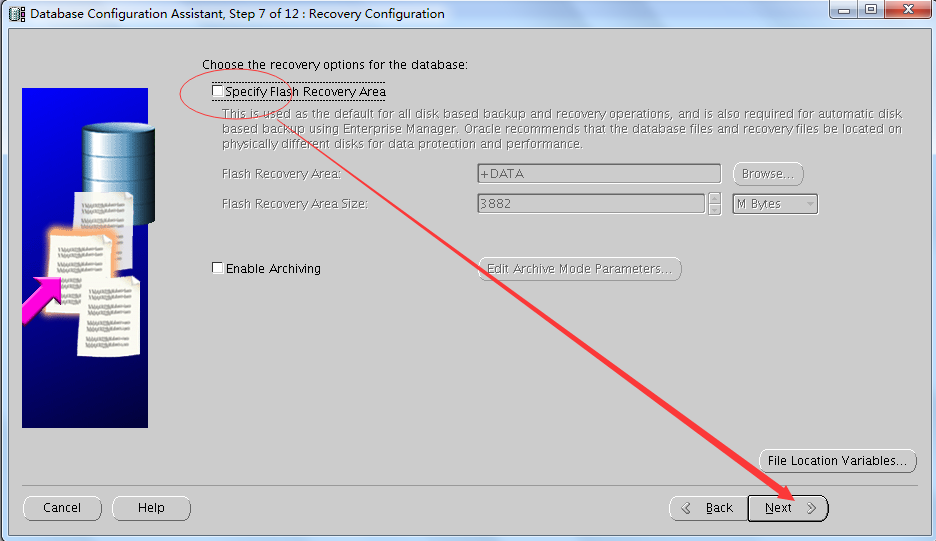

禁用FRA

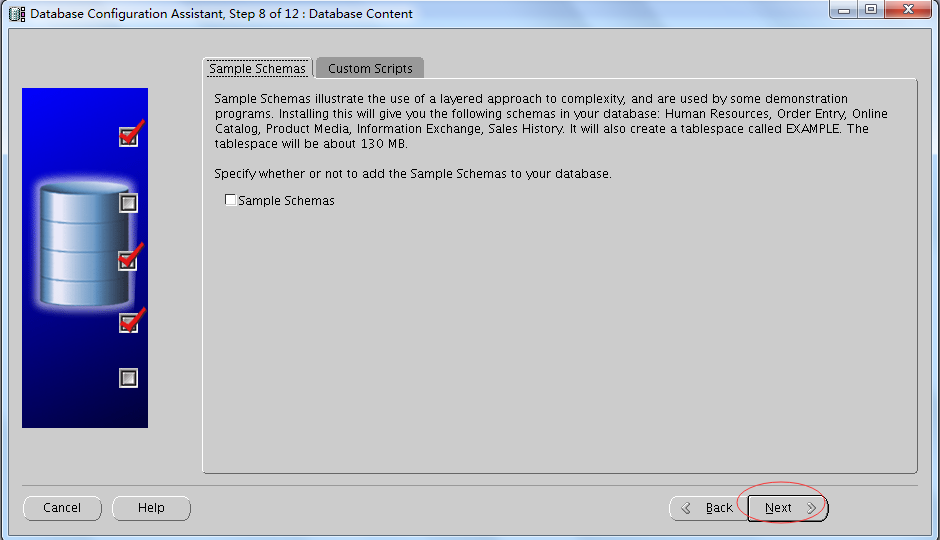

默认,点击next

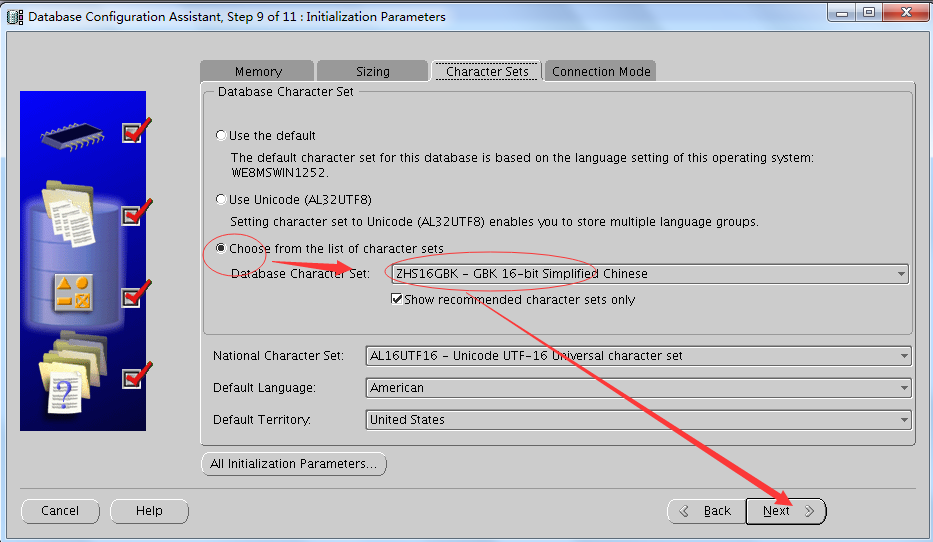

设置数据库字符集和国家字符集

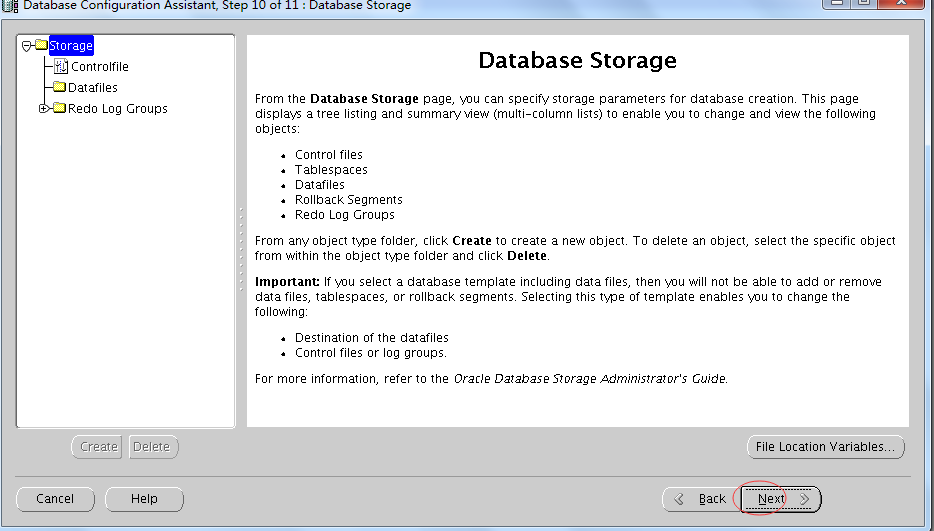

点击next

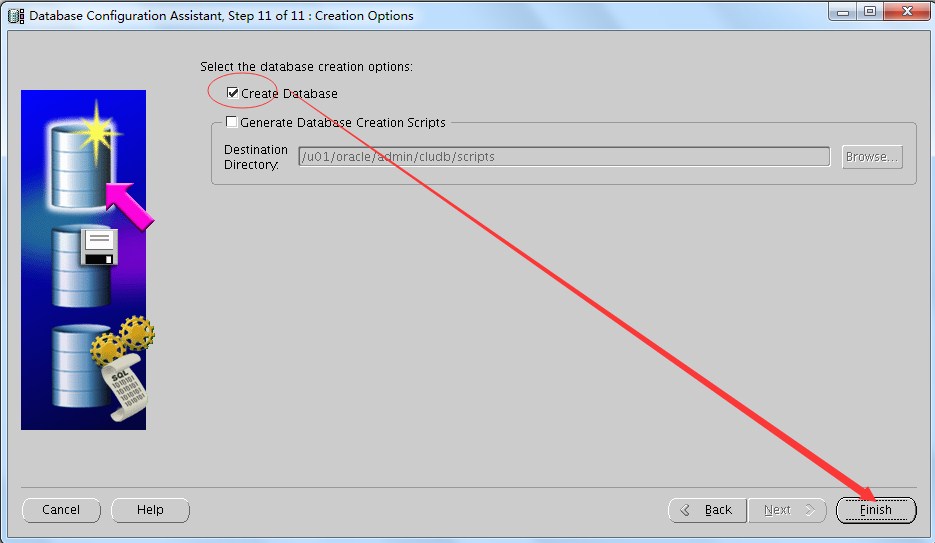

点击Finish(第二项也可以勾选)

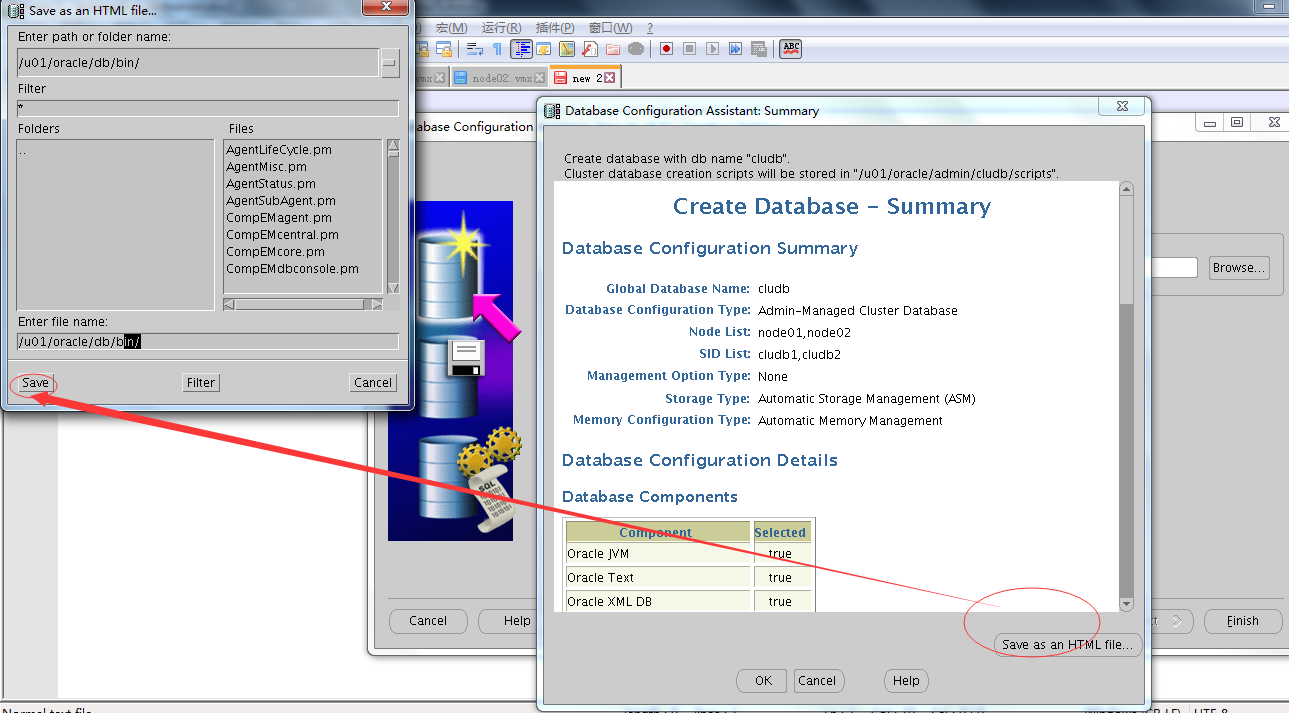

create database summary path: /u01/oracle/db/bin/

create database script path: /u01/oracle/admin/cludb/scripts2


5 Routine RAC management and maintenance

# su - grid

[grid@node01 ~]$ crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.DATA.dg ora....up.type ONLINE ONLINE node01

ora....ER.lsnr ora....er.type ONLINE ONLINE node01

ora....N1.lsnr ora....er.type ONLINE ONLINE node01

ora.asm ora.asm.type ONLINE ONLINE node01

ora.cludb.db ora....se.type ONLINE ONLINE node01

ora.eons ora.eons.type ONLINE ONLINE node01

ora.gsd ora.gsd.type OFFLINE OFFLINE

ora....network ora....rk.type ONLINE ONLINE node01

ora....SM1.asm application ONLINE ONLINE node01

ora....01.lsnr application ONLINE ONLINE node01

ora.node01.gsd application OFFLINE OFFLINE

ora.node01.ons application ONLINE ONLINE node01

ora.node01.vip ora....t1.type ONLINE ONLINE node01

ora....SM2.asm application ONLINE ONLINE node02

ora....02.lsnr application ONLINE ONLINE node02

ora.node02.gsd application OFFLINE OFFLINE

ora.node02.ons application ONLINE ONLINE node02

ora.node02.vip ora....t1.type ONLINE ONLINE node02

ora.oc4j ora.oc4j.type OFFLINE OFFLINE

ora.ons ora.ons.type ONLINE ONLINE node01

ora.scan1.vip ora....ip.type ONLINE ONLINE node01


Check the cluster database status

[grid@node01 ~]$ srvctl status database -d cludb

Instance cludb1 is running on node node01

Instance cludb2 is running on node node02

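For routine checks, the status output can be turned into a pass/fail result by counting the instances that report as running. A minimal sketch that relies only on the line format shown above; all_instances_running is a hypothetical name.

```shell
#!/bin/sh
# all_instances_running EXPECTED
# Read "srvctl status database" output on stdin and succeed only when
# exactly EXPECTED lines report "Instance ... is running on node ...".
all_instances_running() {
    running=$(grep -c '^Instance .* is running on node ' || true)
    [ "$running" -eq "$1" ]
}
```

For this two-node cluster: srvctl status database -d cludb | all_instances_running 2 && echo "all instances up"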

View the clusterware voting disk information

[grid@node01 ~]$ crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 65583bc1c3304f02bf6ead3e6c20becc (ORCL:DISK01) [DATA]

Located 1 voting disk(s).


View the cluster SCAN VIP information

[grid@node01 ~]$ srvctl config scan

SCAN name: node-scan, Network: 1/192.168.110.0/255.255.255.0/eth0

SCAN VIP name: scan1, IP: /node-scan/192.168.110.200


View the cluster SCAN Listener information

[grid@node01 ~]$ srvctl config scan_listener

SCAN Listener LISTENER_SCAN1 exists. Port: TCP:1521


Start and stop the cluster database

Stop the entire cluster database

[grid@node01 ~]$ srvctl stop database -d cludb


Start the entire cluster database

[grid@node01 ~]$ srvctl start database -d cludb


<wiz_tmp_tag id="wiz-table-range-border" contenteditable="false" style="display: none;">