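This post walks through installing a pseudo-distributed Hadoop cluster on CentOS 6, using Apache Hadoop 2.7.1. Hadoop is installed on a single Linux server, which then plays the NameNode, DataNode, and SecondaryNameNode roles at the same time.

Prerequisites

The preparation covers disabling the firewall, installing the JDK, changing the hostname, mapping the hostname to an IP address, and setting up passwordless SSH login.

Disable the firewall

The firewall can be stopped temporarily or disabled permanently; the permanent approach is used here. service iptables stop stops it until the next reboot, and chkconfig iptables off keeps it from starting at boot, so both are run.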

# Stop for the current session only
[root@youngchaolin ~]# service iptables stop
[root@youngchaolin ~]# service iptables status
iptables: Firewall is not running.

# Disable at boot (permanent)
[root@youngchaolin ~]# chkconfig iptables off
[root@youngchaolin ~]# service iptables status
iptables: Firewall is not running.
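Stopping the service and disabling it at boot are separate settings, so it can help to confirm the second one explicitly. The sketch below is a minimal helper (the function name is made up for this post) that reads the output of chkconfig --list iptables on standard input and succeeds only when no runlevel is marked on. It assumes the usual CentOS 6 output format of runlevel:state pairs.

```shell
# Hypothetical helper: succeeds only if the chkconfig listing read from
# stdin contains no runlevel in the "on" state.
iptables_disabled_at_boot() {
    ! grep -q ':on'
}
```

On the server it would be fed the real listing, for example: chkconfig --list iptables | iptables_disabled_at_boot && echo "stays off after reboot".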

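Install the JDK

Hadoop needs a JDK; version 1.8 is used here. After unpacking it, configure the environment variables.

(1) Unpack the archive.

tar -zxvf jdk-8u181-linux-x64.tar.gz

(2) Configure the environment variables: edit /etc/profile with vim, add the lines below, save and exit, then run source /etc/profile so the current shell picks them up.

# JDK
export JAVA_HOME=/home/software/jdk1.8.0_181
export PATH=$JAVA_HOME/bin:$PATH

(3) Run java -version to confirm the installation.

[root@youngchaolin /home/software/jdk1.8.0_181]# java -version
java version "1.8.0_181"
Java(TM) SE Runtime Environment (build 1.8.0_181-b13)
Java HotSpot(TM) 64-Bit Server VM (build 25.181-b13, mixed mode)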
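Editing /etc/profile by hand works, but if the setup is ever re-run it is easy to add the same lines twice. As a minimal sketch, the helper below (append_once is a name invented here, not a standard command) appends a line to a file only when that exact line is not already present.

```shell
# Hypothetical helper: append a line to a file only if the exact line
# is not already there, so repeated runs do not duplicate entries.
append_once() {
    # $1 = file to edit, $2 = line to add
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}
```

For the JDK step it could be called as append_once /etc/profile 'export JAVA_HOME=/home/software/jdk1.8.0_181', followed by the PATH line. The same helper fits the later /etc/hosts and Hadoop PATH edits.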

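Change the hostname

Changing the hostname makes the later configuration easier; here it is set to node01.

(1) Edit /etc/sysconfig/network and set the hostname to node01.

[root@youngchaolin /home/software]# vim /etc/sysconfig/network

# Contents after the edit; a hostname made of letters and digits is safest
NETWORKING=yes
HOSTNAME=node01

(2) /etc/sysconfig/network is only read at boot, so either reboot or run hostname node01 to rename the running system as well. After logging in again the prompt shows the new name.

# The host is now node01
[root@node01 ~]#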
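The edit to /etc/sysconfig/network can also be scripted. The following is a sketch under two assumptions: GNU sed is available (it is on CentOS), and the function name set_network_hostname is purely illustrative. It rewrites an existing HOSTNAME= line or adds one if the file has none.

```shell
# Hypothetical helper: set HOSTNAME=<name> in a sysconfig-style file.
set_network_hostname() {
    # $1 = path to the network file, $2 = new hostname
    if grep -q '^HOSTNAME=' "$1"; then
        # Replace the existing entry in place (GNU sed -i).
        sed -i "s/^HOSTNAME=.*/HOSTNAME=$2/" "$1"
    else
        # No entry yet: append one.
        printf 'HOSTNAME=%s\n' "$2" >> "$1"
    fi
}
```

A call such as set_network_hostname /etc/sysconfig/network node01 would make the same change as the vim edit above.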

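Map the hostname to the IP address

Edit /etc/hosts; a fully distributed setup needs the same change. With the mapping in place, passwordless login can address the machine by name. Reboot the host after the edit.

# Edit the hosts file
[root@node01 ~]# vim /etc/hosts
# Add the following line
192.168.200.100 node01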
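A wrong or missing hosts entry tends to surface much later as a confusing Hadoop error, so it is worth checking the mapping right away. The sketch below looks a name up in a hosts-format file with awk; hosts_ip_for is a hypothetical name chosen for this example.

```shell
# Hypothetical helper: print the IP that a hosts-format file maps to a name.
hosts_ip_for() {
    # $1 = hosts file, $2 = host name to look up
    awk -v name="$2" '
        /^[[:space:]]*#/ { next }    # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) { print $1; exit } }
    ' "$1"
}
```

Running hosts_ip_for /etc/hosts node01 should print 192.168.200.100 on this machine. The standard getent hosts node01 command performs a similar check through the system resolver.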

Set up passwordless login

(1) Generate a key pair, pressing Enter at every prompt; the public and private keys are created under ~/.ssh.

[root@node01 ~]# ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Created directory '/root/.ssh'.
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
39:06:64:52:7d:e1:62:18:3b:cb:81:5e:d6:8c:82:c0 root@node01
The key's randomart image is:
+--[ RSA 2048]----+
|o ..=. .. |
| E . = B... |
| . o X =.. |
| . = * o |
| . o S |
| . . |
| |
| |
| |
+-----------------+

(2) Use ssh-copy-id root@node01 to send this machine's public key to node01, where it is stored in ~/.ssh/authorized_keys. On this system the command was not found.

[root@node01 ~]# ssh-copy-id root@node01
-bash: ssh-copy-id: command not found

(3) Install openssh-clients with the command below; ssh-copy-id is then available.

[root@node01 ~/.ssh]# yum install openssh-clients

(4) The first connection asks whether to continue; answer yes. Once the connection succeeds, the remote host's key is added to ~/.ssh/known_hosts and the question is not asked again. Then test passwordless login and logout.

[root@node01 ~/.ssh]# ssh-copy-id root@node01
The authenticity of host 'node01 (192.168.200.100)' can't be established.
RSA key fingerprint is 6e:ff:8a:94:8c:80:d7:ba:45:fe:ff:5d:e5:5a:60:71.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added 'node01,192.168.200.100' (RSA) to the list of known hosts.
root@node01's password:
Now try logging into the machine, with "ssh 'root@node01'", and check in:
.ssh/authorized_keys
to make sure we haven't added extra keys that you weren't expecting.

# Passwordless login works
[root@node01 ~/.ssh]# ssh node01
Last login: Fri Dec 6 05:34:56 2019 from 192.168.200.10
welcom to clyang's Linux of Centos6.9
# Log out
[root@node01 ~]# logout
Connection to node01 closed.

# After setup there are four files; see the post listed at the end for what each one does
[root@node01 ~/.ssh]# ll
total 16
-rw-------. 1 root root 393 Dec 6 05:38 authorized_keys
-rw-------. 1 root root 1675 Dec 6 05:35 id_rsa
-rw-r--r--. 1 root root 393 Dec 6 05:35 id_rsa.pub
-rw-r--r--. 1 root root 404 Dec 6 05:38 known_hosts
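On a single machine, what ssh-copy-id does boils down to appending the public key to authorized_keys with restrictive permissions. If installing openssh-clients is not an option, the same result can be reached by hand. The sketch below shows that idea; authorize_key is a hypothetical helper name, and the permission values (700 for the directory, 600 for the file) are the ones sshd commonly expects.

```shell
# Hypothetical helper: add a public key to an authorized_keys file once,
# and tighten permissions the way sshd usually requires.
authorize_key() {
    # $1 = public key file, $2 = authorized_keys file
    mkdir -p "$(dirname "$2")"
    chmod 700 "$(dirname "$2")"
    touch "$2"
    # Append only if this exact key line is not already present.
    grep -qxF -- "$(cat "$1")" "$2" || cat "$1" >> "$2"
    chmod 600 "$2"
}
```

For this post's setup the call would be authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys. The key pair itself can be generated without prompts using ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa.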

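Install Hadoop

With the prerequisites done, Hadoop itself can be installed. Have the Hadoop archive ready; Apache Hadoop 2.7.1 is used here.

Unpack Hadoop

Unpack the archive into the install directory /home/software.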

[root@node01 /home/software]# tar -xf hadoop2.7.1.tar.gz

Edit hadoop-env.sh

Set JAVA_HOME to the JDK path configured earlier, and set HADOOP_CONF_DIR to Hadoop's configuration directory.

# The java implementation to use.
export JAVA_HOME=/home/software/jdk1.8.0_181

# The jsvc implementation to use. Jsvc is required to run secure datanodes
# that bind to privileged ports to provide authentication of data transfer
# protocol. Jsvc is not required if SASL is configured for authentication of
# data transfer protocol using non-privileged ports.
#export JSVC_HOME=${JSVC_HOME}

export HADOOP_CONF_DIR=/home/software/hadoop-2.7.1/etc/hadoop

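Edit core-site.xml

Property names in this file generally start with hadoop or fs; see the comments for what each one does. Only add property elements inside configuration; the other core configuration files below follow the same pattern.

<configuration>
  <!-- Default file system -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://node01:9000</value>
  </property>
  <!-- Base directory under which HDFS stores its data -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/software/hadoop-2.7.1/tmp</value>
  </property>
  <!-- Enable the trash; the interval is in minutes -->
  <property>
    <name>fs.trash.interval</name>
    <value>1440</value>
  </property>
</configuration>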

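Edit hdfs-site.xml

For a pseudo-distributed setup, set the replica count to 1. There is only one DataNode, so with the default of 3 every block would stay under-replicated.

<configuration>
  <!-- Number of replicas for HDFS files -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>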

Edit mapred-site.xml

In Hadoop 2.x, MapReduce jobs run on YARN, so this has to be configured. The 2.7.1 distribution ships only mapred-site.xml.template; copy it to mapred-site.xml before editing.

<configuration>
  <!-- Run MapReduce on YARN; this can also be set to local -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.job.ubertask.enable</name>
    <value>true</value>
  </property>
  <!-- JobHistory server -->
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>node01:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node01:19888</value>
  </property>
</configuration>

Edit yarn-site.xml

Settings for YARN, the resource scheduler.

<configuration>
  <!-- Site specific YARN configuration properties -->
  <!-- Host of the YARN ResourceManager -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node01</value>
  </property>
  <!-- Auxiliary service through which NodeManagers serve data (the MapReduce shuffle) -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.log.server.url</name>
    <value>http://node01:19888/jobhistory/logs</value>
  </property>
  <property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
  </property>
</configuration>

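Edit the slaves file

This file lists the worker nodes of the cluster, one per line. There is only node01, so it has a single entry.

# Add the node name to the slaves file
node01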
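With all the configuration files edited, a quick way to catch typos is to read a few values back before formatting. The sketch below extracts one property from a *-site.xml file using tr, sed, and grep. It is deliberately simple: hadoop_prop is a made-up name, GNU sed is assumed, and the tags must be written without inner whitespace (as in the files above). It is not a real XML parser.

```shell
# Hypothetical helper: print the <value> of a named property from a
# Hadoop *-site.xml file. Assumes GNU sed and <name>key</name> written
# without surrounding spaces.
hadoop_prop() {
    # $1 = configuration file, $2 = property name
    tr -d '\n' < "$1" \
        | sed 's#</property>#&\n#g' \
        | grep -F "<name>$2</name>" \
        | sed -n 's#.*<value>\(.*\)</value>.*#\1#p'
}
```

For example, hadoop_prop /home/software/hadoop-2.7.1/etc/hadoop/core-site.xml fs.defaultFS should print hdfs://node01:9000. Once the Hadoop commands are on PATH, hdfs getconf -confKey fs.defaultFS reports the value Hadoop itself resolves.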

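Configure the Hadoop environment variables

Add Hadoop's environment variables to /etc/profile, putting its bin and sbin directories on PATH so the shell can find the Hadoop commands.

# Hadoop
export HADOOP_HOME=/home/software/hadoop-2.7.1
export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

Run source /etc/profile to apply the change; echo $PATH shows the result.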

[root@node01 /home/software/hadoop-2.7.1/etc/hadoop]# echo $PATH

/home/software/jdk1.8.0_181/bin:/home/software/jdk1.8.0_181/bin:/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin:/home/software/hadoop-2.7.1/bin:/home/software/hadoop-2.7.1/sbin
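The PATH printed above lists the JDK bin directory twice, which is what happens when /etc/profile is sourced more than once in the same shell; it is harmless. To check for a specific directory without reading the whole string, a small helper like the one below can be used. path_has_dir is a hypothetical name, and the second argument exists only so the function can be tried on an arbitrary PATH-style string.

```shell
# Hypothetical helper: succeed if a directory is an entry of a PATH-style
# string (defaults to the current $PATH).
path_has_dir() {
    # $1 = directory to look for, $2 = optional PATH-style string
    case ":${2:-$PATH}:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}
```

For example: path_has_dir /home/software/hadoop-2.7.1/sbin && echo "sbin is on PATH".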

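Format Hadoop

This step is critical: it succeeds only if the configuration above is correct. The command used here is hadoop namenode -format; as the output shows, that form is deprecated in favor of the hdfs command (hdfs namenode -format).

Log output: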

[root@node01 /home/software/hadoop-2.7.1]# hadoop namenode -format DEPRECATED: Use of this script to execute hdfs command is deprecated. Instead use the hdfs command for it. 19/12/06 06:18:45 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ STARTUP_MSG: Starting NameNode STARTUP_MSG: host = node01/192.168.200.100 STARTUP_MSG: args = [-format] STARTUP_MSG: version = 2.7.1 STARTUP_MSG: classpath = /home/software/hadoop-2.7.1/etc/hadoop:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jetty-util-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/paranamer-2.3.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/zookeeper-3.4.6.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-cli-1.2.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-math3-3.1.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-configuration-1.6.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/gson-2.2.4.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jsr305-3.0.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jersey-json-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/httpcore-4.2.5.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/junit-4.11.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/activation-1.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-net-3.1.jar:/home/software/hadoop-2
.7.1/share/hadoop/common/lib/commons-logging-1.1.3.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/xz-1.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-compress-1.4.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jets3t-0.9.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/hadoop-auth-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/asm-3.2.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-httpclient-3.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/stax-api-1.0-2.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-lang-2.6.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/httpclient-4.2.5.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jetty-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-digester-1.8.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jettison-1.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-io-2.4.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/curator-client-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jsch-0.1.42.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/hadoop-annotations-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jersey-server-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jersey-core-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/guava-11.0.2.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/xmlenc-0.52.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/hamcrest-core-1.3.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/mockito-all-1.8.5.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/home/software/hadoop-2.7.1/share/hadoop/comm
on/lib/netty-3.6.2.Final.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/servlet-api-2.5.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/curator-framework-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/avro-1.7.4.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/jsp-api-2.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/log4j-1.2.17.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-codec-1.4.jar:/home/software/hadoop-2.7.1/share/hadoop/common/lib/commons-collections-3.2.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/hadoop-nfs-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/hadoop-common-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/common/hadoop-common-2.7.1-tests.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/
lib/commons-logging-1.1.3.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/asm-3.2.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-io-2.4.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/guava-11.0.2.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-2.7.1-tests.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/hdfs/hadoop-hdfs-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-cli-1.2.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/
aopalliance-1.0.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-json-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/javax.inject-1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/activation-1.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-client-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/xz-1.0.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/asm-3.2.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-lang-2.6.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jetty-6.1.26.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jettison-1.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-io-2.4.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-server-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/guice-3.0.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jersey-core-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/guava-11.0.2.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/servlet-api-2.5.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/log4j-1.2.17.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/home/software/hadoop-2.
7.1/share/hadoop/yarn/lib/commons-codec-1.4.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/lib/commons-collections-3.2.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-api-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-common-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-registry-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-common-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-client-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/javax.inject-1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/junit-4.11.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/xz-1.0.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/asm-3.2.jar:
/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/guice-3.0.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.1-tests.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.1.jar:/home/software/hadoop-2.7.1/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.1.jar:/home/software/hadoop-2.7.1/contrib/capacity-scheduler/*.jar:/home/software/hadoop-2.7.1/contrib/capacity-scheduler/*.jar STARTUP_MSG: build = 
https://git-wip-us.apache.org/repos/asf/hadoop.git -r 15ecc87ccf4a0228f35af08fc56de536e6ce657a; compiled by 'jenkins' on 2015-06-29T06:04Z
STARTUP_MSG: java = 1.8.0_181
************************************************************/
19/12/06 06:18:45 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
19/12/06 06:18:45 INFO namenode.NameNode: createNameNode [-format]
19/12/06 06:18:45 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
Formatting using clusterid: CID-269e786e-8cc8-4d37-b9aa-8ba8973a6d30
19/12/06 06:18:45 INFO namenode.FSNamesystem: No KeyProvider found.
19/12/06 06:18:45 INFO namenode.FSNamesystem: fsLock is fair:true
19/12/06 06:18:45 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000
19/12/06 06:18:45 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
19/12/06 06:18:45 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
19/12/06 06:18:45 INFO blockmanagement.BlockManager: The block deletion will start around 2019 Dec 06 06:18:45
19/12/06 06:18:45 INFO util.GSet: Computing capacity for map BlocksMap
19/12/06 06:18:45 INFO util.GSet: VM type = 64-bit
19/12/06 06:18:45 INFO util.GSet: 2.0% max memory 966.7 MB = 19.3 MB
19/12/06 06:18:45 INFO util.GSet: capacity = 2^21 = 2097152 entries
19/12/06 06:18:46 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false
19/12/06 06:18:46 INFO blockmanagement.BlockManager: defaultReplication = 1
19/12/06 06:18:46 INFO blockmanagement.BlockManager: maxReplication = 512
19/12/06 06:18:46 INFO blockmanagement.BlockManager: minReplication = 1
19/12/06 06:18:46 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
19/12/06 06:18:46 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false
19/12/06 06:18:46 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000
19/12/06 06:18:46 INFO blockmanagement.BlockManager: encryptDataTransfer = false
19/12/06 06:18:46 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
19/12/06 06:18:46 INFO namenode.FSNamesystem: fsOwner = root (auth:SIMPLE)
19/12/06 06:18:46 INFO namenode.FSNamesystem: supergroup = supergroup
19/12/06 06:18:46 INFO namenode.FSNamesystem: isPermissionEnabled = true
19/12/06 06:18:46 INFO namenode.FSNamesystem: HA Enabled: false
19/12/06 06:18:46 INFO namenode.FSNamesystem: Append Enabled: true
19/12/06 06:18:46 INFO util.GSet: Computing capacity for map INodeMap
19/12/06 06:18:46 INFO util.GSet: VM type = 64-bit
19/12/06 06:18:46 INFO util.GSet: 1.0% max memory 966.7 MB = 9.7 MB
19/12/06 06:18:46 INFO util.GSet: capacity = 2^20 = 1048576 entries
19/12/06 06:18:46 INFO namenode.FSDirectory: ACLs enabled? false
19/12/06 06:18:46 INFO namenode.FSDirectory: XAttrs enabled? true
19/12/06 06:18:46 INFO namenode.FSDirectory: Maximum size of an xattr: 16384
19/12/06 06:18:46 INFO namenode.NameNode: Caching file names occuring more than 10 times
19/12/06 06:18:46 INFO util.GSet: Computing capacity for map cachedBlocks
19/12/06 06:18:46 INFO util.GSet: VM type = 64-bit
19/12/06 06:18:46 INFO util.GSet: 0.25% max memory 966.7 MB = 2.4 MB
19/12/06 06:18:46 INFO util.GSet: capacity = 2^18 = 262144 entries
19/12/06 06:18:46 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
19/12/06 06:18:46 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0
19/12/06 06:18:46 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000
19/12/06 06:18:46 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10
19/12/06 06:18:46 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10
19/12/06 06:18:46 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25
19/12/06 06:18:46 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
19/12/06 06:18:46 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
19/12/06 06:18:46 INFO util.GSet: Computing capacity for map NameNodeRetryCache
19/12/06 06:18:46 INFO util.GSet: VM type = 64-bit
19/12/06 06:18:46 INFO util.GSet: 0.029999999329447746% max memory 966.7 MB = 297.0 KB
19/12/06 06:18:46 INFO util.GSet: capacity = 2^15 = 32768 entries
19/12/06 06:18:46 INFO namenode.FSImage: Allocated new BlockPoolId: BP-15601104-192.168.200.100-1575584326589
19/12/06 06:18:46 INFO common.Storage: Storage directory /home/software/hadoop-2.7.1/tmp/dfs/name has been successfully formatted.
19/12/06 06:18:46 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
19/12/06 06:18:46 INFO util.ExitUtil: Exiting with status 0
19/12/06 06:18:46 INFO namenode.NameNode: SHUTDOWN_MSG:
/************************************************************
SHUTDOWN_MSG: Shutting down NameNode at node01/192.168.200.100
************************************************************/

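If the log contains the line "Storage directory /home/software/hadoop-2.7.1/tmp/dfs/name has been successfully formatted." followed by "Exiting with status 0", the format succeeded.

After formatting, a tmp directory appears under the Hadoop root, containing a name folder at first; this is where the NameNode keeps its data.

[root@node01 /home/software/hadoop-2.7.1/tmp/dfs]# ll
total 4
drwxr-xr-x. 3 root root 4096 Dec 6 06:18 name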
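Formatting is meant to be done once. Formatting again assigns a new cluster ID, and a DataNode still holding data from the previous one then typically fails to start until its data directory is cleared. As a sketch of a guard against accidental re-formatting, the helper below (hypothetical name namenode_already_formatted) checks for the current/VERSION file that a formatted NameNode directory contains.

```shell
# Hypothetical guard: succeed if the NameNode storage directory already
# holds a current/VERSION file, i.e. it has been formatted before.
namenode_already_formatted() {
    # $1 = NameNode storage directory, e.g. <hadoop.tmp.dir>/dfs/name
    [ -f "$1/current/VERSION" ]
}
```

One way to use it: namenode_already_formatted /home/software/hadoop-2.7.1/tmp/dfs/name || hdfs namenode -format.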

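Start the Hadoop cluster

Because Hadoop's sbin directory is on PATH, start-all.sh can be run from any directory. It starts the NameNode, DataNode, SecondaryNameNode, and the YARN daemons together (in Hadoop 2.x the script is marked deprecated in favor of start-dfs.sh and start-yarn.sh). Use jps to list the process IDs and names.

[root@node01 /home/software/hadoop-2.7.1/tmp/dfs]# jps
2308 ResourceManager
1988 DataNode
1862 NameNode
2409 NodeManager
2154 SecondaryNameNode
2622 Jps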
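Reading the jps listing by eye is fine for one node, but a missing daemon is easy to overlook. The sketch below takes jps output on standard input and prints every expected daemon that is absent. The function name missing_daemons and the list of five daemons are assumptions based on the listing above.

```shell
# Hypothetical helper: read `jps` output on stdin and print each expected
# daemon that does not appear in it (nothing printed means all are up).
missing_daemons() {
    jps_output=$(cat)
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the second column exactly, so NameNode does not match
        # SecondaryNameNode.
        printf '%s\n' "$jps_output" \
            | awk -v want="$daemon" '$2 == want { found = 1 } END { exit !found }' \
            || printf '%s\n' "$daemon"
    done
}
```

On the server it would be used as jps | missing_daemons. The JobHistory server configured in mapred-site.xml is not in the list because start-all.sh does not launch it; in Hadoop 2.x it is started separately with mr-jobhistory-daemon.sh start historyserver.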

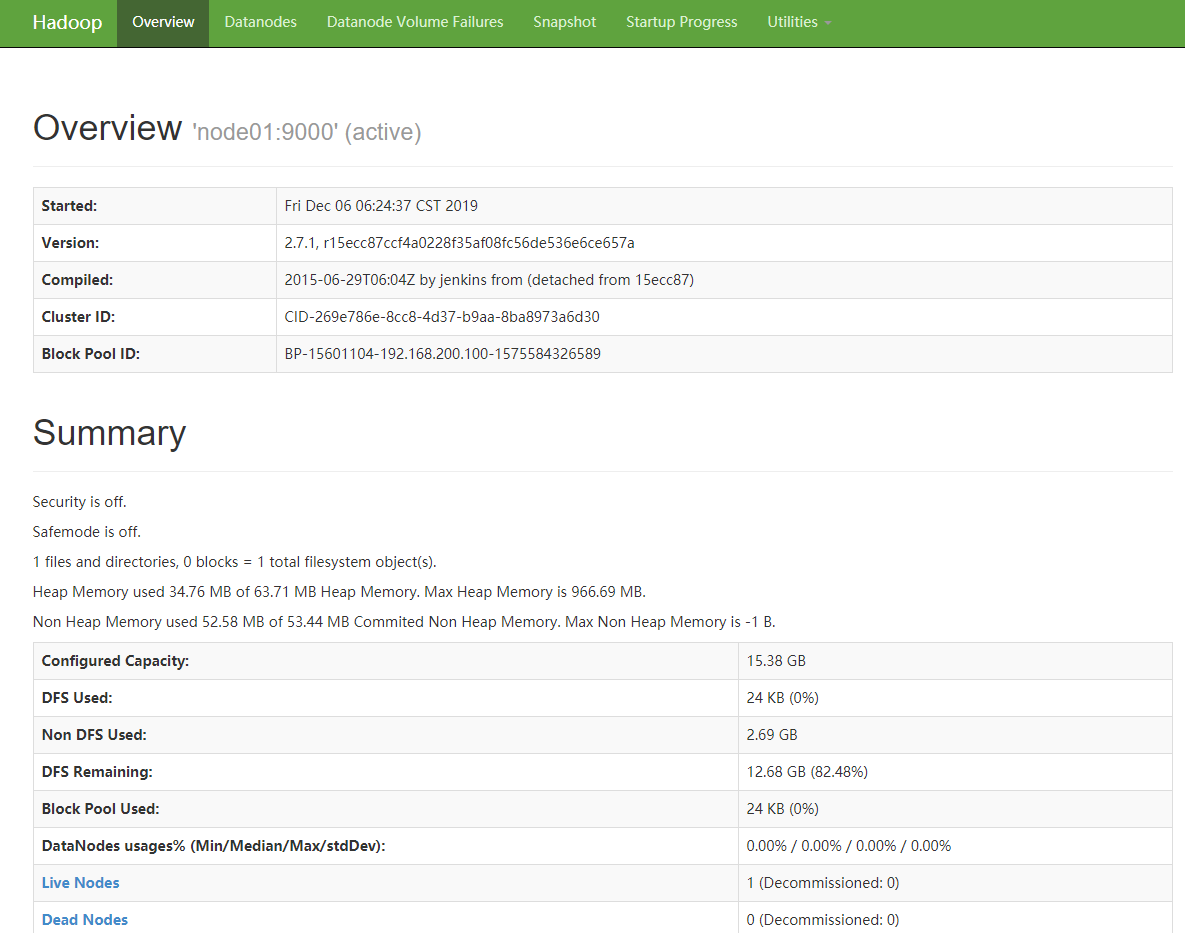

The cluster can now be reached through a browser. The default web ports of the daemons are:

NameNode → 50070

DataNode → 50075

SecondaryNameNode → 50090

ResourceManager → 8088
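The port list above can be turned into a small lookup so that checks do not hard-code numbers. This is only a sketch: web_ui_url is a hypothetical name, and the ports are the Hadoop 2.7.1 defaults listed above, which change if the corresponding properties are overridden.

```shell
# Hypothetical helper: build the default web UI URL for a daemon.
web_ui_url() {
    # $1 = host, $2 = daemon name
    case "$2" in
        NameNode)          port=50070 ;;
        DataNode)          port=50075 ;;
        SecondaryNameNode) port=50090 ;;
        ResourceManager)   port=8088 ;;
        *) echo "unknown daemon: $2" >&2; return 1 ;;
    esac
    printf 'http://%s:%s\n' "$1" "$port"
}
```

Combined with curl it gives a quick reachability test, for example: curl -s -o /dev/null -w '%{http_code}\n' "$(web_ui_url node01 NameNode)".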

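Open 192.168.200.100:50070 to reach the NameNode. If the Windows hosts file also maps node01 to this IP, node01:50070 works as well, and files can then be downloaded directly from the HDFS web UI.

Note that after the cluster starts, data and namesecondary directories appear next to name under dfs, belonging to the DataNode and SecondaryNameNode respectively. In a fully distributed cluster these three usually live on separate machines; in pseudo-distributed mode they sit together.

[root@node01 /home/software/hadoop-2.7.1/tmp/dfs]# ll
total 12
drwx------. 3 root root 4096 Dec 6 06:24 data
drwxr-xr-x. 3 root root 4096 Dec 6 06:24 name
drwxr-xr-x. 3 root root 4096 Dec 6 06:34 namesecondary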

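That completes the pseudo-distributed installation of a Hadoop cluster. It is one more installation mode alongside the fully distributed and HA distributed ones, recorded here for reference.

References:

(1) https://www.cnblogs.com/areyouready/p/8872181.html (disabling the firewall)

(2) https://www.cnblogs.com/youngchaolin/p/11706692.html (understanding public and private keys)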