Things to note about HashMap:

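(1) HashMap stores its data in table, an array of Node. The table is initialized lazily, on first use. The default initial capacity is 16, the load factor is 0.75, and every resize doubles the capacity. When nodes are moved during a resize, their relative order is preserved (tail insertion). Before JDK 8, head insertion was used, which causes a classic problem: when several threads resize at the same time, a bucket's list can become circular, and a later traversal then loops forever.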
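To make the numbers in (1) concrete, here is a small sketch (class and method names are hypothetical). It prints the resize threshold, capacity × load factor, for the first few doublings. It also reuses the tableSizeFor bit trick from the JDK 8 source quoted at the end of this post, which rounds a requested capacity up to a power of two.

```java
public class ResizeThresholds {
    static final int MAXIMUM_CAPACITY = 1 << 30;

    // Same bit trick as HashMap.tableSizeFor in JDK 8: smear the highest set bit of
    // (cap - 1) into every lower bit, then add 1 to get the next power of two
    static int tableSizeFor(int cap) {
        int n = cap - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    // threshold = capacity * loadFactor: once size exceeds it, the table is resized
    static int threshold(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    public static void main(String[] args) {
        int cap = 16;                            // DEFAULT_INITIAL_CAPACITY
        for (int i = 0; i < 4; i++) {
            System.out.println("capacity=" + cap + " threshold=" + threshold(cap, 0.75f));
            cap <<= 1;                           // each resize doubles the capacity
        }
        System.out.println(tableSizeFor(10));   // 16
        System.out.println(tableSizeFor(17));   // 32
    }
}
```

With the defaults this prints thresholds 12, 24, 48 and 96 for capacities 16, 32, 64 and 128.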

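(2) The perturbation (hash-spreading) function JDK 8 uses to reduce key collisions is very simple: one shift and one XOR, two operations in total (h ^ (h >>> 16)). Before JDK 8 the same job took 9 operations. The reason is that earlier versions relied on a thorough spread to keep entries evenly distributed across buckets. JDK 8 can get away with a cheap one, because a heavily colliding bucket is turned into a red-black tree anyway.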
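The sketch below compares the two spreading functions on two hash codes that differ only in bits above the table mask. spread8 mirrors the hash(Object) method in the JDK 8 source at the end of this post. spread7 is my recollection of JDK 7's HashMap.hash with the hashSeed/alternative-hashing branch left out, so treat it as an assumption. The class and method names are hypothetical.

```java
public class HashSpread {
    // JDK 8 style: one unsigned shift and one XOR folds the high 16 bits into the low 16
    static int spread8(int h) {
        return h ^ (h >>> 16);
    }

    // JDK 7 style supplemental hash (assumed, hashSeed handling omitted):
    // 4 shifts and 4 XORs here, plus one XOR with hashSeed in the original
    static int spread7(int h) {
        h ^= (h >>> 20) ^ (h >>> 12);
        return h ^ (h >>> 7) ^ (h >>> 4);
    }

    public static void main(String[] args) {
        int n = 16;                       // table length
        int h1 = 0x10000, h2 = 0x20000;   // differ only in bits above the mask n - 1
        // Without spreading both land in bucket 0
        System.out.println(((n - 1) & h1) + " " + ((n - 1) & h2));                   // 0 0
        // With the JDK 8 spread they land in buckets 1 and 2
        System.out.println(((n - 1) & spread8(h1)) + " " + ((n - 1) & spread8(h2))); // 1 2
        System.out.println(((n - 1) & spread7(h1)) + " " + ((n - 1) & spread7(h2)));
    }
}
```

The masking (n - 1) & h only looks at the low bits, which is why folding the high bits down matters for small tables.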

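(3) When a new node is appended to a bucket whose list already holds 8 nodes (TREEIFY_THRESHOLD), so that the list grows past 8, the list is converted into a red-black tree. This only happens if the table length is at least MIN_TREEIFY_CAPACITY (64); otherwise the table is resized instead. Conversely, when a tree bin is split during a resize and a resulting part has 6 nodes or fewer (UNTREEIFY_THRESHOLD), that part is converted back into a list.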
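The list-to-tree conversion is invisible through the public API. What it buys is a map that stays usable when many keys share one hash code. The sketch below uses a hypothetical key class whose hashCode always returns 42 and which implements Comparable<BadKey>, so every entry lands in the same bucket. By the rules above, that bucket should be treeified once the table has grown to 64 slots. Either way, put and get behave exactly as usual.

```java
import java.util.HashMap;
import java.util.Map;

public class CollidingKeys {
    // Hypothetical key type: every instance has the same hashCode, so all keys share a bin.
    // It implements Comparable<BadKey>, so a treeified bin can order keys with compareTo.
    static final class BadKey implements Comparable<BadKey> {
        final int id;
        BadKey(int id) { this.id = id; }
        @Override public int hashCode() { return 42; }
        @Override public boolean equals(Object o) {
            return o instanceof BadKey && ((BadKey) o).id == id;
        }
        @Override public int compareTo(BadKey other) { return Integer.compare(id, other.id); }
    }

    static Map<BadKey, Integer> fill(int count) {
        Map<BadKey, Integer> map = new HashMap<>();
        for (int i = 0; i < count; i++) {
            map.put(new BadKey(i), i);
        }
        return map;
    }

    public static void main(String[] args) {
        Map<BadKey, Integer> map = fill(100);
        // Whether the bin is a list or a tree internally is not observable here
        System.out.println(map.size() + " " + map.get(new BadKey(77)));  // 100 77
    }
}
```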

Why convert a list into a tree at 8?

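Because, following the Poisson distribution given in the source comments (random hashCodes, load factor 0.75, parameter about 0.5), the probability that a bucket holds 8 entries is about 0.00000006, i.e. 6 in a hundred million. For 9 or more it is lower still: the source comment puts it at less than 1 in ten million.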
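The probabilities can be reproduced from the formula in the HashMap source comment, exp(-0.5) * pow(0.5, k) / factorial(k). The class and method names below are hypothetical, and λ = 0.5 is the value the comment assumes for the default load factor.

```java
public class PoissonBins {
    // P(a bin holds k nodes) = exp(-lambda) * lambda^k / k!
    static double poisson(double lambda, int k) {
        double factorial = 1.0;
        for (int i = 2; i <= k; i++) {
            factorial *= i;
        }
        return Math.exp(-lambda) * Math.pow(lambda, k) / factorial;
    }

    public static void main(String[] args) {
        for (int k = 0; k <= 8; k++) {
            System.out.printf("%d: %.8f%n", k, poisson(0.5, k));
        }
    }
}
```

The printed values match the table in the source comment, ending with 8: 0.00000006.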

And why convert back to a list at 6?

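Probably for two reasons. First, if the threshold were 7, right next to 8, a bin hovering around that size would flip between tree and list on successive put and remove calls, which hurts performance. The gap between 6 and 8 avoids this. Second, in a list of length 6 a lookup takes 1 comparison at best and 6 at worst, 3.5 on average, while an unlucky lookup in a 6-node tree also takes about 3 comparisons, so the difference is small. More importantly, inserting into a list is cheap, whereas a tree has to rebalance. (The source comments also point out that a TreeNode is about twice the size of a regular Node.)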

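(4) Nodes in a tree bin are ordered first by hash value, and the comparison result is recorded as dir: if dir <= 0 the new node goes to the left, otherwise to the right. If the hashes are equal and cannot decide the order, compareTo is used to derive dir when the keys are of the same Comparable class. If that does not decide it either, a tie-breaker based on class name and System.identityHashCode is used.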
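The ordering rule in (4) can be sketched as a standalone function. This is a simplified sketch, not the JDK code. The names TreeDir, dir and tieBreakOrder are used here for illustration. The real putTreeVal first returns the existing node for an equal key, checks the class via comparableClassFor, and searches both subtrees before falling back to the tie-breaker. This sketch assumes the new key is not equal to the existing one.

```java
public class TreeDir {
    // dir < 0 or dir == 0: go left, dir > 0: go right.
    // h/k are the new hash and key, ph/pk the hash and key of the node being compared.
    static int dir(int h, Object k, int ph, Object pk) {
        if (ph > h) return -1;                  // existing hash is larger: go left
        if (ph < h) return 1;                   // existing hash is smaller: go right
        // Equal hashes: use compareTo if both keys are of the same Comparable class
        if (k instanceof Comparable && pk != null && k.getClass() == pk.getClass()) {
            @SuppressWarnings("unchecked")
            int c = ((Comparable<Object>) k).compareTo(pk);
            if (c != 0) return c;
        }
        return tieBreakOrder(k, pk);
    }

    // Last resort: compare class names, then identity hash codes (never returns 0)
    static int tieBreakOrder(Object a, Object b) {
        int d = 0;
        if (a != null && b != null) d = a.getClass().getName().compareTo(b.getClass().getName());
        if (d == 0) d = (System.identityHashCode(a) <= System.identityHashCode(b)) ? -1 : 1;
        return d;
    }

    public static void main(String[] args) {
        System.out.println(dir(5, "b", 7, "a"));  // -1: decided by hash
        System.out.println(dir(7, "b", 7, "a"));  // 1: equal hashes, "b".compareTo("a")
    }
}
```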

1. The putVal method

This method does the following:

(1) First it checks whether the underlying table has been initialized; if not, it calls resize() to initialize it. Note that resize both initializes the table and expands it.

(2) From the key's hash and the table length n, it computes the index in table where the entry belongs, i = (n - 1) & hash.

(3) If table[i] is empty, it creates a Node, which wraps the hash, key, value and next pointer, and stores it in table[i].

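(4) If table[i] is already occupied, call that node p. p is always an element of table, and it may also be the head of a linked list (or the root of a tree). There are three cases: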

<1> If p's key equals the key being put, the old value is overwritten (unless onlyIfAbsent is set and the old value is non-null).

<2> If p is a tree node (TreeNode), the insertion is delegated to putTreeVal... (skipped, I didn't understand it)

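<3> In all other cases, a linked list hangs off p. Each node in the list is visited and its key compared with the key being put. On a match, the old value is overwritten as well. If the end of the list is reached, a new Node is created and appended to the tail. The list length is checked at the same time: if it now exceeds 8, treeifyBin is called to convert the list into a tree.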

(5) Source code

```java
/**
 * Implementation of Map.put
 */
final V putVal(int hash, K key, V value, boolean onlyIfAbsent, boolean evict) {
    Node<K,V>[] tab; Node<K,V> p; int n, i;
    // If table is null it has not been initialized yet (in JDK 8 the table is created on first use)
    if ((tab = table) == null || (n = tab.length) == 0)
        // resize() either initializes the table or doubles it
        n = (tab = resize()).length;
    // (table length - 1) & hash is the index where this key is stored in table
    // (bitwise &: a result bit is 1 only if both input bits are 1)
    // Is something already stored at that index?
    if ((p = tab[i = (n - 1) & hash]) == null)
        // Slot is empty (otherwise the new entry would be chained):
        // create a new Node and store it in slot i of table
        tab[i] = newNode(hash, key, value, null);
    else { // slot occupied: check the head, then the tree or the list
        Node<K,V> e; K k;
        // 1. Check whether the key matches p, the list head stored directly in table
        if (p.hash == hash &&
            ((k = p.key) == key || (key != null && key.equals(k))))
            e = p; // remember the matching node in e; the value is NOT overwritten yet
        // 2. The bin is a tree (skipped for now)
        else if (p instanceof TreeNode)
            e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);
        // 3. The bin is a linked list: colliding entries are chained via next
        else {
            for (int binCount = 0; ; ++binCount) {
                if ((e = p.next) == null) {
                    // Wrap key and value in a new Node and append it after p (tail insertion)
                    p.next = newNode(hash, key, value, null);
                    if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st
                        // convert the list into a tree
                        treeifyBin(tab, hash);
                    break;
                }
                // Same key check as above, but for the nodes further down the list
                // (the earlier check only covered the head stored in table)
                if (e.hash == hash &&
                    ((k = e.key) == key || (key != null && key.equals(k))))
                    break; // key found: leave the loop and handle it below
                p = e;
            }
        }
        // A mapping for this key already exists
        if (e != null) { // existing mapping for key
            V oldValue = e.value;
            if (!onlyIfAbsent || oldValue == null)
                e.value = value; // overwrite the old value with the new one
            afterNodeAccess(e);
            return oldValue; // return the value before overwriting
        }
    }
    // Count structural modifications of the HashMap
    ++modCount;
    // size is the number of key-value mappings; once it exceeds threshold, resize
    if (++size > threshold)
        resize();
    // Hook for evicting older entries (used by LinkedHashMap); not covered here
    afterNodeInsertion(evict);
    return null; // no previous mapping
}
```
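The steps of putVal can be condensed into a toy map. This is a minimal sketch, not the JDK code. The class MiniChainedMap is hypothetical. It reuses the JDK 8 hash spreading and the (n - 1) & hash index, and it deliberately leaves out resizing, treeification, onlyIfAbsent and the LinkedHashMap hooks.

```java
import java.util.Objects;

// Hypothetical toy map following steps (1)-(4) of putVal: lazy table, index by
// (n - 1) & hash, overwrite on an equal key, otherwise append to the bucket's tail.
public class MiniChainedMap<K, V> {
    static final class Node<K, V> {
        final int hash; final K key; V value; Node<K, V> next;
        Node(int hash, K key, V value) { this.hash = hash; this.key = key; this.value = value; }
    }

    private Node<K, V>[] table;

    static int hash(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    @SuppressWarnings("unchecked")
    public V put(K key, V value) {
        if (table == null) table = (Node<K, V>[]) new Node[16];  // (1) lazy initialization
        int hash = hash(key);
        int i = (table.length - 1) & hash;                        // (2) bucket index
        Node<K, V> p = table[i];
        if (p == null) {                                          // (3) empty bucket
            table[i] = new Node<>(hash, key, value);
            return null;
        }
        while (true) {                                            // (4) walk the list
            if (p.hash == hash && Objects.equals(p.key, key)) {
                V old = p.value;
                p.value = value;                                  // overwrite, return old value
                return old;
            }
            if (p.next == null) {
                p.next = new Node<>(hash, key, value);            // tail insertion
                return null;
            }
            p = p.next;
        }
    }

    public V get(Object key) {
        if (table == null) return null;
        int hash = hash(key);
        for (Node<K, V> e = table[(table.length - 1) & hash]; e != null; e = e.next) {
            if (e.hash == hash && Objects.equals(e.key, key)) return e.value;
        }
        return null;
    }

    public static void main(String[] args) {
        MiniChainedMap<String, Integer> m = new MiniChainedMap<>();
        m.put("Aa", 1);
        m.put("BB", 2);  // "Aa" and "BB" have the same hashCode, so they share a bucket
        System.out.println(m.put("Aa", 3) + " " + m.get("Aa") + " " + m.get("BB"));  // 1 3 2
    }
}
```

Like put in the real class, the sketch returns the previous value, or null when the key was new.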

2. The resize method

This method has two jobs: initializing the table and expanding it. Normally each expansion doubles the capacity. The interesting part is how it moves the elements of oldTab into newTab.

The source walks through every slot of oldTab with a for loop, calls the node found there e, and then examines e. Again there are three cases:

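(1) If e.next == null, nothing hangs off e (neither a tree nor a list), i.e. e's key has no collision. This is the simplest case: e's position in newTab is e.hash & (newCap - 1), the hash masked with the new table length minus one.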

(2) e is a tree node: skipped.

(3) A linked list hangs off e. I didn't fully get this one either.

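The source uses e.hash & oldCap to split the list behind e into two lists, and places them in two slots of newTab: the lo list stays at the original index j, and the hi list goes to j + oldCap. This works because the new capacity is twice the old one, so the new index (newCap - 1) & hash differs from the old index (oldCap - 1) & hash in exactly one bit, the bit with value oldCap. If that bit of the hash is 0 the index is unchanged; otherwise it grows by oldCap. It looks a little magical: entries that collided in a single list in oldTab now sit in two different slots of newTab, so the keys in one slot no longer collide with those in the other. Indirectly, this shows that resizing lowers the chance of collisions.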
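The lo/hi rule can be checked numerically. The sketch below (hypothetical class and method names) computes a node's new index in two ways: via the split rule from resize, and directly as hash & (newCap - 1). It then confirms that the two agree.

```java
public class ResizeSplit {
    // Split rule from resize: a node from old bucket j stays at j ("lo" list)
    // if hash & oldCap == 0, otherwise it moves to j + oldCap ("hi" list)
    static int newIndexBySplit(int hash, int oldCap) {
        int j = hash & (oldCap - 1);
        return (hash & oldCap) == 0 ? j : j + oldCap;
    }

    // Index computed from scratch in the doubled table
    static int newIndexDirect(int hash, int newCap) {
        return hash & (newCap - 1);
    }

    // True if both ways agree for every hash in [from, to]
    static boolean agreesForRange(int oldCap, int from, int to) {
        for (int h = from; h <= to; h++) {
            if (newIndexBySplit(h, oldCap) != newIndexDirect(h, 2 * oldCap)) return false;
        }
        return true;
    }

    public static void main(String[] args) {
        int oldCap = 16;
        // 5, 21, 37 and 53 all share bucket 5 in a 16-slot table
        for (int hash : new int[] {5, 21, 37, 53}) {
            System.out.println(hash + ": old=" + (hash & (oldCap - 1))
                    + " new=" + newIndexBySplit(hash, oldCap)
                    + " direct=" + newIndexDirect(hash, 2 * oldCap));
        }
    }
}
```

In the 32-slot table, 5 and 37 still share bucket 5 while 21 and 53 move to bucket 21. Splitting separates the two halves of the old list, but keys within one half can of course still collide.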

Source code

```java
// Initialize or expand HashMap's underlying table
final Node<K,V>[] resize() {
    // 1. Keep a reference to the current table in oldTab
    Node<K,V>[] oldTab = table;
    // Capacity of oldTab: its length (number of buckets), not the number of entries
    int oldCap = (oldTab == null) ? 0 : oldTab.length;
    // The previous resize threshold
    int oldThr = threshold;
    // Capacity and threshold of the new table
    int newCap, newThr = 0;
    if (oldCap > 0) { // the table has already been allocated
        if (oldCap >= MAXIMUM_CAPACITY) { // old capacity is already >= 1 << 30 (1073741824)
            threshold = Integer.MAX_VALUE; // cannot grow: set threshold to Integer.MAX_VALUE
            return oldTab;
        }
        // newCap is oldCap doubled
        else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&
                 oldCap >= DEFAULT_INITIAL_CAPACITY)
            newThr = oldThr << 1; // double threshold
    }
    else if (oldThr > 0) // initial capacity was placed in threshold
        newCap = oldThr;
    else {               // zero initial threshold signifies using defaults
        newCap = DEFAULT_INITIAL_CAPACITY;
        newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);
    }
    if (newThr == 0) {
        float ft = (float)newCap * loadFactor;
        newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?
                  (int)ft : Integer.MAX_VALUE);
    }
    threshold = newThr;
    // On first use, this is where the table gets initialized
    Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];
    table = newTab; // install the new array as table
    // The important part: move the entries of the old table into the new one.
    // On initialization oldTab is null and this block is skipped.
    if (oldTab != null) {
        // Walk every bucket of the old table
        for (int j = 0; j < oldCap; ++j) {
            Node<K,V> e;
            // Only non-empty buckets need work; e is the node at index j of oldTab
            if ((e = oldTab[j]) != null) {
                oldTab[j] = null; // clear slot j of oldTab
                // Moving the bucket into the new table falls into 3 cases:
                // 1. The simplest: no collision, so no list
                if (e.next == null)
                    newTab[e.hash & (newCap - 1)] = e; // put e at index e.hash & (newCap - 1) of newTab
                // 2. Collisions, and the list has already been converted into a red-black tree
                else if (e instanceof TreeNode)
                    ((TreeNode<K,V>)e).split(this, newTab, j, oldCap);
                // 3. Collisions, but still a linked list
                else {
                    Node<K,V> loHead = null, loTail = null;
                    Node<K,V> hiHead = null, hiTail = null;
                    Node<K,V> next;
                    // This do...while distributes the nodes of the list at oldTab[j] into two lists,
                    // "lo" and "hi", which are then placed at two different indexes of newTab:
                    // <1> the original index j  <2> j + oldCap (original index + old table length)
                    do {
                        next = e.next;
                        if ((e.hash & oldCap) == 0) { // lo list
                            if (loTail == null)
                                loHead = e;
                            else
                                loTail.next = e;
                            loTail = e;
                        }
                        else { // hi list
                            if (hiTail == null)
                                hiHead = e;
                            else
                                hiTail.next = e;
                            hiTail = e;
                        }
                    } while ((e = next) != null);
                    if (loTail != null) {
                        loTail.next = null;
                        newTab[j] = loHead; // put the lo list at newTab[j]
                    }
                    if (hiTail != null) {
                        hiTail.next = null;
                        newTab[j + oldCap] = hiHead; // put the hi list at newTab[j + oldCap]
                    }
                }
            }
        }
    }
    return newTab;
}
```

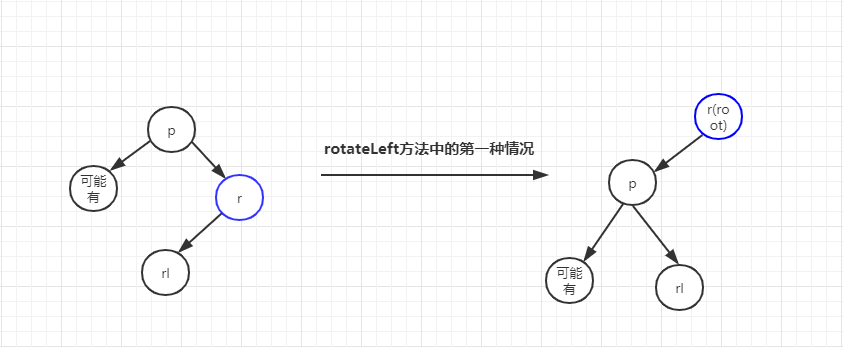

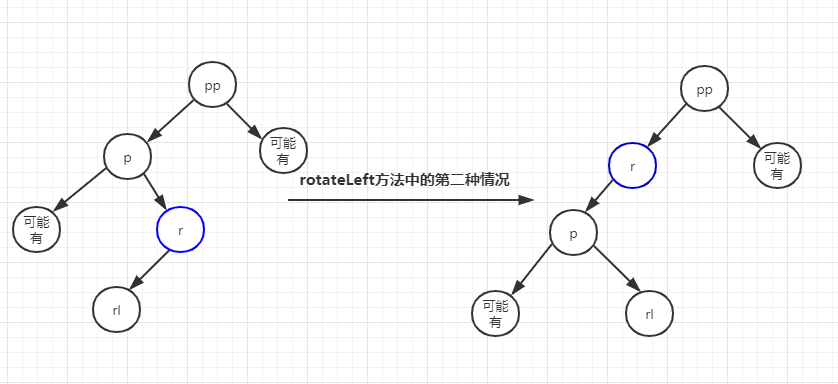

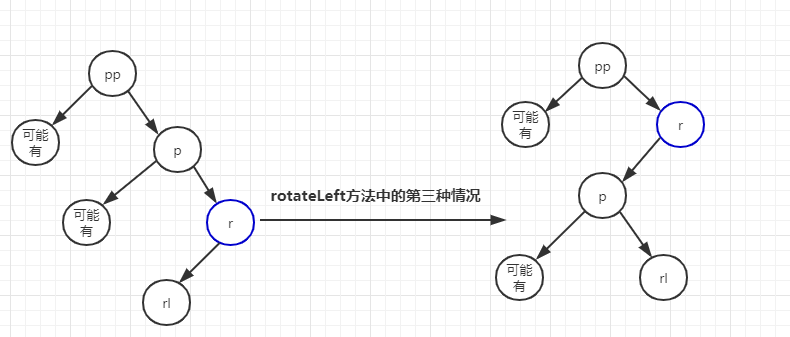

Left rotation analysis

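```java
// Left rotation
static <K, V> TreeNode<K, V> rotateLeft(TreeNode<K, V> root, TreeNode<K, V> p) {
    // Reading the code: r, p's right child, is the pivot of the left rotation
    TreeNode<K, V> r, pp, rl;
    if (p != null && (r = p.right) != null) { // r is p's right child; isn't this r the x from the figures above?
        // The pivot's left subtree becomes the right subtree of its former parent p.
        // These two lines apply to all three branches of the if...else if...else below, so they come first.
        if ((rl = p.right = r.left) != null) // if rl is null, r had no left subtree and nothing needs re-parenting
            rl.parent = p;
        // Read the following three branches together
        if ((pp = r.parent = p.parent) == null) { // p was the root (the simplest case)
            (root = r).red = false; // r becomes the (black) root ------------ case 1
        }
        else if (pp.left == p) { // p was pp's left child
            pp.left = r; // ------------ case 2
        }
        else { // p was pp's right child
            pp.right = r; // ------------ case 3
        }
        // These two lines make p the left child of r
        r.left = p;
        p.parent = r;
    }
    return root;
}
```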
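To watch the pointer updates of rotateLeft in isolation, the sketch below copies its statements onto a hypothetical, non-generic TreeNode with just the fields the rotation touches (key, parent, left, right, red). It then rotates a small hand-built tree. The helper names attach and rotatedExample are illustrative only.

```java
public class RotateLeftDemo {
    static final class TreeNode {
        final int key;
        TreeNode parent, left, right;
        boolean red;
        TreeNode(int key) { this.key = key; }
    }

    // Same statements as the rotateLeft quoted above, minus the generics
    static TreeNode rotateLeft(TreeNode root, TreeNode p) {
        TreeNode r, pp, rl;
        if (p != null && (r = p.right) != null) {
            if ((rl = p.right = r.left) != null)
                rl.parent = p;                  // r's left subtree becomes p's right subtree
            if ((pp = r.parent = p.parent) == null)
                (root = r).red = false;         // p was the root: r becomes the black root
            else if (pp.left == p)
                pp.left = r;
            else
                pp.right = r;
            r.left = p;                         // p becomes r's left child
            p.parent = r;
        }
        return root;
    }

    static TreeNode attach(TreeNode parent, TreeNode child, boolean asLeft) {
        if (asLeft) parent.left = child; else parent.right = child;
        child.parent = parent;
        return child;
    }

    // Before: 10 is the root, 20 its right child, 15 and 30 are 20's children.
    // After rotateLeft(root, 10): 20 is the root with children 10 and 30; 15 is 10's right child.
    static TreeNode rotatedExample() {
        TreeNode p = new TreeNode(10);
        TreeNode r = attach(p, new TreeNode(20), false);
        attach(r, new TreeNode(15), true);
        attach(r, new TreeNode(30), false);
        return rotateLeft(p, p);
    }

    public static void main(String[] args) {
        TreeNode root = rotatedExample();
        System.out.println(root.key + " " + root.left.key + " " + root.right.key + " " + root.left.right.key);
        // prints: 20 10 30 15
    }
}
```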

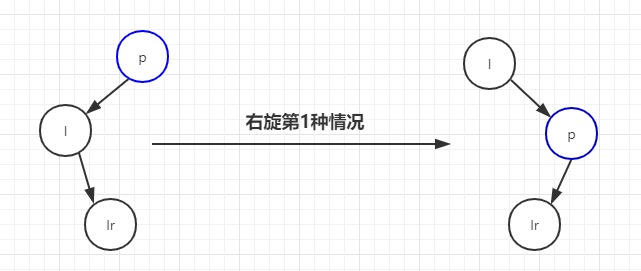

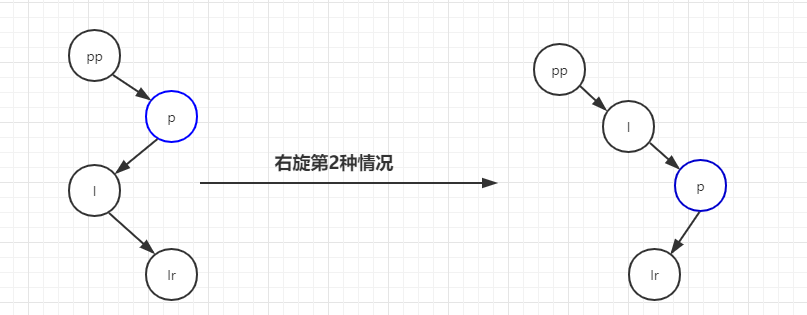

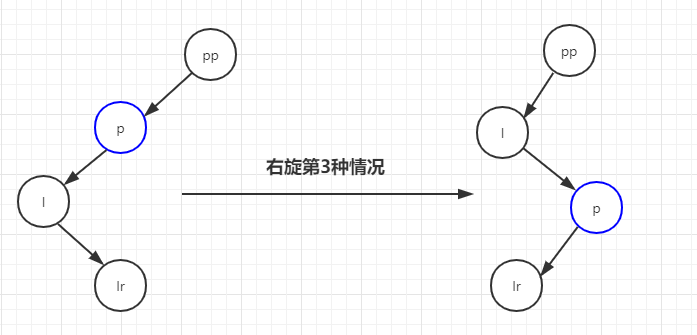

Right rotation analysis

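```java
/**
 * Right rotation: exactly the same idea as the left rotation, mirrored
 * (the pivot is p's left child instead of its right child).
 * @param root the root node
 * @param p    the node to rotate around; in balanceInsertion this is the grandparent
 *             of the current node (or, in the mirrored right-left case, its parent)
 * @param <K>
 * @param <V>
 * @return the (possibly new) root
 */
static <K, V> TreeNode<K, V> rotateRight(TreeNode<K, V> root, TreeNode<K, V> p) {
    TreeNode<K, V> l, pp, lr;
    if (p != null && (l = p.left) != null) {
        // Move l's right subtree into p's left slot (that is what a right rotation does)
        if ((lr = p.left = l.right) != null)
            lr.parent = p;
        if ((pp = l.parent = p.parent) == null) // p was the root
            (root = l).red = false;
        else if (pp.right == p)
            pp.right = l;
        else
            pp.left = l;
        l.right = p;
        p.parent = l;
    }
    return root;
}
```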
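The left-rotation example above only covered the case where p is the root. The sketch below exercises rotateRight one level down instead, in the branch where p is its parent's left child (pp.left = l). It again uses a hypothetical, non-generic TreeNode copy, and rotatedExample is an illustrative helper.

```java
public class RotateRightDemo {
    static final class TreeNode {
        final int key;
        TreeNode parent, left, right;
        boolean red;
        TreeNode(int key) { this.key = key; }
    }

    // Same statements as the rotateRight quoted above, minus the generics
    static TreeNode rotateRight(TreeNode root, TreeNode p) {
        TreeNode l, pp, lr;
        if (p != null && (l = p.left) != null) {
            if ((lr = p.left = l.right) != null)
                lr.parent = p;                  // l's right subtree becomes p's left subtree
            if ((pp = l.parent = p.parent) == null)
                (root = l).red = false;         // p was the root
            else if (pp.right == p)
                pp.right = l;
            else
                pp.left = l;                    // p was pp's left child: l takes its place
            l.right = p;                        // p becomes l's right child
            p.parent = l;
        }
        return root;
    }

    // Before: root 50 has left child 30; 30 has left child 20; 20 has left child 10.
    // After rotateRight(root, 30): 50's left child is 20, whose children are 10 and 30.
    static TreeNode rotatedExample() {
        TreeNode root = new TreeNode(50), a = new TreeNode(30), b = new TreeNode(20), c = new TreeNode(10);
        root.left = a; a.parent = root;
        a.left = b; b.parent = a;
        b.left = c; c.parent = b;
        return rotateRight(root, a);
    }

    public static void main(String[] args) {
        TreeNode root = rotatedExample();
        System.out.println(root.key + " " + root.left.key + " " + root.left.left.key + " " + root.left.right.key);
        // prints: 50 20 10 30
    }
}
```

Because p was not the root, the returned root is unchanged; only 50's left pointer is redirected.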

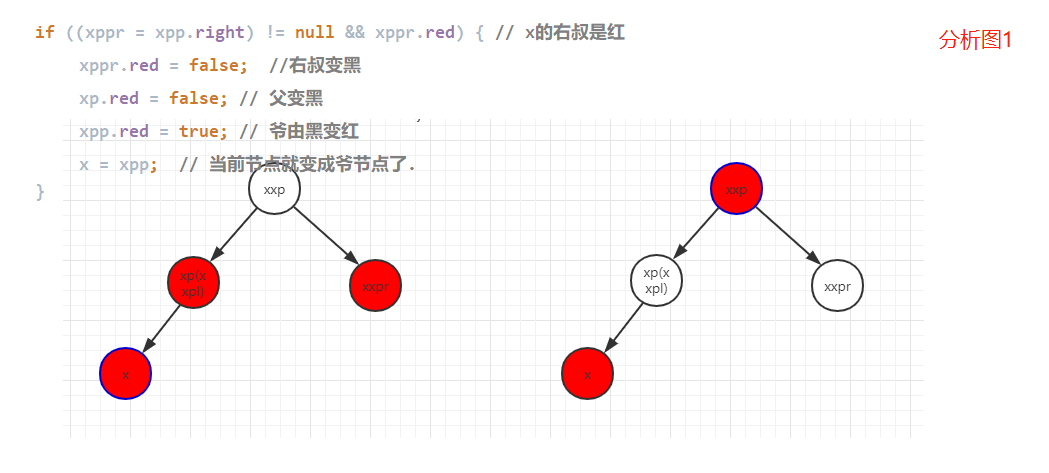

Diagram: code analysis of the balanceInsertion method

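```java
/**
 * This method mainly handles recoloring and decides when to rotate;
 * the rotations themselves are done by rotateLeft and rotateRight.
 *
 * @param root
 * @param x    the node just added to the tree
 * @param <K>
 * @param <V>
 * @return the (possibly new) root
 */
static <K, V> TreeNode<K, V> balanceInsertion(TreeNode<K, V> root, TreeNode<K, V> x) {
    // By the red-black tree rules, a newly added node is always red
    x.red = true;
    /*
     * xp   : x's parent
     * xpp  : x's grandparent
     * xppl : left child of x's grandparent
     * xppr : right child of x's grandparent
     */
    // Another infinite loop
    for (TreeNode<K, V> xp, xpp, xppl, xppr; ; ) {
        // If x has no parent,
        if ((xp = x.parent) == null) {
            // x becomes the root and turns black
            x.red = false;
            return x;
        }
        else if (!xp.red || (xpp = xp.parent) == null) // x's parent is black, or x has no grandparent
            /*
             * How to read this:
             * !xp.red: x is the new node and therefore red; its parent is not red,
             *   so neither recoloring nor rotation is needed. The tree is balanced; return root.
             * (xpp = xp.parent) == null: there is no grandparent, so xp is the root and x sits
             *   at depth 2. Whether x went left or right, the tree is balanced; return root.
             */
            return root;
        /*
         * Reaching this point, x has a parent and a grandparent, and the parent is red,
         * so the grandparent must be black (a red node cannot have a red child).
         */
        if (xp == (xppl = xpp.left)) { // xp is the grandparent's left child
            /*
             * Recoloring in this if:
             *   uncle and parent are both red (which implies the grandparent is black);
             *   uncle and parent turn black, the grandparent turns red.
             *   Recoloring alone restores balance here; the loop then continues from the grandparent.
             */
            if ((xppr = xpp.right) != null && xppr.red) { // x's right uncle is red
                xppr.red = false; // uncle turns black
                xp.red = false;   // parent turns black
                xpp.red = true;   // grandparent turns from black to red
                x = xpp;          // the grandparent becomes the current node
            }
            else {
                /*
                 * This else is entered when:
                 * 1. the right uncle is null (no node), or
                 * 2. the right uncle exists but is black.
                 * One or two rotations follow, depending on which side of xp x is on.
                 */
                if (x == xp.right) { // x is xp's right child
                    // Rotate left around the parent (hence xp is assigned to x).
                    // Since a left rotation happens here, xp must be red and the right uncle black (or null).
                    root = rotateLeft(root, x = xp);
                    // After the rotation, x is the old parent and now sits below the originally
                    // inserted node, so xp and xpp are reloaded relative to the new x
                    xpp = (xp = x.parent) == null ? null : xp.parent;
                }
                // If the rotation above ran, xp is now the originally inserted node,
                // not the old parent; the null checks are defensive
                if (xp != null) {
                    xp.red = false; // parent turns black
                    if (xpp != null) {
                        xpp.red = true; // grandparent turns red
                        root = rotateRight(root, xpp);
                    }
                }
            }
        }
        else { // mirror image: xp is the grandparent's right child
            if (xppl != null && xppl.red) { // x's left uncle is red: recolor only
                xppl.red = false;
                xp.red = false;
                xpp.red = true;
                x = xpp;
            }
            else {
                if (x == xp.left) {
                    root = rotateRight(root, x = xp);
                    xpp = (xp = x.parent) == null ? null : xp.parent;
                }
                if (xp != null) {
                    xp.red = false;
                    if (xpp != null) {
                        xpp.red = true;
                        root = rotateLeft(root, xpp);
                    }
                }
            }
        }
    }
}
```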
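The sketch below checks that the case analysis in balanceInsertion really keeps the tree balanced. It ports balanceInsertion and the two rotations onto a hypothetical int-keyed Node class. Keys are inserted with a plain binary-search-tree descent (smaller or equal keys go left, as with dir <= 0). Afterwards, three red-black rules are verified: the root is black, no red node has a red child, and every path has the same number of black nodes. This is a test harness for the logic quoted above, not HashMap's TreeNode, which also maintains the next/prev list links and orders keys by hash first.

```java
// Hypothetical int-keyed tree reusing the rotation and balanceInsertion logic quoted above
public class MiniRedBlack {
    static final class Node {
        final int key; Node parent, left, right; boolean red;
        Node(int key) { this.key = key; }
    }

    static Node rotateLeft(Node root, Node p) {
        Node r, pp, rl;
        if (p != null && (r = p.right) != null) {
            if ((rl = p.right = r.left) != null) rl.parent = p;
            if ((pp = r.parent = p.parent) == null) (root = r).red = false;
            else if (pp.left == p) pp.left = r;
            else pp.right = r;
            r.left = p; p.parent = r;
        }
        return root;
    }

    static Node rotateRight(Node root, Node p) {
        Node l, pp, lr;
        if (p != null && (l = p.left) != null) {
            if ((lr = p.left = l.right) != null) lr.parent = p;
            if ((pp = l.parent = p.parent) == null) (root = l).red = false;
            else if (pp.right == p) pp.right = l;
            else pp.left = l;
            l.right = p; p.parent = l;
        }
        return root;
    }

    static Node balanceInsertion(Node root, Node x) {
        x.red = true;
        for (Node xp, xpp, xppl, xppr; ; ) {
            if ((xp = x.parent) == null) { x.red = false; return x; }
            else if (!xp.red || (xpp = xp.parent) == null) return root;
            if (xp == (xppl = xpp.left)) {
                if ((xppr = xpp.right) != null && xppr.red) {   // red uncle: recolor, move up
                    xppr.red = false; xp.red = false; xpp.red = true; x = xpp;
                } else {                                         // black or null uncle: rotate
                    if (x == xp.right) {
                        root = rotateLeft(root, x = xp);
                        xpp = (xp = x.parent) == null ? null : xp.parent;
                    }
                    if (xp != null) {
                        xp.red = false;
                        if (xpp != null) { xpp.red = true; root = rotateRight(root, xpp); }
                    }
                }
            } else {                                             // mirror image
                if (xppl != null && xppl.red) {
                    xppl.red = false; xp.red = false; xpp.red = true; x = xpp;
                } else {
                    if (x == xp.left) {
                        root = rotateRight(root, x = xp);
                        xpp = (xp = x.parent) == null ? null : xp.parent;
                    }
                    if (xp != null) {
                        xp.red = false;
                        if (xpp != null) { xpp.red = true; root = rotateLeft(root, xpp); }
                    }
                }
            }
        }
    }

    // Plain BST insert (smaller or equal keys go left), then rebalance
    static Node insert(Node root, int key) {
        Node x = new Node(key), p = root;
        while (p != null) {
            Node next = key <= p.key ? p.left : p.right;
            if (next == null) { x.parent = p; if (key <= p.key) p.left = x; else p.right = x; break; }
            p = next;
        }
        return balanceInsertion(root, x);
    }

    // Black height of a subtree (null leaves count as black), or -1 if a rule is broken
    static int blackHeight(Node n) {
        if (n == null) return 1;
        if (n.red && ((n.left != null && n.left.red) || (n.right != null && n.right.red))) return -1;
        int lh = blackHeight(n.left), rh = blackHeight(n.right);
        if (lh < 0 || rh < 0 || lh != rh) return -1;
        return lh + (n.red ? 0 : 1);
    }

    static boolean isValidRedBlack(Node root) { return root == null || (!root.red && blackHeight(root) > 0); }
    static int size(Node n) { return n == null ? 0 : 1 + size(n.left) + size(n.right); }
    static Node build(int... keys) {
        Node root = null;
        for (int k : keys) root = insert(root, k);
        return root;
    }

    public static void main(String[] args) {
        System.out.println(isValidRedBlack(build(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)));
    }
}
```

Inserting keys in ascending order is a useful stress case. Without rebalancing, the tree would degenerate into a right-leaning chain.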

Supplement: the HashMap class source

/* * Copyright (c) 1997, 2017, Oracle and/or its affiliates. All rights reserved. * ORACLE PROPRIETARY/CONFIDENTIAL. Use is subject to license terms. * * * * * * * * * * * * * * * * * * * * */ package qinfeng.redblacktree; import sun.misc.SharedSecrets; import java.io.IOException; import java.io.InvalidObjectException; import java.io.Serializable; import java.lang.reflect.ParameterizedType; import java.lang.reflect.Type; import java.util.*; import java.util.function.BiConsumer; import java.util.function.BiFunction; import java.util.function.Consumer; import java.util.function.Function; /** * Hash table based implementation of the <tt>Map</tt> interface. This * implementation provides all of the optional map operations, and permits * <tt>null</tt> values and the <tt>null</tt> key. (The <tt>HashMap</tt> * class is roughly equivalent to <tt>Hashtable</tt>, except that it is * unsynchronized and permits nulls.) This class makes no guarantees as to * the order of the map; in particular, it does not guarantee that the order * will remain constant over time. * * <p>This implementation provides constant-time performance for the basic * operations (<tt>get</tt> and <tt>put</tt>), assuming the hash function * disperses the elements properly among the buckets. Iteration over * collection views requires time proportional to the "capacity" of the * <tt>HashMap</tt> instance (the number of buckets) plus its size (the number * of key-value mappings). Thus, it's very important not to set the initial * capacity too high (or the load factor too low) if iteration performance is * important. * * <p>An instance of <tt>HashMap</tt> has two parameters that affect its * performance: <i>initial capacity</i> and <i>load factor</i>. The * <i>capacity</i> is the number of buckets in the hash table, and the initial * capacity is simply the capacity at the time the hash table is created. 
The * <i>load factor</i> is a measure of how full the hash table is allowed to * get before its capacity is automatically increased. When the number of * entries in the hash table exceeds the product of the load factor and the * current capacity, the hash table is <i>rehashed</i> (that is, internal data * structures are rebuilt) so that the hash table has approximately twice the * number of buckets. * * <p>As a general rule, the default load factor (.75) offers a good * tradeoff between time and space costs. Higher values decrease the * space overhead but increase the lookup cost (reflected in most of * the operations of the <tt>HashMap</tt> class, including * <tt>get</tt> and <tt>put</tt>). The expected number of entries in * the map and its load factor should be taken into account when * setting its initial capacity, so as to minimize the number of * rehash operations. If the initial capacity is greater than the * maximum number of entries divided by the load factor, no rehash * operations will ever occur. * * <p>If many mappings are to be stored in a <tt>HashMap</tt> * instance, creating it with a sufficiently large capacity will allow * the mappings to be stored more efficiently than letting it perform * automatic rehashing as needed to grow the table. Note that using * many keys with the same {@code hashCode()} is a sure way to slow * down performance of any hash table. To ameliorate impact, when keys * are {@link Comparable}, this class may use comparison order among * keys to help break ties. * * <p><strong>Note that this implementation is not synchronized.</strong> * If multiple threads access a hash map concurrently, and at least one of * the threads modifies the map structurally, it <i>must</i> be * synchronized externally. (A structural modification is any operation * that adds or deletes one or more mappings; merely changing the value * associated with a key that an instance already contains is not a * structural modification.) 
This is typically accomplished by * synchronizing on some object that naturally encapsulates the map. * <p> * If no such object exists, the map should be "wrapped" using the * {@link Collections#synchronizedMap Collections.synchronizedMap} * method. This is best done at creation time, to prevent accidental * unsynchronized access to the map:<pre> * Map m = Collections.synchronizedMap(new HashMap(...));</pre> * * <p>The iterators returned by all of this class's "collection view methods" * are <i>fail-fast</i>: if the map is structurally modified at any time after * the iterator is created, in any way except through the iterator's own * <tt>remove</tt> method, the iterator will throw a * {@link ConcurrentModificationException}. Thus, in the face of concurrent * modification, the iterator fails quickly and cleanly, rather than risking * arbitrary, non-deterministic behavior at an undetermined time in the * future. * * <p>Note that the fail-fast behavior of an iterator cannot be guaranteed * as it is, generally speaking, impossible to make any hard guarantees in the * presence of unsynchronized concurrent modification. Fail-fast iterators * throw <tt>ConcurrentModificationException</tt> on a best-effort basis. * Therefore, it would be wrong to write a program that depended on this * exception for its correctness: <i>the fail-fast behavior of iterators * should be used only to detect bugs.</i> * * <p>This class is a member of the * <a href="{@docRoot}/../technotes/guides/collections/index.html"> * Java Collections Framework</a>. 
* * @param <K> the type of keys maintained by this map * @param <V> the type of mapped values * @author Doug Lea * @author Josh Bloch * @author Arthur van Hoff * @author Neal Gafter * @see Object#hashCode() * @see Collection * @see Map * @see TreeMap * @see Hashtable * @since 1.2 */ public class HashMap<K, V> extends AbstractMap<K, V> implements Map<K, V>, Cloneable, Serializable { private static final long serialVersionUID = 362498820763181265L; /* * Implementation notes. * * This map usually acts as a binned (bucketed) hash table, but * when bins get too large, they are transformed into bins of * TreeNodes, each structured similarly to those in * java.util.TreeMap. Most methods try to use normal bins, but * relay to TreeNode methods when applicable (simply by checking * instanceof a node). Bins of TreeNodes may be traversed and * used like any others, but additionally support faster lookup * when overpopulated. However, since the vast majority of bins in * normal use are not overpopulated, checking for existence of * tree bins may be delayed in the course of table methods. * * 实现说明 * HashMap通常被看成是一个hash table容器,不过当这个容器变得很大时,就会转换成TreeNodes类型的容器, * 这种容器与TreeMap的结构很类似. HashMap中大多数都是使用的hash table容器,但是在适当的时候会转成TreeNode. * 红黑树(Bins of TreeNodes)可以像其容器一样进行遍历和使用,而且在数据量时,支持更快的查找. * 然而,大多数情况,hash table存储就可以了, 所以在检测是否存在tree bin结构时,可能造成延迟 * * * Tree bins (i.e., bins whose elements are all TreeNodes) are * ordered primarily by hashCode, but in the case of ties, if two * elements are of the same "class C implements Comparable<C>", * type then their compareTo method is used for ordering. (We * conservatively check generic types via reflection to validate * this -- see method comparableClassFor). 
The added complexity * of tree bins is worthwhile in providing worst-case O(log n) * operations when keys either have distinct hashes or are * orderable, Thus, performance degrades gracefully under * accidental or malicious usages in which hashCode() methods * return values that are poorly distributed, as well as those in * which many keys share a hashCode, so long as they are also * Comparable. (If neither of these apply, we may waste about a * factor of two in time and space compared to taking no * precautions. But the only known cases stem from poor user * programming practices that are already so slow that this makes * little difference.) * * 红黑树是首先使用hashCode进行排序. 其次是调用compareTo方法进行比较(通过反射去检测它的泛型,具体方法见comparableClassFor), * 当key拥有不同的hashCode或者是有序时,时间复杂度是O(log n), 所以说引入红黑树是值得的. * * * Because TreeNodes are about twice the size of regular nodes, we * use them only when bins contain enough nodes to warrant use * (see TREEIFY_THRESHOLD). And when they become too small (due to * removal or resizing) they are converted back to plain bins. In * usages with well-distributed user hashCodes, tree bins are * rarely used. Ideally, under random hashCodes, the frequency of * nodes in bins follows a Poisson distribution * (http://en.wikipedia.org/wiki/Poisson_distribution) with a * parameter of about 0.5 on average for the default resizing * threshold of 0.75, although with a large variance because of * resizing granularity. Ignoring variance, the expected * occurrences of list size k are (exp(-0.5) * pow(0.5, k) / * factorial(k)). The first values are: * * 0: 0.60653066 * 1: 0.30326533 * 2: 0.07581633 * 3: 0.01263606 * 4: 0.00157952 * 5: 0.00015795 * 6: 0.00001316 * 7: 0.00000094 * 8: 0.00000006 * more: less than 1 in ten million * * The root of a tree bin is normally its first node. However, * sometimes (currently only upon Iterator.remove), the root might * be elsewhere, but can be recovered following parent links * (method TreeNode.root()). 
* * All applicable internal methods accept a hash code as an * argument (as normally supplied from a public method), allowing * them to call each other without recomputing user hashCodes. * Most internal methods also accept a "tab" argument, that is * normally the current table, but may be a new or old one when * resizing or converting. * * When bin lists are treeified, split, or untreeified, we keep * them in the same relative access/traversal order (i.e., field * Node.next) to better preserve locality, and to slightly * simplify handling of splits and traversals that invoke * iterator.remove. When using comparators on insertion, to keep a * total ordering (or as close as is required here) across * rebalancings, we compare classes and identityHashCodes as * tie-breakers. * * The use and transitions among plain vs tree modes is * complicated by the existence of subclass LinkedHashMap. See * below for hook methods defined to be invoked upon insertion, * removal and access that allow LinkedHashMap internals to * otherwise remain independent of these mechanics. (This also * requires that a map instance be passed to some utility methods * that may create new nodes.) * * The concurrent-programming-like SSA-based coding style helps * avoid aliasing errors amid all of the twisty pointer operations. */ /** * The default initial capacity - MUST be a power of two. */ static final int DEFAULT_INITIAL_CAPACITY = 1 << 4; // aka 16 /** * The maximum capacity, used if a higher value is implicitly specified * by either of the constructors with arguments. * MUST be a power of two <= 1<<30. */ static final int MAXIMUM_CAPACITY = 1 << 30; /** * The load factor used when none specified in constructor. */ static final float DEFAULT_LOAD_FACTOR = 0.75f; /** * The bin count threshold for using a tree rather than list for a * bin. Bins are converted to trees when adding an element to a * bin with at least this many nodes. 
The value must be greater * than 2 and should be at least 8 to mesh with assumptions in * tree removal about conversion back to plain bins upon * shrinkage. */ static final int TREEIFY_THRESHOLD = 8; /** * The bin count threshold for untreeifying a (split) bin during a * resize operation. Should be less than TREEIFY_THRESHOLD, and at * most 6 to mesh with shrinkage detection under removal. */ static final int UNTREEIFY_THRESHOLD = 6; /** * The smallest table capacity for which bins may be treeified. * (Otherwise the table is resized if too many nodes in a bin.) * Should be at least 4 * TREEIFY_THRESHOLD to avoid conflicts * between resizing and treeification thresholds. */ static final int MIN_TREEIFY_CAPACITY = 64; /** * Basic hash bin node, used for most entries. (See below for * TreeNode subclass, and in LinkedHashMap for its Entry subclass.) */ static class Node<K, V> implements Map.Entry<K, V> { final int hash; final K key; V value; Node<K, V> next; Node(int hash, K key, V value, Node<K, V> next) { this.hash = hash; this.key = key; this.value = value; this.next = next; } public final K getKey() { return key; } public final V getValue() { return value; } public final String toString() { return key + "=" + value; } public final int hashCode() { return Objects.hashCode(key) ^ Objects.hashCode(value); } public final V setValue(V newValue) { V oldValue = value; value = newValue; return oldValue; } public final boolean equals(Object o) { if (o == this) return true; if (o instanceof Map.Entry) { Map.Entry<?, ?> e = (Map.Entry<?, ?>) o; if (Objects.equals(key, e.getKey()) && Objects.equals(value, e.getValue())) return true; } return false; } } /* ---------------- Static utilities -------------- */ /** * Computes key.hashCode() and spreads (XORs) higher bits of hash * to lower. Because the table uses power-of-two masking, sets of * hashes that vary only in bits above the current mask will * always collide. 
(Among known examples are sets of Float keys * holding consecutive whole numbers in small tables.) So we * apply a transform that spreads the impact of higher bits * downward. There is a tradeoff between speed, utility, and * quality of bit-spreading. Because many common sets of hashes * are already reasonably distributed (so don't benefit from * spreading), and because we use trees to handle large sets of * collisions in bins, we just XOR some shifted bits in the * cheapest possible way to reduce systematic lossage, as well as * to incorporate impact of the highest bits that would otherwise * never be used in index calculations because of table bounds. */ static final int hash(Object key) { int h; return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16); } /** * Returns x's Class if it is of the form "class C implements * Comparable<C>", else null. */ static Class<?> comparableClassFor(Object x) { if (x instanceof Comparable) { Class<?> c; Type[] ts, as; Type t; ParameterizedType p; if ((c = x.getClass()) == String.class) // bypass checks return c; if ((ts = c.getGenericInterfaces()) != null) { for (int i = 0; i < ts.length; ++i) { if (((t = ts[i]) instanceof ParameterizedType) && ((p = (ParameterizedType) t).getRawType() == Comparable.class) && (as = p.getActualTypeArguments()) != null && as.length == 1 && as[0] == c) // type arg is c return c; } } } return null; } /** * Returns k.compareTo(x) if x matches kc (k's screened comparable * class), else 0. */ @SuppressWarnings({"rawtypes", "unchecked"}) // for cast to Comparable static int compareComparables(Class<?> kc, Object k, Object x) { return (x == null || x.getClass() != kc ? 0 : ((Comparable) k).compareTo(x)); } /** * Returns a power of two size for the given target capacity. */ static final int tableSizeFor(int cap) { int n = cap - 1; n |= n >>> 1; n |= n >>> 2; n |= n >>> 4; n |= n >>> 8; n |= n >>> 16; return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? 
MAXIMUM_CAPACITY : n + 1; } /* ---------------- Fields -------------- */ /** * The table, initialized on first use, and resized as * necessary. When allocated, length is always a power of two. * (We also tolerate length zero in some operations to allow * bootstrapping mechanics that are currently not needed.) */ transient Node<K, V>[] table; /** * Holds cached entrySet(). Note that AbstractMap fields are used * for keySet() and values(). */ transient Set<Map.Entry<K, V>> entrySet; /** * The number of key-value mappings contained in this map. */ transient int size; /** * The number of times this HashMap has been structurally modified * Structural modifications are those that change the number of mappings in * the HashMap or otherwise modify its internal structure (e.g., * rehash). This field is used to make iterators on Collection-views of * the HashMap fail-fast. (See ConcurrentModificationException). */ transient int modCount; /** * The next size value at which to resize (capacity * load factor). * * @serial */ // (The javadoc description is true upon serialization. // Additionally, if the table array has not been allocated, this // field holds the initial array capacity, or zero signifying // DEFAULT_INITIAL_CAPACITY.) int threshold; /** * The load factor for the hash table. * * @serial */ final float loadFactor; /* ---------------- Public operations -------------- */ /** * Constructs an empty <tt>HashMap</tt> with the specified initial * capacity and load factor. 
* * @param initialCapacity the initial capacity * @param loadFactor the load factor * @throws IllegalArgumentException if the initial capacity is negative * or the load factor is nonpositive */ public HashMap(int initialCapacity, float loadFactor) { if (initialCapacity < 0) throw new IllegalArgumentException("Illegal initial capacity: " + initialCapacity); if (initialCapacity > MAXIMUM_CAPACITY) initialCapacity = MAXIMUM_CAPACITY; if (loadFactor <= 0 || Float.isNaN(loadFactor)) throw new IllegalArgumentException("Illegal load factor: " + loadFactor); this.loadFactor = loadFactor; this.threshold = tableSizeFor(initialCapacity); } /** * Constructs an empty <tt>HashMap</tt> with the specified initial * capacity and the default load factor (0.75). * * @param initialCapacity the initial capacity. * @throws IllegalArgumentException if the initial capacity is negative. */ public HashMap(int initialCapacity) { this(initialCapacity, DEFAULT_LOAD_FACTOR); } /** * Constructs an empty <tt>HashMap</tt> with the default initial capacity * (16) and the default load factor (0.75). */ public HashMap() { this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted } /** * Constructs a new <tt>HashMap</tt> with the same mappings as the * specified <tt>Map</tt>. The <tt>HashMap</tt> is created with * default load factor (0.75) and an initial capacity sufficient to * hold the mappings in the specified <tt>Map</tt>. * * @param m the map whose mappings are to be placed in this map * @throws NullPointerException if the specified map is null */ public HashMap(Map<? extends K, ? extends V> m) { this.loadFactor = DEFAULT_LOAD_FACTOR; putMapEntries(m, false); } /** * Implements Map.putAll and Map constructor * * @param m the map * @param evict false when initially constructing this map, else * true (relayed to method afterNodeInsertion). */ final void putMapEntries(Map<? extends K, ? 
extends V> m, boolean evict) { int s = m.size(); if (s > 0) { if (table == null) { // pre-size float ft = ((float) s / loadFactor) + 1.0F; int t = ((ft < (float) MAXIMUM_CAPACITY) ? (int) ft : MAXIMUM_CAPACITY); if (t > threshold) threshold = tableSizeFor(t); } else if (s > threshold) resize(); for (Map.Entry<? extends K, ? extends V> e : m.entrySet()) { K key = e.getKey(); V value = e.getValue(); putVal(hash(key), key, value, false, evict); } } } /** * Returns the number of key-value mappings in this map. * * @return the number of key-value mappings in this map */ public int size() { return size; } /** * Returns <tt>true</tt> if this map contains no key-value mappings. * * @return <tt>true</tt> if this map contains no key-value mappings */ public boolean isEmpty() { return size == 0; } /** * Returns the value to which the specified key is mapped, * or {@code null} if this map contains no mapping for the key. * * <p>More formally, if this map contains a mapping from a key * {@code k} to a value {@code v} such that {@code (key==null ? k==null : * key.equals(k))}, then this method returns {@code v}; otherwise * it returns {@code null}. (There can be at most one such mapping.) * * <p>A return value of {@code null} does not <i>necessarily</i> * indicate that the map contains no mapping for the key; it's also * possible that the map explicitly maps the key to {@code null}. * The {@link #containsKey containsKey} operation may be used to * distinguish these two cases. * * @see #put(Object, Object) */ public V get(Object key) { Node<K, V> e; return (e = getNode(hash(key), key)) == null ? 
null : e.value; } /** * Implements Map.get and related methods * * @param hash hash for key * @param key the key * @return the node, or null if none */ final Node<K, V> getNode(int hash, Object key) { Node<K, V>[] tab; Node<K, V> first, e; int n; K k; if ((tab = table) != null && (n = tab.length) > 0 && (first = tab[(n - 1) & hash]) != null) { if (first.hash == hash && // always check first node ((k = first.key) == key || (key != null && key.equals(k)))) return first; if ((e = first.next) != null) { if (first instanceof TreeNode) return ((TreeNode<K, V>) first).getTreeNode(hash, key); do { if (e.hash == hash && ((k = e.key) == key || (key != null && key.equals(k)))) return e; } while ((e = e.next) != null); } } return null; } /** * Returns <tt>true</tt> if this map contains a mapping for the * specified key. * * @param key The key whose presence in this map is to be tested * @return <tt>true</tt> if this map contains a mapping for the specified * key. */ public boolean containsKey(Object key) { return getNode(hash(key), key) != null; } /** * Associates the specified value with the specified key in this map. * If the map previously contained a mapping for the key, the old * value is replaced. * * @param key key with which the specified value is to be associated * @param value value to be associated with the specified key * @return the previous value associated with <tt>key</tt>, or * <tt>null</tt> if there was no mapping for <tt>key</tt>. * (A <tt>null</tt> return can also indicate that the map * previously associated <tt>null</tt> with <tt>key</tt>.) */ public V put(K key, V value) { return putVal(hash(key), key, value, false, true); } /** * Implements Map.put and related methods * * @param hash hash for key * @param key the key * @param value the value to put * @param onlyIfAbsent if true, don't change existing value * @param evict if false, the table is in creation mode. 
* @return previous value, or null if none */ final V putVal(int hash, K key, V value, boolean onlyIfAbsent, boolean evict) { Node<K, V>[] tab; Node<K, V> p; int n, i; if ((tab = table) == null || (n = tab.length) == 0) n = (tab = resize()).length; if ((p = tab[i = (n - 1) & hash]) == null) tab[i] = newNode(hash, key, value, null); else { Node<K, V> e; K k; if (p.hash == hash && ((k = p.key) == key || (key != null && key.equals(k)))) e = p; else if (p instanceof TreeNode) e = ((TreeNode<K, V>) p).putTreeVal(this, tab, hash, key, value); else { // 循环遍历链表节点 for (int binCount = 0; ; ++binCount) { if ((e = p.next) == null) { //创建一个Node节点,然后通过尾插法添加到链表上 p.next = newNode(hash, key, value, null); // 链表节点等于8时开始转红黑树 if (binCount >= TREEIFY_THRESHOLD - 1) // 开始转树 treeifyBin(tab, hash); break; } if (e.hash == hash && ((k = e.key) == key || (key != null && key.equals(k)))) break; p = e; } } if (e != null) { // existing mapping for key V oldValue = e.value; if (!onlyIfAbsent || oldValue == null) e.value = value; afterNodeAccess(e); return oldValue; } } ++modCount; if (++size > threshold) resize(); afterNodeInsertion(evict); return null; } /** * Initializes or doubles table size. If null, allocates in * accord with initial capacity target held in field threshold. * Otherwise, because we are using power-of-two expansion, the * elements from each bin must either stay at same index, or move * with a power of two offset in the new table. * * @return the table */ final Node<K, V>[] resize() { Node<K, V>[] oldTab = table; int oldCap = (oldTab == null) ? 
            0 : oldTab.length;
        int oldThr = threshold;
        int newCap, newThr = 0;
        if (oldCap > 0) {
            if (oldCap >= MAXIMUM_CAPACITY) {
                threshold = Integer.MAX_VALUE;
                return oldTab;
            }
            else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&
                     oldCap >= DEFAULT_INITIAL_CAPACITY)
                newThr = oldThr << 1; // double threshold
        }
        else if (oldThr > 0) // initial capacity was placed in threshold
            newCap = oldThr;
        else { // zero initial threshold signifies using defaults
            newCap = DEFAULT_INITIAL_CAPACITY;
            newThr = (int) (DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);
        }
        if (newThr == 0) {
            float ft = (float) newCap * loadFactor;
            newThr = (newCap < MAXIMUM_CAPACITY && ft < (float) MAXIMUM_CAPACITY ?
                      (int) ft : Integer.MAX_VALUE);
        }
        threshold = newThr;
        @SuppressWarnings({"rawtypes", "unchecked"})
        Node<K, V>[] newTab = (Node<K, V>[]) new Node[newCap];
        table = newTab;
        if (oldTab != null) {
            for (int j = 0; j < oldCap; ++j) {
                Node<K, V> e;
                // visit each bucket of oldTab and move its nodes into newTab
                if ((e = oldTab[j]) != null) {
                    oldTab[j] = null;
                    if (e.next == null) {
                        // a single node in the bucket (no hash collision)
                        newTab[e.hash & (newCap - 1)] = e;
                    }
                    else if (e instanceof TreeNode)
                        ((TreeNode<K, V>) e).split(this, newTab, j, oldCap);
                    else {
                        // the bucket holds a linked list of Nodes: split it, keeping the
                        // original order, into a "lo" list that stays at index j
                        // ((e.hash & oldCap) == 0) and a "hi" list that moves to j + oldCap
                        Node<K, V> loHead = null, loTail = null;
                        Node<K, V> hiHead = null, hiTail = null;
                        Node<K, V> next;
                        do {
                            next = e.next;
                            if ((e.hash & oldCap) == 0) {
                                if (loTail == null)
                                    loHead = e;
                                else
                                    loTail.next = e;
                                loTail = e;
                            }
                            else {
                                if (hiTail == null)
                                    hiHead = e;
                                else
                                    hiTail.next = e;
                                hiTail = e;
                            }
                        } while ((e = next) != null);
                        if (loTail != null) {
                            loTail.next = null;
                            newTab[j] = loHead;
                        }
                        if (hiTail != null) {
                            hiTail.next = null;
                            newTab[j + oldCap] = hiHead;
                        }
                    }
                }
            }
        }
        return newTab;
    }

    /**
     * Replaces all linked nodes in bin at index for given hash unless
     * table is too small, in which case resizes instead.
     */
    final void treeifyBin(Node<K, V>[] tab, int hash) {
        int n;        // length of the table
        int index;    // bucket index of the key being added
        Node<K, V> e; // cursor over the bin's list, starting at its head
        // Why resize instead of treeifying while the table is shorter than
        // MIN_TREEIFY_CAPACITY (64)? Presumably because in a small table a long
        // chain more likely means too few buckets than poor hashes, and doubling
        // the table spreads the chain across more buckets.
        if (tab == null || (n = tab.length) < MIN_TREEIFY_CAPACITY)
            resize();
        else if ((e = tab[index = (n - 1) & hash]) != null) {
            // hd: head of the new TreeNode list
            // tl: tail of the new TreeNode list
            TreeNode<K, V> hd = null, tl = null;
            do {
                // convert the plain Node into a TreeNode
                TreeNode<K, V> p = replacementTreeNode(e, null);
                if (tl == null)
                    hd = p; // the first node is the list head; remember it in hd
                else {
                    // link tl and p in both directions (doubly linked list)
                    p.prev = tl;
                    tl.next = p;
                }
                tl = p; // tail insertion: each converted TreeNode is appended after the previous one, keeping the original order
            } while ((e = e.next) != null);
            // put the head of the TreeNode list into tab[index]
            if ((tab[index] = hd) != null)
                // this is where the list actually becomes a red-black tree
                hd.treeify(tab);
        }
    }

    /**
     * Copies all of the mappings from the specified map to this map.
     * These mappings will replace any mappings that this map had for
     * any of the keys currently in the specified map.
     *
     * @param m mappings to be stored in this map
     * @throws NullPointerException if the specified map is null
     */
    public void putAll(Map<? extends K, ? extends V> m) {
        putMapEntries(m, true);
    }

    /**
     * Removes the mapping for the specified key from this map if present.
     *
     * @param key key whose mapping is to be removed from the map
     * @return the previous value associated with <tt>key</tt>, or
     *         <tt>null</tt> if there was no mapping for <tt>key</tt>.
     *         (A <tt>null</tt> return can also indicate that the map
     *         previously associated <tt>null</tt> with <tt>key</tt>.)
     */
    public V remove(Object key) {
        Node<K, V> e;
        return (e = removeNode(hash(key), key, null, false, true)) == null ?
            null : e.value;
    }

    /**
     * Implements Map.remove and related methods
     *
     * @param hash hash for key
     * @param key the key
     * @param value the value to match if matchValue, else ignored
     * @param matchValue if true only remove if value is equal
     * @param movable if false do not move other nodes while removing
     * @return the node, or null if none
     */
    final Node<K, V> removeNode(int hash, Object key, Object value,
                                boolean matchValue, boolean movable) {
        Node<K, V>[] tab; Node<K, V> p; int n, index;
        if ((tab = table) != null && (n = tab.length) > 0 &&
            (p = tab[index = (n - 1) & hash]) != null) {
            Node<K, V> node = null, e; K k; V v;
            if (p.hash == hash &&
                ((k = p.key) == key || (key != null && key.equals(k))))
                node = p;
            else if ((e = p.next) != null) {
                if (p instanceof TreeNode)
                    node = ((TreeNode<K, V>) p).getTreeNode(hash, key);
                else {
                    do {
                        if (e.hash == hash &&
                            ((k = e.key) == key ||
                             (key != null && key.equals(k)))) {
                            node = e;
                            break;
                        }
                        p = e;
                    } while ((e = e.next) != null);
                }
            }
            if (node != null && (!matchValue || (v = node.value) == value ||
                                 (value != null && value.equals(v)))) {
                if (node instanceof TreeNode)
                    ((TreeNode<K, V>) node).removeTreeNode(this, tab, movable);
                else if (node == p)
                    tab[index] = node.next;
                else
                    p.next = node.next;
                ++modCount;
                --size;
                afterNodeRemoval(node);
                return node;
            }
        }
        return null;
    }

    /**
     * Removes all of the mappings from this map.
     * The map will be empty after this call returns.
     */
    public void clear() {
        Node<K, V>[] tab;
        modCount++;
        if ((tab = table) != null && size > 0) {
            size = 0;
            for (int i = 0; i < tab.length; ++i)
                tab[i] = null;
        }
    }

    /**
     * Returns <tt>true</tt> if this map maps one or more keys to the
     * specified value.
     *
     * @param value value whose presence in this map is to be tested
     * @return <tt>true</tt> if this map maps one or more keys to the
     *         specified value
     */
    public boolean containsValue(Object value) {
        Node<K, V>[] tab; V v;
        if ((tab = table) != null && size > 0) {
            for (int i = 0; i < tab.length; ++i) {
                for (Node<K, V> e = tab[i]; e != null; e = e.next) {
                    if ((v = e.value) == value ||
                        (value != null && value.equals(v)))
                        return true;
                }
            }
        }
        return false;
    }

    /**
     * Returns a {@link Set} view of the keys contained in this map.
     * The set is backed by the map, so changes to the map are
     * reflected in the set, and vice-versa. If the map is modified
     * while an iteration over the set is in progress (except through
     * the iterator's own <tt>remove</tt> operation), the results of
     * the iteration are undefined. The set supports element removal,
     * which removes the corresponding mapping from the map, via the
     * <tt>Iterator.remove</tt>, <tt>Set.remove</tt>,
     * <tt>removeAll</tt>, <tt>retainAll</tt>, and <tt>clear</tt>
     * operations. It does not support the <tt>add</tt> or <tt>addAll</tt>
     * operations.
     *
     * @return a set view of the keys contained in this map
     */
    public Set<K> keySet() {
        Set<K> ks = keySet;
        if (ks == null) {
            ks = new KeySet();
            keySet = ks;
        }
        return ks;
    }

    final class KeySet extends AbstractSet<K> {
        public final int size() { return size; }
        public final void clear() { HashMap.this.clear(); }
        public final Iterator<K> iterator() { return new KeyIterator(); }
        public final boolean contains(Object o) { return containsKey(o); }
        public final boolean remove(Object key) {
            return removeNode(hash(key), key, null, false, true) != null;
        }
        public final Spliterator<K> spliterator() {
            return new KeySpliterator<>(HashMap.this, 0, -1, 0, 0);
        }
        public final void forEach(Consumer<?
                super K> action) {
            Node<K, V>[] tab;
            if (action == null)
                throw new NullPointerException();
            if (size > 0 && (tab = table) != null) {
                int mc = modCount;
                for (int i = 0; i < tab.length; ++i) {
                    for (Node<K, V> e = tab[i]; e != null; e = e.next)
                        action.accept(e.key);
                }
                if (modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }
    }

    /**
     * Returns a {@link Collection} view of the values contained in this map.
     * The collection is backed by the map, so changes to the map are
     * reflected in the collection, and vice-versa. If the map is
     * modified while an iteration over the collection is in progress
     * (except through the iterator's own <tt>remove</tt> operation),
     * the results of the iteration are undefined. The collection
     * supports element removal, which removes the corresponding
     * mapping from the map, via the <tt>Iterator.remove</tt>,
     * <tt>Collection.remove</tt>, <tt>removeAll</tt>,
     * <tt>retainAll</tt> and <tt>clear</tt> operations. It does not
     * support the <tt>add</tt> or <tt>addAll</tt> operations.
     *
     * @return a view of the values contained in this map
     */
    public Collection<V> values() {
        Collection<V> vs = values;
        if (vs == null) {
            vs = new Values();
            values = vs;
        }
        return vs;
    }

    final class Values extends AbstractCollection<V> {
        public final int size() { return size; }
        public final void clear() { HashMap.this.clear(); }
        public final Iterator<V> iterator() { return new ValueIterator(); }
        public final boolean contains(Object o) { return containsValue(o); }
        public final Spliterator<V> spliterator() {
            return new ValueSpliterator<>(HashMap.this, 0, -1, 0, 0);
        }
        public final void forEach(Consumer<?
                super V> action) {
            Node<K, V>[] tab;
            if (action == null)
                throw new NullPointerException();
            if (size > 0 && (tab = table) != null) {
                int mc = modCount;
                for (int i = 0; i < tab.length; ++i) {
                    for (Node<K, V> e = tab[i]; e != null; e = e.next)
                        action.accept(e.value);
                }
                if (modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }
    }

    /**
     * Returns a {@link Set} view of the mappings contained in this map.
     * The set is backed by the map, so changes to the map are
     * reflected in the set, and vice-versa. If the map is modified
     * while an iteration over the set is in progress (except through
     * the iterator's own <tt>remove</tt> operation, or through the
     * <tt>setValue</tt> operation on a map entry returned by the
     * iterator) the results of the iteration are undefined. The set
     * supports element removal, which removes the corresponding
     * mapping from the map, via the <tt>Iterator.remove</tt>,
     * <tt>Set.remove</tt>, <tt>removeAll</tt>, <tt>retainAll</tt> and
     * <tt>clear</tt> operations. It does not support the
     * <tt>add</tt> or <tt>addAll</tt> operations.
     *
     * @return a set view of the mappings contained in this map
     */
    public Set<Map.Entry<K, V>> entrySet() {
        Set<Map.Entry<K, V>> es;
        return (es = entrySet) == null ?
            (entrySet = new EntrySet()) : es;
    }

    final class EntrySet extends AbstractSet<Map.Entry<K, V>> {
        public final int size() { return size; }
        public final void clear() { HashMap.this.clear(); }
        public final Iterator<Map.Entry<K, V>> iterator() {
            return new EntryIterator();
        }
        public final boolean contains(Object o) {
            if (!(o instanceof Map.Entry))
                return false;
            Map.Entry<?, ?> e = (Map.Entry<?, ?>) o;
            Object key = e.getKey();
            Node<K, V> candidate = getNode(hash(key), key);
            return candidate != null && candidate.equals(e);
        }
        public final boolean remove(Object o) {
            if (o instanceof Map.Entry) {
                Map.Entry<?, ?> e = (Map.Entry<?, ?>) o;
                Object key = e.getKey();
                Object value = e.getValue();
                return removeNode(hash(key), key, value, true, true) != null;
            }
            return false;
        }
        public final Spliterator<Map.Entry<K, V>> spliterator() {
            return new EntrySpliterator<>(HashMap.this, 0, -1, 0, 0);
        }
        public final void forEach(Consumer<? super Map.Entry<K, V>> action) {
            Node<K, V>[] tab;
            if (action == null)
                throw new NullPointerException();
            if (size > 0 && (tab = table) != null) {
                int mc = modCount;
                for (int i = 0; i < tab.length; ++i) {
                    for (Node<K, V> e = tab[i]; e != null; e = e.next)
                        action.accept(e);
                }
                if (modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }
    }

    // Overrides of JDK8 Map extension methods

    @Override
    public V getOrDefault(Object key, V defaultValue) {
        Node<K, V> e;
        return (e = getNode(hash(key), key)) == null ?
            defaultValue : e.value;
    }

    @Override
    public V putIfAbsent(K key, V value) {
        return putVal(hash(key), key, value, true, true);
    }

    @Override
    public boolean remove(Object key, Object value) {
        return removeNode(hash(key), key, value, true, true) != null;
    }

    @Override
    public boolean replace(K key, V oldValue, V newValue) {
        Node<K, V> e; V v;
        if ((e = getNode(hash(key), key)) != null &&
            ((v = e.value) == oldValue || (v != null && v.equals(oldValue)))) {
            e.value = newValue;
            afterNodeAccess(e);
            return true;
        }
        return false;
    }

    @Override
    public V replace(K key, V value) {
        Node<K, V> e;
        if ((e = getNode(hash(key), key)) != null) {
            V oldValue = e.value;
            e.value = value;
            afterNodeAccess(e);
            return oldValue;
        }
        return null;
    }

    @Override
    public V computeIfAbsent(K key,
                             Function<? super K, ? extends V> mappingFunction) {
        if (mappingFunction == null)
            throw new NullPointerException();
        int hash = hash(key);
        Node<K, V>[] tab; Node<K, V> first; int n, i;
        int binCount = 0;
        TreeNode<K, V> t = null;
        Node<K, V> old = null;
        if (size > threshold || (tab = table) == null ||
            (n = tab.length) == 0)
            n = (tab = resize()).length;
        if ((first = tab[i = (n - 1) & hash]) != null) {
            if (first instanceof TreeNode)
                old = (t = (TreeNode<K, V>) first).getTreeNode(hash, key);
            else {
                Node<K, V> e = first; K k;
                do {
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k)))) {
                        old = e;
                        break;
                    }
                    ++binCount;
                } while ((e = e.next) != null);
            }
            V oldValue;
            if (old != null && (oldValue = old.value) != null) {
                afterNodeAccess(old);
                return oldValue;
            }
        }
        V v = mappingFunction.apply(key);
        if (v == null) {
            return null;
        } else if (old != null) {
            old.value = v;
            afterNodeAccess(old);
            return v;
        }
        else if (t != null)
            t.putTreeVal(this, tab, hash, key, v);
        else {
            tab[i] = newNode(hash, key, v, first);
            if (binCount >= TREEIFY_THRESHOLD - 1)
                treeifyBin(tab, hash);
        }
        ++modCount;
        ++size;
        afterNodeInsertion(true);
        return v;
    }

    public V computeIfPresent(K key,
                              BiFunction<? super K, ? super V, ?
            extends V> remappingFunction) {
        if (remappingFunction == null)
            throw new NullPointerException();
        Node<K, V> e; V oldValue;
        int hash = hash(key);
        if ((e = getNode(hash, key)) != null &&
            (oldValue = e.value) != null) {
            V v = remappingFunction.apply(key, oldValue);
            if (v != null) {
                e.value = v;
                afterNodeAccess(e);
                return v;
            }
            else
                removeNode(hash, key, null, false, true);
        }
        return null;
    }

    @Override
    public V compute(K key,
                     BiFunction<? super K, ? super V, ? extends V> remappingFunction) {
        if (remappingFunction == null)
            throw new NullPointerException();
        int hash = hash(key);
        Node<K, V>[] tab; Node<K, V> first; int n, i;
        int binCount = 0;
        TreeNode<K, V> t = null;
        Node<K, V> old = null;
        if (size > threshold || (tab = table) == null ||
            (n = tab.length) == 0)
            n = (tab = resize()).length;
        if ((first = tab[i = (n - 1) & hash]) != null) {
            if (first instanceof TreeNode)
                old = (t = (TreeNode<K, V>) first).getTreeNode(hash, key);
            else {
                Node<K, V> e = first; K k;
                do {
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k)))) {
                        old = e;
                        break;
                    }
                    ++binCount;
                } while ((e = e.next) != null);
            }
        }
        V oldValue = (old == null) ? null : old.value;
        V v = remappingFunction.apply(key, oldValue);
        if (old != null) {
            if (v != null) {
                old.value = v;
                afterNodeAccess(old);
            }
            else
                removeNode(hash, key, null, false, true);
        }
        else if (v != null) {
            if (t != null)
                t.putTreeVal(this, tab, hash, key, v);
            else {
                tab[i] = newNode(hash, key, v, first);
                if (binCount >= TREEIFY_THRESHOLD - 1)
                    treeifyBin(tab, hash);
            }
            ++modCount;
            ++size;
            afterNodeInsertion(true);
        }
        return v;
    }

    @Override
    public V merge(K key, V value,
                   BiFunction<? super V, ? super V, ?
            extends V> remappingFunction) {
        if (value == null)
            throw new NullPointerException();
        if (remappingFunction == null)
            throw new NullPointerException();
        int hash = hash(key);
        Node<K, V>[] tab; Node<K, V> first; int n, i;
        int binCount = 0;
        TreeNode<K, V> t = null;
        Node<K, V> old = null;
        if (size > threshold || (tab = table) == null ||
            (n = tab.length) == 0)
            n = (tab = resize()).length;
        if ((first = tab[i = (n - 1) & hash]) != null) {
            if (first instanceof TreeNode)
                old = (t = (TreeNode<K, V>) first).getTreeNode(hash, key);
            else {
                Node<K, V> e = first; K k;
                do {
                    if (e.hash == hash &&
                        ((k = e.key) == key || (key != null && key.equals(k)))) {
                        old = e;
                        break;
                    }
                    ++binCount;
                } while ((e = e.next) != null);
            }
        }
        if (old != null) {
            V v;
            if (old.value != null)
                v = remappingFunction.apply(old.value, value);
            else
                v = value;
            if (v != null) {
                old.value = v;
                afterNodeAccess(old);
            }
            else
                removeNode(hash, key, null, false, true);
            return v;
        }
        if (value != null) {
            if (t != null)
                t.putTreeVal(this, tab, hash, key, value);
            else {
                tab[i] = newNode(hash, key, value, first);
                if (binCount >= TREEIFY_THRESHOLD - 1)
                    treeifyBin(tab, hash);
            }
            ++modCount;
            ++size;
            afterNodeInsertion(true);
        }
        return value;
    }

    @Override
    public void forEach(BiConsumer<? super K, ? super V> action) {
        Node<K, V>[] tab;
        if (action == null)
            throw new NullPointerException();
        if (size > 0 && (tab = table) != null) {
            int mc = modCount;
            for (int i = 0; i < tab.length; ++i) {
                for (Node<K, V> e = tab[i]; e != null; e = e.next)
                    action.accept(e.key, e.value);
            }
            if (modCount != mc)
                throw new ConcurrentModificationException();
        }
    }

    @Override
    public void replaceAll(BiFunction<? super K, ? super V, ?
            extends V> function) {
        Node<K, V>[] tab;
        if (function == null)
            throw new NullPointerException();
        if (size > 0 && (tab = table) != null) {
            int mc = modCount;
            for (int i = 0; i < tab.length; ++i) {
                for (Node<K, V> e = tab[i]; e != null; e = e.next) {
                    e.value = function.apply(e.key, e.value);
                }
            }
            if (modCount != mc)
                throw new ConcurrentModificationException();
        }
    }

    /* ------------------------------------------------------------ */
    // Cloning and serialization

    /**
     * Returns a shallow copy of this <tt>HashMap</tt> instance: the keys and
     * values themselves are not cloned.
     *
     * @return a shallow copy of this map
     */
    @SuppressWarnings("unchecked")
    @Override
    public Object clone() {
        HashMap<K, V> result;
        try {
            result = (HashMap<K, V>) super.clone();
        } catch (CloneNotSupportedException e) {
            // this shouldn't happen, since we are Cloneable
            throw new InternalError(e);
        }
        result.reinitialize();
        result.putMapEntries(this, false);
        return result;
    }

    // These methods are also used when serializing HashSets
    final float loadFactor() { return loadFactor; }

    final int capacity() {
        return (table != null) ? table.length :
            (threshold > 0) ? threshold :
            DEFAULT_INITIAL_CAPACITY;
    }

    /**
     * Save the state of the <tt>HashMap</tt> instance to a stream (i.e.,
     * serialize it).
     *
     * @serialData The <i>capacity</i> of the HashMap (the length of the
     *             bucket array) is emitted (int), followed by the
     *             <i>size</i> (an int, the number of key-value
     *             mappings), followed by the key (Object) and value (Object)
     *             for each key-value mapping. The key-value mappings are
     *             emitted in no particular order.
     */
    private void writeObject(java.io.ObjectOutputStream s)
        throws IOException {
        int buckets = capacity();
        // Write out the threshold, loadfactor, and any hidden stuff
        s.defaultWriteObject();
        s.writeInt(buckets);
        s.writeInt(size);
        internalWriteEntries(s);
    }

    /**
     * Reconstitute the {@code HashMap} instance from a stream (i.e.,
     * deserialize it).
     */
    private void readObject(java.io.ObjectInputStream s)
        throws IOException, ClassNotFoundException {
        // Read in the threshold (ignored), loadfactor, and any hidden stuff
        s.defaultReadObject();
        reinitialize();
        if (loadFactor <= 0 || Float.isNaN(loadFactor))
            throw new InvalidObjectException("Illegal load factor: " +
                                             loadFactor);
        s.readInt();                // Read and ignore number of buckets
        int mappings = s.readInt(); // Read number of mappings (size)
        if (mappings < 0)
            throw new InvalidObjectException("Illegal mappings count: " +
                                             mappings);
        else if (mappings > 0) { // (if zero, use defaults)
            // Size the table using given load factor only if within
            // range of 0.25...4.0
            float lf = Math.min(Math.max(0.25f, loadFactor), 4.0f);
            float fc = (float) mappings / lf + 1.0f;
            int cap = ((fc < DEFAULT_INITIAL_CAPACITY) ?
                       DEFAULT_INITIAL_CAPACITY :
                       (fc >= MAXIMUM_CAPACITY) ?
                       MAXIMUM_CAPACITY :
                       tableSizeFor((int) fc));
            float ft = (float) cap * lf;
            threshold = ((cap < MAXIMUM_CAPACITY && ft < MAXIMUM_CAPACITY) ?
                         (int) ft : Integer.MAX_VALUE);

            // Check Map.Entry[].class since it's the nearest public type to
            // what we're actually creating.
            SharedSecrets.getJavaOISAccess().checkArray(s, Map.Entry[].class, cap);
            @SuppressWarnings({"rawtypes", "unchecked"})
            Node<K, V>[] tab = (Node<K, V>[]) new Node[cap];
            table = tab;

            // Read the keys and values, and put the mappings in the HashMap
            for (int i = 0; i < mappings; i++) {
                @SuppressWarnings("unchecked")
                K key = (K) s.readObject();
                @SuppressWarnings("unchecked")
                V value = (V) s.readObject();
                putVal(hash(key), key, value, false, false);
            }
        }
    }

    /* ------------------------------------------------------------ */
    // iterators

    abstract class HashIterator {
        Node<K, V> next;      // next entry to return
        Node<K, V> current;   // current entry
        int expectedModCount; // for fast-fail
        int index;            // current slot

        HashIterator() {
            expectedModCount = modCount;
            Node<K, V>[] t = table;
            current = next = null;
            index = 0;
            if (t != null && size > 0) { // advance to first entry
                do { } while (index < t.length && (next = t[index++]) == null);
            }
        }

        public final boolean hasNext() {
            return next != null;
        }

        final Node<K, V> nextNode() {
            Node<K, V>[] t;
            Node<K, V> e = next;
            if (modCount != expectedModCount)
                throw new ConcurrentModificationException();
            if (e == null)
                throw new NoSuchElementException();
            if ((next = (current = e).next) == null && (t = table) != null) {
                do { } while (index < t.length && (next = t[index++]) == null);
            }
            return e;
        }

        public final void remove() {
            Node<K, V> p = current;
            if (p == null)
                throw new IllegalStateException();
            if (modCount != expectedModCount)
                throw new ConcurrentModificationException();
            current = null;
            K key = p.key;
            removeNode(hash(key), key, null, false, false);
            expectedModCount = modCount;
        }
    }

    final class KeyIterator extends HashIterator
        implements Iterator<K> {
        public final K next() { return nextNode().key; }
    }

    final class ValueIterator extends HashIterator
        implements Iterator<V> {
        public final V next() { return nextNode().value; }
    }

    final class EntryIterator extends HashIterator
        implements Iterator<Map.Entry<K, V>> {
        public final Map.Entry<K, V> next() {
            return nextNode();
        }
    }

    /* ------------------------------------------------------------ */
    // spliterators

    static class HashMapSpliterator<K, V> {
        final HashMap<K, V> map;
        Node<K, V> current;   // current node
        int index;            // current index, modified on advance/split
        int fence;            // one past last index
        int est;              // size estimate
        int expectedModCount; // for comodification checks

        HashMapSpliterator(HashMap<K, V> m, int origin,
                           int fence, int est,
                           int expectedModCount) {
            this.map = m;
            this.index = origin;
            this.fence = fence;
            this.est = est;
            this.expectedModCount = expectedModCount;
        }

        final int getFence() { // initialize fence and size on first use
            int hi;
            if ((hi = fence) < 0) {
                HashMap<K, V> m = map;
                est = m.size;
                expectedModCount = m.modCount;
                Node<K, V>[] tab = m.table;
                hi = fence = (tab == null) ? 0 : tab.length;
            }
            return hi;
        }

        public final long estimateSize() {
            getFence(); // force init
            return (long) est;
        }
    }

    static final class KeySpliterator<K, V>
        extends HashMapSpliterator<K, V>
        implements Spliterator<K> {
        KeySpliterator(HashMap<K, V> m, int origin, int fence, int est,
                       int expectedModCount) {
            super(m, origin, fence, est, expectedModCount);
        }

        public KeySpliterator<K, V> trySplit() {
            int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;
            return (lo >= mid || current != null) ? null :
                new KeySpliterator<>(map, lo, index = mid, est >>>= 1,
                                     expectedModCount);
        }

        public void forEachRemaining(Consumer<? super K> action) {
            int i, hi, mc;
            if (action == null)
                throw new NullPointerException();
            HashMap<K, V> m = map;
            Node<K, V>[] tab = m.table;
            if ((hi = fence) < 0) {
                mc = expectedModCount = m.modCount;
                hi = fence = (tab == null) ?
                    0 : tab.length;
            }
            else
                mc = expectedModCount;
            if (tab != null && tab.length >= hi &&
                (i = index) >= 0 && (i < (index = hi) || current != null)) {
                Node<K, V> p = current;
                current = null;
                do {
                    if (p == null)
                        p = tab[i++];
                    else {
                        action.accept(p.key);
                        p = p.next;
                    }
                } while (p != null || i < hi);
                if (m.modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }

        public boolean tryAdvance(Consumer<? super K> action) {
            int hi;
            if (action == null)
                throw new NullPointerException();
            Node<K, V>[] tab = map.table;
            if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {
                while (current != null || index < hi) {
                    if (current == null)
                        current = tab[index++];
                    else {
                        K k = current.key;
                        current = current.next;
                        action.accept(k);
                        if (map.modCount != expectedModCount)
                            throw new ConcurrentModificationException();
                        return true;
                    }
                }
            }
            return false;
        }

        public int characteristics() {
            return (fence < 0 || est == map.size ? Spliterator.SIZED : 0) |
                Spliterator.DISTINCT;
        }
    }

    static final class ValueSpliterator<K, V>
        extends HashMapSpliterator<K, V>
        implements Spliterator<V> {
        ValueSpliterator(HashMap<K, V> m, int origin, int fence, int est,
                         int expectedModCount) {
            super(m, origin, fence, est, expectedModCount);
        }

        public ValueSpliterator<K, V> trySplit() {
            int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;
            return (lo >= mid || current != null) ? null :
                new ValueSpliterator<>(map, lo, index = mid, est >>>= 1,
                                       expectedModCount);
        }

        public void forEachRemaining(Consumer<? super V> action) {
            int i, hi, mc;
            if (action == null)
                throw new NullPointerException();
            HashMap<K, V> m = map;
            Node<K, V>[] tab = m.table;
            if ((hi = fence) < 0) {
                mc = expectedModCount = m.modCount;
                hi = fence = (tab == null) ?
                    0 : tab.length;
            }
            else
                mc = expectedModCount;
            if (tab != null && tab.length >= hi &&
                (i = index) >= 0 && (i < (index = hi) || current != null)) {
                Node<K, V> p = current;
                current = null;
                do {
                    if (p == null)
                        p = tab[i++];
                    else {
                        action.accept(p.value);
                        p = p.next;
                    }
                } while (p != null || i < hi);
                if (m.modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }

        public boolean tryAdvance(Consumer<? super V> action) {
            int hi;
            if (action == null)
                throw new NullPointerException();
            Node<K, V>[] tab = map.table;
            if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {
                while (current != null || index < hi) {
                    if (current == null)
                        current = tab[index++];
                    else {
                        V v = current.value;
                        current = current.next;
                        action.accept(v);
                        if (map.modCount != expectedModCount)
                            throw new ConcurrentModificationException();
                        return true;
                    }
                }
            }
            return false;
        }

        public int characteristics() {
            return (fence < 0 || est == map.size ? Spliterator.SIZED : 0);
        }
    }

    static final class EntrySpliterator<K, V>
        extends HashMapSpliterator<K, V>
        implements Spliterator<Map.Entry<K, V>> {
        EntrySpliterator(HashMap<K, V> m, int origin, int fence, int est,
                         int expectedModCount) {
            super(m, origin, fence, est, expectedModCount);
        }

        public EntrySpliterator<K, V> trySplit() {
            int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;
            return (lo >= mid || current != null) ? null :
                new EntrySpliterator<>(map, lo, index = mid, est >>>= 1,
                                       expectedModCount);
        }

        public void forEachRemaining(Consumer<? super Map.Entry<K, V>> action) {
            int i, hi, mc;
            if (action == null)
                throw new NullPointerException();
            HashMap<K, V> m = map;
            Node<K, V>[] tab = m.table;
            if ((hi = fence) < 0) {
                mc = expectedModCount = m.modCount;
                hi = fence = (tab == null) ?
                    0 : tab.length;
            }
            else
                mc = expectedModCount;
            if (tab != null && tab.length >= hi &&
                (i = index) >= 0 && (i < (index = hi) || current != null)) {
                Node<K, V> p = current;
                current = null;
                do {
                    if (p == null)
                        p = tab[i++];
                    else {
                        action.accept(p);
                        p = p.next;
                    }
                } while (p != null || i < hi);
                if (m.modCount != mc)
                    throw new ConcurrentModificationException();
            }
        }

        public boolean tryAdvance(Consumer<? super Map.Entry<K, V>> action) {
            int hi;
            if (action == null)
                throw new NullPointerException();
            Node<K, V>[] tab = map.table;
            if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {
                while (current != null || index < hi) {
                    if (current == null)
                        current = tab[index++];
                    else {
                        Node<K, V> e = current;
                        current = current.next;
                        action.accept(e);
                        if (map.modCount != expectedModCount)
                            throw new ConcurrentModificationException();
                        return true;
                    }
                }
            }
            return false;
        }

        public int characteristics() {
            return (fence < 0 || est == map.size ? Spliterator.SIZED : 0) |
                Spliterator.DISTINCT;
        }
    }

    /* ------------------------------------------------------------ */
    // LinkedHashMap support

    /*
     * The following package-protected methods are designed to be
     * overridden by LinkedHashMap, but not by any other subclass.
     * Nearly all other internal methods are also package-protected
     * but are declared final, so can be used by LinkedHashMap, view
     * classes, and HashSet.
     */

    // Create a regular (non-tree) node
    Node<K, V> newNode(int hash, K key, V value, Node<K, V> next) {
        return new Node<>(hash, key, value, next);
    }

    // For conversion from TreeNodes to plain nodes
    Node<K, V> replacementNode(Node<K, V> p, Node<K, V> next) {
        return new Node<>(p.hash, p.key, p.value, next);
    }

    // Create a tree bin node
    TreeNode<K, V> newTreeNode(int hash, K key, V value, Node<K, V> next) {
        return new TreeNode<>(hash, key, value, next);
    }

    // For treeifyBin
    TreeNode<K, V> replacementTreeNode(Node<K, V> p, Node<K, V> next) {
        return new TreeNode<>(p.hash, p.key, p.value, next);
    }

    /**
     * Reset to initial default state.
     * Called by clone and readObject.
     */
    void reinitialize() {
        table = null;
        entrySet = null;
        keySet = null;
        values = null;
        modCount = 0;
        threshold = 0;
        size = 0;
    }

    // Callbacks to allow LinkedHashMap post-actions
    void afterNodeAccess(Node<K, V> p) { }
    void afterNodeInsertion(boolean evict) { }
    void afterNodeRemoval(Node<K, V> p) { }

    // Called only from writeObject, to ensure compatible ordering.
    void internalWriteEntries(java.io.ObjectOutputStream s) throws IOException {
        Node<K, V>[] tab;
        if (size > 0 && (tab = table) != null) {
            for (int i = 0; i < tab.length; ++i) {
                for (Node<K, V> e = tab[i]; e != null; e = e.next) {
                    s.writeObject(e.key);
                    s.writeObject(e.value);
                }
            }
        }
    }

    /* ------------------------------------------------------------ */
    // Tree bins

    /**
     * Entry for Tree bins. Extends LinkedHashMap.Entry (which in turn
     * extends Node) so can be used as extension of either regular or
     * linked node.
     */
    static final class TreeNode<K, V> extends LinkedHashMap.Entry<K, V> {
        TreeNode<K, V> parent; // red-black tree links
        TreeNode<K, V> left;
        TreeNode<K, V> right;
        TreeNode<K, V> prev;   // needed to unlink next upon deletion
        boolean red;

        TreeNode(int hash, K key, V val, Node<K, V> next) {
            super(hash, key, val, next);
        }

        /**
         * Returns the root of the tree that contains this node.
         */
        final TreeNode<K, V> root() {
            for (TreeNode<K, V> r = this, p; ; ) {
                if ((p = r.parent) == null)
                    return r;
                r = p;
            }
        }

        /**
         * Ensures that the given root is the first node of its bin.
         */
        static <K, V> void moveRootToFront(Node<K, V>[] tab, TreeNode<K, V> root) {
            int n; // length of tab
            if (root != null && tab != null && (n = tab.length) > 0) {
                // the bucket index is always computed with this same expression,
                // (n - 1) & hash; it is repeated inline rather than wrapped in a helper method
                int index = (n - 1) & root.hash;
                TreeNode<K, V> first = (TreeNode<K, V>) tab[index]; // the node currently stored in the bucket
                if (root != first) {
                    // root is no longer the node stored in the bucket: rotations during
                    // rebalancing have produced a new root
                    Node<K, V> rn;
                    tab[index] = root; // store root in the bucket, replacing the old first node
                    // prev shows up here because, besides the tree links, the TreeNodes of a
                    // bin also form a doubly linked list (next/prev), and root must be moved
                    // to the front of that list as well
                    TreeNode<K, V> rp = root.prev;
                    /*
                     * At first glance rp and rn are merely linked to each other and never
                     * used again, but that is the whole point: making root's predecessor
                     * and successor point at each other unlinks root from its current
                     * position in the next/prev list.
                     */
                    if ((rn = root.next) != null)
                        ((TreeNode<K, V>) rn).prev = rp;
                    if (rp != null)
                        rp.next = rn;
                    // then re-link root at the front of the list, before the old first node
                    // (a head insertion here, unlike the tail insertion used elsewhere)
                    if (first != null)
                        first.prev = root;
                    root.next = first;
                    root.prev = null;
                }
                assert checkInvariants(root);
            }
        }

        /**
         * Finds the node starting at root p with the given hash and key.
         * The kc argument caches comparableClassFor(key) upon first use
         * comparing keys.
         */
        final TreeNode<K, V> find(int h, Object k, Class<?> kc) {
            TreeNode<K, V> p = this;
            do {
                int ph, dir; K pk;
                TreeNode<K, V> pl = p.left, pr = p.right, q;
                if ((ph = p.hash) > h)
                    p = pl;
                else if (ph < h)
                    p = pr;
                else if ((pk = p.key) == k || (k != null && k.equals(pk)))
                    return p;
                else if (pl == null)
                    p = pr;
                else if (pr == null)
                    p = pl;
                else if ((kc != null ||
                          (kc = comparableClassFor(k)) != null) &&
                         (dir = compareComparables(kc, k, pk)) != 0)
                    p = (dir < 0) ? pl : pr;
                else if ((q = pr.find(h, k, kc)) != null)
                    return q;
                else
                    p = pl;
            } while (p != null);
            return null;
        }

        /**
         * Calls find for root node.
         */
        final TreeNode<K, V> getTreeNode(int h, Object k) {
            return ((parent != null) ? root() : this).find(h, k, null);
        }

        /**
         * Tie-breaking utility for ordering insertions when equal
         * hashCodes and non-comparable. We don't require a total
         * order, just a consistent insertion rule to maintain
         * equivalence across rebalancings. Tie-breaking further than
         * necessary simplifies testing a bit.
         */
        static int tieBreakOrder(Object a, Object b) {
            int d;
            if (a == null || b == null ||
                (d = a.getClass().getName().
                 compareTo(b.getClass().getName())) == 0)
                d = (System.identityHashCode(a) <= System.identityHashCode(b) ?
                     -1 : 1);
            return d;
        }

        /**
         * Forms tree of the nodes linked from this node.
         * Converts the TreeNode linked list into a red-black tree with two nested
         * for loops: the outer loop walks the nodes of the list, and the inner
         * loop finds where in the tree each node belongs.
         *
         * @return root of tree
         */
        final void treeify(Node<K, V>[] tab) {
            TreeNode<K, V> root = null;
            // this is hd, the head node of the list
            for (TreeNode<K, V> x = this, next; x != null; x = next) {
                // on the first iteration x is the head node hd
                next = (TreeNode<K, V>) x.next;
                // reset both children to null
                x.left = x.right = null;
                if (root == null) {
                    // first iteration: root is still null, so we enter this block
                    x.parent = null; // the head node has no parent
                    x.red = false;   // by the red-black tree rules, the root is black
                    root = x;        // the head of the list becomes the root of the tree
                }
                else {
                    // later iterations: x is the TreeNode about to be added to the tree
                    K k = x.key;
                    int h = x.hash;
                    Class<?> kc = null;
                    // An interesting loop: what keeps it running? (see the if below)
                    for (TreeNode<K, V> p = root; ; ) {
                        int dir; // result of comparing two nodes' hashes; decides where x goes
                        int ph;  // hash of the node already in the tree
                        K pk = p.key;
                        if ((ph = p.hash) > h)
                            // tree node's hash > hash of the node being added: dir = -1, go left
                            dir = -1;
                        else if (ph < h)
                            // tree node's hash < hash of the node being added: dir = 1, go right
                            dir = 1;
                        else if ((kc == null &&
                                  (kc = comparableClassFor(k)) == null) ||
                                 (dir = compareComparables(kc, k, pk)) == 0)
                            // Equal hashes, and compareTo cannot decide (key not
                            // Comparable, or compareTo returned 0): tieBreakOrder
                            // always returns -1 or 1, never 0.
                            dir = tieBreakOrder(k, pk);

                        // keep p in a temporary: it becomes x's parent once a free slot is found below it
                        TreeNode<K, V> xp = p;
                        // dir <= 0: go left; dir > 0: go right.
                        // This line is what keeps the inner loop running: while the
                        // child in that direction is non-null, p descends into it.
                        if ((p = (dir <= 0) ? p.left : p.right) == null) {
                            x.parent = xp; // xp becomes x's parent
                            if (dir <= 0)
                                xp.left = x;  // left subtree
                            else
                                xp.right = x; // right subtree
                            // Adding a node may break the red-black balance, so
                            // rotate and/or recolor as needed.
                            root = balanceInsertion(root, x);
                            break;
                        }
                    }
                }
            }
            moveRootToFront(tab, root);
        }

        /**
         * Returns a list of non-TreeNodes replacing those linked from
         * this node.
         * Converts TreeNodes back to plain Nodes, again walking from the
         * head node down the list.
         */
        final Node<K, V> untreeify(HashMap<K, V> map) {
            Node<K, V> hd = null, tl = null;
            // Why can this be typed as Node? Because TreeNode is a subclass of Node.
            for (Node<K, V> q = this; q != null; q = q.next) {
                Node<K, V> p = map.replacementNode(q, null);
                if (tl == null)
                    hd = p; // the head of the TreeNode list becomes the head of the Node list
                else
                    tl.next = p;
                tl = p;
            }
            return hd;
        }

        /**
         * Tree version of putVal.
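         * The tree version of putVal only finds a suitable position and inserts
         * the node there; all rebalancing is left to balanceInsertion.
         *
         * @param h   hash of k
         * @param k   key passed to put
         * @param v   value passed to put
         * @param map oddly enough, passed in only so that its newTreeNode method
         *            can be called to create the TreeNode (LinkedHashMap
         *            overrides newTreeNode)
         */
        final TreeNode<K, V> putTreeVal(HashMap<K, V> map, Node<K, V>[] tab,
                                        int h, K k, V v) {
            Class<?> kc = null;
            boolean searched = false;
            // find the root of the tree that this node belongs to
            TreeNode<K, V> root = (parent != null) ? root() : this;
            // walk the tree starting from the root
            for (TreeNode<K, V> p = root; ; ) {
                int dir; // hash comparison result; decides whether the new node goes left or right
                int ph;  // hash of the current tree node p
                K pk;    // key of the current tree node p
                if ((ph = p.hash) > h) { // compare p's hash with the hash of the key being put
                    dir = -1;
                }
                else if (ph < h) {
                    dir = 1;
                }
                else if ((pk = p.key) == k || (k != null && k.equals(pk))) {
                    return p; // the key already exists
                }
                else if ( // equal hashes: fall back to compareTo; an order has to be found one way or another
                        (kc == null &&
                         (kc = comparableClassFor(k)) == null) ||
                        (dir = compareComparables(kc, k, pk)) == 0) {
                    // compareTo cannot decide, so an equal key could sit in either
                    // subtree: search both subtrees once before picking a side
                    if (!searched) {
                        TreeNode<K, V> q, ch;
                        searched = true;
                        if (((ch = p.left) != null &&
                             (q = ch.find(h, k, kc)) != null) ||
                            ((ch = p.right) != null &&
                             (q = ch.find(h, k, kc)) != null))
                            return q;
                    }
                    dir = tieBreakOrder(k, pk);
                }

                // keep p in a temporary, because p is about to change
                TreeNode<K, V> xp = p;
                // dir decides whether to descend to the left or to the right.
                // Note this if as well: when the new p (no longer the previous p)
                // is not null, the loop runs the logic above again.
                if ((p = (dir <= 0) ? p.left : p.right) == null) {
                    // Getting here means an insertion point has been found.
                    // Why read a Node field (next) here? Because a tree bin also
                    // keeps its nodes in a next/prev linked list, which untreeify,
                    // split and iteration walk; x is spliced in right after xp.
                    Node<K, V> xpn = xp.next;
                    // The new TreeNode is linked to xpn for the same reason, so the
                    // list is ready not only for when the tree turns back into a list.
                    TreeNode<K, V> x = map.newTreeNode(h, k, v, xpn);
                    if (dir <= 0)
                        xp.left = x;
                    else
                        xp.right = x;
                    // Insert x between xp and xpn in the next/prev list; xp is both
                    // x's tree parent and its list predecessor.
                    xp.next = x;
                    x.parent = x.prev = xp;
                    if (xpn != null) {
                        // complete the back link: xpn's predecessor is now x
                        ((TreeNode<K, V>) xpn).prev = x;
                    }
                    // Adding a node makes the tree rebalance and the root may change,
                    // so moveRootToFront moves the new root to the head of the bin.
                    moveRootToFront(tab, balanceInsertion(root, x));
                    return null;
                }
            }
        }

        /**
         * Removes the given node, that must be present before this call.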
         * This is messier than typical red-black deletion code because we
         * cannot swap the contents of an interior node with a leaf
         * successor that is pinned by "next" pointers that are accessible
         * independently during traversal. So instead we swap the tree
         * linkages. If the current tree appears to have too few nodes,
         * the bin is converted back to a plain bin. (The test triggers
         * somewhere between 2 and 6 nodes, depending on tree structure).
         */
        final void removeTreeNode(HashMap<K, V> map, Node<K, V>[] tab,
                                  boolean movable) {
            int n;
            if (tab == null || (n = tab.length) == 0)
                return;
            int index = (n - 1) & hash;
            TreeNode<K, V> first = (TreeNode<K, V>) tab[index], root = first, rl;
            TreeNode<K, V> succ = (TreeNode<K, V>) next, pred = prev;
            if (pred == null)
                tab[index] = first = succ;
            else
                pred.next = succ;
            if (succ != null)
                succ.prev = pred;
            if (first == null)
                return;
            if (root.parent != null)
                root = root.root();
            if (root == null || root.right == null ||
                (rl = root.left) == null || rl.left == null) {
                tab[index] = first.untreeify(map);  // too small
                return;
            }
            TreeNode<K, V> p = this, pl = left, pr = right, replacement;
            if (pl != null && pr != null) {
                TreeNode<K, V> s = pr, sl;
                while ((sl = s.left) != null) // find successor
                    s = sl;
                boolean c = s.red; s.red = p.red; p.red = c; // swap colors
                TreeNode<K, V> sr = s.right;
                TreeNode<K, V> pp = p.parent;
                if (s == pr) { // p was s's direct parent
                    p.parent = s;
                    s.right = p;
                }
                else {
                    TreeNode<K, V> sp = s.parent;
                    if ((p.parent = sp) != null) {
                        if (s == sp.left)
                            sp.left = p;
                        else
                            sp.right = p;
                    }
                    if ((s.right = pr) != null)
                        pr.parent = s;
                }
                p.left = null;
                if ((p.right = sr) != null)
                    sr.parent = p;
                if ((s.left = pl) != null)
                    pl.parent = s;
                if ((s.parent = pp) == null)
                    root = s;
                else if (p == pp.left)
                    pp.left = s;
                else
                    pp.right = s;
                if (sr != null)
                    replacement = sr;
                else
                    replacement = p;
            }
            else if (pl != null)
                replacement = pl;
            else if (pr != null)
                replacement = pr;
            else
                replacement = p;
            if (replacement != p) {
                TreeNode<K, V> pp = replacement.parent = p.parent;
                if (pp == null)
                    root = replacement;
                else if (p == pp.left)
                    pp.left = replacement;
                else
                    pp.right = replacement;
                p.left = p.right = p.parent = null;
            }

            TreeNode<K, V> r = p.red ? root : balanceDeletion(root, replacement);

            if (replacement == p) {  // detach
                TreeNode<K, V> pp = p.parent;
                p.parent = null;
                if (pp != null) {
                    if (p == pp.left)
                        pp.left = null;
                    else if (p == pp.right)
                        pp.right = null;
                }
            }
            if (movable)
                moveRootToFront(tab, r);
        }

        /**
         * Splits nodes in a tree bin into lower and upper tree bins,
         * or untreeifies if now too small. Called only from resize;
         * see above discussion about split bits and indices.
         *
         * @param map the map
         * @param tab the table for recording bin heads
         * @param index the index of the table being split
         *              (the index of this bin in oldTab)
         * @param bit the bit of hash to split on
         *            (the length of oldTab)
         */
        final void split(HashMap<K, V> map, Node<K, V>[] tab, int index, int bit) {
            TreeNode<K, V> b = this;
            // Relink into lo and hi lists, preserving order
            TreeNode<K, V> loHead = null, loTail = null;
            TreeNode<K, V> hiHead = null, hiTail = null;
            int lc = 0, hc = 0;
            // Tail insertion is used here too, splitting the list into two new lists
            for (TreeNode<K, V> e = b, next; e != null; e = next) {
                next = (TreeNode<K, V>) e.next;
                e.next = null;
                if ((e.hash & bit) == 0) {
                    if ((e.prev = loTail) == null)
                        loHead = e;
                    else
                        loTail.next = e;
                    loTail = e;
                    ++lc; // count the nodes
                }
                else {
                    if ((e.prev = hiTail) == null)
                        hiHead = e;
                    else
                        hiTail.next = e;
                    hiTail = e;
                    ++hc;
                }
            }

            if (loHead != null) {
                // If the low list has 6 or fewer nodes after the split
                // (lc <= UNTREEIFY_THRESHOLD), it degenerates into plain Nodes
                if (lc <= UNTREEIFY_THRESHOLD)
                    tab[index] = loHead.untreeify(map);
                else {
                    tab[index] = loHead;
                    // If hiHead is null, every node stayed here and the old tree is
                    // still intact. Otherwise rebuild the tree from the list (the
                    // nodes are already TreeNodes, so only treeify is needed).
                    if (hiHead != null) // (else is already treeified)
                        loHead.treeify(tab);
                }
            }
            if (hiHead != null) {
                if (hc <= UNTREEIFY_THRESHOLD)
                    tab[index + bit] = hiHead.untreeify(map);
                else {
                    tab[index + bit] = hiHead;
                    if (loHead != null)
                        hiHead.treeify(tab);
                }
            }
        }

        /* ------------------------------------------------------------ */
        // Red-black tree methods, all adapted from CLR
        // Left rotation
        static <K, V> TreeNode<K, V> rotateLeft(TreeNode<K, V> root,
                                                TreeNode<K, V> p) {
            // Reading the code: r is the pivot of the left rotation
            TreeNode<K, V> r, pp, rl;
            if (p != null && (r = p.right) != null) { // r is p's right child; when balanceInsertion calls rotateLeft(root, x = xp), this r is the original x
                // The pivot's left subtree becomes p's right subtree.
                // These two lines apply to every branch of the if / else if / else
                // below, so they are hoisted in front of it.
                if ((rl = p.right = r.left) != null) // if null, r (in balanceInsertion, the newly added node) has no left subtree
                    rl.parent = p;
                // Read the following three branches together
                if ((pp = r.parent = p.parent) == null) { // p is the root (the simplest case)
                    (root = r).red = false; // r becomes the root   ---- case 1
                }
                else if (pp.left == p) {
                    pp.left = r;            //                      ---- case 2
                }
                else { // p is pp's right child
                    pp.right = r;           //                      ---- case 3
                }
                // The next two lines make p the left child of r
                r.left = p;
                p.parent = r;
            }
            return root;
        }

        /**
         * Right rotation: the same idea as the left rotation, just mirrored;
         * the pivot is p's left child instead of its right child.
         *
         * @param root the root node
         * @param p    the node being rotated down; balanceInsertion passes the
         *             grandparent xpp, or the parent xp in the mirrored branch
         * @param <K>
         * @param <V>
         * @return the (possibly new) root
         */
        static <K, V> TreeNode<K, V> rotateRight(TreeNode<K, V> root,
                                                 TreeNode<K, V> p) {
            TreeNode<K, V> l, pp, lr;
            if (p != null && (l = p.left) != null) {
                // Move l's right subtree into p's left-subtree slot (that is what a right rotation does)
                if ((lr = p.left = l.right) != null)
                    lr.parent = p;
                if ((pp = l.parent = p.parent) == null) // p is the root
                    (root = l).red = false;
                else if (pp.right == p)
                    pp.right = l;
                else
                    pp.left = l;
                l.right = p;
                p.parent = l;
            }
            return root;
        }

        /**
         * This method mainly does the recoloring and decides when to rotate;
         * the rotations themselves are done by rotateLeft and rotateRight.
         *
         * @param root
         * @param x    the node that was just added to the tree
         * @param <K>
         * @param <V>
         * @return
         */
        static <K, V> TreeNode<K, V> balanceInsertion(TreeNode<K, V> root,
                                                      TreeNode<K, V> x) {
            // By the red-black tree rules, a newly added node is always red
            x.red = true;
            /*
             * xp   : parent of x
             * xpp  : parent of the parent, i.e. x's grandparent
             * xppl : left child of x's grandparent
             * xppr : right child of x's grandparent
             */
            // Another infinite loop
            for (TreeNode<K, V> xp, xpp, xppl, xppr; ; ) {
                // If x has no parent,
                if ((xp = x.parent) == null) {
                    // x becomes the root and turns black
                    x.red = false;
                    return x;
                }
                // If x's parent is black, or x has no grandparent
                else if (!xp.red || (xpp = xp.parent) == null)
                    /*
                     * How to read this:
                     * !xp.red: x is the new node and therefore red; its parent is
                     * not red, so neither recoloring nor rotation is needed. The
                     * tree is balanced; just return root.
                     * (xpp = xp.parent) == null: no grandparent means xp is the
                     * root and the tree is only two levels deep here, so whether
                     * x was added on the left or the right, the tree is balanced;
                     * just return root.
                     */
                    return root;
                /*
                 * If execution gets here, x has both a parent and a grandparent,
                 * and the parent is red; since the tree was valid before the
                 * insertion, the grandparent is black.
                 */
                if (xp == (xppl = xpp.left)) { // xp is the grandparent's left child
                    /*
                     * Recoloring case:
                     *   uncle and parent are both red (which implies the
                     *   grandparent is black), so recolor:
                     *   uncle and parent turn black, grandparent turns red.
                     *   Recoloring alone restores balance at this level.
                     */
                    if ((xppr = xpp.right) != null && xppr.red) { // x's right uncle is red
                        xppr.red = false; // right uncle turns black
                        xp.red = false;   // parent turns black
                        xpp.red = true;   // grandparent turns from black to red
                        x = xpp;          // the grandparent becomes the current node
                    }
                    else {
                        /*
                         * We enter this else when:
                         * 1. the right uncle is null (a null uncle counts as black), or
                         * 2. the right uncle exists but is black
                         */
                        if (x == xp.right) { // x is xp's right child
                            // Rotate left around the parent (which is why xp is assigned to x).
                            // If this rotation happens, xp must be red and the
                            // right uncle black (or absent).
                            root = rotateLeft(root, x = xp);
                            // After the rotation x is the old parent; xp is reset to
                            // x's new parent (the originally inserted node) and xpp
                            // is refreshed to match.
                            xpp = (xp = x.parent) == null ? null : xp.parent;
                        }
                        // Why check xp != null again? Is xp after rotateLeft the same
                        // node as before it? No: if the rotation above ran, xp now
                        // refers to the originally inserted node. The null checks are
                        // defensive; in a valid tree xp and xpp are non-null here.
                        if (xp != null) {
                            xp.red = false; // parent turns black
                            if (xpp != null) {
                                xpp.red = true; // grandparent turns red
                                root = rotateRight(root, xpp);
                            }
                        }
                    }
                }
                else {
                    if (xppl != null && xppl.red) {
                        xppl.red = false;
                        xp.red = false;
                        xpp.red = true;
                        x = xpp;
                    }
                    else {
                        if (x == xp.left) {
                            root = rotateRight(root, x = xp);
                            xpp = (xp = x.parent) == null ? null : xp.parent;
                        }
                        if (xp != null) {
                            xp.red = false;
                            if (xpp != null) {
                                xpp.red = true;
                                root = rotateLeft(root, xpp);
                            }
                        }
                    }
                }
            }
        }

        static <K, V> TreeNode<K, V> balanceDeletion(TreeNode<K, V> root,
                                                     TreeNode<K, V> x) {
            for (TreeNode<K, V> xp, xpl, xpr; ; ) {
                if (x == null || x == root)
                    return root;
                else if ((xp = x.parent) == null) {
                    x.red = false;
                    return x;
                }
                else if (x.red) {
                    x.red = false;
                    return root;
                }
                else if ((xpl = xp.left) == x) {
                    if ((xpr = xp.right) != null && xpr.red) {
                        xpr.red = false;
                        xp.red = true;
                        root = rotateLeft(root, xp);
                        xpr = (xp = x.parent) == null ? null : xp.right;
                    }
                    if (xpr == null)
                        x = xp;
                    else {
                        TreeNode<K, V> sl = xpr.left, sr = xpr.right;
                        if ((sr == null || !sr.red) &&
                            (sl == null || !sl.red)) {
                            xpr.red = true;
                            x = xp;
                        }
                        else {
                            if (sr == null || !sr.red) {
                                if (sl != null)
                                    sl.red = false;
                                xpr.red = true;
                                root = rotateRight(root, xpr);
                                xpr = (xp = x.parent) == null ?
                                    null : xp.right;
                            }
                            if (xpr != null) {
                                xpr.red = (xp == null) ? false : xp.red;
                                if ((sr = xpr.right) != null)
                                    sr.red = false;
                            }
                            if (xp != null) {
                                xp.red = false;
                                root = rotateLeft(root, xp);
                            }
                            x = root;
                        }
                    }
                }
                else { // symmetric
                    if (xpl != null && xpl.red) {
                        xpl.red = false;
                        xp.red = true;
                        root = rotateRight(root, xp);
                        xpl = (xp = x.parent) == null ? null : xp.left;
                    }
                    if (xpl == null)
                        x = xp;
                    else {
                        TreeNode<K, V> sl = xpl.left, sr = xpl.right;
                        if ((sl == null || !sl.red) &&
                            (sr == null || !sr.red)) {
                            xpl.red = true;
                            x = xp;
                        }
                        else {
                            if (sl == null || !sl.red) {
                                if (sr != null)
                                    sr.red = false;
                                xpl.red = true;
                                root = rotateLeft(root, xpl);
                                xpl = (xp = x.parent) == null ?
                                    null : xp.left;
                            }
                            if (xpl != null) {
                                xpl.red = (xp == null) ? false : xp.red;
                                if ((sl = xpl.left) != null)
                                    sl.red = false;
                            }
                            if (xp != null) {
                                xp.red = false;
                                root = rotateRight(root, xp);
                            }
                            x = root;
                        }
                    }
                }
            }
        }

        /**
         * Recursive invariant check
         */
        static <K, V> boolean checkInvariants(TreeNode<K, V> t) {
            TreeNode<K, V> tp = t.parent, tl = t.left, tr = t.right,
                tb = t.prev, tn = (TreeNode<K, V>) t.next;
            if (tb != null && tb.next != t)
                return false;
            if (tn != null && tn.prev != t)
                return false;
            if (tp != null && t != tp.left && t != tp.right)
                return false;
            if (tl != null && (tl.parent != t || tl.hash > t.hash))
                return false;
            if (tr != null && (tr.parent != t || tr.hash < t.hash))
                return false;
            if (t.red && tl != null && tl.red && tr != null && tr.red)
                return false;
            if (tl != null && !checkInvariants(tl))
                return false;
            if (tr != null && !checkInvariants(tr))
                return false;
            return true;
        }
    }
}
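
In split(), each node of the old bin goes to the lo list, which keeps the same index, when (e.hash & bit) == 0. Otherwise it goes to the hi list at index + bit, where bit is the old table length. The small program below sketches that rule and checks it against recomputing (n - 1) & hash in the doubled table. SplitDemo, indexFor and indexAfterSplit are hypothetical names used only for illustration. The hash values are used as-is, on the assumption that HashMap's own hash spreading has already been applied.

```java
// Sketch of the lo/hi rule used by TreeNode.split when the table doubles
// from oldCap to 2 * oldCap. oldCap must be a power of two.
// Hash values are used as-is (assumed to be already spread by HashMap).
final class SplitDemo {

    // Bucket index in a table of length n (n a power of two): (n - 1) & hash.
    static int indexFor(int hash, int n) {
        return (n - 1) & hash;
    }

    // The split rule: (hash & oldCap) == 0 -> stays at the old index ("lo" list),
    // otherwise it moves to oldIndex + oldCap ("hi" list).
    static int indexAfterSplit(int hash, int oldCap) {
        int oldIndex = indexFor(hash, oldCap);
        return (hash & oldCap) == 0 ? oldIndex : oldIndex + oldCap;
    }

    public static void main(String[] args) {
        int oldCap = 16;
        int[] hashes = {5, 21, 37, 53}; // all collide in bucket 5 of a 16-slot table
        for (int h : hashes) {
            System.out.println("hash " + h
                    + ": old index " + indexFor(h, oldCap)
                    + ", after split " + indexAfterSplit(h, oldCap)
                    + ", direct index in new table " + indexFor(h, 2 * oldCap));
        }
    }
}
```

With these inputs, hashes 5 and 37 stay in bucket 5 (the lo list), while 21 and 53 move to bucket 21 (the hi list). This matches the direct computation and explains why the nodes of one bin can only end up in two places after a resize.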

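rotateLeft and rotateRight only rewire parent/left/right pointers. To see this in isolation, here is a sketch that copies their pointer updates onto a hypothetical minimal Node class. The class keeps only a key, a color and the tree links; there is no hash and no next/prev list. The sketch checks that a left rotation followed by a right rotation restores the original shape, and that the in-order key order never changes. RotationDemo, Node, attach and inorder are illustrative names, not JDK API.

```java
// Sketch: the pointer updates of HashMap.TreeNode.rotateLeft / rotateRight,
// copied onto a hypothetical minimal Node (no hash, no next/prev list).
final class RotationDemo {

    static final class Node {
        final int key;
        boolean red;
        Node parent, left, right;

        Node(int key) { this.key = key; }
    }

    // p's right child r moves up into p's place; r's left subtree becomes
    // p's right subtree; p becomes r's left child.
    static Node rotateLeft(Node root, Node p) {
        Node r, pp, rl;
        if (p != null && (r = p.right) != null) {
            if ((rl = p.right = r.left) != null)
                rl.parent = p;
            if ((pp = r.parent = p.parent) == null)
                (root = r).red = false; // r is the new root, colored black
            else if (pp.left == p)
                pp.left = r;
            else
                pp.right = r;
            r.left = p;
            p.parent = r;
        }
        return root;
    }

    // Mirror image: p's left child l moves up into p's place.
    static Node rotateRight(Node root, Node p) {
        Node l, pp, lr;
        if (p != null && (l = p.left) != null) {
            if ((lr = p.left = l.right) != null)
                lr.parent = p;
            if ((pp = l.parent = p.parent) == null)
                (root = l).red = false;
            else if (pp.right == p)
                pp.right = l;
            else
                pp.left = l;
            l.right = p;
            p.parent = l;
        }
        return root;
    }

    // Helper for building test trees: links a parent to its two children.
    static Node attach(Node parent, Node left, Node right) {
        parent.left = left;
        parent.right = right;
        if (left != null) left.parent = parent;
        if (right != null) right.parent = parent;
        return parent;
    }

    // In-order traversal; a rotation must leave this order unchanged.
    static void inorder(Node n, StringBuilder sb) {
        if (n == null) return;
        inorder(n.left, sb);
        sb.append(n.key).append(' ');
        inorder(n.right, sb);
    }

    public static void main(String[] args) {
        //     10                 20
        //    /  \               /  \
        //   5    20    ==>    10    30
        //       /  \         /  \
        //     15    30      5    15
        Node n10 = new Node(10);
        Node n20 = new Node(20);
        Node root = attach(n10, new Node(5), attach(n20, new Node(15), new Node(30)));

        root = rotateLeft(root, n10);
        StringBuilder sb = new StringBuilder();
        inorder(root, sb);
        System.out.println("root " + root.key + ", in-order " + sb.toString().trim());

        root = rotateRight(root, n20); // undo the rotation: back to the original shape
        System.out.println("root " + root.key);
    }
}
```

The left rotation lifts 20 to the root and hands its left subtree (15) to 10. The right rotation around 20 undoes exactly that, which is why the two methods in the source mirror each other line by line.
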
To be continued...