Text Generation Models

Sequence Models

Problem

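Consider a sequence prediction problem:

(1) The input is a time-varying sequence: $x_1, x_2, \dots, x_t$

(2) At time $t$, the model predicts the value at the next time step, i.e.: $\hat{x}_{t+1} = \mathrm{model}(x_1, x_2, \dots, x_t)$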

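Difficulties

(1) The internal state is hard to model and observe

(2) The state over a long time window is hard to model and observe

Modeling approach

(1) Introduce an internal hidden state variable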

simple RNN

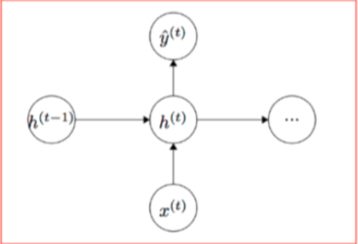

The basic structure of an RNN is as follows:

前向传播

其中:

(1)

是t时刻输入

是t时刻输入(2)

是状态层,在0时刻初始化

是状态层,在0时刻初始化(3)

函数是激励函数(sigmoid, tanh)

函数是激励函数(sigmoid, tanh)(4)

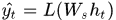

是输出层函数(softmax多分类)

是输出层函数(softmax多分类)

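Cost function (cross-entropy summed over time steps, where $y_t$ is the one-hot target and $o_t$ is the softmax output at time $t$):

$$E = \sum_t E_t = -\sum_t y_t^\top \log o_t$$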

Model parameters:

(1) $W$: maps a vector from hidden_dim to hidden_dim

(2) $U$: maps a vector from input_dim to hidden_dim

(3) $V$: maps a vector from hidden_dim to output_dim

(4) $b$: bias vector
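The forward pass and the parameter shapes above can be sketched in a few lines of dependency-free Python. This is only an illustrative sketch: the names `U`, `W`, `V`, `b`, the choice of tanh as the activation, the cross-entropy loss, and the toy sizes are all assumptions made for the example, not values taken from these notes.

```python
import math

def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def softmax(z):
    """Numerically stable softmax over a list of scores."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def rnn_forward(xs, U, W, V, b):
    """Run s_t = tanh(U x_t + W s_{t-1} + b) and o_t = softmax(V s_t)."""
    s = [0.0] * len(W)                      # state initialized at time 0
    states, outputs = [], []
    for x in xs:
        pre = [a + r + bi for a, r, bi in zip(matvec(U, x), matvec(W, s), b)]
        s = [math.tanh(p) for p in pre]
        states.append(s)
        outputs.append(softmax(matvec(V, s)))
    return states, outputs

def cross_entropy(outputs, targets):
    """Sum over time of -log(probability assigned to the target class)."""
    return -sum(math.log(o[y]) for o, y in zip(outputs, targets))

# Toy sizes (assumed): input_dim=2, hidden_dim=3, output_dim=2
U = [[0.1, -0.2], [0.0, 0.3], [0.5, 0.1]]                     # input_dim -> hidden_dim
W = [[0.2, 0.0, -0.1], [0.1, 0.1, 0.0], [-0.3, 0.2, 0.1]]     # hidden_dim -> hidden_dim
V = [[0.4, -0.1, 0.2], [-0.2, 0.3, 0.1]]                      # hidden_dim -> output_dim
b = [0.0, 0.1, -0.1]                                          # bias vector
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
targets = [0, 1, 0]                                           # hypothetical class labels

states, outputs = rnn_forward(xs, U, W, V, b)
loss = cross_entropy(outputs, targets)
print(loss)
```

Each output is a probability distribution over the classes, and the same `U`, `W`, `V`, `b` are reused at every time step, which is what makes the network recurrent.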

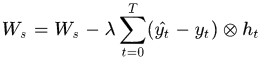

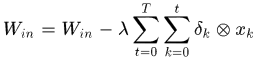

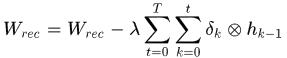

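Model training: BPTT (backpropagation through time)

The basic idea of BPTT is to sum the errors of all time steps and differentiate the total, so that a single gradient is obtained:

$$\frac{\partial E}{\partial W} = \sum_t \frac{\partial E_t}{\partial W}$$

where:

$$\frac{\partial E_t}{\partial W} = \sum_{k=1}^{t} \frac{\partial E_t}{\partial s_t} \left( \prod_{j=k+1}^{t} \frac{\partial s_j}{\partial s_{j-1}} \right) \frac{\partial s_k}{\partial W}$$

Here $E_t$ is the error at time $t$, $s_t$ is the state at time $t$, and $W$ is the hidden-to-hidden weight matrix. This recursive expression shows that the gradient at each time step depends on the whole series of time steps before it.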
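To make the accumulation over time concrete, here is a minimal sketch of BPTT for a scalar RNN, checked against a finite-difference gradient. It makes several simplifying assumptions that are not in these notes: the state is a single number, the activation is tanh, the per-step error is a squared error (swapped in for the softmax cost to keep the example short), and the names `u`, `w`, `bptt_grad_w` are hypothetical.

```python
import math

def forward(xs, u, w):
    """Return states s_0..s_T for s_t = tanh(u*x_t + w*s_{t-1}), with s_0 = 0."""
    states = [0.0]
    for x in xs:
        states.append(math.tanh(u * x + w * states[-1]))
    return states

def loss(xs, ys, u, w):
    """Total error: sum over time of 0.5 * (s_t - y_t)^2."""
    states = forward(xs, u, w)
    return sum(0.5 * (s - y) ** 2 for s, y in zip(states[1:], ys))

def bptt_grad_w(xs, ys, u, w):
    """dE/dw, accumulated over all time steps by walking backwards in time."""
    states = forward(xs, u, w)
    grad_w = 0.0
    carried = 0.0                               # gradient flowing back from later steps
    for t in range(len(xs), 0, -1):
        s_t, s_prev = states[t], states[t - 1]
        ds = (s_t - ys[t - 1]) + carried        # total dE/ds_t
        dpre = ds * (1.0 - s_t ** 2)            # back through tanh
        grad_w += dpre * s_prev                 # contribution of step t to dE/dw
        carried = dpre * w                      # pass gradient on to s_{t-1}
    return grad_w

xs = [0.5, -1.0, 0.8, 0.3]
ys = [0.2, -0.4, 0.5, 0.1]
u, w = 0.7, -0.6
eps = 1e-6
analytic = bptt_grad_w(xs, ys, u, w)
numeric = (loss(xs, ys, u, w + eps) - loss(xs, ys, u, w - eps)) / (2 * eps)
print(analytic, numeric)
```

The `carried` term is where earlier time steps receive gradient from later ones: each backward step multiplies it by the local derivative and by `w` again.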

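Vanishing gradients

For the sigmoid function, the derivative is $\sigma'(z) = \sigma(z)(1 - \sigma(z))$, which is at most 0.25 and approaches 0 when the output approaches 0 or 1. BPTT multiplies one such factor (times a weight) per time step, so the gradient contribution from distant time steps shrinks toward 0: the gradient vanishes.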
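A quick numerical check illustrates the effect. The sketch below evaluates the sigmoid derivative at a few points and then shows how fast a product of such factors can shrink; it deliberately ignores the weight factor that also appears in each step, so the second loop is only an upper bound on the activation part under that assumption.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of the sigmoid: s * (1 - s)."""
    s = sigmoid(z)
    return s * (1.0 - s)

# The derivative peaks at z = 0 and collapses when the unit saturates.
for z in (-10.0, 0.0, 10.0):
    print(z, sigmoid_grad(z))

# Largest possible product of T sigmoid derivatives (weights ignored).
for T in (1, 5, 10, 20):
    print(T, 0.25 ** T)
```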

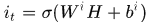

LSTM cell

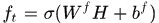

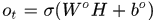

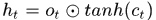

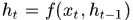

Forward propagation
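As a rough illustration, one step of the commonly used LSTM formulation (forget, input, and output gates plus a candidate cell state) can be sketched as follows. The parameter names (`Wf`, `Wi`, `Wo`, `Wc` and their biases), the concatenated `[h_prev, x]` input layout, and the toy values are assumptions for this example and may differ from the variant shown in the figures above.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, v, b):
    """Compute W v + b for a matrix given as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]

def lstm_step(x, h_prev, c_prev, p):
    """One LSTM step; returns the new hidden state h and cell state c."""
    z = h_prev + x                                            # concatenation [h_{t-1}, x_t]
    f = [sigmoid(a) for a in affine(p["Wf"], z, p["bf"])]     # forget gate
    i = [sigmoid(a) for a in affine(p["Wi"], z, p["bi"])]     # input gate
    o = [sigmoid(a) for a in affine(p["Wo"], z, p["bo"])]     # output gate
    g = [math.tanh(a) for a in affine(p["Wc"], z, p["bc"])]   # candidate cell state
    c = [ft * cp + it * gt for ft, cp, it, gt in zip(f, c_prev, i, g)]
    h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]
    return h, c

# Toy sizes (assumed): hidden_dim=2, input_dim=1
hidden_dim, input_dim = 2, 1
zeros = [[0.0] * (hidden_dim + input_dim) for _ in range(hidden_dim)]
params = {
    "Wf": zeros, "bf": [50.0, 50.0],     # forget gate saturated near 1
    "Wi": zeros, "bi": [-50.0, -50.0],   # input gate saturated near 0
    "Wo": zeros, "bo": [0.0, 0.0],       # output gate at 0.5
    "Wc": zeros, "bc": [0.0, 0.0],
}
h, c = lstm_step([1.0], [0.0, 0.0], [0.3, -0.7], params)
print(h, c)
```

With the forget gate near 1 and the input gate near 0, the previous cell state is carried through essentially unchanged. This additive path is what allows an LSTM to keep information over long spans.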

Encoder-Decoder Framework

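Basic framework

(1) The Encoder encodes the input sequence $x = (x_1, \dots, x_{T_x})$, i.e.

$$h_t = f(x_t, h_{t-1})$$

$$c = q(\{h_1, \dots, h_{T_x}\})$$

where $h_t$ is the hidden state at time $t$, and $c$ is the output vector of the Encoder.

(2) The Decoder, given the Encoder's output vector $c$ and the hidden states before time $t$, predicts the state at the current time step and emits an output. That is:

$$p(y) = \prod_{t=1}^{T_y} p(y_t \mid \{y_1, \dots, y_{t-1}\}, c)$$

For an RNN model, each conditional probability is:

$$p(y_t \mid \{y_1, \dots, y_{t-1}\}, c) = g(y_{t-1}, s_t, c)$$

where $s_t$ is the current hidden state and $c$ is the output vector of the Encoder.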
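The two stages can be sketched with a tiny dependency-free example. Everything specific here is an assumption made for illustration: the Encoder output is taken to be the last hidden state, the Decoder state is updated from the previous token, the previous state, and the Encoder output, the output distribution is a softmax of the Decoder state, and all weight names and values are hypothetical.

```python
import itertools
import math

def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def softmax(z):
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def one_hot(index, size):
    return [1.0 if k == index else 0.0 for k in range(size)]

def encode(xs, W_x, W_h):
    """Run the encoder RNN and return its last hidden state as the output vector."""
    h = [0.0] * len(W_h)
    for x in xs:
        h = [math.tanh(a + r) for a, r in zip(matvec(W_x, x), matvec(W_h, h))]
    return h

def sequence_log_prob(ys, c, D_y, D_s, D_c, V_out):
    """Sum of log p(y_t | earlier tokens, c) over a sequence of token ids."""
    vocab = len(V_out)
    s = [0.0] * len(D_s)
    y_prev = [0.0] * vocab               # all-zero vector stands in for a start token
    log_p = 0.0
    for y in ys:
        pre = zip(matvec(D_y, y_prev), matvec(D_s, s), matvec(D_c, c))
        s = [math.tanh(a + r + k) for a, r, k in pre]
        probs = softmax(matvec(V_out, s))
        log_p += math.log(probs[y])
        y_prev = one_hot(y, vocab)
    return log_p

# Toy sizes (assumed): input_dim=2, hidden_dim=2, vocabulary of 2 tokens
W_x = [[0.5, -0.3], [0.1, 0.4]]
W_h = [[0.2, 0.0], [-0.1, 0.3]]
D_y = [[0.3, -0.2], [0.1, 0.5]]
D_s = [[0.1, 0.2], [0.0, -0.3]]
D_c = [[0.4, 0.1], [-0.2, 0.6]]
V_out = [[0.7, -0.5], [-0.4, 0.9]]

c = encode([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], W_x, W_h)
total = sum(math.exp(sequence_log_prob(list(ys), c, D_y, D_s, D_c, V_out))
            for ys in itertools.product(range(2), repeat=2))
print(total)
```

Because the sequence probability is a product of per-step conditionals, the probabilities of all length-2 output sequences sum to 1 up to floating-point error, which is a handy sanity check for a decoder implementation.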

Attention mechanism

区别:对传统的Decoder进行调整,引入context vector ,也即

,也即

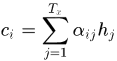

其中每个

由如下归一化得到:

由如下归一化得到:

其中

输出序列的位置,

输出序列的位置, 为输入序列的位置,也即对输入序列和输出序列的位置对应关系进行了建模。

为输入序列的位置,也即对输入序列和输出序列的位置对应关系进行了建模。称

为alignment model

为alignment model
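The normalization and the weighted sum can be sketched directly. In this example a plain dot product is swapped in for the alignment model purely to keep the code short; a learned alignment model would replace `dot_score`. The function names and toy vectors are assumptions for illustration.

```python
import math

def softmax(z):
    """Normalize alignment scores into weights that sum to 1."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def dot_score(s, h):
    """Stand-in alignment model: dot product of decoder state and encoder state."""
    return sum(a * b for a, b in zip(s, h))

def attention_context(s_prev, hs, score):
    """Return the attention weights over input positions and the context vector."""
    e = [score(s_prev, h) for h in hs]           # one score per input position
    alpha = softmax(e)                           # normalized weights
    dim = len(hs[0])
    context = [sum(a * h[k] for a, h in zip(alpha, hs)) for k in range(dim)]
    return alpha, context

hs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]        # encoder hidden states (toy values)
s_prev = [2.0, 0.0]                              # previous decoder state (toy value)
alpha, context = attention_context(s_prev, hs, dot_score)
print(alpha, context)
```

Input positions whose hidden state scores higher against the decoder state receive more weight, so the context vector changes from one output position to the next instead of being a single fixed summary.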