No preamble; let's get straight to the practical material.

Where spark-submit lives

[spark@master ~]$ cd $SPARK_HOME/bin
[spark@master bin]$ pwd
/usr/local/spark/spark-1.6.1-bin-hadoop2.6/bin
[spark@master bin]$ ll
total 92
-rwxr-xr-x. 1 spark spark 1099 Feb 27 2016 beeline
-rw-r--r--. 1 spark spark  932 Feb 27 2016 beeline.cmd
-rw-r--r--. 1 spark spark 1910 Feb 27 2016 load-spark-env.cmd
-rw-r--r--. 1 spark spark 2143 Feb 27 2016 load-spark-env.sh
-rwxr-xr-x. 1 spark spark 3459 Feb 27 2016 pyspark
-rw-r--r--. 1 spark spark 1486 Feb 27 2016 pyspark2.cmd
-rw-r--r--. 1 spark spark 1000 Feb 27 2016 pyspark.cmd
-rwxr-xr-x. 1 spark spark 2384 Feb 27 2016 run-example
-rw-r--r--. 1 spark spark 2682 Feb 27 2016 run-example2.cmd
-rw-r--r--. 1 spark spark 1012 Feb 27 2016 run-example.cmd
-rwxr-xr-x. 1 spark spark 2858 Feb 27 2016 spark-class
-rw-r--r--. 1 spark spark 2365 Feb 27 2016 spark-class2.cmd
-rw-r--r--. 1 spark spark 1010 Feb 27 2016 spark-class.cmd
-rwxr-xr-x. 1 spark spark 1049 Feb 27 2016 sparkR
-rw-r--r--. 1 spark spark 1010 Feb 27 2016 sparkR2.cmd
-rw-r--r--. 1 spark spark  998 Feb 27 2016 sparkR.cmd
-rwxr-xr-x. 1 spark spark 3026 Feb 27 2016 spark-shell
-rw-r--r--. 1 spark spark 1528 Feb 27 2016 spark-shell2.cmd
-rw-r--r--. 1 spark spark 1008 Feb 27 2016 spark-shell.cmd
-rwxr-xr-x. 1 spark spark 1075 Feb 27 2016 spark-sql
-rwxr-xr-x. 1 spark spark 1050 Feb 27 2016 spark-submit
-rw-r--r--. 1 spark spark 1126 Feb 27 2016 spark-submit2.cmd
-rw-r--r--. 1 spark spark 1010 Feb 27 2016 spark-submit.cmd
[spark@master bin]$

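Packaging a Spark application

Package the Spark application as an assembly jar. Besides Spark's own jars and the HDFS jars, an application usually depends on other third-party jars, so we normally use Maven or sbt to build a single assembly jar that bundles them. (Note: bundle only the dependencies the application actually needs!)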
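One way to check the "bundle only what is needed" point is to look for Spark and Hadoop classes inside the finished jar, since the cluster already provides them at runtime. The following is a minimal sketch, not part of the original post: the function name `count_provided_classes` and the jar path in the usage note are hypothetical.

```shell
# Sketch: count Spark/Hadoop classes that ended up inside an assembly jar.
# Reads a jar listing (one entry per line, as printed by `jar tf`) on stdin
# and prints how many entries are classes under org/apache/spark or
# org/apache/hadoop. The function name is a hypothetical helper.
count_provided_classes() {
  # grep -c prints the count; `|| true` keeps the exit status 0 when it is 0.
  grep -c -E '^org/apache/(spark|hadoop)/.*\.class$' || true
}
```

A possible usage is `jar tf /path/to/wordcount-assembly.jar | count_provided_classes`. A result of 0 suggests that Spark and Hadoop were kept out of the assembly, for example by giving them `provided` scope in Maven.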

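Build tools:

1. Maven: maven-shade-plugin

See:

Setting up a Spark programming environment (based on IntelliJ IDEA Ultimate) (includes Java and Scala versions of WordCount) (strongly recommended by the author)

2. sbt

I won't go into this option; I personally prefer Maven.

3. For more options, see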

Launching a Spark application with spark-submit

$SPARK_HOME/bin/spark-submit \
  --class <main-class> \
  --master <master-url> \
  --deploy-mode <deploy-mode> \
  --conf <key>=<value> \
  ... # other options
  <application-jar> \
  [application-arguments]
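To make the template concrete, here is a minimal sketch wrapped in a shell function, so nothing is submitted until the function is called. The class name `com.example.WordCount`, the jar path, and the standalone master URL `spark://master:7077` are placeholder assumptions, not values from this post; the options themselves all appear in the `spark-submit --help` output.

```shell
# Sketch: a filled-in version of the spark-submit template.
# Class name, jar path and master URL are hypothetical placeholders.
submit_wordcount() {
  "${SPARK_HOME}/bin/spark-submit" \
    --class com.example.WordCount \
    --master "spark://master:7077" \
    --deploy-mode client \
    --conf spark.eventLog.enabled=false \
    /path/to/wordcount-assembly.jar \
    "$@"   # application arguments are passed through unchanged
}
```

Calling `submit_wordcount /input/path /output/path` would pass the two paths to the application's main method as its arguments.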

See:

Introduction to Spark on YARN and running wordcount (master, slave1 and slave2) (recommended by the author)

Introduction to Spark standalone and running wordcount (master, slave1 and slave2)

spark-submit usage

Usage: spark-submit [options] <app jar | python file> [app arguments]

Usage: spark-submit --kill [submission ID] --master [spark://...]

Usage: spark-submit --status [submission ID] --master [spark://...]

[spark@master ~]$ $SPARK_HOME/bin/spark-submit

or

[spark@master ~]$ $SPARK_HOME/bin/spark-submit --help

[spark@master ~]$ $SPARK_HOME/bin/spark-submit --help
Usage: spark-submit [options] <app jar | python file> [app arguments]
Usage: spark-submit --kill [submission ID] --master [spark://...]
Usage: spark-submit --status [submission ID] --master [spark://...]

Options:
  --master MASTER_URL         spark://host:port, mesos://host:port, yarn, or local.
  --deploy-mode DEPLOY_MODE   Whether to launch the driver program locally ("client") or
                              on one of the worker machines inside the cluster ("cluster")
                              (Default: client).
  --class CLASS_NAME          Your application's main class (for Java / Scala apps).
  --name NAME                 A name of your application.
  --jars JARS                 Comma-separated list of local jars to include on the driver
                              and executor classpaths.
  --packages                  Comma-separated list of maven coordinates of jars to include
                              on the driver and executor classpaths. Will search the local
                              maven repo, then maven central and any additional remote
                              repositories given by --repositories. The format for the
                              coordinates should be groupId:artifactId:version.
  --exclude-packages          Comma-separated list of groupId:artifactId, to exclude while
                              resolving the dependencies provided in --packages to avoid
                              dependency conflicts.
  --repositories              Comma-separated list of additional remote repositories to
                              search for the maven coordinates given with --packages.
  --py-files PY_FILES         Comma-separated list of .zip, .egg, or .py files to place
                              on the PYTHONPATH for Python apps.
  --files FILES               Comma-separated list of files to be placed in the working
                              directory of each executor.

  --conf PROP=VALUE           Arbitrary Spark configuration property.
  --properties-file FILE      Path to a file from which to load extra properties. If not
                              specified, this will look for conf/spark-defaults.conf.

  --driver-memory MEM         Memory for driver (e.g. 1000M, 2G) (Default: 1024M).
  --driver-java-options       Extra Java options to pass to the driver.
  --driver-library-path       Extra library path entries to pass to the driver.
  --driver-class-path         Extra class path entries to pass to the driver. Note that
                              jars added with --jars are automatically included in the
                              classpath.

  --executor-memory MEM       Memory per executor (e.g. 1000M, 2G) (Default: 1G).

  --proxy-user NAME           User to impersonate when submitting the application.

  --help, -h                  Show this help message and exit
  --verbose, -v               Print additional debug output
  --version,                  Print the version of current Spark

 Spark standalone with cluster deploy mode only:
  --driver-cores NUM          Cores for driver (Default: 1).

 Spark standalone or Mesos with cluster deploy mode only:
  --supervise                 If given, restarts the driver on failure.
  --kill SUBMISSION_ID        If given, kills the driver specified.
  --status SUBMISSION_ID      If given, requests the status of the driver specified.

 Spark standalone and Mesos only:
  --total-executor-cores NUM  Total cores for all executors.

 Spark standalone and YARN only:
  --executor-cores NUM        Number of cores per executor. (Default: 1 in YARN mode,
                              or all available cores on the worker in standalone mode)

 YARN-only:
  --driver-cores NUM          Number of cores used by the driver, only in cluster mode
                              (Default: 1).
  --queue QUEUE_NAME          The YARN queue to submit to (Default: "default").
  --num-executors NUM         Number of executors to launch (Default: 2).
  --archives ARCHIVES         Comma separated list of archives to be extracted into the
                              working directory of each executor.
  --principal PRINCIPAL       Principal to be used to login to KDC, while running on
                              secure HDFS.
  --keytab KEYTAB             The full path to the file that contains the keytab for the
                              principal specified above. This keytab will be copied to
                              the node running the Application Master via the Secure
                              Distributed Cache, for renewing the login tickets and the
                              delegation tokens periodically.
[spark@master ~]$

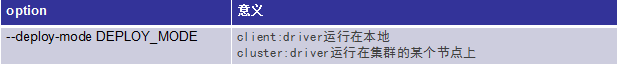

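spark-submit options: run mode

Set Spark's run mode according to your needs.

Typical master URLs: local, spark://host:port, mesos://host:port, yarn

Note: --deploy-mode is not specific to Spark on YARN.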
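As a small illustration of the note above, the sketch below prints the `--master` and `--deploy-mode` flags for a few common combinations, showing that `--deploy-mode` applies to standalone clusters as well as YARN. The mode names, the function name `mode_flags`, and the address `spark://master:7077` are assumptions made for this example.

```shell
# Sketch: map a run-mode name to spark-submit flags.
# Mode names and the host:port are hypothetical; adjust them to your cluster.
mode_flags() {
  case "$1" in
    local)              echo "--master local[*]" ;;   # all local cores, no cluster
    standalone-client)  echo "--master spark://master:7077 --deploy-mode client" ;;
    standalone-cluster) echo "--master spark://master:7077 --deploy-mode cluster" ;;
    yarn-client)        echo "--master yarn --deploy-mode client" ;;
    yarn-cluster)       echo "--master yarn --deploy-mode cluster" ;;
    *) echo "unknown mode: $1" >&2; return 1 ;;
  esac
}
```

In client mode the driver runs on the machine where spark-submit is invoked; in cluster mode it runs on one of the worker machines inside the cluster, as the help text describes.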

spark-submit options: general

spark-submit options: classpath, driver and executor

spark-submit options: resources and configuration
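As an illustration of the resource and configuration options listed in the help output, here is a minimal sketch for a standalone cluster. The memory and core values, the property `spark.default.parallelism=8`, the properties file path, the class name and the jar path are all example assumptions rather than recommendations from this post.

```shell
# Sketch: resource and configuration options on a standalone cluster.
# All concrete values and paths below are hypothetical examples.
submit_with_resources() {
  "${SPARK_HOME}/bin/spark-submit" \
    --class com.example.WordCount \
    --master "spark://master:7077" \
    --driver-memory 1G \
    --executor-memory 2G \
    --total-executor-cores 4 \
    --conf spark.default.parallelism=8 \
    --properties-file /path/to/my-app.conf \
    /path/to/wordcount-assembly.jar \
    "$@"
}
```

If `--properties-file` is omitted, spark-submit looks for conf/spark-defaults.conf, as the help text states.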

spark-submit options: YARN-only

The following options take effect only in Spark on YARN mode.
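A minimal sketch of a YARN submission using the YARN-related options from the help output. The queue name and counts simply repeat the defaults shown there; the class name and jar path are hypothetical placeholders.

```shell
# Sketch: submit to YARN in cluster mode.
# Spark locates the cluster through HADOOP_CONF_DIR or YARN_CONF_DIR,
# so one of them must point at the Hadoop client configuration.
# Class name and jar path are hypothetical placeholders.
submit_on_yarn() {
  "${SPARK_HOME}/bin/spark-submit" \
    --class com.example.WordCount \
    --master yarn \
    --deploy-mode cluster \
    --queue default \
    --num-executors 2 \
    --executor-cores 1 \
    --executor-memory 1G \
    --driver-cores 1 \
    /path/to/wordcount-assembly.jar \
    "$@"
}
```

Note that `--driver-cores` only has an effect in cluster mode on YARN, according to the help text.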

spark-submit options: other

Advanced Dependency Management

How dependencies are distributed

--jars

1. file: absolute path, file:/xxxx

2. hdfs, http, https, ftp

3. local

--repositories, --packages

--py-files (Python apps only)
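The options above can be sketched as follows. All paths, the HDFS address `hdfs://master:9000`, the repository URL and the class name are hypothetical; the Maven coordinate is only an example of the groupId:artifactId:version format.

```shell
# Sketch: the three --jars URL styles plus --packages and --repositories.
#   file:  the jar is read from the submitting machine and served to executors
#   hdfs:  (also http, https, ftp) each node pulls the jar from that URI
#   local: the jar is expected to already exist at that path on every worker
# All paths, hosts and coordinates are hypothetical examples.
submit_with_deps() {
  "${SPARK_HOME}/bin/spark-submit" \
    --class com.example.WordCount \
    --master yarn \
    --jars "file:/opt/libs/a.jar,hdfs://master:9000/libs/b.jar,local:/opt/libs/c.jar" \
    --packages "com.databricks:spark-csv_2.10:1.5.0" \
    --repositories "https://repo.example.com/maven" \
    /path/to/wordcount-assembly.jar \
    "$@"
}

# Sketch: a Python application with extra modules shipped via --py-files.
submit_pyspark() {
  "${SPARK_HOME}/bin/spark-submit" \
    --master yarn \
    --py-files "/path/to/deps.zip,/path/to/helpers.py" \
    /path/to/app.py \
    "$@"
}
```

Each list is comma-separated with no spaces, which is why the values are quoted as single arguments.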

Clean up

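Jars and files are copied to each executor's working directory, so they need to be cleaned up periodically:

Spark on YARN cleans up automatically (when spark.yarn.preserve.staging.files is false, which is the default)

Spark standalone: controlled by spark.worker.cleanup.appDataTtl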
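For the standalone case, a minimal sketch of the worker-side settings, assuming they are placed in conf/spark-env.sh on each worker. The interval and TTL values are examples only (both are in seconds).

```shell
# Sketch for conf/spark-env.sh on each standalone worker.
# Cleanup is off unless spark.worker.cleanup.enabled is true;
# appDataTtl is how long application directories are kept.
SPARK_WORKER_OPTS="-Dspark.worker.cleanup.enabled=true"
SPARK_WORKER_OPTS="$SPARK_WORKER_OPTS -Dspark.worker.cleanup.interval=1800"      # check every 30 minutes
SPARK_WORKER_OPTS="$SPARK_WORKER_OPTS -Dspark.worker.cleanup.appDataTtl=604800"  # keep data for 7 days
export SPARK_WORKER_OPTS
```

The workers need a restart to pick up the change, and the cleanup only removes directories of applications that have already stopped.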