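First, for reference, my Kafka server.properties follows the one described in:

Kafka server.properties configuration file reference example (illustrated walkthrough) (several approaches)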

Problem details

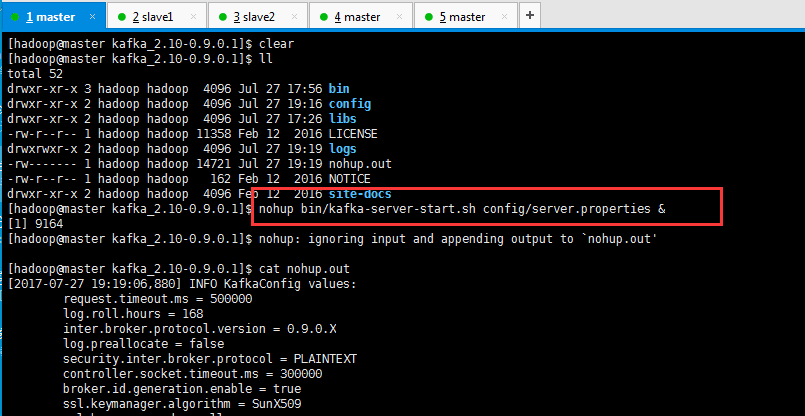

Then, when I started the broker, I got the following:

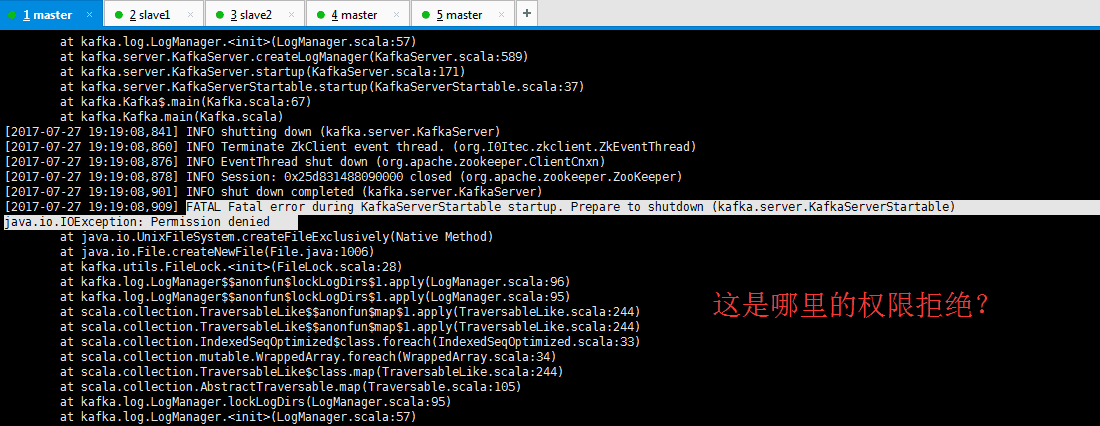

[hadoop@master kafka_2.10-0.9.0.1]$ nohup bin/kafka-server-start.sh config/server.properties &
[1] 9164
[hadoop@master kafka_2.10-0.9.0.1]$ nohup: ignoring input and appending output to `nohup.out'
[hadoop@master kafka_2.10-0.9.0.1]$ cat nohup.out
[2017-07-27 19:19:06,880] INFO KafkaConfig values:
    request.timeout.ms = 500000
    log.roll.hours = 168
    inter.broker.protocol.version = 0.9.0.X
    log.preallocate = false
    security.inter.broker.protocol = PLAINTEXT
    controller.socket.timeout.ms = 300000
    broker.id.generation.enable = true
    ssl.keymanager.algorithm = SunX509
    ssl.key.password = null
    log.cleaner.enable = false
    ssl.provider = null
    num.recovery.threads.per.data.dir = 1
    background.threads = 10
    unclean.leader.election.enable = true
    sasl.kerberos.kinit.cmd = /usr/bin/kinit
    replica.lag.time.max.ms = 300000
    ssl.endpoint.identification.algorithm = null
    auto.create.topics.enable = false
    zookeeper.sync.time.ms = 2000
    ssl.client.auth = none
    ssl.keystore.password = null
    log.cleaner.io.buffer.load.factor = 0.9
    offsets.topic.compression.codec = 0
    log.retention.hours = 72
    log.dirs = /data/kafka-log/log/9092
    ssl.protocol = TLS
    log.index.size.max.bytes = 10485760
    sasl.kerberos.min.time.before.relogin = 60000
    log.retention.minutes = null
    connections.max.idle.ms = 600000
    ssl.trustmanager.algorithm = PKIX
    offsets.retention.minutes = 1440
    max.connections.per.ip = 2147483647
    replica.fetch.wait.max.ms = 1000
    metrics.num.samples = 2
    port = 9092
    offsets.retention.check.interval.ms = 600000
    log.cleaner.dedupe.buffer.size = 134217728
    log.segment.bytes = 536870912
    group.min.session.timeout.ms = 6000
    producer.purgatory.purge.interval.requests = 100
    min.insync.replicas = 1
    ssl.truststore.password = null
    log.flush.scheduler.interval.ms = 9223372036854775807
    socket.receive.buffer.bytes = 1048576
    leader.imbalance.per.broker.percentage = 10
    num.io.threads = 8
    zookeeper.connect = master:2181,slave1:2181,slave2:2181
    queued.max.requests = 16
    offsets.topic.replication.factor = 3
    replica.socket.timeout.ms = 50000
    offsets.topic.segment.bytes = 104857600
    replica.high.watermark.checkpoint.interval.ms = 5000
    broker.id = 0
    ssl.keystore.location = null
    listeners = PLAINTEXT://:9092
    log.flush.interval.messages = 10000
    principal.builder.class = class org.apache.kafka.common.security.auth.DefaultPrincipalBuilder
    log.retention.ms = null
    offsets.commit.required.acks = -1
    sasl.kerberos.principal.to.local.rules = [DEFAULT]
    group.max.session.timeout.ms = 500000
    num.replica.fetchers = 4
    advertised.listeners = null
    replica.socket.receive.buffer.bytes = 65536
    delete.topic.enable = false
    log.index.interval.bytes = 4096
    metric.reporters = []
    compression.type = producer
    log.cleanup.policy = delete
    controlled.shutdown.max.retries = 3
    log.cleaner.threads = 1
    quota.window.size.seconds = 1
    zookeeper.connection.timeout.ms = 300000
    offsets.load.buffer.size = 5242880
    zookeeper.session.timeout.ms = 6000
    ssl.cipher.suites = null
    authorizer.class.name =
    sasl.kerberos.ticket.renew.jitter = 0.05
    sasl.kerberos.service.name = null
    controlled.shutdown.enable = true
    offsets.topic.num.partitions = 50
    quota.window.num = 11
    message.max.bytes = 1000000
    log.cleaner.backoff.ms = 15000
    log.roll.jitter.hours = 0
    log.retention.check.interval.ms = 600000
    replica.fetch.max.bytes = 1048576
    log.cleaner.delete.retention.ms = 86400000
    fetch.purgatory.purge.interval.requests = 100
    log.cleaner.min.cleanable.ratio = 0.5
    offsets.commit.timeout.ms = 500000
    zookeeper.set.acl = false
    log.retention.bytes = 4294967296
    offset.metadata.max.bytes = 4096
    leader.imbalance.check.interval.seconds = 300
    quota.consumer.default = 9223372036854775807
    log.roll.jitter.ms = null
    reserved.broker.max.id = 1000
    replica.fetch.backoff.ms = 1000
    advertised.host.name = null
    quota.producer.default = 9223372036854775807
    log.cleaner.io.buffer.size = 524288
    controlled.shutdown.retry.backoff.ms = 5000
    log.dir = /tmp/kafka-logs
    log.flush.offset.checkpoint.interval.ms = 60000
    log.segment.delete.delay.ms = 60000
    num.partitions = 2
    num.network.threads = 8
    socket.request.max.bytes = 104857600
    sasl.kerberos.ticket.renew.window.factor = 0.8
    log.roll.ms = null
    ssl.enabled.protocols = [TLSv1.2, TLSv1.1, TLSv1]
    socket.send.buffer.bytes = 1048576
    log.flush.interval.ms = 1000
    ssl.truststore.location = null
    log.cleaner.io.max.bytes.per.second = 1.7976931348623157E308
    default.replication.factor = 1
    metrics.sample.window.ms = 30000
    auto.leader.rebalance.enable = true
    host.name =
    ssl.truststore.type = JKS
    advertised.port = null
    max.connections.per.ip.overrides =
    replica.fetch.min.bytes = 1
    ssl.keystore.type = JKS
 (kafka.server.KafkaConfig)
[2017-07-27 19:19:07,514] INFO starting (kafka.server.KafkaServer)
[2017-07-27 19:19:07,548] INFO Connecting to zookeeper on master:2181,slave1:2181,slave2:2181 (kafka.server.KafkaServer)
[2017-07-27 19:19:07,662] INFO Starting ZkClient event thread. (org.I0Itec.zkclient.ZkEventThread)
[2017-07-27 19:19:07,715] INFO Client environment:zookeeper.version=3.4.6-1569965, built on 02/20/2014 09:09 GMT (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,715] INFO Client environment:host.name=master (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,715] INFO Client environment:java.version=1.7.0_79 (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,715] INFO Client environment:java.vendor=Oracle Corporation (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,715] INFO Client environment:java.home=/home/hadoop/app/jdk1.7.0_79/jre (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,715] INFO Client environment:java.class.path=/home/hadoop/app/jdk/lib:.:/home/hadoop/app/jdk/lib:/home/hadoop/app/jdk/jre/lib:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/scala-library-2.10.5.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka_2.10-0.9.0.1-test.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/validation-api-1.1.0.Final.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/slf4j-api-1.7.6.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-io-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/aopalliance-repackaged-2.4.0-b31.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jopt-simple-3.2.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javax.servlet-api-3.1.0.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-client-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-guava-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka_2.10-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/slf4j-log4j12-1.7.6.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javax.annotation-api-1.2.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/connect-file-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/metrics-core-2.2.0.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javax.inject-1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-server-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/argparse4j-0.5.0.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka-tools-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-annotations-2.5.0.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-common-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/connect-json-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-media-jaxb-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-util-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/osgi-resource-locator-1.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka_2.10-0.9.0.1-sources.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/hk2-utils-2.4.0-b31.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-http-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka-clients-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka_2.10-0.9.0.1-javadoc.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-databind-2.5.4.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javassist-3.18.1-GA.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-jaxrs-base-2.5.4.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-module-jaxb-annotations-2.5.4.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-jaxrs-json-provider-2.5.4.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javax.inject-2.4.0-b31.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/connect-runtime-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-container-servlet-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka-log4j-appender-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/log4j-1.2.17.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/javax.ws.rs-api-2.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/zookeeper-3.4.6.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/hk2-api-2.4.0-b31.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-server-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-servlet-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jersey-container-servlet-core-2.22.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/hk2-locator-2.4.0-b31.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/connect-api-0.9.0.1.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jetty-security-9.2.12.v20150709.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/zkclient-0.7.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/jackson-core-2.5.4.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/lz4-1.2.0.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/snappy-java-1.1.1.7.jar:/home/hadoop/app/kafka_2.10-0.9.0.1/bin/../libs/kafka_2.10-0.9.0.1-scaladoc.jar (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,721] INFO Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,721] INFO Client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,721] INFO Client environment:java.compiler=<NA> (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,722] INFO Client environment:os.name=Linux (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,722] INFO Client environment:os.arch=amd64 (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,724] INFO Client environment:os.version=2.6.32-431.el6.x86_64 (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,724] INFO Client environment:user.name=hadoop (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,724] INFO Client environment:user.home=/home/hadoop (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,724] INFO Client environment:user.dir=/home/hadoop/app/kafka_2.10-0.9.0.1 (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,731] INFO Initiating client connection, connectString=master:2181,slave1:2181,slave2:2181 sessionTimeout=6000 watcher=org.I0Itec.zkclient.ZkClient@21683789 (org.apache.zookeeper.ZooKeeper)
[2017-07-27 19:19:07,914] INFO Waiting for keeper state SyncConnected (org.I0Itec.zkclient.ZkClient)
[2017-07-27 19:19:08,061] INFO Opening socket connection to server slave1/192.168.80.146:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)
[2017-07-27 19:19:08,080] INFO Socket connection established to slave1/192.168.80.146:2181, initiating session (org.apache.zookeeper.ClientCnxn)
[2017-07-27 19:19:08,123] INFO Session establishment complete on server slave1/192.168.80.146:2181, sessionid = 0x25d831488090000, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)
[2017-07-27 19:19:08,137] INFO zookeeper state changed (SyncConnected) (org.I0Itec.zkclient.ZkClient)
[2017-07-27 19:19:08,830] FATAL Fatal error during KafkaServer startup. Prepare to shutdown (kafka.server.KafkaServer)
java.io.IOException: Permission denied
    at java.io.UnixFileSystem.createFileExclusively(Native Method)
    at java.io.File.createNewFile(File.java:1006)
    at kafka.utils.FileLock.<init>(FileLock.scala:28)
    at kafka.log.LogManager$$anonfun$lockLogDirs$1.apply(LogManager.scala:96)
    at kafka.log.LogManager$$anonfun$lockLogDirs$1.apply(LogManager.scala:95)
    at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:244)
    at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:244)
    at scala.collection.IndexedSeqOptimized$class.foreach(IndexedSeqOptimized.scala:33)
    at scala.collection.mutable.WrappedArray.foreach(WrappedArray.scala:34)
    at scala.collection.TraversableLike$class.map(TraversableLike.scala:244)
    at scala.collection.AbstractTraversable.map(Traversable.scala:105)
    at kafka.log.LogManager.lockLogDirs(LogManager.scala:95)
    at kafka.log.LogManager.<init>(LogManager.scala:57)
    at kafka.server.KafkaServer.createLogManager(KafkaServer.scala:589)
    at kafka.server.KafkaServer.startup(KafkaServer.scala:171)
    at kafka.server.KafkaServerStartable.startup(KafkaServerStartable.scala:37)
    at kafka.Kafka$.main(Kafka.scala:67)
    at kafka.Kafka.main(Kafka.scala)
[2017-07-27 19:19:08,841] INFO shutting down (kafka.server.KafkaServer)
[2017-07-27 19:19:08,860] INFO Terminate ZkClient event thread. (org.I0Itec.zkclient.ZkEventThread)
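With a dump this long, the failure is easy to miss. As a minimal sketch (the function name `first_fatal` is my own, and GNU grep is assumed), the first FATAL entry and the exception line after it can be pulled out of nohup.out like this:

```shell
#!/usr/bin/env bash
# Print the first FATAL entry of a broker log plus a few lines after it.
# Usage: first_fatal <log-file> [context-lines]
# first_fatal is a hypothetical helper name, not part of Kafka.
first_fatal() {
  local log_file=$1
  local context=${2:-3}
  # -m 1 stops at the first match; -A prints the lines that follow it,
  # which is where the exception type and the top of the stack trace sit.
  grep -m 1 -A "$context" 'FATAL' "$log_file"
}
```

For example, `first_fatal nohup.out 2` should show the FATAL line followed by the exception and the first stack frame. Because nohup appends to nohup.out, an old failure can still be the first match after a later successful start.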

Problem analysis

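The cause was the directory I had just created.

A Permission denied error at this point comes down to one of two things: the user running the start command, or the permissions on the directory the logs are written to. The stack trace fails in kafka.log.LogManager.lockLogDirs, where the broker tries to create its lock file under log.dirs (/data/kafka-log/log/9092).

Here it was the log directory permissions: I created the directory in a hurry and forgot to chown it.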
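Both possible causes can be checked in one step before starting the broker. The following is only a sketch under my own assumptions: `check_log_dirs` is a hypothetical helper, it reads the `log.dirs` line from the properties file, and it tests writability for whichever user runs it, so it should be run as the user that starts Kafka.

```shell
#!/usr/bin/env bash
# Report whether each directory named in log.dirs exists and is writable
# by the user running this script (run it as the user that starts Kafka).
# Usage: check_log_dirs config/server.properties
# check_log_dirs is a hypothetical helper name, not part of Kafka.
check_log_dirs() {
  local props=$1 dirs dir status=0
  local -a dir_list
  # Value of the last "log.dirs=..." line; spaces around the '=' are dropped.
  dirs=$(sed -n 's/^[[:space:]]*log\.dirs[[:space:]]*=[[:space:]]*//p' "$props" | tail -n 1)
  if [ -z "$dirs" ]; then
    echo "no log.dirs entry found in $props" >&2
    return 2
  fi
  # log.dirs may hold several comma-separated paths.
  IFS=',' read -r -a dir_list <<< "$dirs"
  for dir in "${dir_list[@]}"; do
    if [ -d "$dir" ] && [ -w "$dir" ]; then
      echo "OK       $dir"
    else
      echo "PROBLEM  $dir (missing or not writable)"
      status=1
    fi
  done
  return "$status"
}
```

Run as hadoop against the configuration above, `check_log_dirs config/server.properties` would be expected to flag /data/kafka-log/log/9092 while that directory is still owned by root. When run as root the writability test always passes, so the check is only meaningful for the broker user.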

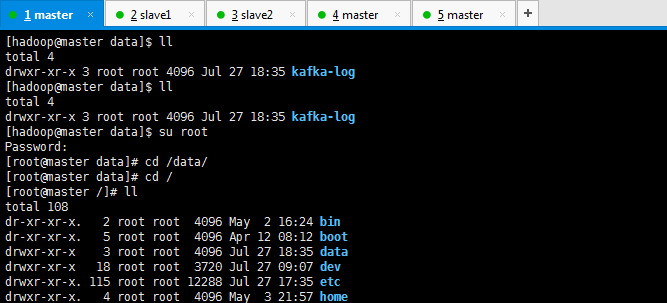

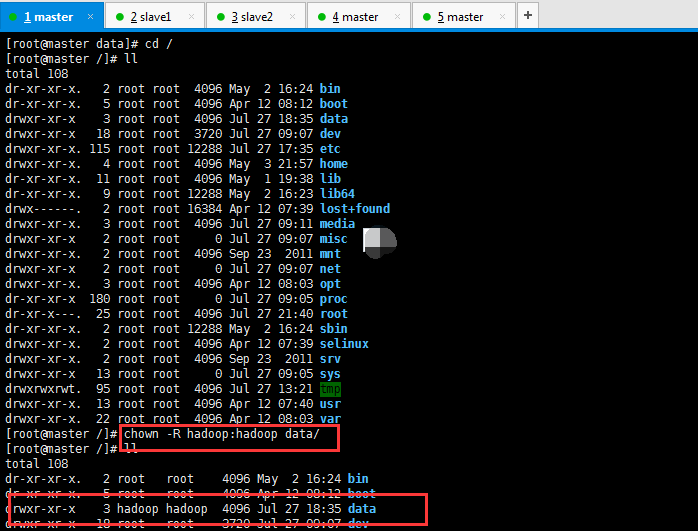

Solution

[hadoop@master data]$ ll
total 4
drwxr-xr-x 3 root root 4096 Jul 27 18:35 kafka-log
[hadoop@master data]$ ll
total 4
drwxr-xr-x 3 root root 4096 Jul 27 18:35 kafka-log
[hadoop@master data]$ su root
Password:
[root@master data]# cd /data/
[root@master data]# cd /
[root@master /]# ll
total 108
dr-xr-xr-x. 2 root root 4096 May 2 16:24 bin
dr-xr-xr-x. 5 root root 4096 Apr 12 08:12 boot
drwxr-xr-x 3 root root 4096 Jul 27 18:35 data
drwxr-xr-x 18 root root 3720 Jul 27 09:07 dev
drwxr-xr-x. 115 root root 12288 Jul 27 17:35 etc
drwxr-xr-x. 4 root root 4096 May 3 21:57 home
dr-xr-xr-x. 11 root root 4096 May 1 19:38 lib
dr-xr-xr-x. 9 root root 12288 May 2 16:23 lib64
drwx------. 2 root root 16384 Apr 12 07:39 lost+found
drwxr-xr-x. 3 root root 4096 Jul 27 09:11 media
drwxr-xr-x 2 root root 0 Jul 27 09:07 misc
drwxr-xr-x. 2 root root 4096 Sep 23 2011 mnt
drwxr-xr-x 2 root root 0 Jul 27 09:07 net
drwxr-xr-x. 3 root root 4096 Apr 12 08:03 opt
dr-xr-xr-x 180 root root 0 Jul 27 09:05 proc
dr-xr-x---. 25 root root 4096 Jul 27 21:40 root
dr-xr-xr-x. 2 root root 12288 May 2 16:24 sbin
drwxr-xr-x. 2 root root 4096 Apr 12 07:39 selinux
drwxr-xr-x. 2 root root 4096 Sep 23 2011 srv
drwxr-xr-x 13 root root 0 Jul 27 09:05 sys
drwxrwxrwt. 95 root root 4096 Jul 27 13:21 tmp
drwxr-xr-x. 13 root root 4096 Apr 12 07:40 usr
drwxr-xr-x. 22 root root 4096 Apr 12 08:03 var
[root@master /]# chown -R hadoop:hadoop data/
[root@master /]# ll
total 108
dr-xr-xr-x. 2 root root 4096 May 2 16:24 bin
dr-xr-xr-x. 5 root root 4096 Apr 12 08:12 boot
drwxr-xr-x 3 hadoop hadoop 4096 Jul 27 18:35 data
drwxr-xr-x 18 root root 3720 Jul 27 09:07 dev
drwxr-xr-x. 115 root root 12288 Jul 27 17:35 etc
drwxr-xr-x. 4 root root 4096 May 3 21:57 home
dr-xr-xr-x. 11 root root 4096 May 1 19:38 lib
dr-xr-xr-x. 9 root root 12288 May 2 16:23 lib64
drwx------. 2 root root 16384 Apr 12 07:39 lost+found
drwxr-xr-x. 3 root root 4096 Jul 27 09:11 media
drwxr-xr-x 2 root root 0 Jul 27 09:07 misc
drwxr-xr-x. 2 root root 4096 Sep 23 2011 mnt
drwxr-xr-x 2 root root 0 Jul 27 09:07 net
drwxr-xr-x. 3 root root 4096 Apr 12 08:03 opt
dr-xr-xr-x 180 root root 0 Jul 27 09:05 proc
dr-xr-x---. 25 root root 4096 Jul 27 21:40 root
dr-xr-xr-x. 2 root root 12288 May 2 16:24 sbin
drwxr-xr-x. 2 root root 4096 Apr 12 07:39 selinux
drwxr-xr-x. 2 root root 4096 Sep 23 2011 srv
drwxr-xr-x 13 root root 0 Jul 27 09:05 sys
drwxrwxrwt. 95 root root 4096 Jul 27 13:21 tmp
drwxr-xr-x. 13 root root 4096 Apr 12 07:40 usr
drwxr-xr-x. 22 root root 4096 Apr 12 08:03 var
[root@master /]#

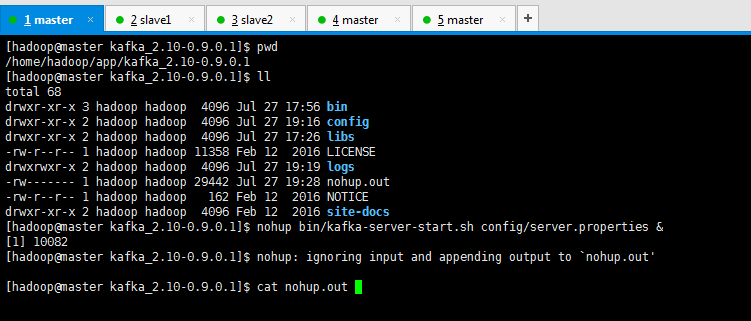
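The session above hands the whole of /data to hadoop. A narrower variant, which is my own suggestion rather than what I actually ran, is to create the Kafka log directory and change ownership of that directory tree only. `prepare_log_dir` is a hypothetical helper name, and it needs to run as root (or as a user already allowed to chown the path):

```shell
#!/usr/bin/env bash
# Create a Kafka log directory and give only that directory tree to the
# broker user, leaving the parent directories as they are.
# Usage (as root): prepare_log_dir /data/kafka-log/log/9092 hadoop hadoop
# prepare_log_dir is a hypothetical helper name, not part of Kafka.
prepare_log_dir() {
  local dir=$1 owner=$2 group=$3
  # -p also creates missing parent directories and is a no-op if dir exists.
  mkdir -p "$dir" || return 1
  # Fix the step that was forgotten: ownership of the log directory itself.
  chown -R "$owner:$group" "$dir" || return 1
  # Print the resulting owner and mode for a quick visual check.
  ls -ld "$dir"
}
```

This assumes the parent directories stay traversable by the broker user (mode drwxr-xr-x in the listing above), which is enough for the broker to reach and write into its own log directory.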

Then start the broker again:

[hadoop@master kafka_2.10-0.9.0.1]$ pwd
/home/hadoop/app/kafka_2.10-0.9.0.1
[hadoop@master kafka_2.10-0.9.0.1]$ ll
total 68
drwxr-xr-x 3 hadoop hadoop 4096 Jul 27 17:56 bin
drwxr-xr-x 2 hadoop hadoop 4096 Jul 27 19:16 config
drwxr-xr-x 2 hadoop hadoop 4096 Jul 27 17:26 libs
-rw-r--r-- 1 hadoop hadoop 11358 Feb 12 2016 LICENSE
drwxrwxr-x 2 hadoop hadoop 4096 Jul 27 19:19 logs
-rw------- 1 hadoop hadoop 29442 Jul 27 19:28 nohup.out
-rw-r--r-- 1 hadoop hadoop 162 Feb 12 2016 NOTICE
drwxr-xr-x 2 hadoop hadoop 4096 Feb 12 2016 site-docs
[hadoop@master kafka_2.10-0.9.0.1]$ nohup bin/kafka-server-start.sh config/server.properties &
[1] 10082
[hadoop@master kafka_2.10-0.9.0.1]$ nohup: ignoring input and appending output to `nohup.out'
[hadoop@master kafka_2.10-0.9.0.1]$ cat nohup.out

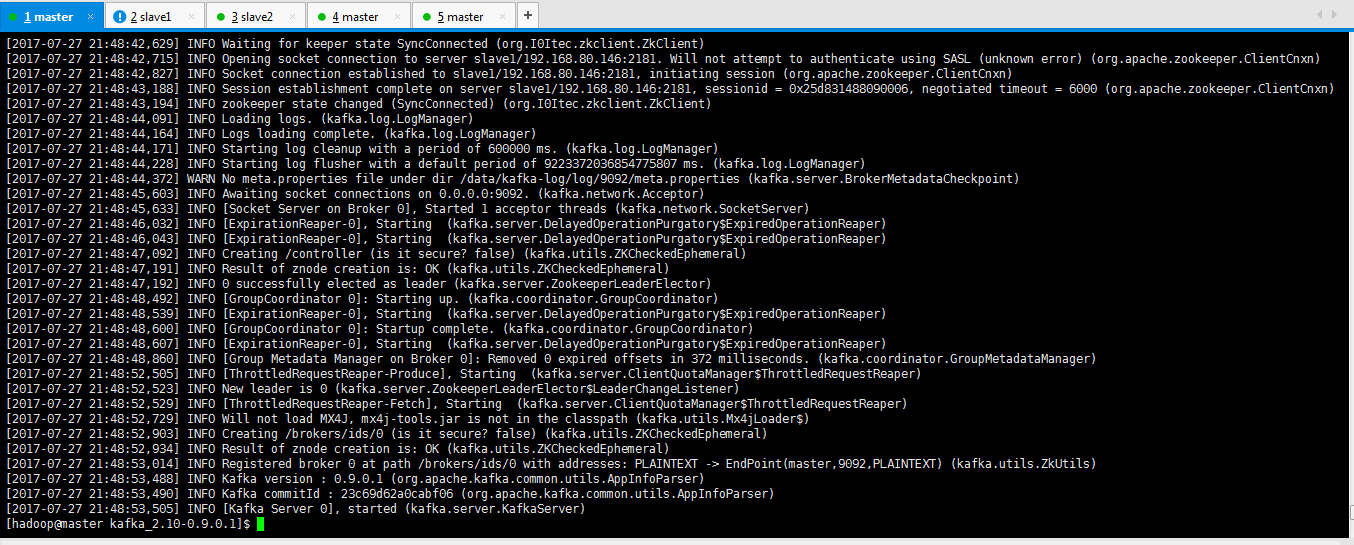

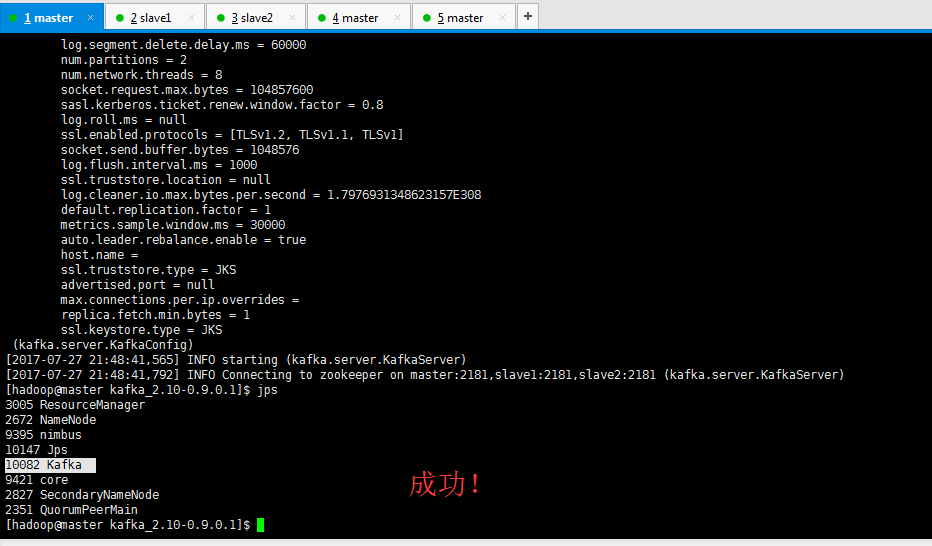

[2017-07-27 21:48:42,629] INFO Waiting for keeper state SyncConnected (org.I0Itec.zkclient.ZkClient)
[2017-07-27 21:48:42,715] INFO Opening socket connection to server slave1/192.168.80.146:2181. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)
[2017-07-27 21:48:42,827] INFO Socket connection established to slave1/192.168.80.146:2181, initiating session (org.apache.zookeeper.ClientCnxn)
[2017-07-27 21:48:43,188] INFO Session establishment complete on server slave1/192.168.80.146:2181, sessionid = 0x25d831488090006, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)
[2017-07-27 21:48:43,194] INFO zookeeper state changed (SyncConnected) (org.I0Itec.zkclient.ZkClient)
[2017-07-27 21:48:44,091] INFO Loading logs. (kafka.log.LogManager)
[2017-07-27 21:48:44,164] INFO Logs loading complete. (kafka.log.LogManager)
[2017-07-27 21:48:44,171] INFO Starting log cleanup with a period of 600000 ms. (kafka.log.LogManager)
[2017-07-27 21:48:44,228] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)
[2017-07-27 21:48:44,372] WARN No meta.properties file under dir /data/kafka-log/log/9092/meta.properties (kafka.server.BrokerMetadataCheckpoint)
[2017-07-27 21:48:45,603] INFO Awaiting socket connections on 0.0.0.0:9092. (kafka.network.Acceptor)
[2017-07-27 21:48:45,633] INFO [Socket Server on Broker 0], Started 1 acceptor threads (kafka.network.SocketServer)
[2017-07-27 21:48:46,032] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:48:46,043] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:48:47,092] INFO Creating /controller (is it secure? false) (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:48:47,191] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:48:47,192] INFO 0 successfully elected as leader (kafka.server.ZookeeperLeaderElector)
[2017-07-27 21:48:48,492] INFO [GroupCoordinator 0]: Starting up. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:48:48,539] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:48:48,600] INFO [GroupCoordinator 0]: Startup complete. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:48:48,607] INFO [ExpirationReaper-0], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:48:48,860] INFO [Group Metadata Manager on Broker 0]: Removed 0 expired offsets in 372 milliseconds. (kafka.coordinator.GroupMetadataManager)
[2017-07-27 21:48:52,505] INFO [ThrottledRequestReaper-Produce], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:48:52,523] INFO New leader is 0 (kafka.server.ZookeeperLeaderElector$LeaderChangeListener)
[2017-07-27 21:48:52,529] INFO [ThrottledRequestReaper-Fetch], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:48:52,729] INFO Will not load MX4J, mx4j-tools.jar is not in the classpath (kafka.utils.Mx4jLoader$)
[2017-07-27 21:48:52,903] INFO Creating /brokers/ids/0 (is it secure? false) (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:48:52,934] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:48:53,014] INFO Registered broker 0 at path /brokers/ids/0 with addresses: PLAINTEXT -> EndPoint(master,9092,PLAINTEXT) (kafka.utils.ZkUtils)
[2017-07-27 21:48:53,488] INFO Kafka version : 0.9.0.1 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:48:53,490] INFO Kafka commitId : 23c69d62a0cabf06 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:48:53,505] INFO [Kafka Server 0], started (kafka.server.KafkaServer)
[hadoop@master kafka_2.10-0.9.0.1]$

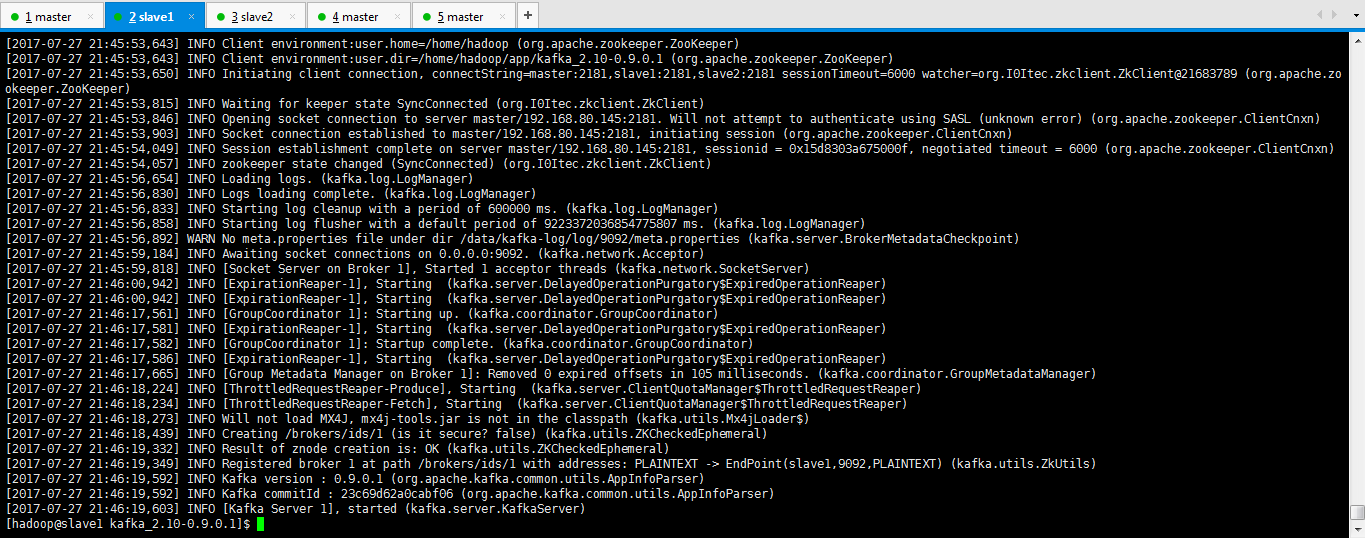

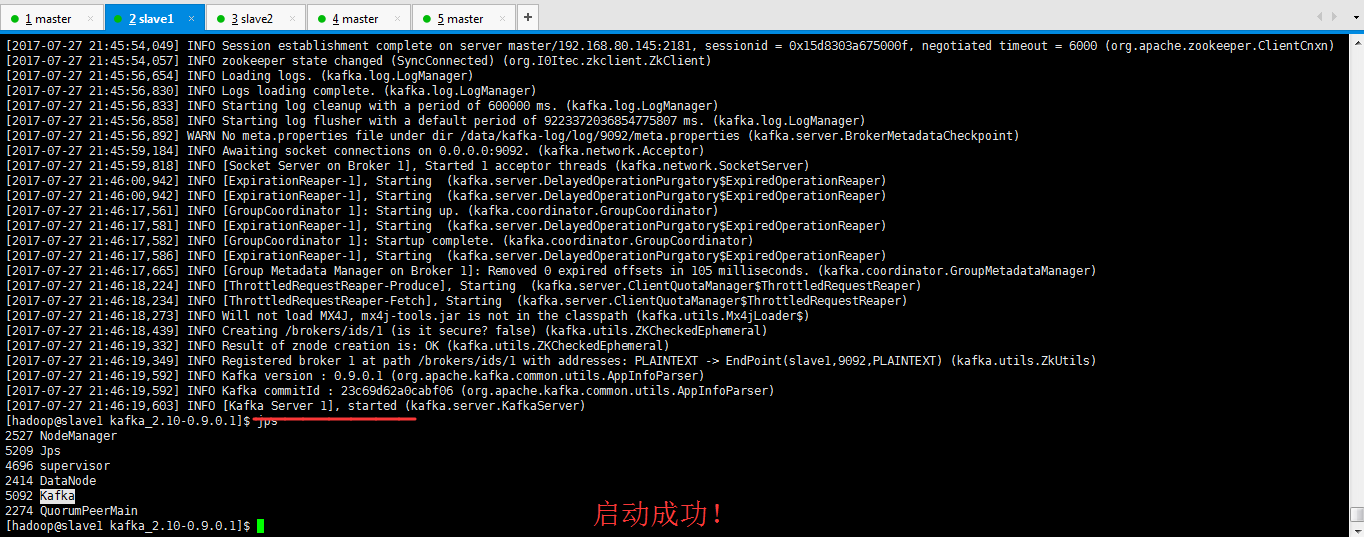

[2017-07-27 21:45:54,049] INFO Session establishment complete on server master/192.168.80.145:2181, sessionid = 0x15d8303a675000f, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)
[2017-07-27 21:45:54,057] INFO zookeeper state changed (SyncConnected) (org.I0Itec.zkclient.ZkClient)
[2017-07-27 21:45:56,654] INFO Loading logs. (kafka.log.LogManager)
[2017-07-27 21:45:56,830] INFO Logs loading complete. (kafka.log.LogManager)
[2017-07-27 21:45:56,833] INFO Starting log cleanup with a period of 600000 ms. (kafka.log.LogManager)
[2017-07-27 21:45:56,858] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)
[2017-07-27 21:45:56,892] WARN No meta.properties file under dir /data/kafka-log/log/9092/meta.properties (kafka.server.BrokerMetadataCheckpoint)
[2017-07-27 21:45:59,184] INFO Awaiting socket connections on 0.0.0.0:9092. (kafka.network.Acceptor)
[2017-07-27 21:45:59,818] INFO [Socket Server on Broker 1], Started 1 acceptor threads (kafka.network.SocketServer)
[2017-07-27 21:46:00,942] INFO [ExpirationReaper-1], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:46:00,942] INFO [ExpirationReaper-1], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:46:17,561] INFO [GroupCoordinator 1]: Starting up. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:46:17,581] INFO [ExpirationReaper-1], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:46:17,582] INFO [GroupCoordinator 1]: Startup complete. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:46:17,586] INFO [ExpirationReaper-1], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:46:17,665] INFO [Group Metadata Manager on Broker 1]: Removed 0 expired offsets in 105 milliseconds. (kafka.coordinator.GroupMetadataManager)
[2017-07-27 21:46:18,224] INFO [ThrottledRequestReaper-Produce], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:46:18,234] INFO [ThrottledRequestReaper-Fetch], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:46:18,273] INFO Will not load MX4J, mx4j-tools.jar is not in the classpath (kafka.utils.Mx4jLoader$)
[2017-07-27 21:46:18,439] INFO Creating /brokers/ids/1 (is it secure? false) (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:46:19,332] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:46:19,349] INFO Registered broker 1 at path /brokers/ids/1 with addresses: PLAINTEXT -> EndPoint(slave1,9092,PLAINTEXT) (kafka.utils.ZkUtils)
[2017-07-27 21:46:19,592] INFO Kafka version : 0.9.0.1 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:46:19,592] INFO Kafka commitId : 23c69d62a0cabf06 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:46:19,603] INFO [Kafka Server 1], started (kafka.server.KafkaServer)
[hadoop@slave1 kafka_2.10-0.9.0.1]$ jps
2527 NodeManager
5209 Jps
4696 supervisor
2414 DataNode
5092 Kafka
2274 QuorumPeerMain
[hadoop@slave1 kafka_2.10-0.9.0.1]$

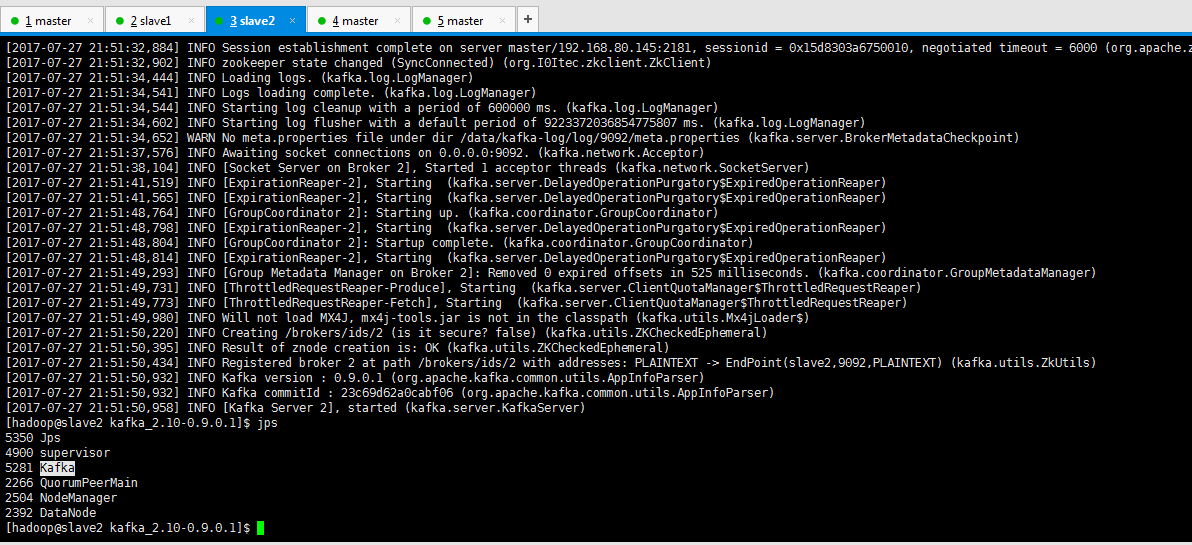

[2017-07-27 21:51:32,884] INFO Session establishment complete on server master/192.168.80.145:2181, sessionid = 0x15d8303a6750010, negotiated timeout = 6000 (org.apache.zookeeper.ClientCnxn)
[2017-07-27 21:51:32,902] INFO zookeeper state changed (SyncConnected) (org.I0Itec.zkclient.ZkClient)
[2017-07-27 21:51:34,444] INFO Loading logs. (kafka.log.LogManager)
[2017-07-27 21:51:34,541] INFO Logs loading complete. (kafka.log.LogManager)
[2017-07-27 21:51:34,544] INFO Starting log cleanup with a period of 600000 ms. (kafka.log.LogManager)
[2017-07-27 21:51:34,602] INFO Starting log flusher with a default period of 9223372036854775807 ms. (kafka.log.LogManager)
[2017-07-27 21:51:34,652] WARN No meta.properties file under dir /data/kafka-log/log/9092/meta.properties (kafka.server.BrokerMetadataCheckpoint)
[2017-07-27 21:51:37,576] INFO Awaiting socket connections on 0.0.0.0:9092. (kafka.network.Acceptor)
[2017-07-27 21:51:38,104] INFO [Socket Server on Broker 2], Started 1 acceptor threads (kafka.network.SocketServer)
[2017-07-27 21:51:41,519] INFO [ExpirationReaper-2], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:51:41,565] INFO [ExpirationReaper-2], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:51:48,764] INFO [GroupCoordinator 2]: Starting up. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:51:48,798] INFO [ExpirationReaper-2], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:51:48,804] INFO [GroupCoordinator 2]: Startup complete. (kafka.coordinator.GroupCoordinator)
[2017-07-27 21:51:48,814] INFO [ExpirationReaper-2], Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)
[2017-07-27 21:51:49,293] INFO [Group Metadata Manager on Broker 2]: Removed 0 expired offsets in 525 milliseconds. (kafka.coordinator.GroupMetadataManager)
[2017-07-27 21:51:49,731] INFO [ThrottledRequestReaper-Produce], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:51:49,773] INFO [ThrottledRequestReaper-Fetch], Starting (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2017-07-27 21:51:49,980] INFO Will not load MX4J, mx4j-tools.jar is not in the classpath (kafka.utils.Mx4jLoader$)
[2017-07-27 21:51:50,220] INFO Creating /brokers/ids/2 (is it secure? false) (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:51:50,395] INFO Result of znode creation is: OK (kafka.utils.ZKCheckedEphemeral)
[2017-07-27 21:51:50,434] INFO Registered broker 2 at path /brokers/ids/2 with addresses: PLAINTEXT -> EndPoint(slave2,9092,PLAINTEXT) (kafka.utils.ZkUtils)
[2017-07-27 21:51:50,932] INFO Kafka version : 0.9.0.1 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:51:50,932] INFO Kafka commitId : 23c69d62a0cabf06 (org.apache.kafka.common.utils.AppInfoParser)
[2017-07-27 21:51:50,958] INFO [Kafka Server 2], started (kafka.server.KafkaServer)
[hadoop@slave2 kafka_2.10-0.9.0.1]$ jps
5350 Jps
4900 supervisor
5281 Kafka
2266 QuorumPeerMain
2504 NodeManager
2392 DataNode
[hadoop@slave2 kafka_2.10-0.9.0.1]$
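Instead of reading each log by eye, the two signs of a healthy broker seen above (the "started" line in the log and a Kafka entry in the jps output) can be checked with a small script. This is a rough sketch; both function names are my own, and the patterns are taken from the 0.9.0.1 output shown here, so other versions may word the line differently.

```shell
#!/usr/bin/env bash
# Succeeds if the given log file contains the broker's "started" line,
# e.g. "INFO [Kafka Server 2], started (kafka.server.KafkaServer)".
# broker_started and kafka_in_jps are hypothetical helper names.
broker_started() {
  grep -Eq '\[Kafka Server [0-9]+\], started \(kafka\.server\.KafkaServer\)' "$1"
}

# Succeeds if jps-style output on stdin lists a process named Kafka,
# e.g. "5281 Kafka".
kafka_in_jps() {
  grep -Eq '^[0-9]+ Kafka$'
}
```

On each node, something like `broker_started nohup.out && jps | kafka_in_jps && echo "broker is up"` would cover both checks. Since nohup.out is appended to, the jps check is the one that reflects the current state.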

Success!