先说一个小知识,助于理解代码中各个层之间维度是怎么变换的。

卷积函数:一般只用来改变输入数据的维度,例如3维到16维。

Conv2d()

Conv2d(in_channels:int,out_channels:int,kernel_size:Union[int,tuple],stride=1,padding=o):

"""

:param in_channels: 输入的维度

:param out_channels: 通过卷积核之后,要输出的维度

:param kernel_size: 卷积核大小

:param stride: 移动步长

:param padding: 四周添多少个零

"""

一个小例子:

import torch

import torch.nn

# 定义一个16张照片,每个照片3个通道,大小是28*28

x= torch.randn(16,3,32,32)

# 改变照片的维度,从3维升到16维,卷积核大小是5

conv= torch.nn.Conv2d(3,16,kernel_size=5,stride=1,padding=0)

res=conv(x)

print(res.shape)

# torch.Size([16, 16, 28, 28])

# 维度升到16维,因为卷积核大小是5,步长是1,所以照片的大小缩小了,变成28

卷积神经网络实战之ResNet18:

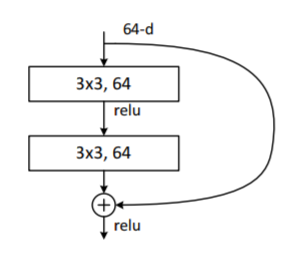

下面放一个ResNet18的一个示意图,

ResNet18主要是在层与层之间,加入了一个短接层,可以每隔k个层,进行一次短接。网络层的层数不是 越深就越好。

ResNet18就是,如果在原先的基础上再加上k层,如果有小优化,则保留,如果比原先结果还差,那就利用短接层,直接跳过。

ResNet18的构造如下:

ResNet18(

(conv1): Sequential(

(0): Conv2d(3, 64, kernel_size=(3, 3), stride=(3, 3))

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

(blk1): ResBlk(

(conv1): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))

(bn1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(128, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(bn2): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(extra): Sequential(

(0): Conv2d(64, 128, kernel_size=(1, 1), stride=(2, 2))

(1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(blk2): ResBlk(

(conv1): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))

(bn1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(256, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(bn2): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(extra): Sequential(

(0): Conv2d(128, 256, kernel_size=(1, 1), stride=(2, 2))

(1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(blk3): ResBlk(

(conv1): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(extra): Sequential(

(0): Conv2d(256, 512, kernel_size=(1, 1), stride=(2, 2))

(1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

)

)

(blk4): ResBlk(

(conv1): Conv2d(512, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))

(bn1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(conv2): Conv2d(512, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))

(bn2): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(extra): Sequential()

)

(outlayer): Linear(in_features=512, out_features=10, bias=True)

)

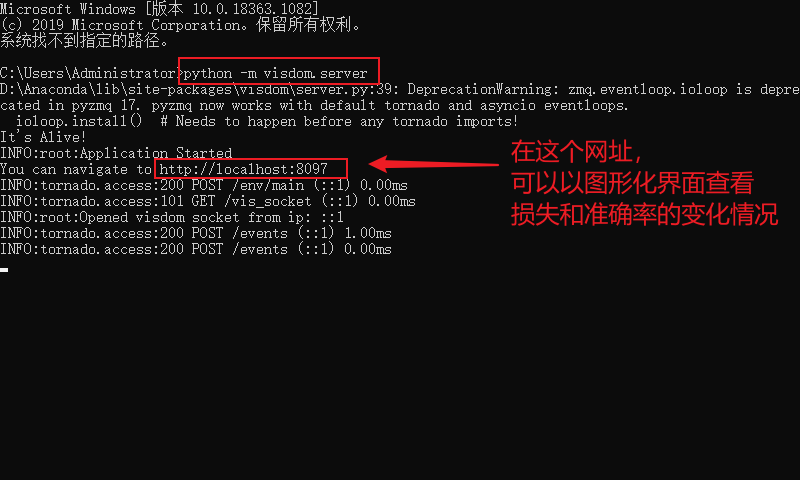

程序运行前,先启动visdom,如果没有配置好visdom环境的,先百度安装好visdom环境

- 1.使用快捷键win+r,在输入框输出cmd,然后在命令行窗口里输入

python -m visdom.server,启动visdom

代码实战

定义一个名为

resnet.py的文件,代码如下

import torch

from torch import nn

from torch.nn import functional as F

# 定义两个卷积层 + 一个短接层

class ResBlk(nn.Module):

def __init__(self,ch_in,ch_out,stride=1):

super(ResBlk, self).__init__()

# 两个卷积层

self.conv1=nn.Conv2d(ch_in,ch_out,kernel_size=3,stride=stride,padding=1)

self.bn1=nn.BatchNorm2d(ch_out)

self.conv2=nn.Conv2d(ch_out,ch_out,kernel_size=3,stride=1,padding=1)

self.bn2=nn.BatchNorm2d(ch_out)

# 短接层

self.extra=nn.Sequential()

if ch_out != ch_in:

self.extra=nn.Sequential(

nn.Conv2d(ch_in,ch_out,kernel_size=1,stride=stride),

nn.BatchNorm2d(ch_out)

)

def forward(self,x):

"""

:param x: [b,ch,h,w]

:return:

"""

out=F.relu(self.bn1(self.conv1(x)))

out=self.bn2(self.conv2(out))

# 短接层

# element-wise add: [b,ch_in,h,w]=>[b,ch_out,h,w]

out=self.extra(x)+out

return out

class ResNet18(nn.Module):

def __init__(self):

super(ResNet18, self).__init__()

# 定义预处理层

self.conv1=nn.Sequential(

nn.Conv2d(3,64,kernel_size=3,stride=3,padding=0),

nn.BatchNorm2d(64)

)

# 定义堆叠ResBlock层

# followed 4 blocks

# [b,64,h,w]-->[b,128,h,w]

self.blk1=ResBlk(64,128,stride=2)

# [b,128,h,w]-->[b,256,h,w]

self.blk2=ResBlk(128,256,stride=2)

# [b,256,h,w]-->[b,512,h,w]

self.blk3=ResBlk(256,512,stride=2)

# [b,512,h,w]-->[b,512,h,w]

self.blk4=ResBlk(512,512,stride=2)

# 定义全连接层

self.outlayer=nn.Linear(512,10)

def forward(self,x):

"""

:param x:

:return:

"""

# 1.预处理层

x=F.relu(self.conv1(x))

# 2. 堆叠ResBlock层:channel会慢慢的增加, 长和宽会慢慢的减少

# [b,64,h,w]-->[b,512,h,w]

x=self.blk1(x)

x=self.blk2(x)

x=self.blk3(x)

x=self.blk4(x)

# print("after conv:",x.shape) # [b,512,2,2]

# 不管原先什么后面两个维度是多少,都化成[1,1],

# [b,512,1,1]

x=F.adaptive_avg_pool2d(x,[1,1])

# print("after pool2d:",x.shape) # [b,512,1,1]

# 将[b,512,1,1]打平成[b,512*1*1]

x=x.view(x.size(0),-1)

# 3.放到全连接层,进行打平

# [b,512]-->[b,10]

x=self.outlayer(x)

return x

def main():

blk=ResBlk(64,128,stride=2)

temp=torch.randn(2,64,32,32)

out=blk(temp)

# print('block:',out.shape)

x=torch.randn(2,3,32,32)

model=ResNet()

out=model(x)

# print("resnet:",out.shape)

if __name__ == '__main__':

main()

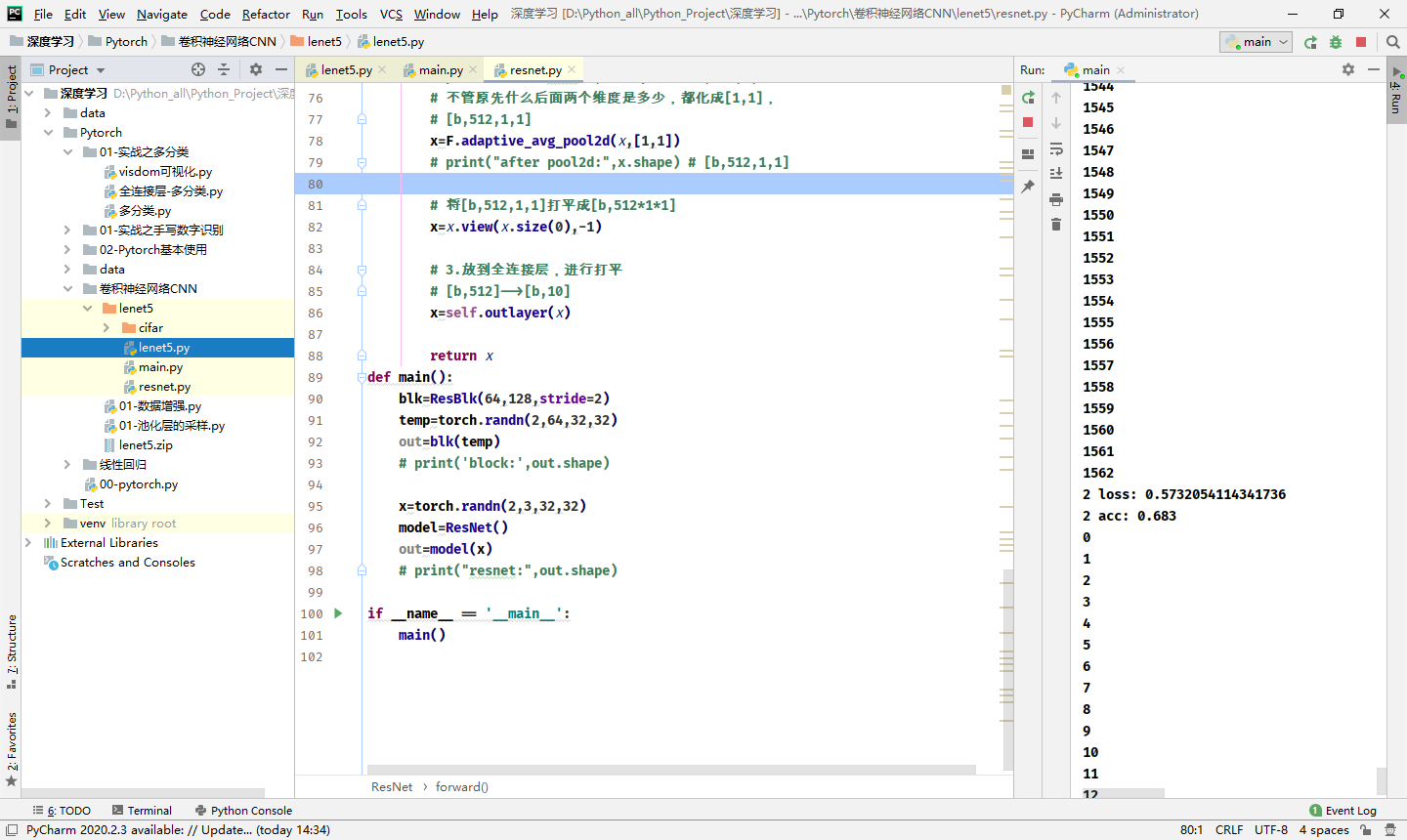

定义一个名为

main.py的文件,代码如下

import torch

from torchvision import datasets

from torchvision import transforms

from torch.utils.data import DataLoader

from torch import nn,optim

from visdom import Visdom

# from lenet5 import Lenet5

from resnet import ResNet18

import time

def main():

batch_siz=32

cifar_train = datasets.CIFAR10('cifar',True,transform=transforms.Compose([

transforms.Resize((32,32)),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225])

]),download=True)

cifar_train=DataLoader(cifar_train,batch_size=batch_siz,shuffle=True)

cifar_test = datasets.CIFAR10('cifar',False,transform=transforms.Compose([

transforms.Resize((32,32)),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225])

]),download=True)

cifar_test=DataLoader(cifar_test,batch_size=batch_siz,shuffle=True)

x,label = iter(cifar_train).next()

print('x:',x.shape,'label:',label.shape)

# 指定运行到cpu //GPU

device=torch.device('cpu')

# model = Lenet5().to(device)

model = ResNet18().to(device)

# 调用损失函数use Cross Entropy loss交叉熵

# 分类问题使用CrossEntropyLoss比MSELoss更合适

criteon = nn.CrossEntropyLoss().to(device)

# 定义一个优化器

optimizer=optim.Adam(model.parameters(),lr=1e-3)

print(model)

viz=Visdom()

viz.line([0.],[0.],win="loss",opts=dict(title='Lenet5 Loss'))

viz.line([0.],[0.],win="acc",opts=dict(title='Lenet5 Acc'))

# 训练train

for epoch in range(1000):

# 变成train模式

model.train()

# barchidx:下标,x:[b,3,32,32],label:[b]

str_time=time.time()

for barchidx,(x,label) in enumerate(cifar_train):

# 将x,label放在gpu上

x,label=x.to(device),label.to(device)

# logits:[b,10]

# label:[b]

logits = model(x)

loss = criteon(logits,label)

# viz.line([loss.item()],[barchidx],win='loss',update='append')

# backprop

optimizer.zero_grad()

loss.backward()

optimizer.step()

# print(barchidx)

end_time=time.time()

print('第 {} 次训练用时: {}'.format(epoch,(end_time-str_time)))

viz.line([loss.item()],[epoch],win='loss',update='append')

print(epoch,'loss:',loss.item())

# 变成测试模式

model.eval()

with torch.no_grad():

# 测试test

# 正确的数目

total_correct=0

total_num=0

for x,label in cifar_test:

# 将x,label放在gpu上

x,label=x.to(device),label.to(device)

# [b,10]

logits=model(x)

# [b]

pred=logits.argmax(dim=1)

# [b] = [b'] 统计相等个数

total_correct+=pred.eq(label).float().sum().item()

total_num+=x.size(0)

acc=total_correct/total_num

print(epoch,'acc:',acc)

print("------------------------------")

viz.line([acc],[epoch],win='acc',update='append')

# viz.images(x.view(-1, 3, 32, 32), win='x')

if __name__ == '__main__':

main()

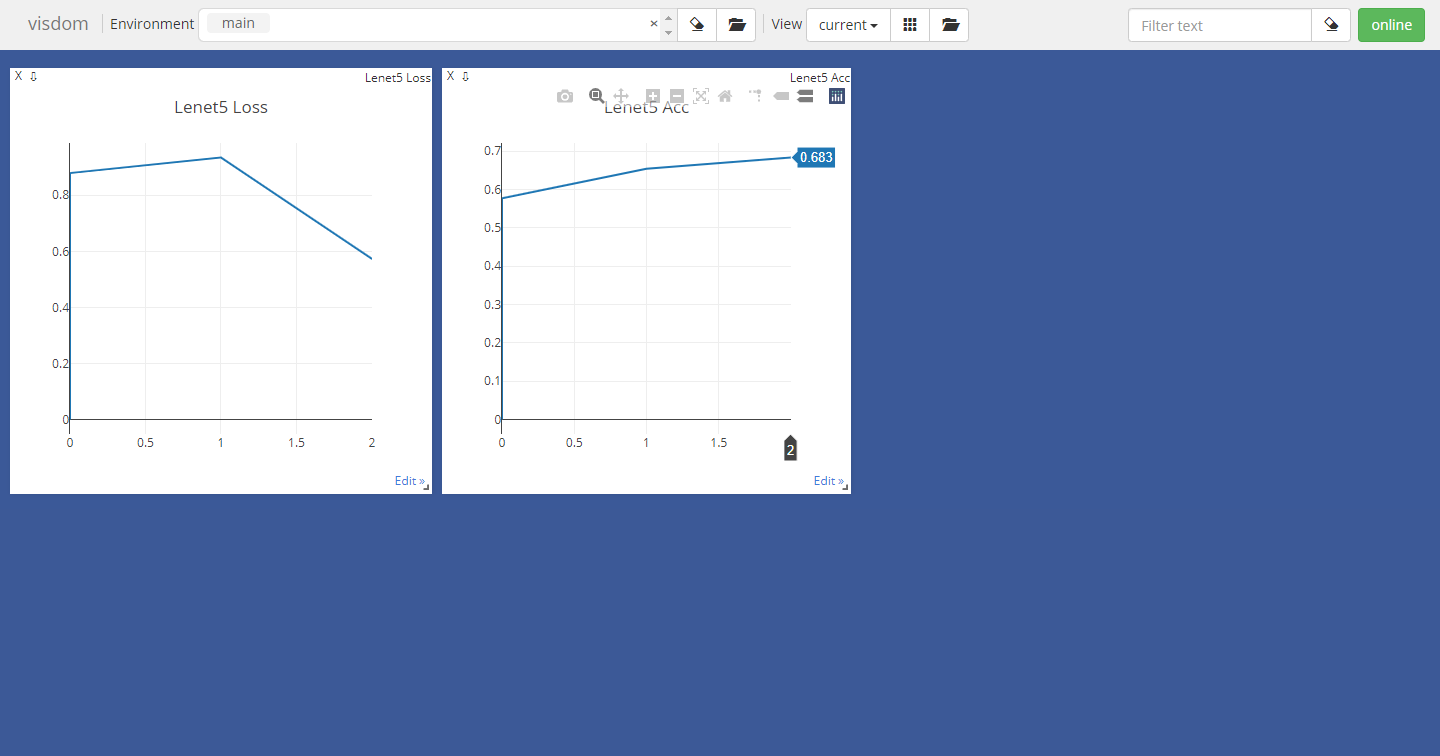

测试结果

ResNet跑起来太费劲了,需要用GPU跑,但是我的电脑不支持GPU,头都大了,用cpu跑二十多分钟学习一次,头都大了。