Motivation

If y only takes a finite set of discrete values such as {0,1}, then using Linear Regression to predict a (hat y>1/hat y<0) does not make sense at all. But fortunately we can fix Linear Regression to produce a value between [0,1].

Details

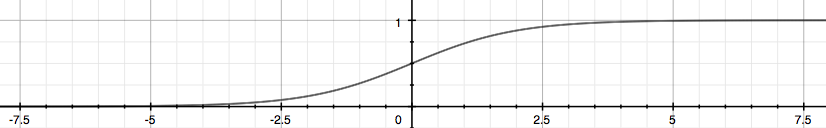

We choose sigmoid/logistic function to map the value:

We can assume that:

Or more compactly:

Now we will use maximum likelihood to fit parameters ( heta), assume n training examples are independent, then the likelihood of the parameters is:

To make life easier, we use the log likelihood:

Let's first take out one example ((x,y)) to derive the stochastic gradient ascent rule:

Then we can update the parameters:

Here we use maximum likelihood to get the update rule. Generally we would like to minimize the object function. So we can add a negative sign to the maximum likelihood's formula, it is called logistic loss. Thus there exists another way to understand it.

The loss on a single sample can be formulated as follows:

If y=1 and the prediction=1, then loss=0; else if y=1 and the prediction=0, then loss=(+infin) is a huge penalty for the totally wrong prediction. It is the same for y=0.

We can unify the two cases together and the loss for the whole training data is:

Here the reason why we don't use the MSE loss such as Linear Regression is that the (J( heta)) is non-convex and very hard to optimize for the global optimum.

To make life easier again, we can write the formula as the vectorized version:

Then our goal is to minimize (J( heta)) and get appropriate parameters ( heta) and use (h_ heta(x)=frac{1}{1+e^{- heta^Tx}}) to get our predictions.

Since it is a little complex to get answer analytically, so we still use Gradient Descent to minimize the loss numerically. The update rule is the same as the above one:

Here you should notice that all ( heta_j) should be updated simultaneously when you program. Again the vectorized version:

It is the same formula as the Linear Regression except that (h_ heta(x)) is different.

牛顿法

除了用梯度上升法去最大化(l( heta)),牛顿迭代法也能干这件事。

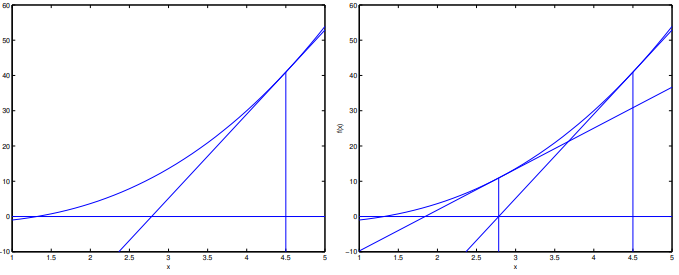

普通同学都是在求方程的零点(f( heta)=0)时接触到牛顿法,其更新规则为:

这个规则可以理解为:我们一直在用一个线性函数去近似(f),因此希望下一次迭代的( heta)就是该线性函数的零点:

再结合一点高中数学,(l( heta))极大值点处的一阶导数为0,因此只要令(l^{'}( heta)=0)就能解出对应的( heta):

由于逻辑回归中( heta)是向量而非scalar,因此需要稍稍改变下更新规则:

其中,Hessian阵中的元素为(H_{ij}=frac{partial^2l( heta)}{partial heta_ipartial heta_j})。

牛顿法通常比梯度上升收敛快得多,因为利用了(l( heta))的二阶信息,但是存储和求解(H^{-1})开销会比较大。