Illustration:

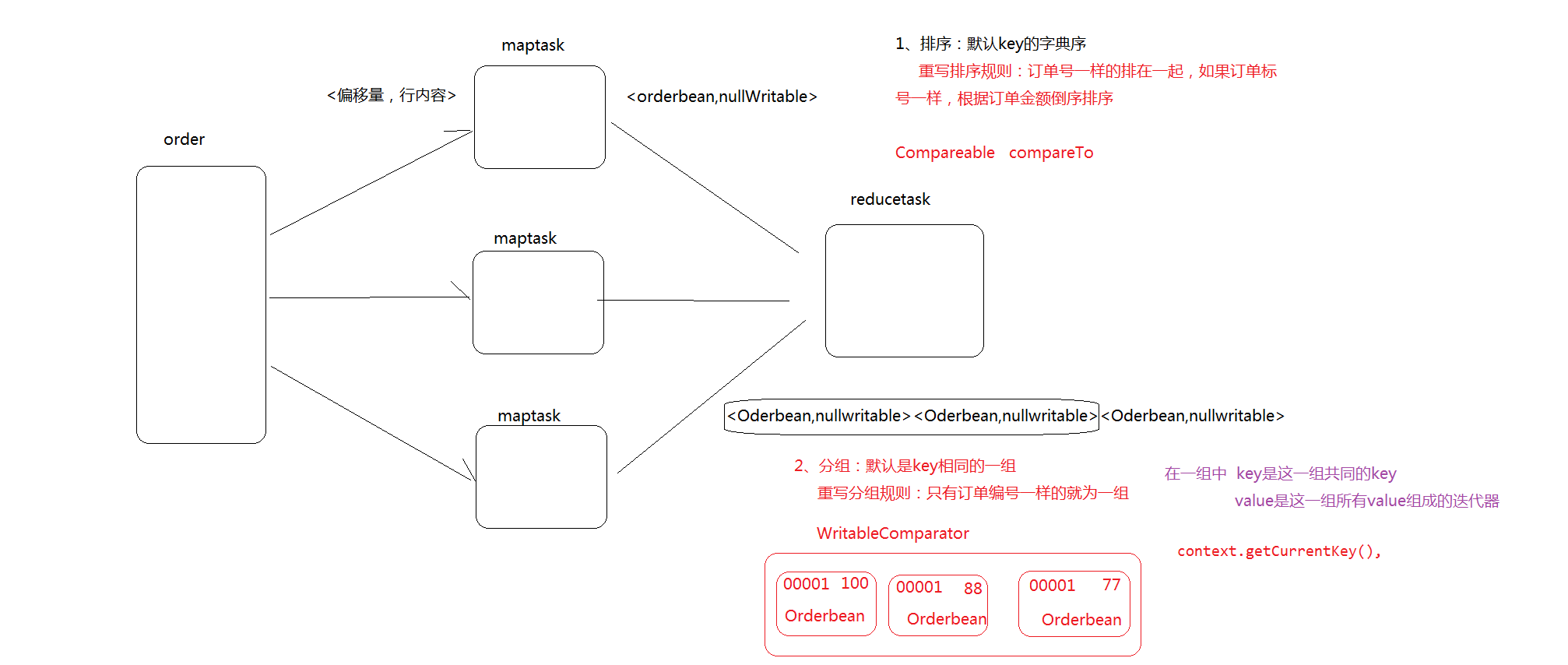

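Given the following order data:

The task is to find the most expensive item in each order.

(1) Use "order id + transaction amount" as a composite key. All order records read in the map phase are then partitioned by order id and, within each order id, sorted by amount in descending order before being sent to reduce.

(2) On the reduce side, use a GroupingComparator to gather the key-value pairs that share an order id into one group; the first record in each group is then the maximum.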
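The two steps can be checked without a cluster. The following plain-Java sketch (no Hadoop dependency) sorts records with the same rule as OrderBean (ascending order id, descending price), starts a new group whenever the order id changes, and keeps the first record of each group. The names SecondarySortSketch, Order and maxPerOrder, and the sample values, are hypothetical and only for illustration.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hadoop-free simulation of "sort by (id asc, price desc), group by id, take the first record".
class SecondarySortSketch {

    // Hypothetical record: one item of an order.
    static final class Order {
        final int orderId;
        final double price;

        Order(int orderId, double price) {
            this.orderId = orderId;
            this.price = price;
        }

        @Override
        public String toString() {
            return orderId + " " + price;
        }
    }

    // Ordering rule: ascending order id, then descending price.
    static final Comparator<Order> SORT = (a, b) -> {
        int byId = Integer.compare(a.orderId, b.orderId);
        return byId != 0 ? byId : Double.compare(b.price, a.price);
    };

    // Sorts a copy of the input, then keeps the first record of each run of equal order ids.
    static List<Order> maxPerOrder(List<Order> input) {
        List<Order> sorted = new ArrayList<>(input);
        sorted.sort(SORT);
        List<Order> result = new ArrayList<>();
        for (int i = 0; i < sorted.size(); i++) {
            // A new group starts whenever the order id differs from the previous record's.
            if (i == 0 || sorted.get(i).orderId != sorted.get(i - 1).orderId) {
                result.add(sorted.get(i));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Order> orders = List.of(
                new Order(1, 222.8), new Order(2, 722.4), new Order(1, 33.8),
                new Order(3, 232.8), new Order(3, 33.8), new Order(2, 522.8));
        // Prints one line per order: 1 222.8, 2 722.4, 3 232.8
        maxPerOrder(orders).forEach(System.out::println);
    }
}
```

In MapReduce the sort and the grouping are done by the framework during the shuffle; the sketch only makes the combined effect visible.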

Implementation:

Define the order bean OrderBean

```java
import org.apache.hadoop.io.WritableComparable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

public class OrderBean implements WritableComparable<OrderBean> {

    private int order_id; // order id
    private double price; // price

    // Hadoop needs a no-arg constructor to create key instances by reflection
    public OrderBean() {
        super();
    }

    public OrderBean(int order_id, double price) {
        super();
        this.order_id = order_id;
        this.price = price;
    }

    // Serialization: the field order here must match readFields
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeInt(order_id);
        out.writeDouble(price);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        order_id = in.readInt();
        price = in.readDouble();
    }

    @Override
    public String toString() {
        return order_id + " " + price;
    }

    public int getOrder_id() {
        return order_id;
    }

    public void setOrder_id(int order_id) {
        this.order_id = order_id;
    }

    public double getPrice() {
        return price;
    }

    public void setPrice(double price) {
        this.price = price;
    }

    // Sort rule: ascending by order id; for the same order id, descending by price
    @Override
    public int compareTo(OrderBean o) {
        int result;
        if (order_id > o.getOrder_id()) {
            result = 1;
        } else if (order_id < o.getOrder_id()) {
            result = -1;
        } else {
            // Descending by price (arguments swapped); Double.compare returns 0 for
            // equal prices, so compareTo stays consistent for identical keys
            result = Double.compare(o.getPrice(), price);
        }
        return result;
    }
}
```

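Two properties of OrderBean are easy to get wrong: readFields must read the fields in exactly the order write wrote them, and compareTo should return 0 for identical keys. The sketch below checks both with a Hadoop-free stand-in class, OrderKey (a hypothetical name), using only java.io streams. Its write and readFields mirror OrderBean's, and its compareTo implements the rule stated in the comment (ascending id, descending price) with Integer.compare and Double.compare.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical Hadoop-free stand-in for OrderBean: same fields, same serialization, same ordering rule.
class OrderKey implements Comparable<OrderKey> {
    int orderId;
    double price;

    OrderKey() {
    }

    OrderKey(int orderId, double price) {
        this.orderId = orderId;
        this.price = price;
    }

    void write(DataOutput out) throws IOException {
        out.writeInt(orderId);
        out.writeDouble(price);
    }

    // Must read the fields in exactly the order write() wrote them.
    void readFields(DataInput in) throws IOException {
        orderId = in.readInt();
        price = in.readDouble();
    }

    // Ascending order id, then descending price; returns 0 only for identical (id, price).
    @Override
    public int compareTo(OrderKey o) {
        int byId = Integer.compare(orderId, o.orderId);
        return byId != 0 ? byId : Double.compare(o.price, price);
    }

    // Serializes the key to bytes and deserializes it into a fresh instance.
    static OrderKey roundTrip(OrderKey k) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            k.write(new DataOutputStream(bytes));
            OrderKey copy = new OrderKey();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        OrderKey copy = roundTrip(new OrderKey(7, 99.5));
        System.out.println(copy.orderId + " " + copy.price);                        // 7 99.5
        System.out.println(new OrderKey(1, 50.0).compareTo(new OrderKey(1, 20.0))); // -1: higher price first
        System.out.println(new OrderKey(1, 20.0).compareTo(new OrderKey(1, 20.0))); // 0
    }
}
```

A comparator that never returns 0 (for example one that returns 1 whenever prices are equal) breaks the Comparable contract, because a.compareTo(b) and b.compareTo(a) would then both be positive.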

Write the OrderMapper

```java
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class OrderMapper extends Mapper<LongWritable, Text, OrderBean, NullWritable> {

    // Reused across map() calls; context.write serializes it immediately, so reuse is safe
    OrderBean k = new OrderBean();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 Read one line
        String line = value.toString();

        // 2 Split into fields (any run of spaces or tabs counts as one separator)
        String[] fields = line.trim().split("\\s+");

        // 3 Populate the key: field 0 is the order id, field 2 is the price
        k.setOrder_id(Integer.parseInt(fields[0]));
        k.setPrice(Double.parseDouble(fields[2]));

        // 4 Emit
        context.write(k, NullWritable.get());
    }
}
```

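The mapper's parsing step can be tried in isolation. This sketch assumes each line holds whitespace-separated fields with the order id first and the price third, as the mapper's indices imply; OrderLineParser and its Parsed holder are hypothetical names and the sample lines are invented. Splitting on "\\s+" after trim() accepts both spaces and tabs. Note that Integer.parseInt drops leading zeros, so an id such as 0000001 is written out as 1.

```java
import java.util.NoSuchElementException;

// Hadoop-free sketch of the mapper's parsing step.
// Assumed line layout: orderId, item, price, separated by spaces or tabs.
class OrderLineParser {

    // Hypothetical holder for the two fields the mapper uses.
    static final class Parsed {
        final int orderId;
        final double price;

        Parsed(int orderId, double price) {
            this.orderId = orderId;
            this.price = price;
        }
    }

    static Parsed parse(String line) {
        // trim() drops leading/trailing blanks; "\\s+" treats any run of spaces or tabs as one separator.
        String[] fields = line.trim().split("\\s+");
        if (fields.length < 3) {
            throw new NoSuchElementException("expected at least 3 fields: " + line);
        }
        int orderId = Integer.parseInt(fields[0]); // "0000001" -> 1: leading zeros are lost
        double price = Double.parseDouble(fields[2]);
        return new Parsed(orderId, price);
    }

    public static void main(String[] args) {
        Parsed p = parse("0000001\tPdt_01\t222.8");
        System.out.println(p.orderId + " " + p.price); // 1 222.8
    }
}
```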

Write the OrderPartitioner

```java
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Partitioner;

// Custom partition rule: records with the same order id go to the same partition
public class OrderPartitioner extends Partitioner<OrderBean, NullWritable> {

    @Override
    public int getPartition(OrderBean key, NullWritable value, int numReduceTasks) {
        // Partition on the order id only; the mask clears the sign bit so the result is never negative
        return (key.getOrder_id() & Integer.MAX_VALUE) % numReduceTasks;
    }
}
```

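The partition formula can be checked on its own. Masking with Integer.MAX_VALUE clears the sign bit, so even a negative id maps into [0, numReduceTasks), whereas a plain % on a negative int yields a negative remainder, which is not a valid partition number. Because only the order id enters the formula, every record of one order reaches the same reduce task regardless of its price. PartitionSketch and partitionFor are hypothetical names.

```java
// Hadoop-free check of the partition formula used by OrderPartitioner.
class PartitionSketch {

    // Clearing the sign bit keeps the result in [0, numReduceTasks) even for negative ids.
    static int partitionFor(int orderId, int numReduceTasks) {
        return (orderId & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        int numReduceTasks = 3;
        for (int id : new int[] {1, 2, 3, 4, -5}) {
            System.out.println(id + " -> partition " + partitionFor(id, numReduceTasks));
        }
        // Without the mask the remainder can be negative, which is not a valid partition.
        System.out.println(-5 % numReduceTasks); // -2
    }
}
```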

Write the OrderGroupingComparator

```java
import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.io.WritableComparator;

// Custom grouping rule: keys with the same order id fall into the same group
public class OrderGroupingComparator extends WritableComparator {

    protected OrderGroupingComparator() {
        // true: let the parent create OrderBean instances to deserialize keys into
        super(OrderBean.class, true);
    }

    @Override
    public int compare(WritableComparable a, WritableComparable b) {
        OrderBean aBean = (OrderBean) a;
        OrderBean bBean = (OrderBean) b;

        int result;
        if (aBean.getOrder_id() > bBean.getOrder_id()) {
            result = 1;
        } else if (aBean.getOrder_id() < bBean.getOrder_id()) {
            result = -1;
        } else {
            // Same order id: 0 means "same group"; the price is deliberately ignored
            result = 0;
        }
        return result;
    }
}
```

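On the reduce side the grouping comparator is applied to neighboring keys of the already sorted stream: a new group starts whenever the current key compares as non-zero to the previous one. Grouping by order id therefore works only because the sort order already places equal ids next to each other. The plain-Java sketch below imitates this with hypothetical names (GroupingSketch, Key, group); feeding it unsorted keys shows one order being split into separate groups.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Plain-Java imitation of reduce-side grouping over a stream of keys.
class GroupingSketch {

    static final class Key {
        final int orderId;
        final double price;

        Key(int orderId, double price) {
            this.orderId = orderId;
            this.price = price;
        }
    }

    // Grouping rule: keys with the same order id compare as 0 (same group); price is ignored.
    static final Comparator<Key> GROUPING = (a, b) -> Integer.compare(a.orderId, b.orderId);

    // Walks keys in arrival order and starts a new group whenever the grouping
    // comparator says the current key differs from the previous one.
    static List<List<Key>> group(List<Key> keysInArrivalOrder) {
        List<List<Key>> groups = new ArrayList<>();
        Key previous = null;
        for (Key k : keysInArrivalOrder) {
            if (previous == null || GROUPING.compare(previous, k) != 0) {
                groups.add(new ArrayList<>());
            }
            groups.get(groups.size() - 1).add(k);
            previous = k;
        }
        return groups;
    }

    public static void main(String[] args) {
        Key a = new Key(1, 222.8), b = new Key(1, 33.8), c = new Key(2, 722.4);
        System.out.println(group(List.of(a, b, c)).size()); // 2: sorted input, one group per order
        System.out.println(group(List.of(a, c, b)).size()); // 3: unsorted input splits order 1
    }
}
```

This is also why the grouping comparator must be "coarser" than the sort comparator: it may ignore fields the sort uses (here, price) but must not contradict the sort order.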

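Write the OrderReducer

```java
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class OrderReducer extends Reducer<OrderBean, NullWritable, OrderBean, NullWritable> {

    @Override
    protected void reduce(OrderBean key, Iterable<NullWritable> values, Context context)
            throws IOException, InterruptedException {
        // Each call receives one group (one order id) sorted by price descending,
        // so at this point the key is the most expensive item of the order.
        // Write it before iterating over values: Hadoop reuses the key object and
        // advancing the iterator overwrites it with the next record of the group,
        // so writing after a full loop would emit the cheapest item instead.
        context.write(key, NullWritable.get());
    }
}
```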

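In Hadoop's reduce phase the framework reuses the key object: as the values iterator advances, the next record of the group is deserialized into the same key instance. Since only the first key of each group is the maximum, the moment at which the key is written matters. The sketch below imitates that behavior in plain Java; MutableKey, valuesFor and keyBeforeAndAfterLoop are hypothetical names, not Hadoop APIs.

```java
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

// Plain-Java imitation (not Hadoop code) of reduce-side key reuse.
class KeyReuseSketch {

    // One shared, mutable key, like the single key instance handed to reduce().
    static final class MutableKey {
        int orderId;
        double price;

        @Override
        public String toString() {
            return orderId + " " + price;
        }
    }

    // Values of one group; advancing the iterator copies the next record into the shared key.
    static Iterable<Object> valuesFor(MutableKey key, int orderId, List<Double> pricesDesc) {
        return () -> new Iterator<Object>() {
            private int next = 0;

            @Override
            public boolean hasNext() {
                return next < pricesDesc.size();
            }

            @Override
            public Object next() {
                if (!hasNext()) {
                    throw new NoSuchElementException();
                }
                key.orderId = orderId;
                key.price = pricesDesc.get(next++);
                return null; // stands in for NullWritable
            }
        };
    }

    // Returns the key's text when reduce() starts and again after iterating all values.
    static String[] keyBeforeAndAfterLoop(int orderId, List<Double> pricesDesc) {
        MutableKey key = new MutableKey();
        key.orderId = orderId;
        key.price = pricesDesc.get(0); // positioned on the group's first (most expensive) record
        String before = key.toString();
        for (Object ignored : valuesFor(key, orderId, pricesDesc)) {
            // nothing to do: iterating alone advances the shared key
        }
        String after = key.toString();
        return new String[] {before, after};
    }

    public static void main(String[] args) {
        String[] seen = keyBeforeAndAfterLoop(1, List.of(222.8, 33.8));
        System.out.println("key before loop: " + seen[0]); // 1 222.8
        System.out.println("key after loop:  " + seen[1]); // 1 33.8
    }
}
```

In a real reducer this means the key should be written before iterating over values, or the loop skipped entirely when the values carry no data.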

Write the OrderDriver

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class OrderDriver {

    public static void main(String[] args) throws Exception {
        // 1 Get the configuration and create the job
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 2 Locate the jar through the driver class
        job.setJarByClass(OrderDriver.class);

        // 3 Set the mapper and reducer classes
        job.setMapperClass(OrderMapper.class);
        job.setReducerClass(OrderReducer.class);

        // 4 Map output key/value types
        job.setMapOutputKeyClass(OrderBean.class);
        job.setMapOutputValueClass(NullWritable.class);

        // 5 Final output key/value types
        job.setOutputKeyClass(OrderBean.class);
        job.setOutputValueClass(NullWritable.class);

        // 6 Input and output paths (backslashes must be escaped in Java string literals;
        //   the output directory must not exist yet)
        FileInputFormat.setInputPaths(job, new Path("D:\\TiePiHeTao\\input"));
        FileOutputFormat.setOutputPath(job, new Path("D:\\TiePiHeTao\\output"));

        // 7 Reduce-side grouping
        job.setGroupingComparatorClass(OrderGroupingComparator.class);

        // 8 Partitioner
        job.setPartitionerClass(OrderPartitioner.class);

        // 9 Number of reduce tasks
        job.setNumReduceTasks(3);

        // 10 Submit and wait for completion
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```


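Summary:

- Default rule: records with the same key form one group.

- Custom grouping:

  - Extend WritableComparator and override compare. MapReduce decides whether two keys are equal from its return value: whenever it returns 0, the keys are treated as equal and go into the same group.

- Enabling custom grouping:

  - job.setGroupingComparatorClass(OrderGroupingComparator.class);

- Custom sorting vs. custom grouping:

  - Custom sorting: positive means greater, negative means less, zero means equal.

  - Custom grouping: zero means same group, non-zero means different groups.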