我们知道,有的网页必须要登录才能访问其内容。scrapy登录的实现一般就三种方式。

1.在第一次请求中直接携带用户名和密码。

2.必须要访问一次目标地址,服务器返回一些参数,例如验证码,一些特定的加密字符串等,自己通过相应手段分析与提取,第二次请求时带上这些参数即可。可以参考https://www.cnblogs.com/bertwu/p/13210539.html

3.不必花里胡哨,直接手动登录成功,然后提取出cookie,加入到访问头中即可。

本文以第三种为例,实现scrapy携带cookie访问购物车。

1.先手动登录自己的淘宝账号,从中提取出cookie,如下图中所示。

2.cmd中workon自己的虚拟环境,创建项目 (scrapy startproject taobao)

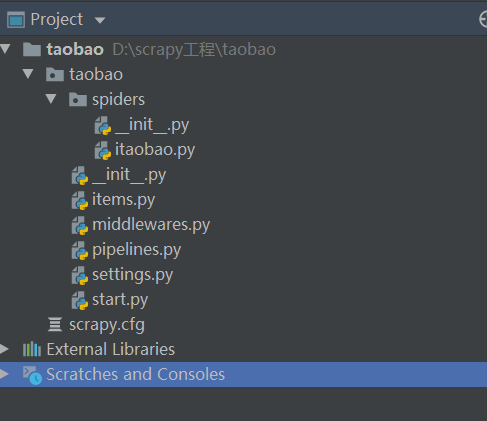

3.pycharm打开项目目录 ,在terminal中输入(scrapy genspider itaobao taobao.com),得到如下的目录结构

4.setting中设置相应配置

5. 在itaobao中写业务代码。我们先不加人cookie直接访问购物车,代码如下:

import scrapy class ItaobaoSpider(scrapy.Spider): name = 'itaobao' allowed_domains = ['taobao.com'] start_urls = [ 'https://cart.taobao.com/cart.htm?spm=a1z02.1.a2109.d1000367.OOeipq&nekot=1470211439694'] # 第一次就直接访问购物车 def parse(self, response): print(response.text)

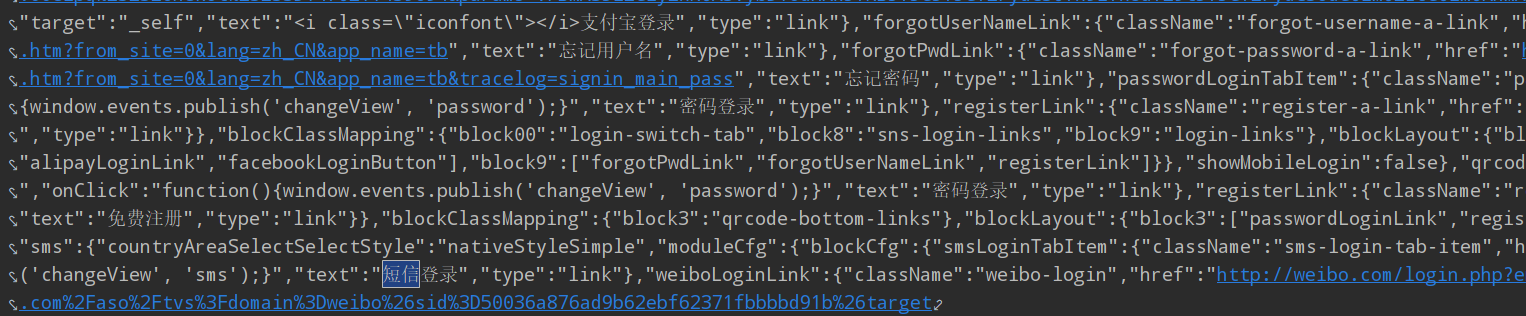

响应回来信息如下

明显是跳转到登录页面的意思。

6.言归正传,正确的代码如下,需要重写

1 import scrapy 2 3 4 class ItaobaoSpider(scrapy.Spider): 5 name = 'itaobao' 6 allowed_domains = ['taobao.com'] 7 8 # start_urls = ['https://cart.taobao.com/cart.htm?spm=a1z02.1.a2109.d1000367.OOeipq&nekot=1470211439694'] 9 # 需要重写start_requests方法 10 def start_requests(self): 11 url = "https://cart.taobao.com/cart.htm?spm=a1z02.1.a2109.d1000367.OOeipq&nekot=1470211439694" 12 # 此处的cookie为手动登录后从浏览器粘贴下来的值 13 cookie = "thw=cn; cookie2=16b0fe13709f2a71dc06ab1f15dcc97b; _tb_token_=fe3431e5fe755;" 14 " _samesite_flag_=true; ubn=p; ucn=center; t=538b39347231f03177d588275aba0e2f;" 15 " tk_trace=oTRxOWSBNwn9dPyorMJE%2FoPdY8zfvmw%2Fq5hoqmmiKd74AJ%2Bt%2FNCZ%" 16 "2FSIX9GYWSRq4bvicaWHhDMtcR6rWsf0P6XW5ZT%2FgUec9VF0Ei7JzUpsghuwA4cBMNO9EHkGK53r%" 17 "2Bb%2BiCEx98Frg5tzE52811c%2BnDmTNlzc2ZBkbOpdYbzZUDLaBYyN9rEdp9BVnFGP1qVAAtbsnj35zfBVfe09E%" 18 "2BvRfUU823q7j4IVyan1lagxILINo%2F%2FZK6omHvvHqA4cu2IaVAhy5MzzodyJhmXmOpBiz9Pg%3D%3D; " 19 "cna=5c3zFvLEEkkCAW8SYSQ2GkGo; sgcookie=E3EkJ6LRpL%2FFRZIBoXfnf; unb=578051633; " 20 "uc3=id2=Vvl%2F7ZJ%2BJYNu&nk2=r7kpR6Vbl9KdZe14&lg2=URm48syIIVrSKA%3D%3D&vt3=F8dBxGJsy36E3EwQ%2BuQ%3D;" 21 " csg=c99a3c3d; lgc=%5Cu5929%5Cu4ED9%5Cu8349%5Cu5929%5Cu4ED9%5Cu8349; cookie17=Vvl%2F7ZJ%2BJYNu;" 22 " dnk=%5Cu5929%5Cu4ED9%5Cu8349%5Cu5929%5Cu4ED9%5Cu8349; skt=4257a8fa00b349a7; existShop=MTU5MzQ0MDI0MQ%3D%3D;" 23 " uc4=nk4=0%40rVtT67i5o9%2Bt%2BQFc65xFQrUP0rGVA%2Fs%3D&id4=0%40VH93OXG6vzHVZgTpjCrALOFhU4I%3D;" 24 " tracknick=%5Cu5929%5Cu4ED9%5Cu8349%5Cu5929%5Cu4ED9%5Cu8349; _cc_=W5iHLLyFfA%3D%3D; " 25 "_l_g_=Ug%3D%3D; sg=%E8%8D%893d; _nk_=%5Cu5929%5Cu4ED9%5Cu8349%5Cu5929%5Cu4ED9%5Cu8349;" 26 " cookie1=VAmiexC8JqC30wy9Q29G2%2FMPHkz4fpVNRQwNz77cpe8%3D; tfstk=cddPBI0-Kbhyfq5IB_1FRmwX4zaRClfA" 27 "_qSREdGTI7eLP5PGXU5c-kQm2zd2HGhcE; mt=ci=8_1; v=0; uc1=cookie21=VFC%2FuZ9ainBZ&cookie15=VFC%2FuZ9ayeYq2g%3D%3D&cookie" 28 "16=WqG3DMC9UpAPBHGz5QBErFxlCA%3D%3D&existShop=false&pas=0&cookie14=UoTV75eLMpKbpQ%3D%3D&cart_m=0;" 29 " _m_h5_tk=cbe3780ec220a82fe10e066b8184d23f_1593451560729; _m_h5_tk_enc=c332ce89f09d49c68e13db9d906c8fa3; " 30 "l=eBxAcQbPQHureJEzBO5aourza7796IRb8sPzaNbMiInca6MC1hQ0PNQD5j-MRdtjgtChRe-PWBuvjdeBWN4dbNRMPhXJ_n0xnxvO.; " 31 "isg=BJ2drKVLn8Ww-Ht9N195VKUWrHmXutEMHpgqKF9iKfRAFrxIJAhD3DbMRAoQ1unE" 32 cookies = {} 33 # 提取键值对 请求头中携带cookie必须是一个字典,所以要把原生的cookie字符串转换成cookie字典 34 for cookie in cookie.split(';'): 35 key, value = cookie.split("=", 1) 36 cookies[key] = value 37 yield scrapy.Request(url=url, cookies=cookies, callback=self.parse) 38 39 def parse(self, response): 40 print(response.text)

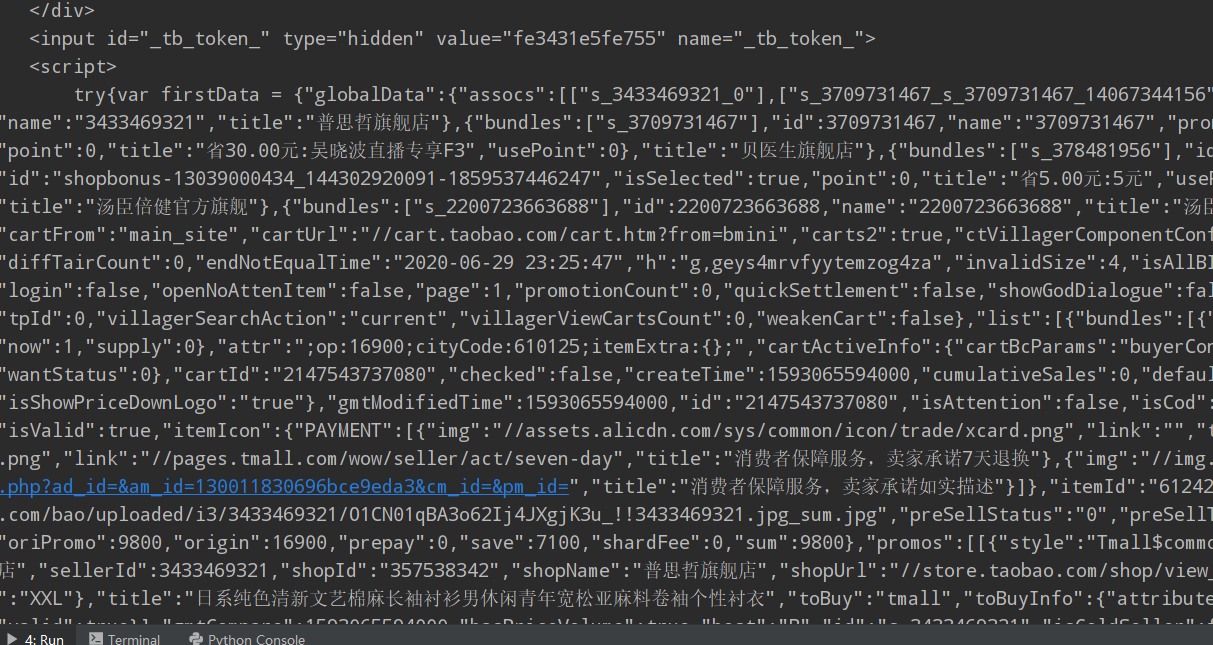

响应信息如下(部分片段):

很明显这是自己购物车的真实源代码。

好了,大功告成啦,接下来就可以按照业务需求用xpath(自己喜欢用这种方式)提取自己想要的信息了。