    import random
    import re

    from numpy import array, log, ones


    # Build the vocabulary
    def createVocabList(dataSet):
        vocabSet = set()  # start with an empty set
        for document in dataSet:
            vocabSet = vocabSet | set(document)  # union of the two sets
        return list(vocabSet)


    def trainNB0(trainMatrix, trainCategory):
        numTrainDocs = len(trainMatrix)  # number of rows in the training matrix
        numWords = len(trainMatrix[0])  # vocabulary size, i.e. number of columns
        pAbusive = sum(trainCategory) / float(numTrainDocs)  # prior P(class 1)
        # Counts start at ones() instead of zeros() so that no word ends up
        # with probability zero
        p0Num = ones(numWords)
        p1Num = ones(numWords)
        # Denominators start at 2.0 to go with the ones() initialization
        p0Denom = 2.0
        p1Denom = 2.0
        for i in range(numTrainDocs):
            if trainCategory[i] == 1:
                p1Num += trainMatrix[i]
                p1Denom += sum(trainMatrix[i])
            else:
                p0Num += trainMatrix[i]
                p0Denom += sum(trainMatrix[i])
        # Take log() so that classification can add instead of multiply
        p1Vect = log(p1Num / p1Denom)
        p0Vect = log(p0Num / p0Denom)
        # Return the per-word log conditional probabilities for each class
        # (estimated from smoothed frequencies) and the prior pAbusive
        return p0Vect, p1Vect, pAbusive


    def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):
        # p1Vec already holds element-wise logs, so by
        # log(a*b) = log(a) + log(b) the product of probabilities becomes a sum.
        # pClass1 is the prior. p1 and p0 are log posteriors up to a shared
        # constant; the class with the larger value wins (maximum a posteriori).
        p1 = sum(vec2Classify * p1Vec) + log(pClass1)
        p0 = sum(vec2Classify * p0Vec) + log(1.0 - pClass1)
        if p1 > p0:
            return 1
        else:
            return 0


    # Bag-of-words model: a word that occurs n times is counted n times
    def bagOfWords2VecMN(vocabList, inputSet):
        returnVec = [0] * len(vocabList)  # initialize the count vector
        for word in inputSet:
            if word in vocabList:
                returnVec[vocabList.index(word)] += 1
        return returnVec


    def textParse(bigString):
        # Input is one big string, output is a list of lowercase tokens.
        # r'\W+' splits on runs of non-word characters.
        listOfTokens = re.split(r'\W+', bigString)
        return [tok.lower() for tok in listOfTokens if len(tok) > 2]


    def spamTest():
        """
        Vectorize the texts: build the document list and the label list.
        Note: one file has an encoding problem and is skipped, so the
        data ends up with 49 documents rather than 50.
        """
        docList = []
        classList = []
        fullText = []
        spam_name_lists = ["email/spam/{}.txt".format(i) for i in range(1, 26)]
        ham_name_lists = ["email/ham/{}.txt".format(i) for i in range(1, 26)]
        for spam_name_list in spam_name_lists:
            with open(spam_name_list) as f:
                try:
                    a = textParse(f.read())
                    docList.append(a)
                    classList.append(1)  # spam is class 1
                    fullText.extend(a)
                except UnicodeDecodeError as e:
                    print(e)  # skip files that cannot be decoded
        for ham_name_list in ham_name_lists:
            with open(ham_name_list) as f:
                try:
                    a = textParse(f.read())
                    docList.append(a)
                    classList.append(0)  # ham is class 0
                    fullText.extend(a)
                except UnicodeDecodeError as e:
                    print(e)  # skip files that cannot be decoded
        vocabList = createVocabList(docList)  # build the vocabulary
        # Randomly split into test and training sets (hold-out validation)
        trainingSet = list(range(len(docList)))  # 49 when one file is skipped
        testSet = []
        for i in range(10):  # test set of 10, training set of 49 - 10 = 39
            randIndex = random.randrange(len(trainingSet))
            testSet.append(trainingSet[randIndex])
            trainingSet.pop(randIndex)
        trainMat = []
        trainClasses = []
        for docIndex in trainingSet:
            trainMat.append(bagOfWords2VecMN(vocabList, docList[docIndex]))
            trainClasses.append(classList[docIndex])
        p0V, p1V, pSpam = trainNB0(array(trainMat), array(trainClasses))
        errorCount = 0
        for docIndex in testSet:  # classify the held-out items
            wordVector = bagOfWords2VecMN(vocabList, docList[docIndex])
            if classifyNB(array(wordVector), p0V, p1V, pSpam) != classList[docIndex]:
                errorCount += 1
                print("classification error", docList[docIndex])
        print('the error rate is: ', float(errorCount) / len(testSet))
        # return vocabList, fullText


    if __name__ == '__main__':
        spamTest()
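As a reading aid, the training and decision steps in the code above can be written out as formulas. The notation is my own, not the book's: $x_j$ is the count of vocabulary word $j$ in the email being classified, $N_{c,j}$ is the total count of word $j$ over the training emails of class $c$, and $N_c$ is the total number of word occurrences in class $c$.

$$
\hat{p}(w_j \mid c) = \frac{1 + N_{c,j}}{2 + N_c},
\qquad
s_c(x) = \log P(c) + \sum_{j} x_j \log \hat{p}(w_j \mid c),
\qquad c \in \{0, 1\}
$$

Here $P(1)$ is the value returned as pAbusive and $P(0) = 1 - P(1)$. The classifier returns 1 when $s_1(x) > s_0(x)$ and 0 otherwise. Summing logs instead of multiplying many small probabilities avoids floating-point underflow.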

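The data consists of 25 plain-text files in each of two folders, and every file holds the body of one email. The 25 emails in the spam folder are spam (labeled 1 in the code); the 25 in the ham folder are legitimate mail (labeled 0).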
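The code in this post skips any file that fails to decode, which is why it ends up with 49 documents. A possible alternative, sketched here, is to read each file leniently and drop the undecodable bytes so that all 50 emails are kept. The helper name `read_text_lenient` and the choice of UTF-8 are assumptions for illustration; the actual encoding of the files is not something I have verified.

```python
def read_text_lenient(path, encoding="utf-8"):
    """Read a text file, silently dropping bytes that cannot be decoded."""
    # errors="ignore" tells the decoder to skip invalid byte sequences
    # instead of raising UnicodeDecodeError.
    with open(path, encoding=encoding, errors="ignore") as f:
        return f.read()
```

With this helper, `textParse(read_text_lenient("email/ham/1.txt"))` would replace the `open(...)` / `f.read()` pair. The trade-off is that a few characters may be lost from a badly encoded file, which is usually harmless for word counts.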

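A naive Bayes classifier is trained on this data and evaluated with hold-out validation: 10 randomly chosen emails are set aside for testing and the rest are used for training. In my runs the classifier identified spam well.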
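Because only 10 emails are held out, the error rate from a single random split moves in steps of 10 percentage points and can differ from run to run. One common remedy is to repeat the split several times and average the error rates. The sketch below shows only the splitting and averaging logic; `holdout_split`, `average_error`, and the `evaluate` callback are hypothetical names, and `evaluate` stands in for training and testing the classifier on one split.

```python
import random


def holdout_split(n_docs, n_test, rng=random):
    """Return (train_indices, test_indices) for one random hold-out split."""
    indices = list(range(n_docs))
    rng.shuffle(indices)
    return indices[n_test:], indices[:n_test]


def average_error(n_docs, n_test, n_rounds, evaluate, rng=random):
    """Average the error rate returned by evaluate(train, test) over n_rounds splits."""
    total = 0.0
    for _ in range(n_rounds):
        train, test = holdout_split(n_docs, n_test, rng)
        total += evaluate(train, test)
    return total / n_rounds
```

Passing a seeded `random.Random(0)` as `rng` makes the splits reproducible, which helps when comparing changes to the classifier.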

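The code is based mainly on Machine Learning in Action, but some of the book's code no longer runs and some of it has bugs, so I made a few changes. I hope it is a useful reference for anyone who comes across this post. I won't go over the ideas behind naive Bayes in detail here; there is plenty of material online.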

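The overall flow is as follows:

(1) Text processing (read the files and tokenize them) ->

(2) Build the vocabulary from the tokenized text (function createVocabList, which takes the tokenized documents), then vectorize (function bagOfWords2VecMN, which takes the vocabulary and the document to convert) ->

(3) Split the data into a training set and a test set ->

(4) Train the classifier ->

(5) Test the classifier -> end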
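To make steps (1) and (2) concrete, here is a small self-contained sketch on two made-up sentences instead of the email files. It is not the code from this post: the names `tokenize`, `build_vocab`, and `bag_of_words` are illustrative, the vocabulary is sorted so the output is reproducible, and a dictionary lookup replaces `vocabList.index(word)`.

```python
import re


def tokenize(text):
    """Split on runs of non-word characters; keep lowercase tokens longer than 2."""
    return [tok.lower() for tok in re.split(r"\W+", text) if len(tok) > 2]


def build_vocab(docs):
    """Return a sorted list of the distinct tokens across all documents."""
    return sorted(set(tok for doc in docs for tok in doc))


def bag_of_words(vocab, doc):
    """Count how many times each vocabulary word occurs in doc."""
    index = {word: i for i, word in enumerate(vocab)}  # word -> column
    vec = [0] * len(vocab)
    for tok in doc:
        if tok in index:
            vec[index[tok]] += 1
    return vec


docs = [tokenize("Buy cheap pills, cheap!"), tokenize("Meeting notes for Monday")]
vocab = build_vocab(docs)
vectors = [bag_of_words(vocab, doc) for doc in docs]
print(vocab)    # ['buy', 'cheap', 'for', 'meeting', 'monday', 'notes', 'pills']
print(vectors)  # [[1, 2, 0, 0, 0, 0, 1], [0, 0, 1, 1, 1, 1, 0]]
```

The 2 in the first vector shows the bag-of-words behavior: "cheap" occurs twice and is counted twice. The dictionary makes each lookup constant time, whereas `list.index` scans the vocabulary for every token.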

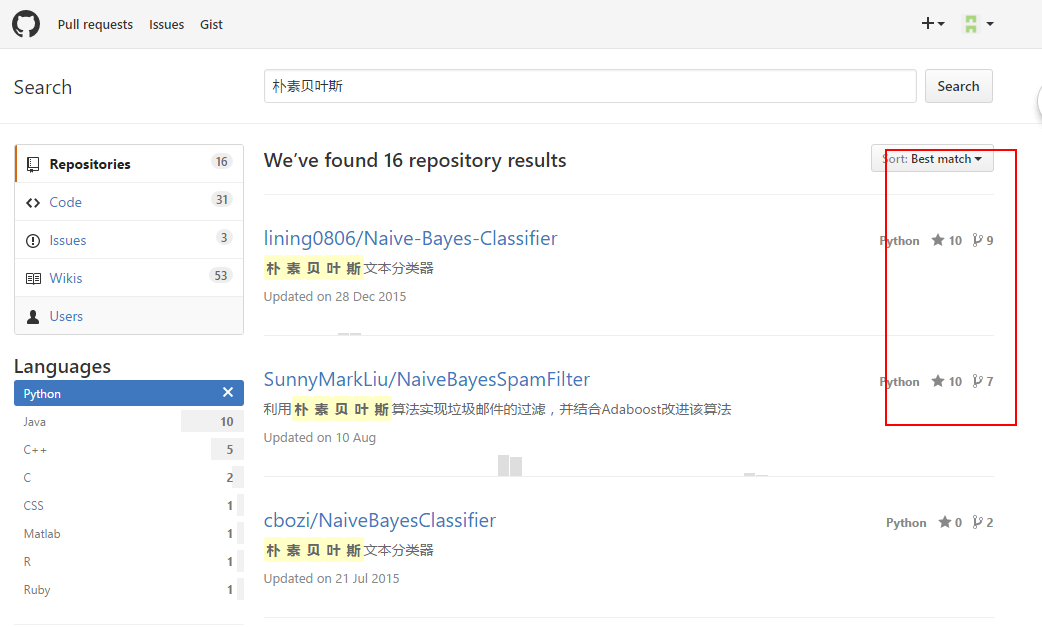

For more material on naive Bayes, I suggest looking at these projects on GitHub:

https://github.com/search?l=Python&q=%E6%9C%B4%E7%B4%A0%E8%B4%9D%E5%8F%B6%E6%96%AF&type=Repositories&utf8=%E2%9C%93