I. Overview

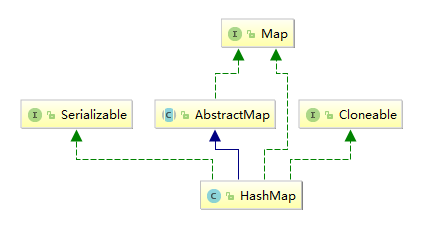

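HashMap is a hash-table-based implementation of the Map interface. It stores key-value mappings <key, value> and makes no guarantee about the order of those mappings.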

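The data structure is an array of buckets with linked-list chaining. Since JDK 1.8, a bucket whose list grows beyond 8 nodes is converted to a red-black tree (provided the table has at least 64 slots).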

Two parameters of a HashMap instance affect its performance: the initial capacity initialCapacity (default 16) and the load factor loadFactor (default 0.75).

II. Source Code

1. Fields

static final int DEFAULT_INITIAL_CAPACITY = 1 << 4;

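The default initial capacity is 16. The capacity is always a power of two so that the bucket index can be computed with a bit mask, (n - 1) & hash, instead of the slower modulo operation. It also simplifies resizing: when the table doubles, each node either stays at its index or moves to index + oldCap, so collisions do not have to be resolved again from scratch.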
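As a small illustration of the bit-mask argument, the sketch below checks that (n - 1) & hash gives the same bucket as a non-negative modulo when n is a power of two. The class and method names are hypothetical and are not part of the JDK.

```java
// Illustrative sketch; BucketIndexDemo and indexFor are hypothetical names, not JDK code.
class BucketIndexDemo {
    // Bucket index as computed in putVal/getNode; only valid when n is a power of two.
    static int indexFor(int hash, int n) {
        return (n - 1) & hash;
    }

    public static void main(String[] args) {
        int n = 16;
        int[] hashes = {0, 5, 17, 31, 1 << 20, -7, Integer.MIN_VALUE};
        for (int h : hashes) {
            int masked = indexFor(h, n);
            int modulo = Math.floorMod(h, n); // non-negative remainder
            System.out.println(h + " -> mask=" + masked + ", floorMod=" + modulo);
            if (masked != modulo) {
                throw new AssertionError("mismatch for " + h);
            }
        }
    }
}
```

The equivalence breaks for capacities that are not powers of two, which is one reason the constructor rounds the requested capacity up.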

static final int MAXIMUM_CAPACITY = 1 << 30;

The maximum capacity is 2^30, the largest power of two that an int can represent.

static final float DEFAULT_LOAD_FACTOR = 0.75f;

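A load factor that is too small wastes space and causes more resize operations; one that is too large increases hash collisions and hurts performance. 0.75 is simply a compromise between the two.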
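To make the trade-off concrete, here is a simplified model of table growth, assuming the table starts at 16 slots and doubles whenever the size exceeds capacity * loadFactor. It is a sketch with hypothetical names, not the JDK implementation.

```java
// Simplified growth model; ThresholdDemo and its methods are hypothetical, not JDK code.
class ThresholdDemo {
    // Resize threshold for a given capacity and load factor.
    static int thresholdFor(int capacity, float loadFactor) {
        return (int) (capacity * loadFactor);
    }

    // Capacity reached after inserting `entries` distinct keys into a 16-slot table,
    // assuming the table doubles whenever size exceeds the threshold.
    static int capacityAfter(int entries, float loadFactor) {
        int capacity = 16;
        int threshold = thresholdFor(capacity, loadFactor);
        for (int size = 1; size <= entries; size++) {
            if (size > threshold) {
                capacity <<= 1;
                threshold = thresholdFor(capacity, loadFactor);
            }
        }
        return capacity;
    }

    public static void main(String[] args) {
        System.out.println("threshold(16, 0.75) = " + thresholdFor(16, 0.75f));
        for (float lf : new float[] {0.5f, 0.75f, 1.0f}) {
            System.out.println("loadFactor " + lf + ": capacity after 100 inserts = "
                    + capacityAfter(100, lf));
        }
    }
}
```

With the default settings the threshold is 12, so in this model the 13th insertion triggers the first doubling.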

static final int TREEIFY_THRESHOLD = 8;

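When a put finds a bucket whose list already holds 8 nodes and appends one more (and the table has at least 64 slots), the list is converted to a red-black tree. The value 8 was chosen because, with a load factor of 0.75 (at most 0.75 * length entries in a table of length slots) and well-distributed hash codes, bin sizes roughly follow a Poisson distribution with a parameter of about 0.5, under which the probability of a bin holding 8 nodes is about 0.00000006. Treeification therefore normally happens only when the hash distribution is badly skewed.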
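The figure quoted above can be reproduced from the Poisson formula P(k) = e^(-λ) * λ^k / k!. The sketch below assumes λ = 0.5, the value given in the implementation notes of the JDK source; the class name is hypothetical.

```java
// Illustrative calculation; BinSizeProbability is a hypothetical name, not JDK code.
class BinSizeProbability {
    // Poisson probability mass function: exp(-lambda) * lambda^k / k!
    static double poisson(double lambda, int k) {
        double p = Math.exp(-lambda);
        for (int i = 1; i <= k; i++) {
            p *= lambda / i;
        }
        return p;
    }

    public static void main(String[] args) {
        double lambda = 0.5; // assumed average bin load for a 0.75 load factor
        for (int k = 0; k <= 8; k++) {
            System.out.printf("P(bin size = %d) = %.8f%n", k, poisson(lambda, k));
        }
    }
}
```

For k = 8 this evaluates to roughly 6e-8, matching the 0.00000006 figure.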

static final int UNTREEIFY_THRESHOLD = 6;

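When a tree bin shrinks to 6 or fewer nodes, it is converted back to a linked list. The threshold is 6 rather than 7 so that the gap between the two thresholds keeps a bin from flipping back and forth between list and tree.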

static final int MIN_TREEIFY_CAPACITY = 64;

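A list is converted to a red-black tree only when the table capacity is at least 64; below that, the table is resized instead.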

2. Constructors

public HashMap() {

// set the load factor

this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted

}

public HashMap(int initialCapacity) {

// delegate with the given initial capacity and the default load factor

this(initialCapacity, DEFAULT_LOAD_FACTOR);

}

public HashMap(int initialCapacity, float loadFactor) {

// validate the arguments

if (initialCapacity < 0)

throw new IllegalArgumentException("Illegal initial capacity: " + initialCapacity);

if (initialCapacity > MAXIMUM_CAPACITY)

initialCapacity = MAXIMUM_CAPACITY;

if (loadFactor <= 0 || Float.isNaN(loadFactor))

throw new IllegalArgumentException("Illegal load factor: " + loadFactor);

this.loadFactor = loadFactor;

// threshold temporarily holds the initial capacity (rounded up to a power of two if the argument is not one)

this.threshold = tableSizeFor(initialCapacity);

}
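The tableSizeFor method called above is not shown in this excerpt. As a sketch of the rounding it performs, the code below follows the bit-smearing approach used in the JDK 8 source; later JDK releases may compute the same result differently, and the wrapper class name is hypothetical.

```java
// Sketch of power-of-two rounding in the style of JDK 8; the class name is hypothetical.
class TableSizeForDemo {
    static final int MAXIMUM_CAPACITY = 1 << 30;

    // Returns the smallest power of two >= cap, capped at MAXIMUM_CAPACITY.
    static int tableSizeFor(int cap) {
        int n = cap - 1;   // so that an exact power of two maps to itself
        n |= n >>> 1;      // copy the highest set bit into every lower position
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    public static void main(String[] args) {
        for (int cap : new int[] {0, 1, 10, 16, 17, 1000}) {
            System.out.println(cap + " -> " + tableSizeFor(cap));
        }
    }
}
```

Subtracting 1 first is what keeps a request for exactly 16 from being rounded up to 32.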

public HashMap(Map<? extends K, ? extends V> m) {

this.loadFactor = DEFAULT_LOAD_FACTOR;

// copy all entries from m into this map

putMapEntries(m, false);

}

final void putMapEntries(Map<? extends K, ? extends V> m, boolean evict) {

int s = m.size();

if (s > 0) {

if (table == null) { // pre-size

float ft = ((float)s / loadFactor) + 1.0F;

int t = ((ft < (float)MAXIMUM_CAPACITY) ? (int)ft : MAXIMUM_CAPACITY);

if (t > threshold)

threshold = tableSizeFor(t);

}

else if (s > threshold)

// table already allocated but too small: resize

resize();

// insert every entry of m into this HashMap

for (Map.Entry<? extends K, ? extends V> e : m.entrySet()) {

K key = e.getKey();

V value = e.getValue();

putVal(hash(key), key, value, false, evict);

}

}

}
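The pre-size branch of putMapEntries can be traced with a small calculation. The sketch below assumes a freshly constructed map (table == null, threshold == 0) and uses Integer.highestOneBit as a stand-in for tableSizeFor; all names are hypothetical.

```java
// Illustrative trace of the pre-size arithmetic; names are hypothetical, not JDK code.
class PresizeDemo {
    static final int MAXIMUM_CAPACITY = 1 << 30;

    // Smallest power of two >= t, capped at MAXIMUM_CAPACITY (stand-in for tableSizeFor).
    static int roundUpToPowerOfTwo(int t) {
        if (t <= 1) return 1;
        if (t >= MAXIMUM_CAPACITY) return MAXIMUM_CAPACITY;
        return Integer.highestOneBit(t - 1) << 1;
    }

    // Capacity chosen when copying a map of s entries into an empty, unallocated HashMap.
    static int presizedCapacity(int s, float loadFactor) {
        float ft = ((float) s / loadFactor) + 1.0F;
        int t = (ft < (float) MAXIMUM_CAPACITY) ? (int) ft : MAXIMUM_CAPACITY;
        return roundUpToPowerOfTwo(t);
    }

    public static void main(String[] args) {
        for (int s : new int[] {6, 12, 100}) {
            System.out.println(s + " entries -> capacity " + presizedCapacity(s, 0.75f));
        }
    }
}
```

Note the effect of the + 1.0F term: copying 12 entries yields t = 17 and therefore a 32-slot table, even though 12 entries would fit in 16 slots without exceeding the threshold.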

3. The put method

public V put(K key, V value) {

return putVal(hash(key), key, value, false, true);

}
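The hash(key) call used by put is not shown in this excerpt. In JDK 8 it XORs the upper 16 bits of hashCode() into the lower 16 bits, so that small tables, which only look at the low bits, still see some influence from the high bits. A minimal sketch, with a hypothetical class and method name:

```java
// Sketch of the hash-spreading step; HashSpreadDemo and spread are hypothetical names.
class HashSpreadDemo {
    // Mix the high 16 bits into the low 16 bits; null keys hash to 0.
    static int spread(Object key) {
        int h;
        return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);
    }

    public static void main(String[] args) {
        int n = 16;
        // Integer.hashCode() is the int value itself, so these keys differ only in high bits.
        Integer[] keys = {0x10000, 0x20000, 0x30000};
        for (Integer key : keys) {
            int raw = key.hashCode() & (n - 1);
            int mixed = spread(key) & (n - 1);
            System.out.println(Integer.toHexString(key) + ": raw index=" + raw
                    + ", spread index=" + mixed);
        }
    }
}
```

Without the spreading step all three keys would land in bucket 0 of a 16-slot table; with it they land in different buckets.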

final V putVal(int hash, K key, V value, boolean onlyIfAbsent, boolean evict) {

Node<K,V>[] tab; Node<K,V> p; int n, i;

// table is not initialized or has length 0: allocate it via resize()

if ((tab = table) == null || (n = tab.length) == 0)

n = (tab = resize()).length;

// compute the bucket index with a bit mask; an empty bucket takes the new node directly

if ((p = tab[i = (n - 1) & hash]) == null)

tab[i] = newNode(hash, key, value, null);

// collision: the bucket already contains at least one node

else {

Node<K,V> e; K k;

// the first node has the same hash and key: remember it so its value can be overwritten

if (p.hash == hash && ((k = p.key) == key || (key != null && key.equals(k))))

e = p;

// the bucket is a red-black tree

else if (p instanceof TreeNode)

e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);

// the bucket is a linked list

else {

for (int binCount = 0; ; ++binCount) {

if ((e = p.next) == null) {

p.next = newNode(hash, key, value, null);

if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st

treeifyBin(tab, hash);

break;

}

if (e.hash == hash && ((k = e.key) == key || (key != null && key.equals(k))))

break;

p = e;

}

}

if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}

}

// record a structural modification so that iterators can detect it

++modCount;

// resize once the size exceeds the threshold

if (++size > threshold)

resize();

// post-insertion callback

afterNodeInsertion(evict);

return null;

}
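One observable consequence of putVal is that ++modCount runs only when a new key is inserted; overwriting an existing key returns early. The sketch below shows how this surfaces through the fail-fast iterators of java.util.HashMap. The class and method names are hypothetical.

```java
import java.util.ConcurrentModificationException;
import java.util.HashMap;
import java.util.Map;

// Illustrative demo of fail-fast iteration; FailFastDemo is a hypothetical name.
class FailFastDemo {
    private static Map<String, Integer> sample() {
        Map<String, Integer> map = new HashMap<>();
        map.put("a", 1);
        map.put("b", 2);
        map.put("c", 3);
        return map;
    }

    // Inserting a new key during iteration is a structural modification.
    static boolean insertWhileIterating() {
        Map<String, Integer> map = sample();
        try {
            for (String key : map.keySet()) {
                map.put(key + "-copy", 0); // new key: modCount is incremented
            }
        } catch (ConcurrentModificationException e) {
            return true; // the iterator noticed the change
        }
        return false;
    }

    // Overwriting an existing key does not change modCount.
    static boolean overwriteWhileIterating() {
        Map<String, Integer> map = sample();
        try {
            for (String key : map.keySet()) {
                map.put(key, 99); // existing key: value replaced, no structural change
            }
        } catch (ConcurrentModificationException e) {
            return true;
        }
        return false;
    }

    public static void main(String[] args) {
        System.out.println("insert throws: " + insertWhileIterating());
        System.out.println("overwrite throws: " + overwriteWhileIterating());
    }
}
```

Fail-fast behavior is a best-effort debugging aid, so code should not rely on the exception for correctness.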

4. The get method

public V get(Object key) {

Node<K,V> e;

return (e = getNode(hash(key), key)) == null ? null : e.value;

}

final Node<K,V> getNode(int hash, Object key) {

Node<K,V>[] tab; Node<K,V> first, e; int n; K k;

// the table is initialized and the bucket for this hash is not empty

if ((tab = table) != null && (n = tab.length) > 0 &&

(first = tab[(n - 1) & hash]) != null) {

// the first node in the bucket matches: return it directly

if (first.hash == hash && // always check first node

((k = first.key) == key || (key != null && key.equals(k))))

return first;

if ((e = first.next) != null) {

// the bucket is a red-black tree

if (first instanceof TreeNode)

return ((TreeNode<K,V>)first).getTreeNode(hash, key);

do {

// search the linked list

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

return e;

} while ((e = e.next) != null);

}

}

return null;

}
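Because get returns null both when getNode finds nothing and when the stored value itself is null, the two cases cannot be told apart from the return value alone. A short usage sketch, with a hypothetical class name:

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative usage; GetNullDemo is a hypothetical name.
class GetNullDemo {
    static Map<String, String> sample() {
        Map<String, String> map = new HashMap<>();
        map.put("present", null); // HashMap permits null values
        return map;
    }

    public static void main(String[] args) {
        Map<String, String> map = sample();
        // Both lookups return null, for different reasons.
        System.out.println("get(present) = " + map.get("present"));
        System.out.println("get(absent)  = " + map.get("absent"));
        // containsKey distinguishes a null value from a missing key.
        System.out.println("containsKey(present) = " + map.containsKey("present"));
        System.out.println("containsKey(absent)  = " + map.containsKey("absent"));
    }
}
```

When null values are possible, containsKey is the reliable way to test for presence.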

5. The resize method

final Node<K,V>[] resize() {

// keep a reference to the current table

Node<K,V>[] oldTab = table;

// remember the current table capacity

int oldCap = (oldTab == null) ? 0 : oldTab.length;

// remember the current threshold

int oldThr = threshold;

int newCap, newThr = 0;

// the table has already been allocated

if (oldCap > 0) {

// the old capacity has already reached the maximum

if (oldCap >= MAXIMUM_CAPACITY) {

// set the threshold to Integer.MAX_VALUE so that no further resize is triggered

threshold = Integer.MAX_VALUE;

return oldTab;

}

// double the capacity with a left shift

else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&

oldCap >= DEFAULT_INITIAL_CAPACITY)

// double the threshold as well

newThr = oldThr << 1; // double threshold

}

// table not yet allocated, but the initial capacity was stored in threshold

else if (oldThr > 0)

newCap = oldThr;

// oldCap == 0 and oldThr == 0: use the defaults (e.g. a map created with HashMap() reaches this branch when the first insertion calls resize)

else {

newCap = DEFAULT_INITIAL_CAPACITY;

newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);

}

// the new threshold has not been set yet: compute it from the new capacity and the load factor

if (newThr == 0) {

float ft = (float)newCap * loadFactor;

newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?

(int)ft : Integer.MAX_VALUE);

}

threshold = newThr;

@SuppressWarnings({"rawtypes","unchecked"})

// allocate the new table

Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];

table = newTab;

// the old table existed, so its nodes must be moved

if (oldTab != null) {

// move every node into the new table, bucket by bucket

for (int j = 0; j < oldCap; ++j) {

Node<K,V> e;

if ((e = oldTab[j]) != null) {

oldTab[j] = null;

if (e.next == null)

newTab[e.hash & (newCap - 1)] = e;

else if (e instanceof TreeNode)

((TreeNode<K,V>)e).split(this, newTab, j, oldCap);

else { // preserve order

Node<K,V> loHead = null, loTail = null;

Node<K,V> hiHead = null, hiTail = null;

Node<K,V> next;

// split the nodes of one bucket into two lists according to whether (e.hash & oldCap) is 0; this completes the rehash

do {

next = e.next;

if ((e.hash & oldCap) == 0) {

if (loTail == null)

loHead = e;

else

loTail.next = e;

loTail = e;

}

else {

if (hiTail == null)

hiHead = e;

else

hiTail.next = e;

hiTail = e;

}

} while ((e = next) != null);

if (loTail != null) {

loTail.next = null;

newTab[j] = loHead;

}

if (hiTail != null) {

hiTail.next = null;

newTab[j + oldCap] = hiHead;

}

}

}

}

}

return newTab;

}
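The lo/hi split works because doubling the capacity adds exactly one bit to the index mask, and that bit is the one tested by (e.hash & oldCap). The sketch below checks this for a few hashes that share bucket 5 in a 16-slot table; the class and method names are hypothetical.

```java
// Illustrative check of the lo/hi split rule; ResizeSplitDemo is a hypothetical name.
class ResizeSplitDemo {
    // New bucket index after doubling, derived without recomputing the mask.
    static int newIndex(int hash, int oldIndex, int oldCap) {
        return ((hash & oldCap) == 0) ? oldIndex : oldIndex + oldCap;
    }

    public static void main(String[] args) {
        int oldCap = 16;
        int newCap = oldCap << 1;
        int[] hashes = {5, 21, 37, 53}; // all map to bucket 5 when the capacity is 16
        for (int h : hashes) {
            int oldIndex = h & (oldCap - 1);
            int split = newIndex(h, oldIndex, oldCap);
            int direct = h & (newCap - 1); // what a full recomputation would give
            System.out.println("hash " + h + ": old=" + oldIndex + ", new=" + split);
            if (split != direct) {
                throw new AssertionError("split rule disagrees for " + h);
            }
        }
    }
}
```

Hashes 5 and 37 stay in bucket 5 (the lo list), while 21 and 53 move to bucket 21 (the hi list), and relative order within each list is preserved by the tail-append logic in resize.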