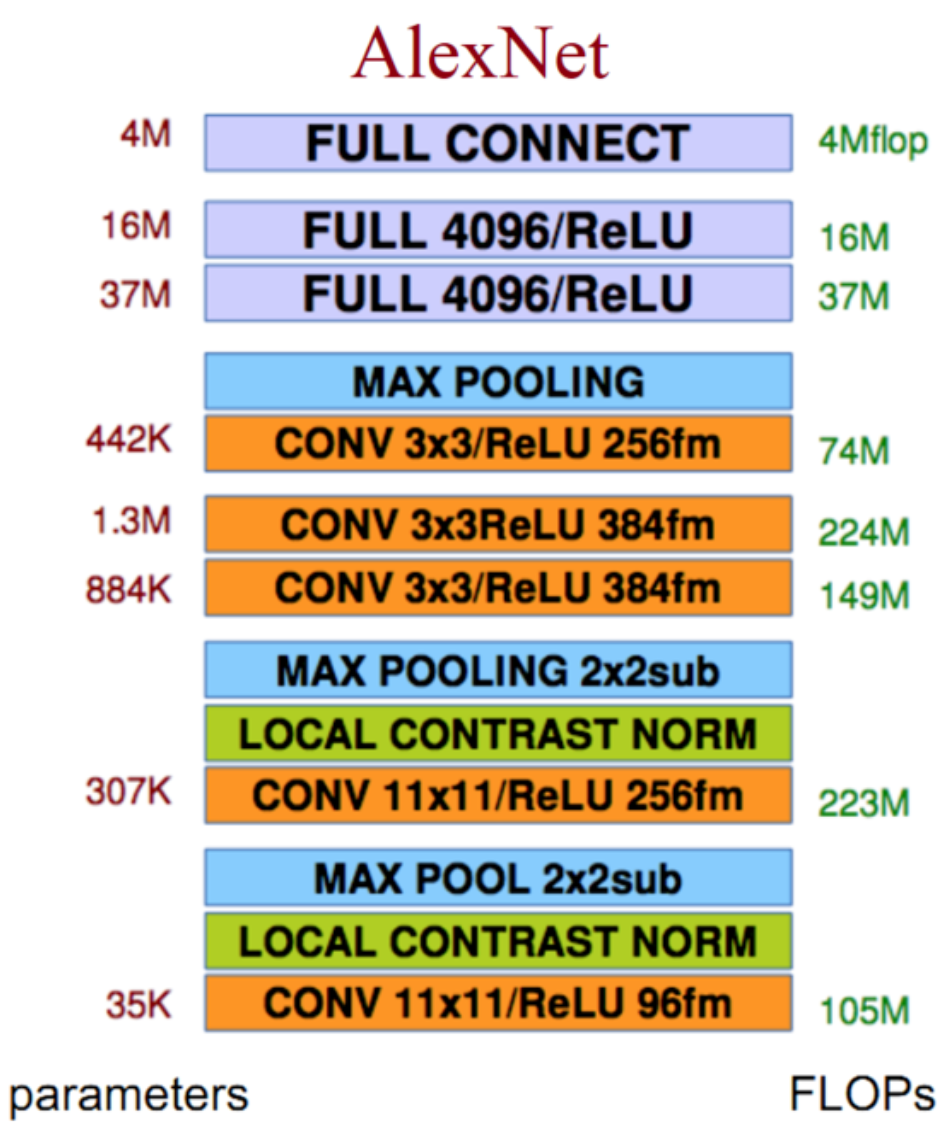

网络结构

创新点

Relu激活函数:效果好于sigmoid,且解决了梯度弥散问题

Dropout层:Alexnet验证了dropout层的效果

重叠的最大池化:此前以平均池化为主,最大池化避免了平均池化的模糊效果;而重叠的池化步长和池化窗口大小设置增加了特征的丰富性

LRN层:局部响应归一化层,这个层能够使得响应大的神经元变得相对更大,其他的收到抑制,理论上增加了泛化能力,不过实际上在大大增加了训练时间的同时并没有对效果有明显的提升,故除了AlexNet外基本没有使用;提出原因在于Relu函数输出不想之前的tanh和sigmoid有一个有限值域,所以LRN加以限制,似乎作用并不明显

代码实现

# Author : Hellcat

# Time : 2017/12/8

import math

import time

import tensorflow as tf

from datetime import datetime

batch_size = 32

num_batches = 100

def print_activations(t):

'''

查看tensor信息

:param t: tensor对象

:return: 隶属节点的名称,张量本身尺寸

'''

print(t.op.name, ' ', t.get_shape().as_list())

def inference(images):

parameters = []

with tf.name_scope('conv1') as scope:

kernel = tf.Variable(tf.truncated_normal([11,11,3,64],

dtype=tf.float32, stddev=1e-4),

name='weights')

conv = tf.nn.conv2d(images, kernel,[1,4,4,1],padding='SAME')

biases = tf.Variable(tf.constant(0.,shape=[64],dtype=tf.float32),

trainable=True,name='biases')

bias = tf.nn.bias_add(conv, biases)

conv1 = tf.nn.relu(bias,name=scope)

print_activations(conv1)

parameters += [kernel,biases]

lrn1 = tf.nn.lrn(conv1,4,bias=1.,alpha=0.001/9,beta=0.75,name='lrn1')

pool1 = tf.nn.max_pool(lrn1,ksize=[1,3,3,1],strides=[1,2,2,1],

padding='VALID',name='pool1')

print_activations(pool1)

with tf.name_scope('conv2') as scope:

kernel = tf.Variable(tf.truncated_normal([5,5,64,192],

dtype=tf.float32,stddev=1e-1),name='weights')

conv = tf.nn.conv2d(pool1,kernel,[1,1,1,1],padding='SAME')

biases = tf.Variable(tf.constant(0.,shape=[192],dtype=tf.float32),

trainable=True,name='biases')

bias = tf.nn.bias_add(conv,biases)

conv2 = tf.nn.relu(bias,name=scope)

print_activations(conv2)

parameters += [kernel,biases]

lrn2 = tf.nn.lrn(conv2,4,bias=1.,alpha=0.001/9,beta=0.75,name='lrn2')

pool2 = tf.nn.max_pool(lrn2,ksize=[1,3,3,1],strides=[1,2,2,1],

padding='VALID',name='pool2')

print_activations(pool2)

with tf.name_scope('conv3') as scope:

kernel = tf.Variable(tf.truncated_normal([3,3,192,384],

dtype=tf.float32,stddev=1e-1),name='weights')

conv = tf.nn.conv2d(pool2,kernel,[1,1,1,1],padding='SAME')

biases = tf.Variable(tf.constant(0.,shape=[384],dtype=tf.float32),

trainable=True,name='biases')

bias = tf.nn.bias_add(conv,biases)

conv3 = tf.nn.relu(bias,name=scope)

parameters += [kernel,biases]

print_activations(conv3)

with tf.name_scope('conv4') as scope:

kernel = tf.Variable(tf.truncated_normal([3,3,384,256],

dtype=tf.float32,stddev=1e-1),name='weights')

conv = tf.nn.conv2d(conv3,kernel,[1,1,1,1],padding='SAME')

biases = tf.Variable(tf.constant(0.,shape=[256],dtype=tf.float32),

trainable=True,name='biases')

bias = tf.nn.bias_add(conv,biases)

conv4 = tf.nn.relu(bias,name=scope)

parameters += [kernel,biases]

print_activations(conv4)

with tf.name_scope('conv5') as scope:

kernel = tf.Variable(tf.truncated_normal([3,3,256,256],

dtype=tf.float32,stddev=1e-1),name='weights')

conv = tf.nn.conv2d(conv4,kernel,[1,1,1,1],padding='SAME')

biases = tf.Variable(tf.constant(0.,shape=[256],dtype=tf.float32),

trainable=True,name='biases')

bias = tf.nn.bias_add(conv,biases)

conv5 = tf.nn.relu(bias,name=scope)

parameters += [kernel,biases]

print_activations(conv5)

pool5 = tf.nn.max_pool(conv5, ksize=[1,3,3,1],

strides=[1,2,2,1],padding='VALID',name='pool5')

print_activations(pool5)

with tf.name_scope('fcl1') as scope:

reshape = tf.reshape(pool5,[batch_size,-1])

dim = reshape.get_shape()[1].value

weights = tf.Variable(tf.truncated_normal([dim,4096],stddev=0.001),dtype=tf.float32)

biases = tf.Variable(tf.constant(0.1,shape=[4096]),dtype=tf.float32)

fcl1 = tf.nn.relu(tf.nn.bias_add(tf.matmul(reshape,weights),biases))

fcl1 = tf.nn.dropout(fcl1,keep_prob=0.75)

parameters += [weights,biases]

print_activations(fcl1)

with tf.name_scope('fcl2') as scope:

weights = tf.Variable(tf.truncated_normal([4096,4096],stddev=0.001),dtype=tf.float32)

biases = tf.Variable(tf.constant(0.1,shape=[4096]),dtype=tf.float32)

fcl2 = tf.nn.relu(tf.nn.bias_add(tf.matmul(fcl1,weights),biases))

fcl2 = tf.nn.dropout(fcl2,keep_prob=0.75)

parameters += [weights,biases]

print_activations(fcl2)

with tf.name_scope('fcl3') as scope:

weights = tf.Variable(tf.truncated_normal([4096,10],stddev=0.001),dtype=tf.float32)

biases = tf.Variable(tf.constant(0.1,shape=[10]),dtype=tf.float32)

fcl3 = tf.nn.bias_add(tf.matmul(fcl2,weights),biases)

parameters += [weights,biases]

print_activations(fcl3)

return fcl3, parameters

def time_tensorflow_run(session, target, info_string):

'''

网路运行时间测试函数

:param session: 会话对象

:param target: 运行目标节点

:param info_string:提示字符

:return: None

'''

num_steps_burn_in = 10 # 预热轮数

total_duration = 0.0 # 总时间

total_duration_squared = 0.0 # 总时间平方和

for i in range(num_steps_burn_in + num_batches):

start_time = time.time()

_ = session.run(target)

duration = time.time() - start_time # 本轮时间

if i >= num_steps_burn_in:

if not i % 10:

print('%s: step %d, duration = %.3f' %

(datetime.now(),i-num_steps_burn_in,duration))

total_duration += duration

total_duration_squared += duration**2

mn = total_duration/num_batches # 平均耗时

vr = total_duration_squared/num_batches - mn**2

sd = math.sqrt(vr)

print('%s:%s across %d steps, %.3f +/- %.3f sec / batch' %

(datetime.now(), info_string, num_batches, mn, sd))

def run_benchmark():

with tf.Graph().as_default():

image_size = 224

images = tf.Variable(tf.random_normal([batch_size,image_size,image_size,3],

dtype=tf.float32,

stddev=1e-1))

target_layer,parameters = inference(images)

init = tf.global_variables_initializer()

sess = tf.Session()

sess.run(init)

time_tensorflow_run(sess,target_layer,'Forward')

objective = tf.nn.l2_loss(target_layer) # 模拟目标函数

grad = tf.gradients(objective, parameters) # 梯度求解

time_tensorflow_run(sess, grad,'Forward-backward') # 反向传播

# 参数更新并未计入时耗中

if __name__=='__main__':

run_benchmark()

输出结果

conv1 [32, 56, 56, 64]

conv1/pool1 [32, 27, 27, 64]

conv2 [32, 27, 27, 192]

conv2/pool2 [32, 13, 13, 192]

conv3 [32, 13, 13, 384]

conv4 [32, 13, 13, 256]

conv5 [32, 13, 13, 256]

conv5/pool5 [32, 6, 6, 256]

fcl1/Relu [32, 4096]

fcl2/Relu [32, 4096]

fcl3/BiasAdd [32, 10]

2017-12-11 15:54:04.515831: I C: f_jenkinshomeworkspace el-winMwindowsPY35 ensorflowcoreplatformcpu_feature_guard.cc:137] Your CPU supports instructions that this TensorFlow binary was not compiled to use: AVX AVX2

2017-12-11 15:54:25.862993: step 0, duration = 1.664

2017-12-11 15:54:42.982326: step 10, duration = 1.522

2017-12-11 15:54:59.837156: step 20, duration = 1.514

2017-12-11 15:55:14.968393: step 30, duration = 1.482

2017-12-11 15:55:29.901042: step 40, duration = 1.488

2017-12-11 15:55:44.832427: step 50, duration = 1.481

2017-12-11 15:55:59.978011: step 60, duration = 1.533

2017-12-11 15:56:15.705833: step 70, duration = 1.557

2017-12-11 15:56:32.164702: step 80, duration = 2.052

2017-12-11 15:56:49.980907: step 90, duration = 1.985

2017-12-11 15:57:06.628665:Forward across 100 steps, 0.163 +/- 0.492 sec / batch

2017-12-11 15:58:31.174363: step 0, duration = 7.852

2017-12-11 15:59:49.693544: step 10, duration = 7.901

2017-12-11 16:01:16.828232: step 20, duration = 8.463

2017-12-11 16:02:40.500340: step 30, duration = 6.796

2017-12-11 16:03:45.091418: step 40, duration = 6.690

2017-12-11 16:04:50.500747: step 50, duration = 5.797

2017-12-11 16:05:48.492420: step 60, duration = 5.754

2017-12-11 16:06:46.093358: step 70, duration = 5.737

2017-12-11 16:07:43.135421: step 80, duration = 5.704

2017-12-11 16:08:40.122760: step 90, duration = 5.626

2017-12-11 16:09:30.885931:Forward-backward across 100 steps, 0.663 +/- 2.016 sec / batch