对网站资源探测工具进行调整,并且添加代理,防止多次访问ip被封的情况。

#获取代理,并写入agents列 def agent_list(url): global agent_lists agent_lists = [] header = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:57.0) Gecko/20100101 Firefox/57.0'} r = requests.get(url,headers = header) agent_info = BeautifulSoup(r.content,'html.parser').find(id = "ip_list").find_all('tr')[1:] for i in range(len(agent_info)): info = agent_info[i].find_all('td') agents = {info[5].string : 'http://' + info[1].string} agent_lists.append(agents)

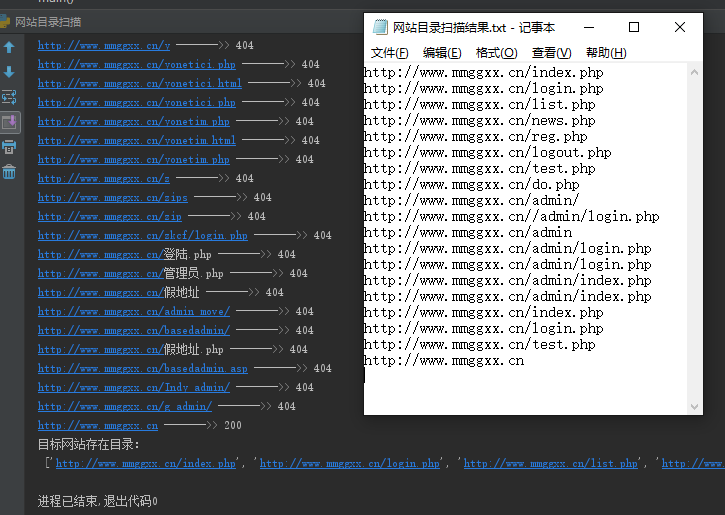

修改后的 网站资源扫描工具:

#!/usr/bin/env python3 # -*- coding: utf-8 -*- # by 默不知然 2018-03-15 import threading from threading import Thread from bs4 import BeautifulSoup import time import sys import requests import getopt import random #创建类,并对目标网站发起请求 class scan_thread (threading.Thread): global real_url_list real_url_list = [] def __init__(self,url): threading.Thread.__init__(self) self.url = url def run(self): try: proxy = random.sample(agent_lists,1)[0] r = requests.get(self.url, proxies = proxy) print(self.url,'------->>',str(r.status_code)) if int(r.status_code) == 200: real_url_list.append(self.url) l[0] = l[0] - 1 except Exception as e: print(e) #获取字典并构造url并声明扫描线程 def url_makeup(dicts,url,threshold): global url_list global l url_list = [] l =[] l.append(0) dic = str(dicts) with open (dic,'r') as f: code_list = f.readlines() for i in code_list: url_list.append(url+i.replace(' ','').replace(' ','')) while len(url_list): try: if l[0] < threshold: n = url_list.pop(0) l[0] = l[0] + 1 thread = scan_thread(n) thread.start() except KeyboardInterrupt: print('用户停止了程序,完成目录扫描。') sys.exit() #获取输入参数 def get_args(): global get_url global get_dicts global get_threshold try: options,args = getopt.getopt(sys.argv[1:],"u:f:n:") except getopt.GetoptError: print("错误参数") sys.exit() for option,arg in options: if option == '-u': get_url = arg if option == '-f': get_dicts = arg if option == '-n': get_threshold = int(arg) #获取代理,并写入agents列 def agent_list(url): global agent_lists agent_lists = [] header = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:57.0) Gecko/20100101 Firefox/57.0'} r = requests.get(url,headers = header) agent_info = BeautifulSoup(r.content,'html.parser').find(id = "ip_list").find_all('tr')[1:] for i in range(len(agent_info)): info = agent_info[i].find_all('td') agents = {info[5].string : 'http://' + info[1].string} agent_lists.append(agents) #主函数,运行扫描程序 def main(): agent_url = 'http://www.xicidaili.com/nn/1' agent_list(agent_url) get_args() url = get_url dicts = get_dicts threshold = get_threshold url_makeup(dicts,url,threshold) time.sleep(0.5) print('目标网站存在目录: ',' ', real_url_list) with open(r'网站目录扫描结果.txt','w') as f: for i in real_url_list: f.write(i) f.write(' ') if __name__ == '__main__': main()

对某网站扫描结果: