1. Reading the data

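    import csv

    # Path as given in the original; its separators appear to have been lost,
    # so adjust it to wherever your copy of the file lives.
    file_path = r'D:PycharmProjectsdataSMSSpamCollection'
    sms_data = []
    sms_label = []
    # Assumes a tab-separated file: label first, then the message text.
    # QUOTE_NONE keeps quotation marks inside messages from being read as field quoting.
    with open(file_path, 'r', encoding='utf-8') as sms:
        csv_reader = csv.reader(sms, delimiter='\t', quoting=csv.QUOTE_NONE)
        for line in csv_reader:
            sms_label.append(line[0])
            sms_data.append(preprocessing(line[1]))  # preprocess each message (see section 2)
    print(sms_label)
    print(sms_data)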

2. Data preprocessing

    import csv
    import nltk
    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer

    print(nltk.__doc__)

    def get_wordnet_pos(treebank_tag):
        # Map a Penn Treebank POS tag to the matching WordNet POS constant.
        if treebank_tag.startswith('J'):
            return nltk.corpus.wordnet.ADJ
        elif treebank_tag.startswith('V'):
            return nltk.corpus.wordnet.VERB
        elif treebank_tag.startswith('N'):
            return nltk.corpus.wordnet.NOUN
        elif treebank_tag.startswith('R'):
            return nltk.corpus.wordnet.ADV
        else:
            return nltk.corpus.wordnet.NOUN

    # Preprocessing
    def preprocessing(text):
        tokens = [word for sent in nltk.sent_tokenize(text) for word in nltk.word_tokenize(sent)]  # tokenize
        stops = stopwords.words("english")  # stop words
        tokens = [token for token in tokens if token.lower() not in stops]  # remove stop words, ignoring case
        tokens = [token.lower() for token in tokens if len(token) >= 3]  # lowercase; drop tokens shorter than 3 characters
        tag = nltk.pos_tag(tokens)  # part-of-speech tags
        lmtzr = WordNetLemmatizer()
        tokens = [lmtzr.lemmatize(token, pos=get_wordnet_pos(tag[i][1])) for i, token in enumerate(tokens)]  # lemmatize
        preprocessed_text = ' '.join(tokens)  # join with spaces so the words stay separable
        return preprocessed_text

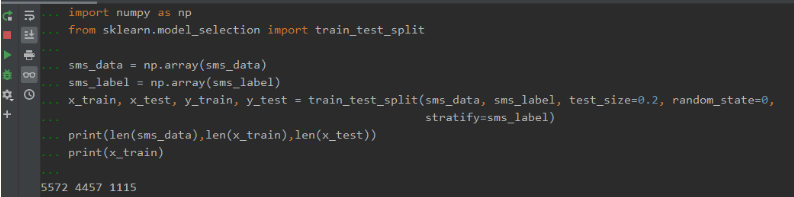

3. Data splitting: training and test sets

from sklearn.model_selection import train_test_split

x_train, x_test, y_train, y_test = train_test_split(data, target, test_size=0.2, random_state=0, stratify=target)

    # Split into training and test sets at a ratio of 0.8:0.2
    import numpy as np
    from sklearn.model_selection import train_test_split

    sms_data = np.array(sms_data)
    sms_label = np.array(sms_label)
    x_train, x_test, y_train, y_test = train_test_split(
        sms_data, sms_label, test_size=0.2, random_state=0, stratify=sms_label)
    print(len(sms_data), len(x_train), len(x_test))
    print(x_train)
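To see what `stratify` contributes, here is a small sketch on made-up data (the `texts` and `labels` lists are hypothetical, not the SMS corpus). With 80 ham and 20 spam labels, a stratified 0.8:0.2 split should keep the same 4:1 class ratio in both subsets.

```python
from collections import Counter

from sklearn.model_selection import train_test_split

# Hypothetical, imbalanced toy data: 80 "ham" and 20 "spam" labels.
labels = ["ham"] * 80 + ["spam"] * 20
texts = [f"message {i}" for i in range(100)]

x_train, x_test, y_train, y_test = train_test_split(
    texts, labels, test_size=0.2, random_state=0, stratify=labels)

# Class counts in each subset; both keep the 4:1 ham-to-spam ratio.
train_counts = Counter(y_train)
test_counts = Counter(y_test)
print(train_counts, test_counts)
```

Without `stratify`, a random split of a small, imbalanced data set can leave the test set with noticeably more or fewer spam messages than the training set, which makes the evaluation less representative.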

4. Text feature extraction

sklearn.feature_extraction.text.CountVectorizer

sklearn.feature_extraction.text.TfidfVectorizer

from sklearn.feature_extraction.text import TfidfVectorizer

tfidf2 = TfidfVectorizer()

Observe the relationship between messages and vectors

Map vectors back to messages

4. Model selection

from sklearn.naive_bayes import GaussianNB

from sklearn.naive_bayes import MultinomialNB

Explain why this model was chosen.
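One common line of reasoning, offered here as an assumption rather than the author's stated answer: `MultinomialNB` models non-negative term counts or weights and accepts the sparse matrix produced by `TfidfVectorizer` directly, whereas `GaussianNB` assumes continuous, roughly normally distributed features and requires a dense array. The sketch below trains `MultinomialNB` on a made-up toy corpus; the texts and labels are hypothetical.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.naive_bayes import MultinomialNB

# Hypothetical toy training data, not the SMS corpus.
train_texts = [
    "free prize call now", "win free cash prize", "claim free prize now",
    "see you at lunch", "call me when you get home", "are you coming home",
]
train_labels = ["spam", "spam", "spam", "ham", "ham", "ham"]

# Learn the vocabulary and TF-IDF weights from the training texts only.
vectorizer = TfidfVectorizer()
X_train = vectorizer.fit_transform(train_texts)

# MultinomialNB works on the sparse, non-negative TF-IDF matrix as is.
clf = MultinomialNB()
clf.fit(X_train, train_labels)

# New messages must go through the same fitted vectorizer.
X_new = vectorizer.transform(["free prize", "coming home"])
y_predict = clf.predict(X_new)
print(list(y_predict))
```

Trying `GaussianNB` on the same features would require `X_train.toarray()`, which for a vocabulary of thousands of terms produces a large, mostly zero dense matrix.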

5. Model evaluation: confusion matrix and classification report

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_predict)

Explain the meaning of the confusion matrix.
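As an illustration, the sketch below builds a confusion matrix from made-up labels (the two lists are hypothetical, not results from the SMS data). In scikit-learn's layout, rows are the true classes and columns are the predicted classes, in the order given by `labels`.

```python
from sklearn.metrics import confusion_matrix

# Hypothetical true and predicted labels for eight messages.
y_test    = ["ham", "ham", "ham",  "ham", "spam", "spam", "spam", "ham"]
y_predict = ["ham", "ham", "spam", "ham", "spam", "ham",  "spam", "ham"]

# Rows: true class, columns: predicted class, both ordered ham, spam.
cm = confusion_matrix(y_test, y_predict, labels=["ham", "spam"])
print(cm)

# Treating "spam" as the positive class:
#   tn = ham predicted as ham,   fp = ham predicted as spam,
#   fn = spam predicted as ham,  tp = spam predicted as spam.
tn, fp, fn, tp = cm.ravel()
print(tn, fp, fn, tp)
```

The diagonal holds the correctly classified messages; the off-diagonal cells show which kind of mistake the model makes. For a spam filter, `fp` (legitimate messages flagged as spam) is often the costlier error.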

from sklearn.metrics import classification_report

Explain what accuracy, precision, recall, and F-score each represent.
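The four metrics can be written out directly from the counts of true positives, false positives, false negatives, and true negatives. The helper below is a hypothetical illustration using made-up counts, not part of scikit-learn; returning 0.0 when a denominator is zero is an assumed convention.

```python
def binary_metrics(tp, fp, fn, tn):
    """Compute accuracy, precision, recall, and F1 for one positive class."""
    total = tp + fp + fn + tn
    # Accuracy: share of all messages classified correctly.
    accuracy = (tp + tn) / total if total else 0.0
    # Precision: of the messages predicted positive, how many really are.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of the truly positive messages, how many were found.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Made-up counts with "spam" as the positive class.
metrics = binary_metrics(tp=2, fp=1, fn=1, tn=4)
print(metrics)
```

`classification_report(y_test, y_predict)` reports precision, recall, and F1 per class along with overall accuracy, so each class takes a turn as the positive one.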