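What is the core of a support vector machine (SVM)? In my view it is the first two words of the name: "support vector". Once certain points in a two-class or multi-class sample set have been identified as support vectors, the separating hyperplane can be computed from them, and assigning samples to classes according to that hyperplane follows naturally. It is therefore worth reviewing what a support vector actually is.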

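In the figure, the red and blue training samples are to be separated. The solid green line is the decision surface (hyperplane). The two solid red samples and the one solid blue sample closest to it are the support vectors of their respective classes, and the decision surface sits where its margin to the support vectors of both classes is as large as possible. In practice training samples contain noise, so one or more samples of one class may end up on the other side of the decision surface, mixed in with the other class. SVM handles this with slack variables and a penalty on them: such samples are allowed to violate the margin at a cost, so that they do not dictate where the hyperplane goes, however close to it they lie. The kernel function, another important SVM concept, likewise serves the purpose of determining the support vectors.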
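The ideas of decision surface, margin and slack can be sketched in a few lines of plain C++. This is only an illustration under stated assumptions: the LinearModel struct and the function names are hypothetical and are not part of OpenCV, and labels are assumed to be +1 or -1.

```cpp
#include <cmath>

// Hypothetical 2-D linear classifier: f(x) = w0*x0 + w1*x1 + b.
struct LinearModel {
    double w0, w1, b;
};

// Value of the decision function; its sign gives the predicted class.
double decisionValue(const LinearModel& m, double x0, double x1) {
    return m.w0 * x0 + m.w1 * x1 + m.b;
}

// Geometric distance from a point to the hyperplane f(x) = 0.
double distanceToPlane(const LinearModel& m, double x0, double x1) {
    return std::fabs(decisionValue(m, x0, x1)) /
           std::sqrt(m.w0 * m.w0 + m.w1 * m.w1);
}

// Slack of a labelled sample (y is +1 or -1): zero when the sample lies on
// or outside its margin, positive when it violates the margin.
double slack(const LinearModel& m, double x0, double x1, int y) {
    double s = 1.0 - y * decisionValue(m, x0, x1);
    return s > 0.0 ? s : 0.0;
}
```

With the hyperplane x0 = 3 (w = (1, 0), b = -3), a positive sample at (5, 0) has zero slack, while a positive sample at (2, 0) sits on the wrong side and has slack 2.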

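In OpenCV (the code here uses the OpenCV 2.x CvSVM interface), training an SVM and classifying with it proceeds as follows:

1. Obtain the training samples

SVM is a supervised classification method, so every training sample needs an explicit class label (1 or -1 in the two-class example below) for the SVM to train on. SVM places no restriction on the features or on their dimensionality: Haar, corner, SIFT, SURF, histogram and other features can all serve as the sample representation. OpenCV requires the training data to be stored in a Mat of type float. In the simplest case, assume two classes of two-dimensional samples, four samples in total (one labelled 1 and three labelled -1), defined as follows: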

float labels[4] = {1.0, -1.0, -1.0, -1.0}; // class labels

Mat labelsMat(4, 1, CV_32FC1, labels); // labels as a 4x1 Mat

float trainingData[4][2] = { {501, 10}, {255, 10}, {501, 255}, {10, 501} }; // sample features

Mat trainingDataMat(4, 2, CV_32FC1, trainingData); // features as a 4x2 Mat

2. Set the SVM parameters

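In OpenCV the SVM parameters are held in the CvSVMParams class. The commonly set fields are the SVM type, the kernel type, the termination criteria and the penalty applied to the slack variables. For example: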

CvSVMParams params;

params.svm_type = CvSVM::C_SVC;

params.kernel_type = CvSVM::LINEAR;

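params.term_crit = cvTermCriteria(CV_TERMCRIT_ITER, 100, 1e-6);

CvSVM::C_SVC is the most commonly used SVM type. Its key feature is that it can handle imperfect separation, that is, training data that cannot be split cleanly by a hyperplane, so it covers both the linearly separable and the non-separable case.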
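How C_SVC trades margin width against violations can be illustrated with the standard soft-margin objective, 0.5*||w||^2 + C * (sum of slacks). The sketch below only evaluates that objective for a given 2-D linear model; the Sample struct and function name are hypothetical, and this is not how OpenCV's solver is implemented.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical labelled 2-D sample; y is +1 or -1.
struct Sample {
    double x0, x1;
    int y;
};

// Soft-margin objective 0.5*||w||^2 + C * sum(slack_i) for a linear model
// f(x) = w0*x0 + w1*x1 + b. A larger C punishes margin violations harder.
double softMarginObjective(double w0, double w1, double b, double C,
                           const std::vector<Sample>& data) {
    double penalty = 0.0;
    for (const Sample& s : data) {
        double margin = s.y * (w0 * s.x0 + w1 * s.x1 + b);
        penalty += std::max(0.0, 1.0 - margin);  // slack of this sample
    }
    return 0.5 * (w0 * w0 + w1 * w1) + C * penalty;
}
```

For the same model and data, raising C increases the cost contributed by each violating sample, which is why a small C (such as the 0.1 used in the non-separable example of this post) tolerates more noise.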

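The kernel function maps the training samples into a space in which they are easier to separate linearly, which in general raises the dimensionality of the sample vectors. Setting the kernel type to CvSVM::LINEAR means that no such mapping into a higher-dimensional space is performed.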
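As a rough illustration of what a kernel computes, here are the linear kernel and the RBF kernel written out in plain C++. The function names are hypothetical, both vectors are assumed to have the same length, and gamma is the usual RBF width parameter.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Linear kernel: a plain dot product, no mapping to a higher dimension.
double linearKernel(const std::vector<double>& a, const std::vector<double>& b) {
    double dot = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) dot += a[i] * b[i];
    return dot;
}

// RBF kernel: exp(-gamma * ||a - b||^2). It equals 1 for identical points
// and decays towards 0 as the points move apart.
double rbfKernel(const std::vector<double>& a, const std::vector<double>& b,
                 double gamma) {
    double dist2 = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        double d = a[i] - b[i];
        dist2 += d * d;
    }
    return std::exp(-gamma * dist2);
}
```

The kernel value replaces the dot product inside the SVM, so the higher-dimensional mapping never has to be computed explicitly.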

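Termination criteria: SVM training solves a constrained quadratic optimization problem iteratively. A maximum iteration count and a tolerance can be specified, separately or in combination, so that the algorithm stops at a suitable point.

3. Train the SVM

The CvSVM::train function trains on the samples and builds the SVM model.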
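The interplay of a maximum iteration count and a tolerance can be shown on a toy problem. The sketch below minimises f(x) = (x - 3)^2 by gradient descent and stops on whichever criterion is met first. StopRule, Result and minimise are hypothetical names, and the toy optimiser merely stands in for the real quadratic-programming solver.

```cpp
#include <cmath>

// Hypothetical stopping rule: a maximum iteration count plus a tolerance.
struct StopRule {
    int maxIter;
    double epsilon;
};

struct Result {
    double x;
    int iterations;
};

// Minimise f(x) = (x - 3)^2 starting from x = 0. The loop ends when the
// iteration budget is used up or when one step changes x by less than epsilon.
Result minimise(StopRule rule, double step) {
    double x = 0.0;
    int it = 0;
    while (it < rule.maxIter) {
        double next = x - step * 2.0 * (x - 3.0);  // gradient of f is 2(x - 3)
        ++it;
        double change = std::fabs(next - x);
        x = next;
        if (change < rule.epsilon) break;  // tolerance reached
    }
    return {x, it};
}
```

With a generous budget the tolerance ends the loop early; with a budget of only a few iterations the count is what stops it, and the result is less accurate.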

CvSVM SVM;

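SVM.train(trainingDataMat, labelsMat, Mat(), Mat(), params);

4. Partition the sample space into class regions

The CvSVM::predict function uses the trained model to decide which class a test sample belongs to. An intuitive way to visualize this is to color the regions of the sample space by class. The following code paints the two regions green and blue: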

Vec3b green(0,255,0), blue (255,0,0);

// Show the decision regions given by the SVM

for (int i = 0; i < image.rows; ++i)

for (int j = 0; j < image.cols; ++j)

{

Mat sampleMat = (Mat_<float>(1,2) << i,j);

float response = SVM.predict(sampleMat);

if (response == 1)

image.at<Vec3b>(j, i) = green;

else if (response == -1)

image.at<Vec3b>(j, i) = blue;

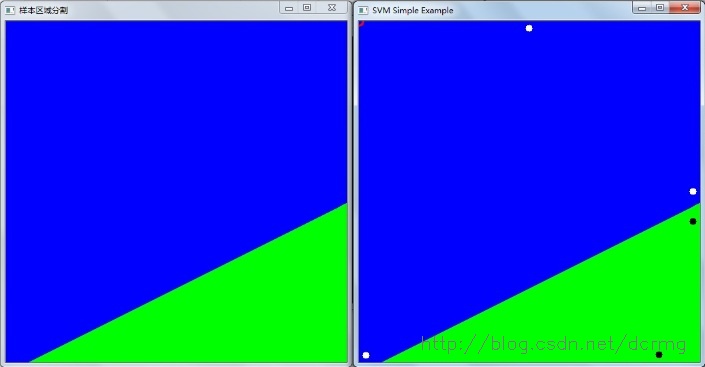

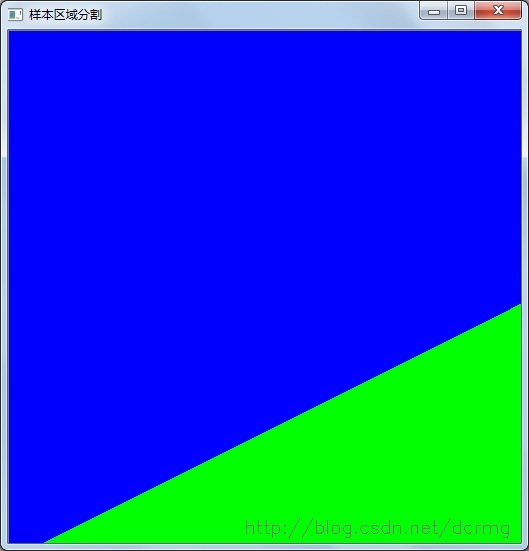

}样本区域分割效果,蓝色和绿色区域代表不同的样本空间,两者交界处就是分类超平面位置:
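The same per-pixel classification idea can be tried without OpenCV. In this sketch predictLabel is a hypothetical stand-in for a trained model's predict call, with an assumed decision boundary x + y = 4, and the "image" is a grid of characters. Note that the row index plays the role of y and the column index the role of x.

```cpp
#include <string>
#include <vector>

// Hypothetical stand-in for a trained model: returns +1 or -1.
// The decision boundary x + y = 4 is an assumption made for illustration.
int predictLabel(double x, double y) {
    return (x + y > 4.0) ? 1 : -1;
}

// One character per grid cell: 'G' where the label is +1, 'B' where it is -1.
std::vector<std::string> labelGrid(int rows, int cols) {
    std::vector<std::string> grid(rows, std::string(cols, ' '));
    for (int row = 0; row < rows; ++row)       // row index corresponds to y
        for (int col = 0; col < cols; ++col)   // column index corresponds to x
            grid[row][col] = (predictLabel(col, row) == 1) ? 'G' : 'B';
    return grid;
}
```

Printing the rows of labelGrid(4, 6) gives a small character picture in which the border between the B and G areas traces the assumed boundary.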

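5. Draw the support vectors

SVM.get_support_vector_count returns the number of support vectors, and SVM.get_support_vector returns the support vector at a given index. The following code retrieves the count and marks each support vector in the sample space with a small red circle: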

thickness = 2;

lineType = 8;

int c = SVM.get_support_vector_count();

for (int i = 0; i < c; ++i)

{

const float* v = SVM.get_support_vector(i);

circle( image, Point( (int) v[0], (int) v[1]), 6, Scalar(0, 0, 255), thickness, lineType);

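}

That is the complete flow of SVM training and classification in OpenCV. Both linearly separable and non-separable problems follow these five basic steps.

Below are OpenCV implementations of three SVM problems: linearly separable two-class, linearly non-separable two-class, and linearly non-separable multi-class (three-class).

I. Linearly separable two-class problem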
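To make the notion of a support vector from step 5 concrete: for a trained linear model scaled so that the margin boundaries are f(x) = +1 and f(x) = -1, the support vectors are the samples with y * f(x) <= 1. The sketch below applies that test to a model that is assumed to be already optimal. The Sample struct and the function name are hypothetical, and this is not what get_support_vector does internally.

```cpp
#include <vector>

// Hypothetical labelled 2-D sample; y is +1 or -1.
struct Sample {
    double x0, x1;
    int y;
};

// Indices of the samples lying on or inside the margin of the linear model
// f(x) = w0*x0 + w1*x1 + b, i.e. those with y * f(x) <= 1 (plus a tolerance).
std::vector<int> supportVectorIndices(const std::vector<Sample>& data,
                                      double w0, double w1, double b,
                                      double tol) {
    std::vector<int> indices;
    for (int i = 0; i < static_cast<int>(data.size()); ++i) {
        const Sample& s = data[i];
        double margin = s.y * (w0 * s.x0 + w1 * s.x1 + b);
        if (margin <= 1.0 + tol) indices.push_back(i);
    }
    return indices;
}
```

For positive samples at x0 = 2 and x0 = 5 and negative samples at x0 = 0 and x0 = -3, with the hyperplane x0 = 1, only the two samples nearest the hyperplane are reported.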

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/ml/ml.hpp>

using namespace cv;

int main()

{

// Data for visual representation

int width = 512, height = 512;

Mat image = Mat::zeros(height, width, CV_8UC3);

// Set up training data

float labels[5] = {1.0, -1.0, -1.0, -1.0, 1.0}; // class labels

Mat labelsMat(5, 1, CV_32FC1, labels); // labels as a 5x1 Mat

float trainingData[5][2] = { {501, 300}, {255, 10}, {501, 255}, {10, 501}, {450, 500} }; // sample features

Mat trainingDataMat(5, 2, CV_32FC1, trainingData); // features as a 5x2 Mat

// Set up SVM's parameters

CvSVMParams params;

params.svm_type = CvSVM::C_SVC;

params.kernel_type = CvSVM::LINEAR;

params.term_crit = cvTermCriteria(CV_TERMCRIT_ITER, 100, 1e-6);

// Train the SVM

CvSVM SVM;

SVM.train(trainingDataMat, labelsMat, Mat(), Mat(), params);

Vec3b green(0,255,0), blue (255,0,0);

// Show the decision regions given by the SVM

for (int i = 0; i < image.rows; ++i)

for (int j = 0; j < image.cols; ++j)

{

Mat sampleMat = (Mat_<float>(1,2) << j, i); // sample is (x, y) = (column, row)

float response = SVM.predict(sampleMat);

if (response == 1)

image.at<Vec3b>(i, j) = green; // at(row, column)

else if (response == -1)

image.at<Vec3b>(i, j) = blue;

}

imshow("Decision regions", image);

waitKey();

// Show the training data

int thickness = -1;

int lineType = 8;

circle( image, Point(501, 300), 5, Scalar( 0, 0, 0), thickness, lineType);

circle( image, Point(255, 10), 5, Scalar(255, 255, 255), thickness, lineType);

circle( image, Point(501, 255), 5, Scalar(255, 255, 255), thickness, lineType);

circle( image, Point( 10, 501), 5, Scalar(255, 255, 255), thickness, lineType);

circle( image, Point( 450, 500), 5, Scalar(0, 0, 0), thickness, lineType);

// Show support vectors

thickness = 2;

lineType = 8;

int c = SVM.get_support_vector_count();

for (int i = 0; i < c; ++i)

{

const float* v = SVM.get_support_vector(i);

circle( image, Point( (int) v[0], (int) v[1]), 6, Scalar(0, 0, 255), thickness, lineType);

}

imwrite("result.png", image); // save the image

imshow("SVM Simple Example", image); // show it to the user

waitKey(0);

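}

Classification result for the linearly separable two-class problem:

The red circle in the upper-right corner of the figure marks the support vector, and its position is clearly wrong. According to unconfirmed reports, the function for retrieving support vectors, get_support_vector_count, is faulty in this version of OpenCV. Readers may want to verify this for themselves.

II. Linearly non-separable two-class problem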

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/ml/ml.hpp>

#define NTRAINING_SAMPLES 100 // Number of training samples per class

#define FRAC_LINEAR_SEP 0.9f // Fraction of samples which compose the linear separable part

using namespace cv;

using namespace std;

int main()

{

// Data for visual representation

const int WIDTH = 512, HEIGHT = 512;

Mat I = Mat::zeros(HEIGHT, WIDTH, CV_8UC3);

//--------------------- 1. Set up training data randomly ---------------------------------------

Mat trainData(2*NTRAINING_SAMPLES, 2, CV_32FC1);

Mat labels (2*NTRAINING_SAMPLES, 1, CV_32FC1);

RNG rng(100); // Random value generation class

// Set up the linearly separable part of the training data

int nLinearSamples = (int) (FRAC_LINEAR_SEP * NTRAINING_SAMPLES);

// Generate random points for the class 1

Mat trainClass = trainData.rowRange(0, nLinearSamples);

// The x coordinate of the points is in [0, 0.4)

Mat c = trainClass.colRange(0, 1);

rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(0.4 * WIDTH));

// The y coordinate of the points is in [0, 1)

c = trainClass.colRange(1,2);

rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

// Generate random points for the class 2

trainClass = trainData.rowRange(2*NTRAINING_SAMPLES-nLinearSamples, 2*NTRAINING_SAMPLES);

// The x coordinate of the points is in [0.6, 1]

c = trainClass.colRange(0 , 1);

rng.fill(c, RNG::UNIFORM, Scalar(0.6*WIDTH), Scalar(WIDTH));

// The y coordinate of the points is in [0, 1)

c = trainClass.colRange(1,2);

rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

//------------------ Set up the non-linearly separable part of the training data ---------------

// Generate random points for the classes 1 and 2

trainClass = trainData.rowRange( nLinearSamples, 2*NTRAINING_SAMPLES-nLinearSamples);

// The x coordinate of the points is in [0.4, 0.6)

c = trainClass.colRange(0,1);

rng.fill(c, RNG::UNIFORM, Scalar(0.4*WIDTH), Scalar(0.6*WIDTH));

// The y coordinate of the points is in [0, 1)

c = trainClass.colRange(1,2);

rng.fill(c, RNG::UNIFORM, Scalar(1), Scalar(HEIGHT));

//------------------------- Set up the labels for the classes ---------------------------------

labels.rowRange( 0, NTRAINING_SAMPLES).setTo(1); // Class 1

labels.rowRange(NTRAINING_SAMPLES, 2*NTRAINING_SAMPLES).setTo(2); // Class 2

//------------------------ 2. Set up the support vector machines parameters --------------------

CvSVMParams params;

params.svm_type = SVM::C_SVC;

params.C = 0.1;

params.kernel_type = SVM::LINEAR;

params.term_crit = TermCriteria(CV_TERMCRIT_ITER, (int)1e7, 1e-6);

//------------------------ 3. Train the svm ----------------------------------------------------

cout << "Starting training process" << endl;

CvSVM svm;

svm.train(trainData, labels, Mat(), Mat(), params);

cout << "Finished training process" << endl;

//------------------------ 4. Show the decision regions ----------------------------------------

Vec3b green(0,100,0), blue (100,0,0);

for (int i = 0; i < I.rows; ++i)

for (int j = 0; j < I.cols; ++j)

{

Mat sampleMat = (Mat_<float>(1,2) << j, i); // sample is (x, y) = (column, row)

float response = svm.predict(sampleMat);

if (response == 1) I.at<Vec3b>(i, j) = green; // at(row, column)

else if (response == 2) I.at<Vec3b>(i, j) = blue;

}

//----------------------- 5. Show the training data --------------------------------------------

int thick = -1;

int lineType = 8;

float px, py;

// Class 1

for (int i = 0; i < NTRAINING_SAMPLES; ++i)

{

px = trainData.at<float>(i,0);

py = trainData.at<float>(i,1);

circle(I, Point( (int) px, (int) py ), 3, Scalar(0, 255, 0), thick, lineType);

}

// Class 2

for (int i = NTRAINING_SAMPLES; i <2*NTRAINING_SAMPLES; ++i)

{

px = trainData.at<float>(i,0);

py = trainData.at<float>(i,1);

circle(I, Point( (int) px, (int) py ), 3, Scalar(255, 0, 0), thick, lineType);

}

//------------------------- 6. Show support vectors --------------------------------------------

thick = 2;

lineType = 8;

int x = svm.get_support_vector_count();

for (int i = 0; i < x; ++i)

{

const float* v = svm.get_support_vector(i);

circle( I, Point( (int) v[0], (int) v[1]), 6, Scalar(128, 128, 128), thick, lineType);

}

imwrite("result.png", I); // save the Image

imshow("Linearly non-separable two-class problem", I); // show it to the user

waitKey(0);

}

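The optimal separating surface and the distribution of the training samples are shown below. Four sample points near the separating surface are treated as noise:

III. Linearly non-separable multi-class (three-class) problem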

#include <iostream>

#include <opencv2/core/core.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/ml/ml.hpp>

#include <cmath> // cos

#include <cfloat> // FLT_EPSILON

#define NTRAINING_SAMPLES 100 // Number of training samples per class

#define FRAC_LINEAR_SEP 0.9f // Fraction of samples which compose the linear separable part

using namespace cv;

using namespace std;

int main(int argc, char* argv[])

{

int size = 400; // height and width of image

const int s = 1000; // number of data

int i, j,sv_num;

IplImage* img;

CvSVM svm ;

CvSVMParams param;

CvTermCriteria criteria; // termination criteria

CvRNG rng = cvRNG();

CvPoint pts[s]; // the 1000 sample points

float data[s*2]; // point coordinates

int res[s]; // class label of each point

CvMat data_mat, res_mat;

CvScalar rcolor;

const float* support;

// Initialize the image

img = cvCreateImage(cvSize(size,size),IPL_DEPTH_8U,3);

cvZero(img);

// Generate the training data

for (i=0; i<s;++i)

{

pts[i].x = cvRandInt(&rng)%size;

pts[i].y = cvRandInt(&rng)%size;

if (pts[i].y>50*cos(pts[i].x*CV_PI/100)+200)

{

cvLine(img,cvPoint(pts[i].x-2,pts[i].y-2),cvPoint(pts[i].x+2,pts[i].y+2),CV_RGB(255,0,0));

cvLine(img,cvPoint(pts[i].x+2,pts[i].y-2),cvPoint(pts[i].x-2,pts[i].y+2),CV_RGB(255,0,0));

res[i]=1;

}

else

{

if (pts[i].x>200)

{

cvLine(img,cvPoint(pts[i].x-2,pts[i].y-2),cvPoint(pts[i].x+2,pts[i].y+2),CV_RGB(0,255,0));

cvLine(img,cvPoint(pts[i].x+2,pts[i].y-2),cvPoint(pts[i].x-2,pts[i].y+2),CV_RGB(0,255,0));

res[i]=2;

}

else

{

cvLine(img,cvPoint(pts[i].x-2,pts[i].y-2),cvPoint(pts[i].x+2,pts[i].y+2),CV_RGB(0,0,255));

cvLine(img,cvPoint(pts[i].x+2,pts[i].y-2),cvPoint(pts[i].x-2,pts[i].y+2),CV_RGB(0,0,255));

res[i]=3;

}

}

}

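// Display the training data

cvNamedWindow("SVM training samples and classes",CV_WINDOW_AUTOSIZE);

cvShowImage("SVM training samples and classes",img);

cvWaitKey(0);

// Build the training matrices (coordinates scaled to [0, 1))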

for (i=0;i<s;++i)

{

data[i*2] = float(pts[i].x)/size;

data[i*2+1] = float(pts[i].y)/size;

}

cvInitMatHeader(&data_mat,s,2,CV_32FC1,data);

cvInitMatHeader(&res_mat,s,1,CV_32SC1,res);

criteria = cvTermCriteria(CV_TERMCRIT_EPS,1000,FLT_EPSILON);

param = CvSVMParams(CvSVM::C_SVC,CvSVM::RBF,10.0,8.0,1.0,10.0,0.5,0.1,NULL,criteria);

svm.train(&data_mat,&res_mat,NULL,NULL,param);

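// Draw the result: classify every pixel (as a feature vector) and paint it in the dim color of its predicted class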

for (i=0;i<size;i++)

{

for (j=0;j<size;j++)

{

CvMat m;

float ret = 0.0;

float a[] = {float(j)/size,float(i)/size};

cvInitMatHeader(&m,1,2,CV_32FC1,a);

ret = svm.predict(&m);

switch((int)ret)

{

case 1:

rcolor = CV_RGB(100,0,0);

break;

case 2:

rcolor = CV_RGB(0,100,0);

break;

case 3:

rcolor = CV_RGB(0,0,100);

break;

}

cvSet2D(img,i,j,rcolor);

}

}

// Redraw the training samples on top of the class regions, each in the bright color of its own class

for(i=0;i<s;++i)

{

CvScalar rcolor;

switch(res[i])

{

case 1:

rcolor = CV_RGB(255,0,0);

break;

case 2:

rcolor = CV_RGB(0,255,0);

break;

case 3:

rcolor = CV_RGB(0,0,255);

break;

}

cvLine(img,cvPoint(pts[i].x-2,pts[i].y-2),cvPoint(pts[i].x+2,pts[i].y+2),rcolor);

cvLine(img,cvPoint(pts[i].x+2,pts[i].y-2),cvPoint(pts[i].x-2,pts[i].y+2),rcolor);

}

// Draw the support vectors

sv_num = svm.get_support_vector_count();

for (i=0; i<sv_num;++i)

{

support = svm.get_support_vector(i);

cvCircle(img,cvPoint((int)(support[0]*size),(int)(support[1]*size)),5,CV_RGB(200,200,200)); // y is support[1], not support[i]

}

cvNamedWindow("SVM",CV_WINDOW_AUTOSIZE);

cvShowImage("SVM",img); // same window name as created above and destroyed below

cvWaitKey(0);

cvDestroyWindow("SVM");

cvReleaseImage(&img);

return 0;

}

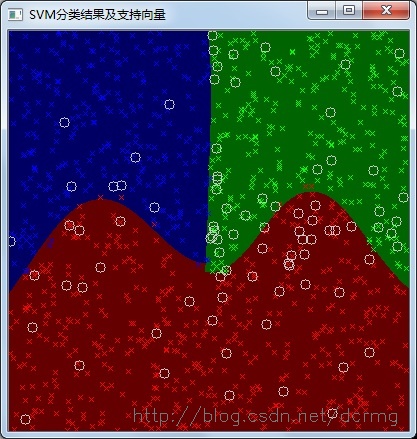

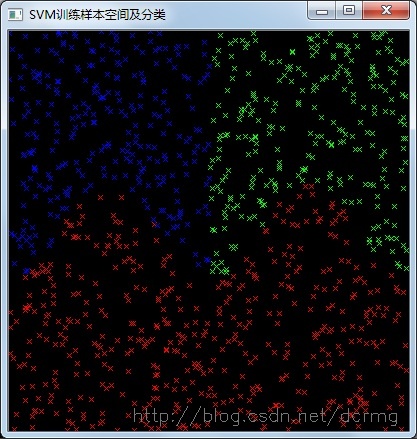

训练样本空间及分类:

SVM分类效果及支持向量:
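A common way to build a multi-class classifier out of two-class SVMs is one-vs-one voting: one binary classifier per pair of classes, and the class that wins the most pairwise decisions is the prediction. The text does not state how the OpenCV version used here handles the three-class case internally, so the sketch below is only an illustration of the idea. PairDecision and oneVsOneVote are hypothetical names.

```cpp
#include <map>
#include <vector>

// Outcome of one hypothetical pairwise classifier: a positive score means
// classA wins the pair, otherwise classB wins.
struct PairDecision {
    int classA;
    int classB;
    double score;
};

// Count one vote per pairwise decision and return the class with the most
// votes. Ties go to the smallest class label.
int oneVsOneVote(const std::vector<PairDecision>& decisions) {
    std::map<int, int> votes;
    for (const PairDecision& d : decisions)
        ++votes[d.score > 0.0 ? d.classA : d.classB];

    int best = -1;
    int bestVotes = -1;
    for (const auto& kv : votes) {  // std::map iterates in ascending label order
        if (kv.second > bestVotes) {
            best = kv.first;
            bestVotes = kv.second;
        }
    }
    return best;
}
```

With three classes there are three pairwise decisions (1 vs 2, 1 vs 3, 2 vs 3), and a class needs to win two of them to be chosen outright.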