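I had always run Spark from Java without any trouble. Today I ran Spark code written in Scala from Eclipse and hit a couple of errors, so I am recording them here.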

First, after importing the required jars, I clicked Run and got this error:

    Exception in thread "main" org.apache.spark.SparkException: A master URL must be set in your configuration
        at org.apache.spark.SparkContext.<init>(SparkContext.scala:185)
        at SparkDemo.SimpleApp$.main(SimpleApp.scala:13)
        at SparkDemo.SimpleApp.main(SimpleApp.scala)
        at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)
        at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.lang.reflect.Method.invoke(Method.java:606)
        at com.intellij.rt.execution.application.AppMain.main(AppMain.java:140)
    Using Spark's default log4j profile: org/apache/spark/log4j-defaults.propertie

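A Baidu search turned up a similar case with a fix for IntelliJ: in the IDE, click Run -> Edit Configuration and enter "-Dspark.master=local" in the VM options field on the right. This tells the program to run locally with a single thread.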

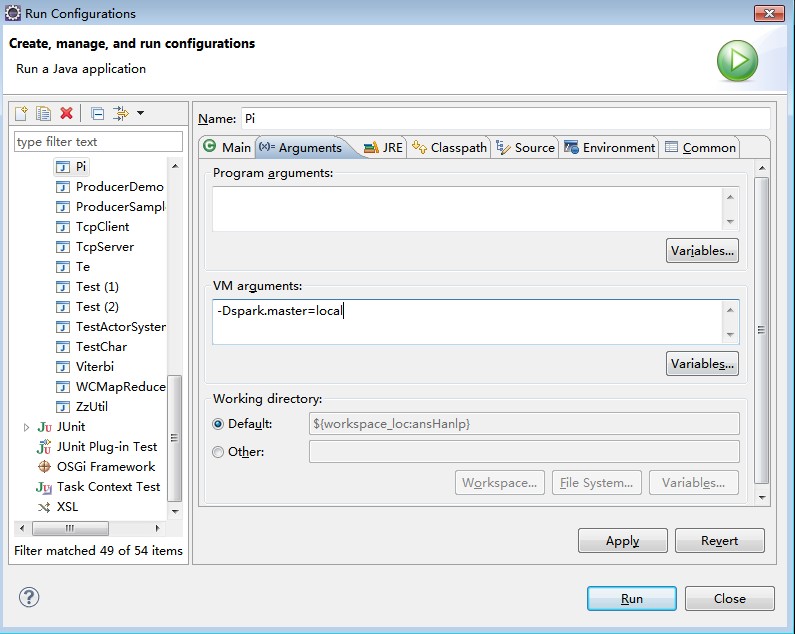

I am running in Eclipse, though, where the same idea should apply. In Eclipse, open Run -> Run Configuration, go to the Arguments tab, and add -Dspark.master=local under VM arguments.
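As an alternative to the IDE setting, the master can also be given a fallback in code. The following is only a minimal sketch, not the original SimpleApp: the object body and the sample job are assumptions for illustration. It relies on `new SparkConf()` loading `spark.*` JVM system properties, so a `-Dspark.master=...` argument still wins when it is supplied.

```scala
import org.apache.spark.{SparkConf, SparkContext}

// Hypothetical stand-in for the SimpleApp in the stack trace above.
object SimpleApp {
  def main(args: Array[String]): Unit = {
    // SparkConf() reads spark.* system properties such as -Dspark.master.
    val conf = new SparkConf().setAppName("SimpleApp")

    // Only fall back to local mode when no master was configured elsewhere.
    if (!conf.contains("spark.master")) {
      conf.setMaster("local")
    }

    val sc = new SparkContext(conf)
    try {
      // Tiny sample job (an assumption) to confirm the context starts.
      val total = sc.parallelize(1 to 100).reduce(_ + _)
      println(s"sum = $total")
    } finally {
      sc.stop()
    }
  }
}
```

Hardcoding a master is convenient in the IDE, but the conditional keeps the same jar usable with spark-submit, where the master is normally passed from outside.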

I ran it again, and it still failed:

    Exception in thread "main" java.lang.NoSuchMethodError: scala.collection.immutable.HashSet$.empty()Lscala/collection/immutable/HashSet;
        at akka.actor.ActorCell$.<init>(ActorCell.scala:336)
        at akka.actor.ActorCell$.<clinit>(ActorCell.scala)
        at akka.actor.RootActorPath.$div(ActorPath.scala:159)
        at akka.actor.LocalActorRefProvider.<init>(ActorRefProvider.scala:464)
        at akka.actor.LocalActorRefProvider.<init>(ActorRefProvider.scala:452)
        at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)
        at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:39)
        at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:27)

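The Spark version in the Eclipse project is 1.2.0, while the Scala runtime is 2.11. Spark 1.2.0 is built against Scala 2.10 by default, and Scala 2.10 and 2.11 are not binary compatible, so the Akka classes bundled with Spark fail to find the method they expect in the 2.11 library. Switching the Scala version that Eclipse compiles with to 2.10 resolves the problem.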
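To confirm which Scala library is actually on the classpath at run time, a small check like the one below can help. This is a sketch under assumptions: the object name, the `binaryVersion` helper, and the expected value "2.10" are mine, chosen to match the Spark 1.2.0 setup described above. It uses only `scala.util.Properties` from the standard library.

```scala
// Hypothetical helper: reports the Scala version the program is running on.
object ScalaVersionCheck {
  // Reduce a full version such as "2.11.8" to its binary version "2.11".
  def binaryVersion(full: String): String =
    full.split('.').take(2).mkString(".")

  def main(args: Array[String]): Unit = {
    // Version of the scala-library jar actually loaded by the JVM.
    val running = binaryVersion(scala.util.Properties.versionNumberString)
    // Assumption: the Spark jars in the project were built for Scala 2.10.
    val expected = "2.10"

    if (running == expected) {
      println(s"Scala $running matches the version Spark was built for")
    } else {
      println(s"Scala $running is on the classpath, but Spark expects $expected")
    }
  }
}
```

Running this with the same Run Configuration as the Spark program shows whether the Eclipse project is still picking up the 2.11 library after the compiler setting has been changed.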