HttpClient is a HTTP/1.1 compliant HTTP agent implementation based on HttpCore.

It also provides reusable components for client-side authentication, HTTP state management, and HTTP connection management.

http://hc.apache.org/index.html

为什么使用HttpClient连接池?

使用连接池的好处主要有

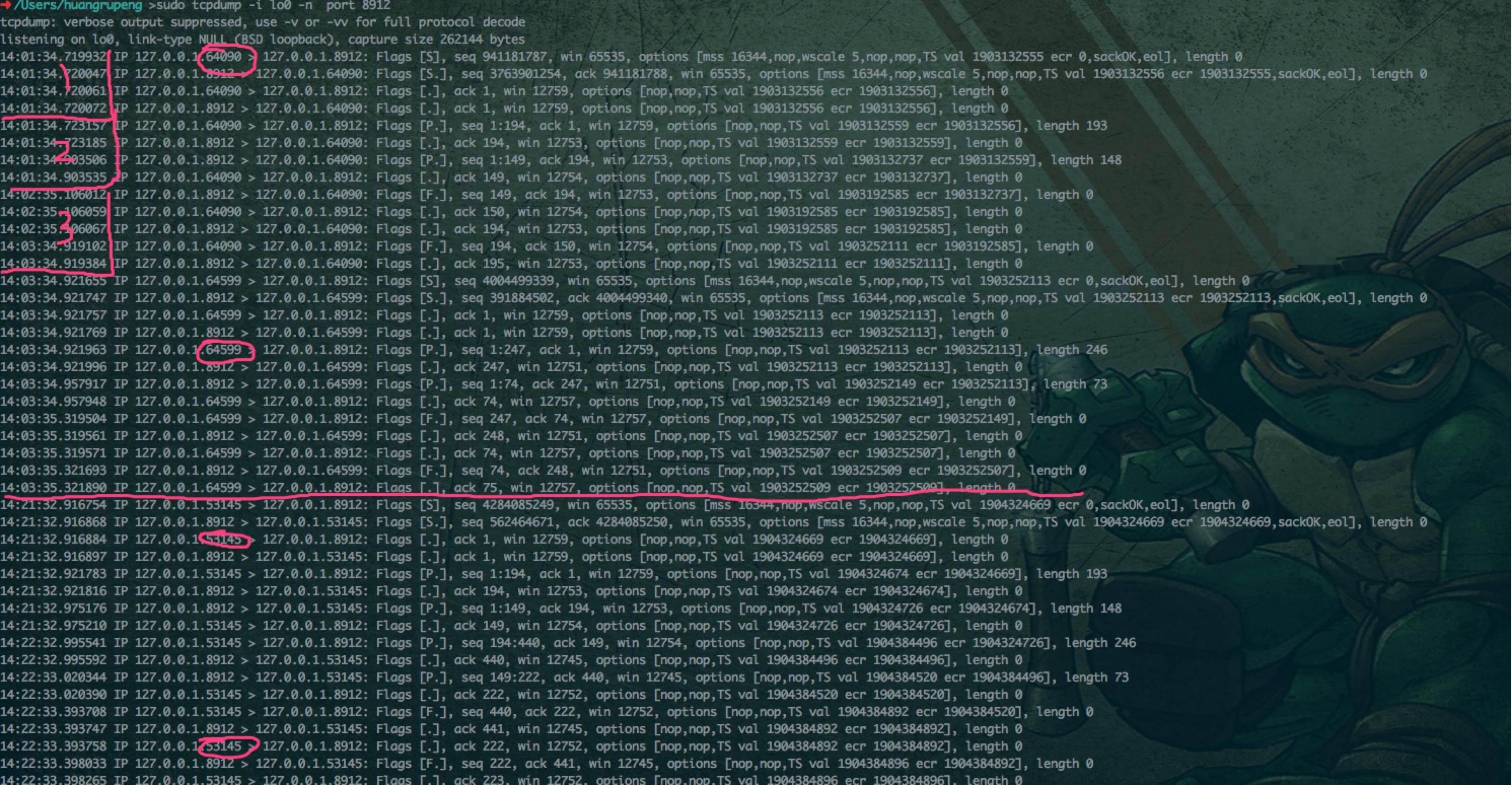

在 keep-alive 时间内,可以使用同一个 tcp 连接发起多次 http 请求。

如果不使用连接池,在大并发的情况下,每次连接都会打开一个端口,使系统资源很快耗尽,无法建立新的连接,可以限定最多打开的端口数。

我的理解是连接池内的连接数其实就是可以同时创建多少个 tcp 连接,httpClient 维护着两个 Set,leased(被占用的连接集合) 和 avaliabled(可用的连接集合) 两个集合,释放连接就是将被占用连接放到可用连接里面。

什么是 Keep-Alive

HTTP1.1 默认启用 Keep-Alive,我们的 client(如浏览器)访问 http-server(比如 tomcat/nginx/apache)连接,其实就是发起一次 tcp 连接,要经历连接三次握手,关闭四次握手,在一次连接里面,可以发起多个 http 请求。

如果没有 Keep-Alive,每次 http 请求都要发起一次 tcp 连接。

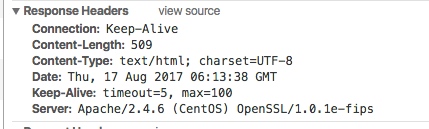

下图是 apache-server 一次 http 请求返回的响应头,可以看到连接方式就是 Keep-Alive,另外还有 Keep-Alive 头,

其中timeout=5 5s 之内如果没有发起新的 http 请求,服务器将断开这次 tcp 连接,如果发起新的请求,断开连接时间将继续刷新为 5s

max=100 的意思在这次 tcp 连接之内,最多允许发送 100 次 http 请求,100 次之后,即使在 timeout 时间之内发起新的请求,服务器依然会断开这次 tcp 连接

对同一个 httpClient 多次调用如下方法之后,并没有释放连接,最终导致了下面的问题:

解决办法:

方法1:每次使用过HttpClient后,就关闭连接。

方法2:使用HttpClient连接池,具体的组件就是PoolingHttpClientConnectionManager

"pool-3-thread-4@10651" prio=5 tid=0x4f nid=NA waiting

java.lang.Thread.State: WAITING

at sun.misc.Unsafe.park(Unsafe.java:-1)

at java.util.concurrent.locks.LockSupport.park(LockSupport.java:175)

at java.util.concurrent.locks.AbstractQueuedSynchronizer$ConditionObject.await(AbstractQueuedSynchronizer.java:2039)

at org.apache.http.pool.AbstractConnPool.getPoolEntryBlocking(AbstractConnPool.java:377)

at org.apache.http.pool.AbstractConnPool.access$200(AbstractConnPool.java:67)

at org.apache.http.pool.AbstractConnPool$2.get(AbstractConnPool.java:243)

- locked <0x2d1c> (a org.apache.http.pool.AbstractConnPool$2)

at org.apache.http.pool.AbstractConnPool$2.get(AbstractConnPool.java:191)

at org.apache.http.impl.conn.PoolingHttpClientConnectionManager.leaseConnection(PoolingHttpClientConnectionManager.java:282)

at org.apache.http.impl.conn.PoolingHttpClientConnectionManager$1.get(PoolingHttpClientConnectionManager.java:269)

at org.apache.http.impl.execchain.MainClientExec.execute(MainClientExec.java:191)

at org.apache.http.impl.execchain.ProtocolExec.execute(ProtocolExec.java:185)

at org.apache.http.impl.execchain.RetryExec.execute(RetryExec.java:89)

at org.apache.http.impl.execchain.RedirectExec.execute(RedirectExec.java:111)

at org.apache.http.impl.client.InternalHttpClient.doExecute(InternalHttpClient.java:185)

at org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:83)

at org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:108)

at org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:56)

下面报错的原因:使用了连接池,但连接不够用,造成大量等待。等待时间超过RequestConfig的ConnectionRequestTimeout就会抛下面的异常

如果没有设置ConnectionRequestTimeout参数(连接不够用时等待超时时间),当连接池连接不够用,就会线程阻塞。因此一定要设置,设置后,不会阻塞线程,而是直接抛异常

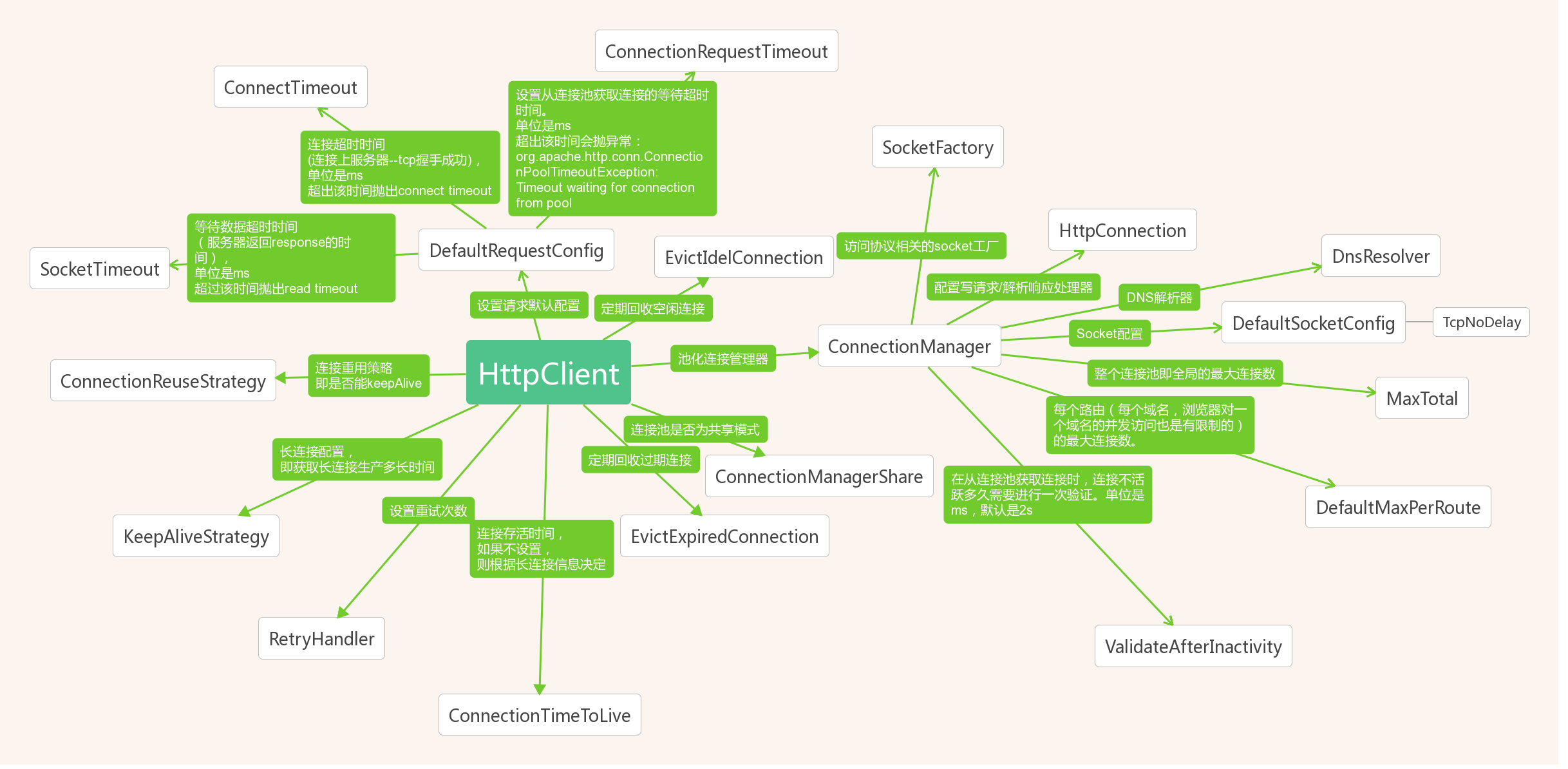

connectionRequestTimeout:从连接池中获取连接的超时时间,超过该时间未拿到可用连接,会抛出org.apache.http.conn.ConnectionPoolTimeoutException: Timeout waiting for connection from pool connectTimeout:连接上服务器(握手成功)的时间,超出该时间抛出connect timeout socketTimeout:服务器返回数据(response)的时间,超过该时间抛出read timeout 通过打断点的方式我们知道,HttpClients在我们没有指定连接工厂的时候默认使用的是连接池工厂org.apache.http.impl.conn.PoolingHttpClientConnectionManager.PoolingHttpClientConnectionManager(Registry<ConnectionSocketFactory>),

所以我们需要配置一下从连接池获取连接池的超时时间。

以上3个超时相关的参数如果未配置,默认为-1,意味着无限大,就是一直阻塞等待!

作者:烫烫烫烫烫烫烫烫烫烫烫烫烫烫烫

链接:https://www.jianshu.com/p/f38a62efaa96

來源:简书

著作权归作者所有。商业转载请联系作者获得授权,非商业转载请注明出处。

org.apache.http.conn.ConnectionPoolTimeoutException: Timeout waiting for connection from pool at org.apache.http.impl.conn.PoolingHttpClientConnectionManager.leaseConnection(PoolingHttpClientConnectionManager.java:292) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.conn.PoolingHttpClientConnectionManager$1.get(PoolingHttpClientConnectionManager.java:269) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.execchain.MainClientExec.execute(MainClientExec.java:191) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.execchain.ProtocolExec.execute(ProtocolExec.java:185) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.execchain.RetryExec.execute(RetryExec.java:89) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.execchain.RedirectExec.execute(RedirectExec.java:111) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.client.InternalHttpClient.doExecute(InternalHttpClient.java:185) ~[httpclient-4.5.3.jar!/:4.5.3] at org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:83) ~[httpclient-4.5.3.jar!/:4.5.3] at com.hujiang.career.databank.spider.download.CustomHttpClientDownloader.download(CustomHttpClientDownloader.java:88) ~[classes!/:1.0.0] at us.codecraft.webmagic.Spider.processRequest(Spider.java:404) [webmagic-core-0.7.3.jar!/:na] at us.codecraft.webmagic.Spider.access$000(Spider.java:61) [webmagic-core-0.7.3.jar!/:na] at us.codecraft.webmagic.Spider$1.run(Spider.java:320) [webmagic-core-0.7.3.jar!/:na] at us.codecraft.webmagic.thread.CountableThreadPool$1.run(CountableThreadPool.java:74) [webmagic-core-0.7.3.jar!/:na] at java.util.concurrent.ThreadPoolExecutor.runWorker(Unknown Source) [na:1.8.0_144] at java.util.concurrent.ThreadPoolExecutor$Worker.run(Unknown Source) [na:1.8.0_144] at java.lang.Thread.run(Unknown Source) [na:1.8.0_144]

相对BasicHttpClientConnectionManager来说,PoolingHttpClientConnectionManager是个更复杂的类,它管理着连接池,可以同时为很多线程提供http连接请求。

Connections are pooled on a per route basis.

当请求一个新的连接时,如果连接池有有可用的持久连接,连接管理器就会使用其中的一个,而不是再创建一个新的连接。

《亿级流量网站构架核心技术》 P236

import org.apache.http.client.config.RequestConfig; import org.apache.http.config.Registry; import org.apache.http.config.RegistryBuilder; import org.apache.http.config.SocketConfig; import org.apache.http.conn.DnsResolver; import org.apache.http.conn.HttpConnectionFactory; import org.apache.http.conn.ManagedHttpClientConnection; import org.apache.http.conn.routing.HttpRoute; import org.apache.http.conn.socket.ConnectionSocketFactory; import org.apache.http.conn.socket.PlainConnectionSocketFactory; import org.apache.http.conn.ssl.SSLConnectionSocketFactory; import org.apache.http.impl.DefaultConnectionReuseStrategy; import org.apache.http.impl.client.*; import org.apache.http.impl.conn.DefaultHttpResponseParserFactory; import org.apache.http.impl.conn.ManagedHttpClientConnectionFactory; import org.apache.http.impl.conn.PoolingHttpClientConnectionManager; import org.apache.http.impl.conn.SystemDefaultDnsResolver; import org.apache.http.impl.io.DefaultHttpRequestWriterFactory; import org.slf4j.Logger; import org.slf4j.LoggerFactory; import org.springframework.context.annotation.Bean; import org.springframework.context.annotation.Configuration; import org.springframework.http.client.ClientHttpRequestFactory; import org.springframework.http.client.HttpComponentsClientHttpRequestFactory; import org.springframework.web.client.RestTemplate; import java.io.IOException; import java.util.concurrent.TimeUnit; /** * Created by tang.cheng on 2016/10/19. */ @Configuration public class RestTemplateConfig { private static final Logger LOGGER = LoggerFactory.getLogger(RestTemplateConfig.class); @Bean public RestTemplate restTemplate() { ClientHttpRequestFactory requestFactory = new HttpComponentsClientHttpRequestFactory(getHttpClient()); return new RestTemplate(requestFactory); } //httpclient 4.5.2使用连接池的经典配置 private CloseableHttpClient getHttpClient() { //注册访问协议相关的Socket工厂 Registry<ConnectionSocketFactory> socketFactoryRegistry = RegistryBuilder.<ConnectionSocketFactory>create() .register("http", PlainConnectionSocketFactory.INSTANCE) .register("https", SSLConnectionSocketFactory.getSocketFactory()) .build(); //HttpConnectionFactory:配置写请求/解析响应处理器 HttpConnectionFactory<HttpRoute, ManagedHttpClientConnection> connectionFactory = new ManagedHttpClientConnectionFactory( DefaultHttpRequestWriterFactory.INSTANCE, DefaultHttpResponseParserFactory.INSTANCE ); //DNS解析器 DnsResolver dnsResolver = SystemDefaultDnsResolver.INSTANCE; //创建连接池管理器 PoolingHttpClientConnectionManager manager = new PoolingHttpClientConnectionManager(socketFactoryRegistry, connectionFactory, dnsResolver); //设置默认的socket参数 manager.setDefaultSocketConfig(SocketConfig.custom().setTcpNoDelay(true).build()); manager.setMaxTotal(300);//设置最大连接数。高于这个值时,新连接请求,需要阻塞,排队等待 //路由是对MaxTotal的细分。 // 每个路由实际最大连接数默认值是由DefaultMaxPerRoute控制。 // MaxPerRoute设置的过小,无法支持大并发:ConnectionPoolTimeoutException:Timeout waiting for connection from pool manager.setDefaultMaxPerRoute(200);//每个路由的最大连接 manager.setValidateAfterInactivity(5 * 1000);//在从连接池获取连接时,连接不活跃多长时间后需要进行一次验证,默认为2s //配置默认的请求参数 RequestConfig defaultRequestConfig = RequestConfig.custom() .setConnectTimeout(2 * 1000)//连接超时设置为2s .setSocketTimeout(5 * 1000)//等待数据超时设置为5s .setConnectionRequestTimeout(2 * 1000)//从连接池获取连接的等待超时时间设置为2s // .setProxy(new Proxy(Proxy.Type.HTTP, new InetSocketAddress("192.168.0.2", 1234))) //设置代理 .build(); CloseableHttpClient closeableHttpClient = HttpClients.custom() .setConnectionManager(manager) .setConnectionManagerShared(false)//连接池不是共享模式,这个共享是指与其它httpClient是否共享 .evictIdleConnections(60, TimeUnit.SECONDS)//定期回收空闲连接 .evictExpiredConnections()//回收过期连接 .setConnectionTimeToLive(60, TimeUnit.SECONDS)//连接存活时间,如果不设置,则根据长连接信息决定 .setDefaultRequestConfig(defaultRequestConfig)//设置默认的请求参数 .setConnectionReuseStrategy(DefaultConnectionReuseStrategy.INSTANCE)//连接重用策略,即是否能keepAlive .setKeepAliveStrategy(DefaultConnectionKeepAliveStrategy.INSTANCE)//长连接配置,即获取长连接生产多长时间 .setRetryHandler(new DefaultHttpRequestRetryHandler(0, false))//设置重试次数,默认为3次;当前是禁用掉 .build(); /** *JVM停止或重启时,关闭连接池释放掉连接 */ Runtime.getRuntime().addShutdownHook(new Thread() { @Override public void run() { try { LOGGER.info("closing http client"); closeableHttpClient.close(); LOGGER.info("http client closed"); } catch (IOException e) { LOGGER.error(e.getMessage(), e); } } }); return closeableHttpClient; } }

1.1.5. Ensuring release of low level resources

In order to ensure proper release of system resources one must close either the content stream associated with the entity or the response itself

CloseableHttpClient httpclient = HttpClients.createDefault(); HttpGet httpget = new HttpGet("http://localhost/"); CloseableHttpResponse response = httpclient.execute(httpget); try { HttpEntity entity = response.getEntity(); if (entity != null) { InputStream instream = entity.getContent(); try { // do something useful } finally { instream.close(); } } } finally { response.close(); }

The difference between closing the content stream and closing the response is that the former will attempt to keep the underlying connection alive by consuming the entity content while the latter immediately shuts down and discards the connection.

closing the content stream : 关闭内容连接,尝试通过使用实体内容来保持底层连接的活动

closing the response : 关闭并废弃掉连接

Please note that the HttpEntity#writeTo(OutputStream) method is also required to ensure proper release of system resources once the entity has been fully written out. If this method obtains an instance of java.io.InputStream by calling HttpEntity#getContent(), it is also expected to close the stream in a finally clause.

When working with streaming entities, one can use the EntityUtils#consume(HttpEntity) method to ensure that the entity content has been fully consumed and the underlying stream has been closed.

There can be situations, however, when only a small portion of the entire response content needs to be retrieved and the performance penalty for consuming the remaining content and making the connection reusable is too high, in which case one can terminate the content stream by closing the response.

CloseableHttpClient httpclient = HttpClients.createDefault(); HttpGet httpget = new HttpGet("http://localhost/"); CloseableHttpResponse response = httpclient.execute(httpget); try { HttpEntity entity = response.getEntity(); if (entity != null) { InputStream instream = entity.getContent(); int byteOne = instream.read(); int byteTwo = instream.read(); // Do not need the rest } } finally { response.close(); }

try (CloseableHttpResponse res = HttpClientUtil.getHttpClient().execute(post)) { // 输出 response = JSON.parseObject(EntityUtils.toString(res.getEntity()), UploadMediaResponse.class); } catch (Exception e) { log.error(e.getMessage(), e); }

The connection will not be reused, but all level resources held by it will be correctly deallocated.

https://hc.apache.org/httpcomponents-client-ga/tutorial/html/fundamentals.html#d5e206

httpclient参数配置 https://segmentfault.com/a/1190000010771138

http://hc.apache.org/httpcomponents-client-ga/examples.html

/* http://hc.apache.org/httpcomponents-client-4.5.x/httpclient/examples/org/apache/http/examples/client/ClientConfiguration.java */ package org.apache.http.examples.client; import java.net.InetAddress; import java.net.UnknownHostException; import java.nio.charset.CodingErrorAction; import java.util.Arrays; import javax.net.ssl.SSLContext; import org.apache.http.Consts; import org.apache.http.Header; import org.apache.http.HttpHost; import org.apache.http.HttpRequest; import org.apache.http.HttpResponse; import org.apache.http.ParseException; import org.apache.http.client.CookieStore; import org.apache.http.client.CredentialsProvider; import org.apache.http.client.config.AuthSchemes; import org.apache.http.client.config.CookieSpecs; import org.apache.http.client.config.RequestConfig; import org.apache.http.client.methods.CloseableHttpResponse; import org.apache.http.client.methods.HttpGet; import org.apache.http.client.protocol.HttpClientContext; import org.apache.http.config.ConnectionConfig; import org.apache.http.config.MessageConstraints; import org.apache.http.config.Registry; import org.apache.http.config.RegistryBuilder; import org.apache.http.config.SocketConfig; import org.apache.http.conn.DnsResolver; import org.apache.http.conn.HttpConnectionFactory; import org.apache.http.conn.ManagedHttpClientConnection; import org.apache.http.conn.routing.HttpRoute; import org.apache.http.conn.socket.ConnectionSocketFactory; import org.apache.http.conn.socket.PlainConnectionSocketFactory; import org.apache.http.conn.ssl.SSLConnectionSocketFactory; import org.apache.http.impl.DefaultHttpResponseFactory; import org.apache.http.impl.client.BasicCookieStore; import org.apache.http.impl.client.BasicCredentialsProvider; import org.apache.http.impl.client.CloseableHttpClient; import org.apache.http.impl.client.HttpClients; import org.apache.http.impl.conn.DefaultHttpResponseParser; import org.apache.http.impl.conn.DefaultHttpResponseParserFactory; import org.apache.http.impl.conn.ManagedHttpClientConnectionFactory; import org.apache.http.impl.conn.PoolingHttpClientConnectionManager; import org.apache.http.impl.conn.SystemDefaultDnsResolver; import org.apache.http.impl.io.DefaultHttpRequestWriterFactory; import org.apache.http.io.HttpMessageParser; import org.apache.http.io.HttpMessageParserFactory; import org.apache.http.io.HttpMessageWriterFactory; import org.apache.http.io.SessionInputBuffer; import org.apache.http.message.BasicHeader; import org.apache.http.message.BasicLineParser; import org.apache.http.message.LineParser; import org.apache.http.ssl.SSLContexts; import org.apache.http.util.CharArrayBuffer; import org.apache.http.util.EntityUtils; /** * This example demonstrates how to customize and configure the most common aspects * of HTTP request execution and connection management. */ public class ClientConfiguration { public final static void main(String[] args) throws Exception { // Use custom message parser / writer to customize the way HTTP // messages are parsed from and written out to the data stream. HttpMessageParserFactory<HttpResponse> responseParserFactory = new DefaultHttpResponseParserFactory() { @Override public HttpMessageParser<HttpResponse> create( SessionInputBuffer buffer, MessageConstraints constraints) { LineParser lineParser = new BasicLineParser() { @Override public Header parseHeader(final CharArrayBuffer buffer) { try { return super.parseHeader(buffer); } catch (ParseException ex) { return new BasicHeader(buffer.toString(), null); } } }; return new DefaultHttpResponseParser( buffer, lineParser, DefaultHttpResponseFactory.INSTANCE, constraints) { @Override protected boolean reject(final CharArrayBuffer line, int count) { // try to ignore all garbage preceding a status line infinitely return false; } }; } }; HttpMessageWriterFactory<HttpRequest> requestWriterFactory = new DefaultHttpRequestWriterFactory(); // Use a custom connection factory to customize the process of // initialization of outgoing HTTP connections. Beside standard connection // configuration parameters HTTP connection factory can define message // parser / writer routines to be employed by individual connections. HttpConnectionFactory<HttpRoute, ManagedHttpClientConnection> connFactory = new ManagedHttpClientConnectionFactory( requestWriterFactory, responseParserFactory); // Client HTTP connection objects when fully initialized can be bound to // an arbitrary network socket. The process of network socket initialization, // its connection to a remote address and binding to a local one is controlled // by a connection socket factory. // SSL context for secure connections can be created either based on // system or application specific properties. SSLContext sslcontext = SSLContexts.createSystemDefault(); // Create a registry of custom connection socket factories for supported // protocol schemes. Registry<ConnectionSocketFactory> socketFactoryRegistry = RegistryBuilder.<ConnectionSocketFactory>create() .register("http", PlainConnectionSocketFactory.INSTANCE) .register("https", new SSLConnectionSocketFactory(sslcontext)) .build(); // Use custom DNS resolver to override the system DNS resolution. DnsResolver dnsResolver = new SystemDefaultDnsResolver() { @Override public InetAddress[] resolve(final String host) throws UnknownHostException { if (host.equalsIgnoreCase("myhost")) { return new InetAddress[] { InetAddress.getByAddress(new byte[] {127, 0, 0, 1}) }; } else { return super.resolve(host); } } }; // Create a connection manager with custom configuration. PoolingHttpClientConnectionManager connManager = new PoolingHttpClientConnectionManager( socketFactoryRegistry, connFactory, dnsResolver); // Create socket configuration SocketConfig socketConfig = SocketConfig.custom() .setTcpNoDelay(true) .build(); // Configure the connection manager to use socket configuration either // by default or for a specific host. connManager.setDefaultSocketConfig(socketConfig); connManager.setSocketConfig(new HttpHost("somehost", 80), socketConfig); // Validate connections after 1 sec of inactivity connManager.setValidateAfterInactivity(1000); // Create message constraints MessageConstraints messageConstraints = MessageConstraints.custom() .setMaxHeaderCount(200) .setMaxLineLength(2000) .build(); // Create connection configuration ConnectionConfig connectionConfig = ConnectionConfig.custom() .setMalformedInputAction(CodingErrorAction.IGNORE) .setUnmappableInputAction(CodingErrorAction.IGNORE) .setCharset(Consts.UTF_8) .setMessageConstraints(messageConstraints) .build(); // Configure the connection manager to use connection configuration either // by default or for a specific host. connManager.setDefaultConnectionConfig(connectionConfig); connManager.setConnectionConfig(new HttpHost("somehost", 80), ConnectionConfig.DEFAULT); // Configure total max or per route limits for persistent connections // that can be kept in the pool or leased by the connection manager. connManager.setMaxTotal(100); connManager.setDefaultMaxPerRoute(10); connManager.setMaxPerRoute(new HttpRoute(new HttpHost("somehost", 80)), 20); // Use custom cookie store if necessary. CookieStore cookieStore = new BasicCookieStore(); // Use custom credentials provider if necessary. CredentialsProvider credentialsProvider = new BasicCredentialsProvider(); // Create global request configuration RequestConfig defaultRequestConfig = RequestConfig.custom() .setCookieSpec(CookieSpecs.DEFAULT) .setExpectContinueEnabled(true) .setTargetPreferredAuthSchemes(Arrays.asList(AuthSchemes.NTLM, AuthSchemes.DIGEST)) .setProxyPreferredAuthSchemes(Arrays.asList(AuthSchemes.BASIC)) .build(); // Create an HttpClient with the given custom dependencies and configuration. CloseableHttpClient httpclient = HttpClients.custom() .setConnectionManager(connManager) .setDefaultCookieStore(cookieStore) .setDefaultCredentialsProvider(credentialsProvider) .setProxy(new HttpHost("myproxy", 8080)) .setDefaultRequestConfig(defaultRequestConfig) .build(); try { HttpGet httpget = new HttpGet("http://httpbin.org/get"); // Request configuration can be overridden at the request level. // They will take precedence over the one set at the client level. RequestConfig requestConfig = RequestConfig.copy(defaultRequestConfig) .setSocketTimeout(5000) .setConnectTimeout(5000) .setConnectionRequestTimeout(5000) .setProxy(new HttpHost("myotherproxy", 8080)) .build(); httpget.setConfig(requestConfig); // Execution context can be customized locally. HttpClientContext context = HttpClientContext.create(); // Contextual attributes set the local context level will take // precedence over those set at the client level. context.setCookieStore(cookieStore); context.setCredentialsProvider(credentialsProvider); System.out.println("executing request " + httpget.getURI()); CloseableHttpResponse response = httpclient.execute(httpget, context); try { System.out.println("----------------------------------------"); System.out.println(response.getStatusLine()); System.out.println(EntityUtils.toString(response.getEntity())); System.out.println("----------------------------------------"); // Once the request has been executed the local context can // be used to examine updated state and various objects affected // by the request execution. // Last executed request context.getRequest(); // Execution route context.getHttpRoute(); // Target auth state context.getTargetAuthState(); // Proxy auth state context.getTargetAuthState(); // Cookie origin context.getCookieOrigin(); // Cookie spec used context.getCookieSpec(); // User security token context.getUserToken(); } finally { response.close(); } } finally { httpclient.close(); } } }

一个HttpClient的demo:

public class HttpClientTests { static PoolingHttpClientConnectionManager cm; static { cm = new PoolingHttpClientConnectionManager(); cm.setMaxTotal(20); // 最大连接数 cm.setDefaultMaxPerRoute(cm.getMaxTotal()); } HttpClient client; @Test public void testClient() throws Exception { CookieStore cookieStore = new BasicCookieStore(); RequestConfig requestConfig = RequestConfig.custom() .setConnectTimeout(3 * 1000) // 请求超时时间 .setSocketTimeout(60 * 1000) // 等待数据超时时间 .setConnectionRequestTimeout(500). // 连接超时时间 build(); client = HttpClients.custom() .setConnectionManager(cm) .setDefaultRequestConfig(requestConfig) .setDefaultCookieStore(cookieStore) .setKeepAliveStrategy((response, context) -> { HeaderElementIterator it = new BasicHeaderElementIterator( response.headerIterator(HTTP.CONN_KEEP_ALIVE)); while (it.hasNext()) { HeaderElement he = it.nextElement(); String param = he.getName(); String value = he.getValue(); if (value != null && param.equalsIgnoreCase("timeout")) { try { return Long.parseLong(value) * 1000; } catch (NumberFormatException ignore) { } } } return 5 * 1000; // 设置 Keep-alive 时间为 5s }) .build(); URIBuilder builder = new URIBuilder(); builder.setScheme("http").setHost("localhost:8912").setPath("login"); builder.addParameter("username", "dahuang"); builder.addParameter("password", "dahuang123"); HttpGet get = new HttpGet(builder.build()); HttpResponse response = client.execute(get); Thread.sleep(120 * 1000); // 线程休眠时间 HttpResponse response1 = client.execute(get); }

// 维持登录状态 CookieStore cookieStore = new BasicCookieStore(); HttpClient httpClient = HttpClientBuilder.create().setDefaultCookieStore(cookieStore).build(); // 多次调用 HttpGet get = new HttpGet("http://localhost:8912/hello"); HttpResponse response = httpClient.execute(get);

使用 httpclient 连接池及注意事项 http://hrps.me/2017/08/14/java-httpclient/

HttpClient连接池的连接保持、超时和失效机制

HTTP是一种无连接的 事务协议 ,底层使用的还是TCP,连接池复用的就是TCP连接,目的就是在一个TCP连接上进行多次的HTTP请求从而提高性能。每次HTTP请求结束的时候,HttpClient会判断连接是否可以保持,如果可以则交给连接管理器进行管理以备下次重用,否则直接关闭连接。这里涉及到三个问题:

1、如何判断连接是否可以保持?

要想保持连接,首先客户端需要告诉服务器希望保持长连接,这就是所谓的Keep-Alive模式(又称持久连接,连接重用),

HTTP1.0中默认是关闭的,需要在HTTP头加入"Connection: Keep-Alive",才能启用Keep-Alive;

HTTP1.1中默认启用Keep-Alive,加入"Connection: close ",才关闭。

但客户端设置了Keep-Alive并不能保证连接就可以保持,这里情况比较复。要想在一个TCP上进行多次的HTTP会话,关键是如何判断一次HTTP会话结束了?非Keep-Alive模式下可以使用EOF(-1)来判断,但Keep-Alive时服务器不会自动断开连接,有两种最常见的方式。

使用Conent-Length

顾名思义,Conent-Length表示实体内容长度,客户端(服务器)可以根据这个值来判断数据是否接收完成。当请求的资源是静态的页面或图片,服务器很容易知道内容的大小,但如果遇到动态的内容,或者文件太大想多次发送怎么办?

使用Transfer-Encoding

当需要一边产生数据,一边发给客户端,服务器就需要使用 Transfer-Encoding: chunked 这样的方式来代替 Content-Length,Chunk编码将数据分成一块一块的发送。它由若干个Chunk串连而成,以一个标明长度为0 的chunk标示结束。每个Chunk分为头部和正文两部分,头部内容指定正文的字符总数(十六进制的数字 )和数量单位(一般不写),正文部分就是指定长度的实际内容,两部分之间用回车换行(CRLF) 隔开。在最后一个长度为0的Chunk中的内容是称为footer的内容,是一些附加的Header信息。

对于如何判断消息实体的长度,实际情况还要复杂的多,可以参考这篇文章:https://zhanjindong.com/2015/05/08/http-keep-alive-header

总结下HttpClient如何判断连接是否保持:

检查返回response报文头的Transfer-Encoding字段,若该字段值存在且不为chunked,则连接不保持,直接关闭。

检查返回的response报文头的Content-Length字段,若该字段值为空或者格式不正确(多个长度,值不是整数),则连接不保持,直接关闭。

检查返回的response报文头的Connection字段(若该字段不存在,则为Proxy-Connection字段)值:

如果这俩字段都不存在,则1.1版本默认为保持, 1.0版本默认为连接不保持,直接关闭。

如果字段存在,若字段值为close 则连接不保持,直接关闭;若字段值为keep-alive则连接标记为保持。

2、 保持多长时间?

保持时间计时开始时间为连接交换至连接池的时间。 保持时长计算规则为:获取response中 Keep-Alive字段中timeout值,若该存在,则保持时间为 timeout值*1000,单位毫秒。若不存在,则连接保持时间设置为-1,表示为无穷。

3、保持过程中如何保证连接没有失效?

很难保证。传统阻塞I/O模型,只有当I/O操做的时候,socket才能响应I/O事件。当TCP连接交给连接管理器后,它可能还处于“保持连接”的状态,但是无法监听socket状态和响应I/O事件。如果这时服务器将连接关闭的话,客户端是没法知道这个状态变化的,从而也无法采取适当的手段来关闭连接。

针对这种情况,HttpClient采取一个策略,通过一个后台的监控线程定时的去检查连接池中连接是否还“新鲜”,如果过期了,或者空闲了一定时间则就将其从连接池里删除掉。ClientConnectionManager提供了 closeExpiredConnections和closeIdleConnections两个方法。

参考文章

引申阅读

http://www.cnblogs.com/zhanjindong/p/httpclient-connection-pool.html

2.1.持久连接

两个主机建立连接的过程是很复杂的一个过程,涉及到多个数据包的交换,并且也很耗时间。Http连接需要的三次握手开销很大,这一开销对于比较小的http消息来说更大。但是如果我们直接使用已经建立好的http连接,这样花费就比较小,吞吐率更大。

HTTP/1.1默认就支持Http连接复用。兼容HTTP/1.0的终端也可以通过声明来保持连接,实现连接复用。HTTP代理也可以在一定时间内保持连接不释放,方便后续向这个主机发送http请求。这种保持连接不释放的情况实际上是建立的持久连接。HttpClient也支持持久连接。

2.2.HTTP连接路由

HttpClient既可以直接、又可以通过多个中转路由(hops)和目标服务器建立连接。HttpClient把路由分为三种plain(明文 ),tunneled(隧道)和layered(分层)。隧道连接中使用的多个中间代理被称作代理链。

客户端直接连接到目标主机或者只通过了一个中间代理,这种就是Plain路由。客户端通过第一个代理建立连接,通过代理链tunnelling,这种情况就是Tunneled路由。不通过中间代理的路由不可能时tunneled路由。客户端在一个已经存在的连接上进行协议分层,这样建立起来的路由就是layered路由。协议只能在隧道—>目标主机,或者直接连接(没有代理),这两种链路上进行分层。

2.2.1.路由计算

RouteInfo接口包含了数据包发送到目标主机过程中,经过的路由信息。HttpRoute类继承了RouteInfo接口,是RouteInfo的具体实现,这个类是不允许修改的。HttpTracker类也实现了RouteInfo接口,它是可变的,HttpClient会在内部使用这个类来探测到目标主机的剩余路由。HttpRouteDirector是个辅助类,可以帮助计算数据包的下一步路由信息。这个类也是在HttpClient内部使用的。

HttpRoutePlanner接口可以用来表示基于http上下文情况下,客户端到服务器的路由计算策略。HttpClient有两个HttpRoutePlanner的实现类。SystemDefaultRoutePlanner这个类基于java.net.ProxySelector,它默认使用jvm的代理配置信息,这个配置信息一般来自系统配置或者浏览器配置。DefaultProxyRoutePlanner这个类既不使用java本身的配置,也不使用系统或者浏览器的配置。它通常通过默认代理来计算路由信息。

2.2.2. 安全的HTTP连接

为了防止通过Http消息传递的信息不被未授权的第三方获取、截获,Http可以使用SSL/TLS协议来保证http传输安全,这个协议是当前使用最广的。当然也可以使用其他的加密技术。但是通常情况下,Http信息会在加密的SSL/TLS连接上进行传输。

2.3. HTTP连接管理器

2.3.1. 管理连接和连接管理器

Http连接是复杂,有状态的,线程不安全的对象,所以它必须被妥善管理。一个Http连接在同一时间只能被一个线程访问。HttpClient使用一个叫做Http连接管理器的特殊实体类来管理Http连接,这个实体类要实现HttpClientConnectionManager接口。Http连接管理器在新建http连接时,作为工厂类;管理持久http连接的生命周期;同步持久连接(确保线程安全,即一个http连接同一时间只能被一个线程访问)。Http连接管理器和ManagedHttpClientConnection的实例类一起发挥作用,ManagedHttpClientConnection实体类可以看做http连接的一个代理服务器,管理着I/O操作。如果一个Http连接被释放或者被它的消费者明确表示要关闭,那么底层的连接就会和它的代理进行分离,并且该连接会被交还给连接管理器。这是,即使服务消费者仍然持有代理的引用,它也不能再执行I/O操作,或者更改Http连接的状态。

下面的代码展示了如何从连接管理器中取得一个http连接:

HttpClientContext context = HttpClientContext.create(); HttpClientConnectionManager connMrg = new BasicHttpClientConnectionManager(); HttpRoute route = new HttpRoute(new HttpHost("www.yeetrack.com", 80)); // 获取新的连接. 这里可能耗费很多时间 ConnectionRequest connRequest = connMrg.requestConnection(route, null); // 10秒超时 HttpClientConnection conn = connRequest.get(10, TimeUnit.SECONDS); try { // 如果创建连接失败 if (!conn.isOpen()) { // establish connection based on its route info connMrg.connect(conn, route, 1000, context); // and mark it as route complete connMrg.routeComplete(conn, route, context); } // 进行自己的操作. } finally { connMrg.releaseConnection(conn, null, 1, TimeUnit.MINUTES); }

如果要终止连接,可以调用ConnectionRequest的cancel()方法。这个方法会解锁被ConnectionRequest类get()方法阻塞的线程。

2.3.2.简单连接管理器

BasicHttpClientConnectionManager是个简单的连接管理器,它一次只能管理一个连接。尽管这个类是线程安全的,它在同一时间也只能被一个线程使用。BasicHttpClientConnectionManager会尽量重用旧的连接来发送后续的请求,并且使用相同的路由。如果后续请求的路由和旧连接中的路由不匹配,BasicHttpClientConnectionManager就会关闭当前连接,使用请求中的路由重新建立连接。如果当前的连接正在被占用,会抛出java.lang.IllegalStateException异常。

2.3.3.连接池管理器

相对BasicHttpClientConnectionManager来说,PoolingHttpClientConnectionManager是个更复杂的类,它管理着连接池,可以同时为很多线程提供http连接请求。Connections are pooled on a per route basis.当请求一个新的连接时,如果连接池有有可用的持久连接,连接管理器就会使用其中的一个,而不是再创建一个新的连接。

PoolingHttpClientConnectionManager维护的连接数在每个路由基础和总数上都有限制。默认,每个路由基础上的连接不超过2个,总连接数不能超过20。在实际应用中,这个限制可能会太小了,尤其是当服务器也使用Http协议时。

下面的例子演示了如果调整连接池的参数:

PoolingHttpClientConnectionManager cm = new PoolingHttpClientConnectionManager(); // 将最大连接数增加到200 cm.setMaxTotal(200); // 将每个路由基础的连接增加到20 cm.setDefaultMaxPerRoute(20); //将目标主机的最大连接数增加到50 HttpHost localhost = new HttpHost("www.yeetrack.com", 80); cm.setMaxPerRoute(new HttpRoute(localhost), 50); CloseableHttpClient httpClient = HttpClients.custom() .setConnectionManager(cm) .build();

2.3.4.关闭连接管理器

当一个HttpClient的实例不在使用,或者已经脱离它的作用范围,我们需要关掉它的连接管理器,来关闭掉所有的连接,释放掉这些连接占用的系统资源。

CloseableHttpClient httpClient = <...>

httpClient.close();

2.4.多线程请求执行

当使用了请求连接池管理器(比如PoolingClientConnectionManager)后,HttpClient就可以同时执行多个线程的请求了。

PoolingClientConnectionManager会根据它的配置来分配请求连接。如果连接池中的所有连接都被占用了,那么后续的请求就会被阻塞,直到有连接被释放回连接池中。为了防止永远阻塞的情况发生,我们可以把http.conn-manager.timeout的值设置成一个整数。如果在超时时间内,没有可用连接,就会抛出ConnectionPoolTimeoutException异常。

PoolingHttpClientConnectionManager cm = new PoolingHttpClientConnectionManager(); CloseableHttpClient httpClient = HttpClients.custom() .setConnectionManager(cm) .build(); // URL列表数组 String[] urisToGet = { "http://www.domain1.com/", "http://www.domain2.com/", "http://www.domain3.com/", "http://www.domain4.com/" }; // 为每个url创建一个线程,GetThread是自定义的类 GetThread[] threads = new GetThread[urisToGet.length]; for (int i = 0; i < threads.length; i++) { HttpGet httpget = new HttpGet(urisToGet[i]); threads[i] = new GetThread(httpClient, httpget); } // 启动线程 for (int j = 0; j < threads.length; j++) { threads[j].start(); } // join the threads for (int j = 0; j < threads.length; j++) { threads[j].join(); }

即使HttpClient的实例是线程安全的,可以被多个线程共享访问,但是仍旧推荐每个线程都要有自己专用实例的HttpContext。

下面是GetThread类的定义:

static class GetThread extends Thread { private final CloseableHttpClient httpClient; private final HttpContext context; private final HttpGet httpget; public GetThread(CloseableHttpClient httpClient, HttpGet httpget) { this.httpClient = httpClient; this.context = HttpClientContext.create(); this.httpget = httpget; } @Override public void run() { try { CloseableHttpResponse response = httpClient.execute( httpget, context); try { HttpEntity entity = response.getEntity(); } finally { response.close(); } } catch (ClientProtocolException ex) { // Handle protocol errors } catch (IOException ex) { // Handle I/O errors } } }

2.5. 连接回收策略

经典阻塞I/O模型的一个主要缺点就是只有当组侧I/O时,socket才能对I/O事件做出反应。当连接被管理器收回后,这个连接仍然存活,但是却无法监控socket的状态,也无法对I/O事件做出反馈。如果连接被服务器端关闭了,客户端监测不到连接的状态变化(也就无法根据连接状态的变化,关闭本地的socket)。

HttpClient为了缓解这一问题造成的影响,会在使用某个连接前,监测这个连接是否已经过时,如果服务器端关闭了连接,那么连接就会失效。这种过时检查并不是100%有效,并且会给每个请求增加10到30毫秒额外开销。唯一一个可行的,且does not involve a one thread per socket model for idle connections的解决办法,是建立一个监控线程,来专门回收由于长时间不活动而被判定为失效的连接。这个监控线程可以周期性的调用ClientConnectionManager类的closeExpiredConnections()方法来关闭过期的连接,回收连接池中被关闭的连接。它也可以选择性的调用ClientConnectionManager类的closeIdleConnections()方法来关闭一段时间内不活动的连接。

public static class IdleConnectionMonitorThread extends Thread { private final HttpClientConnectionManager connMgr; private volatile boolean shutdown; public IdleConnectionMonitorThread(HttpClientConnectionManager connMgr) { super(); this.connMgr = connMgr; } @Override public void run() { try { while (!shutdown) { synchronized (this) { wait(5000); // 关闭失效的连接 connMgr.closeExpiredConnections(); // 可选的, 关闭30秒内不活动的连接 connMgr.closeIdleConnections(30, TimeUnit.SECONDS); } } } catch (InterruptedException ex) { // terminate } } public void shutdown() { shutdown = true; synchronized (this) { notifyAll(); } } }

2.6. 连接存活策略

Http规范没有规定一个持久连接应该保持存活多久。有些Http服务器使用非标准的Keep-Alive头消息和客户端进行交互,服务器端会保持数秒时间内保持连接。HttpClient也会利用这个头消息。如果服务器返回的响应中没有包含Keep-Alive头消息,HttpClient会认为这个连接可以永远保持。然而,很多服务器都会在不通知客户端的情况下,关闭一定时间内不活动的连接,来节省服务器资源。在某些情况下默认的策略显得太乐观,我们可能需要自定义连接存活策略。

ConnectionKeepAliveStrategy myStrategy = new ConnectionKeepAliveStrategy() { public long getKeepAliveDuration(HttpResponse response, HttpContext context) { // Honor 'keep-alive' header HeaderElementIterator it = new BasicHeaderElementIterator( response.headerIterator(HTTP.CONN_KEEP_ALIVE)); while (it.hasNext()) { HeaderElement he = it.nextElement(); String param = he.getName(); String value = he.getValue(); if (value != null && param.equalsIgnoreCase("timeout")) { try { return Long.parseLong(value) * 1000; } catch(NumberFormatException ignore) { } } } HttpHost target = (HttpHost) context.getAttribute( HttpClientContext.HTTP_TARGET_HOST); if ("www.naughty-server.com".equalsIgnoreCase(target.getHostName())) { // Keep alive for 5 seconds only return 5 * 1000; } else { // otherwise keep alive for 30 seconds return 30 * 1000; } } }; CloseableHttpClient client = HttpClients.custom() .setKeepAliveStrategy(myStrategy) .build();

2.7.socket连接工厂

Http连接使用java.net.Socket类来传输数据。这依赖于ConnectionSocketFactory接口来创建、初始化和连接socket。这样也就允许HttpClient的用户在代码运行时,指定socket初始化的代码。PlainConnectionSocketFactory是默认的创建、初始化明文socket(不加密)的工厂类。

创建socket和使用socket连接到目标主机这两个过程是分离的,所以我们可以在连接发生阻塞时,关闭socket连接。

HttpClientContext clientContext = HttpClientContext.create(); PlainConnectionSocketFactory sf = PlainConnectionSocketFactory.getSocketFactory(); Socket socket = sf.createSocket(clientContext); int timeout = 1000; //ms HttpHost target = new HttpHost("www.yeetrack.com"); InetSocketAddress remoteAddress = new InetSocketAddress( InetAddress.getByName("www.yeetrack.com", 80); //connectSocket源码中,实际没有用到target参数 sf.connectSocket(timeout, socket, target, remoteAddress, null, clientContext);

2.7.1.安全SOCKET分层

LayeredConnectionSocketFactory是ConnectionSocketFactory的拓展接口。分层socket工厂类可以在明文socket的基础上创建socket连接。分层socket主要用于在代理服务器之间创建安全socket。HttpClient使用SSLSocketFactory这个类实现安全socket,SSLSocketFactory实现了SSL/TLS分层。请知晓,HttpClient没有自定义任何加密算法。它完全依赖于Java加密标准(JCE)和安全套接字(JSEE)拓展。

2.7.2.集成连接管理器

自定义的socket工厂类可以和指定的协议(Http、Https)联系起来,用来创建自定义的连接管理器。

ConnectionSocketFactory plainsf = <...> LayeredConnectionSocketFactory sslsf = <...> Registry<ConnectionSocketFactory> r = RegistryBuilder.<ConnectionSocketFactory>create() .register("http", plainsf) .register("https", sslsf) .build(); HttpClientConnectionManager cm = new PoolingHttpClientConnectionManager(r); HttpClients.custom() .setConnectionManager(cm) .build();

2.7.3.SSL/TLS定制

HttpClient使用SSLSocketFactory来创建ssl连接。SSLSocketFactory允许用户高度定制。它可以接受javax.net.ssl.SSLContext这个类的实例作为参数,来创建自定义的ssl连接。

HttpClientContext clientContext = HttpClientContext.create(); KeyStore myTrustStore = <...> SSLContext sslContext = SSLContexts.custom() .useTLS() .loadTrustMaterial(myTrustStore) .build(); SSLConnectionSocketFactory sslsf = new SSLConnectionSocketFactory(sslContext);

2.7.4.域名验证

除了信任验证和在ssl/tls协议层上进行客户端认证,HttpClient一旦建立起连接,就可以选择性验证目标域名和存储在X.509证书中的域名是否一致。这种验证可以为服务器信任提供额外的保障。X509HostnameVerifier接口代表主机名验证的策略。在HttpClient中,X509HostnameVerifier有三个实现类。重要提示:主机名有效性验证不应该和ssl信任验证混为一谈。

StrictHostnameVerifier: 严格的主机名验证方法和java 1.4,1.5,1.6验证方法相同。和IE6的方式也大致相同。这种验证方式符合RFC 2818通配符。The hostname must match either the first CN, or any of the subject-alts. A wildcard can occur in the CN, and in any of the subject-alts.

BrowserCompatHostnameVerifier: 这种验证主机名的方法,和Curl及firefox一致。The hostname must match either the first CN, or any of the subject-alts. A wildcard can occur in the CN, and in any of the subject-alts.StrictHostnameVerifier和BrowserCompatHostnameVerifier方式唯一不同的地方就是,带有通配符的域名(比如*.yeetrack.com),BrowserCompatHostnameVerifier方式在匹配时会匹配所有的的子域名,包括 a.b.yeetrack.com .

AllowAllHostnameVerifier: 这种方式不对主机名进行验证,验证功能被关闭,是个空操作,所以它不会抛出javax.net.ssl.SSLException异常。HttpClient默认使用BrowserCompatHostnameVerifier的验证方式。如果需要,我们可以手动执行验证方式。

SSLContext sslContext = SSLContexts.createSystemDefault(); SSLConnectionSocketFactory sslsf = new SSLConnectionSocketFactory( sslContext, SSLConnectionSocketFactory.STRICT_HOSTNAME_VERIFIER);

2.8.HttpClient代理服务器配置

尽管,HttpClient支持复杂的路由方案和代理链,它同样也支持直接连接或者只通过一跳的连接。

使用代理服务器最简单的方式就是,指定一个默认的proxy参数。

HttpHost proxy = new HttpHost("someproxy", 8080); DefaultProxyRoutePlanner routePlanner = new DefaultProxyRoutePlanner(proxy); CloseableHttpClient httpclient = HttpClients.custom() .setRoutePlanner(routePlanner) .build();

我们也可以让HttpClient去使用jre的代理服务器。

SystemDefaultRoutePlanner routePlanner = new SystemDefaultRoutePlanner( ProxySelector.getDefault()); CloseableHttpClient httpclient = HttpClients.custom() .setRoutePlanner(routePlanner) .build();

又或者,我们也可以手动配置RoutePlanner,这样就可以完全控制Http路由的过程。

HttpRoutePlanner routePlanner = new HttpRoutePlanner() { public HttpRoute determineRoute( HttpHost target, HttpRequest request, HttpContext context) throws HttpException { return new HttpRoute(target, null, new HttpHost("someproxy", 8080), "https".equalsIgnoreCase(target.getSchemeName())); } }; CloseableHttpClient httpclient = HttpClients.custom() .setRoutePlanner(routePlanner) .build(); } }

HttpClient4.3教程 第二章 连接管理http://www.yeetrack.com/?p=782