1.Managing core K8s resources

1.1.Imperative resource management

1.1.1.Managing namespace resources

1.1.1.1.Listing namespaces

[root@hdss7-21 ~]# kubectl get namespace

NAME STATUS AGE

default Active 6d12h

kube-node-lease Active 6d12h

kube-public Active 6d12h

kube-system Active 6d12h

[root@hdss7-21 ~]# kubectl get ns

NAME STATUS AGE

default Active 6d12h

kube-node-lease Active 6d12h

kube-public Active 6d12h

kube-system Active 6d12h

1.1.1.2.Listing the resources in a namespace

[root@hdss7-21 ~]# kubectl get all -n default

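This lists the main resources (Pods, Services, controllers) in the default namespace. Without -n, kubectl get all uses the default namespace, so it is equivalent to -n default.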

NAME READY STATUS RESTARTS AGE //Pod resources

pod/nginx-ds-mcvxt 1/1 Running 1 6d13h

pod/nginx-ds-zsnz9 1/1 Running 1 6d13h

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE //Service resources

service/kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 6d12h

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE //Pod controller resources

daemonset.apps/nginx-ds 2 2 2 2 2 <none> 6d5h

1.1.1.3.Creating a namespace

[root@hdss7-21 ~]# kubectl create namespace app    # "namespace" can be abbreviated to "ns"

namespace/app created

[root@hdss7-21 ~]# kubectl get namespace

NAME STATUS AGE

app Active 13s

default Active 6d13h

kube-node-lease Active 6d13h

kube-public Active 6d13h

kube-system Active 6d13h

1.1.1.4.Deleting a namespace

[root@hdss7-21 ~]# kubectl delete namespace app

namespace "app" deleted

[root@hdss7-21 ~]# kubectl get namespace

NAME STATUS AGE

default Active 6d13h

kube-node-lease Active 6d13h

kube-public Active 6d13h

kube-system Active 6d13h

1.1.2.Managing Deployment resources

1.1.2.1.Creating a Deployment

[root@hdss7-21 ~]# kubectl create deployment nginx-dp --image=harbor.od.com/public/nginx:v1.7.9 -n kube-public

deployment.apps/nginx-dp created

1.1.2.2.Viewing a Deployment

Brief view:

[root@hdss7-21 ~]# kubectl get deployment -n kube-public

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-dp 1/1 1 1 64s

Extended view (the Pods created by the Deployment):

[root@hdss7-21 ~]# kubectl get pods -n kube-public

NAME READY STATUS RESTARTS AGE

nginx-dp-5dfc689474-v96vj 1/1 Running 0 90s

Detailed description:

[root@hdss7-21 ~]# kubectl describe deployment nginx-dp -n kube-public

Name: nginx-dp

Namespace: kube-public

CreationTimestamp: Sat, 23 Nov 2019 15:48:18 +0800

Labels: app=nginx-dp

Annotations: deployment.kubernetes.io/revision: 1 //annotation

Selector: app=nginx-dp

Replicas: 1 desired | 1 updated | 1 total | 1 available | 0 unavailable //one replica desired

StrategyType: RollingUpdate //update strategy; rolling update is the default

MinReadySeconds: 0

RollingUpdateStrategy: 25% max unavailable, 25% max surge

Pod Template:

Labels: app=nginx-dp

Containers:

nginx:

Image: harbor.od.com/public/nginx:v1.7.9

Port: <none>

Host Port: <none>

Environment: <none>

Mounts: <none>

Volumes: <none>

Conditions:

Type Status Reason

---- ------ ------

Available True MinimumReplicasAvailable

Progressing True NewReplicaSetAvailable

OldReplicaSets: <none>

NewReplicaSet: nginx-dp-5dfc689474 (1/1 replicas created)

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal ScalingReplicaSet 3h deployment-controller Scaled up replica set nginx-dp-5dfc689474 to 1
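The RollingUpdateStrategy line above gives both limits as percentages. As a minimal sketch, assuming the rounding described in the Kubernetes Deployment documentation (maxSurge rounds up, maxUnavailable rounds down), the percentages turn into Pod counts like this; rollout_bounds is a hypothetical helper, not a kubectl command:

```shell
#!/usr/bin/env bash
# Sketch: convert RollingUpdate percentages into Pod counts.
# Assumes maxSurge rounds up and maxUnavailable rounds down.
# rollout_bounds is a hypothetical helper, not part of kubectl.
rollout_bounds() {  # rollout_bounds REPLICAS PERCENT
  local replicas=$1 pct=$2
  local surge=$(( (replicas * pct + 99) / 100 ))   # ceil(replicas * pct / 100)
  local unavailable=$(( replicas * pct / 100 ))    # floor(replicas * pct / 100)
  echo "maxSurge=$surge maxUnavailable=$unavailable"
  # During a rollout the Pod count stays within these bounds.
  echo "max pods=$(( replicas + surge )) min available=$(( replicas - unavailable ))"
}

rollout_bounds 1 25
```

For the single-replica nginx-dp this yields maxSurge=1 and maxUnavailable=0, so during an update the new Pod has to become available before the old one is removed.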

1.1.2.3.Viewing Pod resources

[root@hdss7-21 ~]# kubectl get pods -n kube-public -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-dp-5dfc689474-v96vj 1/1 Running 0 174m 172.7.21.3 hdss7-21.host.com <none> <none>

1.1.2.4.Entering a Pod

[root@hdss7-21 ~]# kubectl exec -ti nginx-dp-5dfc689474-v96vj -n kube-public -- /bin/bash

root@nginx-dp-5dfc689474-v96vj:/# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

8: eth0@if9: <BROADCAST,MULTICAST,UP,LOWER_UP,M-DOWN> mtu 1500 qdisc noqueue state UP

link/ether 02:42:ac:07:15:03 brd ff:ff:ff:ff:ff:ff

inet 172.7.21.3/24 brd 172.7.21.255 scope global eth0

valid_lft forever preferred_lft forever

Note: docker exec also works, but only on the host where the Pod is running.

1.1.2.5.Deleting a Pod (which restarts it)

[root@hdss7-21 ~]# watch -n 1 'kubectl describe deployment nginx-dp -n kube-public|grep -C 5 Event'

[root@hdss7-21 ~]# kubectl delete pod nginx-dp-5dfc689474-v96vj -n kube-public

pod "nginx-dp-5dfc689474-v96vj" deleted

[root@hdss7-21 ~]# kubectl get pods -n kube-public -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-dp-5dfc689474-ggxl5 1/1 Running 0 5m29s 172.7.22.3 hdss7-22.host.com <none> <none>

The watch command above, run in a second terminal, shows the Pod being recreated.

To force-delete a Pod, add --force --grace-period=0.

1.1.2.6.Deleting a Deployment

[root@hdss7-21 ~]# kubectl delete deployment nginx-dp -n kube-public

deployment.extensions "nginx-dp" deleted

[root@hdss7-21 ~]# kubectl get all -n kube-public

No resources found.

1.1.3.Managing Service resources

1.1.3.1.Creating a Service

First recreate the Deployment:

[root@hdss7-21 ~]# kubectl create deployment nginx-dp --image=harbor.od.com/public/nginx:v1.7.9 -n kube-public

deployment.apps/nginx-dp created

[root@hdss7-21 ~]# kubectl get all -n kube-public

NAME READY STATUS RESTARTS AGE

pod/nginx-dp-5dfc689474-ggsn2 1/1 Running 0 17s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/nginx-dp 1/1 1 1 17s

NAME DESIRED CURRENT READY AGE

replicaset.apps/nginx-dp-5dfc689474 1 1 1 17s

Create the Service:

[root@hdss7-21 ~]# kubectl expose deployment nginx-dp --port=80 -n kube-public

service/nginx-dp exposed

1.1.3.2.Viewing a Service

[root@hdss7-21 ~]# kubectl get all -n kube-public    # a Service now shows up

NAME READY STATUS RESTARTS AGE

pod/nginx-dp-5dfc689474-ggsn2 1/1 Running 0 112s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/nginx-dp ClusterIP 192.168.95.151 <none> 80/TCP 24s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/nginx-dp 1/1 1 1 112s

NAME DESIRED CURRENT READY AGE

replicaset.apps/nginx-dp-5dfc689474 1 1 1 112s

####### flannel is not installed yet, so the Service can only be tested from the host running the Pod #######

[root@hdss7-21 ~]# kubectl get pod -n kube-public -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-dp-5dfc689474-ggsn2 1/1 Running 0 9m40s 172.7.22.3 hdss7-22.host.com <none> <none>

[root@hdss7-22 ~]# curl 192.168.95.151

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

[root@hdss7-22 ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.0.1:443 nq

-> 10.4.7.21:6443 Masq 1 0 0

-> 10.4.7.22:6443 Masq 1 0 0

TCP 192.168.95.151:80 nq

-> 172.7.22.3:80 Masq 1 0 0

1.1.3.3.Describing a Service

[root@hdss7-22 ~]# kubectl describe svc nginx-dp -n kube-public

Name: nginx-dp

Namespace: kube-public

Labels: app=nginx-dp

Annotations: <none>

Selector: app=nginx-dp

Type: ClusterIP

IP: 192.168.95.151

Port: <unset> 80/TCP

TargetPort: 80/TCP

Endpoints: 172.7.22.3:80

Session Affinity: None

Events: <none>

1.1.3.4.Scaling the Deployment and checking LVS scheduling

[root@hdss7-22 ~]# kubectl scale deployment nginx-dp --replicas=2 -n kube-public

deployment.extensions/nginx-dp scaled

[root@hdss7-22 ~]# ipvsadm -Ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.0.1:443 nq

-> 10.4.7.21:6443 Masq 1 0 0

-> 10.4.7.22:6443 Masq 1 0 0

TCP 192.168.95.151:80 nq

-> 172.7.21.3:80 Masq 1 0 0

-> 172.7.22.3:80 Masq 1 0 0

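Note: 192.168.95.151 is a virtual IP allocated from the preconfigured Service IP range. It only works inside the cluster: hdss7-11, for example, cannot ping it, because outside the cluster there is no route to it. Later we will expose services to the outside with Traefik.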
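The ipvsadm listings above show how kube-proxy maps the Service VIP to Pod endpoints. A minimal sketch for extracting the backends of a single VIP from that output, assuming the column layout shown above; ipvs_backends is a hypothetical helper:

```shell
#!/usr/bin/env bash
# Sketch: print the real servers (Pod endpoints) that IPVS balances for one
# Service VIP, reading `ipvsadm -Ln` output on stdin.
# ipvs_backends is a hypothetical helper, not part of ipvsadm.
ipvs_backends() {  # ipvs_backends VIP:PORT
  local vip=$1
  awk -v vip="$vip" '
    # A virtual-service line starts with the protocol; remember whether it is ours.
    $1 == "TCP" || $1 == "UDP" { in_vip = ($2 == vip); next }
    # Real-server lines start with "->" and belong to the last virtual service seen.
    in_vip && $1 == "->" { print $2 }
  '
}
```

On a node, `ipvsadm -Ln | ipvs_backends 192.168.95.151:80` should list 172.7.21.3:80 and 172.7.22.3:80 after the scale-out above.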

1.1.4.Summary of imperative usage

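- In a K8s cluster, the only entry point for managing resources is calling the apiserver's API in an appropriate way

- kubectl is the official CLI tool for talking to the apiserver: it translates the commands a user types into requests the apiserver understands, and in this way manages K8s resources

- kubectl command reference:

  * --help

  * http://docs.kubernetes.org.cn

- Imperative management covers more than 90% of resource management needs, but its drawbacks are obvious:

  * commands are long, complex and hard to remember

  * some scenarios cannot be handled at all

  * creating, deleting and querying resources is easy; modifying them is painful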

1.2.Declarative resource management

1.2.1.Viewing a resource manifest

[root@hdss7-22 ~]# kubectl get pods nginx-dp-5dfc689474-hw6vm -o yaml -n kube-public

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: "2019-11-23T11:38:48Z"
  generateName: nginx-dp-5dfc689474-
  labels:
    app: nginx-dp
    pod-template-hash: 5dfc689474
  name: nginx-dp-5dfc689474-hw6vm
  namespace: kube-public
  ownerReferences:
  - apiVersion: apps/v1
    blockOwnerDeletion: true
    controller: true
    kind: ReplicaSet
    name: nginx-dp-5dfc689474
    uid: e0f91f5a-a601-4728-b57e-77f82e2dcc5f
  resourceVersion: "173324"
  selfLink: /api/v1/namespaces/kube-public/pods/nginx-dp-5dfc689474-hw6vm
  uid: 6ecd27e5-89cd-4803-bc9a-6c281c8e3f16
spec:
  containers:
  - image: harbor.od.com/public/nginx:v1.7.9
    imagePullPolicy: IfNotPresent
    name: nginx

[root@hdss7-22 ~]# kubectl get service -n kube-public

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nginx-dp ClusterIP 192.168.95.151 <none> 80/TCP 50m

[root@hdss7-22 ~]# kubectl get service nginx-dp -o yaml -n kube-public

apiVersion: v1
kind: Service
metadata:
  creationTimestamp: "2019-11-23T11:25:55Z"
  labels:
    app: nginx-dp
  name: nginx-dp
  namespace: kube-public
  resourceVersion: "172227"
  selfLink: /api/v1/namespaces/kube-public/services/nginx-dp
  uid: f6cc8c7f-50f1-4c75-8eac-8d4a20133af1
spec:
  clusterIP: 192.168.95.151
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx-dp
  sessionAffinity: None
  type: ClusterIP
status:
  loadBalancer: {}

1.2.2.Explaining a resource manifest (apiVersion, kind, metadata, spec)

[root@hdss7-22 ~]# kubectl explain service.metadata

KIND: Service

VERSION: v1

RESOURCE: metadata <Object>

DESCRIPTION:

Standard object's metadata. More info:

https://git.k8s.io/community/contributors/devel/api-conventions.md#metadata

ObjectMeta is metadata that all persisted resources must have, which

includes all objects users must create.

FIELDS:

annotations <map[string]string>

Annotations is an unstructured key value map stored with a resource that

may be set by external tools to store and retrieve arbitrary metadata. They

are not queryable and should be preserved when modifying objects. More

info: http://kubernetes.io/docs/user-guide/annotations

clusterName <string>

The name of the cluster which the object belongs to. This is used to

distinguish resources with same name and namespace in different clusters.

This field is not set anywhere right now and apiserver is going to ignore

it if set in create or update request.

1.2.3.Creating a resource manifest

[root@hdss7-200 ~]# vi nginx-ds-svc.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx-ds
  name: nginx-ds
  namespace: default
spec:
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx-ds
  sessionAffinity: None
  type: ClusterIP

1.2.4.Applying a resource manifest

[root@hdss7-21 ~]# kubectl create -f nginx-ds-svc.yaml

service/nginx-ds created

Check it (in the default namespace):

[root@hdss7-21 ~]# kubectl get svc -n default

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 6d17h

nginx-ds ClusterIP 192.168.189.230 <none> 80/TCP 110s

[root@hdss7-21 ~]# kubectl get svc nginx-ds -o yaml

apiVersion: v1
kind: Service
metadata:
  creationTimestamp: "2019-11-23T12:28:20Z"
  labels:
    app: nginx-ds
  name: nginx-ds
  namespace: default
  resourceVersion: "177549"
  selfLink: /api/v1/namespaces/default/services/nginx-ds
  uid: 24add3a1-cf18-4c29-85fc-65e45f54edbb
spec:
  clusterIP: 192.168.189.230
  ports:
  - port: 80
    protocol: TCP
    targetPort: 80
  selector:
    app: nginx-ds
  sessionAffinity: None
  type: ClusterIP
status:
  loadBalancer: {}

1.2.5.Modifying and applying a resource manifest

1.2.5.1.Online modification

[root@hdss7-21 ~]# kubectl edit svc nginx-ds

Edit cancelled, no changes made.

[root@hdss7-21 ~]# kubectl edit svc nginx-ds    # change port to 801 in the editor

service/nginx-ds edited

Check:

[root@hdss7-21 ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 6d18h

nginx-ds ClusterIP 192.168.189.230 <none> 801/TCP 11m

1.2.5.2.Offline modification

[root@hdss7-21 ~]# vi nginx-ds-svc.yaml

[root@hdss7-21 ~]# kubectl apply -f nginx-ds-svc.yaml

daemonset.extensions/nginx-ds configured

vim /opt/kubernetes/server/bin/kube-apiserver.sh

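The port range has to be set in the kube-apiserver.sh startup script with --service-node-port-range (here 10-29999). The range causes no problem the first time the Service is created, but it is enforced on apply. Instructor Wang's default was 3000-29999. Restart the apiserver after changing it.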
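A NodePort value must fall inside the range passed to --service-node-port-range (the Kubernetes default is 30000-32767), otherwise the apiserver rejects the Service. A minimal sketch of that inclusive check; port_in_range is a hypothetical helper, not part of kube-apiserver:

```shell
#!/usr/bin/env bash
# Sketch: the inclusive range check implied by --service-node-port-range.
# The range is written "low-high"; port_in_range is a hypothetical helper.
port_in_range() {  # port_in_range PORT LOW-HIGH
  local port=$1 range=$2
  local low=${range%-*} high=${range#*-}
  (( port >= low && port <= high ))
}

port_in_range 8000 10-29999 && echo "8000: allowed"
port_in_range 30080 10-29999 || echo "30080: rejected"
```

With the range used above, a nodePort of 30080 would be refused even though it lies in the Kubernetes default range.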

1.2.6.Deleting resources

1.2.6.1.Imperative deletion

[root@hdss7-21 ~]# kubectl delete svc nginx-ds

service "nginx-ds" deleted

1.2.6.2.Declarative deletion

[root@hdss7-21 ~]# kubectl delete -f nginx-ds-svc.yaml

service "nginx-ds" deleted

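1.2.7.Summary of declarative usage

- Declarative management manages resources through unified resource manifest files

- Resources are defined in a manifest in advance, then applied to the K8s cluster with an imperative command using -f

- Syntax: kubectl create/apply/delete -f /path/to/yaml

- When in doubt, look fields up with kubectl explain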

2.Core K8s add-ons

2.1.The K8s CNI network plugin: Flannel

2.1.1.Cluster plan

| Hostname | Role | IP |
|---|---|---|
| hdss7-21.host.com | flannel | 10.4.7.21 |
| hdss7-22.host.com | flannel | 10.4.7.22 |

Note: the steps below use hdss7-21.host.com as the example; the other compute node is set up the same way.

2.1.2.Downloading the software, extracting it and creating a symlink

[root@hdss7-21 ~]# cd /opt/src/

[root@hdss7-21 src]# wget https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz

[root@hdss7-21 src]# mkdir /opt/flannel-v0.11.0

[root@hdss7-21 src]# tar xf flannel-v0.11.0-linux-amd64.tar.gz -C /opt/flannel-v0.11.0/

[root@hdss7-21 src]# ln -s /opt/flannel-v0.11.0/ /opt/flannel

2.1.3.Final directory layout

[root@hdss7-21 flannel]# mkdir /opt/flannel/cert

[root@hdss7-21 flannel]# ll

total 34436

drwxr-xr-x 2 root root 6 Nov 23 21:35 cert

-rwxr-xr-x 1 root root 35249016 Jan 29 2019 flanneld

-rwxr-xr-x 1 root root 2139 Oct 23 2018 mk-docker-opts.sh

-rw-r--r-- 1 root root 4300 Oct 23 2018 README.md

2.1.4.Copying the certificates

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/ca.pem .

root@hdss7-200's password:

ca.pem 100% 1346 961.7KB/s 00:00

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/client.pem .

root@hdss7-200's password:

client.pem 100% 1363 19.3KB/s 00:00

[root@hdss7-21 cert]# scp hdss7-200:/opt/certs/client-key.pem .

root@hdss7-200's password:

client-key.pem

2.1.5.Creating the configuration

[root@hdss7-21 flannel]# vi subnet.env

FLANNEL_NETWORK=172.7.0.0/16

FLANNEL_SUBNET=172.7.21.1/24

FLANNEL_MTU=1500

FLANNEL_IPMASQ=false

Note: this file differs on each node; remember to change FLANNEL_SUBNET.

2.1.6.Creating the startup script

[root@hdss7-21 flannel]# vi flanneld.sh

#!/bin/sh
./flanneld \
  --public-ip=10.4.7.21 \
  --etcd-endpoints=https://10.4.7.12:2379,https://10.4.7.21:2379,https://10.4.7.22:2379 \
  --etcd-keyfile=./cert/client-key.pem \
  --etcd-certfile=./cert/client.pem \
  --etcd-cafile=./cert/ca.pem \
  --iface=eth0 \
  --subnet-file=./subnet.env \
  --healthz-port=2401

Note: this script differs on each node; remember to change --public-ip.

2.1.7.Checking the configuration and permissions, and creating the log directory

[root@hdss7-21 flannel]# chmod +x flanneld.sh

[root@hdss7-21 flannel]# mkdir -p /data/logs/flanneld

2.1.8.Creating the supervisor configuration

[root@hdss7-21 flannel]# vi /etc/supervisord.d/flannel.ini

[program:flanneld-7-21]

command=/opt/flannel/flanneld.sh ; the program (relative uses PATH, can take args)

numprocs=1 ; number of processes copies to start (def 1)

directory=/opt/flannel ; directory to cwd to before exec (def no cwd)

autostart=true ; start at supervisord start (default: true)

autorestart=true ; retstart at unexpected quit (default: true)

startsecs=30 ; number of secs prog must stay running (def. 1)

startretries=3 ; max # of serial start failures (default 3)

exitcodes=0,2 ; 'expected' exit codes for process (default 0,2)

stopsignal=QUIT ; signal used to kill process (default TERM)

stopwaitsecs=10 ; max num secs to wait b4 SIGKILL (default 10)

user=root ; setuid to this UNIX account to run the program

redirect_stderr=true ; redirect proc stderr to stdout (default false)

stdout_logfile=/data/logs/flanneld/flanneld.stdout.log ; stdout log path, NONE for none; default AUTO

stdout_logfile_maxbytes=64MB ; max # logfile bytes b4 rotation (default 50MB)

stdout_logfile_backups=4 ; # of stdout logfile backups (default 10)

stdout_capture_maxbytes=1MB ; number of bytes in 'capturemode' (default 0)

stdout_events_enabled=false ; emit events on stdout writes (default false)

Note: this file differs on each node; remember to change the program name in [program:flanneld-7-21].

2.1.9.Configuring etcd: setting the host-gw backend

[root@hdss7-21 etcd]# ./etcdctl set /coreos.com/network/config '{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}'

{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}

Check:

[root@hdss7-21 etcd]# ./etcdctl get /coreos.com/network/config

{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}

2.1.10.Starting the service and checking it

[root@hdss7-21 flannel]# supervisorctl update

flanneld-7-21: added process group

[root@hdss7-21 flannel]# supervisorctl status

flanneld-7-21 RUNNING pid 8173, uptime 0:01:49

[root@hdss7-21 flannel]# tail -fn 200 /data/logs/flanneld/flanneld.stdout.log

I1123 21:53:59.294735 8174 main.go:527] Using interface with name eth0 and address 10.4.7.21

I1123 21:53:59.294855 8174 main.go:540] Using 10.4.7.21 as external address

2019-11-23 21:53:59.295437 I | warning: ignoring ServerName for user-provided CA for backwards compatibility is deprecated

I1123 21:53:59.295497 8174 main.go:244] Created subnet manager: Etcd Local Manager with Previous Subnet: 172.7.21.0/24

I1123 21:53:59.295502 8174 main.go:247] Installing signal handlers

I1123 21:53:59.295794 8174 main.go:587] Start healthz server on 0.0.0.0:2401

I1123 21:53:59.306259 8174 main.go:386] Found network config - Backend type: host-gw

I1123 21:53:59.309982 8174 local_manager.go:201] Found previously leased subnet (172.7.21.0/24), reusing

I1123 21:53:59.312191 8174 local_manager.go:220] Allocated lease (172.7.21.0/24) to current node (10.4.7.21)

I1123 21:53:59.312442 8174 main.go:317] Wrote subnet file to ./subnet.env

I1123 21:53:59.312449 8174 main.go:321] Running backend.

I1123 21:53:59.312717 8174 route_network.go:53] Watching for new subnet leases

I1123 21:53:59.314605 8174 main.go:429] Waiting for 22h59m59.994825456s to renew lease

I1123 21:53:59.315253 8174 iptables.go:145] Some iptables rules are missing; deleting and recreating rules

I1123 21:53:59.315274 8174 iptables.go:167] Deleting iptables rule: -s 172.7.0.0/16 -j ACCEPT

I1123 21:53:59.316551 8174 iptables.go:167] Deleting iptables rule: -d 172.7.0.0/16 -j ACCEPT

I1123 21:53:59.318336 8174 iptables.go:155] Adding iptables rule: -s 172.7.0.0/16 -j ACCEPT

I1123 21:53:59.327024 8174 iptables.go:155] Adding iptables rule: -d 172.7.0.0/16 -j ACCEPT

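2.1.11.Installing, starting and checking flannel on every planned node

- The other node is set up essentially the same way as hdss7-21; remember to modify the following files:

  - subnet.env

  - flanneld.sh

  - /etc/supervisord.d/flannel.ini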
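Porting those three files from hdss7-21 can be scripted. A minimal sketch, assuming the naming convention used in this document (host 10.4.7.X, Pod subnet 172.7.X.0/24, supervisor program flanneld-7-X); port_flannel_files and its directory arguments are hypothetical. It reads hdss7-21's subnet.env, flanneld.sh and flannel.ini from one directory and writes the adapted copies to another:

```shell
#!/usr/bin/env bash
# Sketch: derive another node's flannel files from hdss7-21's copies.
# Assumes the 10.4.7.X / 172.7.X.0/24 / flanneld-7-X naming convention.
# port_flannel_files is a hypothetical helper.
port_flannel_files() {  # port_flannel_files SRC_DIR DST_DIR LAST_OCTET
  [ $# -eq 3 ] || { echo "usage: port_flannel_files SRC DST OCTET" >&2; return 1; }
  local src_dir=$1 dst_dir=$2 octet=$3
  mkdir -p "$dst_dir"
  # subnet.env: this node's Pod subnet
  sed "s#FLANNEL_SUBNET=172\.7\.21\.1/24#FLANNEL_SUBNET=172.7.${octet}.1/24#" \
    "$src_dir/subnet.env" > "$dst_dir/subnet.env"
  # flanneld.sh: only the --public-ip value changes (etcd endpoints stay as-is)
  sed "s#--public-ip=10\.4\.7\.21#--public-ip=10.4.7.${octet}#" \
    "$src_dir/flanneld.sh" > "$dst_dir/flanneld.sh"
  chmod +x "$dst_dir/flanneld.sh"
  # flannel.ini: the supervisor program name
  sed "s#\[program:flanneld-7-21\]#[program:flanneld-7-${octet}]#" \
    "$src_dir/flannel.ini" > "$dst_dir/flannel.ini"
}
```

For hdss7-22 you would call it with octet 22, then place subnet.env and flanneld.sh in /opt/flannel and flannel.ini in /etc/supervisord.d/.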

2.1.12.Verifying the cluster again: Pod-to-Pod connectivity across nodes

[root@hdss7-22 flannel]# ping 172.7.21.2

PING 172.7.21.2 (172.7.21.2) 56(84) bytes of data.

64 bytes from 172.7.21.2: icmp_seq=1 ttl=63 time=0.554 ms

64 bytes from 172.7.21.2: icmp_seq=2 ttl=63 time=0.485 ms

[root@hdss7-21 flannel]# ping 172.7.22.2

PING 172.7.22.2 (172.7.22.2) 56(84) bytes of data.

64 bytes from 172.7.22.2: icmp_seq=1 ttl=63 time=0.271 ms

64 bytes from 172.7.22.2: icmp_seq=2 ttl=63 time=0.196 ms

2.1.13.Optimizing iptables rules on each compute node

2.1.13.1.Editing and applying nginx-ds.yaml

On hdss7-21:

[root@hdss7-21 ~]# vi nginx-ds.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: nginx-ds
spec:
  template:
    metadata:
      labels:
        app: nginx-ds
    spec:
      containers:
      - name: my-nginx
        image: harbor.od.com/public/nginx:curl
        ports:
        - containerPort: 80

[root@hdss7-21 ~]# kubectl apply -f nginx-ds.yaml

Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply

daemonset.extensions/nginx-ds configured

2.1.13.2.Restarting the Pods to load nginx:curl

[root@hdss7-21 ~]# kubectl get pods -n default

NAME READY STATUS RESTARTS AGE

nginx-ds-mcvxt 1/1 Running 1 6d22h

nginx-ds-zsnz9 1/1 Running 1 6d22h

[root@hdss7-21 ~]# kubectl delete pod nginx-ds-mcvxt

pod "nginx-ds-mcvxt" deleted

[root@hdss7-21 ~]# kubectl delete pod nginx-ds-zsnz9

pod "nginx-ds-zsnz9" deleted

[root@hdss7-21 ~]# kubectl get pods -n default -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-d5kl8 1/1 Running 0 44s 172.7.22.2 hdss7-22.host.com <none> <none>

nginx-ds-jtn62 1/1 Running 0 56s 172.7.21.2 hdss7-21.host.com <none> <none>

2.1.13.3.Entering the Pod at 172.7.21.2 and curling the Pod on hdss7-22

[root@hdss7-21 ~]# kubectl exec -ti nginx-ds-jtn62 -- /bin/bash

root@nginx-ds-jtn62:/# curl 172.7.22.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

2.1.13.4.Checking the nginx access log on hdss7-22

[root@hdss7-22 flannel]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-d5kl8 1/1 Running 0 5m24s 172.7.22.2 hdss7-22.host.com <none> <none>

nginx-ds-jtn62 1/1 Running 0 5m36s 172.7.21.2 hdss7-21.host.com <none> <none>

[root@hdss7-22 flannel]# kubectl logs -f nginx-ds-d5kl8

10.4.7.21 - - [23/Nov/2019:16:57:45 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.38.0" "-"

10.4.7.21 - - [23/Nov/2019:17:01:37 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.38.0" "-"

The client IP in the log is 10.4.7.21, the host's physical IP, not the Pod's. The request was SNATed, but what we want to see is the container's real IP.

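2.1.13.5.Installing iptables-services and setting the rules

Note: the iptables rules differ slightly on the other node; adjust them when running these steps on other compute nodes.

- Install iptables-services, start it and enable it at boot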

[root@hdss7-21 ~]# yum install iptables-services -y

[root@hdss7-21 ~]# systemctl start iptables

[root@hdss7-21 ~]# systemctl enable iptables

- Optimize the SNAT rule so that traffic between Pods on different compute nodes is no longer masqueraded to the host IP

[root@hdss7-21 ~]# iptables -t nat -D POSTROUTING -s 172.7.21.0/24 ! -o docker0 -j MASQUERADE

[root@hdss7-21 ~]# iptables -t nat -I POSTROUTING -s 172.7.21.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE

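[root@hdss7-21 ~]# iptables-save |grep -i postrouting

[root@hdss7-21 ~]# iptables -t filter -D INPUT -j REJECT --reject-with icmp-host-prohibited    # remove the default REJECT rules loaded by iptables-services

[root@hdss7-21 ~]# iptables -t filter -D FORWARD -j REJECT --reject-with icmp-host-prohibited

########## Rule definition ##########

On host 10.4.7.21, SNAT is applied only to packets whose source is a Docker IP in 172.7.21.0/24, whose destination is outside 172.7.0.0/16, and which do not leave through the docker0 bridge.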
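The rule definition above is three conditions that must all hold. A minimal sketch that evaluates them for a given packet, for illustration only (ip2int, in_cidr and needs_snat are hypothetical helpers; iptables does the real matching). It also shows why the original rule, which lacked the `! -d 172.7.0.0/16` condition, masqueraded Pod-to-Pod traffic and made the nginx log show 10.4.7.21:

```shell
#!/usr/bin/env bash
# Sketch: evaluate hdss7-21's optimized POSTROUTING rule
#   -s 172.7.21.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE
# for one packet. All helper names are hypothetical.
ip2int() {  # dotted quad -> 32-bit integer
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

in_cidr() {  # in_cidr IP NET/PREFIX
  local net=${2%/*} prefix=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  (( ($(ip2int "$1") & mask) == ($(ip2int "$net") & mask) ))
}

needs_snat() {  # needs_snat SRC DST OUT_IFACE
  in_cidr "$1" 172.7.21.0/24 && ! in_cidr "$2" 172.7.0.0/16 && [ "$3" != docker0 ]
}

for dst in 172.7.22.2 10.4.7.11; do
  if needs_snat 172.7.21.2 "$dst" eth0; then echo "$dst: SNAT"; else echo "$dst: no SNAT"; fi
done
```

Traffic from the local Pod to 172.7.22.2 is now left untouched, while traffic leaving the Pod network (for example to 10.4.7.11) is still masqueraded.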

2.1.14.Saving the iptables rules on each compute node

- Save the iptables rules on each compute node

~]# service iptables save

iptables: Saving firewall rules to /etc/sysconfig/iptables:[ OK ]

- From each node's Pod, access the Pod on the other node and check the nginx access log: the client addresses are now container IPs

[root@hdss7-21 ~]# kubectl logs -f nginx-ds-jtn62

172.7.22.2 - - [23/Nov/2019:17:46:48 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.38.0" "-"

[root@hdss7-22 ~]# kubectl logs -f nginx-ds-d5kl8

10.4.7.21 - - [23/Nov/2019:17:01:37 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.38.0" "-"

172.7.21.2 - - [23/Nov/2019:17:43:34 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.38.0" "-"

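2.1.15.How it works

The flannel host-gw model

Note: this model requires all hosts to be on the same layer-2 network, i.e. they all use the same gateway device. It is the most efficient model.

In effect, host-gw keeps a static route on each host that sends the other host's Pod subnet to that host's IP:

[root@hdss7-21 ~]# route add -net 172.7.22.0/24 gw 10.4.7.22 dev eth0

[root@hdss7-22 ~]# route add -net 172.7.21.0/24 gw 10.4.7.21 dev eth0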

[root@hdss7-21 flannel]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.4.7.254 0.0.0.0 UG 100 0 0 eth0

10.4.7.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.7.21.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0

172.7.22.0 10.4.7.22 255.255.255.0 UG 0 0 0 eth0

[root@hdss7-22 flannel]# route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 10.4.7.254 0.0.0.0 UG 100 0 0 eth0

10.4.7.0 0.0.0.0 255.255.255.0 U 100 0 0 eth0

172.7.21.0 10.4.7.21 255.255.255.0 UG 0 0 0 eth0

172.7.22.0 0.0.0.0 255.255.255.0 U 0 0 0 docker0
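The two routing tables above follow a simple pattern: one route per peer node. A minimal sketch that prints the equivalent route commands for one node, assuming the 10.4.7.X to 172.7.X.0/24 convention and eth0 as the interface; hostgw_routes is hypothetical, and flannel itself programs these routes from the subnet leases it watches in etcd rather than by running route:

```shell
#!/usr/bin/env bash
# Sketch: the static routes host-gw effectively maintains on one node.
# Assumes host 10.4.7.X owns Pod subnet 172.7.X.0/24 and uses eth0.
# hostgw_routes is a hypothetical helper.
hostgw_routes() {  # hostgw_routes SELF_IP NODE_IP...
  local self=$1 peer
  shift
  for peer in "$@"; do
    if [ "$peer" = "$self" ]; then continue; fi   # no route to our own subnet
    echo "route add -net 172.7.${peer##*.}.0/24 gw ${peer} dev eth0"
  done
}

hostgw_routes 10.4.7.21 10.4.7.21 10.4.7.22
```

Run for 10.4.7.21 it prints exactly the route added on hdss7-21 above; adding a third node to the argument list adds one more route per node.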

Note: one more iptables rule needs to be added:

~]# iptables -t filter -I FORWARD -d 172.7.21.0/24 -j ACCEPT    # on hdss7-21; use 172.7.22.0/24 on hdss7-22

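2.1.16.The flannel VxLAN model

How to switch to it:

1. Stop flanneld first: supervisorctl stop flanneld-7-21 on hdss7-21 and supervisorctl stop flanneld-7-22 on hdss7-22

2. Delete the routes created by the host-gw model:

route del -net 172.7.21.0/24 gw 10.4.7.21    # on hdss7-22

route del -net 172.7.22.0/24 gw 10.4.7.22    # on hdss7-21

3. Change the network config on an etcd node:

./etcdctl get /coreos.com/network/config

./etcdctl rm /coreos.com/network/config

./etcdctl set /coreos.com/network/config '{"Network": "172.7.0.0/16", "Backend": {"Type": "VxLAN"}}'

4. Start flanneld again:

supervisorctl start flanneld-7-21    # on hdss7-21

supervisorctl start flanneld-7-22    # on hdss7-22

5. ifconfig now shows an extra flannel.1 device, and route -n no longer lists the host-gw routes through eth0; the other node's Pod subnet is reached through flannel.1 instead.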
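Step 3 only changes the JSON value stored under /coreos.com/network/config. A minimal sketch that builds that value for the two backends covered so far; flannel_config is a hypothetical helper, and it emits the lowercase type names used in flannel's documentation:

```shell
#!/usr/bin/env bash
# Sketch: build the value stored under /coreos.com/network/config.
# flannel_config is a hypothetical helper; only host-gw and vxlan are handled.
flannel_config() {  # flannel_config NETWORK BACKEND
  local network=$1 backend=$2
  case $backend in
    host-gw|vxlan)
      printf '{"Network": "%s", "Backend": {"Type": "%s"}}\n' "$network" "$backend" ;;
    *)
      echo "unsupported backend in this sketch: $backend" >&2
      return 1 ;;
  esac
}

flannel_config 172.7.0.0/16 vxlan
```

It can feed the etcd v2 command used above, for example `./etcdctl set /coreos.com/network/config "$(flannel_config 172.7.0.0/16 vxlan)"`.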

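2.1.17.The flannel direct-routing model (automatic selection)

Similar to MySQL's MIXED binlog format: peers on the same layer-2 network are reached with direct routes as in host-gw, and all other peers through VxLAN. The network config in etcd becomes:

'{"Network": "172.7.0.0/16", "Backend": {"Type": "VxLAN","DirectRouting": true}}'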
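The automatic selection above can be pictured as a per-peer decision. A minimal sketch that approximates it with a same-subnet comparison (flannel itself consults the host's routing table, so this is a simplification); ip2int, same_subnet and route_mode are hypothetical helpers, and 10.4.8.22 is a made-up peer on another network:

```shell
#!/usr/bin/env bash
# Sketch of the DirectRouting idea: a peer whose host IP is on our layer-2
# subnet gets a direct route (as with host-gw); any other peer goes through
# the VXLAN device flannel.1. All helper names are hypothetical.
ip2int() {  # dotted quad -> 32-bit integer
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

same_subnet() {  # same_subnet IP_A IP_B PREFIX_LEN
  local mask=$(( (0xFFFFFFFF << (32 - $3)) & 0xFFFFFFFF ))
  (( ($(ip2int "$1") & mask) == ($(ip2int "$2") & mask) ))
}

route_mode() {  # route_mode LOCAL_IP PEER_IP PREFIX_LEN
  if same_subnet "$1" "$2" "$3"; then echo direct; else echo vxlan; fi
}

route_mode 10.4.7.21 10.4.7.22 24   # direct: both in 10.4.7.0/24
route_mode 10.4.7.21 10.4.8.22 24   # vxlan: different network
```

In this cluster both nodes share 10.4.7.0/24, so with DirectRouting enabled the traffic between them would take direct routes and VxLAN would only matter for nodes added on another network.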

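2.2.The K8s service discovery add-on: CoreDNS

Add-ons that implement DNS in K8s:

- kube-dns: Kubernetes v1.2 to v1.10

- CoreDNS: Kubernetes v1.11 to the present

Note: DNS in K8s is not a cure-all! It should only be responsible for automatically maintaining the mapping between "service name" and "cluster IP".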

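2.2.1.Deploying an internal HTTP endpoint for K8s resource manifests

Note: on the ops host hdss7-200, configure an nginx virtual host that serves as the single entry point for accessing K8s resource manifests.

2.2.1.1.Configuring nginx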

[root@hdss7-200 html]# vi /etc/nginx/conf.d/k8s-yaml.od.com.conf

server {
    listen       80;
    server_name  k8s-yaml.od.com;

    location / {
        autoindex on;
        default_type text/plain;
        root /data/k8s-yaml;
    }
}

[root@hdss7-200 html]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

[root@hdss7-200 html]# nginx -s reload

Create the manifest directory and its coredns subdirectory:

[root@hdss7-200 data]# mkdir /data/k8s-yaml

[root@hdss7-200 data]# cd k8s-yaml/

[root@hdss7-200 k8s-yaml]# mkdir coredns

2.2.1.2.Configuring DNS resolution

[root@hdss7-11 ~]# vi /var/named/od.com.zone

$ORIGIN od.com.
$TTL 600    ; 10 minutes
@           IN SOA  dns.od.com. dnsadmin.od.com. (
                    2019111003 ; serial
                    10800      ; refresh (3 hours)
                    900        ; retry (15 minutes)
                    604800     ; expire (1 week)
                    86400      ; minimum (1 day)
                    )
            NS   dns.od.com.
$TTL 60     ; 1 minute
dns                A    10.4.7.11
harbor             A    10.4.7.200
k8s-yaml           A    10.4.7.200

[root@hdss7-11 ~]# systemctl restart named

[root@hdss7-11 ~]# dig -t A k8s-yaml.od.com @10.4.7.11 +short

10.4.7.200

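2.2.1.3.Browsing to k8s-yaml.od.com

You should see all the directories and YAML files.

2.2.2.Deploying CoreDNS

Highly recommended reading: instructor Huang's article on how in-cluster DNS resolution works in Kubernetes, its drawbacks, and how to optimize it.

2.2.2.1.Pulling the Docker image, tagging it and pushing it to Harbor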

[root@hdss7-200 ~]# docker pull coredns/coredns:1.6.1

[root@hdss7-200 coredns]# docker tag c0f6e815079e harbor.od.com/public/coredns:v1.6.1

[root@hdss7-200 coredns]# docker push harbor.od.com/public/coredns:v1.6.1

2.2.2.2.Preparing the resource manifests

Reference: https://github.com/kubernetes/kubernetes/blob/master/cluster/addons/dns/coredns/coredns.yaml.base

rbac.yaml

[root@hdss7-200 coredns]# vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: Reconcile
  name: system:coredns
rules:
- apiGroups:
  - ""
  resources:
  - endpoints
  - services
  - pods
  - namespaces
  verbs:
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
  labels:
    kubernetes.io/bootstrapping: rbac-defaults
    addonmanager.kubernetes.io/mode: EnsureExists
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
- kind: ServiceAccount
  name: coredns
  namespace: kube-system

cm.yaml

[root@hdss7-200 coredns]# vi cm.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        log
        health
        ready
        kubernetes cluster.local 192.168.0.0/16
        forward . 10.4.7.11
        cache 30
        loop
        reload
        loadbalance
    }

dp.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/name: "CoreDNS"
spec:
  replicas: 1
  selector:
    matchLabels:
      k8s-app: coredns
  template:
    metadata:
      labels:
        k8s-app: coredns
    spec:
      priorityClassName: system-cluster-critical
      serviceAccountName: coredns
      containers:
      - name: coredns
        image: harbor.od.com/public/coredns:v1.6.1
        args:
        - -conf
        - /etc/coredns/Corefile
        volumeMounts:
        - name: config-volume
          mountPath: /etc/coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 5
      dnsPolicy: Default
      volumes:
      - name: config-volume
        configMap:
          name: coredns
          items:
          - key: Corefile
            path: Corefile

svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: coredns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "CoreDNS"
spec:
  selector:
    k8s-app: coredns
  clusterIP: 192.168.0.2
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
  - name: metrics
    port: 9153
    protocol: TCP

2.2.2.3.Applying the resource manifests

Apply them on any compute node:

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/rbac.yaml

serviceaccount/coredns created

clusterrole.rbac.authorization.k8s.io/system:coredns created

clusterrolebinding.rbac.authorization.k8s.io/system:coredns created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/cm.yaml

configmap/coredns created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/dp.yaml

deployment.apps/coredns created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/svc.yaml

service/coredns created
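The four apply commands above can be wrapped in a loop that keeps the same order (RBAC and ServiceAccount, ConfigMap, Deployment, Service). A minimal sketch; apply_manifests and the DRY_RUN variable are hypothetical, and with DRY_RUN=1 the kubectl commands are only printed:

```shell
#!/usr/bin/env bash
# Sketch: apply manifests from the k8s-yaml site in a fixed order.
# apply_manifests and DRY_RUN are hypothetical; DRY_RUN=1 only prints.
apply_manifests() {  # apply_manifests BASE_URL NAME...
  local base=$1 name
  shift
  for name in "$@"; do
    if [ "${DRY_RUN:-0}" = 1 ]; then
      echo "kubectl apply -f ${base}/${name}.yaml"
    else
      kubectl apply -f "${base}/${name}.yaml" || return 1   # stop at first failure
    fi
  done
}

DRY_RUN=1 apply_manifests http://k8s-yaml.od.com/coredns rbac cm dp svc
```

Without DRY_RUN, running it on a compute node issues the same four kubectl apply calls shown above and stops at the first one that fails.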

2.2.2.4.Checking the created resources

[root@hdss7-21 ~]# kubectl get all -n kube-system

NAME READY STATUS RESTARTS AGE

pod/coredns-6b6c4f9648-wrrbt 1/1 Running 0 111s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/coredns ClusterIP 192.168.0.2 <none> 53/UDP,53/TCP,9153/TCP 99s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/coredns 1/1 1 1 111s

NAME DESIRED CURRENT READY AGE

replicaset.apps/coredns-6b6c4f9648 1 1 1 111s

Detailed view:

[root@hdss7-21 ~]# kubectl get all -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/coredns-6b6c4f9648-wrrbt 1/1 Running 0 4m56s 172.7.21.4 hdss7-21.host.com <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/coredns ClusterIP 192.168.0.2 <none> 53/UDP,53/TCP,9153/TCP 4m44s k8s-app=coredns

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/coredns 1/1 1 1 4m56s coredns harbor.od.com/public/coredns:v1.6.1 k8s-app=coredns

NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR

replicaset.apps/coredns-6b6c4f9648 1 1 1 4m56s coredns harbor.od.com/public/coredns:v1.6.1 k8s-app=coredns,pod-template-hash=6b6c4f9648

2.2.2.5.Verifying CoreDNS

[root@hdss7-21 ~]# dig -t A www.baidu.com @192.168.0.2 +short

www.a.shifen.com.

39.156.66.18

39.156.66.14

[root@hdss7-21 ~]# dig -t A hdss7-21.host.com @192.168.0.2 +short

10.4.7.21 //resolvable because the self-hosted DNS server (10.4.7.11) is CoreDNS's upstream

[root@hdss7-21 ~]# kubectl get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 192.168.0.1 <none> 443/TCP 7d <none>

[root@hdss7-21 ~]# kubectl get pods -n kube-public

NAME READY STATUS RESTARTS AGE

nginx-dp-5dfc689474-ggsn2 1/1 Running 0 7h23m

nginx-dp-5dfc689474-hw6vm 1/1 Running 0 7h8m

Check:

[root@hdss7-21 ~]# kubectl expose deployment nginx-dp --port=80 -n kube-public

[root@hdss7-21 ~]# kubectl get svc -o wide -n kube-public

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

nginx-dp ClusterIP 192.168.95.151 <none> 80/TCP 7h21m app=nginx-dp

Verify:

[root@hdss7-21 ~]# dig -t A nginx-dp @192.168.0.2 +short    # no answer: dig does not apply a search list, so the short name is not expanded

[root@hdss7-21 ~]# dig -t A nginx-dp.kube-public.svc.cluster.local. @192.168.0.2 +short

192.168.95.151

Verify from a Pod on one of the hosts.

Check:

[root@hdss7-21 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-ds-d5kl8 1/1 Running 0 120m 172.7.22.2 hdss7-22.host.com <none> <none>

nginx-ds-jtn62 1/1 Running 0 120m 172.7.21.2 hdss7-21.host.com <none> <none>

Enter a container on the host:

[root@hdss7-21 ~]# kubectl exec -ti nginx-ds-jtn62 -- /bin/bash

root@nginx-ds-jtn62:/#

Verify:

root@nginx-ds-jtn62:/# curl 192.168.95.151

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

root@nginx-ds-jtn62:/# curl nginx-dp.kube-public

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

Why is no FQDN needed inside the container?

Because of the search list in the container's /etc/resolv.conf:

root@nginx-ds-jtn62:/# cat /etc/resolv.conf

nameserver 192.168.0.2

search default.svc.cluster.local svc.cluster.local cluster.local host.com

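options ndots:5 //a name with fewer than 5 dots is first tried with each search domain appended; K8s sets 5 by default, which costs extra lookups (see instructor Huang's article)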
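The search list and ndots above decide which names the resolver actually queries. A minimal sketch of that order, assuming glibc-style behavior (fewer dots than ndots: search domains first, then the bare name; otherwise the bare name first; a trailing dot disables the search list); query_order is a hypothetical helper that only lists candidates, while the real resolver stops at the first answer:

```shell
#!/usr/bin/env bash
# Sketch: the order in which a glibc-style resolver tries names, given the
# "search" domains and "ndots" from /etc/resolv.conf.
# query_order is a hypothetical helper; it lists candidates only.
query_order() {  # query_order NAME NDOTS SEARCH_DOMAIN...
  local name=$1 ndots=$2 domain
  shift 2
  if [[ $name == *. ]]; then        # trailing dot: absolute name, no search list
    echo "$name"
    return
  fi
  local dots=${name//[!.]/}         # keep only the dots so they can be counted
  if (( ${#dots} >= ndots )); then echo "$name."; fi   # enough dots: as-is first
  for domain in "$@"; do
    echo "$name.$domain."
  done
  if (( ${#dots} < ndots )); then echo "$name."; fi    # too few dots: as-is last
}

query_order nginx-dp.kube-public 5 default.svc.cluster.local svc.cluster.local cluster.local host.com
# nginx-dp.kube-public.default.svc.cluster.local.
# nginx-dp.kube-public.svc.cluster.local.
# nginx-dp.kube-public.cluster.local.
# nginx-dp.kube-public.host.com.
# nginx-dp.kube-public.
```

The second candidate is the Service record CoreDNS serves, which is why curl nginx-dp.kube-public works; an external name such as www.baidu.com (2 dots) walks all four search domains before being tried as-is, which is the inefficiency ndots:5 causes.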

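2.3.The K8s service exposure add-on: Traefik

Motivation: at this point the name cannot be resolved from outside the cluster, because CoreDNS only answers queries inside the cluster:

[root@hdss7-21 ~]# curl nginx-dp.kube-public.svc.cluster.local.

curl: (6) Could not resolve host: nginx-dp.kube-public.svc.cluster.local.; Unknown error

Background: in the examples above, K8s DNS lets services be discovered automatically "inside" the cluster. How, then, can services be used and accessed from "outside" the K8s cluster?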

2.3.1.NodePort

注意:无法使用kube-proxy的ipvs模型,只能用iptables模型,调度算法也只支持 RR。

2.3.1.1.Modify the nginx-ds Service manifest

2.3.1.2.Recreate the nginx-ds Service resource

2.3.1.3.Check the Service

2.3.1.4.Access from a browser

Omitted; to be added in a later update.
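Because the NodePort steps are omitted, here is a hedged sketch of what the modified Service manifest could look like, rendered by a small bash function. The selector label app: nginx-ds, the namespace and nodePort 30080 are assumptions, not values taken from this cluster. 30080 lies in the default NodePort range 30000-32767. If kube-apiserver runs with a different --service-node-port-range, the port must fall inside that range instead. render_nodeport_svc is a hypothetical helper.

```bash
#!/usr/bin/env bash
# render_nodeport_svc NAME NAMESPACE APP_LABEL PORT NODE_PORT
# Prints a Service manifest of type NodePort (all values are hypothetical).
render_nodeport_svc() {
  local name=$1 ns=$2 app=$3 port=$4 node_port=$5
  cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${name}
  namespace: ${ns}
spec:
  type: NodePort
  selector:
    app: ${app}
  ports:
  - protocol: TCP
    port: ${port}
    targetPort: ${port}
    nodePort: ${node_port}
EOF
}

# Example (assumed labels and port):
# render_nodeport_svc nginx-ds default nginx-ds 80 30080 | kubectl apply -f -
```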

2.3.2.Deploy traefik (ingress controller)

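Notes:

- Ingress can only schedule and expose layer-7 applications, specifically HTTP and HTTPS.

- Ingress is one of the standard resource types of the Kubernetes API and a core resource. It is essentially a set of rules, keyed on domain name and URL path, that forward user requests to a specified Service.

- It forwards request traffic from outside the cluster to Services inside it, which is how services get exposed.

- An Ingress controller is the component that listens on a socket on behalf of Ingress resources and routes traffic according to the Ingress rule-matching mechanism.

- Put plainly, there is nothing mysterious about Ingress: it is basically an nginx plus a bit of Go code.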
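To illustrate rules keyed on domain name and URL path, the following bash sketch picks a backend for a request the way a simple Ingress controller might. The host must match exactly, and the longest matching path prefix wins. The rule format (one "host path service:port" per line) and the function name route are hypothetical. Real controllers such as Traefik have their own matching details.

```bash
#!/usr/bin/env bash
# route HOST PATH < RULES
# RULES (hypothetical format): one "host path service:port" rule per line.
# Prints the backend whose host matches exactly and whose path is the
# longest prefix of PATH; returns 1 if no rule matches.
route() {
  local req_host=$1 req_path=$2
  local host path backend best="" best_len=-1
  while read -r host path backend; do
    [[ $host == "$req_host" ]] || continue
    # prefix match on the URL path; prefer the most specific rule
    if [[ $req_path == "$path"* ]] && (( ${#path} > best_len )); then
      best=$backend
      best_len=${#path}
    fi
  done
  [[ -n $best ]] || return 1
  echo "$best"
}

# Example with the two Ingress rules defined later in this document:
# printf '%s\n' 'traefik.od.com / traefik-ingress-service:8080' \
#   'dashboard.od.com / kubernetes-dashboard:443' | route dashboard.od.com /
```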

2.3.2.1.Prepare the traefik image, tag it, and push it to the harbor registry

On the ops host hdss7-200:

[root@hdss7-200 k8s-yaml]# docker pull traefik:v1.7.2-alpine

[root@hdss7-200 k8s-yaml]# docker images|grep traefik

traefik v1.7.2-alpine add5fac61ae5 13 months ago 72.4MB

[root@hdss7-200 k8s-yaml]# docker tag add5fac61ae5 harbor.od.com/public/traefik:v1.7.2

[root@hdss7-200 k8s-yaml]# docker push harbor.od.com/public/traefik:v1.7.2

The push refers to repository [harbor.od.com/public/traefik]

a02beb48577f: Pushed

ca22117205f4: Pushed

3563c211d861: Pushed

df64d3292fd6: Pushed

v1.7.2: digest: sha256:6115155b261707b642341b065cd3fac2b546559ba035d0262650b3b3bbdd10ea size: 1157

2.3.2.2.Prepare the resource manifests

On the ops host hdss7-200:

Based on the official YAML files.

rbac.yaml

[root@hdss7-200 k8s-yaml]# mkdir traefik

[root@hdss7-200 k8s-yaml]# cd traefik/

[root@hdss7-200 traefik]# vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: traefik-ingress-controller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: traefik-ingress-controller
rules:
  - apiGroups:
      - ""
    resources:
      - services
      - endpoints
      - secrets
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - extensions
    resources:
      - ingresses
    verbs:
      - get
      - list
      - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: traefik-ingress-controller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: traefik-ingress-controller
subjects:
- kind: ServiceAccount
  name: traefik-ingress-controller
  namespace: kube-system

ds.yaml

[root@hdss7-200 traefik]# vi ds.yaml

apiVersion: extensions/v1beta1
kind: DaemonSet
metadata:
  name: traefik-ingress
  namespace: kube-system
  labels:
    k8s-app: traefik-ingress
spec:
  template:
    metadata:
      labels:
        k8s-app: traefik-ingress
        name: traefik-ingress
    spec:
      serviceAccountName: traefik-ingress-controller
      terminationGracePeriodSeconds: 60
      containers:
      - image: harbor.od.com/public/traefik:v1.7.2
        name: traefik-ingress
        ports:
        - name: controller
          containerPort: 80
          hostPort: 81
        - name: admin-web
          containerPort: 8080
        securityContext:
          capabilities:
            drop:
            - ALL
            add:
            - NET_BIND_SERVICE
        args:
        - --api
        - --kubernetes
        - --logLevel=INFO
        - --insecureskipverify=true
        - --kubernetes.endpoint=https://10.4.7.10:7443
        - --accesslog
        - --accesslog.filepath=/var/log/traefik_access.log
        - --traefiklog
        - --traefiklog.filepath=/var/log/traefik.log
        - --metrics.prometheus

svc.yaml

[root@hdss7-200 traefik]# vi svc.yaml

kind: Service
apiVersion: v1
metadata:
  name: traefik-ingress-service
  namespace: kube-system
spec:
  selector:
    k8s-app: traefik-ingress
  ports:
    - protocol: TCP
      port: 80
      name: controller
    - protocol: TCP
      port: 8080
      name: admin-web

ingress.yaml

[root@hdss7-200 traefik]# vi ingress.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: traefik-web-ui
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: traefik.od.com
    http:
      paths:
      - path: /
        backend:
          serviceName: traefik-ingress-service
          servicePort: 8080

2.3.2.3.Apply the resource manifests

On any compute node:

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/rbac.yaml

serviceaccount/traefik-ingress-controller created

clusterrole.rbac.authorization.k8s.io/traefik-ingress-controller created

clusterrolebinding.rbac.authorization.k8s.io/traefik-ingress-controller created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/ds.yaml

daemonset.extensions/traefik-ingress created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/svc.yaml

service/traefik-ingress-service created

[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/ingress.yaml

ingress.extensions/traefik-web-ui created

2.3.2.4.Check the created resources

[root@hdss7-21 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6b6c4f9648-wrrbt 1/1 Running 0 108m

traefik-ingress-9z6wd 1/1 Running 0 10m

traefik-ingress-ksznv 1/1 Running 0 10m

Error:

[root@hdss7-21 ~]# kubectl describe pods traefik-ingress-ksznv -n kube-system

Warning FailedCreatePodSandBox 6m23s kubelet, hdss7-21.host.com Failed create pod sandbox: rpc error: code = Unknown desc = failed to start sandbox container for pod "traefik-ingress-ksznv": Error response from daemon: driver failed programming external connectivity on endpoint k8s_POD_traefik-ingress-ksznv_kube-system_d1389546-d27b-47cd-92c1-f5a8963043fd_0 (2f032861a4eb0e5240554e388b8ae8a5efd9ead3c56e50840aacdf43570c434b): (iptables failed: iptables --wait -t filter -A DOCKER ! -i docker0 -o docker0 -p tcp -d 172.7.21.5 --dport 80 -j ACCEPT: iptables: No chain/target/match by that name.

Fix:

systemctl restart docker.service
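The error says the DOCKER chain is missing from the filter table. Docker creates that chain when the daemon starts, which is why restarting it helps. A small bash check like the one below can confirm the diagnosis from iptables-save output before you restart Docker on a node. The function name has_docker_chain is hypothetical.

```bash
#!/usr/bin/env bash
# has_docker_chain < IPTABLES_SAVE_OUTPUT
# Succeeds if the dump declares a chain named exactly DOCKER
# (iptables-save writes chain declarations as ":NAME POLICY [pkts:bytes]").
has_docker_chain() {
  grep -q '^:DOCKER '
}

# Example on a compute node (run as root):
# iptables-save -t filter | has_docker_chain || systemctl restart docker.service
```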

2.3.3.Add the DNS record

[root@hdss7-11 ~]# vi /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019111004 ; serial

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

                                NS   dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

harbor A 10.4.7.200

k8s-yaml A 10.4.7.200

traefik A 10.4.7.10

[root@hdss7-11 ~]# systemctl restart named

2.3.4.Configure the reverse proxy

Note: configure this on both hdss7-11 and hdss7-12.

[root@hdss7-11 ~]# vi /etc/nginx/conf.d/od.com.conf

upstream default_backend_traefik {

server 10.4.7.21:81 max_fails=3 fail_timeout=10s;

server 10.4.7.22:81 max_fails=3 fail_timeout=10s;

}

server {

server_name *.od.com;

location / {

proxy_pass http://default_backend_traefik;

proxy_set_header Host $http_host;

proxy_set_header x-forwarded-for $proxy_add_x_forwarded_for;

}

}

[root@hdss7-11 ~]# nginx -t

[root@hdss7-11 ~]# nginx -s reload
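The upstream lists every compute node on port 81, the hostPort that ds.yaml maps to Traefik's container port 80. When nodes are added, the block can be regenerated with a helper such as the hypothetical render_upstream below. The max_fails and fail_timeout values are copied from the config above.

```bash
#!/usr/bin/env bash
# render_upstream NAME PORT NODE_IP...
# Prints an nginx upstream block with one server line per compute node.
render_upstream() {
  local name=$1 port=$2 ip
  shift 2
  printf 'upstream %s {\n' "$name"
  for ip in "$@"; do
    printf '    server %s:%s max_fails=3 fail_timeout=10s;\n' "$ip" "$port"
  done
  printf '}\n'
}

# Example reproducing the upstream above (81 = hostPort in ds.yaml):
# render_upstream default_backend_traefik 81 10.4.7.21 10.4.7.22
```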

2.3.5.Access from a browser

2.4.K8S GUI resource management add-on: Dashboard

2.4.1.Deploy kubernetes-dashboard

2.4.1.1.Prepare the dashboard image

[root@hdss7-200 harbor]# docker pull k8scn/kubernetes-dashboard-amd64:v1.8.3

v1.8.3: Pulling from k8scn/kubernetes-dashboard-amd64

a4026007c47e: Pull complete

Digest: sha256:ebc993303f8a42c301592639770bd1944d80c88be8036e2d4d0aa116148264ff

Status: Downloaded newer image for k8scn/kubernetes-dashboard-amd64:v1.8.3

docker.io/k8scn/kubernetes-dashboard-amd64:v1.8.3

[root@hdss7-200 harbor]# docker images|grep dashboard

k8scn/kubernetes-dashboard-amd64 v1.8.3 fcac9aa03fd6 18 months ago 102MB

[root@hdss7-200 harbor]# docker tag fcac9aa03fd6 harbor.od.com/public/dashboard:v1.8.3

[root@hdss7-200 harbor]# docker push harbor.od.com/public/dashboard:v1.8.3

The push refers to repository [harbor.od.com/public/dashboard.od.com]

23ddb8cbb75a: Pushed

v1.8.3: digest: sha256:ebc993303f8a42c301592639770bd1944d80c88be8036e2d4d0aa116148264ff size: 529

2.4.1.2.Create the resource manifests

Source of the resource manifests

On the ops host hdss7-200:

[root@hdss7-200 harbor]# mkdir -p /data/k8s-yaml/dashboard && cd /data/k8s-yaml/dashboard

[root@hdss7-200 dashboard]# vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
  name: kubernetes-dashboard-admin
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard-admin
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    addonmanager.kubernetes.io/mode: Reconcile
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard-admin
    namespace: kube-system

[root@hdss7-200 dashboard]# vi dp.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
    spec:
      priorityClassName: system-cluster-critical
      containers:
      - name: kubernetes-dashboard
        image: harbor.od.com/public/dashboard:v1.8.3
        resources:
          limits:
            cpu: 100m
            memory: 300Mi
          requests:
            cpu: 50m
            memory: 100Mi
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          # PLATFORM-SPECIFIC ARGS HERE
          - --auto-generate-certificates
        volumeMounts:
        - name: tmp-volume
          mountPath: /tmp
        livenessProbe:
          httpGet:
            scheme: HTTPS
            path: /
            port: 8443
          initialDelaySeconds: 30
          timeoutSeconds: 30
      volumes:
      - name: tmp-volume
        emptyDir: {}
      serviceAccountName: kubernetes-dashboard-admin
      tolerations:
      - key: "CriticalAddonsOnly"
        operator: "Exists"

[root@hdss7-200 dashboard]# vi svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  selector:
    k8s-app: kubernetes-dashboard
  ports:
  - port: 443
    targetPort: 8443

[root@hdss7-200 dashboard]# vi ingress.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: dashboard.od.com
    http:
      paths:
      - backend:
          serviceName: kubernetes-dashboard
          servicePort: 443

2.4.1.3.Apply the resource manifests

[root@hdss7-21 containers]# kubectl apply -f http://k8s-yaml.od.com/dashboard/rbac.yaml

serviceaccount/kubernetes-dashboard-admin created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard-admin created

[root@hdss7-21 containers]# kubectl apply -f http://k8s-yaml.od.com/dashboard/dp.yaml

deployment.apps/kubernetes-dashboard created

[root@hdss7-21 containers]# kubectl apply -f http://k8s-yaml.od.com/dashboard/svc.yaml

service/kubernetes-dashboard created

[root@hdss7-21 containers]# kubectl apply -f http://k8s-yaml.od.com/dashboard/ingress.yaml

ingress.extensions/kubernetes-dashboard created

2.4.1.4.Check the created resources

[root@hdss7-21 containers]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6b6c4f9648-wrrbt 1/1 Running 0 6d8h

kubernetes-dashboard-76dcdb4677-t4swp 0/1 ImagePullBackOff 0 10m

traefik-ingress-jsrcs 1/1 Running 0 24h

traefik-ingress-v4qxh 1/1 Running 0 24h

[root@hdss7-21 containers]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

coredns ClusterIP 192.168.0.2 <none> 53/UDP,53/TCP,9153/TCP 6d8h

kubernetes-dashboard ClusterIP 192.168.134.43 <none> 443/TCP 10m

traefik-ingress-service ClusterIP 192.168.130.180 <none> 80/TCP,8080/TCP 6d6h

[root@hdss7-21 containers]# kubectl get ingress -n kube-system

NAME HOSTS ADDRESS PORTS AGE

kubernetes-dashboard dashboard.od.com 80 11m

traefik-web-ui traefik.od.com 80 6d6h

2.4.2.Add the DNS record

On hdss7-11:

[root@hdss7-11 conf.d]# vi /var/named/od.com.zone

$ORIGIN od.com.

$TTL 600 ; 10 minutes

@ IN SOA dns.od.com. dnsadmin.od.com. (

2019111005 ; serial //roll the serial forward by one

10800 ; refresh (3 hours)

900 ; retry (15 minutes)

604800 ; expire (1 week)

86400 ; minimum (1 day)

)

                                NS   dns.od.com.

$TTL 60 ; 1 minute

dns A 10.4.7.11

harbor A 10.4.7.200

k8s-yaml A 10.4.7.200

traefik A 10.4.7.10

dashboard A 10.4.7.10

[root@hdss7-11 conf.d]# systemctl restart named

[root@hdss7-11 conf.d]# dig -t A dashboard.od.com @10.4.7.11 +short

10.4.7.10

[root@hdss7-21 containers]# dig -t A dashboard.od.com @192.168.0.2 +short

10.4.7.10

Note: in production, avoid restarting named directly; use rndc reload instead.
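Secondary servers only pick up a zone change when the serial increases, so the serial must be rolled forward on every edit. Below is a minimal bash sketch that bumps it by one. It assumes, as in the zone file above, that the serial sits on its own line commented "; serial". The function name bump_serial is hypothetical. It overwrites the file's contents instead of moving a temp file over it, so the zone file keeps its owner and permissions.

```bash
#!/usr/bin/env bash
# bump_serial ZONEFILE
# Finds the SOA serial on the line commented "; serial", adds 1, rewrites
# the file in place and prints the new serial.
bump_serial() {
  local zone=$1 old new tmp
  old=$(awk '/;[[:space:]]*serial/ { print $1; exit }' "$zone")
  [[ $old =~ ^[0-9]+$ ]] || { echo "no serial found in $zone" >&2; return 1; }
  new=$((old + 1))
  tmp=$(mktemp) || return 1
  awk -v old="$old" -v new="$new" \
    '!done && $1 == old && /serial/ { sub(old, new); done = 1 } { print }' \
    "$zone" > "$tmp"
  # overwrite the contents rather than mv, so named keeps read access
  cat "$tmp" > "$zone" && rm -f "$tmp"
  echo "$new"
}

# Example on hdss7-11:
# bump_serial /var/named/od.com.zone && rndc reload od.com
```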

2.4.3.Access from a browser

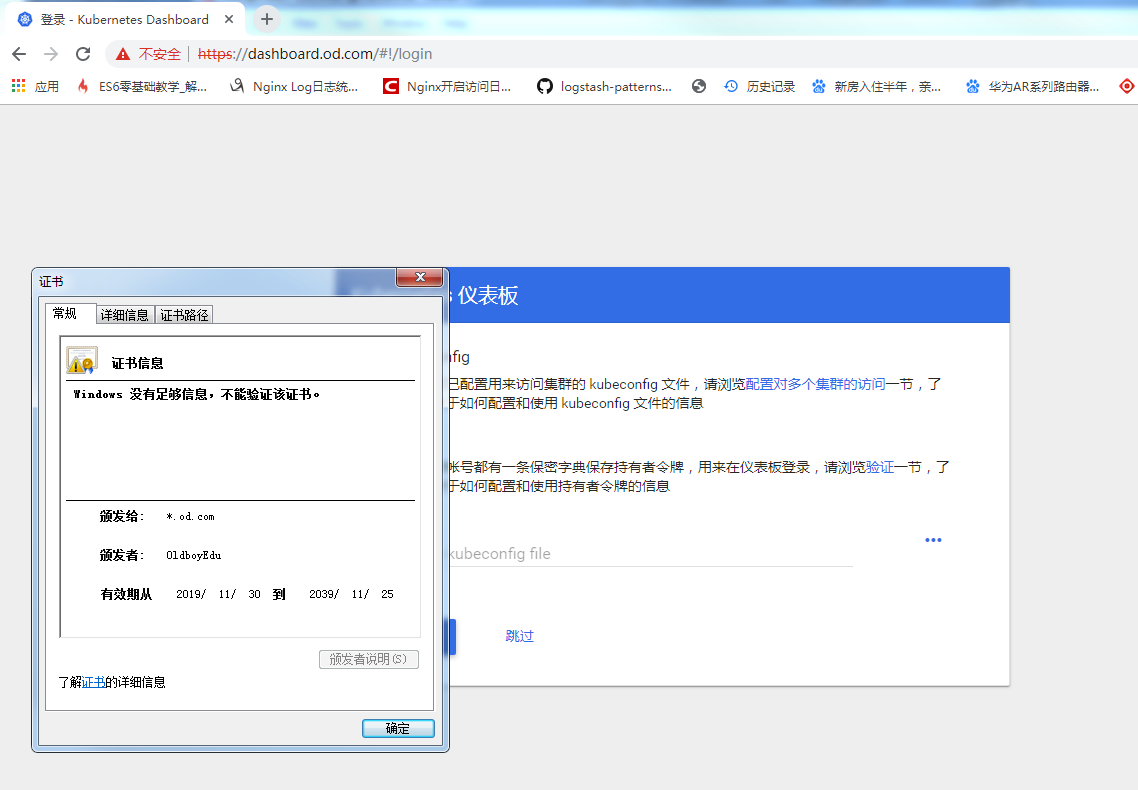

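Note: with dashboard v1.8.3 the login can simply be skipped. To log in with a token you need to upgrade to a newer version, and token login requires HTTPS. As the screenshot above shows, the browser reports the connection as not secure.

To get the token from the command line: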

[root@hdss7-21 conf]# kubectl get secret -n kube-system

NAME TYPE DATA AGE

coredns-token-mhstl kubernetes.io/service-account-token 3 6d10h

default-token-ntmvw kubernetes.io/service-account-token 3 13d

kubernetes-dashboard-admin-token-ws4ck kubernetes.io/service-account-token 3 137m

kubernetes-dashboard-key-holder Opaque 2 94m

traefik-ingress-controller-token-55b2f kubernetes.io/service-account-token 3 6d9h

[root@hdss7-21 conf]# kubectl describe secret kubernetes-dashboard-admin-token-ws4ck -n kube-system

2.4.4.Configure authentication

2.4.4.1.Sign a certificate with openssl (optional)

step1: First, create the private key for the dashboard.od.com site

[root@hdss7-200 certs]# (umask 077; openssl genrsa -out dashboard.od.com.key 2048)

Generating RSA private key, 2048 bit long modulus

....................+++

........+++

e is 65537 (0x10001)

step2: Use openssl to create the certificate signing request (CSR)

[root@hdss7-200 certs]# openssl req -new -key dashboard.od.com.key -out dashboard.od.com.csr -subj "/CN=dashboard.od.com/C=CN/ST=BJ/L=Beijing/O=OldboyEdu/OU=ops"

[root@hdss7-200 certs]#ls -l

-rw------- 1 root root 1675 Nov 30 13:18 dashboard.od.com.key

-rw-r--r-- 1 root root 1005 Nov 30 13:28 dashboard.od.com.csr

step3: Sign the certificate with openssl x509

[root@hdss7-200 certs]# openssl x509 -req -in dashboard.od.com.csr -CA ca.pem -CAkey ca-key.pem -CAcreateserial -out dashboard.od.com.crt -days 3650

Signature ok

subject=/CN=dashboard.od.com/C=CN/ST=BJ/L=Beijing/O=OldboyEdu/OU=ops

Getting CA Private Key

[root@hdss7-200 certs]#ls -l

-rw-r--r-- 1 root root 1196 Nov 30 13:36 dashboard.od.com.crt

-rw------- 1 root root 1675 Nov 30 13:18 dashboard.od.com.key

-rw-r--r-- 1 root root 1005 Nov 30 13:28 dashboard.od.com.csr

step4: Inspect the certificate

[root@hdss7-200 certs]# cfssl-certinfo -cert dashboard.od.com.crt
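Before wiring a certificate into nginx, it can be worth confirming two things: that it chains to the CA, and that it matches its private key. nginx rejects a key that does not match the certificate. The bash sketch below does both checks with standard openssl subcommands and assumes openssl is installed. The function name check_cert is hypothetical.

```bash
#!/usr/bin/env bash
# check_cert CA_CERT CERT KEY
# Prints "ok" if CERT was issued by CA_CERT and its public key matches KEY;
# otherwise prints the failed check and returns 1. Requires openssl.
check_cert() {
  local ca=$1 crt=$2 key=$3 crt_pub key_pub
  # 1) chain check against the self-built CA (ca.pem on hdss7-200)
  if ! openssl verify -CAfile "$ca" "$crt" >/dev/null 2>&1; then
    echo "not signed by CA"
    return 1
  fi
  # 2) the certificate's public key must equal the one derived from KEY
  crt_pub=$(openssl x509 -noout -pubkey -in "$crt")
  key_pub=$(openssl pkey -pubout -in "$key")
  if [ "$crt_pub" != "$key_pub" ]; then
    echo "key mismatch"
    return 1
  fi
  echo "ok"
}

# Example for the openssl-signed certificate above:
# check_cert ca.pem dashboard.od.com.crt dashboard.od.com.key
```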

2.4.4.2.Sign a certificate with cfssl

step1: Copy an existing CSR JSON file and change the domain name

[root@hdss7-200 certs]# cp client-csr.json od.com-csr.json

[root@hdss7-200 certs]# vi od.com-csr.json

{

"CN": "*.od.com",

"hosts": [

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "beijing",

"L": "beijing",

"O": "od",

"OU": "ops"

}

]

}

step2: Sign

[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server od.com-csr.json |cfssl-json -bare od.com

step3: Check the generated certificate

[root@hdss7-200 certs]# ls -l

-rw-r--r-- 1 root root 993 Nov 30 14:02 od.com.csr

-rw-r--r-- 1 root root 280 Nov 30 13:58 od.com-csr.json

-rw------- 1 root root 1679 Nov 30 14:02 od.com-key.pem

-rw-r--r-- 1 root root 1363 Nov 30 14:02 od.com.pem

2.4.4.3.Copy the certificate

On hdss7-11:

[root@hdss7-11 nginx]# ls

conf.d fastcgi.conf fastcgi_params koi-utf mime.types nginx.conf scgi_params uwsgi_params win-utf

default.d fastcgi.conf.default fastcgi_params.default koi-win mime.types.default nginx.conf.default scgi_params.default uwsgi_params.default

[root@hdss7-11 nginx]# mkdir certs

[root@hdss7-11 nginx]# cd certs/

[root@hdss7-11 certs]# scp hdss7-200:/opt/certs/od.com-key.pem .

[root@hdss7-11 certs]# scp hdss7-200:/opt/certs/od.com.pem .

2.4.4.4.Create the nginx configuration file

[root@hdss7-11 conf.d]# vi dashboard.od.com.conf

server {

listen 80;

server_name dashboard.od.com;

rewrite ^(.*)$ https://${server_name}$1 permanent;

}

server {

listen 443 ssl;

server_name dashboard.od.com;

ssl_certificate "certs/od.com.pem";

ssl_certificate_key "certs/od.com-key.pem";

ssl_session_cache shared:SSL:1m;

ssl_session_timeout 10m;

ssl_ciphers HIGH:!aNULL:!MD5;

ssl_prefer_server_ciphers on;

location / {

proxy_pass http://default_backend_traefik;

proxy_set_header Host $http_host;

proxy_set_header x-forwarded-for $proxy_add_x_forwarded_for;

}

}

[root@hdss7-11 nginx]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

[root@hdss7-11 nginx]# nginx -s reload

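2.4.4.5.Access from a browser

2.4.4.5.1.Access from a Windows browser

2.4.4.5.2.Export the CA certificate to the Windows desktop

Because the certificate is signed by our own CA, the CA certificate has to be imported into the local browser.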

[root@hdss7-200 certs]# sz ca.pem

2.4.4.5.3.On Windows, change the extension to .crt and install it

Note: this method does not work in newer browsers.

2.4.4.6.Test with a token

[root@hdss7-21 conf]# kubectl get secret -n kube-system

NAME TYPE DATA AGE

coredns-token-mhstl kubernetes.io/service-account-token 3 6d12h

default-token-ntmvw kubernetes.io/service-account-token 3 13d

kubernetes-dashboard-admin-token-ws4ck kubernetes.io/service-account-token 3 4h9m

kubernetes-dashboard-key-holder Opaque 2 3h25m

traefik-ingress-controller-token-55b2f kubernetes.io/service-account-token 3 6d10h

[root@hdss7-21 conf]# kubectl describe secret kubernetes-dashboard-admin-token-ws4ck -n kube-system

Name: kubernetes-dashboard-admin-token-ws4ck

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: kubernetes-dashboard-admin

kubernetes.io/service-account.uid: 80808715-32d9-41b1-bd78-7ed7ab3af849

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1346 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC1hZG1pbi10b2tlbi13czRjayIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC1hZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjgwODA4NzE1LTMyZDktNDFiMS1iZDc4LTdlZDdhYjNhZjg0OSIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlLXN5c3RlbTprdWJlcm5ldGVzLWRhc2hib2FyZC1hZG1pbiJ9.xfboNXWurS7FEEOstO85MRElasKlvy-gapGLLHPJYHWjPi03gl5OAWXvDQuDJ9vXBrY33jsCkuCj0BgTFMKgXFuQANAaQ3pmg8Vs5_ViW19n4z5QI0E8jfV0rV_vqEz-lc5oXEHtnfGMkkkdr7PkVlZI4PpZgAE6oLjFAoKcmYgTy8Q32EYZf1VmhneB_OdHIw_bh_L1M_HRo9q3bSWESOWWVS68tmW0ZBHphd-Ntt5XqgkJygTYgKEtY-K8DtE_8anJOT0c4hvlc1PTwp1xmbyKwvJgxMuEXiTnPndgHA5rq-8LwuXs8pDc3llRDYVfCutr4ik9KqUSP-Md7Txfow

Paste the token to log in.
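The token is a JWT: three base64url segments separated by dots, the middle one being a JSON payload. Decoding that payload is a quick way to check which ServiceAccount a token belongs to before pasting it. For the token above, the sub claim should read system:serviceaccount:kube-system:kubernetes-dashboard-admin. This minimal bash sketch only decodes; it does not verify the signature. The function name jwt_payload is hypothetical.

```bash
#!/usr/bin/env bash
# jwt_payload TOKEN
# Prints the decoded JSON payload (middle segment) of a JWT.
# It does NOT verify the signature.
jwt_payload() {
  local seg
  # base64url uses "-" and "_" where base64 uses "+" and "/"
  seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url also drops the "=" padding; restore it for base64 -d
  case $(( ${#seg} % 4 )) in
    2) seg="${seg}==" ;;
    3) seg="${seg}=" ;;
  esac
  printf '%s' "$seg" | base64 -d
}

# Example (the secret stores the token base64-encoded):
# jwt_payload "$(kubectl get secret kubernetes-dashboard-admin-token-ws4ck \
#   -n kube-system -o jsonpath='{.data.token}' | base64 -d)"
```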

2.4.5.Deploy heapster (to be deprecated upstream)

2.4.5.1.Prepare the heapster image (reaching quay.io from mainland China requires a proxy)

[root@hdss7-200 certs]# docker pull quay.io/bitnami/heapster:1.5.4

[root@hdss7-200 src]# docker tag c359b95ad38b harbor.od.com/public/heapster:v1.5.4

[root@hdss7-200 src]# docker push harbor.od.com/public/heapster:v1.5.4

2.4.5.2.Prepare the resource manifests

On hdss7-200:

[root@hdss7-200 k8s-yaml]# mkdir -p /data/k8s-yaml/dashboard/heapster

[root@hdss7-200 k8s-yaml]# cd dashboard/heapster/

[root@hdss7-200 heapster]# vi rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: heapster
  namespace: kube-system
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: heapster
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:heapster
subjects:
- kind: ServiceAccount
  name: heapster
  namespace: kube-system

[root@hdss7-200 heapster]# vi dp.yaml

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: heapster
  namespace: kube-system
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: heapster
    spec:
      serviceAccountName: heapster
      containers:
      - name: heapster
        image: harbor.od.com/public/heapster:v1.5.4
        imagePullPolicy: IfNotPresent
        command:
        - /opt/bitnami/heapster/bin/heapster
        - --source=kubernetes:https://kubernetes.default

[root@hdss7-200 heapster]# vi svc.yaml

apiVersion: v1
kind: Service
metadata:
  labels:
    task: monitoring
    # For use as a Cluster add-on (https://github.com/kubernetes/kubernetes/tree/master/cluster/addons)
    # If you are NOT using this as an addon, you should comment out this line.
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: Heapster
  name: heapster
  namespace: kube-system
spec:
  ports:
  - port: 80
    targetPort: 8082
  selector:
    k8s-app: heapster

2.4.5.3.Apply the resource manifests

On any compute node:

[root@hdss7-21 conf]# kubectl apply -f http://k8s-yaml.od.com/dashboard/heapster/rbac.yaml

serviceaccount/heapster created

clusterrolebinding.rbac.authorization.k8s.io/heapster created

[root@hdss7-21 conf]# kubectl apply -f http://k8s-yaml.od.com/dashboard/heapster/dp.yaml

deployment.extensions/heapster created

[root@hdss7-21 conf]# kubectl apply -f http://k8s-yaml.od.com/dashboard/heapster/svc.yaml

service/heapster created

Check:

[root@hdss7-21 conf]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6b6c4f9648-7mr4w 1/1 Running 0 3h55m

heapster-b5b9f794-gz6mf 1/1 Running 0 68s

kubernetes-dashboard-76dcdb4677-kncnz 1/1 Running 0 3h58m

traefik-ingress-jsrcs 1/1 Running 0 29h

traefik-ingress-v4qxh 1/1 Running 0 29h

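2.4.5.4.Restart the dashboard (the charts are for reference only)

3.Smooth rollback or upgrade of the K8S cluster

Note: in production, schedule the upgrade window according to business needs. hdss7-21 is used as the example here.

3.1.Environment

The cluster currently runs v1.15.2, and we will upgrade it to v1.15.4.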

[root@hdss7-21 conf]# kubectl get node

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master,node 13d v1.15.2

hdss7-22.host.com Ready master,node 13d v1.15.2

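3.2.Take the node being upgraded offline

Edit nginx.conf and comment out this node (omitted here).

Before the node is deleted there are two nodes, and Pods are spread across hdss7-21 and hdss7-22: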

[root@hdss7-21 conf]# kubectl get node

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready master,node 13d v1.15.2

hdss7-22.host.com Ready master,node 13d v1.15.2

[root@hdss7-21 conf]# kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-6b6c4f9648-7mr4w 1/1 Running 0 4h7m 172.7.22.6 hdss7-22.host.com <none> <none>

heapster-b5b9f794-gz6mf 1/1 Running 0 13m 172.7.21.4 hdss7-21.host.com <none> <none>

kubernetes-dashboard-76dcdb4677-kncnz 1/1 Running 0 4h11m 172.7.22.5 hdss7-22.host.com <none> <none>

traefik-ingress-jsrcs 1/1 Running 0 29h 172.7.21.5 hdss7-21.host.com <none> <none>

traefik-ingress-v4qxh 1/1 Running 0 29h 172.7.22.4 hdss7-22.host.com <none> <none>

After the node is deleted only one node remains, and all Pods are scheduled onto hdss7-22:

[root@hdss7-21 conf]# kubectl delete node hdss7-21.host.com

node "hdss7-21.host.com" deleted

[root@hdss7-21 conf]# kubectl get node

NAME STATUS ROLES AGE VERSION

hdss7-22.host.com Ready master,node 13d v1.15.2

[root@hdss7-21 conf]# kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-6b6c4f9648-7mr4w 1/1 Running 0 4h8m 172.7.22.6 hdss7-22.host.com <none> <none>

heapster-b5b9f794-h84z9 1/1 Running 0 24s 172.7.22.8 hdss7-22.host.com <none> <none>

kubernetes-dashboard-76dcdb4677-kncnz 1/1 Running 0 4h12m 172.7.22.5 hdss7-22.host.com <none> <none>

traefik-ingress-v4qxh 1/1 Running 0 29h 172.7.22.4 hdss7-22.host.com <none> <none>

[root@hdss7-21 conf]# dig -t A kubernetes.default.svc.cluster.local @192.168.0.2 +short //in-cluster services are not affected at all

192.168.0.1

3.3.Extract, rename, and create the symlink

Extract:

[root@hdss7-21 opt]# mkdir 123

[root@hdss7-21 opt]# cd src/

[root@hdss7-21 src]# tar xfv kubernetes-server-linux-amd64-v1.15.4.tar.gz -C /opt/123/

Rename:

[root@hdss7-21 src]# cd ../123/

[root@hdss7-21 123]# mv kubernetes/ ../kubernetes-v1.15.4

[root@hdss7-21 opt]# rm -rf 123/

Symlink:

[root@hdss7-21 opt]# ll

lrwxrwxrwx 1 root root 24 Nov 17 01:35 kubernetes -> /opt/kubernetes-v1.15.2/

drwxr-xr-x 4 root root 50 Nov 17 01:37 kubernetes-v1.15.2

drwxr-xr-x 4 root root 79 Sep 18 23:09 kubernetes-v1.15.4

[root@hdss7-21 opt]# rm -f kubernetes

[root@hdss7-21 opt]# ln -s /opt/kubernetes-v1.15.4/ /opt/kubernetes
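The version switch, and therefore a rollback, comes down to where /opt/kubernetes points. The rm and ln steps above can be folded into a single ln -sfn, sketched below as a hypothetical switch_k8s function. The -n flag matters: without it, ln would follow the existing link into the old directory and create the new link inside it. Rolling back means running the function again with /opt/kubernetes-v1.15.2.

```bash
#!/usr/bin/env bash
# switch_k8s LINK TARGET_DIR
# Repoints LINK at TARGET_DIR in one command and prints the new target.
switch_k8s() {
  local link=$1 target=$2
  [ -d "$target" ] || { echo "no such directory: $target" >&2; return 1; }
  # -f: replace an existing link; -n: do not descend into the directory
  #     the old link points to (otherwise ln creates the link inside it)
  ln -sfn "$target" "$link"
  readlink "$link"
}

# Upgrade:  switch_k8s /opt/kubernetes /opt/kubernetes-v1.15.4
# Rollback: switch_k8s /opt/kubernetes /opt/kubernetes-v1.15.2
```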

[root@hdss7-21 opt]# ll

total 4

lrwxrwxrwx 1 root root 24 Nov 17 01:35 kubernetes -> /opt/kubernetes-v1.15.4/

drwxr-xr-x 4 root root 76 Nov 30 16:07 kubernetes-v1.15.2

drwxr-xr-x 4 root root 79 Sep 18 23:09 kubernetes-v1.15.4

drwxr-xr-x 2 root root 4096 Nov 23 21:26 src

Remove unneeded files:

[root@hdss7-21 opt]# cd kubernetes

[root@hdss7-21 kubernetes]# ls

addons kubernetes-src.tar.gz LICENSES server

[root@hdss7-21 kubernetes]# rm -f kubernetes-src.tar.gz

[root@hdss7-21 kubernetes]# cd server/bin/

[root@hdss7-21 bin]# rm -fr *.tar

[root@hdss7-21 bin]# rm -fr *_tag

3.4.Copy the conf files, cert files, and sh scripts

[root@hdss7-21 bin]# mkdir conf

[root@hdss7-21 bin]# mkdir cert

[root@hdss7-21 bin]# cp /opt/kubernetes-v1.15.2/server/bin/cert/* ./cert/

[root@hdss7-21 bin]# cp /opt/kubernetes-v1.15.2/server/bin/conf/* ./conf/

[root@hdss7-21 bin]# cp /opt/kubernetes-v1.15.2/server/bin/*.sh .
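The same carry-over can be scripted so that it is repeatable on every node. The bash sketch below copies cert/, conf/ and the *.sh start scripts with cp -a, which also keeps the executable bit and timestamps. The function name carry_over is hypothetical, and the directory layout is the one used above.

```bash
#!/usr/bin/env bash
# carry_over OLD_BIN NEW_BIN
# Copies cert/, conf/ and the *.sh start scripts from the old server/bin
# directory into the new one, preserving modes and timestamps.
carry_over() {
  local old=$1 new=$2 f
  mkdir -p "$new/cert" "$new/conf" || return 1
  cp -a "$old/cert/." "$new/cert/" || return 1
  cp -a "$old/conf/." "$new/conf/" || return 1
  for f in "$old"/*.sh; do
    [ -e "$f" ] || continue      # no match: the glob stays unexpanded
    cp -a "$f" "$new/" || return 1
  done
}

# Example for the upgrade above:
# carry_over /opt/kubernetes-v1.15.2/server/bin /opt/kubernetes-v1.15.4/server/bin
```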

3.5.Restart the services and check

Note: in production, restart the services one at a time; etcd and flannel do not need to be restarted.

[root@hdss7-21 bin]# supervisorctl restart all

[root@hdss7-21 bin]# supervisorctl status

etcd-server-7-21 RUNNING pid 9595, uptime 0:04:40

flanneld-7-21 RUNNING pid 12236, uptime 0:00:35

kube-apiserver-7-21 RUNNING pid 9655, uptime 0:04:40

kube-controller-manager-7-21 RUNNING pid 9671, uptime 0:04:40

kube-kubelet-7-21 RUNNING pid 11628, uptime 0:01:55

kube-proxy-7-21 RUNNING pid 9691, uptime 0:04:40

kube-scheduler-7-21 RUNNING pid 9706, uptime 0:04:40
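When you restart one service at a time, as recommended for production, it helps to confirm after each step that no program is left in a state other than RUNNING. Here is a minimal bash sketch that reads supervisorctl status output. The function name all_running is hypothetical.

```bash
#!/usr/bin/env bash
# all_running < SUPERVISORCTL_STATUS_OUTPUT
# Prints each program whose state (2nd column) is not RUNNING and
# returns 1 if there is at least one; returns 0 when all are RUNNING.
all_running() {
  awk 'NF && $2 != "RUNNING" { print $1; bad = 1 } END { exit bad }'
}

# Example: restart one program, wait briefly, then check the node
# supervisorctl restart kube-apiserver-7-21 && sleep 10 && supervisorctl status | all_running
```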

[root@hdss7-21 bin]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

hdss7-21.host.com Ready <none> 7m26s v1.15.4

hdss7-22.host.com Ready master,node 13d v1.15.2
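Note that hdss7-21 now shows ROLES <none>. kubectl fills that column from node-role.kubernetes.io/<role> labels, and those labels disappeared with the Node object deleted in 3.2. Below is a hedged sketch for restoring them. It assumes the roles were originally set as the labels node-role.kubernetes.io/master and node-role.kubernetes.io/node, which is what the earlier master,node display suggests. role_label_cmds is a hypothetical helper that only prints the kubectl commands, so you can review them before running them.

```bash
#!/usr/bin/env bash
# role_label_cmds NODE ROLE...
# Prints one "kubectl label" command per ROLE for NODE (assumed label
# scheme node-role.kubernetes.io/<role>=). Pipe to "sh" to run them.
role_label_cmds() {
  local node=$1 role
  shift
  for role in "$@"; do
    printf 'kubectl label node %s node-role.kubernetes.io/%s= --overwrite\n' "$node" "$role"
  done
}

# Example: review first, then apply
# role_label_cmds hdss7-21.host.com master node
# role_label_cmds hdss7-21.host.com master node | sh
```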

[root@hdss7-21 bin]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-6b6c4f9648-7mr4w 1/1 Running 0 4h46m 172.7.22.6 hdss7-22.host.com <none> <none>

heapster-b5b9f794-h84z9 1/1 Running 0 37m 172.7.22.8 hdss7-22.host.com <none> <none>

kubernetes-dashboard-76dcdb4677-kncnz 1/1 Running 0 4h50m 172.7.22.5 hdss7-22.host.com <none> <none>

traefik-ingress-6jgm6 1/1 Running 0 8m52s 172.7.21.2 hdss7-21.host.com <none> <none>

traefik-ingress-v4qxh 1/1 Running 0 30h 172.7.22.4 hdss7-22.host.com <none> <none>