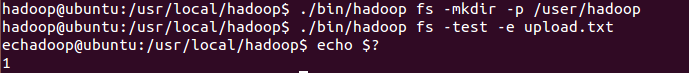

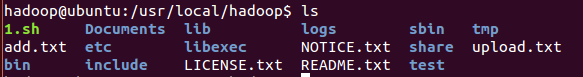

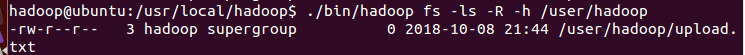

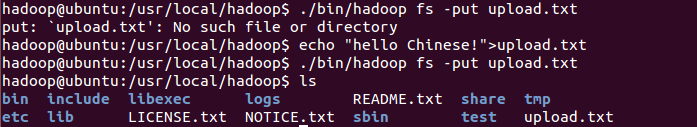

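(I) Implement the following functions in code, and complete the same tasks with the Shell commands provided by Hadoop:

(1) Upload any text file to HDFS. If the specified file already exists in HDFS, let the user choose whether to append to the end of the existing file or to overwrite it.

Uploading the file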

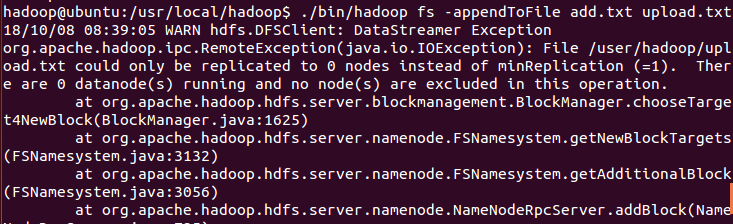

Appending to the file

Overwriting the file

Code:

    package org.apache.hadoop.examples;

    import java.io.FileInputStream;
    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class CopyFormLocalFile {
        // Check whether the path exists
        public static boolean test(Configuration conf, String path) {
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.exists(new Path(path));
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        // Copy the file to the specified path, overwriting if the path already exists
        public static void copyFromLoalFile(Configuration conf, String localFilePath, String remoteFilePath) {
            Path localPath = new Path(localFilePath);
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf)) {
                // First flag: keep the local source; second flag: overwrite the target
                fs.copyFromLocalFile(false, true, localPath, remotePath);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        // Append the contents of a local file to the remote file
        public static void appendToFile(Configuration conf, String localFilePath, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf);
                 FileInputStream in = new FileInputStream(localFilePath);
                 FSDataOutputStream out = fs.append(remotePath)) {
                byte[] data = new byte[1024];
                int read;
                while ((read = in.read(data)) > 0) {
                    out.write(data, 0, read);
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String localFilePath = "/usr/local/hadoop/add.txt";   // local path
            String remoteFilePath = "/user/hadoop/upload.txt";    // HDFS path
            String choice = "overwrite";                          // or "append"
            try {
                /* Check whether the file exists */
                boolean fileExists = false;
                if (CopyFormLocalFile.test(conf, remoteFilePath)) {
                    fileExists = true;
                    System.out.println(remoteFilePath + " already exists.");
                } else {
                    System.out.println(remoteFilePath + " does not exist.");
                }
                /* Handle each case */
                if (!fileExists) {
                    CopyFormLocalFile.copyFromLoalFile(conf, localFilePath, remoteFilePath);
                    System.out.println(localFilePath + " uploaded to " + remoteFilePath);
                } else if (choice.equals("overwrite")) { // overwrite chosen
                    CopyFormLocalFile.copyFromLoalFile(conf, localFilePath, remoteFilePath);
                    System.out.println(localFilePath + " overwrote " + remoteFilePath);
                } else if (choice.equals("append")) { // append chosen
                    CopyFormLocalFile.appendToFile(conf, localFilePath, remoteFilePath);
                    System.out.println(localFilePath + " appended to " + remoteFilePath);
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(2) Download a specified file from HDFS. If a local file with the same name as the file being downloaded already exists, automatically rename the downloaded file.
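The renaming rule in task (2) can be tried on its own, without a running HDFS. The following is a minimal sketch using only the Java standard library; the class name `LocalRenameSketch` and the method `nextFreePath` are illustrative names chosen here, and the `_0`, `_1`, ... suffix scheme is an assumption about how the rename should look.

```java
import java.io.File;
import java.io.IOException;
import java.nio.file.Files;

public class LocalRenameSketch {
    /** Returns the first of path, path_0, path_1, ... that does not exist yet. */
    public static String nextFreePath(String path) {
        if (!new File(path).exists()) {
            return path; // name is free, no rename needed
        }
        int i = 0;
        while (new File(path + "_" + i).exists()) {
            i++;
        }
        return path + "_" + i;
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical demo inside a throwaway temporary directory
        File dir = Files.createTempDirectory("rename-demo").toFile();
        File target = new File(dir, "file1.txt");
        System.out.println(nextFreePath(target.getPath())); // unchanged: nothing exists yet
        Files.createFile(target.toPath());
        System.out.println(nextFreePath(target.getPath())); // now carries the "_0" suffix
    }
}
```

Computing the free name before starting the download keeps the existing local file untouched, which is the point of the automatic rename.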

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.fs.FileSystem;
    import java.io.*;

    public class CopyToLocal {
        /**
         * Download a file to the local file system. If the local path already
         * exists, rename the download automatically.
         */
        public static void copyToLocal(Configuration conf, String remoteFilePath, String localFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf)) {
                File f = new File(localFilePath);
                /* If the file name exists, rename automatically (append _0, _1, ... to the name) */
                if (f.exists()) {
                    System.out.println(localFilePath + " already exists.");
                    int i = 0;
                    while (new File(localFilePath + "_" + i).exists()) {
                        i++;
                    }
                    localFilePath = localFilePath + "_" + i;
                    System.out.println("Renaming to: " + localFilePath);
                }
                // Download the file to the local file system
                Path localPath = new Path(localFilePath);
                fs.copyToLocalFile(remotePath, localPath);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String localFilePath = "/usr/local/hadoop/file1.txt"; // local path
            String remoteFilePath = "/user/hadoop/file1.txt";     // HDFS path
            try {
                CopyToLocal.copyToLocal(conf, remoteFilePath, localFilePath);
                System.out.println("Download complete");
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(3) Print the contents of a specified HDFS file to the terminal.

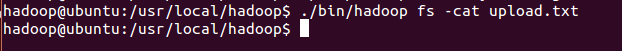

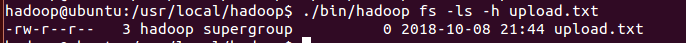

Source code:

    package org.apache.hadoop.examples;

    import java.io.*;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;

    public class cat {
        /**
         * Read the file contents
         */
        public static void cat(Configuration conf, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf);
                 FSDataInputStream in = fs.open(remotePath);
                 BufferedReader d = new BufferedReader(new InputStreamReader(in))) {
                String line;
                while ((line = d.readLine()) != null) {
                    System.out.println(line);
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/file1.txt"; // HDFS path
            try {
                System.out.println("Reading file: " + remoteFilePath);
                cat.cat(conf, remoteFilePath);
                System.out.println(" Read complete");
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(4) Display the read/write permissions, size, creation time, path, and other information of a specified file in HDFS.
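HDFS reports file times as epoch milliseconds (for example through `FileStatus.getModificationTime()`), so they need converting before they can be displayed as a date. The following is a minimal stand-alone sketch of that conversion using only the Java standard library; the class name `TimestampSketch` and the explicit time-zone parameter are assumptions made here for illustration, not part of the lab code.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.TimeZone;

public class TimestampSketch {
    /** Formats epoch milliseconds as yyyy-MM-dd HH:mm:ss in the given time zone. */
    public static String format(long epochMillis, String zoneId) {
        SimpleDateFormat f = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
        // Fixing the zone makes the output independent of the machine's default zone
        f.setTimeZone(TimeZone.getTimeZone(zoneId));
        return f.format(new Date(epochMillis));
    }

    public static void main(String[] args) {
        System.out.println(format(0L, "UTC")); // prints 1970-01-01 00:00:00
        System.out.println(format(System.currentTimeMillis(), "UTC"));
    }
}
```

Without the explicit zone, `SimpleDateFormat` uses the JVM default, so the same timestamp can print differently on differently configured machines.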

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.fs.FileSystem;
    import java.io.*;
    import java.text.SimpleDateFormat;

    public class fileList {
        /**
         * Display information about the specified file
         */
        public static void ls(Configuration conf, String remoteFilePath) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path remotePath = new Path(remoteFilePath);
                FileStatus[] fileStatuses = fs.listStatus(remotePath);
                for (FileStatus s : fileStatuses) {
                    System.out.println("Path: " + s.getPath().toString());
                    System.out.println("Permission: " + s.getPermission().toString());
                    System.out.println("Size: " + s.getLen());
                    /* The value returned is a timestamp; convert it to a date-time string */
                    long timeStamp = s.getModificationTime();
                    SimpleDateFormat format = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
                    String date = format.format(timeStamp);
                    System.out.println("Time: " + date);
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/file1.txt"; // HDFS path
            try {
                System.out.println("Reading file info: " + remoteFilePath);
                fileList.ls(conf, remoteFilePath);
                System.out.println(" Read complete");
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(5) Given a directory in HDFS, output the read/write permissions, size, creation time, path, and other information of all files in that directory. If an entry is itself a directory, recursively output the information for all files under it.

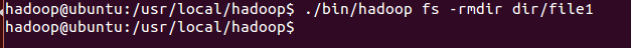

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.fs.FileSystem;
    import java.io.*;
    import java.text.SimpleDateFormat;

    public class ListDir {
        /**
         * Display information about all files under the specified directory (recursive)
         */
        public static void lsDir(Configuration conf, String remoteDir) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path dirPath = new Path(remoteDir);
                /* Recursively get all files under the directory */
                RemoteIterator<LocatedFileStatus> remoteIterator = fs.listFiles(dirPath, true);
                /* Output the information for each file */
                while (remoteIterator.hasNext()) {
                    FileStatus s = remoteIterator.next();
                    System.out.println("Path: " + s.getPath().toString());
                    System.out.println("Permission: " + s.getPermission().toString());
                    System.out.println("Size: " + s.getLen());
                    /* The value returned is a timestamp; convert it to a date-time string */
                    long timeStamp = s.getModificationTime();
                    SimpleDateFormat format = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");
                    String date = format.format(timeStamp);
                    System.out.println("Time: " + date);
                    System.out.println();
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteDir = "/user/hadoop"; // HDFS path
            try {
                System.out.println("(Recursive) Reading info for all files under directory: " + remoteDir);
                ListDir.lsDir(conf, remoteDir);
                System.out.println("Read complete");
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(6) Given the path of a file in HDFS, create and delete that file. If the directory containing the file does not exist, create the directory automatically.

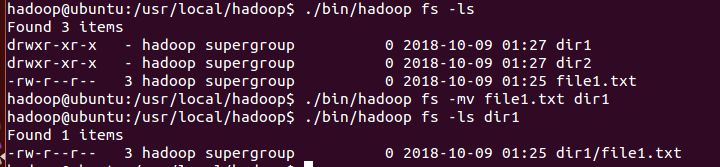

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import java.io.*;

    public class RemoveOrMake {
        /**
         * Check whether the path exists
         */
        public static boolean test(Configuration conf, String path) {
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.exists(new Path(path));
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Create a directory
         */
        public static boolean mkdir(Configuration conf, String remoteDir) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path dirPath = new Path(remoteDir);
                return fs.mkdirs(dirPath);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Create an empty file
         */
        public static void touchz(Configuration conf, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf)) {
                FSDataOutputStream outputStream = fs.create(remotePath);
                outputStream.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Delete a file
         */
        public static boolean rm(Configuration conf, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.delete(remotePath, false);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/dir3/file2.txt"; // HDFS path
            String remoteDir = "/user/hadoop/dir3";                // directory containing the HDFS path
            try {
                /* If the path exists, delete it; otherwise create it */
                if (RemoveOrMake.test(conf, remoteFilePath)) {
                    RemoveOrMake.rm(conf, remoteFilePath); // delete
                    System.out.println("Deleted file: " + remoteFilePath);
                } else {
                    if (!RemoveOrMake.test(conf, remoteDir)) { // create the directory if it does not exist
                        RemoveOrMake.mkdir(conf, remoteDir);
                        System.out.println("Created directory: " + remoteDir);
                    }
                    RemoveOrMake.touchz(conf, remoteFilePath);
                    System.out.println("Created file: " + remoteFilePath);
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(7) Given the path of a directory in HDFS, create and delete that directory. When creating the directory, if its parent directory does not exist, create the required directories automatically; when deleting the directory, let the user decide whether to delete it anyway when it is not empty.

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import java.io.*;
    import java.util.Scanner;

    public class RemoveOrMake {
        /**
         * Check whether the path exists
         */
        public static boolean test(Configuration conf, String path) {
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.exists(new Path(path));
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Check whether the directory is empty
         */
        public static boolean isDirEmpty(Configuration conf, String remoteDir) {
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.listStatus(new Path(remoteDir)).length == 0;
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Create a directory (mkdirs also creates any missing parent directories)
         */
        public static boolean mkdir(Configuration conf, String remoteDir) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path dirPath = new Path(remoteDir);
                return fs.mkdirs(dirPath);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Delete a directory. A non-empty directory is deleted only if the user confirms.
         */
        public static boolean rmDir(Configuration conf, String remoteDir) {
            boolean recursive = false;
            if (!isDirEmpty(conf, remoteDir)) {
                System.out.println("The directory is not empty. Delete it anyway? (1: delete / 2: cancel):");
                Scanner in = new Scanner(System.in);
                if (in.nextInt() != 1) {
                    return false;
                }
                recursive = true; // a non-empty directory requires a recursive delete
            }
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.delete(new Path(remoteDir), recursive);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteDir = "/user/hadoop/dir3"; // HDFS directory
            try {
                /* If the directory exists, delete it; otherwise create it */
                if (RemoveOrMake.test(conf, remoteDir)) {
                    if (RemoveOrMake.rmDir(conf, remoteDir)) {
                        System.out.println("Deleted directory: " + remoteDir);
                    } else {
                        System.out.println("Directory not deleted: " + remoteDir);
                    }
                } else {
                    RemoveOrMake.mkdir(conf, remoteDir);
                    System.out.println("Created directory: " + remoteDir);
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(8) Append content to a specified file in HDFS, with the user choosing whether the content is added at the beginning or at the end of the existing file.
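Adding content at the beginning of a file amounts to rebuilding it: set the old content aside, write the new content first, then write the old content back after it. The following is a minimal sketch of that idea on the local file system with `java.nio.file`; it is a local analogue and not HDFS code, and the class name `PrependSketch` and the method `prepend` are illustrative names chosen here.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

public class PrependSketch {
    /** Puts content in front of the existing bytes of file by rebuilding the file. */
    public static void prepend(Path file, String content) throws IOException {
        Path tmp = Files.createTempFile("prepend", ".tmp");
        // 1. Move the old content aside
        Files.move(file, tmp, StandardCopyOption.REPLACE_EXISTING);
        // 2. Recreate the file: new content first, then the old content
        try (OutputStream out = Files.newOutputStream(file)) {
            out.write(content.getBytes(StandardCharsets.UTF_8));
            Files.copy(tmp, out);
        }
        // 3. Remove the temporary copy only after the rebuild succeeded
        Files.deleteIfExists(tmp);
    }

    public static void main(String[] args) throws IOException {
        Path f = Files.createTempFile("demo", ".txt");
        Files.write(f, "old line\n".getBytes(StandardCharsets.UTF_8));
        prepend(f, "new line\n");
        // The file now holds the new line followed by the old line
        System.out.print(new String(Files.readAllBytes(f), StandardCharsets.UTF_8));
    }
}
```

Because the temporary copy is deleted only at the end, a failure during the rebuild still leaves the old content recoverable from the temporary file.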

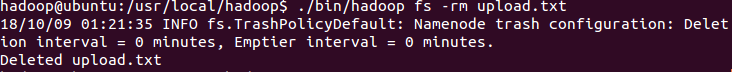

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.fs.FileSystem;
    import java.io.*;

    public class AppendToFile {
        /**
         * Check whether the path exists
         */
        public static boolean test(Configuration conf, String path) {
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.exists(new Path(path));
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Append text content
         */
        public static void appendContentToFile(Configuration conf, String content, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            /* Open an output stream whose output is appended to the end of the file */
            try (FileSystem fs = FileSystem.get(conf);
                 FSDataOutputStream out = fs.append(remotePath)) {
                out.write(content.getBytes());
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Append the contents of a local file
         */
        public static void appendToFile(Configuration conf, String localFilePath, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf);
                 FileInputStream in = new FileInputStream(localFilePath);
                 FSDataOutputStream out = fs.append(remotePath)) {
                byte[] data = new byte[1024];
                int read;
                while ((read = in.read(data)) > 0) {
                    out.write(data, 0, read);
                }
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Move a file to the local file system; the source file is deleted after the move
         */
        public static void moveToLocalFile(Configuration conf, String remoteFilePath, String localFilePath) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path remotePath = new Path(remoteFilePath);
                Path localPath = new Path(localFilePath);
                fs.moveToLocalFile(remotePath, localPath);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Create an empty file
         */
        public static void touchz(Configuration conf, String remoteFilePath) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path remotePath = new Path(remoteFilePath);
                FSDataOutputStream outputStream = fs.create(remotePath);
                outputStream.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/file1.txt"; // HDFS file
            String content = "Newly appended content ";
            String choice = "after";     // append to the end of the file
            // String choice = "before"; // add to the beginning of the file
            try {
                /* Check whether the file exists */
                if (!AppendToFile.test(conf, remoteFilePath)) {
                    System.out.println("File does not exist: " + remoteFilePath);
                } else {
                    if (choice.equals("after")) { // append to the end of the file
                        AppendToFile.appendContentToFile(conf, content, remoteFilePath);
                        System.out.println("Appended content to end of file: " + remoteFilePath);
                    } else if (choice.equals("before")) { // add to the beginning of the file
                        /* No API does this directly, so move the file to the local file system,
                           create a new HDFS file, then append the contents in order */
                        String localTmpPath = "/user/hadoop/tmp.txt"; // local temporary path
                        AppendToFile.moveToLocalFile(conf, remoteFilePath, localTmpPath); // move to local
                        AppendToFile.touchz(conf, remoteFilePath);                        // create a new file
                        AppendToFile.appendContentToFile(conf, content, remoteFilePath);  // write the new content first
                        AppendToFile.appendToFile(conf, localTmpPath, remoteFilePath);    // then write the original content
                        System.out.println("Added content to beginning of file: " + remoteFilePath);
                    }
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(9) Delete a specified file in HDFS.

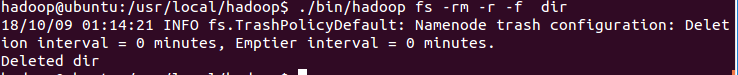

Source code:

    package org.apache.hadoop.examples;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class Remove {
        /**
         * Delete a file
         */
        public static boolean rm(Configuration conf, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf)) {
                return fs.delete(remotePath, false);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/dir3/file2.txt"; // HDFS path
            try {
                Remove.rm(conf, remoteFilePath); // delete
                System.out.println("Deleted file: " + remoteFilePath);
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(10) Move a file from a source path to a destination path within HDFS.

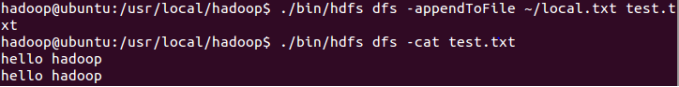

Source code:

    package org.apache.hadoop.examples;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.*;
    import org.apache.hadoop.fs.FileSystem;
    import java.io.*;

    public class MoveFile {
        /**
         * Move a file within HDFS
         */
        public static boolean mv(Configuration conf, String remoteFilePath, String remoteToFilePath) {
            try (FileSystem fs = FileSystem.get(conf)) {
                Path srcPath = new Path(remoteFilePath);
                Path dstPath = new Path(remoteToFilePath);
                return fs.rename(srcPath, dstPath);
            } catch (IOException e) {
                e.printStackTrace();
                return false;
            }
        }

        /**
         * Main function
         */
        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "hdfs:///user/hadoop/file1.txt"; // source HDFS path
            String remoteToFilePath = "hdfs:///user/hadoop/dir1";    // destination HDFS path
            try {
                if (MoveFile.mv(conf, remoteFilePath, remoteToFilePath)) {
                    System.out.println("Moved file " + remoteFilePath + " to " + remoteToFilePath);
                } else {
                    System.out.println("Operation failed (source file does not exist or move failed)");
                }
            } catch (Exception e) {
                e.printStackTrace();
            }
        }
    }

(II) Implement a class "MyFSDataInputStream" that extends "org.apache.hadoop.fs.FSDataInputStream", with the following requirement: implement a method "readLine()" that reads a specified HDFS file line by line. If the end of the file has been reached it returns null; otherwise it returns one line of text from the file.

Source code:

    package org.apache.hadoop.examples;

    import java.io.BufferedReader;
    import java.io.IOException;
    import java.io.InputStream;
    import java.io.InputStreamReader;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class MyFSDataInputStream extends FSDataInputStream {
        public MyFSDataInputStream(InputStream in) {
            super(in);
        }

        // Open the file and return its first line, or null if the file is empty
        public static String readline(Configuration conf, String remoteFilePath) {
            Path remotePath = new Path(remoteFilePath);
            try (FileSystem fs = FileSystem.get(conf);
                 FSDataInputStream in = fs.open(remotePath);
                 BufferedReader d = new BufferedReader(new InputStreamReader(in))) {
                return d.readLine();
            } catch (IOException e) {
                e.printStackTrace();
                return null;
            }
        }

        public static void main(String[] args) {
            Configuration conf = new Configuration();
            conf.set("fs.defaultFS", "hdfs://localhost:9000");
            String remoteFilePath = "/user/hadoop/file1.txt";
            System.out.println("read file:" + remoteFilePath);
            System.out.println(MyFSDataInputStream.readline(conf, remoteFilePath));
            System.out.println(" read finish!");
        }
    }

(III) Consult the Java documentation or other references, and use "java.net.URL" and "org.apache.hadoop.fs.FsUrlStreamHandlerFactory" to print the text of a specified HDFS file to the terminal.

Source code:

    package org.apache.hadoop.examples;

    import java.io.IOException;
    import java.io.InputStream;
    import java.net.URL;

    import org.apache.hadoop.fs.FsUrlStreamHandlerFactory;
    import org.apache.hadoop.io.IOUtils;

    public class fileUrl {
        static {
            // Teach java.net.URL to understand hdfs:// URLs (can be set only once per JVM)
            URL.setURLStreamHandlerFactory(new FsUrlStreamHandlerFactory());
        }

        public static void cat(String remoteFilePath) {
            try (InputStream in = new URL("hdfs", "localhost", 9000, remoteFilePath).openStream()) {
                IOUtils.copyBytes(in, System.out, 4096, false);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        public static void main(String[] args) {
            String remoteFilePath = "/user/hadoop/file1.txt"; // file path
            System.out.println("read file:" + remoteFilePath);
            fileUrl.cat(remoteFilePath);
            System.out.println(" read finish!");
        }
    }

2. Problems encountered during the experiment (description and screenshots):

Appending to the file

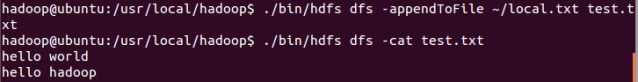

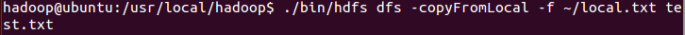

Overwriting the file

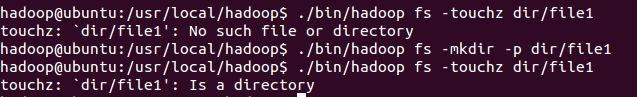

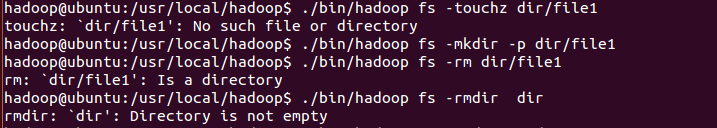

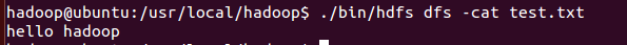

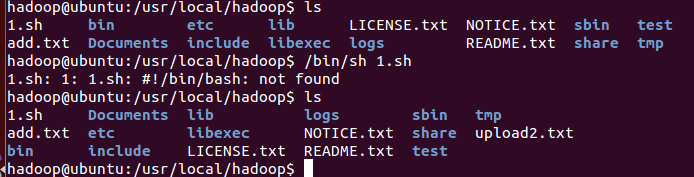

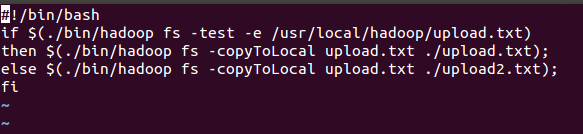

Running the shell file

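3. Solutions (list the problems encountered and how they were resolved, and list any unresolved problems): Rebuilt the virtual machine, reconfigured Hadoop, and re-ran the commands; the problem was resolved.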