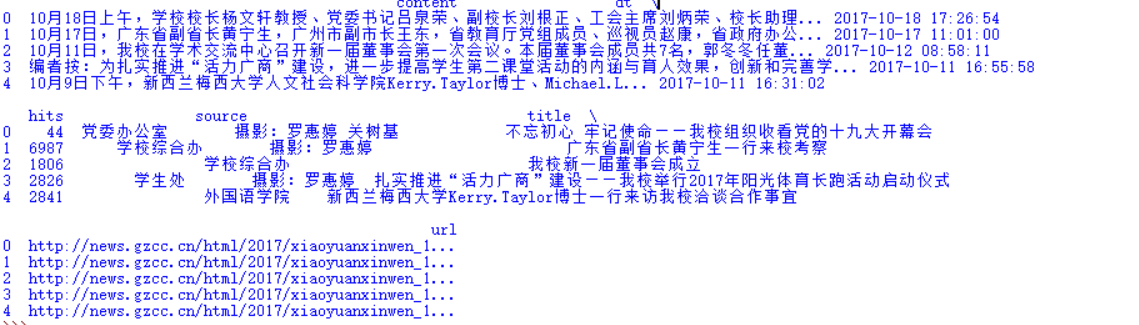

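1. Structuring the data:

- Detail dictionary for a single news item: `news`

- List of all news items on one list page: `newsls.append(news)`

- List of all news items across all list pages: `newstotal.extend(newsls)`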
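The choice between `append` and `extend` is what keeps the final list flat. Here is a minimal sketch with made-up records; the `fake_page` helper and its field values are hypothetical and only stand in for crawling a real list page.

```python
def fake_page(page_no):
    """Hypothetical stand-in for crawling one list page; returns a list of news dicts."""
    newsls = []
    for i in range(3):
        news = {'title': 'page {} item {}'.format(page_no, i), 'hits': i}
        newsls.append(news)  # append adds ONE dict to the page-level list
    return newsls


newstotal = []
for page_no in range(1, 3):
    # extend adds EACH dict from the page, so newstotal stays a flat list of dicts
    newstotal.extend(fake_page(page_no))

print(len(newstotal))
print(newstotal[0])
```

Using `newstotal.append(newsls)` instead would produce a list of lists, which pandas would not turn into one row per news item.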

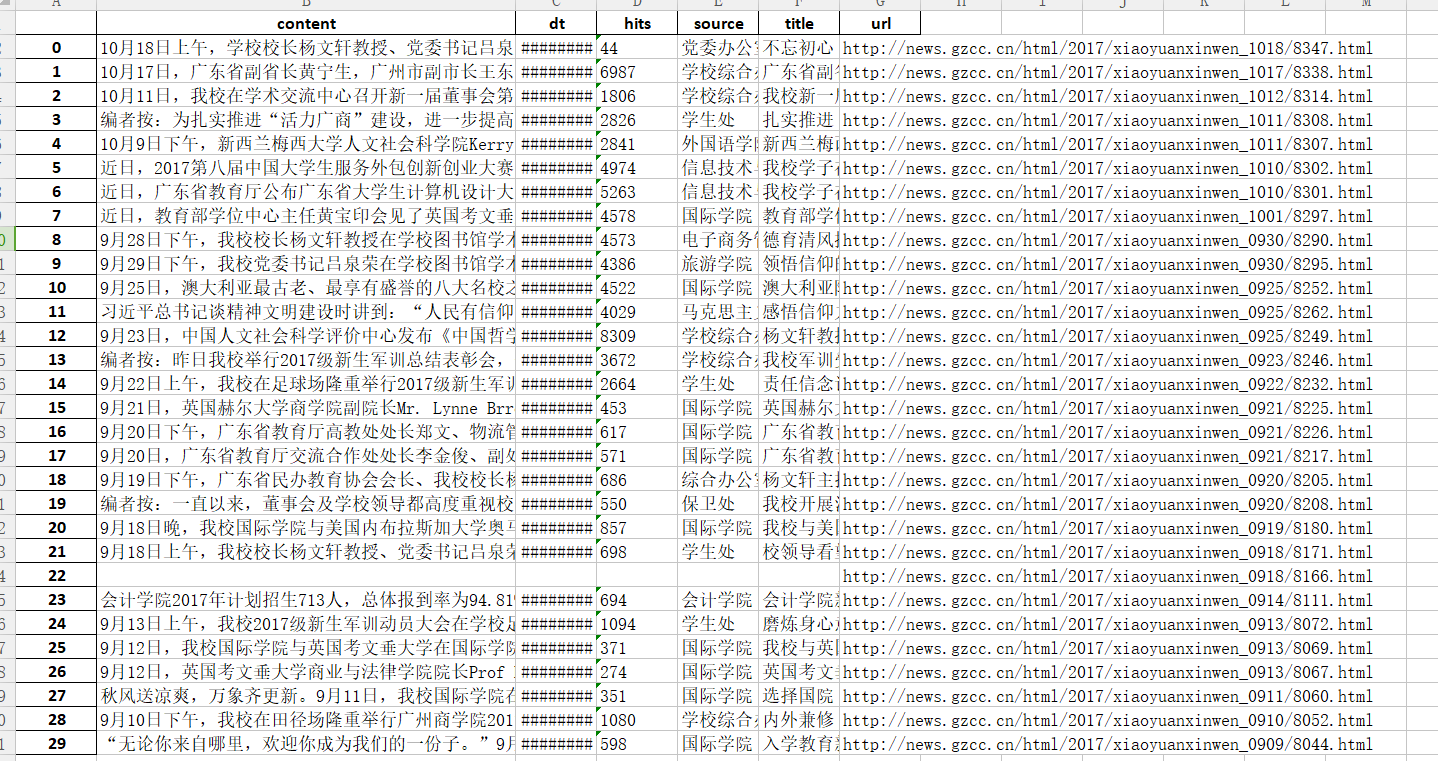

2. Convert to a pandas `DataFrame`

3. Save the `DataFrame` to Excel

4. Save the `DataFrame` to a sqlite3 database
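Steps 2 to 4 can be sketched on a couple of made-up records, so the flow can be tried without crawling anything. The sample data, the table name `news`, and the file names `news_demo.xlsx` and `news_demo.sqlite` are assumptions chosen for illustration.

```python
import os
import sqlite3
import tempfile
from datetime import datetime

import pandas

# Made-up records shaped like the dicts the crawler builds.
newstotal = [
    {'title': 'sample A', 'dt': datetime(2018, 4, 1, 9, 30, 0), 'source': 'demo', 'hits': 12},
    {'title': 'sample B', 'dt': datetime(2018, 4, 2, 14, 0, 0), 'source': 'demo', 'hits': 7},
]

# Step 2: each dict becomes a row, each key becomes a column.
df = pandas.DataFrame(newstotal)

outdir = tempfile.mkdtemp()

# Step 3: Excel export. pandas needs an Excel engine such as openpyxl for .xlsx files.
try:
    df.to_excel(os.path.join(outdir, 'news_demo.xlsx'), index=False)
except ImportError:
    print('No Excel engine installed; skipping the .xlsx export')

# Step 4: sqlite3 export. to_sql accepts a plain sqlite3 connection.
conn = sqlite3.connect(os.path.join(outdir, 'news_demo.sqlite'))
df.to_sql('news', conn, if_exists='replace', index=False)

# Read the table back to check what was stored.
df_back = pandas.read_sql_query('SELECT title, hits FROM news ORDER BY hits DESC', conn)
conn.close()
print(df_back)
```

`if_exists='replace'` overwrites the table on every run; `if_exists='append'` would be the choice for accumulating rows across crawls.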

```python
import re
from datetime import datetime

import pandas
import requests
from bs4 import BeautifulSoup


def gethits(url_1):
    """Return the click count of a news page (as a string) from the counter API."""
    # The news id is the file name of the detail page, without the .html suffix.
    li_id = re.search(r'_.*/(.*)\.html', url_1).group(1)
    count_url = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(li_id)
    # rstrip/lstrip remove sets of characters, leaving only the digits.
    hits = requests.get(count_url).text.split('.')[-1].rstrip("');").lstrip("'html(")
    return hits


def getdetail(url):
    """Fetch one news page and return its details as a dict."""
    res = requests.get(url)
    res.encoding = 'utf-8'
    soup = BeautifulSoup(res.text, 'html.parser')
    news = {'url': url}
    # Pages without a .show-title element keep only their URL.
    if soup.select('.show-title'):
        news['title'] = soup.select('.show-title')[0].text
        info = soup.select('.show-info')[0].text
        news['dt'] = datetime.strptime(info.lstrip('发布时间:')[0:19], '%Y-%m-%d %H:%M:%S')
        news['source'] = re.search('来源:(.*)点击', info).group(1).strip()
        news['content'] = soup.select('.show-content')[0].text.strip()
        news['hits'] = gethits(url)
    return news


def onepage(url_page):
    """Return the detail dicts of all news items on one list page."""
    res = requests.get(url_page)
    res.encoding = 'utf-8'
    soup = BeautifulSoup(res.text, 'html.parser')
    newsls = []
    for news in soup.select('li'):
        # Only <li> elements that contain a news title are news entries.
        if len(news.select('.news-list-title')) > 0:
            newsls.append(getdetail(news.select('a')[0]['href']))
    return newsls


url_main = "http://news.gzcc.cn/html/xiaoyuanxinwen/"
res = requests.get(url_main)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html.parser')

newstotal = []
newstotal.extend(onepage(url_main))  # the first list page is the section index

# Total number of news items, 10 per list page, rounded up.
total = int(soup.select('.a1')[0].text.rstrip('条'))
pages = (total + 9) // 10
for i in range(2, pages + 1):
    url_page = "http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html".format(i)
    newstotal.extend(onepage(url_page))

df = pandas.DataFrame(newstotal)
print(df.head())
df.to_excel('news.xlsx')  # requires an Excel engine such as openpyxl
```
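The chain of `split`, `rstrip` and `lstrip` in `gethits` works because the strip methods remove sets of characters rather than literal prefixes or suffixes. As a possible alternative, a regular expression states the intent more directly. The sketch below is offline and uses a hypothetical response body whose shape is only inferred from the strip calls; the real API output may differ, and `parse_hits` is a name introduced here for illustration.

```python
import re


def parse_hits(body):
    """Return the last .html('<digits>') value in body as an int, or None if absent."""
    matches = re.findall(r"\.html\('(\d+)'\)", body)
    return int(matches[-1]) if matches else None


# Hypothetical response body, assumed for illustration only.
sample = "$('#todaydowns').html('3');$('#hits').html('128');"

# The strip-based approach from gethits, applied to the same text.
stripped = sample.split('.')[-1].rstrip("');").lstrip("'html(")

print(parse_hits(sample))
print(stripped)
```

Unlike the strip chain, `parse_hits` returns `None` instead of an arbitrary leftover string when the response does not contain a count.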