import re
import traceback

import requests
from bs4 import BeautifulSoup

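def getHTMLText(url):
    """Fetch a page and return its decoded text, or "" if the request fails."""
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()
        # Use the encoding detected from the body rather than the HTTP header.
        r.encoding = r.apparent_encoding
        return r.text
    except requests.RequestException:
        return ""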

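def getStockList(lst, stockURL):
    """Append every stock code (sh/sz plus six digits) linked from stockURL to lst."""
    html = getHTMLText(stockURL)
    soup = BeautifulSoup(html, 'html.parser')
    for a in soup.find_all('a'):
        try:
            href = a.attrs['href']
            # \d{6} matches the six-digit code; the listing had lost the backslash.
            lst.append(re.findall(r"[s][hz]\d{6}", href)[0])
        except (KeyError, IndexError):
            # Skip links with no href or no stock code in the href.
            continue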

26 def getStockInfo(lst, stockURL, fpath):

27 for stock in lst:

28 url = stockURL + stock +".html"

29 html = getHTMLText(url)

30 try:

31 if html == "":

32 continue

33 infoDict = {}

34 soup = BeautifulSoup(html, 'html.parser')

35 stockInfo = soup.find('div',attrs={'class':'stock-bets'})

36

37 name = stockInfo.find_all(attrs={'class':'bets-name'})[0]

38 infoDict.update({'股票名称':name.text.split()[0]})

39

40 keyList = stockInfo.find_all('dt')

41 valueList = stockInfo.find_all('dd')

42 for i in range(len(keyList)):

43 key = keyList[i].text

44 val = valueList[i].text

45 infoDict[key] = val

46

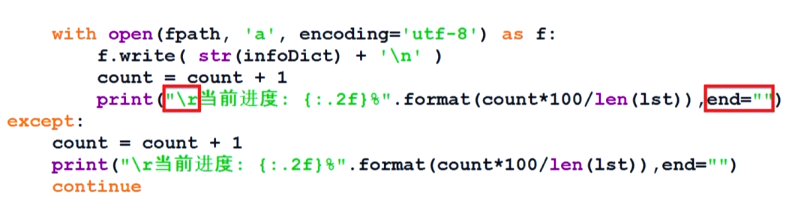

47 with open(fpath,'a',encoding='utf-8') as f:

48 f.write(str(infoDict) + '

')

49 except:

50 traceback.print_exc()

51 continue

52

def main():
    stock_list_url = 'http://quote.eastmoney.com/stocklist.html'
    stock_info_url = 'https://gupiao.baidu.com/stock/'
    output_file = 'G://Learning materials//Python//python网页爬虫与信息提取//BaiduStockInfo.txt'
    slist = []
    getStockList(slist, stock_list_url)
    getStockInfo(slist, stock_info_url, output_file)


if __name__ == '__main__':
    main()