【Application】Forecasting the sales time series of a new product after its launch

【Field】Neural networks; RNNs; Encoder-Decoder

【Key points】

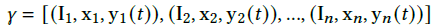

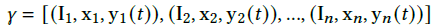

1. Train on historical data to forecast how a new product will sell after launch.

2. Data: product image data I + product attribute data x (e.g. design attributes such as color, pattern, sleeve style etc. or merchandising attributes such as list price, promotion etc.)

3. Problem definition. Sales data:

Input data:

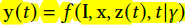

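The quantity to be computed is:

In addition, external factors such as weekends, holidays, major holidays, and promotions also need to be taken into account. These external factors are denoted as:

In summary, the quantity to be computed is: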
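
As a rough illustration of how such external factors could be turned into per-time-step inputs, here is a minimal sketch. The function name `exogenous_features`, the three flags, the daily granularity, and the calendar dates are all hypothetical choices for illustration; the notes do not specify how the paper encodes these factors.

```python
from datetime import date, timedelta


def exogenous_features(day, holidays, promo_days):
    """Return [is_weekend, is_holiday, is_promo] as 0/1 flags for one day."""
    return [
        int(day.weekday() >= 5),  # Monday=0 ... Saturday=5, Sunday=6
        int(day in holidays),
        int(day in promo_days),
    ]


# Hypothetical calendar, for illustration only.
holidays = {date(2020, 1, 1)}
promo_days = {date(2020, 1, 3), date(2020, 1, 4)}

launch = date(2020, 1, 1)
features = [
    exogenous_features(launch + timedelta(days=t), holidays, promo_days)
    for t in range(5)
]
```

Each row of `features` is the external-factor vector for one day after launch and could be fed to a forecasting model alongside the product inputs.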

4. System overview:

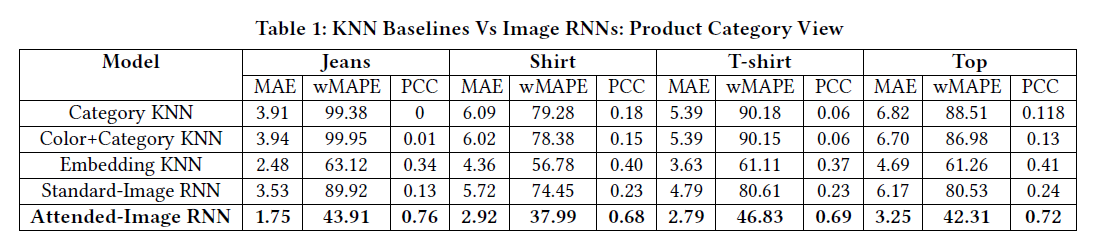

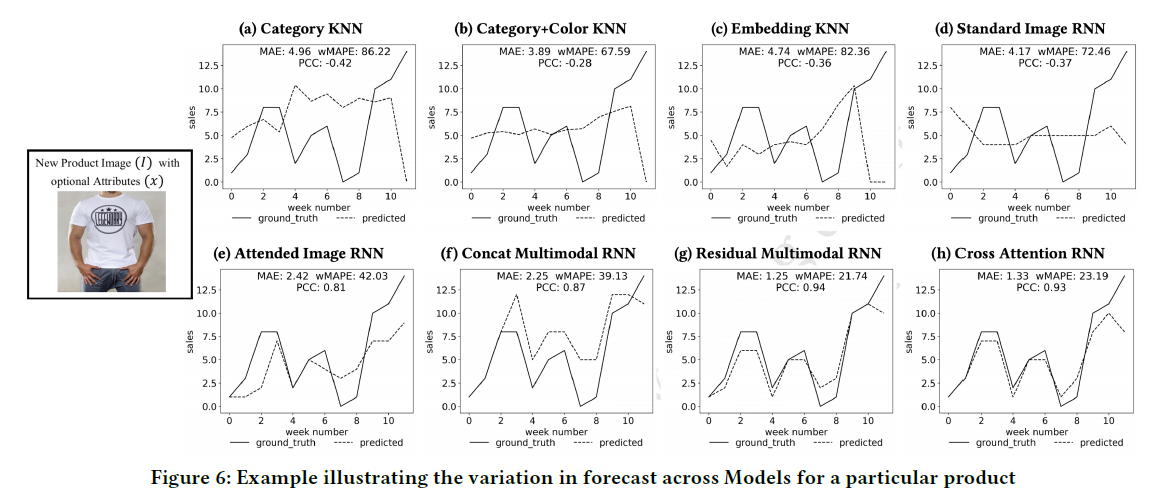

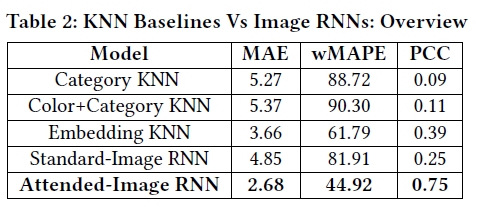

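5. Traditional approach: KNN. The new product is compared against historical products to find the K most similar ones, and the historical sales of those products are aggregated to produce the forecast.

1) Attribute KNN. Compute distances over product attributes, find the nearest neighbors, and aggregate the historical sales of the k neighbors, using the distances as the basis for the weights. θ denotes the distance-related term.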

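2) Embedding KNN. Features that cannot be quantified directly (e.g. product images) are first embedded; the K nearest neighbors are then found in the embedding space, and the rest proceeds as above. Φ denotes the embedding function.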

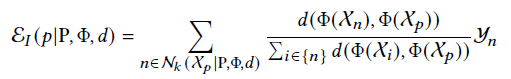
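
The KNN baseline described above can be sketched as follows. This is a minimal illustration, not the paper's exact formulation: Euclidean distance and inverse-distance weights are assumed choices, and `knn_forecast` and the toy data are hypothetical. The same function applies to Embedding KNN if the attribute vectors are replaced by embedding vectors.

```python
import math


def knn_forecast(new_vec, history, k=2, eps=1e-8):
    """Forecast sales for a new product from its k nearest past products.

    history: list of (feature_vector, sales_series) pairs for past products.
    """
    # Distance between the new product and every past product, nearest first.
    scored = sorted(
        ((math.dist(new_vec, vec), sales) for vec, sales in history),
        key=lambda pair: pair[0],
    )[:k]
    # Inverse-distance weights (an assumed weighting scheme).
    weights = [1.0 / (d + eps) for d, _ in scored]
    total = sum(weights)
    horizon = len(scored[0][1])
    # Weighted average of the neighbors' sales at each time step.
    return [
        sum(w * sales[t] for w, (_, sales) in zip(weights, scored)) / total
        for t in range(horizon)
    ]


# Toy data: (attribute vector, sales per period).
history = [
    ([0.0, 0.0], [10.0, 20.0]),
    ([2.0, 0.0], [30.0, 40.0]),
    ([10.0, 10.0], [100.0, 100.0]),
]
forecast = knn_forecast([1.0, 0.0], history, k=2)
```

In this toy case the two nearest products are equally distant, so the forecast is the plain average of their sales series.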

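6. Encoder-Decoder based time-series models. Several models were explored.

1) Sequence learning with encoded image input (Image RNN)

The encoder module computes a compact embedding of the given input image and merges it with the temporal features; the encoder output is then fed into the RNN decoder.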

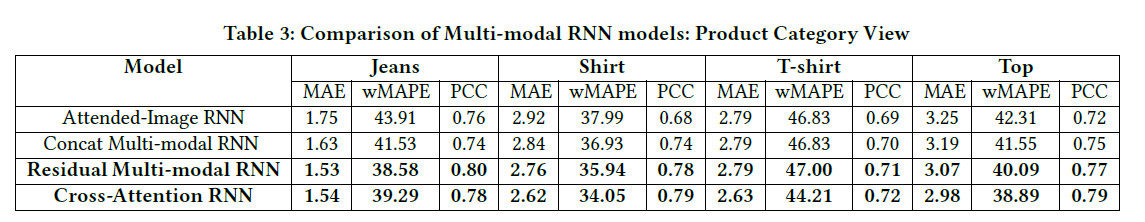

2) Sequence learning with encoded multi-modal inputs (Multi-modal RNN)

Unlike 1), the attribute labels are also embedded and fed into the network together with the image embedding.

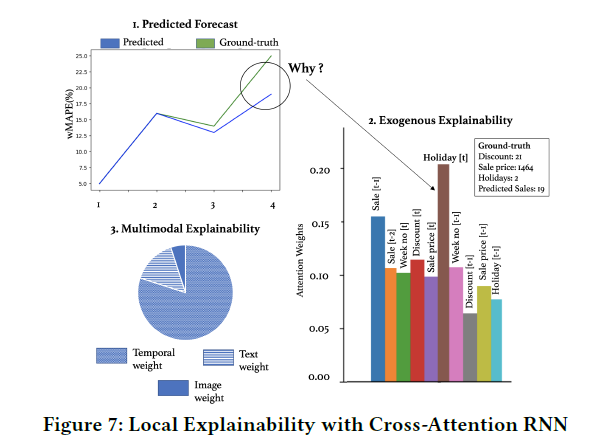

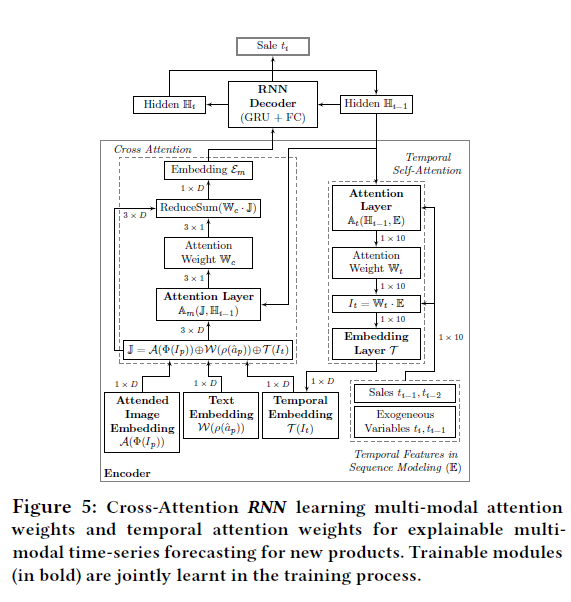
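
The data flow of models 1) and 2) can be sketched roughly as below. This is an untrained toy with random weights, written only to show how the product embeddings are merged with per-step temporal features and passed through a recurrent decoder. The plain tanh (Elman-style) cell, the layer sizes, and all names (`decode`, `matvec`, `rand_matrix`) are assumptions, not the paper's architecture.

```python
import math
import random


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def decode(image_emb, attr_emb, temporal_feats, hidden=4, seed=0):
    """Run a toy RNN decoder and return one prediction per time step."""
    rng = random.Random(seed)
    # Encoder output: concatenated modality embeddings.
    # Pass attr_emb=[] to mimic the image-only variant.
    context = image_emb + attr_emb
    W_in = rand_matrix(hidden, len(context) + len(temporal_feats[0]), rng)
    W_h = rand_matrix(hidden, hidden, rng)
    w_out = [rng.uniform(-0.1, 0.1) for _ in range(hidden)]

    h = [0.0] * hidden
    preds = []
    for feats in temporal_feats:
        x = context + feats  # merge product embedding with this step's features
        pre = [a + b for a, b in zip(matvec(W_in, x), matvec(W_h, h))]
        h = [math.tanh(p) for p in pre]  # recurrent state update
        preds.append(sum(w * hi for w, hi in zip(w_out, h)))
    return preds


# Hypothetical embeddings and 3 time steps of [is_weekend, is_promo] features.
image_emb = [0.2, -0.1, 0.5]
attr_emb = [1.0, 0.0]
temporal = [[0.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
preds = decode(image_emb, attr_emb, temporal)
```

With trained weights, `preds` would be the forecast sales at each step; here the values are meaningless and only the shapes and wiring matter.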

3) Explainable sequence learning with attended multi-modal inputs (Cross-Attention RNN)

Adds an explainability component (?) by using Cross-Attention.
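
To make concrete why attention lends itself to explanation, here is a minimal sketch of one decoder state attending over several input embeddings. Scaled dot-product scoring is an assumed stand-in, since the notes do not record the paper's attention formulation; `attend`, `softmax`, and the toy vectors are hypothetical.

```python
import math


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys):
    """Scaled dot-product attention of one query over a list of embeddings."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Context vector: attention-weighted sum of the embeddings.
    context = [
        sum(w * key[i] for w, key in zip(weights, keys))
        for i in range(len(query))
    ]
    return context, weights


# Toy decoder state and one embedding per input modality.
query = [1.0, 0.0]
modalities = {
    "image": [2.0, 0.0],
    "attributes": [0.0, 2.0],
    "temporal": [0.0, 0.0],
}
context, weights = attend(query, list(modalities.values()))
importance = dict(zip(modalities, weights))
```

The weights sum to 1, so `importance` can be read as how much each input contributed to the prediction at that step, which is the kind of signal an explainability analysis would inspect.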

6. 实验结果

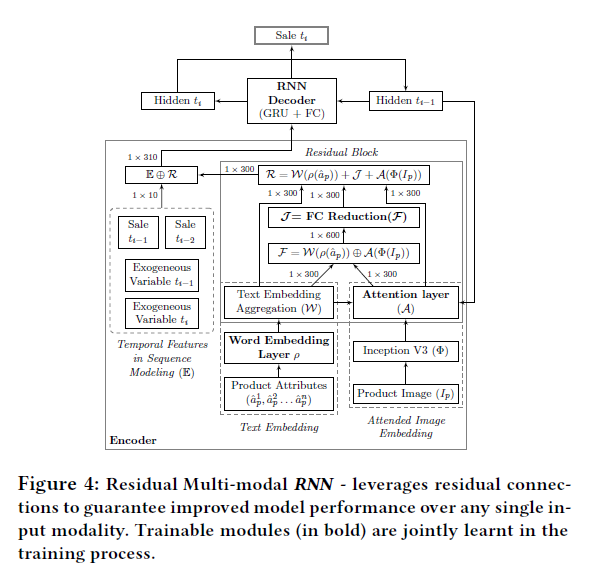

7. 可解释性的结果