Pytorch多GPU训练

临近放假, 服务器上的GPU好多空闲, 博主顺便研究了一下如何用多卡同时训练

原理

多卡训练的基本过程

- 首先把模型加载到一个主设备

- 把模型只读复制到多个设备

- 把大的batch数据也等分到不同的设备

- 最后将所有设备计算得到的梯度合并更新主设备上的模型参数

代码实现(以Minist为例)

#!/usr/bin/python3

# coding: utf-8

import torch

from torchvision import datasets, transforms

import torchvision

from tqdm import tqdm

device_ids = [3, 4, 6, 7]

BATCH_SIZE = 64

transform = transforms.Compose([transforms.ToTensor(),

transforms.Normalize(mean=[0.5,0.5,0.5],std=[0.5,0.5,0.5])])

data_train = datasets.MNIST(root = "./data/",

transform=transform,

train = True,

download = True)

data_test = datasets.MNIST(root="./data/",

transform = transform,

train = False)

data_loader_train = torch.utils.data.DataLoader(dataset=data_train,

# 这里注意batch size要对应放大倍数

batch_size = BATCH_SIZE * len(device_ids),

shuffle = True,

num_workers=2)

data_loader_test = torch.utils.data.DataLoader(dataset=data_test,

batch_size = BATCH_SIZE * len(device_ids),

shuffle = True,

num_workers=2)

class Model(torch.nn.Module):

def __init__(self):

super(Model, self).__init__()

self.conv1 = torch.nn.Sequential(

torch.nn.Conv2d(1, 64, kernel_size=3, stride=1, padding=1),

torch.nn.ReLU(),

torch.nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1),

torch.nn.ReLU(),

torch.nn.MaxPool2d(stride=2, kernel_size=2),

)

self.dense = torch.nn.Sequential(

torch.nn.Linear(14 * 14 * 128, 1024),

torch.nn.ReLU(),

torch.nn.Dropout(p=0.5),

torch.nn.Linear(1024, 10)

)

def forward(self, x):

x = self.conv1(x)

x = x.view(-1, 14 * 14 * 128)

x = self.dense(x)

return x

model = Model()

model = torch.nn.DataParallel(model, device_ids=device_ids) # 声明所有可用设备

model = model.cuda(device=device_ids[0]) # 模型放在主设备

cost = torch.nn.CrossEntropyLoss()

optimizer = torch.optim.Adam(model.parameters())

n_epochs = 50

for epoch in range(n_epochs):

running_loss = 0.0

running_correct = 0

print("Epoch {}/{}".format(epoch, n_epochs))

print("-"*10)

for data in tqdm(data_loader_train):

X_train, y_train = data

# 注意数据也是放在主设备

X_train, y_train = X_train.cuda(device=device_ids[0]), y_train.cuda(device=device_ids[0])

outputs = model(X_train)

_,pred = torch.max(outputs.data, 1)

optimizer.zero_grad()

loss = cost(outputs, y_train)

loss.backward()

optimizer.step()

running_loss += loss.data.item()

running_correct += torch.sum(pred == y_train.data)

testing_correct = 0

for data in data_loader_test:

X_test, y_test = data

X_test, y_test = X_test.cuda(device=device_ids[0]), y_test.cuda(device=device_ids[0])

outputs = model(X_test)

_, pred = torch.max(outputs.data, 1)

testing_correct += torch.sum(pred == y_test.data)

print("Loss is:{:.4f}, Train Accuracy is:{:.4f}%, Test Accuracy is:{:.4f}".format(running_loss/len(data_train),

100*running_correct/len(data_train),

100*testing_correct/len(data_test)))

torch.save(model.state_dict(), "model_parameter.pkl")

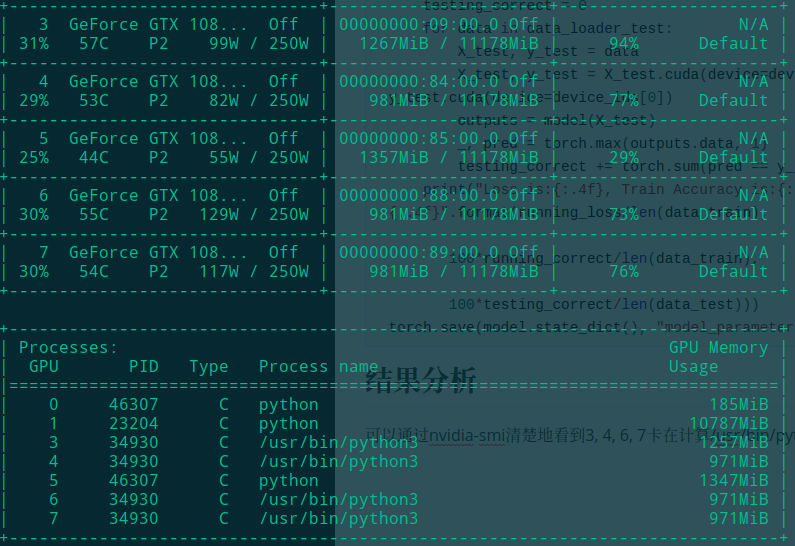

结果分析

可以通过nvidia-smi清楚地看到3, 4, 6, 7卡在计算/usr/bin/python3进程(进程号都为34930)

从实际加速效果来看, 由于minist是小数据集, 可能调度带来的overhead反而比计算的开销大, 因此加速不明显. 但是到大数据集上训练时, 多卡的优势就会体现出来了