引入

在学习爬虫之前可以先大致的了解一下HTTP协议~

HTTP协议:https://www.cnblogs.com/peng104/p/9846613.html

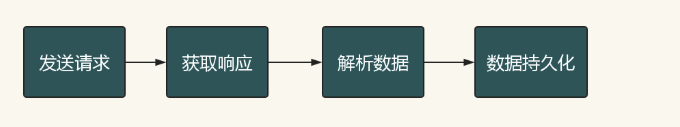

爬虫的基本流程

简介

简介:Requests是用python语言基于urllib编写的,采用的是Apache2 Licensed开源协议的HTTP库,Requests它会比urllib更加方便,可以节约我们大量的工作。一句话,requests是python实现的最简单易用的HTTP库,建议爬虫使用requests库。默认安装好python之后,是没有安装requests模块的,需要单独通过pip安装

安装方法:pip install requests

开源地址:https://github.com/kennethreitz/requests

中文文档 API: http://docs.python-requests.org/zh_CN/latest/index.html

基本语法

requests模块支持的请求:

import requests requests.get("http://httpbin.org/get") requests.post("http://httpbin.org/post") requests.put("http://httpbin.org/put") requests.delete("http://httpbin.org/delete") requests.head("http://httpbin.org/get") requests.options("http://httpbin.org/get")

get请求

1. 基本请求

import requests response=requests.get('https://www.jd.com/',) with open("jd.html","wb") as f: f.write(response.content)

2. 含参数请求

import requests response=requests.get('https://s.taobao.com/search?q=手机') response=requests.get('https://s.taobao.com/search',params={"q":"三只松鼠"})

3. 含请求头

import requests response=requests.get('https://dig.chouti.com/', headers={ 'User-Agent':'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/62.0.3202.75 Safari/537.36', } )

4. 含cookies请求

import uuid import requests url = 'http://httpbin.org/cookies' cookies = dict(sbid=str(uuid.uuid4())) res = requests.get(url, cookies=cookies) print(res.text)

5. request.session()

import requests session=requests.session() res1=session.get("https://www.zhihu.com/explore") print(session.cookies.get_dict()) res2=session.get("https://www.zhihu.com/question/30565354/answer/463324517",cookies={"abs":"123"}

post请求

1. data参数

requests.post()用法与requests.get()完全一致,特殊的是requests.post()多了一个data参数,用来存放请求体数据

response=requests.post("http://httpbin.org/post",params={"a":"10"}, data={"name":"peng"})

2. 发送json数据

import requests res1=requests.post(url='http://httpbin.org/post', data={'name':'yuan'}) #没有指定请求头,#默认的请求头:application/x-www-form-urlencoed print(res1.json()) res2=requests.post(url='http://httpbin.org/post',json={'age':"22",}) #默认的请求头:application/json print(res2.json())

response对象

1. 常见属性

import requests respone=requests.get('https://sh.lianjia.com/ershoufang/') # respone属性 print(respone.text) print(respone.content) print(respone.status_code) print(respone.headers) print(respone.cookies) print(respone.cookies.get_dict()) print(respone.cookies.items()) print(respone.url) print(respone.history) print(respone.encoding)

2. 编码问题

import requests response=requests.get('http://www.autohome.com/news') #response.encoding='gbk' #汽车之家网站返回的页面内容为gb2312编码的,而requests的默认编码为ISO-8859-1,如果不设置成gbk则中文乱码 with open("res.html","w") as f: f.write(response.text)

3. 下载二进制文件(图片,视频,音频)

import requests response=requests.get('http://bangimg1.dahe.cn/forum/201612/10/200447p36yk96im76vatyk.jpg') with open("res.png","wb") as f: # f.write(response.content) # 比如下载视频时,如果视频100G,用response.content然后一下子写到文件中是不合理的 for line in response.iter_content(): f.write(line)

4. 解析json数据

import requests import json response=requests.get('http://httpbin.org/get') res1=json.loads(response.text) #太麻烦 res2=response.json() #直接获取json数据 print(res1==res2)

5. Redirection and History

默认情况下,除了 HEAD, Requests 会自动处理所有重定向。可以使用响应对象的 history 方法来追踪重定向。Response.history 是一个 Response 对象的列表,为了完成请求而创建了这些对象。这个对象列表按照从最老到最近的请求进行排序。

>>> r = requests.get('http://github.com') >>> r.url 'https://github.com/' >>> r.status_code 200 >>> r.history [<Response [301]>]

另外,还可以通过 allow_redirects 参数禁用重定向处理:

>>> r = requests.get('http://github.com', allow_redirects=False) >>> r.status_code 301 >>> r.history []

进阶用法

proxies代理

如果需要使用代理,你可以通过为任意请求方法提供 proxies 参数来配置单个请求:

import requests # 根据协议类型,选择不同的代理 proxies = { "http": "http://12.34.56.79:9527", "https": "http://12.34.56.79:9527", } response = requests.get("http://www.baidu.com", proxies = proxies) print(response.text)

也可以通过本地环境变量 HTTP_PROXY 和 HTTPS_PROXY 来配置代理:

export HTTP_PROXY="http://12.34.56.79:9527" export HTTPS_PROXY="https://12.34.56.79:9527"

私密代理

import requests # 如果代理需要使用HTTP Basic Auth,可以使用下面这种格式: proxy = { "http": "mr_mao_hacker:sffqry9r@61.158.163.130:16816" } response = requests.get("http://www.baidu.com", proxies = proxy) print(response.text)

web客户端验证

如果是Web客户端验证,需要添加 auth = (账户名, 密码)

import requests auth=('test', '123456') response = requests.get('http://192.168.199.107', auth = auth) print(response.text)

两个栗子

1、模拟GitHub登录,获取登录信息

import requests import re #请求1: r1=requests.get('https://github.com/login') r1_cookie=r1.cookies.get_dict() #拿到初始cookie(未被授权) authenticity_token=re.findall(r'name="authenticity_token".*?value="(.*?)"',r1.text)[0] #从页面中拿到CSRF TOKEN print("authenticity_token",authenticity_token) #第二次请求:带着初始cookie和TOKEN发送POST请求给登录页面,带上账号密码 data={ 'commit':'Sign in', 'utf8':'✓', 'authenticity_token':authenticity_token, 'login':'你的github账号?', 'password':'你的密码' } #请求2: r2=requests.post('https://github.com/session', data=data, cookies=r1_cookie, # allow_redirects=False ) print(r2.status_code) #200 print(r2.url) #看到的是跳转后的页面:https://github.com/ print(r2.history) #看到的是跳转前的response:[<Response [302]>] print(r2.history[0].text) #看到的是跳转前的response.text with open("result.html","wb") as f: f.write(r2.content)

2、爬取豆瓣电影信息

import requests import re import json import time from concurrent.futures import ThreadPoolExecutor pool=ThreadPoolExecutor(50) def getPage(url): response=requests.get(url) return response.text def parsePage(res): com=re.compile('<div class="item">.*?<div class="pic">.*?<em .*?>(?P<id>d+).*?<span class="title">(?P<title>.*?)</span>' '.*?<span class="rating_num" .*?>(?P<rating_num>.*?)</span>.*?<span>(?P<comment_num>.*?)评价</span>',re.S) iter_result=com.finditer(res) return iter_result def gen_movie_info(iter_result): for i in iter_result: yield { "id":i.group("id"), "title":i.group("title"), "rating_num":i.group("rating_num"), "comment_num":i.group("comment_num"), } def stored(gen): with open("move_info.txt","a",encoding="utf8") as f: for line in gen: data=json.dumps(line,ensure_ascii=False) f.write(data+" ") def spider_movie_info(url): res=getPage(url) iter_result=parsePage(res) gen=gen_movie_info(iter_result) stored(gen) def main(num): url='https://movie.douban.com/top250?start=%s&filter='%num pool.submit(spider_movie_info,url) #spider_movie_info(url) if __name__ == '__main__': before=time.time() count=0 for i in range(10): main(count) count+=25 after=time.time() print("总共耗费时间:",after-before)