This post uses pyquery, rather than XPath, to locate and extract data from the page. In the author's comparison, pyquery was faster than XPath.
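The speed comparison is worth repeating on your own pages. pyquery is itself built on lxml and translates CSS selectors into XPath, so any difference mostly reflects how each approach queries the page (for instance, one parse of the saved page source versus element lookups through the WebDriver). Below is a minimal, stdlib-only timing sketch. `compare_extractors` is a hypothetical helper, and it assumes each approach is wrapped in a zero-argument callable.

```python
import timeit


def compare_extractors(extractors, number=100, repeat=5):
    """Time each zero-argument callable in `extractors` (a name -> callable dict).

    Returns {name: best seconds per call}, using the fastest of `repeat` runs
    of `number` calls each to reduce noise from other processes.
    """
    results = {}
    for name, fn in extractors.items():
        # timeit.repeat accepts a callable as the statement to time
        best_total = min(timeit.repeat(fn, number=number, repeat=repeat))
        results[name] = best_total / number
    return results
```

For example, with the page HTML in a string `page`, you might pass `lambda: pq(page)('a[class^="item doc style-"]')` and `lambda: lxml.html.fromstring(page).xpath('//a[starts-with(@class, "item doc style-")]')` under two names and compare the per-call times.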

Main code:

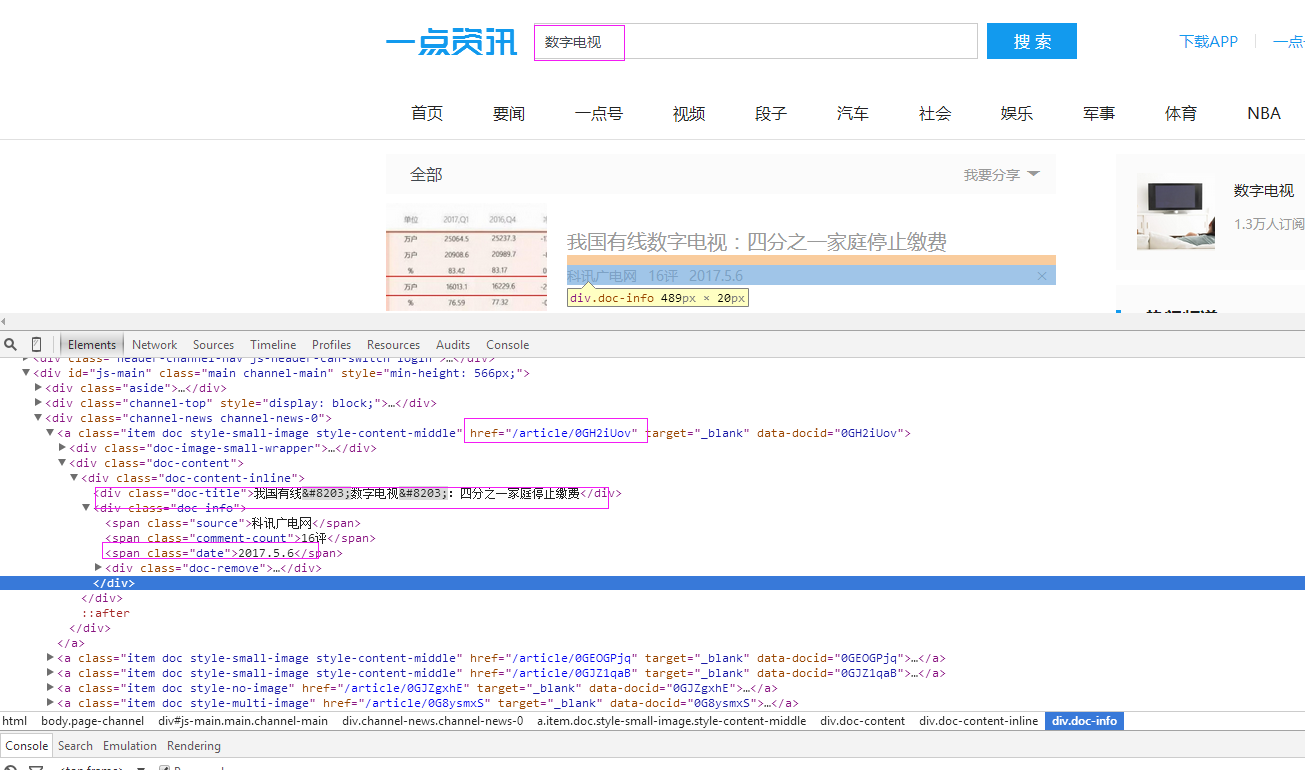

```python
# coding=utf-8
# Python 2 code (uses byte strings from .encode('utf8') throughout)
import os
import re
import time

from selenium import webdriver
import selenium.webdriver.support.ui as ui
from pyquery import PyQuery as pq

# The author's own helper modules (not shown in this post)
import IniFile
import LogFile
import mongoDB


class yidianzixunSpider(object):
    def __init__(self):
        # One log file per day, e.g. ../2017-04-15.txt
        logfile = os.path.join(os.path.dirname(os.getcwd()), time.strftime('%Y-%m-%d') + '.txt')
        self.log = LogFile.LogFile(logfile)
        configfile = os.path.join(os.path.dirname(os.getcwd()), 'setting.conf')
        cf = IniFile.ConfigFile(configfile)
        self.webSearchUrl_list = cf.GetValue("yidianzixun", "webSearchUrl").split(';')
        self.keyword_list = cf.GetValue("section", "information_keywords").split(';')
        self.db = mongoDB.mongoDbBase()
        self.start_urls = list(self.webSearchUrl_list)
        # webdriver.PhantomJS was removed in Selenium 4; use a headless Chrome/Firefox driver there
        self.driver = webdriver.PhantomJS()
        self.wait = ui.WebDriverWait(self.driver, 2)
        self.driver.maximize_window()

    def scroll_foot(self):
        '''
        Scroll to the bottom of the page.
        :return:
        '''
        js = ""
        # Chrome or PhantomJS driver
        if self.driver.name == "chrome" or self.driver.name == 'phantomjs':
            js = "var q=document.body.scrollTop=10000"
        # Internet Explorer driver
        elif self.driver.name == 'internet explorer':
            js = "var q=document.documentElement.scrollTop=10000"
        return self.driver.execute_script(js)

    def Comapre_to_days(self, leftdate, rightdate):
        '''
        Compare two date strings: how many days the left date is after the right date.
        :param leftdate: format 2017-04-15
        :param rightdate: format 2017-04-15
        :return: number of days
        '''
        l_time = time.mktime(time.strptime(leftdate, '%Y-%m-%d'))
        r_time = time.mktime(time.strptime(rightdate, '%Y-%m-%d'))
        result = int(l_time - r_time) / 86400
        return result

    def date_isValid(self, strDateText):
        '''
        Check whether a date string is valid, i.e. falls within the wanted range
        relative to the current time (in practice: earlier today).
        :param strDateText: one of four formats: '2小时前' (2 hours ago), '2天前' (2 days ago),
                            '昨天' (yesterday), '2017.2.12 '
        :return: (True, today's date) if valid; (False, '') otherwise
        '''
        currentDate = time.strftime('%Y-%m-%d')
        # '分钟前' = "minutes ago", '刚刚' = "just now"
        if strDateText.find('分钟前') > 0 or strDateText.find('刚刚') > -1:
            return True, currentDate
        # '小时前' = "hours ago"
        elif strDateText.find('小时前') > 0:
            datePattern = re.compile(r'\d{1,2}')
            ch = int(time.strftime('%H'))  # current hour
            strDate = re.findall(datePattern, strDateText)
            if len(strDate) == 1:
                # Only counts as today if N hours ago does not cross midnight
                if int(strDate[0]) <= ch:
                    return True, currentDate
        # Any other format ('昨天', '2天前', '2017.2.12', ...) is treated as invalid
        return False, ''

    def log_print(self, msg):
        '''
        Print a timestamped log message.
        :param msg: log message
        '''
        print('%s: %s' % (time.strftime('%Y-%m-%d %H-%M-%S'), msg))

    def scrapy_date(self):
        strsplit = '------------------------------------------------------------------------------------'
        for link in self.start_urls:
            # Load the page first, then scroll down several times so more items lazy-load
            self.driver.get(link)
            for i in range(5):
                self.scroll_foot()
                time.sleep(1)
            selenium_html = self.driver.execute_script("return document.documentElement.outerHTML")
            doc = pq(selenium_html)
            infoList = []
            self.log.WriteLog(strsplit)
            self.log_print(strsplit)
            # <a> elements whose class attribute starts with "item doc style-"
            Elements = doc('a[class^="item doc style-"]')
            for element in Elements.items():
                date = element('span[class="date"]').text().encode('utf8').strip()
                flag, strDate = self.date_isValid(date)
                if flag:
                    title = element('div[class="doc-title"]').text().encode('utf8').strip()
                    for keyword in self.keyword_list:
                        if title.find(keyword) > -1:
                            url = 'http://www.yidianzixun.com' + element.attr('href')
                            source = element('span[class="source"]').text().encode('utf8').strip()
                            dictM = {'title': title, 'date': strDate, 'url': url,
                                     'keyword': keyword, 'introduction': title, 'source': source}
                            infoList.append(dictM)
                            self.log.WriteLog('title:%s' % title)
                            self.log.WriteLog('url:%s' % url)
                            self.log.WriteLog('source:%s' % source)
                            self.log.WriteLog('kword:%s' % keyword)
                            self.log_print('title:%s' % title)
                            self.log_print('url:%s' % url)
                            self.log_print('source:%s' % source)
                            self.log_print('kword:%s' % keyword)
                            # Record each article once, under the first matching keyword
                            break
            if len(infoList) > 0:
                self.db.SaveInformations(infoList)
        self.driver.close()
        self.driver.quit()


# obj = yidianzixunSpider()
# obj.scrapy_date()
```
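The two date helpers, `date_isValid` and `Comapre_to_days`, can be sketched in Python 3 with `datetime`. This is a minimal sketch, not the author's code: the names `date_is_valid` and `compare_to_days` and the optional `now` parameter (added so the check can be tested at a fixed time) are hypothetical. Subtracting naive `datetime` values also sidesteps a subtle issue in the original: `time.mktime` works in local time, so across a daylight-saving change a one-day span is only 23 hours, which Python 2 integer division truncates to 0 days.

```python
import re
from datetime import datetime

# Matches "N小时前" ("N hours ago") and captures N (one or two digits)
HOURS_AGO = re.compile(r'(\d{1,2})小时前')
DATE_FMT = '%Y-%m-%d'


def date_is_valid(text, now=None):
    """Return (True, today's date) if `text` denotes a moment earlier today, else (False, '')."""
    if now is None:
        now = datetime.now()
    today = now.strftime(DATE_FMT)
    # "刚刚" = just now, "分钟前" = minutes ago
    if '刚刚' in text or '分钟前' in text:
        return True, today
    m = HOURS_AGO.search(text)
    # N hours ago is still today only if N does not exceed the current hour
    if m and int(m.group(1)) <= now.hour:
        return True, today
    # Everything else ("昨天", "2天前", "2017.2.12", ...) is rejected, as in the original
    return False, ''


def compare_to_days(leftdate, rightdate):
    """Days by which `leftdate` is after `rightdate` (both YYYY-MM-DD); negative if before."""
    delta = datetime.strptime(leftdate, DATE_FMT) - datetime.strptime(rightdate, DATE_FMT)
    return delta.days


now = datetime(2017, 4, 15, 10, 30)
print(date_is_valid('3小时前', now))                  # (True, '2017-04-15')
print(date_is_valid('11小时前', now))                 # (False, '')
print(compare_to_days('2017-04-15', '2017-04-12'))   # 3
```

Like the original, the hour check only accepts "N hours ago" when it stays on the current calendar day, so at 10:30 anything older than 10 hours is dropped.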
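To see exactly what the selector `a[class^="item doc style-"]` keeps, here is a minimal stdlib-only sketch of the same prefix test using `html.parser`. The class `PrefixLinkCollector` and the sample markup (including class names such as `style-small-image`) are hypothetical illustrations, not taken from yidianzixun's real pages.

```python
from html.parser import HTMLParser


class PrefixLinkCollector(HTMLParser):
    """Collect the href of every <a> whose class attribute starts with `prefix`,
    mimicking the CSS attribute selector a[class^="prefix"]."""

    def __init__(self, prefix):
        super().__init__()
        self.prefix = prefix
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != 'a':
            return
        attrs = dict(attrs)
        # Valueless attributes come through as None, so fall back to ''
        cls = attrs.get('class') or ''
        if cls.startswith(self.prefix) and attrs.get('href'):
            self.hrefs.append(attrs['href'])


SAMPLE_HTML = '''
<div>
  <a class="item doc style-small-image" href="/article/1">A</a>
  <a class="item doc style-multi-image" href="/article/2">B</a>
  <a class="item video" href="/video/3">C</a>
</div>
'''

collector = PrefixLinkCollector('item doc style-')
collector.feed(SAMPLE_HTML)
print(collector.hrefs)  # ['/article/1', '/article/2']
```

`^=` compares the raw `class` attribute string, so the match depends on the order in which the class names are written. The XPath 1.0 equivalent is `//a[starts-with(@class, "item doc style-")]`.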