在学习spark streaming时,建议先学习和掌握RDD。spark streaming无非是针对流式数据处理这个场景,在RDD基础上做了一层封装,简化流式数据处理过程。

spark streaming 引入一些新的概念和方法,本文将介绍这方面的知识。主要包括以下几点:

- 初始化流上下文

- Discretized Streams离散数据流

- Input DStreams and Receivers

- Transformations on DStreams

- Output Operations on DStreams

- DataFrame and SQL Operations

Basic Concepts

Initializing StreamingContext

SparkConf conf = new SparkConf().setAppName(appName).setMaster(master);

JavaStreamingContext ssc = new JavaStreamingContext(conf, new Duration(1000));

./bin/spark-submit

--class <main-class>

--master <master-url>

--deploy-mode <deploy-mode>

--conf <key>=<value>

... # other options

<application-jar>

[application-arguments]

- Run on a Spark standalone cluster in client deploy mode

./bin/spark-submit

--class org.apache.spark.examples.SparkPi

--master spark://207.184.161.138:7077

--executor-memory 20G

--total-executor-cores 100

/path/to/examples.jar

1000

Only one StreamingContext can be active in a JVM at the same time.**==在虚拟机中一次只能运行一个StreamingContext.

stop() on StreamingContext also stops the SparkContext. To stop only the StreamingContext, set the optional parameter of stop() called stopSparkContext to false.

stop()默认停止StreamingContext时同时停止SparkContext。通过参数可设置只停止StreamingContext。

A SparkContext can be re-used to create multiple StreamingContexts, as long as the previous StreamingContext is stopped (without stopping the SparkContext) before the next StreamingContext is created.

SparkContext 可以创建多个StreamingContexts,只要前一个StreamingContext已停止。

Discretized Streams (DStreams)

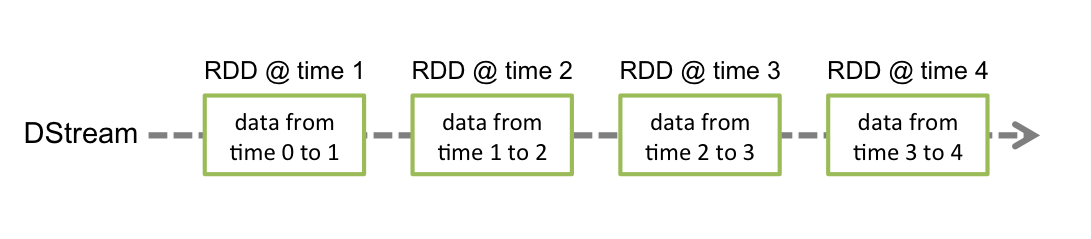

a DStream is represented by a continuous series of RDDs, which is Spark’s abstraction of an immutable, distributed dataset.

DStream离散数据流是不可变的、分布式数据集的抽象,它代表一系列连续的RDDs.

Input DStreams and Receivers

两种数据源

- 基本数据源:文件、socket

- 高级数据源: Kafka, Flume, Kinesis

But note that a Spark worker/executor is a long-running task, hence it occupies one of the cores allocated to the Spark Streaming application. Therefore, it is important to remember that a Spark Streaming application needs to be allocated enough cores (or threads, if running locally) to process the received data, as well as to run the receiver(s).

spark的worker是长期运行的任务,需要占据cpu的一个核。所以在集群上使用时,需要有足够的多cpu核,在本地运行时,需要足够多的线程。所以spark对CPU核数、内存有要求。

- 注意事项

- when running locally, always use “local[n]” as the master URL, where n > number of receivers to run (see Spark Properties for information on how to set the master). 运行本地模式时,n > 接收者数量。

- Extending the logic to running on a cluster, the number of cores allocated to the Spark Streaming application must be more than the number of receivers. Otherwise the system will receive data, but not be able to process it.在集群运行时,分配给spark的核数>接收者数量,否则系统只能接收数据,不能处理数据。

Receiver Reliability

两类接收者:

- 可靠接收者:数据源允许确认转换的数据。

- 不可靠接收者

Transformations on DStreams

A few of these transformations are worth discussing in more detail.

UpdateStateByKey Operation

在更新的时候可以保持任意的状态。

have to do two steps.

- Define the state - The state can be an arbitrary data type.

- Define the state update function - Specify with a function how to update the state using the previous state and the new values from an input stream.

Transform Operation

arbitrary RDD-to-RDD functions to be applied on a DStream.在DStream中可以使用任意的RDD-to-RDD函数。因为DStream本身是对RDD的封装,所以RDD的转换,DStream都支持。

This allows you to do time-varying RDD operations, that is, RDD operations, number of partitions, broadcast variables, etc. can be changed between batches. 允许执行基于时间变化的RDD操作,比如在批处理中 操作RDD,分组的数量、广播变量等。

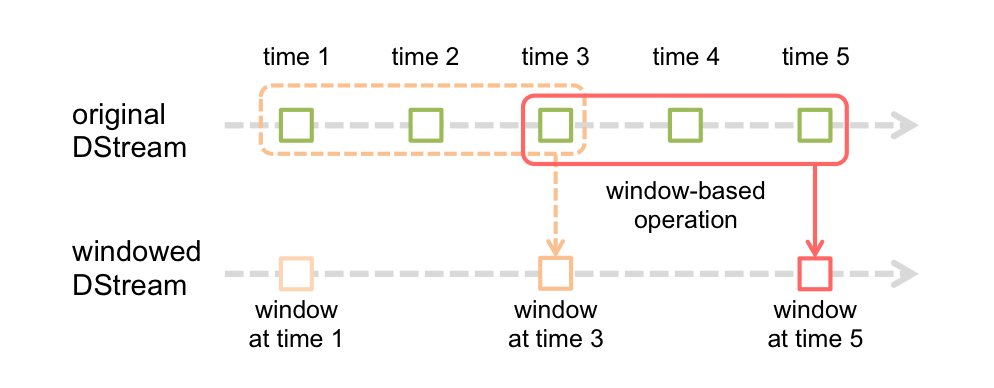

Window Operations

window operation needs to specify two parameters.

- window length - The duration of the window (3 in the figure).

- sliding interval - The interval at which the window operation is performed (2 in the figure).

Join Operations

- Stream-stream joins

- Stream-dataset joins

Output Operations on DStreams

Output operations allow DStream’s data to be pushed out to external systems like a database or a file systems.输出操作运行数据流推送到外部系统,比如数据库或者文件。

Design Patterns for using foreachRDD

dstream.foreachRDD(rdd -> {

rdd.foreachPartition(partitionOfRecords -> {

// ConnectionPool is a static, lazily initialized pool of connections

Connection connection = ConnectionPool.getConnection();

while (partitionOfRecords.hasNext()) {

connection.send(partitionOfRecords.next());

}

ConnectionPool.returnConnection(connection); // return to the pool for future reuse

});

});

Other points to remember:

-

DStreams are executed lazily by the output operations, just like RDDs are lazily executed by RDD actions. Specifically, RDD actions inside the DStream output operations force the processing of the received data. Hence, if your application does not have any output operation, or has output operations like dstream.foreachRDD() without any RDD action inside them, then nothing will get executed. The system will simply receive the data and discard it.数据流根据输出操作进行延迟处理。所以必须要有输出操作或者 在forechRDD()中有RDD的动作。

-

By default, output operations are executed one-at-a-time. And they are executed in the order they are defined in the application.输出操作一次一个。

DataFrame and SQL Operations

- Dataset is a new interface added in Spark 1.6 that provides the benefits of RDDs (strong typing, ability to use powerful lambda functions) with the benefits of Spark SQL’s optimized execution engine. A Dataset can be constructed from JVM objects and then manipulated using functional transformations (map, flatMap, filter, etc.).

DateSet吸收了RDD的优点,和sparksql优化执行引擎的好处。通过JVM Objects构造Dataset。

SparkSession

SparkSession spark = SparkSession

.builder()

.appName("Java Spark SQL basic example")

.config("spark.some.config.option", "some-value")

.getOrCreate();

- DataFrame: Untyped Dataset Operations (aka DataFrame Operations)

无类型的Dataset

Dataset<Row> sqlDF = spark.sql("SELECT * FROM people");

Each RDD is converted to a DataFrame, registered as a temporary table and then queried using SQL.将RDD转换成DataFrame,注册一个临时表,然后使用sql查询。