Homework 1

1) Task: Scrape the 7-day weather forecast for a given set of cities from the China Weather Network (http://www.weather.com.cn) and save it to a database.

This code comes from the textbook; we simply reproduced it.

The code is as follows:

```python
import sqlite3
import urllib.request

from bs4 import BeautifulSoup, UnicodeDammit


class WeatherDB:
    # Open the database: create the table on the first run, otherwise clear the old rows
    def openDB(self):
        self.con = sqlite3.connect("weathers.db")
        self.cursor = self.con.cursor()
        try:
            self.cursor.execute("create table weathers (wCity varchar(16),wDate varchar(16),wWeather varchar(64),wTemp varchar(32),constraint pk_weather primary key (wCity,wDate))")
        except sqlite3.OperationalError:
            # The table already exists: delete the rows from the previous run
            self.cursor.execute("delete from weathers")

    # Commit and close the database
    def closeDB(self):
        self.con.commit()
        self.con.close()

    # Insert one forecast row
    def insert(self, city, date, weather, temp):
        try:
            self.cursor.execute("insert into weathers (wCity,wDate,wWeather,wTemp) values (?,?,?,?)",
                                (city, date, weather, temp))
        except Exception as err:
            print(err)

    # Print the contents of the database
    def show(self):
        self.cursor.execute("select * from weathers")
        rows = self.cursor.fetchall()
        print("%-16s%-16s%-32s%-16s" % ("city", "date", "weather", "temp"))
        for row in rows:
            print("%-16s%-16s%-32s%-16s" % (row[0], row[1], row[2], row[3]))


class WeatherForecast:
    def __init__(self):
        self.headers = {
            "User-Agent": "Mozilla/5.0 (Windows; U; Windows NT 6.0 x64; en-US; rv:1.9pre) Gecko/2008072421 Minefield/3.0.2pre"}
        self.cityCode = {"北京": "101010100", "上海": "101020100", "广州": "101280101", "深圳": "101280601"}

    def forecastCity(self, city):
        if city not in self.cityCode:
            print(city + " code cannot be found")
            return
        url = "http://www.weather.com.cn/weather/" + self.cityCode[city] + ".shtml"
        # Start of the scraping template
        try:
            req = urllib.request.Request(url, headers=self.headers)
            data = urllib.request.urlopen(req)
            data = data.read()
            # Decode the page, trying UTF-8 first and then GBK
            dammit = UnicodeDammit(data, ["utf-8", "gbk"])
            data = dammit.unicode_markup
            soup = BeautifulSoup(data, "lxml")
            # Each <li> under <ul class="t clearfix"> is one day of the forecast
            lis = soup.select("ul[class='t clearfix'] li")
            for li in lis:
                try:
                    date = li.select('h1')[0].text
                    weather = li.select('p[class="wea"]')[0].text
                    # Join the temperature text in <span> and <i> with "/"
                    temp = li.select('p[class="tem"] span')[0].text + "/" + li.select('p[class="tem"] i')[0].text
                    print(city, date, weather, temp)
                    self.db.insert(city, date, weather, temp)
                except Exception as err:
                    print(err)
        except Exception as err:
            print(err)

    # Run the whole process: open the database, scrape every city, close the database
    def process(self, cities):
        self.db = WeatherDB()
        self.db.openDB()
        for city in cities:
            self.forecastCity(city)
        # self.db.show()
        self.db.closeDB()


ws = WeatherForecast()
ws.process(["北京", "上海", "广州", "深圳"])
print("Done")
```

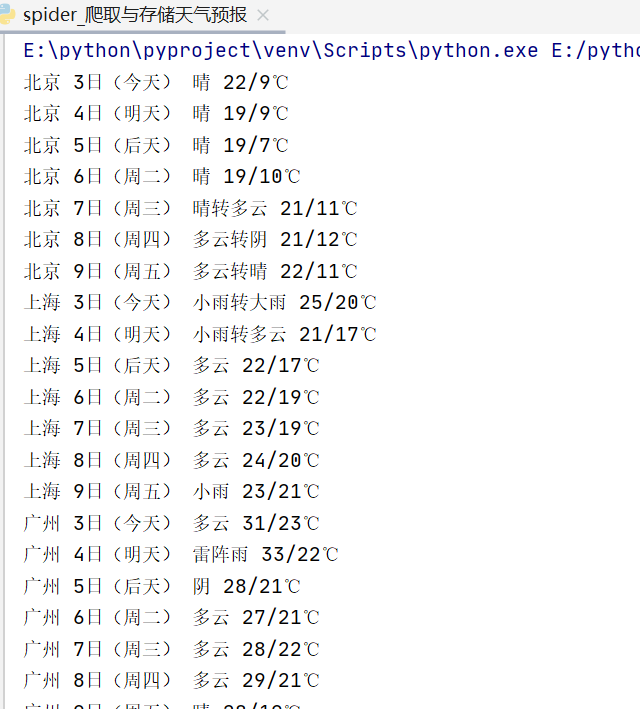

结果如下:

2) Reflections

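This experiment reproduced the textbook example exactly. It taught us a few practical tips on using databases. We hope to become proficient at web scraping and also to use a database for storing the data we scrape.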
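Below is a possible refinement of the database part, sketched under our own assumptions. The textbook's openDB deletes every row when the table already exists. This sketch instead creates the table with `CREATE TABLE IF NOT EXISTS` and writes rows with SQLite's `INSERT OR REPLACE`. A row that has the same (wCity, wDate) primary key is then overwritten instead of raising an error. The helpers `open_db` and `save` are hypothetical names. The sketch also uses an in-memory database rather than weathers.db, and its sample rows are made up.

```python
import sqlite3


def open_db(path=":memory:"):
    """Open the database and create the weathers table only if it does not exist yet."""
    con = sqlite3.connect(path)
    con.execute(
        "CREATE TABLE IF NOT EXISTS weathers ("
        "wCity VARCHAR(16), wDate VARCHAR(16), wWeather VARCHAR(64), wTemp VARCHAR(32), "
        "CONSTRAINT pk_weather PRIMARY KEY (wCity, wDate))"
    )
    return con


def save(con, city, date, weather, temp):
    """Insert a row, or overwrite the row that has the same (wCity, wDate) key."""
    with con:  # commits on success, rolls back if an exception is raised
        con.execute(
            "INSERT OR REPLACE INTO weathers (wCity, wDate, wWeather, wTemp) VALUES (?, ?, ?, ?)",
            (city, date, weather, temp),
        )


# Hypothetical sample rows, for illustration only
con = open_db()
save(con, "北京", "2020-10-08", "晴", "25℃/15℃")
save(con, "北京", "2020-10-08", "多云", "24℃/14℃")  # same key: replaces the first row
rows = con.execute("SELECT * FROM weathers").fetchall()
print(rows)  # [('北京', '2020-10-08', '多云', '24℃/14℃')]
con.close()
```

Unlike the textbook version, this keeps forecasts from earlier runs. Whether that is the right choice depends on whether old forecasts should be kept.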

Homework 2

1) Task: Use the requests and BeautifulSoup libraries to do targeted scraping of stock information.

The code is as follows:

```python
import json

import requests


# Fetch the data and parse it as JSON
def getHtml_data_031804139(url):
    head = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0',
            'Cookie': 'qgqp_b_id=54fe349b4e3056799d45a271cb903df3; st_si=24637404931419; st_pvi=32580036674154; st_sp=2019-11-12%2016%3A29%3A38; st_inirUrl=; st_sn=1; st_psi=2019111216485270-113200301321-3411409195; st_asi=delete'
            }
    try:
        r = requests.get(url, timeout=30, headers=head)
        # Strip the unneeded characters: the 41-character "jQuery..._1602070531441(" prefix and the trailing ");"
        jsons = r.text[41:][:-2]
        text_json = json.loads(jsons)
        return text_json  # return the parsed JSON
    except (requests.RequestException, ValueError) as err:
        print(err)
        return None


def main():
    url = 'http://49.push2.eastmoney.com/api/qt/clist/get?cb=jQuery11240918880626239239_1602070531441&pn=1&pz=20&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&fid=f3&fs=m:1+t:2,m:1+t:23&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1602070531442'
    result = getHtml_data_031804139(url)
    if not result or not result.get('data'):
        print("No data returned")
        return
    # Print the results
    print("No. ", "Code ", "Name ", "Price (CNY) ", "Change (%) ", "Change (CNY) ", "Volume ", "Turnover (CNY) ", "Amplitude (%) ")
    for i, f in enumerate(result['data']['diff'], start=1):
        # Print the corresponding JSON values
        print(str(i) + " ", f['f12'] + " ", f['f14'] + " ", str(f['f2']) + " ", str(f['f3']) + "% ", str(f['f4']) + " ", str(f['f5']) + " ", str(f['f6']) + " ", str(f['f7']) + "%")


main()
```

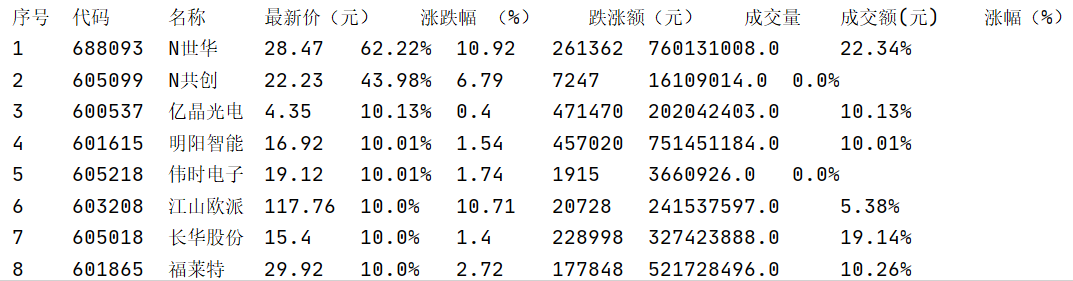

实验结果:

2) Reflections

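The key step in this exercise is finding the URL that returns the JSON data and then analyzing that JSON. The unneeded characters wrapped around the JSON must be stripped before parsing. After that, we only need to extract the relevant fields and print them.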
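The slice `r.text[41:][:-2]` works only because the callback name in the `cb` parameter plus the opening parenthesis is exactly 41 characters long. The sketch below removes the callback wrapper with a regular expression instead, so it does not depend on that length. It then pulls out a few of the fields used above: f12 (code), f14 (name), f2 (latest price) and f3 (change %). The function names `unwrap_jsonp` and `extract_rows` are hypothetical, and the sample response is made up.

```python
import json
import re

# "callbackName(" ... ")" with an optional trailing ";"; group 1 is the JSON payload
_JSONP = re.compile(r"^\s*[\w$.]+\s*\((.*)\)\s*;?\s*$", re.S)


def unwrap_jsonp(text):
    """Parse the JSON inside a callback-wrapped response; raise ValueError if it is not one."""
    m = _JSONP.match(text)
    if m is None:
        raise ValueError("not a callback-wrapped response")
    return json.loads(m.group(1))  # json.JSONDecodeError is a subclass of ValueError


def extract_rows(payload):
    """Yield (code, name, latest price, change %) for each entry of data.diff."""
    data = payload.get("data") or {}
    for item in data.get("diff") or []:
        yield item["f12"], item["f14"], item["f2"], item["f3"]


# Made-up response with the same data.diff shape the code above reads
sample = 'jQuery123_456({"data":{"diff":[{"f12":"600000","f14":"X","f2":10.5,"f3":1.2}]}});'
print(list(extract_rows(unwrap_jsonp(sample))))  # [('600000', 'X', 10.5, 1.2)]
```

A missing or empty `data` field yields no rows instead of raising a TypeError.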

Homework 3

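1) Task: Select the stocks whose code is any 3 digits of your choice followed by the last 3 digits of your student ID, then fetch and print their information. The packet-capture method is the same as in Homework 2.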

The code is as follows:

```python
import json

import requests


# Fetch the data and parse it as JSON
def getHtml_data_031804139(url):
    head = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:70.0) Gecko/20100101 Firefox/70.0',
            'Cookie': 'qgqp_b_id=54fe349b4e3056799d45a271cb903df3; st_si=24637404931419; st_pvi=32580036674154; st_sp=2019-11-12%2016%3A29%3A38; st_inirUrl=; st_sn=1; st_psi=2019111216485270-113200301321-3411409195; st_asi=delete'
            }
    try:
        r = requests.get(url, timeout=30, headers=head)
        # Strip the unneeded characters: the 41-character "jQuery..._1602070531441(" prefix and the trailing ");"
        jsons = r.text[41:][:-2]
        text_json = json.loads(jsons)
        return text_json  # return the parsed JSON
    except (requests.RequestException, ValueError) as err:
        print(err)
        return None


def main():
    # np=3 is used here, i.e. the data on page 3
    url = 'http://49.push2.eastmoney.com/api/qt/clist/get?cb=jQuery11240918880626239239_1602070531441&pn=1&pz=20&po=1&np=3&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&fid=f3&fs=m:1+t:2,m:1+t:23&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1602070531442'
    result = getHtml_data_031804139(url)
    if not result or not result.get('data'):
        print("No data returned")
        return
    # Print the results
    print("No. ", "Code ", "Name ", "Price (CNY) ", "Change (%) ", "Change (CNY) ", "Volume ", "Turnover (CNY) ", "Amplitude (%) ")
    for i, f in enumerate(result['data']['diff'], start=1):
        # Keep only codes ending in 139 (the last 3 digits of the student ID)
        if str(f['f12']).endswith("139"):
            # Print the corresponding JSON values
            print(str(i) + " ", f['f12'] + " ", f['f14'] + " ", str(f['f2']) + " ", str(f['f3']) + "% ", str(f['f4']) + " ", str(f['f5']) + " ", str(f['f6']) + " ", str(f['f7']) + "%")


main()
```

Results:

2) Reflections

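With Homework 2 as the foundation, Homework 3 needed only one extra filter condition. I changed the np value in the URL by hand and found these four stocks that way.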
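The URL can also be rewritten in a loop instead of editing np by hand. The sketch below uses only `urllib.parse`. The hypothetical helper `with_param` sets one query parameter and keeps the other parameters in their original order. A second hypothetical helper, `ends_with`, keeps the entries of the `diff` list whose f12 code ends with a given suffix such as "139". The example URL and stock codes are illustrative only. Fetching each rewritten URL would still go through getHtml_data_031804139.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_param(url, name, value):
    """Return url with query parameter `name` set to `value`, keeping other parameters in order."""
    parts = urlsplit(url)
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    if any(k == name for k, _ in pairs):
        pairs = [(k, str(value) if k == name else v) for k, v in pairs]
    else:
        pairs.append((name, str(value)))
    # parse_qsl decodes '+' to a space; urlencode (quote_plus) turns it back into '+'.
    # safe=":," keeps ':' and ',' literal, as in fs=m:1+t:2,m:1+t:23 and fields=f1,f2,...
    return urlunsplit(parts._replace(query=urlencode(pairs, safe=":,")))


def ends_with(stocks, suffix):
    """Keep the 'diff' entries whose stock code (field f12) ends with `suffix`."""
    return [s for s in stocks if str(s.get("f12", "")).endswith(suffix)]


# Illustrative URL, not the real endpoint
base = "http://example.com/api?pn=1&np=1&fs=m:1+t:2"
print(with_param(base, "np", 3))  # http://example.com/api?pn=1&np=3&fs=m:1+t:2

# Made-up entries in the shape of result['data']['diff']
sample = [{"f12": "600139"}, {"f12": "600000"}]
print(ends_with(sample, "139"))  # [{'f12': '600139'}]
```

Looping `with_param(url, "np", n)` over several values of n and collecting `ends_with(...)` from each response would replace the manual edits.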